GTC 2026 | From Vera Rubin to NemoClaw: Nvidia's Future Extends Beyond GPUs?

At the annual NVIDIA GTC, CEO Jensen Huang's keynote speech has always been regarded as an important indicator of the global AI industry. From next-generation GPU architecture to software ecosystem development, this keynote often foreshadows the hot technologies and development directions of AI computing infrastructure in the coming years.

On March 16 local time, the GTC 2026 keynote took place as scheduled. Jensen Huang, 63, appeared in his signature leather jacket and enthusiastically presented a series of major new products at the San Jose Stadium in California.

Not just GPUs

As the "star product" in NVIDIA's AI chip roadmap, the Vera Rubin platform drew strong interest from the audience at this year's GTC conference: it consists of 7 groundbreaking chips, 5 racks, and 1 supercomputer. Jensen Huang called it a leap forward in technology. The most noteworthy components are the Rubin GPU, NVIDIA Groq 3 LPX, and NVIDIA Vera CPU.

First up is the Rubin GPU, a brand-new architecture designed specifically for Agentic AI that was officially unveiled in January of this year. It features a third-generation Transformer Engine with hardware-accelerated adaptive compression, providing 50 petaflops of NVFP4 computing performance for AI inference and supporting NVLink 72 full interconnect.

Second is NVIDIA's adoption of Groq technology. Ever since Jensen Huang spent $20 billion at the end of 2025 to license Groq technology, there has been speculation that the move meant NVIDIA would "abandon GPUs in favor of LPUs." Now the dust has settled: the two are complementary and work in synergy.

In large-scale deployments, LPU clusters function as a single massive processor for accelerating fast, deterministic inference. When deployed alongside Vera Rubin NVL72, the Rubin GPUs and LPUs jointly improve decoding performance by computing each layer of the AI model for each output token. Building on this, NVIDIA has introduced the LPX rack with 256 LPU processors, designed specifically to meet the low-latency and large-context requirements of agent systems. When combined with Vera Rubin, it can deliver up to 35 times the inference throughput per megawatt for trillion-parameter models.

Finally, there's the NVIDIA Vera CPU, the world's first processor designed for the era of Agentic AI and reinforcement learning. It is twice as efficient as traditional rack-mount CPUs and runs 50% faster. It can deliver higher AI throughput, responsiveness, and efficiency for large-scale AI services such as programming assistants and consumer and enterprise intelligent agents. Jensen Huang stated, "The CPU is no longer just supporting models, but driving them. With groundbreaking performance and energy efficiency, Vera unlocks AI systems that think faster and scale more effectively."

Based on this, NVIDIA also released a brand new Vera CPU rack that integrates 256 liquid-cooled Vera CPUs, supporting more than 22,500 concurrent CPU environments, each of which can run independently at full speed.
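As a quick sanity check on the rack figures above, the per-CPU share can be worked out directly. The sketch below uses only the two numbers quoted in the text (256 CPUs and 22,500 environments, the latter a lower bound). The function name is illustrative, and the even split across CPUs is an assumption that the source does not state.

```python
def environments_per_cpu(total_environments: int, cpu_count: int) -> float:
    """Average number of concurrent environments per CPU, assuming an even split."""
    if cpu_count <= 0:
        raise ValueError("cpu_count must be positive")
    return total_environments / cpu_count


# Figures quoted in the article; 22,500 is a lower bound ("more than 22,500").
per_cpu = environments_per_cpu(22_500, 256)
print(f"At least {per_cpu:.1f} environments per CPU")  # At least 87.9 environments per CPU
```

Under that assumption, each Vera CPU in the rack would host roughly 88 independent environments.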

The debut of Vera Rubin marks another upgrade in NVIDIA's competitiveness in the era of Agentic AI. From the computing power base of Vera CPU to the inference pinnacle of Rubin GPU, and the storage revolution of BlueField-4 DPU, NVIDIA is pushing every link of the AI factory to new heights through ultimate collaborative design.

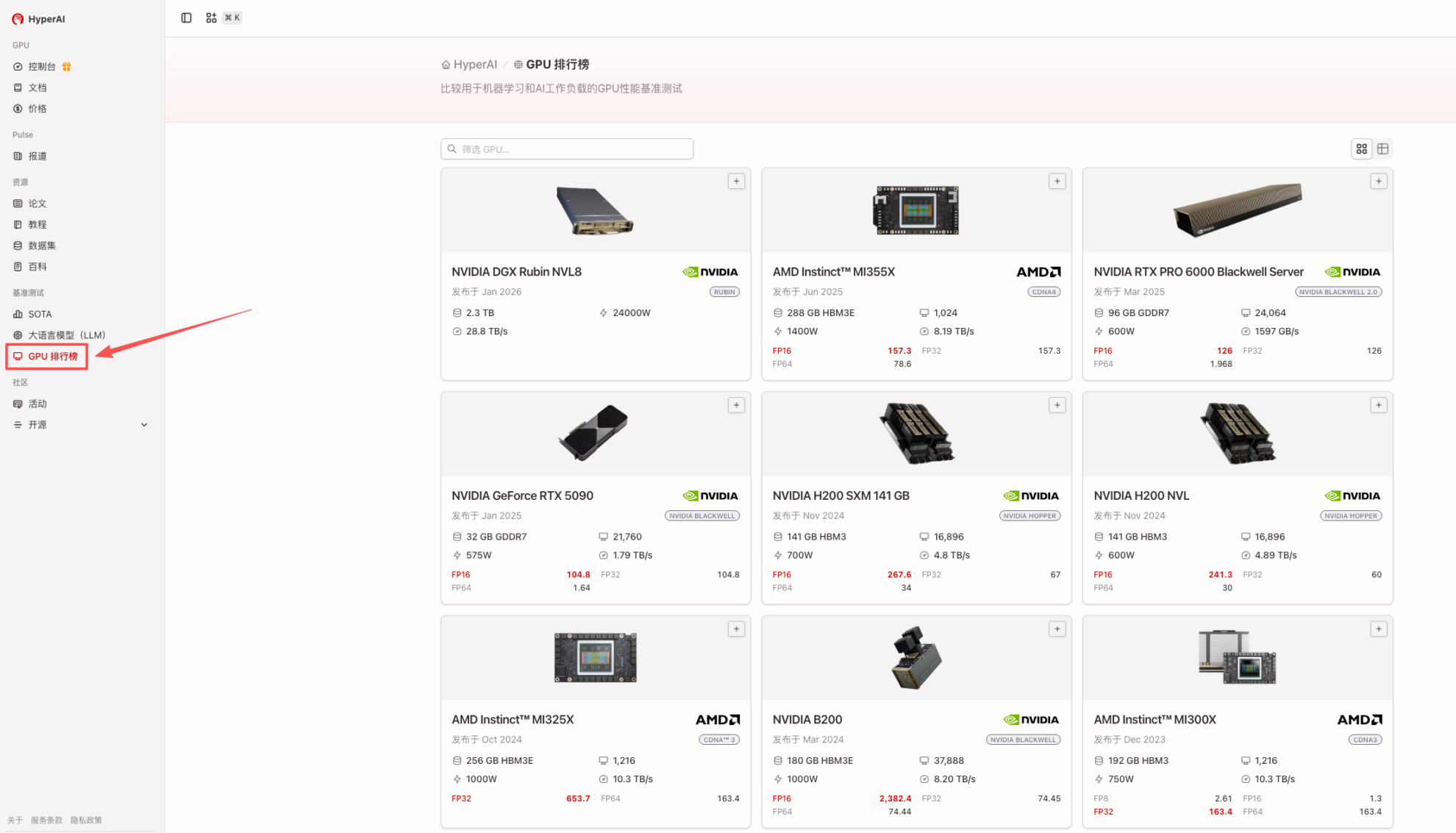

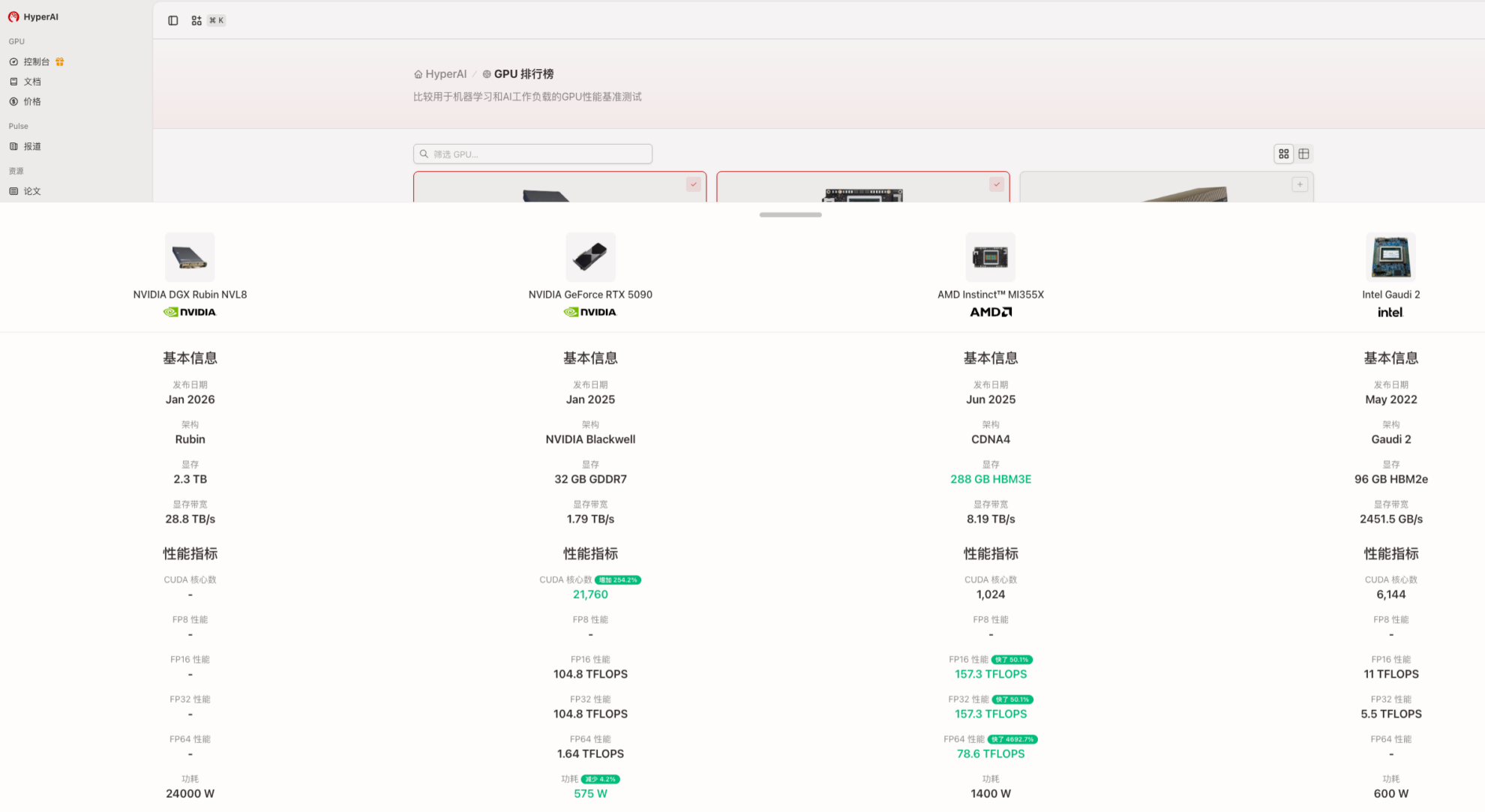

For developers and businesses, while enjoying the benefits of such a massive and continuously improving chip matrix, a more practical challenge arises: with increasingly complex GPU models and a plethora of computing power metrics, how can they move beyond manufacturer specifications and objectively compare the true performance of different hardware? In other words, how can they accurately identify the most suitable option for their business from a vast array of choices?

In view of this, HyperAI has launched a "GPU Ranking List" to serve as a GPU selection and decision-making reference platform for AI, large model, and HPC scenarios. It supports cross-vendor and cross-architecture comparisons, using unified comparison rules to help users make sound, rational technical decisions in the complex GPU/AI accelerator market. HyperAI will keep track of the latest product updates to provide developers with practical tools centered on real-world AI workloads.

The newly released Rubin GPU performance comparison is now available. Check out the GPU rankings now:

https://hyper.ai/gpu-leaderboard

NemoClaw: Optimize OpenClaw with a single command

Alongside its next-generation chip roadmap, NVIDIA also gave its answer to the "next stage of AI" at the software level: NemoClaw.

"OpenClaw has brought the next frontier of AI to everyone and has become the fastest-growing open-source project in history," Jensen Huang said in high praise of the project. "Mac and Windows are the operating systems for personal computers... OpenClaw, on the other hand, is an operating system for personal AI. This is the moment the entire industry has been waiting for: the beginning of a new software renaissance."

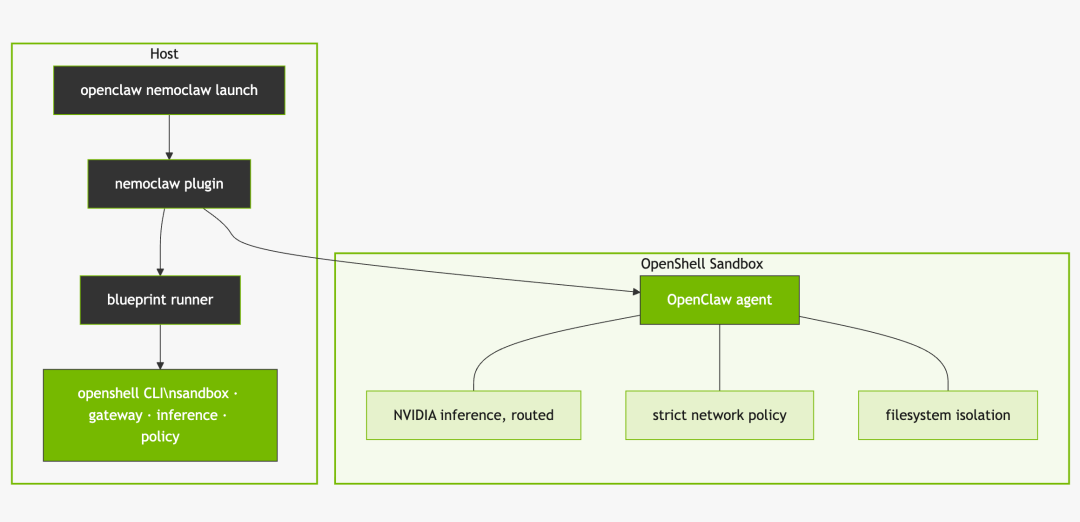

NemoClaw uses the NVIDIA Agent Toolkit software to optimize OpenClaw with a single command, integrating it directly into the NVIDIA ecosystem. NemoClaw installs OpenShell, providing an open-source model and an isolated sandbox environment to enhance data privacy and security for autonomous agents. This solution provides claws with a previously missing underlying infrastructure layer, giving them the access they need to perform tasks while constraining them with policy-based security, network, and privacy safeguards. (See diagram below.)

NemoClaw supports the use of any programmable agent. With its open agent architecture, it can invoke open-source models (including NVIDIA Nemotron) running on a user's local system. At the same time, through a privacy router, the agent can also access cutting-edge models running in the cloud. The combination of local and cloud models provides a foundation for agents to learn new skills and complete complex tasks within established privacy and security constraints.
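To make the local/cloud split more concrete, here is a minimal sketch of how a privacy router of this kind could work in principle. It is not NemoClaw's implementation or API: the `PrivacyRouter` class, the keyword-based sensitivity check, and the stub model functions are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model backends: each takes a prompt and returns a reply.
ModelFn = Callable[[str], str]


@dataclass
class PrivacyRouter:
    """Toy router: prompts containing sensitive terms never leave the machine."""
    local_model: ModelFn
    cloud_model: ModelFn
    sensitive_terms: tuple[str, ...] = ("password", "ssn", "api_key")

    def is_sensitive(self, prompt: str) -> bool:
        lowered = prompt.lower()
        return any(term in lowered for term in self.sensitive_terms)

    def route(self, prompt: str) -> str:
        # Policy: sensitive prompts stay local; everything else may use the cloud.
        if self.is_sensitive(prompt):
            return self.local_model(prompt)
        return self.cloud_model(prompt)


def local_stub(prompt: str) -> str:
    # Stand-in for a locally hosted open-source model.
    return f"[local] {prompt}"


def cloud_stub(prompt: str) -> str:
    # Stand-in for a cloud-hosted frontier model.
    return f"[cloud] {prompt}"


router = PrivacyRouter(local_model=local_stub, cloud_model=cloud_stub)
print(router.route("Summarize today's GTC keynote"))
print(router.route("My password is hunter2, store it"))
```

A real router would presumably rely on richer policies than keyword matching, but the shape is the same: a single decision point that determines which requests are allowed to reach the cloud.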

Within this framework, Jensen Huang's emphasis on a "personal AI operating system" is beginning to have a clearer path to implementation: agents are no longer just interfaces for calling models, but rather digital executors capable of long-term operation and continuous learning. If the newly released GPUs and system architecture provide the computing power foundation for this vision, then NemoClaw defines the agent's operating method and security boundaries at the software level—together, they constitute NVIDIA's complete narrative of "AI factories" and "AI workforce."

To some extent, NemoClaw further lowers the development threshold for OpenClaw. For developers, however, quickly validating use cases is equally important, so HyperAI provides developers worldwide with an out-of-the-box runtime environment and online notebooks. You can start building your own AI agent without complicated configuration.

Run online:

OpenClaw: Running API calls using Free-CPU

https://hyper.ai/notebooks/49888

OpenClaw GPU Running Tutorial

https://hyper.ai/notebooks/49890

The annual GTC conference has long been considered the "AI Spring Festival Gala": not only a stage for NVIDIA to showcase its capabilities but also a trendsetter for technology. Numerous media outlets have covered this technological extravaganza, with product and model updates flooding the public's attention. Going forward, HyperAI will continue to share in-depth information about the high-quality open-source models and datasets released at this conference, and provide online experiences. Stay tuned.