Online Tutorials | Easily Deploy NVIDIA's Latest Physical AI Models, Covering Humanoid Robots/Human Motion Generation/Diffusion Model Fine-tuning, etc.

At the recently concluded GTC 2026, in addition to the highly anticipated new GPUs, NVIDIA devoted significant attention to a more concrete and practical direction: Physical AI.

This concept, repeatedly mentioned by Jensen Huang, points to a key conclusion: AI truly becomes infrastructure for industrial transformation only when it no longer exists merely on a screen but can perceive the physical environment, understand tasks, and perform actions. The concept overlaps heavily with "Embodied AI," emphasizing the deep coupling of AI with the real world: not just "moving," but acting reliably in complex environments.

Accordingly, at GTC 2026, a leading technology conference, topics ranged from humanoid robot foundation models to high-fidelity motion generation and a unified human body modeling system. The models NVIDIA released no longer focus only on model capability, but revolve around "action" and "execution".

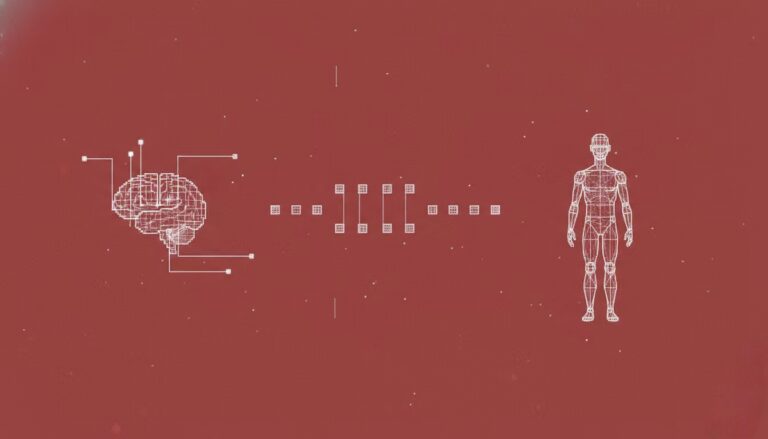

Among these, the three open-source projects are NVIDIA Isaac GR00T, Kimodo, and SOMA-X. They approach the same problem from three levels (decision-making, generation, and representation): how to enable machines to perform complex actions more naturally and efficiently.

One is responsible for understanding tasks and translating them into executable behaviors; another focuses on generating detailed and realistic motion trajectories; and the third tackles the long-standing problem of fragmented human body models, enabling smoother collaboration between different systems. Each of these capabilities has clear value on its own, but more importantly, they all point to a more practical goal: to take robots from merely "able to move" to "easy to use."

In addition, NVIDIA released FDFO, a fine-tuning method for diffusion models, which supports the above capabilities from the perspective of generative model optimization.

To enable global developers to quickly experience the open-source achievements of GTC 2026 in a more accessible and stable environment, the HyperAI website (hyper.ai) has launched the following online tutorials in its tutorial section:

NVIDIA Isaac GR00T: A Foundation Model for General-Purpose Humanoid Robots

Run online: https://go.hyper.ai/2Cjvr

SOMA-X: A unified parametric human body model framework

Run online: https://go.hyper.ai/UcEI7

Kimodo: Human and Robot Motion Generation Models

Run online: https://go.hyper.ai/p99vI

FDFO: Finite Difference Flow Optimization

Run online: https://go.hyper.ai/ikihN

HyperAI is offering a registration benefit for new users. For just $1, you can get 20 hours of RTX 5090 computing power (original price $7). The resource never expires.

NVIDIA Isaac GR00T

A Foundation Model for General-Purpose Humanoid Robots

NVIDIA Isaac GR00T N1.6 is an open-source Vision-Language-Action (VLA) model released in March 2026, designed for skill learning in general-purpose humanoid robots. The model uses a cross-embodiment design: it accepts multimodal inputs, including language and images, and performs manipulation tasks in diverse environments.

The neural network architecture of GR00T N1.6 combines a vision-language foundation model with a Diffusion Transformer head that denoises continuous actions. The model is trained on diverse robot data, covering dual-arm robots, semi-humanoid robots, and a large humanoid dataset, and can be post-trained to adapt to different robot embodiments, tasks, and environments.

Run online: https://go.hyper.ai/2Cjvr

SOMA-X: A unified parametric human body model framework

Parametric human body models, including Skinned Multi-Person Linear (SMPL), SMPL-X, Momentum Human Rig (MHR), Anny, and GarmentMeasurements, are widely used in fields such as human body reconstruction, animation, and simulation.

However, these models are fundamentally incompatible at the underlying level: each defines its own mesh topology, joint hierarchy, and parameterization, so they cannot be combined directly. As a result, combining the strengths of different models (e.g., the age control of one model with motion data from another) often requires developing a separate adapter for each pair of models. This not only increases development costs but also severely limits interoperability and practical use.

Against this backdrop, NVIDIA Labs released SOMA-X to address compatibility issues between parametric human body models. It provides a standardized body topology and skeletal rig that serves as a common hub for all supported parametric human body models. Instead of replacing existing models, it unifies them by mapping the static shape of each model to a shared representation. This allows any supported identity model to be driven within a single animation pipeline without custom adapters or model-specific retargeting, significantly improving versatility and scalability.
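The benefit of a common hub over pairwise adapters can be illustrated with simple counting. The sketch below is not SOMA-X code: the function names and the `"shared"` label are hypothetical, and only the model names come from the text above. It assumes one adapter per unordered pair of models in the pairwise case and one mapping per model in the hub case.

```python
from itertools import combinations

# Model names taken from the article; everything else is illustrative.
MODELS = ["SMPL", "SMPL-X", "MHR", "Anny", "GarmentMeasurements"]


def pairwise_adapters(models):
    """Return one (hypothetical) adapter per unordered pair of models."""
    return list(combinations(models, 2))


def hub_mappings(models, hub="shared"):
    """Return one (hypothetical) mapping per model into a shared representation."""
    return [(model, hub) for model in models]


n_pairwise = len(pairwise_adapters(MODELS))  # grows as n * (n - 1) / 2
n_hub = len(hub_mappings(MODELS))            # grows as n

print(f"pairwise adapters: {n_pairwise}, hub mappings: {n_hub}")
```

With five models, the pairwise approach needs ten adapters while the hub approach needs five mappings, and adding a sixth model adds five more adapters in the first case but only one mapping in the second.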

Run online: https://go.hyper.ai/UcEI7

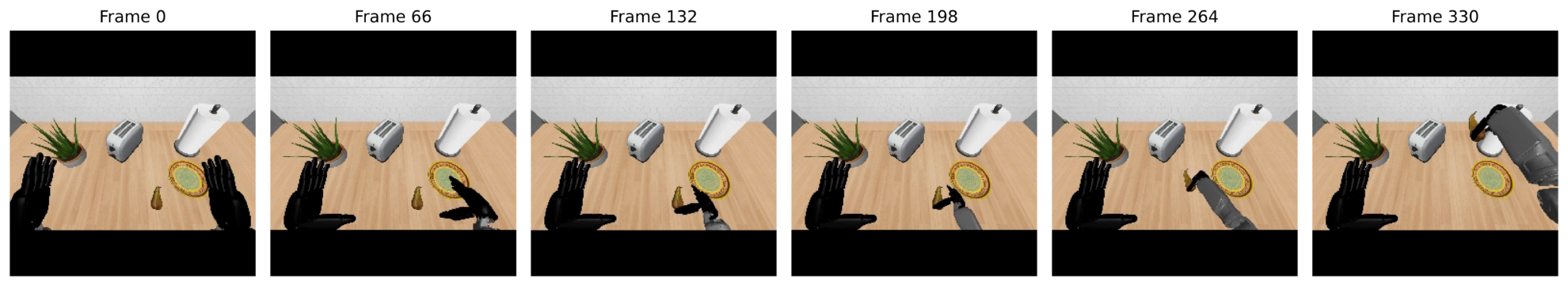

Kimodo: Human and Robot Motion Generation Models

Kimodo is a kinematic motion diffusion model released by NVIDIA Research in March 2026. Trained on a large-scale (700 hours), commercially available optical motion capture dataset, this model generates high-quality human and humanoid robot motion and can be controlled via text prompts and rich kinematic constraints such as full-body pose keyframes, end effector position/rotation, 2D paths, and 2D waypoints.

Kimodo supports multiple skeleton types, including:

* SOMA: Human skeleton with 30 joints

* Unitree G1: Humanoid robot skeleton with 34 joints

* SMPL-X: Parametric human body model with 22 joints

This model employs a diffusion architecture, combining a text encoder with a motion constraint mechanism, enabling it to generate smooth and natural motion sequences based on natural language descriptions and keyframe constraints.

Run online: https://go.hyper.ai/p99vI

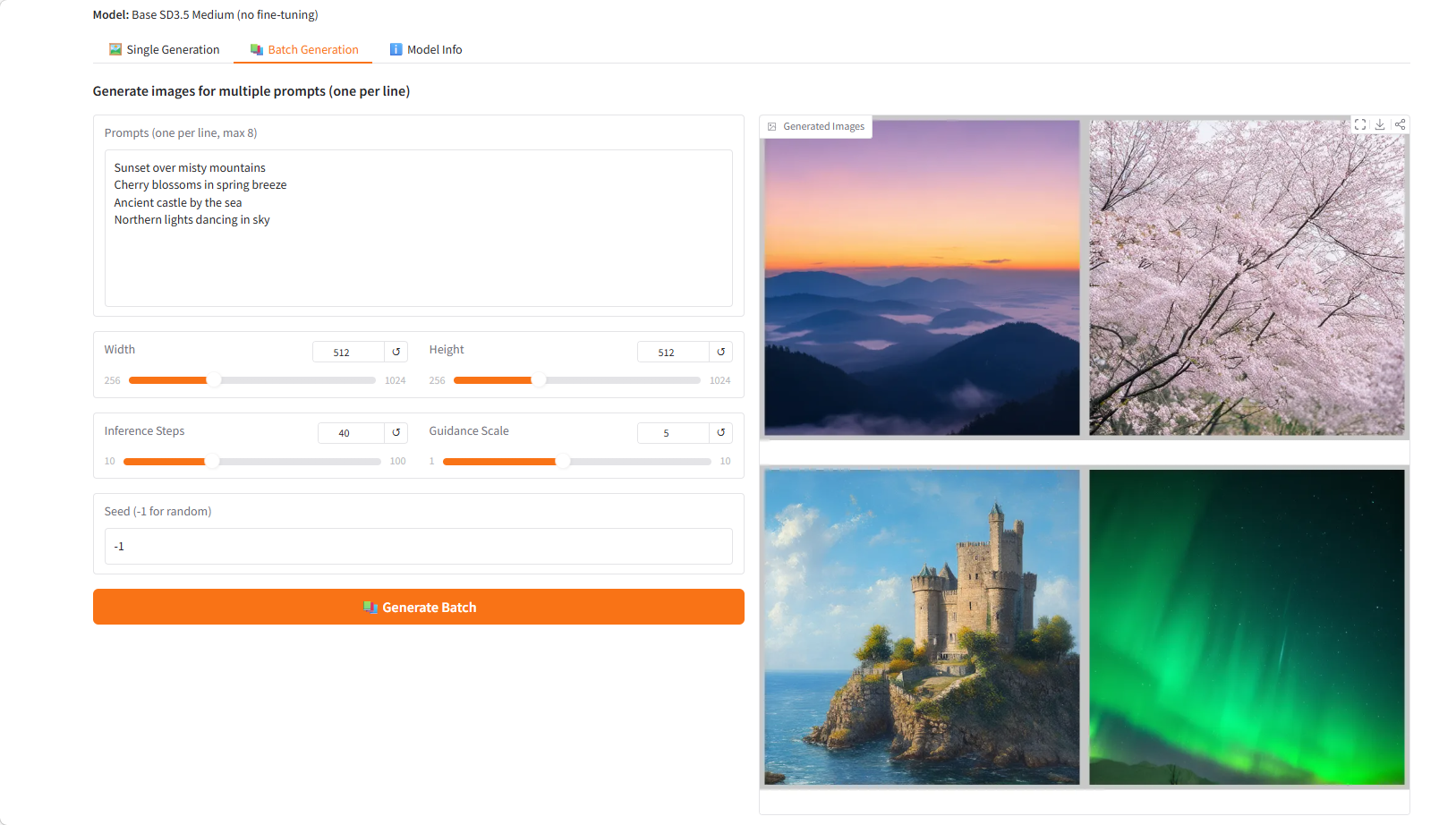

FDFO: Finite Difference Flow Optimization

FDFO (Finite Difference Flow Optimization) is a fine-tuning method for flow-based diffusion models released by NVIDIA in March 2026, built on finite difference gradient estimation. It applies reinforcement learning post-training to Stable Diffusion 3.5 Medium, using reward signals from Vision-Language Model (VLM) scores and/or PickScore to improve generation quality.

FDFO addresses the gradient estimation problem in conventional diffusion model fine-tuning by using the finite difference method to compute gradients efficiently and stably. While preserving the model's original capabilities, it significantly improves the text-prompt alignment, aesthetic quality, and realism of generated images.
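For readers unfamiliar with finite difference gradient estimation, here is a minimal, generic sketch of the idea. It is not the FDFO algorithm, whose details are not covered here: `toy_reward` is a hypothetical stand-in for a reward model score, and the central-difference scheme and step size `eps` are assumptions chosen for illustration. The point is only that a gradient can be estimated from reward evaluations at perturbed inputs, without differentiating through the reward function.

```python
def finite_difference_gradient(f, x, eps=1e-4):
    """Estimate the gradient of f at x (a list of floats) by central differences."""
    grad = []
    for i in range(len(x)):
        plus = list(x)
        minus = list(x)
        plus[i] += eps   # perturb coordinate i upward
        minus[i] -= eps  # perturb coordinate i downward
        grad.append((f(plus) - f(minus)) / (2 * eps))
    return grad


def toy_reward(x):
    """Hypothetical reward with its maximum at (1.0, -2.0)."""
    return -((x[0] - 1.0) ** 2) - ((x[1] + 2.0) ** 2)


# Estimate the gradient at the origin using only reward evaluations.
grad = finite_difference_gradient(toy_reward, [0.0, 0.0])

# One small gradient-ascent step moves the point toward higher reward.
step = 0.1
updated = [xi + step * gi for xi, gi in zip([0.0, 0.0], grad)]
print(grad, updated)
```

For this quadratic toy reward, the analytic gradient at the origin is (2, -4), so the estimate can be checked directly against it.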

Run online: https://go.hyper.ai/ikihN