
Online Tutorial | 41k Stars: HKU Team Open-Sources nanobot, an Ultra-Lightweight AI Assistant That Implements OpenClaw's Core Functionality in 4,000 Lines of Code

The groundbreaking OpenClaw has propelled large language models from simple dialogue tools into "digital employees" capable of staying online continuously, collaborating across platforms, invoking tools, and executing tasks. However, its massive codebase of over 400,000 lines has deterred many developers from studying it or building on it.

In this context, the HKU Data Intelligence Lab (HKUDS) has open-sourced nanobot, a lightweight personal AI assistant. It compresses the core agent capabilities into fewer than 4,000 lines of pure Python, shrinking the codebase by roughly 99% while retaining the core functionality. This "subtraction" approach has made it a hit in the open-source community, where it currently has 41.1k stars on GitHub.

In terms of capabilities, nanobot has not sacrificed practicality for its lightweight design; on the contrary, it has steadily expanded its feature set through ongoing iterations. The latest version supports reading Office documents, SSE streaming output through OpenAI-compatible APIs, more reliable multi-session handling, cross-session memory, and stable operation across multiple channels.
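To make "SSE streaming output with OpenAI-compatible APIs" more concrete, here is a minimal Python sketch of how a client might consume such a stream. It assumes the standard OpenAI chat-completions streaming format: each event is a `data: {...}` line whose JSON carries `choices[].delta.content`, and the stream ends with `data: [DONE]`. This is not nanobot's own code, and the function name `iter_stream_text` is hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield content deltas from OpenAI-style SSE lines (hypothetical helper).

    Each event line looks like `data: {...json chunk...}`; blank lines
    separate events, and `data: [DONE]` marks the end of the stream.
    """
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators, ": comment" keep-alives, other fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end of stream; ignore anything after it
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:  # role-only deltas and null content are skipped
                yield text


if __name__ == "__main__":
    sample = [
        'data: {"choices": [{"index": 0, "delta": {"role": "assistant"}}]}',
        "",
        'data: {"choices": [{"index": 0, "delta": {"content": "Hello"}}]}',
        "",
        'data: {"choices": [{"index": 0, "delta": {"content": ", world"}}]}',
        "",
        "data: [DONE]",
    ]
    print("".join(iter_stream_text(sample)))  # Hello, world
```

In a real client the input would be the decoded lines of the HTTP response body, so text can be shown as it arrives. The sketch concatenates every choice, so it assumes a single completion per request.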

Meanwhile, its built-in WebUI continues to improve, offering language switching, dark mode, and real-time chat, with multiple concurrent sessions handled over WebSocket. At the model and ecosystem level, nanobot supports multiple model providers such as Kimi K2.6 and MiniMax, and is compatible with local inference solutions such as LM Studio. It also introduces runtime tool invocation (SelfTool) and automatic skill discovery (Dream), allowing agents to expand their own capabilities dynamically.

Furthermore, the project has been steadily refined at the engineering level, with context compression, atomic session writes with automatic repair, long-message splitting (for Telegram), email loop protection, and a stricter sandboxed execution environment. These improvements significantly enhance the system's stability and availability in real production environments. Combined with MCP (Model Context Protocol), developers can also flexibly integrate external tools and services to build more complex automated workflows.
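Two of these engineering details are standard techniques that fit in a few lines. The Python below is an illustration under stated assumptions, not nanobot's implementation: `save_session_atomically` writes a session to a temporary file and renames it into place, so a crash never leaves a half-written session file, and `split_message` breaks long replies into chunks under Telegram's 4,096-character message limit, preferring newline boundaries. Both function names are hypothetical, and storing sessions as JSON is an assumption.

```python
import json
import os
import tempfile

TELEGRAM_MAX_CHARS = 4096  # Telegram Bot API limit on sendMessage text length


def save_session_atomically(path: str, session: dict) -> None:
    """Persist a session as JSON so readers see either the old file or the new one."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file lives in the same directory so the rename stays on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump(session, f, ensure_ascii=False)
            f.flush()
            os.fsync(f.fileno())  # push the bytes to disk before the rename
        os.replace(tmp_path, path)  # atomic replacement on POSIX filesystems
    except BaseException:
        os.unlink(tmp_path)  # never leave a stray temp file behind
        raise


def split_message(text: str, limit: int = TELEGRAM_MAX_CHARS) -> list[str]:
    """Split text into chunks of at most `limit` characters, preferring newlines."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    chunks = []
    while len(text) > limit:
        cut = text.rfind("\n", 0, limit + 1)  # last newline within the limit
        if cut <= 0:
            # No usable newline: fall back to a hard cut at the limit.
            chunks.append(text[:limit])
            text = text[limit:]
        else:
            chunks.append(text[:cut])
            text = text[cut + 1:]  # drop the newline we split on
    if text:
        chunks.append(text)
    return chunks


if __name__ == "__main__":
    parts = split_message("line one\nline two\nline three", limit=12)
    print(parts)  # ['line one', 'line two', 'line three']
```

The temp-file-plus-rename pattern is what makes the write atomic: other processes never observe a partially written file, which is also what makes a later "repair" step simple (a stray `.tmp` file can just be discarded).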

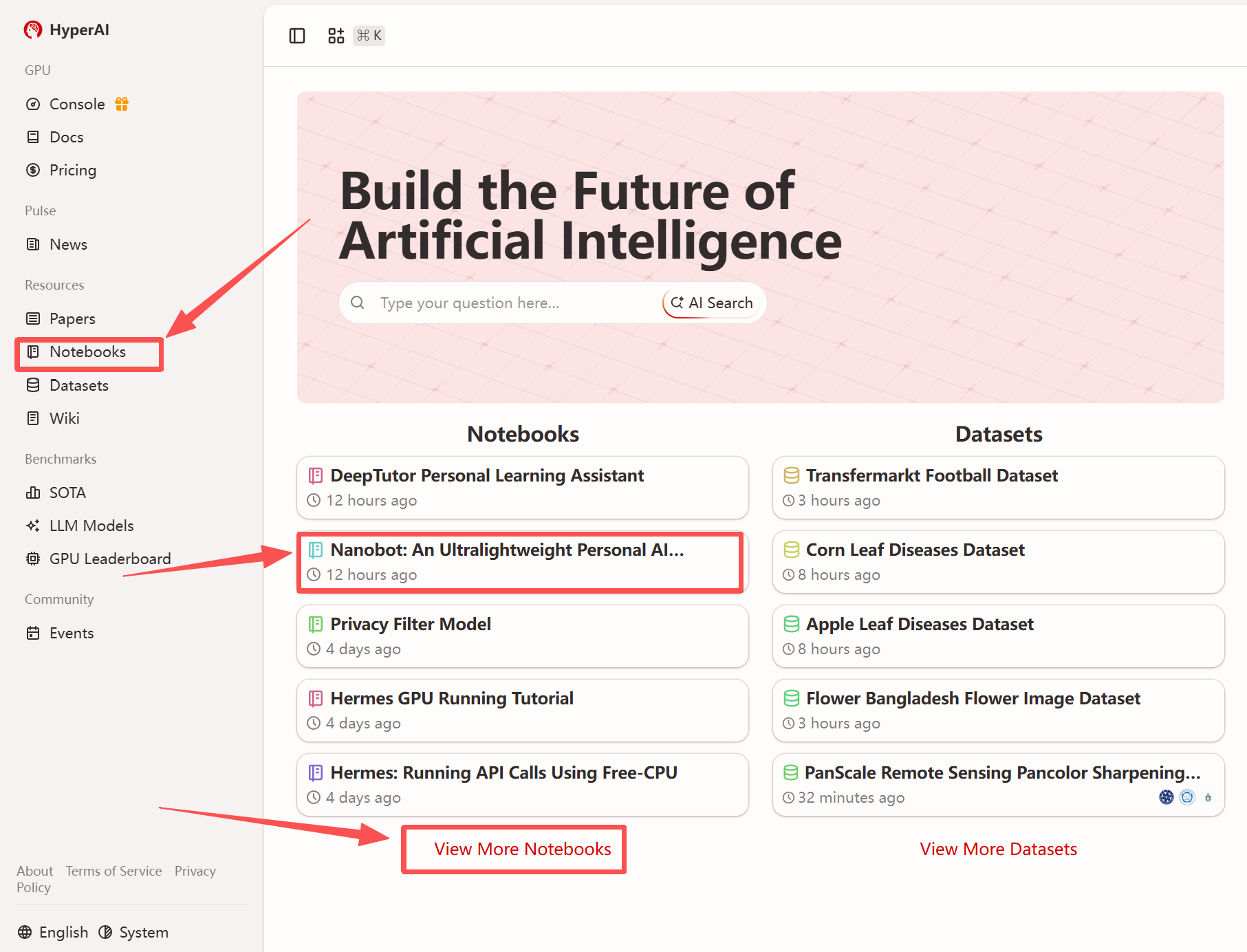

More importantly, the barrier to developing with nanobot keeps getting lower: HyperAI's tutorial section now features "Nanobot: An Ultra-Lightweight Personal AI Assistant". Once the environment is set up, the tutorial deploys the GLM-4.7-Flash model locally using vLLM. If you're interested, come and try this lightweight AI agent on HyperAI; it's easy to use!
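vLLM serves models behind an OpenAI-compatible HTTP API, which is how an agent such as nanobot can talk to a locally deployed model. The sketch below builds a chat-completions request using only Python's standard library. It assumes the server listens on vLLM's default port 8000 and that the model is served under the name `GLM-4.7-Flash`; the actual name depends on how the tutorial launches vLLM (for example, its `--served-model-name` setting). The helpers `build_chat_request` and `send_chat_request` are hypothetical.

```python
import json
import urllib.request

# Assumptions: vLLM's OpenAI-compatible server on its default port 8000, and a
# served model name of "GLM-4.7-Flash" (check the tutorial's vLLM launch command).
BASE_URL = "http://localhost:8000/v1"
MODEL = "GLM-4.7-Flash"


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (without sending) a POST request to the /chat/completions endpoint."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,  # illustrative cap on the reply length
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(req: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the assistant's reply text (needs a running server)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request(BASE_URL, MODEL, "Say hello in one sentence.")
    # Only prints the request; call send_chat_request(req) once vLLM is running.
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

Keeping request construction separate from sending makes the payload easy to inspect without a live server; with the server up, `send_chat_request(req)` returns the reply text.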

Run online:

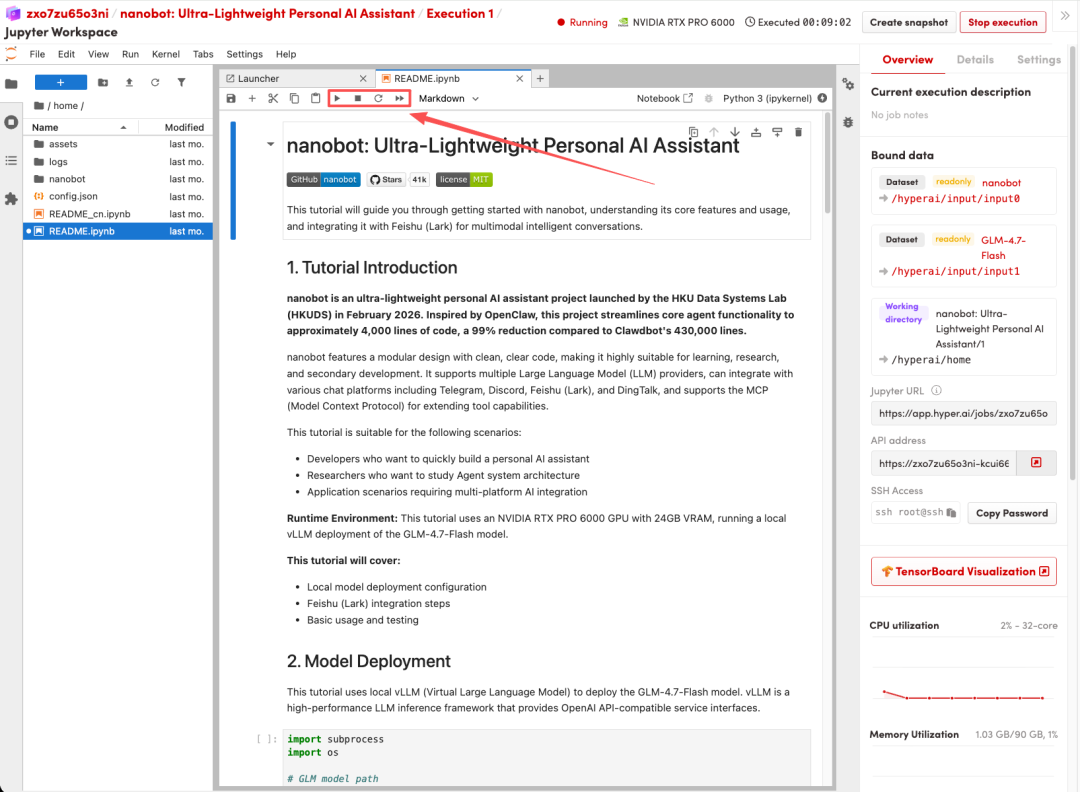

The tutorial includes the following:

- Local model deployment configuration

- Lark integration steps

- Basic usage and testing


Demo Run

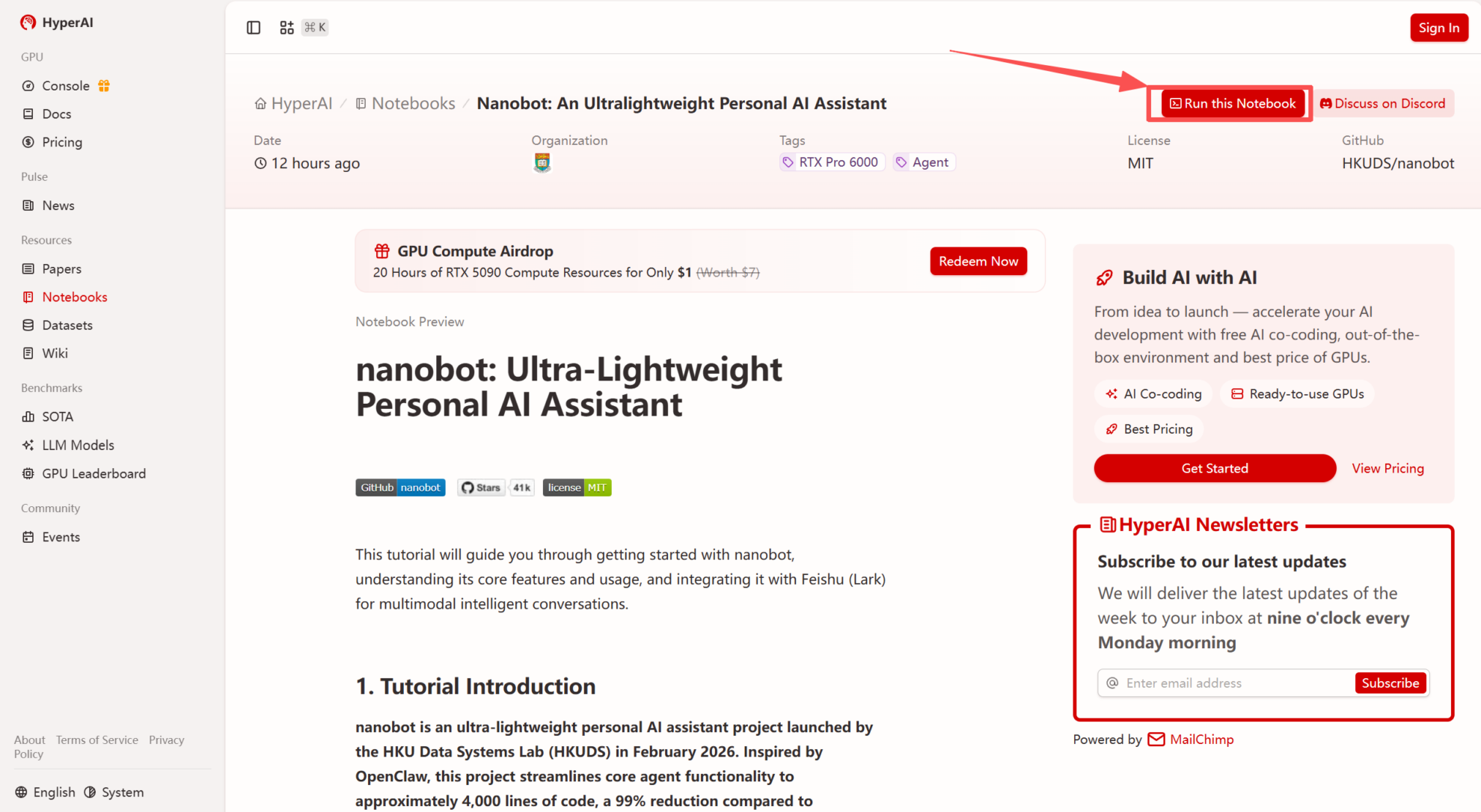

1. After entering the hyper.ai homepage, select the "Tutorials" page, or click "View More Tutorials", select "Nanobot: Ultra-Lightweight Personal AI Assistant", and click "Run this tutorial".

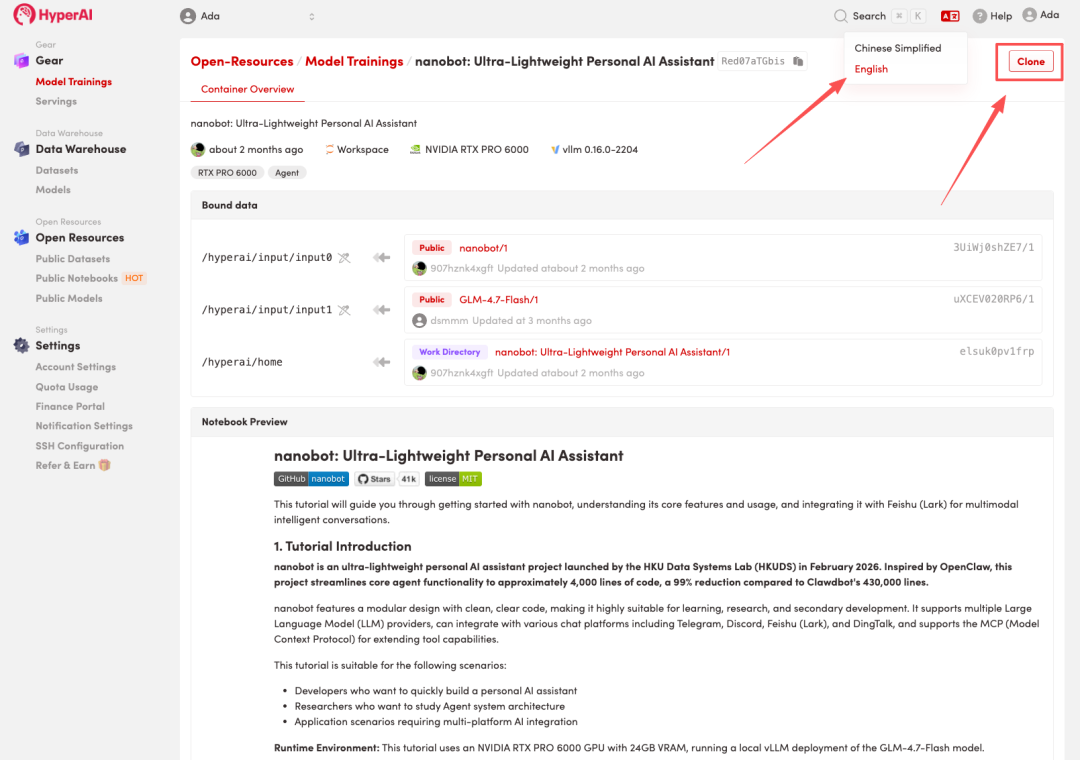

2. After the page redirects, click "Clone" in the upper right corner to clone the tutorial into your own container.

Note: You can switch languages in the upper right corner of the page. Currently, Chinese and English are available. This tutorial will show the steps in English.

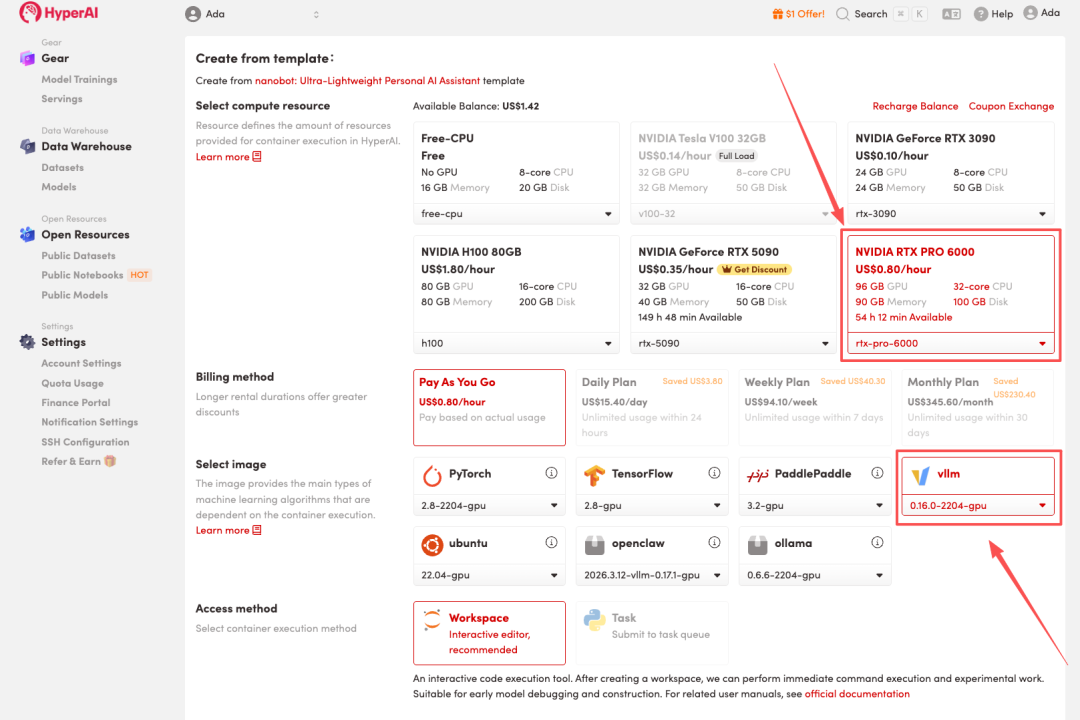

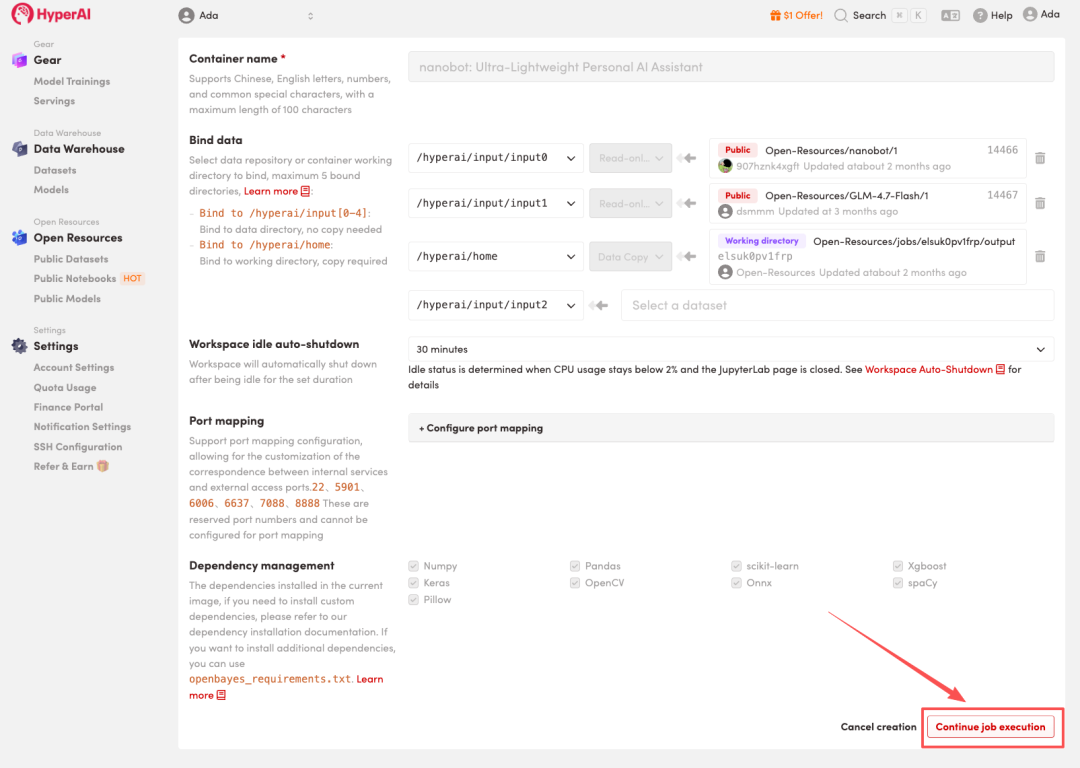

3. Select the "NVIDIA RTX PRO6000" GPU and the "vLLM" image, then click "Continue job execution".

HyperAI is offering a registration bonus for new users: for just $1, you can get 20 hours of RTX 5090 computing power (originally priced at $7), and the resources are valid indefinitely.

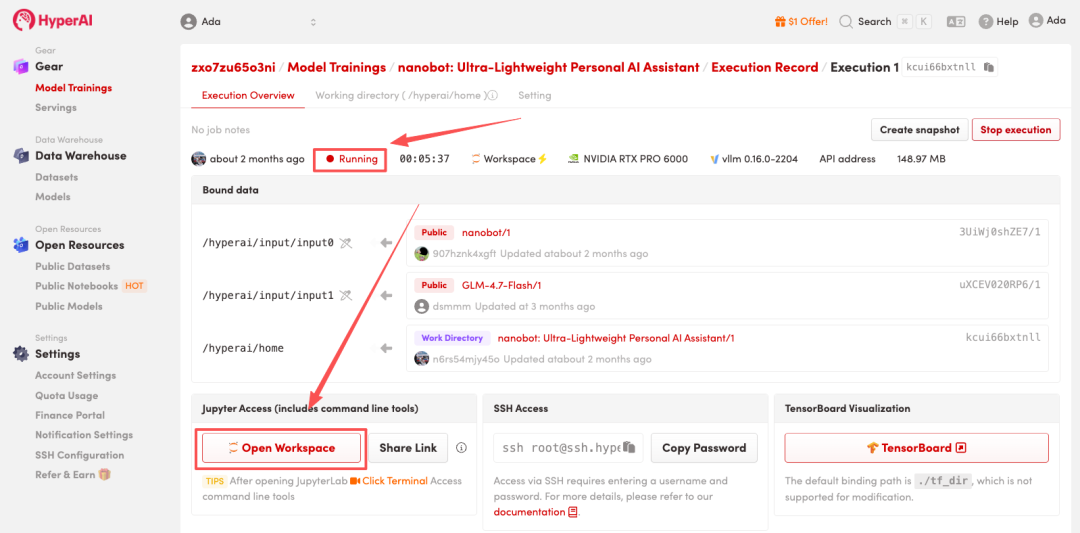

4. Wait for resources to be allocated. Once the status changes to "Running", click "Open Workspace" to enter the Jupyter Workspace.

Demo Results

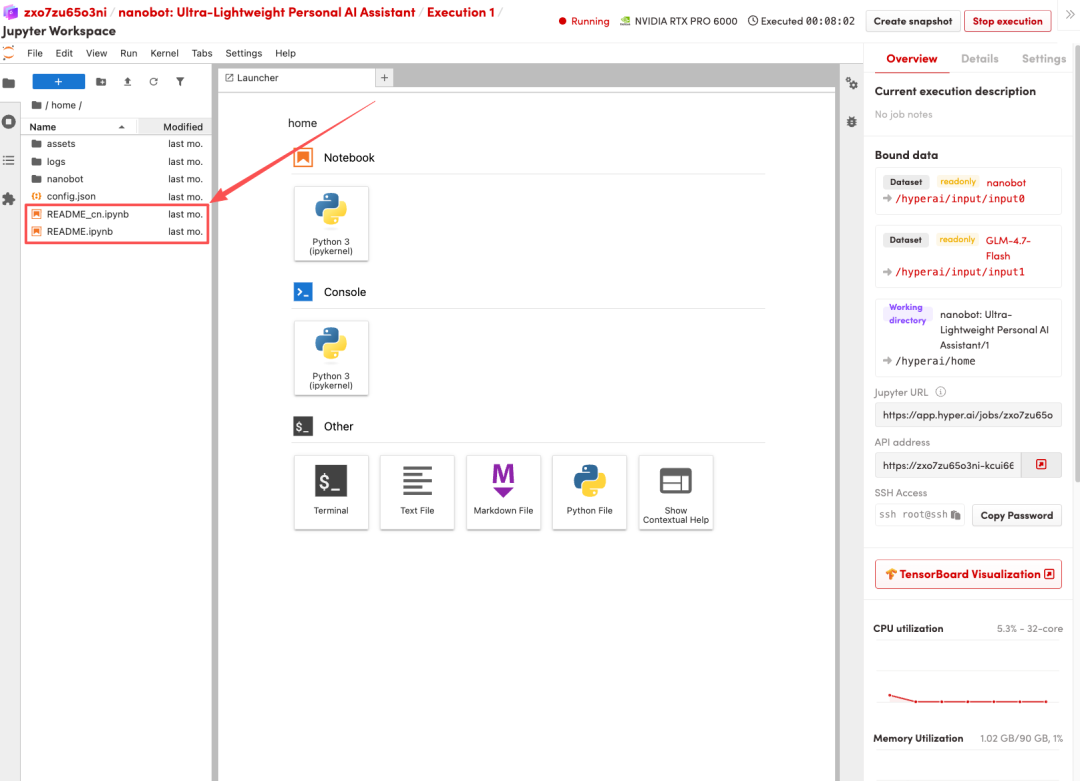

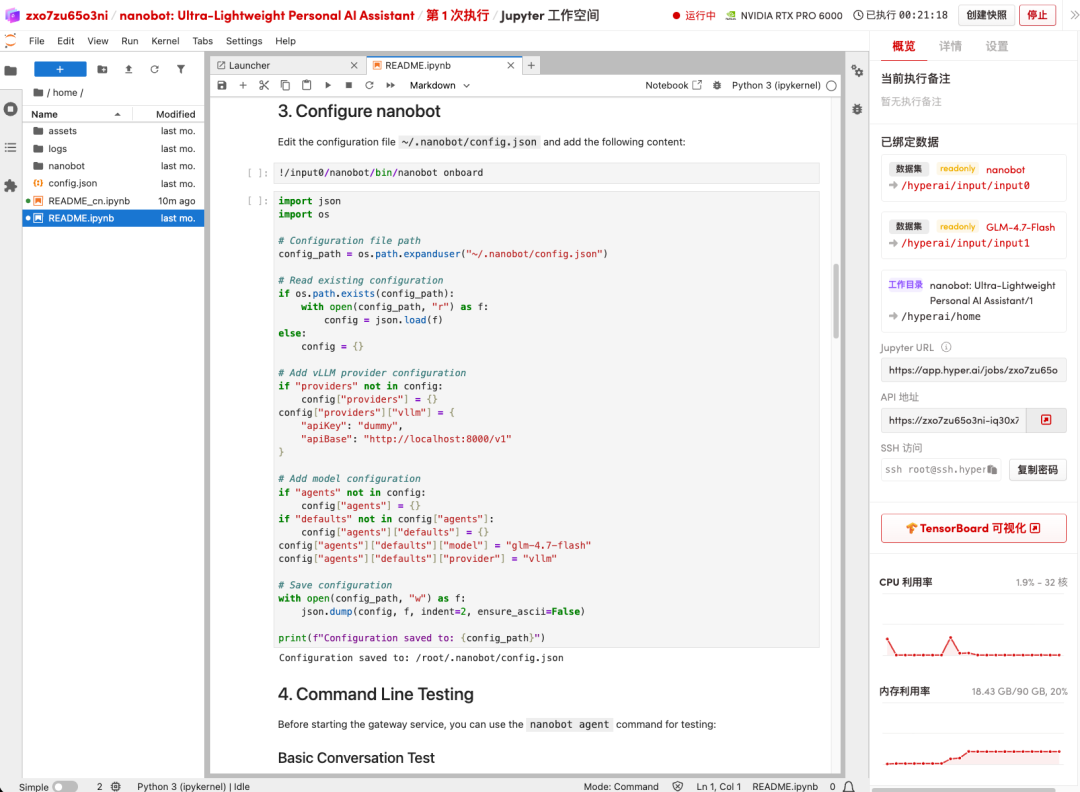

After the page redirects, click the README file on the left and follow its steps to configure the assistant, test it from the command line, and integrate it with Lark.

About the team

nanobot was officially open-sourced by HKUDS in February this year. The team leader is Huang Chao, an assistant professor and doctoral supervisor at the University of Hong Kong. His research interests cover large-scale AI agents, language models and graph machine learning. His research results have been cited more than 17,000 times on Google Scholar.

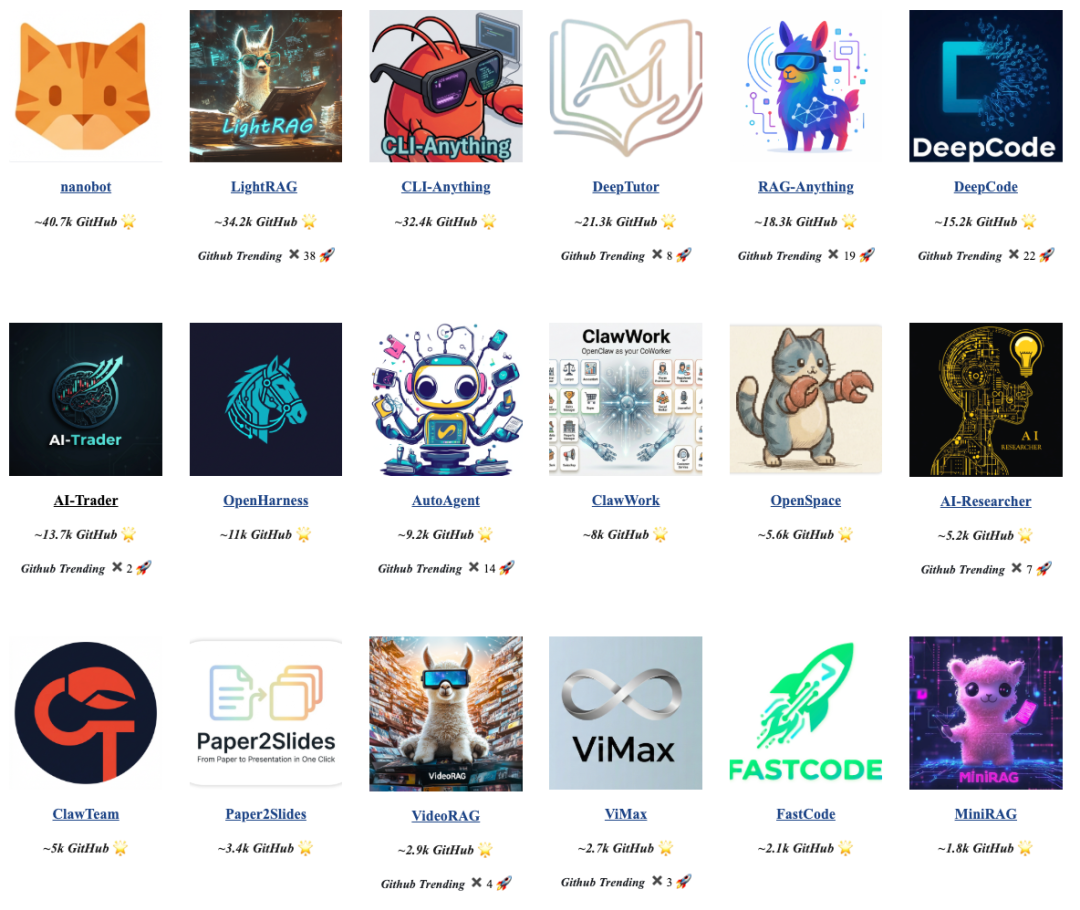

In addition to nanobot, Professor Huang Chao and his team have released several other highly influential open-source projects, including LightRAG and CLI-Anything. The HKUDS GitHub organization has accumulated over 240,000 stars, ranking among the top 50 globally, and its projects have appeared on the GitHub Trending list more than 100 times.