AI Breakthrough: New KVzip Tech Compresses LLM Memory by 3–4× for Faster, More Efficient Chatbots

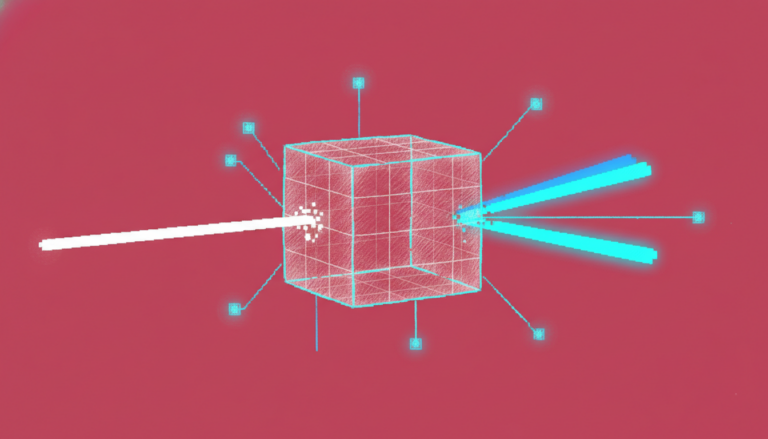

A team of researchers at Seoul National University’s College of Engineering, led by Professor Hyun Oh Song of the Department of Computer Science and Engineering, has developed KVzip, a technique that compresses the conversation memory of chatbots built on large language models (LLMs) by 3 to 4 times. The research, published on the arXiv preprint server, addresses a key challenge in long-context AI: the growing memory and computational demands of extended dialogues and document summarization.

Conversation memory is the temporary store of past exchanges (questions, answers, and context) that a chatbot draws on to generate coherent, context-aware responses. In an LLM, this memory is held in the key-value (KV) cache, which keeps the attention keys and values computed for every previous token. As a conversation grows, so does this memory, increasing response time and serving cost.

Many existing compression methods are tuned to a single query: they keep what matters for the current question and discard the rest, so accuracy drops when a different question follows. KVzip avoids this by compressing memory independently of any particular query. Rather than storing every detail, it retains only the information the model needs to reconstruct the original context, so the same compressed memory can be reused across follow-up questions without recompression. The result is up to a 4× reduction in memory usage and roughly 2× faster response times, with accuracy maintained.

The technique has been tested on major open-source LLMs, including Llama 3.1, Qwen 2.5, and Gemma 3, on contexts of up to 170,000 tokens. It performs consistently across diverse tasks, including question answering, code understanding, reasoning, and retrieval, with no loss of quality over multiple rounds of interaction. KVzip has already been integrated into KVPress, NVIDIA’s open-source KV cache compression library, making it accessible for real-world deployment.
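To make these figures concrete, the Python sketch below does two things. First, it estimates the size of an uncompressed KV cache from a model's shape; the configuration used (32 layers, 8 KV heads, head dimension 128, 16-bit values) matches Llama 3.1 8B, and the resulting numbers are illustrative estimates, not results reported in the paper. Second, it shows query-agnostic eviction in its simplest form: every cached (head, position) pair receives an importance score once, and only the top-scoring fraction is kept. The function names, the score layout, and the global top-k rule are hypothetical simplifications. In KVzip the scores come from having the model reconstruct the original context; this is not KVzip's actual code or the KVPress API.

```python
from typing import List, Tuple


def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   num_tokens: int, bytes_per_elem: int = 2) -> int:
    """Size of an uncompressed KV cache in bytes.

    The factor 2 counts one key vector and one value vector per token,
    per KV head, per layer; bytes_per_elem=2 assumes 16-bit (bf16/fp16) storage.
    """
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_elem


def select_kv_pairs(scores: List[List[float]], keep_ratio: float) -> List[Tuple[int, int]]:
    """Return the (head, position) pairs to keep, ranked globally by importance.

    scores[h][t] is a hypothetical importance score for the cached pair of
    head h at token position t. It is assumed to be computed once (e.g. from a
    context-reconstruction pass), not from any particular user question, so the
    returned set can be reused for every follow-up query.
    """
    if not 0.0 < keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be in (0, 1]")
    # Flatten to (score, head, position) and sort from most to least important.
    ranked = sorted(
        ((score, h, t) for h, row in enumerate(scores) for t, score in enumerate(row)),
        reverse=True,
    )
    budget = max(1, int(len(ranked) * keep_ratio))  # number of pairs to retain
    # Everything outside the budget is evicted; return survivors in cache order.
    return sorted((h, t) for _, h, t in ranked[:budget])


if __name__ == "__main__":
    # Llama 3.1 8B-shaped model: 32 layers, 8 KV heads, head_dim 128, 16-bit values.
    full = kv_cache_bytes(32, 8, 128, 170_000)
    print(f"full cache: {full / 2**30:.2f} GiB")              # full cache: 20.75 GiB
    print(f"at 4x compression: {full / 4 / 2**30:.2f} GiB")   # at 4x compression: 5.19 GiB

    # Toy example: 2 heads x 4 positions, keep 25% of the pairs.
    toy_scores = [[0.9, 0.1, 0.4, 0.05],   # head 0
                  [0.2, 0.8, 0.3, 0.6]]    # head 1
    print(select_kv_pairs(toy_scores, keep_ratio=0.25))  # [(0, 0), (1, 1)]
```

Because the scores in this sketch are computed once, without reference to any user question, the same kept set can serve every follow-up query. A query-aware method would instead rerun the selection for each new question, which is the limitation described above.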
Because its performance holds steady across different types of queries, KVzip is well suited to enterprise applications such as retrieval-augmented generation (RAG) systems and personalized chatbots, where efficiency and scalability are critical. It also holds promise for mobile and edge devices, where memory and processing power are limited: by enabling on-device, long-context personalization with minimal overhead, KVzip could support more responsive and private AI interactions.

Professor Hyun Oh Song emphasized the significance of reusable, high-fidelity compressed memory, saying that KVzip allows LLM agents to maintain long-term understanding without sacrificing performance. Dr. Jang-Hyun Kim, the lead researcher, noted that the method can be applied seamlessly to real-world systems, delivering faster, consistent responses in long conversations. Dr. Kim will join Apple’s AI/ML Foundation Models team as a machine learning researcher.

The research group also presented two additional papers at NeurIPS 2025 and one in the journal Transactions on Machine Learning Research (TMLR). The first introduced Q-Palette, a fractional-bit quantizer that improves inference speed by 36% while maintaining performance. The second proposed Guided-ReST, a reinforcement learning method that raised reasoning accuracy by 10% and efficiency by 50% on a challenging benchmark. The third presented a scalable causal inference method for identifying key variables in large systems, achieving state-of-the-art results in gene regulatory network analysis.