Tstars-Tryon 1.0: Robust and Realistic Virtual Try-On for Diverse Fashion Items

Abstract

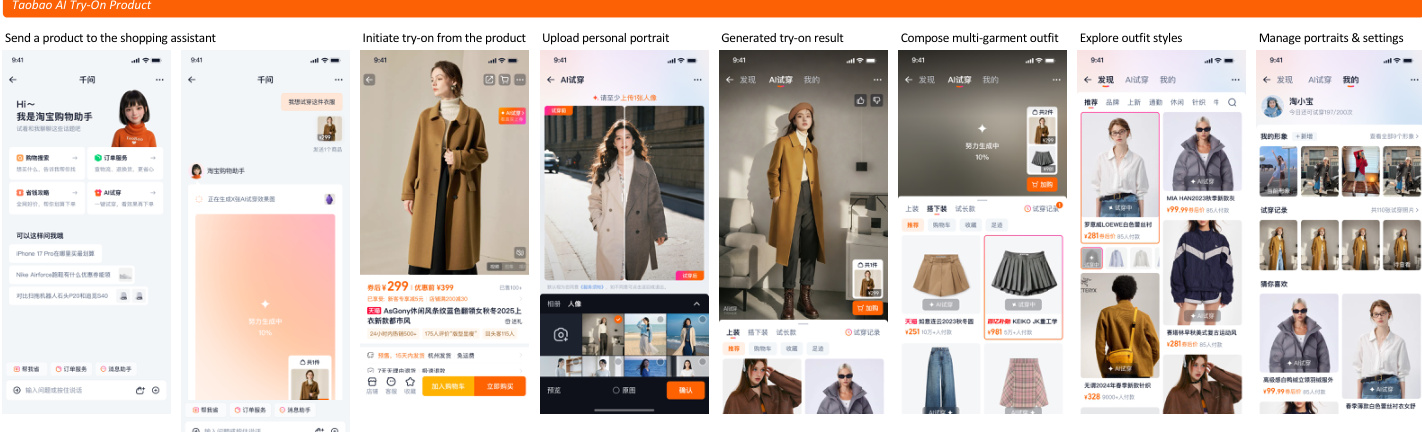

Recent advances in image generation and editing have opened new opportunities for virtual try-on. However, existing methods still struggle to meet complex real-world demands. We therefore present Tstars-Tryon 1.0, a commercial-scale virtual try-on system that is robust, realistic, versatile, and highly efficient.

First, our system maintains a high success rate in challenging cases such as extreme poses, severe illumination changes, motion blur, and other in-the-wild conditions. Second, it delivers highly photorealistic results with fine-grained detail, faithfully preserving garment texture, material properties, and structural characteristics while largely avoiding common AI-generated artifacts. Third, beyond apparel try-on, our model supports flexible multi-image composition (up to 6 reference images) across 8 fashion categories, with coordinated control over person identity and background. Fourth, to overcome the latency bottlenecks of commercial deployment, our system is heavily optimized for inference speed, delivering near real-time generation for a seamless user experience.

These capabilities come from an integrated system design that spans an end-to-end model architecture, a scalable data engine, robust infrastructure, and a multi-stage training paradigm. Extensive evaluation and large-scale production deployment show that Tstars-Tryon 1.0 achieves leading overall performance. To support future research, we also release a comprehensive benchmark. The model has been deployed at industrial scale in the Taobao App, where it serves millions of users across tens of millions of requests.

One-sentence Summary

The authors propose Tstars-Tryon 1.0, a commercial-scale virtual try-on system that utilizes a multi-stage training paradigm and a scalable data engine to deliver robust, photorealistic, and near real-time results across eight fashion categories, including tops, pants, skirts, dresses, coats, shoes, bags, and hats.

Key Contributions

- The paper introduces Tstars-Tryon 1.0, a commercial-scale virtual try-on system that utilizes an integrated design of end-to-end architecture, a scalable data engine, and a multi-stage training paradigm to achieve high success rates in challenging in-the-wild conditions such as extreme poses and motion blur.

- This work presents a general-purpose framework capable of flexible multi-image composition using up to six reference images across eight fashion categories, including tops, shoes, and bags, while maintaining photorealistic garment textures and coordinated control over person identity and background.

- The research provides a highly optimized system for near real-time inference and introduces a comprehensive benchmark to support future development, with successful large-scale deployment on the Taobao App serving millions of users.

Introduction

Virtual try-on technology is essential for modern e-commerce, yet existing academic models often fail to meet the demands of real-world commercial deployment. Current benchmarks are limited by simplistic studio backgrounds, a narrow focus on basic clothing categories, and a reliance on pristine flat-lay garment images that do not reflect the complex, unconstrained photos provided by actual users. The authors introduce Tstars-Tryon 1.0, a commercial-scale system designed to handle extreme poses, diverse lighting, and multi-item compositions across eight fashion categories including accessories. By integrating a scalable data engine with a multi-stage training paradigm, the authors achieve a robust balance between high-fidelity photorealism and the near real-time inference speeds required for large-scale industrial applications.

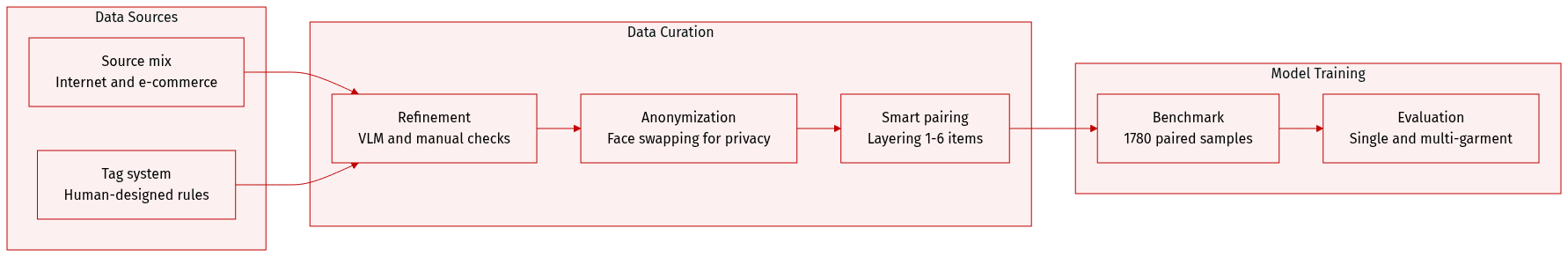

Dataset

The authors introduce the Tstars-VTON Benchmark, a large-scale dataset designed to evaluate virtual try-on models under commercial-grade standards.

- Dataset Composition and Sources: The authors collect data from the internet and proprietary e-commerce domains. The benchmark consists of 1,780 refined paired samples that cover 5 garment categories (tops, dresses, coats, pants, and skirts) and 3 accessory categories (shoes, hats, and bags). These are further divided into 465 fine-grained subcategories.

- Key Details and Diversity: The dataset is designed to support complex multi-item scenarios, where a sample can include between 1 and 6 layered items. It features diverse model characteristics, including a gender distribution of 74.9% female and 25.1% male, and age groups ranging from children to seniors. To increase difficulty, the authors include complex poses (29.6%) and intricate in-the-wild backgrounds (over 40%).

- Processing and Metadata Construction: The authors employ a three-stage pipeline for construction:

  - Collection: A hybrid retrieval strategy combines automated platform extraction with manual collection guided by a multi-dimensional tag system.

  - Refinement and Annotation: Data undergoes a two-stage tag retrieval and refinement process. Tags are initially derived from SKU metadata, refined by a VLM-based pipeline, and finalized through manual verification to ensure accuracy across 11 model tag dimensions and 13 garment tag dimensions.

  - Anonymization: To ensure privacy, all model portraits undergo a face-swapping process in which faces are matched to licensed surrogates based on skin tone, gender, and age.

- Pairing and Usage: The authors use a structured layering logic for the try-on pairing strategy. This ensures that outfit combinations follow realistic physical and semantic rules, such as gender matching and proper clothing layers. The benchmark supports both single-garment and multi-garment evaluation, including a fully unpaired setting that decouples the model and garment databases to maximize combinatorial diversity.
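The pairing rules described above could be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the `Garment` and `Model` types, the `SLOT` table, and the specific rules (gender match, at most one item per body slot, a dress excluding separate upper and lower pieces) are assumptions chosen for the example.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical slot table: each benchmark category occupies one body slot.
SLOT = {
    "tops": "upper", "coats": "outer", "pants": "lower", "skirts": "lower",
    "dresses": "full", "shoes": "feet", "hats": "head", "bags": "hand",
}


@dataclass(frozen=True)
class Garment:
    item_id: str
    category: str  # one of the eight benchmark categories
    gender: str    # "female", "male", or "unisex" (assumed label set)


@dataclass(frozen=True)
class Model:
    model_id: str
    gender: str


def is_valid_outfit(model: Model, items: list) -> bool:
    """Check an outfit against the assumed layering and matching rules."""
    # The benchmark describes samples with 1 to 6 layered items.
    if not 1 <= len(items) <= 6:
        return False
    # Gender matching: every item must suit the model or be unisex.
    if any(g.gender not in (model.gender, "unisex") for g in items):
        return False
    # Assumed layering rule: at most one item per body slot.
    slots = [SLOT[g.category] for g in items]
    if len(slots) != len(set(slots)):
        return False
    # Assumed rule: a full-body dress conflicts with separate upper or lower pieces.
    if "full" in slots and {"upper", "lower"} & set(slots):
        return False
    return True


def unpaired_candidates(models, garments):
    """Unpaired setting: cross every model with every garment, keep valid pairs."""
    return [(m, g) for m, g in product(models, garments) if is_valid_outfit(m, [g])]
```

Because the model and garment collections are passed in separately, `unpaired_candidates` mirrors the idea of decoupling the two databases: any model can be combined with any garment that passes the rules.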

Method

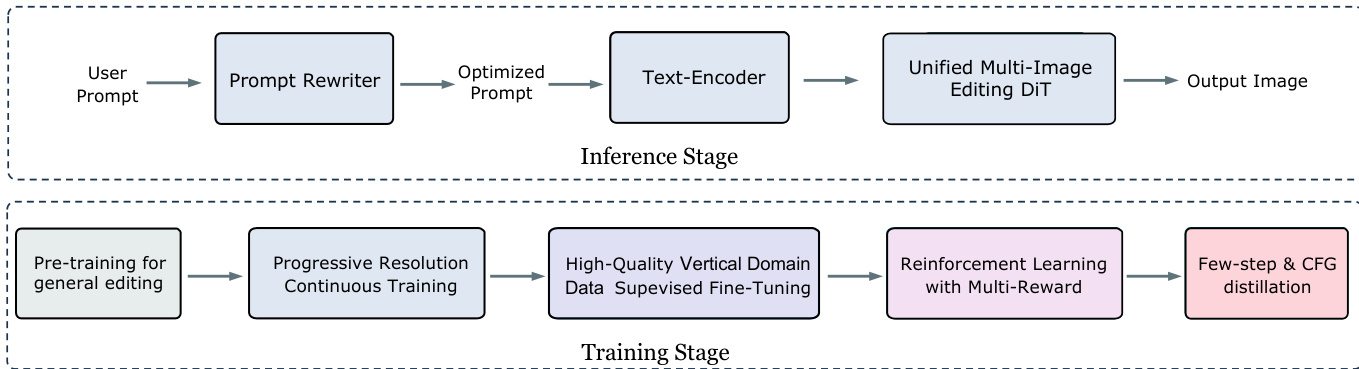

The authors describe their try-on model in two parts: a training stage and an inference stage. Training begins with pre-training on general editing tasks, followed by progressive-resolution continued training that strengthens the model's ability to produce high-resolution outputs. Next comes high-quality, vertical-domain supervised fine-tuning, in which the model is optimized on carefully curated data specific to the clothing domain. Reinforcement learning with multiple reward signals then further improves performance. Training concludes with few-step distillation and classifier-free guidance (CFG) distillation, which improve inference efficiency and output quality.
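For context on the final distillation step: classifier-free guidance normally evaluates the denoiser twice per sampling step, once with and once without the condition, and extrapolates between the two predictions. The sketch below shows that standard combination and counts model calls, to illustrate why folding guidance into a single forward pass and reducing the number of steps lowers inference cost. The `toy_denoiser` stand-in, the guidance scale, and the step counts are illustrative assumptions; the source does not specify the authors' distillation recipe.

```python
def cfg_predict(denoiser, x, cond, scale):
    """Standard CFG: uncond + scale * (cond - uncond). Requires two model calls."""
    uncond = denoiser(x, None)
    conditioned = denoiser(x, cond)
    return [u + scale * (c - u) for u, c in zip(uncond, conditioned)]


def model_calls(num_steps, uses_cfg):
    """Forward passes for one image: CFG doubles the per-step cost."""
    return num_steps * (2 if uses_cfg else 1)


def toy_denoiser(x, cond):
    """Stand-in for a real network; the conditional branch just shifts the output."""
    shift = 0.0 if cond is None else 1.0
    return [v + shift for v in x]


guided = cfg_predict(toy_denoiser, [0.0, 2.0], "red coat", scale=3.0)
teacher_cost = model_calls(num_steps=50, uses_cfg=True)   # hypothetical teacher
student_cost = model_calls(num_steps=4, uses_cfg=False)   # hypothetical student
```

With these made-up settings, the guided teacher would need 100 forward passes per image and the distilled student 4. The actual step counts used by Tstars-Tryon 1.0 are not given in this summary.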

Inference starts from a user prompt, which a prompt rewriter turns into an optimized prompt. A text encoder then encodes the optimized prompt, and the resulting representation conditions a unified multi-image editing DiT (Diffusion Transformer) that produces the final output image.
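The inference flow can be pictured as a composition of three components. The sketch below is only a schematic under stated assumptions: `TryOnPipeline`, its field names, and the stub callables are hypothetical and do not correspond to any published API. Whether the person image counts toward the six-image limit is not stated in the source; here it is assumed not to.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence


@dataclass
class TryOnPipeline:
    rewrite_prompt: Callable[[str], str]              # prompt rewriter
    encode_text: Callable[[str], Any]                 # text encoder
    edit_images: Callable[[Any, Sequence[Any]], Any]  # multi-image editing DiT
    max_references: int = 6                           # "up to 6 reference images"

    def __call__(self, user_prompt: str, person_image: Any,
                 reference_images: Sequence[Any]) -> Any:
        if not 1 <= len(reference_images) <= self.max_references:
            raise ValueError(
                f"expected 1 to {self.max_references} reference images, "
                f"got {len(reference_images)}"
            )
        optimized = self.rewrite_prompt(user_prompt)   # user prompt -> optimized prompt
        embedding = self.encode_text(optimized)        # optimized prompt -> embedding
        # The DiT receives the text embedding plus all input images.
        return self.edit_images(embedding, [person_image, *reference_images])


# Usage with trivial stubs in place of real models.
pipe = TryOnPipeline(
    rewrite_prompt=lambda p: p.strip().lower(),
    encode_text=lambda p: p.split(),
    edit_images=lambda emb, imgs: {"tokens": emb, "num_images": len(imgs)},
)
result = pipe("  Wear the RED coat ", "person.png", ["coat.png"])
```

Keeping the three components as injectable callables makes the order of operations explicit and lets each stage be swapped or tested in isolation.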

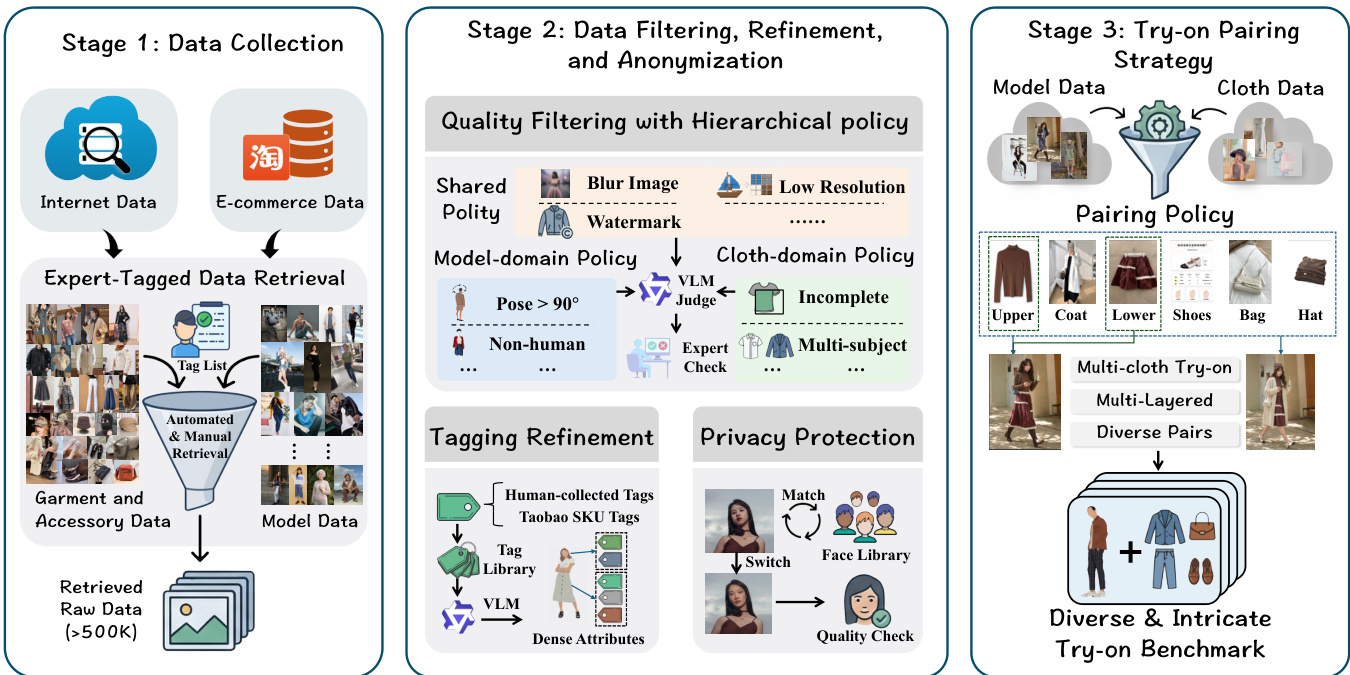

The model's training process is underpinned by a three-stage data pipeline:

- Stage 1, data collection: Raw data is gathered from internet sources and e-commerce platforms. It undergoes expert-tagged retrieval and a combination of automated and manual filtering to produce a large set of garment and accessory data, as well as model data.

- Stage 2, data filtering, refinement, and anonymization: Quality filtering uses hierarchical policies, such as a model-domain policy that filters based on pose and human presence, and a cloth-domain policy that checks for incomplete or multi-subject images. A VLM judge and an expert check validate the data. Tagging refinement ensures accurate attribute labeling, and privacy protection mechanisms, including face library matching and quality checks, are applied to safeguard user data.

- Stage 3, try-on pairing strategy: Model and cloth data are paired according to a pairing policy to generate diverse and intricate try-on benchmarks, including multi-cloth and multi-layered combinations.
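The Stage 2 quality filter can be read as two rule sets applied by domain. A minimal sketch follows, assuming hypothetical metadata fields (`domain`, `num_people`, `pose_visible`, `is_complete`, `num_subjects`) that stand in for whatever detectors and VLM judgments the authors actually use:

```python
def model_domain_policy(rec):
    """Keep model photos that show exactly one person with a usable pose."""
    return rec.get("num_people") == 1 and bool(rec.get("pose_visible"))


def cloth_domain_policy(rec):
    """Keep garment photos that show one complete item."""
    return bool(rec.get("is_complete")) and rec.get("num_subjects") == 1


# Hierarchical routing: the record's domain selects which policy applies.
POLICIES = {"model": model_domain_policy, "cloth": cloth_domain_policy}


def quality_filter(records):
    """Route each record to its domain policy; records with unknown domains are dropped."""
    kept = []
    for rec in records:
        policy = POLICIES.get(rec.get("domain"))
        if policy is not None and policy(rec):
            kept.append(rec)
    return kept


# Toy records, invented purely to exercise the filter.
sample = [
    {"id": "a", "domain": "model", "num_people": 1, "pose_visible": True},
    {"id": "b", "domain": "model", "num_people": 0, "pose_visible": False},
    {"id": "c", "domain": "cloth", "is_complete": True, "num_subjects": 1},
    {"id": "d", "domain": "cloth", "is_complete": True, "num_subjects": 2},
    {"id": "e", "domain": "other"},
]
kept_ids = [rec["id"] for rec in quality_filter(sample)]
```

In a pipeline like the one described, records that pass these automated rules would still go on to the VLM judge and expert check before being accepted.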

Experiment

The evaluation utilizes the Tstars-VTON Benchmark, covering single-garment and complex multi-garment scenarios, alongside academic benchmarks and human preference studies to validate commercial readiness. Results demonstrate that the model excels in maintaining identity consistency, background preservation, and intricate garment textures even under extreme poses or lighting. Notably, the system shows superior stability in multi-item coordination and cross-domain applications, such as dressing 3D avatars or anime characters, while maintaining significantly higher inference speeds than existing proprietary and open-source models.

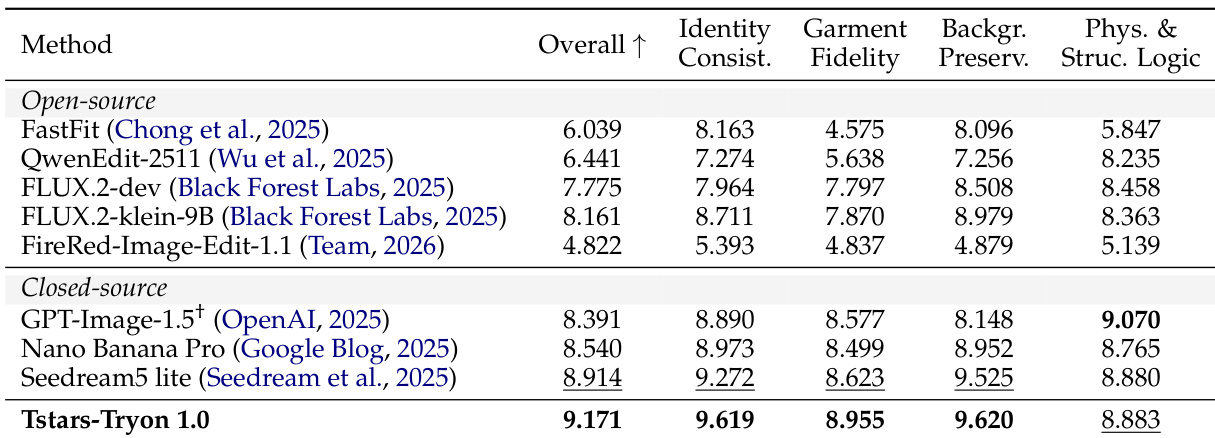

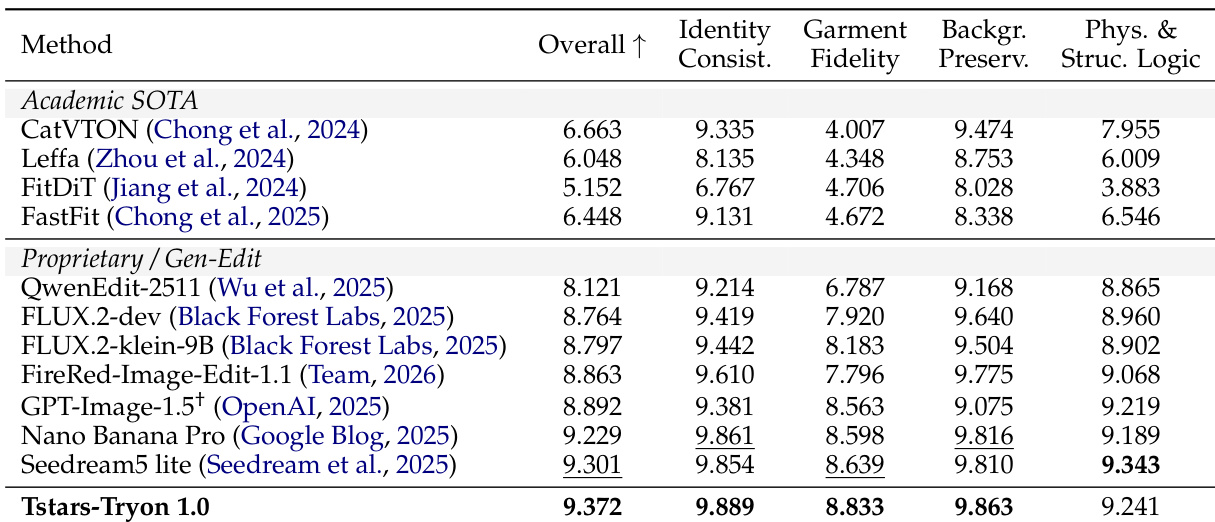

The authors evaluate Tstars-Tryon 1.0 against open-source and closed-source models on a comprehensive benchmark along five dimensions: overall quality, identity consistency, garment fidelity, background preservation, and physical and structural logic. Tstars-Tryon 1.0 achieves the highest score in every evaluated dimension, outperforming both specialized academic models and leading proprietary systems, particularly in complex multi-garment scenarios.

The authors compare Tstars-Tryon 1.0, which they position as a foundation model for virtual try-on, against state-of-the-art academic and commercial models. It achieves superior or competitive performance across multiple metrics, most notably garment fidelity and identity consistency, and sustains that performance in complex multi-garment scenarios that require intricate layering and coordination. The model also generalizes well, handling diverse inputs and complex instructions with high fidelity while preserving identity and background details.

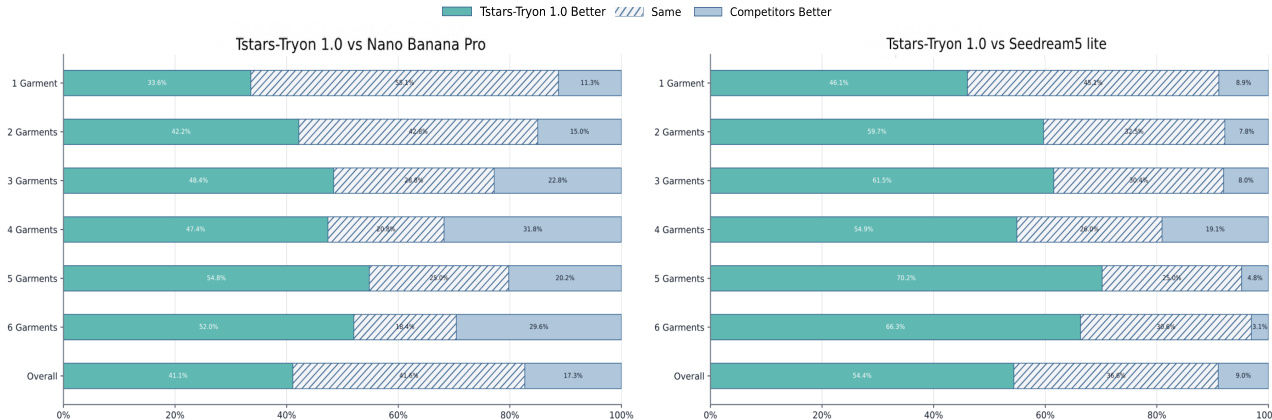

The authors compare Tstars-Tryon 1.0 against two leading proprietary models, Nano Banana Pro and Seedream5 lite, in a human evaluation across varying numbers of garments. Tstars-Tryon 1.0 consistently outperforms both competitors, and the gap widens as the number of garments increases: the competitors decline substantially in quality in multi-garment scenarios, while Tstars-Tryon 1.0 maintains high stability and fidelity. This indicates greater robustness under complex conditions.

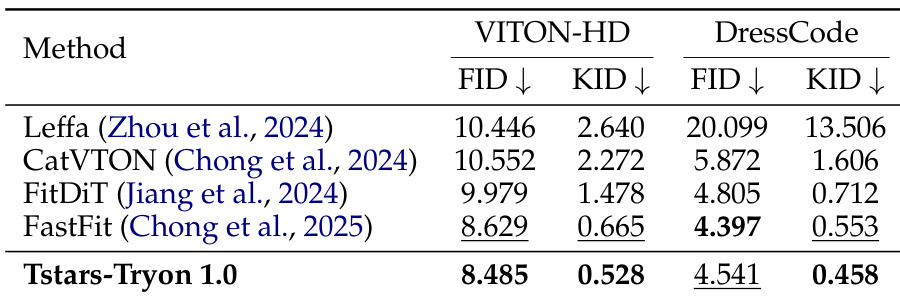

The authors report state-of-the-art results for Tstars-Tryon 1.0 on both single-garment and multi-garment try-on, outperforming specialized academic models and leading commercial systems. The model is particularly strong in complex multi-item generation, where it renders garments with high fidelity while keeping identity, pose, and background consistent. It also performs better than the compared methods on academic benchmarks despite not being trained on them, which indicates that it handles unseen data distributions effectively.

Tstars-Tryon 1.0 was evaluated against various open-source academic models and leading proprietary systems through comprehensive benchmarks and human assessments. The experiments validate the model's ability to maintain identity consistency, garment fidelity, and structural logic across both single and multi-garment scenarios. The findings demonstrate that Tstars-Tryon 1.0 provides superior robustness and generalization, particularly as task complexity increases, whereas competing models show significant performance declines in multi-item coordination.