
MulTaBench: A Benchmark for Multimodal Tabular Learning with Text and Images

Abstract

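Tabular foundation models have established state-of-the-art results in supervised tabular learning by using pretraining to learn versatile representations of numerical and categorical structured data. However, they do not natively support unstructured modalities such as text and images, and instead rely on frozen pretrained embeddings to process them. We show that, on established multimodal tabular learning benchmarks, fine-tuning the embeddings to the task improves performance. Yet existing benchmarks often focus on the mere co-occurrence of modalities, which produces high variance across datasets and masks the benefit of task-specific fine-tuning. To close this gap, we introduce MulTaBench, a benchmark of 40 datasets split evenly between image-tabular and text-tabular tasks. We focus on prediction tasks in which the modalities provide complementary predictive signals and generic embeddings lose critical information, so that task-aligned Target-Aware Representations are required. Experimental results show that the gains from fine-tuning target-aware representations generalize across:

- both text and image modalities,
- multiple tabular learners,
- encoder scales, and
- embedding dimensions.

MulTaBench spans high-impact domains such as healthcare and e-commerce and is the largest image-tabular benchmarking effort to date. The benchmark is designed to enable research on new architectures that combine joint modeling with target-aware representations, paving the way for new multimodal tabular foundation models.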

One-sentence Summary

MulTaBench is a benchmark of 40 datasets, split equally between image-tabular and text-tabular tasks and spanning domains such as healthcare and e-commerce. It shows that target-aware representation tuning, which aligns embeddings with the complementary predictive signals of each modality, outperforms frozen pretrained embeddings, with gains that generalize across tabular learners, encoder scales, and embedding dimensions.

Key Contributions

- MulTaBench, a benchmark comprising 40 datasets equally divided between image-tabular and text-tabular tasks. This benchmark addresses the high variance of prior evaluations by focusing on predictive tasks where modalities provide complementary signals, enabling rigorous assessment of target-aware tuning.

- Target-Aware Representations, a tuning approach that adapts pretrained encoders to the downstream objective instead of relying on static, frozen embeddings. Tuning shifts the encoder's attention toward task-relevant regions and recovers predictive information that generic embeddings discard.

- Experimental results demonstrate that target-aware tuning consistently improves performance across various tabular learners, encoder scales, and embedding dimensions. These findings confirm that the adaptation strategy generalizes effectively across text and image modalities in high-impact domains such as healthcare and e-commerce.

Introduction

Modern tabular foundation models have set new performance standards for structured data. However, they do not natively handle unstructured inputs such as text and images, and rely instead on frozen embeddings. This static approach creates a significant bottleneck in high-stakes domains such as healthcare and e-commerce, where generic representations often discard the fine-grained, task-specific signals required for accurate prediction. Existing benchmarks compound the problem by including datasets in which modalities merely co-occur rather than jointly inform the prediction. This inflates variance across datasets and obscures the value of joint modeling and target-aware tuning. To address these gaps, the authors introduce MulTaBench, a curated benchmark of 40 datasets filtered strictly for tasks where modalities provide complementary information and require target-aware representation tuning. Their experiments show that adapting embeddings to the prediction objective consistently outperforms frozen baselines across diverse architectures, establishing a rigorous standard for developing the next generation of multimodal tabular foundation models.

Dataset

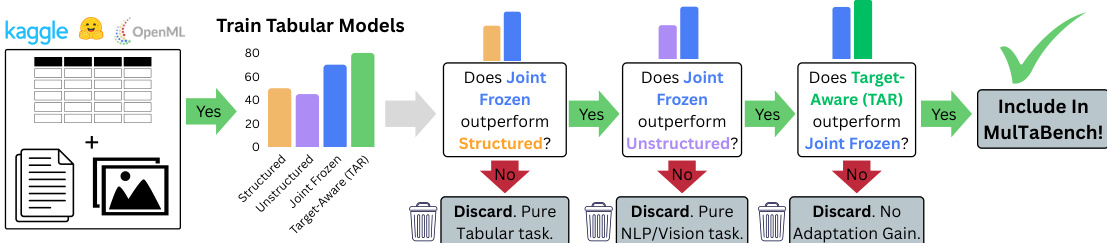

- Dataset Composition and Sources: The authors introduce MulTaBench, a benchmark of 40 multimodal tabular datasets divided equally between image-tabular and text-tabular tasks. The text-tabular candidate pool contains 56 unique datasets drawn from four established public benchmarks after deduplication. The image-tabular candidate pool combines 16 datasets sourced from the academic literature with additional datasets manually curated from Kaggle and other public repositories.

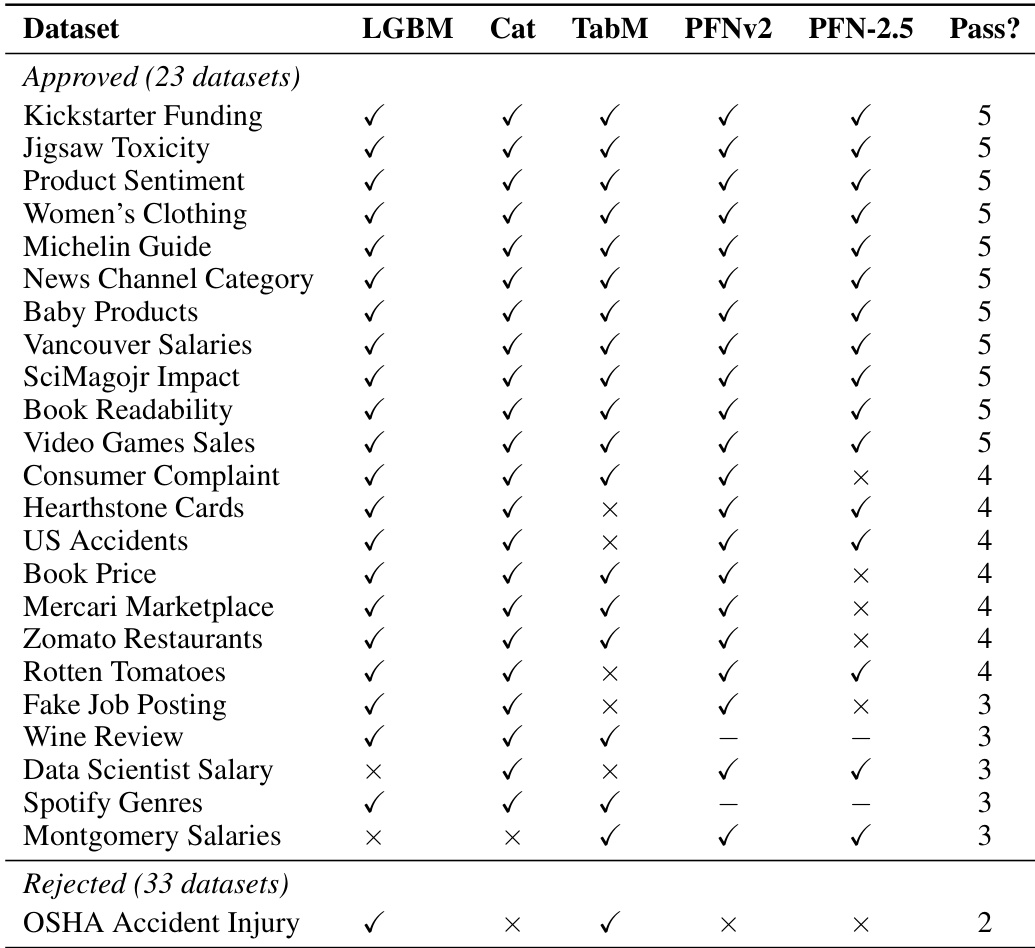

- Subset Details and Filtering Rules: Datasets range from 400 to 114,000 rows and from 1 to 245 structured features, with a balanced mix of classification and regression objectives. The authors apply a two-part curation pipeline:
  - Joint Signal requires each modality to contribute independent predictive value.
  - Task-awareness requires the target to depend on fine-grained cues that generic encoders miss, so that representation tuning is needed.

  About 41% of the text-tabular candidates satisfy both criteria, and the authors subsample 20 of them to match the size of the image subset. Only 5 of the 16 image-tabular literature candidates pass the filters, so the authors manually curate additional Kaggle datasets to complete the final 20.

- Data Usage and Processing: The authors distribute the benchmark through Kaggle with a unified loading API that standardizes data ingestion across all sources. They use it to compare Target-Aware Representations against frozen embeddings. AUC and R² are normalized to a zero-to-one scale so that scores are comparable across tasks, and 95% confidence intervals are reported. Rows with missing or corrupt images are dropped without imputation, and datasets with multiple image columns per entry are reduced to a single image.

- Cropping Strategy and Metadata Construction: Several datasets rely on targeted cropping, including mammography mass regions and lesion crops, to direct visual encoders toward diagnostically relevant zones. The authors apply log transformations to price-based regression targets and use quantile binning to discretize continuous values into multiclass labels. Structured columns that leak the target variable or dominate visual signals are removed to maintain genuine multimodal learning conditions. Text features are either retained as raw strings or pre-embedded into continuous vectors, while a flat image directory structure paired with relative paths ensures consistent metadata alignment during training.
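The target preprocessing above can be sketched in a few lines. This minimal version makes three assumptions: it uses `math.log1p` for the log transform, it takes equal-frequency cut points from `statistics.quantiles`, and the helper names and example prices are hypothetical. The number of bins each dataset uses is not given here.

```python
import math
from bisect import bisect_right
from statistics import quantiles


def log_transform(prices):
    """Compress heavy-tailed price targets; log1p keeps zero prices finite."""
    return [math.log1p(p) for p in prices]


def quantile_bin(values, n_bins):
    """Discretize continuous values into `n_bins` equal-frequency class labels.

    The cut points are the (n_bins - 1) quantiles of `values`. Each value's
    label is the number of cut points less than or equal to it, so labels
    range from 0 to n_bins - 1.
    """
    cuts = quantiles(values, n=n_bins)  # n_bins - 1 cut points
    return [bisect_right(cuts, v) for v in values]


prices = [5, 12, 30, 45, 80, 150, 300, 1200]  # hypothetical listing prices
labels = quantile_bin(log_transform(prices), n_bins=4)
print(labels)  # [0, 0, 1, 1, 2, 2, 3, 3]
```

Passing `n_bins=20` gives the equal-frequency discretization that the Method section applies to regression labels for the adaptation objective.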

Method

The authors use a multi-stage framework that integrates structured and unstructured features in tabular learning tasks, with a focus on robust, target-aware representations. The pipeline begins with a preprocessing step that adapts a pretrained encoder to produce target-aware representations (TAR). The top three layers of the encoder are fine-tuned with Low-Rank Adaptation (LoRA). A single linear head maps the 384-dimensional encoder output to the number of output classes. Fine-tuning uses only the training split; a stratified 90/10 train/validation split of it selects the best checkpoint, so no test data leaks into the representations. Settings are shared by DINO-v3-small and e5-small-v2 unless a value is given for each:

- LoRA: r = 16, α = 32, dropout 0.1.
- Optimizer: AdamW, batch size 256, weight decay 0.01.
- Learning rate: 10⁻⁴ for e5 and 10⁻³ for DINO.
- Epochs: up to 100 for DINO; up to 50 for e5. The e5 cap is lower because many datasets have several text columns, and each row contributes one training example per text column.
- Early stopping: training halts after three epochs without improvement in validation loss.

All hyperparameters are held constant across datasets to avoid per-dataset tuning.
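To make the LoRA hyperparameters above concrete, here is a minimal pure-Python sketch of a single LoRA-adapted linear layer. LoRA keeps the pretrained weight W frozen and learns a low-rank update. The layer computes h = W x + (α / r) · B A x, where A is r × d_in and B is d_out × r. With r = 16 and α = 32, the update is scaled by 2.

The class `LoRALinear` and the toy 2 × 3 weight are hypothetical illustrations, not the authors' code. In practice, a library such as Hugging Face PEFT would attach these adapters to the top three layers of DINO-v3-small or e5-small-v2. LoRA dropout (0.1 in the reported setup) would be applied to the input of the low-rank branch during training and is omitted here.

```python
import random


class LoRALinear:
    """Frozen linear layer W plus a trainable low-rank update scaled by alpha / r."""

    def __init__(self, weight, r, alpha, seed=0):
        self.weight = weight  # frozen pretrained weight, d_out x d_in
        d_out, d_in = len(weight), len(weight[0])
        rng = random.Random(seed)
        # A gets a small random init and B starts at zero, so B @ A = 0 and the
        # adapted layer initially reproduces the pretrained layer exactly.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scaling = alpha / r

    @staticmethod
    def _matvec(matrix, x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in matrix]

    def forward(self, x):
        base = self._matvec(self.weight, x)                     # W x (frozen path)
        update = self._matvec(self.B, self._matvec(self.A, x))  # B (A x) (low-rank path)
        return [b + self.scaling * u for b, u in zip(base, update)]


R, ALPHA = 16, 32                          # reported LoRA rank and alpha
W = [[1.0, 0.0, 2.0], [0.5, -1.0, 0.0]]    # toy 2 x 3 "pretrained" weight
layer = LoRALinear(W, r=R, alpha=ALPHA)
x = [1.0, 2.0, 3.0]
print(layer.scaling)      # 2.0
print(layer.forward(x))   # [7.0, -1.5], identical to W x while B is zero
```

Because B starts at zero, the adapted encoder initially reproduces the frozen encoder exactly. Fine-tuning then updates only A, B, and the linear head while W stays fixed.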

For regression tasks, the continuous label is discretized into 20 equal-frequency bins, and the adaptation objective is cross-entropy over these bins. This is more stable than fine-tuning directly on a regression loss because it reduces sensitivity to outliers. In text-tabular datasets, which often contain multiple text fields, the authors treat string features with at least 100 distinct values as text columns. For efficiency, a single e5 model is fine-tuned jointly across all such columns. Each row-column pair becomes a training example of the form "col_name : col_val", paired with the row's target label, so the model learns a shared representation across all text features. This sharing may degrade representation quality as the number of text columns grows, but fine-tuning a dedicated embedding model for each column would be computationally prohibitive.
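The text-column rule and the row-column example format described above can be sketched as follows. Several things here are assumptions:

- rows are plain Python dicts;
- `find_text_columns` and `build_text_examples` are hypothetical helper names;
- skipping missing text values is not stated in the source.

```python
def find_text_columns(rows, min_distinct=100):
    """Return string-valued columns with at least `min_distinct` distinct values."""
    columns = rows[0].keys() if rows else []
    text_cols = []
    for col in columns:
        values = [row[col] for row in rows if row[col] is not None]
        if values and all(isinstance(v, str) for v in values):
            if len(set(values)) >= min_distinct:
                text_cols.append(col)
    return text_cols


def build_text_examples(rows, text_cols, target):
    """One training example per (row, text column): ("col_name : col_val", label)."""
    examples = []
    for row in rows:
        for col in text_cols:
            if row[col] is not None:  # assumption: missing text values are skipped
                examples.append((f"{col} : {row[col]}", row[target]))
    return examples


# Hypothetical data: a free-text column, a low-cardinality string column, a label.
rows = [
    {"description": f"listing number {i}",
     "condition": ["new", "used", "refurbished"][i % 3],
     "label": i % 2}
    for i in range(120)
]
text_cols = find_text_columns(rows)
examples = build_text_examples(rows, text_cols, target="label")
print(text_cols)    # ['description']
print(examples[0])  # ('description : listing number 0', 0)
```

Each row yields one example per text column, so a dataset with k text columns produces k times as many fine-tuning examples as it has rows. This is consistent with the tighter epoch cap for e5. For regression targets, the label attached to each example would be the row's equal-frequency bin index rather than the raw value.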

Experiment

The evaluation uses a four-condition protocol that isolates unimodal and joint representations to curate datasets, validating that the selected datasets exhibit strong multimodal signal and task-awareness. Robustness analyses then confirm that these properties generalize across diverse tabular learners, larger embedding scales, and different dimensionality reductions, with target-aware representations consistently improving on frozen baselines. Qualitative attention maps further show that tuning redirects the model's focus toward semantically relevant features. Collectively, the experiments establish representation adaptation as a reliable and necessary preprocessing step for multimodal tabular learning, independent of the specific architecture or embedding capacity.

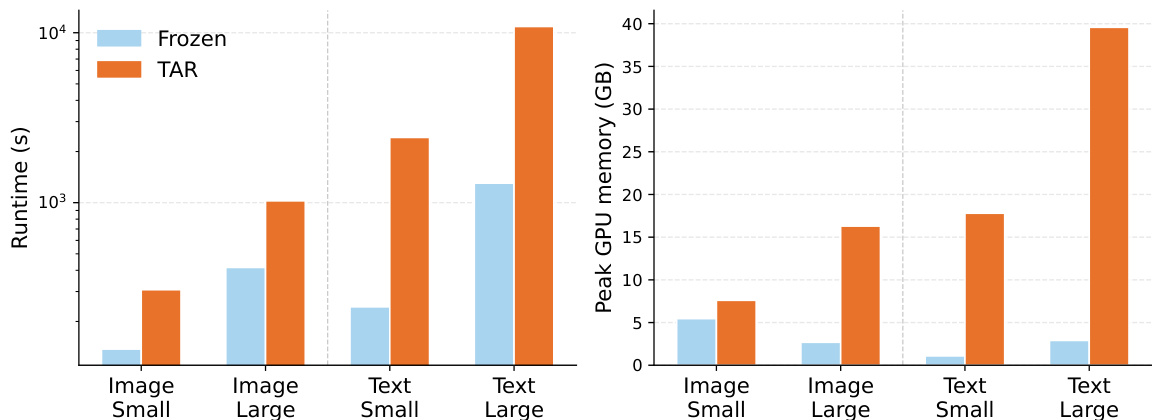

The authors analyze the computational cost of target-aware representation tuning (TAR) compared with frozen embeddings across modalities and encoder sizes. TAR significantly increases both runtime and peak GPU memory, primarily because of the encoder fine-tuning step. Text-based tasks require substantially more resources than image-based tasks under both the frozen and TAR conditions. Larger encoders raise runtime and peak GPU memory considerably, especially under TAR.

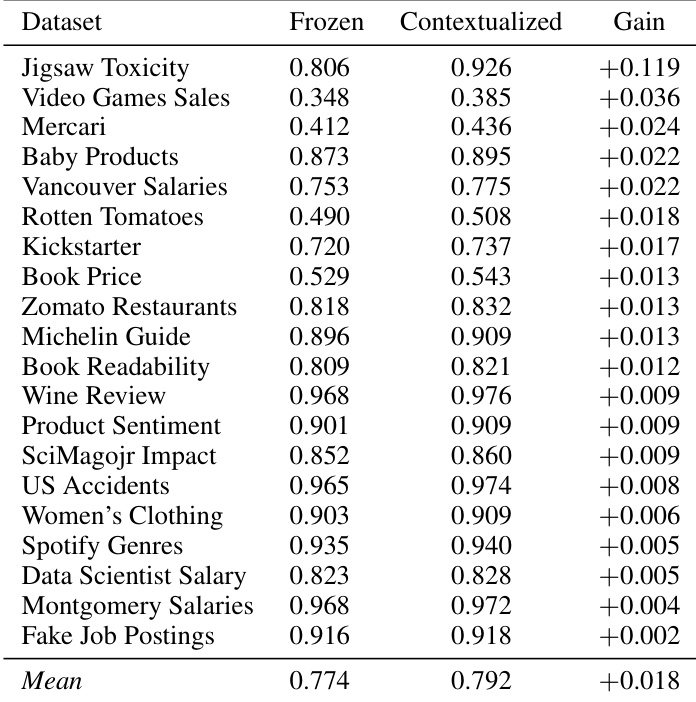

The authors compare tabular models using frozen versus target-aware (contextualized) representations across multiple datasets. Contextualized representations consistently outperform frozen ones, with gains observed across models and datasets. The average improvement is small but positive, indicating a general benefit from target-aware tuning.
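The normalization and confidence intervals mentioned in the Dataset section can be illustrated as follows. The source does not say how AUC and R² are mapped to a zero-to-one scale or how the intervals are computed. This sketch therefore assumes per-dataset min-max normalization across the compared configurations and a percentile bootstrap over datasets. `normalize_per_dataset`, `bootstrap_ci`, and all scores are hypothetical.

```python
import random
from statistics import mean


def normalize_per_dataset(scores):
    """Min-max normalize one dataset's raw scores (AUC or R²) to [0, 1].

    `scores` maps a configuration name to its raw score. Assumption: the best
    configuration on the dataset maps to 1.0 and the worst to 0.0.
    """
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:  # all configurations tied; avoid dividing by zero
        return {name: 1.0 for name in scores}
    return {name: (s - lo) / (hi - lo) for name, s in scores.items()}


def bootstrap_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile-bootstrap (1 - alpha) confidence interval for the mean."""
    rng = random.Random(seed)
    means = sorted(mean(rng.choices(values, k=len(values))) for _ in range(n_resamples))
    return means[int(alpha / 2 * n_resamples)], means[int((1 - alpha / 2) * n_resamples) - 1]


# Illustrative raw scores for three datasets (made-up numbers, not from the paper).
raw = [
    {"frozen": 0.71, "tar": 0.76, "tab_only": 0.65},  # e.g. AUC
    {"frozen": 0.42, "tar": 0.47, "tab_only": 0.30},  # e.g. R²
    {"frozen": 0.88, "tar": 0.87, "tab_only": 0.80},  # e.g. AUC
]
normalized = [normalize_per_dataset(d) for d in raw]
gains = [d["tar"] - d["frozen"] for d in normalized]
print(f"mean gain {mean(gains):.3f}, 95% CI {bootstrap_ci(gains)}")
```

Min-max normalization is only one plausible choice. With few configurations per dataset it stretches small raw differences, so normalizing against a wider reference set (for example, all learners and conditions) gives a less extreme scale.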

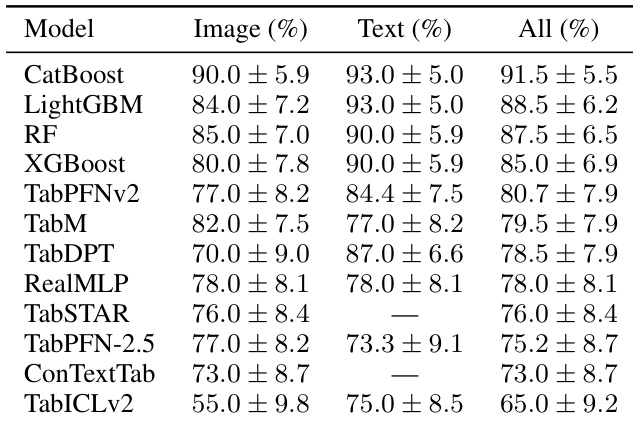

The authors evaluate multiple tabular models across image and text modalities to assess the effectiveness of target-aware representation tuning. Models consistently perform better with target-aware representations than with frozen representations. This holds across both modalities and across traditional and neural-network-based learners. The gains are robust to variations in embedding dimension and model scale, indicating that they do not depend on a specific architecture.

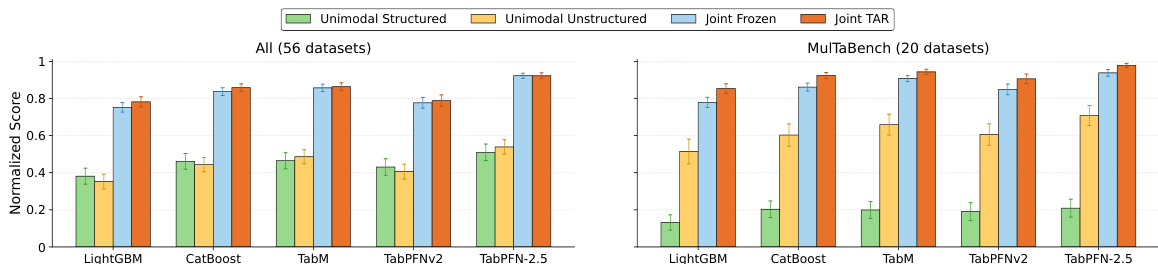

The authors evaluate the benchmark across multiple tabular learners, comparing unimodal and joint modeling with and without target-aware representation tuning. Joint modeling with target-aware representations consistently outperforms the other configurations across the evaluated models, for both text and image modalities. The ordering of configurations is stronger and more consistent on the curated subset than on the full pool of candidates. Target-aware representations also yield significant improvements over frozen embeddings.

The authors also evaluate each candidate dataset with multiple tabular learners under different experimental conditions to assess its suitability for multimodal tasks. A dataset passes curation if it meets both criteria, Joint Signal and Task-awareness, for at least three of five models. A subset of datasets consistently satisfies both criteria, indicating meaningful multimodal interaction and a benefit from target-aware representation tuning. Rejected datasets fail on at least one criterion, either because the modalities interact too weakly or because target-aware tuning brings no benefit. Most datasets that pass are validated consistently across multiple learners, confirming that the curation method identifies datasets where unstructured modalities contribute meaningfully to tabular prediction. The evaluation also shows that models with native multimodal support do not always outperform models that use target-aware representation tuning, which underscores the importance of the curation framework.
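The three-of-five curation rule can be sketched as a vote over learners. The sketch rests on the following assumptions, and the authors' exact comparisons and any significance thresholds may differ:

- each learner reports one normalized score per condition, under the hypothetical names `tab_only`, `unstructured_only`, `joint_frozen` and `joint_tar`;
- Joint Signal means the frozen joint model beats both unimodal baselines;
- Task-awareness means the joint model with target-aware representations beats the frozen joint model;
- both criteria must hold for the same learner for that learner to count as a vote.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ConditionScores:
    """Normalized scores of one tabular learner on one dataset (hypothetical names)."""
    tab_only: float           # structured features only
    unstructured_only: float  # image or text embedding only
    joint_frozen: float       # structured features + frozen embeddings
    joint_tar: float          # structured features + target-aware representations


def joint_signal(s: ConditionScores) -> bool:
    # Assumption: joint modeling must beat both unimodal baselines.
    return s.joint_frozen > max(s.tab_only, s.unstructured_only)


def task_aware(s: ConditionScores) -> bool:
    # Assumption: target-aware tuning must beat frozen embeddings.
    return s.joint_tar > s.joint_frozen


def passes_curation(per_learner: dict[str, ConditionScores], min_votes: int = 3) -> bool:
    """Keep a dataset if both criteria hold for at least `min_votes` learners."""
    votes = sum(joint_signal(s) and task_aware(s) for s in per_learner.values())
    return votes >= min_votes


good = ConditionScores(tab_only=0.60, unstructured_only=0.55, joint_frozen=0.70, joint_tar=0.78)
no_gain = ConditionScores(tab_only=0.60, unstructured_only=0.55, joint_frozen=0.70, joint_tar=0.69)
dataset_a = {"learner_1": good, "learner_2": good, "learner_3": good,
             "learner_4": no_gain, "learner_5": no_gain}
dataset_b = {"learner_1": good, "learner_2": good, "learner_3": no_gain,
             "learner_4": no_gain, "learner_5": no_gain}
print(passes_curation(dataset_a), passes_curation(dataset_b))  # True False
```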

The experiments compare target-aware representation tuning with frozen embeddings across tabular learners, modalities, and modeling configurations, assessing computational cost, predictive performance, and dataset suitability. Target-aware tuning substantially increases computational overhead, particularly for text-based tasks and larger encoders. In return, it yields robust performance improvements across diverse architectures and modalities. Joint modeling with these contextualized representations performs best, especially on curated datasets with strong multimodal interaction. Overall, the findings indicate that combining careful dataset curation with target-aware tuning effectively exploits unstructured data for tabular prediction, often surpassing models with native multimodal architectures.