TMAS: Scaling Test-Time Compute via Multi-Agent Synergy

George Wu Nan Jing Qing Yi Chuan Hao Ming Yang Feng Chang Yuan Wei Jian Yang Ran Tao Bryan Dai

Abstract

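Test-time scaling has become an effective paradigm for improving the reasoning ability of large language models by allocating additional computation at inference time. Recent structured approaches have advanced this paradigm further by organizing inference across multiple reasoning trajectories, refinement rounds, and verification-based feedback. However, existing structured test-time scaling methods either coordinate parallel reasoning trajectories only weakly or rely on noisy historical information without explicitly deciding what should be retained and reused, which limits their ability to balance exploration and exploitation. In this work, we propose TMAS, a framework for scaling test-time compute through multi-agent synergy. TMAS organizes inference as a collaborative process among specialized agents, enabling structured information flow across agents, trajectories, and refinement iterations. To support effective cross-trajectory collaboration, TMAS introduces hierarchical memories: the experience bank reuses reliable low-level intermediate conclusions and local feedback, while the guideline bank records previously explored high-level strategies and thereby steers later rollouts away from redundant reasoning patterns. In addition, we design a hybrid reward reinforcement learning scheme tailored to TMAS, which jointly preserves basic reasoning competence, promotes experience utilization, and encourages exploration beyond previously attempted solution strategies. Extensive experiments on challenging reasoning benchmarks show that TMAS achieves stronger iterative scaling performance than existing test-time scaling baselines, and that training with hybrid rewards further improves the effectiveness and stability of scaling across iterations. Code and data are available at https://github.com/george-QF/TMAS-code.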

One-sentence Summary

The authors propose TMAS, a multi-agent synergy framework that scales test-time compute for large language model reasoning by coordinating specialized agents across inference trajectories, employing hierarchical experience and guideline banks to explicitly retain reliable intermediate conclusions and high-level strategies that balance exploration and exploitation during iterative refinement.

Key Contributions

- This work proposes TMAS, a framework for scaling test-time compute via multi-agent synergy that explicitly organizes information flow across agents, trajectories, and iterations. The framework introduces hierarchical memories, utilizing an experience bank to reuse reliable low-level signals and a guideline bank to record high-level strategies for balancing exploitation and exploration.

- A tailored hybrid reward reinforcement learning scheme optimizes three complementary objectives: preserving basic reasoning competence, enhancing experience utilization, and encouraging exploration beyond previously attempted strategies. This training design enables the model to effectively exploit the collaborative memory structure while maintaining sufficient exploration during iterative refinement.

- Extensive experiments on challenging reasoning benchmarks demonstrate that TMAS achieves stronger iterative scaling performance compared to existing test-time scaling baselines. The results further indicate that the hybrid reward scheme improves scaling effectiveness and stability across refinement rounds.

Introduction

Test-time scaling improves large language model reasoning by allocating additional computation during inference, a capability that has become essential for tackling complex analytical tasks. Despite recent advances, existing structured methods either coordinate parallel reasoning trajectories only weakly or rely on unfiltered historical data, which propagates noisy signals and fails to balance exploration with exploitation. The authors propose TMAS, a multi-agent synergy framework that restructures inference as a coordinated iterative process. They introduce hierarchical memory banks that separately preserve reliable intermediate conclusions and high-level exploration strategies, while a custom hybrid reward reinforcement learning scheme trains the model to reuse shared experience efficiently and pursue diverse solution paths.

Dataset

- Dataset Composition & Sources: The authors construct a cold-start training pool by combining open-source RL datasets (DAPO and Skywork-OR1) with two mathematical reasoning benchmarks (IMO-AnswerBench and HLE-Math-100).

- Subset Details & Filtering: The training data is organized into three task-specific subsets: 1.6K instances for Experience Utilization, 0.6K for Novel Strategy Exploration, and 2.2K for Standard Correctness Reward. For evaluation, they filter the original 400-problem IMO-AnswerBench using Qwen3-4B-Thinking-2507, retaining only the 50 problems solved correctly fewer than two times across eight independent inference runs. The HLE-Math-100 dataset is adopted directly from the RSE release without modification.

- Training Usage & Context Processing: To align with hybrid RL objectives, the team uses DeepSeek-V3.2 as a teacher model to simulate iterative inference on each problem. They generate multi-round rollout histories and distill these trajectories into historical context, experience banks, and guideline banks. This distillation process creates contextualized training examples that initialize the model before RL fine-tuning.

- Evaluation & Metadata Setup: During assessment, DeepSeek-V3.2 serves as an LLM-as-Judge to verify mathematical equivalence between generated solutions and reference answers. The authors run four independent judgment passes per solution, apply strict equivalence rules that ignore formatting variations and focus solely on final results, and compute a final Pass@1 accuracy by averaging the success rates across all judge runs.
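The filtering rule and the judge-averaged metric described in the list above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' evaluation code: the function names, the `correct_counts` mapping, and the `verdicts` layout (one list of boolean verdicts per judge run) are assumptions introduced here.

```python
from statistics import mean
from typing import Dict, List


def filter_hard_problems(correct_counts: Dict[str, int], max_correct: int = 1) -> List[str]:
    """Keep problems solved correctly fewer than two times (i.e. at most once).

    correct_counts maps a problem id to the number of correct answers
    observed across the independent inference runs (eight in the text).
    """
    return [pid for pid, count in correct_counts.items() if count <= max_correct]


def judged_pass_at_1(verdicts: List[List[bool]]) -> float:
    """Average the success rate over independent judge runs.

    verdicts[j][k] is judge run j's verdict on solution k (hypothetical layout).
    """
    per_run_rates = [mean([1.0 if ok else 0.0 for ok in run]) for run in verdicts]
    return mean(per_run_rates)
```

For example, four judge runs over two solutions with success rates 0.5, 1.0, 0.0, and 0.5 average to a Pass@1 of 0.5.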

Method

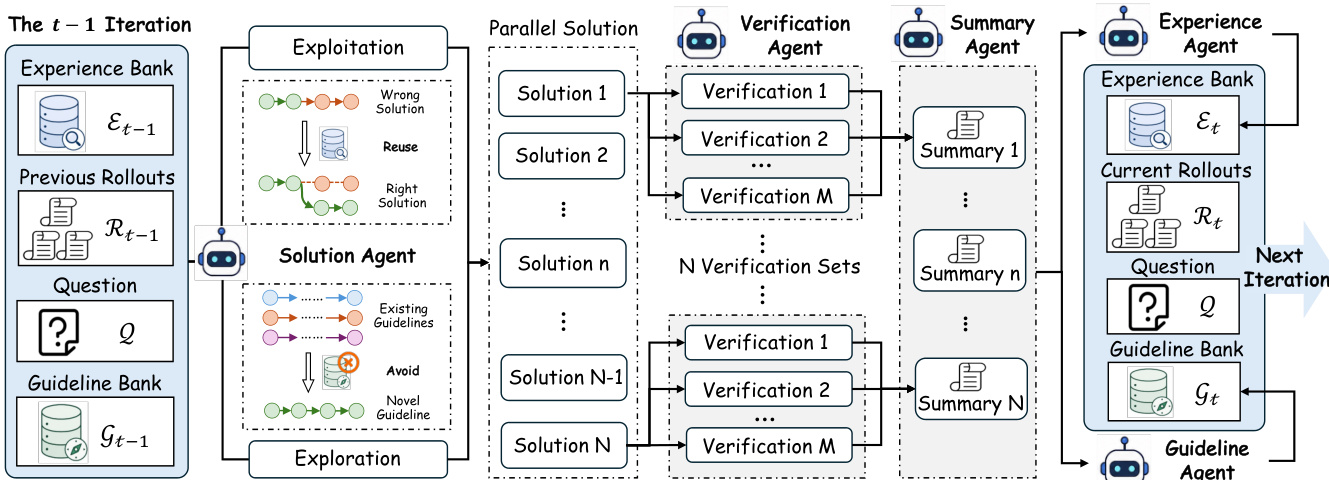

The TMAS framework orchestrates multi-agent collaboration to scale test-time compute through iterative reasoning, integrating parallel exploration with sequential exploitation. The overall system operates in an iterative pipeline where multiple solution trajectories are generated in parallel at each iteration, verified, summarized, and used to update two complementary memory banks. This process enables coordinated refinement and non-redundant exploration across iterations.

At each iteration $t$, the system begins with a problem $Q$ and leverages two memory banks: the experience bank $E_{t-1}$, which stores low-level, trajectory-specific reasoning signals such as verified intermediate conclusions and error-avoidance heuristics, and the guideline bank $G_{t-1}$, which holds high-level solution strategies and structural insights from prior exploration. These memory banks condition the solution generation process, balancing exploitation of proven knowledge with exploration of novel paths.
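One way to picture the two banks is as small containers whose contents are rendered into the solver's prompt. The sketch below is an assumption-laden illustration: the class names, field names, and prompt wording are hypothetical, and the source does not specify how the banks are stored or serialized.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ExperienceBank:
    """Low-level signals: verified intermediate conclusions and pitfalls to avoid."""
    verified_conclusions: List[str] = field(default_factory=list)
    pitfalls: List[str] = field(default_factory=list)


@dataclass
class GuidelineBank:
    """High-level strategies already attempted in earlier iterations."""
    strategies: List[str] = field(default_factory=list)


def exploit_context(question: str, bank: ExperienceBank) -> str:
    """Render a prompt that asks the solver to reuse trusted low-level results."""
    lines = [f"Problem: {question}", "Verified intermediate conclusions:"]
    lines += [f"- {c}" for c in bank.verified_conclusions]
    lines.append("Known pitfalls to avoid:")
    lines += [f"- {p}" for p in bank.pitfalls]
    return "\n".join(lines)


def explore_context(question: str, bank: GuidelineBank) -> str:
    """Render a prompt that lists tried strategies so the solver can pick a new one."""
    lines = [f"Problem: {question}", "Strategies already tried (propose a different one):"]
    lines += [f"- {s}" for s in bank.strategies]
    return "\n".join(lines)
```

Keeping the two contexts separate mirrors the split described above: the experience bank feeds exploitation, while the guideline bank is used to steer generation away from strategies that were already explored.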

The core of the inference process is driven by five specialized agents. The solution agent $A_{\text{sol}}$ generates $N$ candidate solution trajectories $\{c_{t,i}\}_{i=1}^{N}$ in parallel. This generation follows an $\epsilon$-greedy strategy: with probability $1-\epsilon$, it exploits previous rollouts and experience by sampling from $A_{\text{sol}}(Q, R_{t-1}, E_{t-1})$; with probability $\epsilon$, it explores new directions by sampling from $A_{\text{sol}}(Q, G_{t-1})$, guided by the high-level strategies in the guideline bank. This mechanism directly coordinates exploration and exploitation.
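The $\epsilon$-greedy choice can be sketched as a per-trajectory coin flip. Whether the choice is made per trajectory or once per iteration is not stated in the text, so the per-trajectory version here is an assumption, as are the function name and the two solver callables standing in for $A_{\text{sol}}$.

```python
import random
from typing import Callable, List, Sequence


def sample_candidates(
    question: str,
    prev_rollouts: Sequence[str],
    experience: Sequence[str],
    guidelines: Sequence[str],
    solve_exploit: Callable[[str, Sequence[str], Sequence[str]], str],
    solve_explore: Callable[[str, Sequence[str]], str],
    n: int,
    epsilon: float,
    rng: random.Random,
) -> List[str]:
    """Generate n candidates, exploring with probability epsilon for each one."""
    candidates = []
    for _ in range(n):
        if rng.random() < epsilon:
            # Explore: condition only on the guideline bank G_{t-1}.
            candidates.append(solve_explore(question, guidelines))
        else:
            # Exploit: condition on previous rollouts R_{t-1} and experience E_{t-1}.
            candidates.append(solve_exploit(question, prev_rollouts, experience))
    return candidates
```

In a real system the loop body would dispatch model calls in parallel; the sequential loop is used only to keep the sketch short.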

As shown in the framework figure, each candidate solution $c_{t,i}$ is then independently verified by $M$ verification agents, producing a verification set $V_{t,i}$. The verification outputs provide analytical feedback and a grading score for each step. A summary agent aggregates these results into a concise rollout-level summary $s_{t,i}$, which highlights validated reasoning and identifies logical flaws. The collection of all rollouts, $R_t = \{(c_{t,i}, s_{t,i})\}_{i=1}^{N}$, serves as the input for the memory update phase.
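The verify-then-summarize step can be illustrated as follows. This is a simplified stand-in rather than the paper's agents: each verifier is reduced to a callable returning one feedback string and one score (the text describes per-step scores), the summary agent is replaced by a plain aggregation, and the 0 to 1 score scale and `flaw_threshold` are assumptions.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, List


@dataclass
class Verification:
    feedback: str
    score: float  # assumed scale: 0.0 (wrong) to 1.0 (fully correct)


@dataclass
class RolloutSummary:
    mean_score: float
    flaws: List[str]


Verifier = Callable[[str], Verification]


def verify(candidate: str, verifiers: List[Verifier]) -> List[Verification]:
    """Run M independent verifiers on one candidate, giving the set V_{t,i}."""
    return [verifier(candidate) for verifier in verifiers]


def summarize(verifications: List[Verification], flaw_threshold: float = 0.5) -> RolloutSummary:
    """Condense a verification set into a rollout-level summary s_{t,i}."""
    scores = [v.score for v in verifications]
    flaws = [v.feedback for v in verifications if v.score < flaw_threshold]
    return RolloutSummary(mean_score=mean(scores), flaws=flaws)
```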

Two parallel memory update agents operate on $R_t$. The experience agent $A_{\text{exp}}$ extracts reusable low-level patterns, such as shared intermediate steps and common error pitfalls, to update the experience bank $E_t$. The guideline agent $A_{\text{guide}}$ abstracts the high-level solution approaches attempted across the rollouts to update the guideline bank $G_t$. This hierarchical memory structure serves as the communication substrate, allowing specialized agents to share local evidence and propagate global strategies, thereby converting independent parallel trajectories into a coordinated iterative reasoning process. The updated memory banks are carried forward to the next iteration, conditioning the solution agent's future actions.
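Putting the stages together, the outer loop might look like the sketch below. Every agent is passed in as a plain callable so the control flow is visible; the function name, the callable signatures, and the choice to start with empty rollouts and empty banks are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

Rollout = Tuple[str, str]  # (candidate c_{t,i}, summary s_{t,i})


def run_tmas(
    question: str,
    generate: Callable[[str, List[Rollout], List[str], List[str]], List[str]],
    review: Callable[[str], str],
    update_experience: Callable[[List[str], List[Rollout]], List[str]],
    update_guidelines: Callable[[List[str], List[Rollout]], List[str]],
    num_iterations: int,
) -> Tuple[List[Rollout], List[str], List[str]]:
    """Iterate generate -> verify/summarize -> memory update."""
    rollouts: List[Rollout] = []  # R_0, assumed empty
    experience: List[str] = []    # E_0, assumed empty
    guidelines: List[str] = []    # G_0, assumed empty
    for _ in range(num_iterations):
        # Solution agent: N candidates conditioned on R_{t-1}, E_{t-1}, G_{t-1}.
        candidates = generate(question, rollouts, experience, guidelines)
        # Verification and summary agents, collapsed into one callable here.
        rollouts = [(c, review(c)) for c in candidates]
        # Experience and guideline agents both read the same R_t.
        experience = update_experience(experience, rollouts)
        guidelines = update_guidelines(guidelines, rollouts)
    return rollouts, experience, guidelines
```

Because both update callables read the same rollouts and write to separate banks, they could run concurrently, which matches the description of two parallel memory update agents.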

Experiment

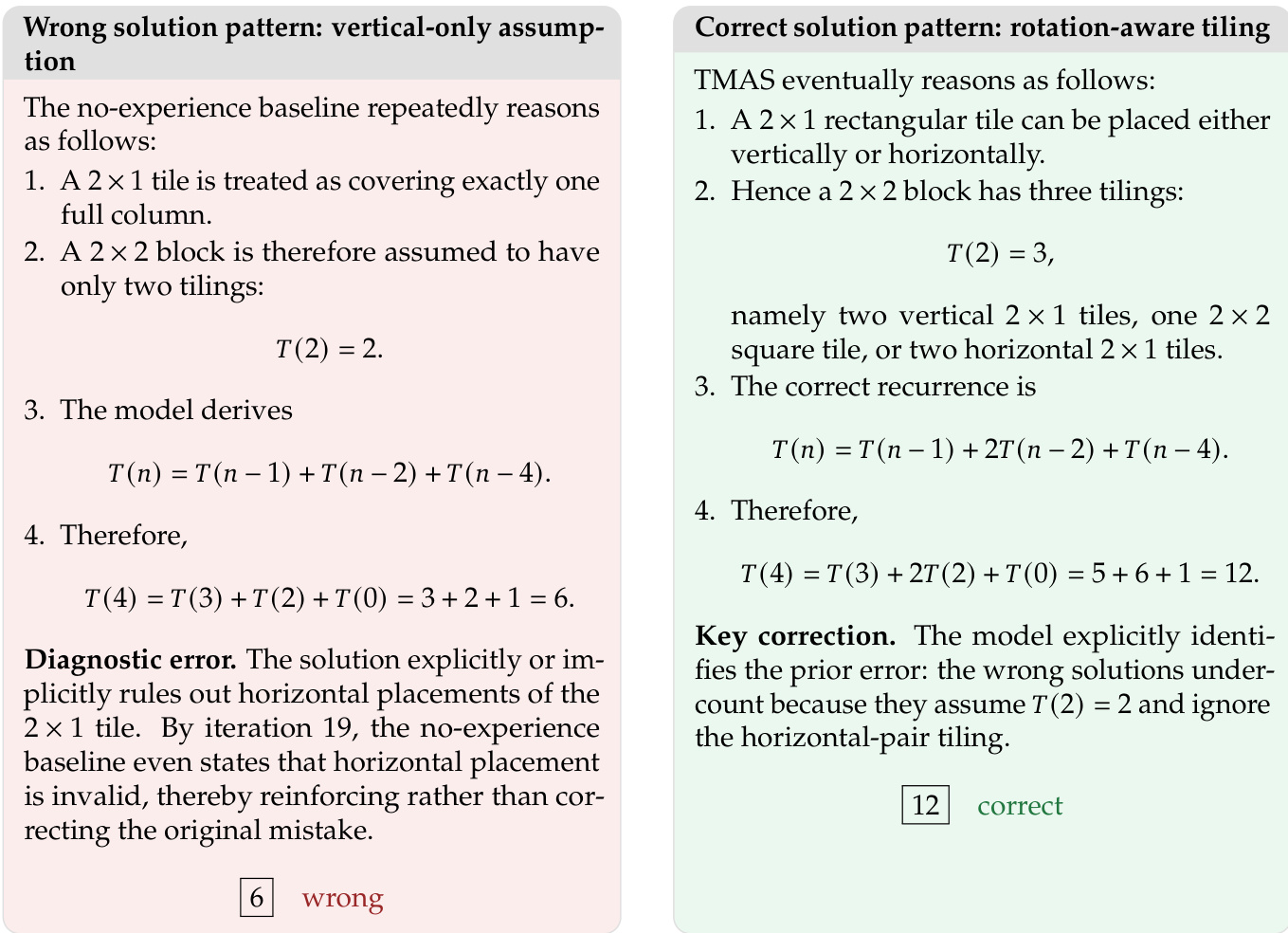

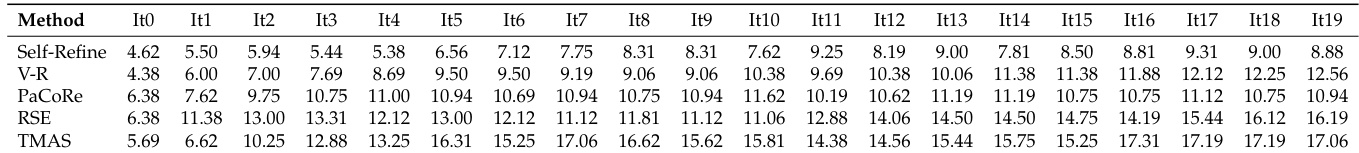

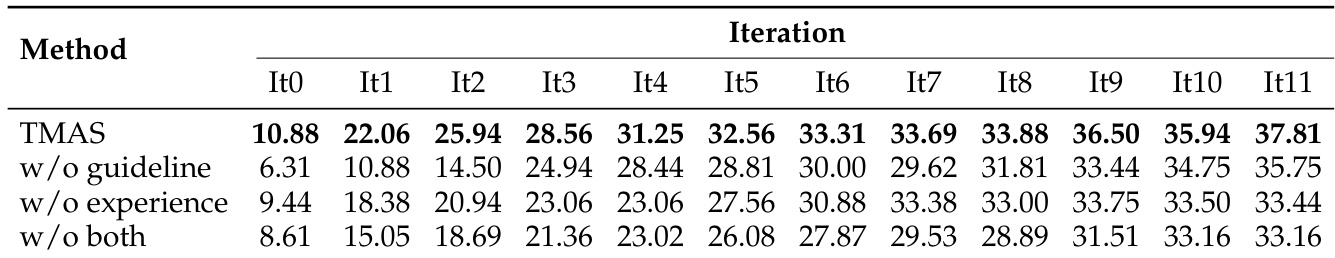

Evaluated on challenging mathematical reasoning benchmarks against established test-time scaling baselines, the primary experiments validate TMAS's superior capacity to sustain iterative performance gains without the plateauing observed in competing methods. Component ablations and sensitivity analyses further validate the complementary roles of strategic guidelines and historical experience, while confirming that moderate exploration and verification budgets optimally balance discovery with exploitation. Additional studies demonstrate that hybrid reward reinforcement learning stabilizes early progress and mitigates late-stage degradation, though they also reveal that a shared capability boundary between generation and verification ultimately constrains scaling on the most difficult problems.

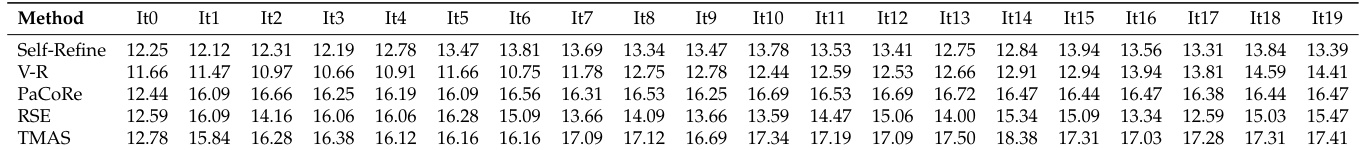

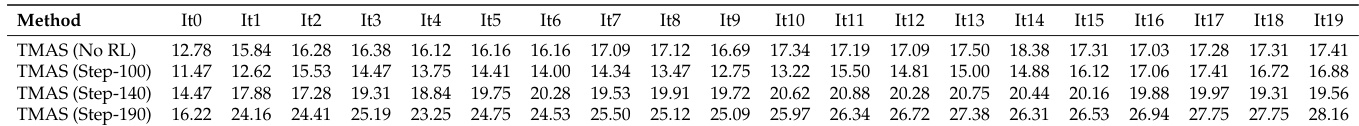

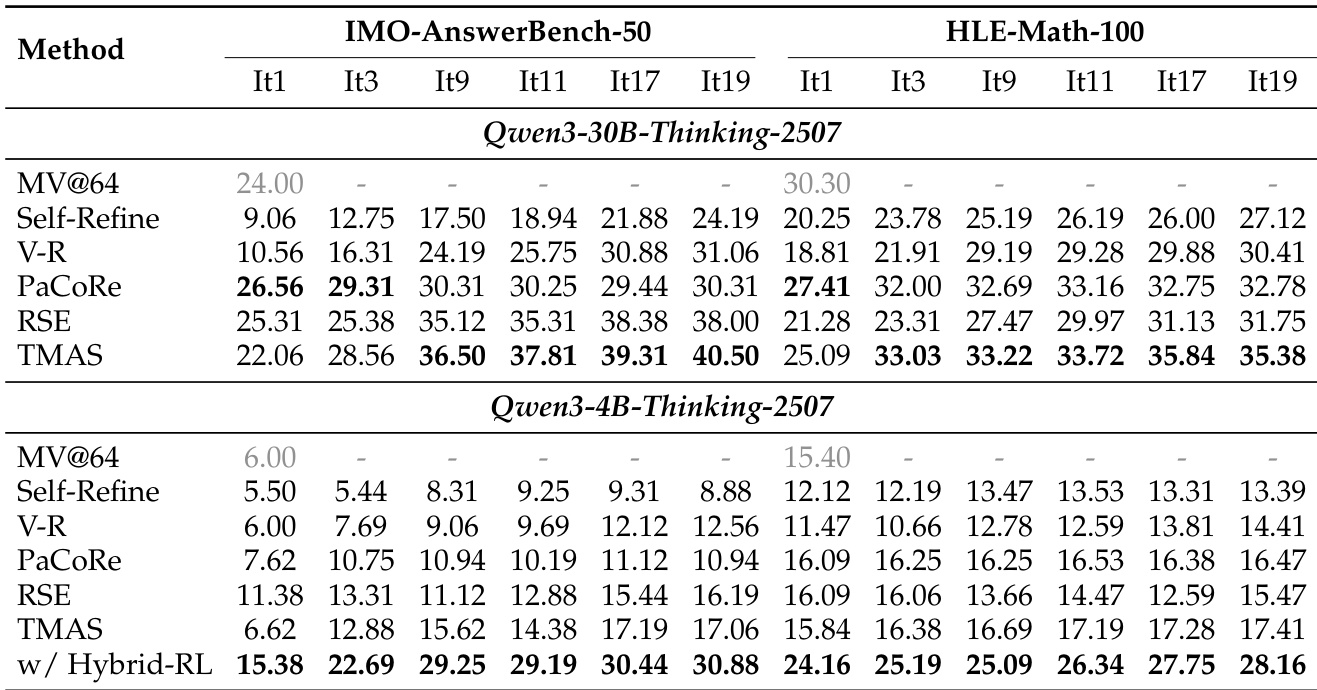

The authors evaluate TMAS on two reasoning benchmarks, comparing it against several test-time scaling baselines and analyzing its iterative scaling behavior. TMAS continues to improve as refinement rounds increase, whereas competing methods plateau or degrade, and it achieves the best late-stage performance.

Hybrid reward RL further strengthens iterative scaling. It improves performance across stages, with the largest benefit in later iterations, where it mitigates degradation. It also narrows the gap between smaller and larger base models, allowing smaller models to approach the performance of larger ones through more effective iterative test-time computation.

The ablation study shows that the experience and guideline modules are complementary. Removing either one degrades performance: guidelines aid early progress, while experience supports late-stage refinement, and the full system outperforms variants missing either component.

Evaluated on two reasoning benchmarks, the experiments validate TMAS's superior iterative scaling against baseline methods, demonstrating consistent performance gains across multiple refinement rounds without the plateauing observed in competing approaches. The integration of hybrid reward reinforcement learning significantly amplifies these sustained improvements while effectively narrowing the performance gap between smaller and larger base models. Ablation studies further confirm the essential synergy between the guideline and experience modules, showing that their complementary contributions drive optimal early-stage guidance and late-stage refinement. Collectively, these findings establish TMAS as a robust framework that efficiently leverages test-time computation to achieve scalable and sustained reasoning improvements.