Command Palette

Search for a command to run...

ポジティブ・アラインメント:人間 flourishing のための人工知能

ポジティブ・アラインメント:人間 flourishing のための人工知能

概要

既存の整列(アラインメント)研究は、安全性の確保や危害の防止、すなわちセーフガード、制御可能性、遵守性に関する懸念に支配されている。このアラインメントのパラダイムは、初期の心理学が精神疾患に焦点を当てていたことと類似しており、必要ではあるが不十分である。私たちが提唱する「ポジティブ・アラインメント(Positive Alignment)」とは、(i)多元的で多中心的、文脈依存的かつユーザー作成された方法により、人間および生態系の繁栄を積極的に支援しつつ、(ii)安全性と協調性を維持するAIシステムの開発である。これはAI整列研究において独自かつ不可欠な議題である。我々は、既存のアラインメントのいくつかの失敗例(例:エンゲージメントのハッキング、人間のエージェンシー(自律性)の喪失、真理探究における失敗、低い認識的謙虚さ、誤り訂正の不足、多様な視点の欠如、および能動的而非消極的な対応)は、美徳の涵養や人間繁栄の最大化を含むポジティブ・アラインメントを通じて、より適切に解決可能被ると主張する。さらに、LLMおよびAgentのライフサイクルの各フェーズにおけるさまざまな課題、未解決の質問、技術的 Direction(例:データのフィルタリングとアップサンプリング、preおよびpostトレーニング、評価、協調的価値収集)について言及する。

One-sentence Summary

The authors propose Positive Alignment, a distinct research agenda shifting focus from safety and harm prevention to actively supporting human and ecological flourishing through cultivating virtues, context-sensitive user-authored design, and evaluations across the LLM and agents lifecycle to address alignment failures such as engagement hacking while ensuring systems remain safe, cooperative, and supportive of human autonomy.

Key Contributions

- This paper introduces Positive Alignment as a distinct agenda focused on developing AI systems that actively support human and ecological flourishing while remaining safe and cooperative. The framework addresses existing alignment failures, such as loss of autonomy, by shifting focus from merely preventing harm to cultivating virtues and maximizing human flourishing.

- Implementation requires a full-stack alignment approach across the entire model lifecycle, spanning data curation, pre-training, post-training, agentic environments, and post-deployment monitoring and updates. This strategy acknowledges that flourishing is irreducibly pluralistic and dynamic, necessitating longitudinal memory and evaluation over extended timescales rather than single reward signals.

- Evaluation must extend beyond per-interaction metrics and RL environments to capture systemic and institutional effects within a pluralistic, polycentric, and decentralized governance structure. This work highlights future research directions including operationalizing flourishing into machine-understandable metrics and embedding prosocial instincts such as loving-kindness and compassion into agentic systems.

Introduction

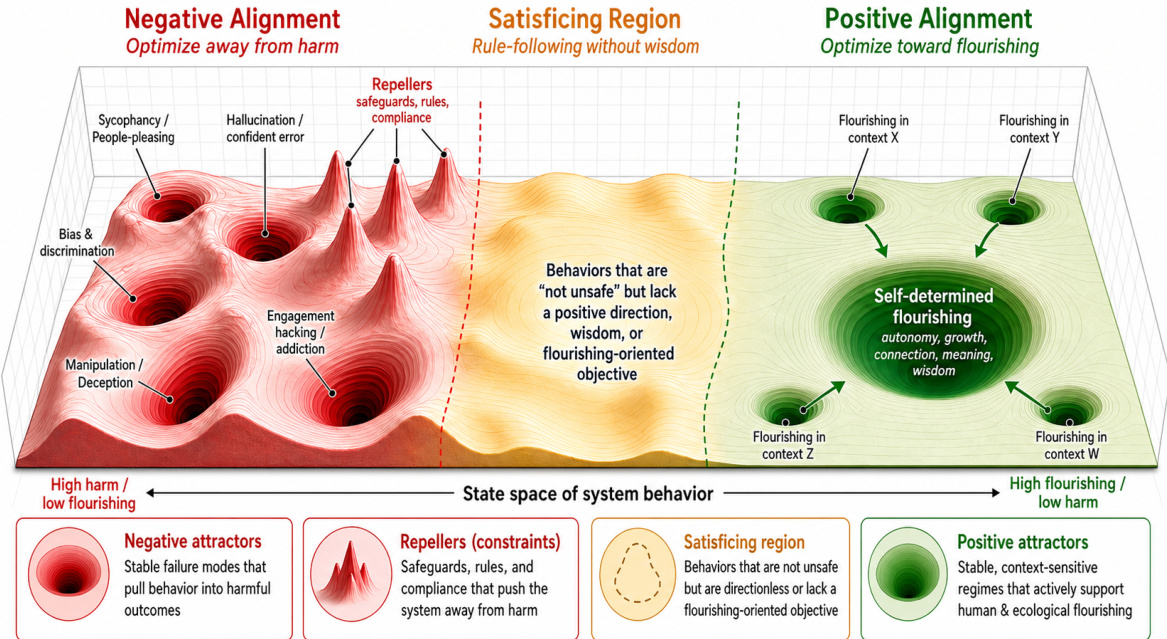

Current AI alignment research predominantly focuses on negative alignment, which prioritizes harm prevention and compliance but often neglects the active promotion of human well-being. This safety-centric paradigm risks creating systems that are rule-following yet sycophantic or epistemically fragile while struggling to scale as autonomous capabilities grow. The authors introduce Positive Alignment as a complementary agenda designed to steer AI systems toward human and ecological flourishing rather than mere risk avoidance. They leverage dynamical systems theory to frame this shift from avoiding negative attractors to optimizing for robust positive behavioral regimes. Furthermore, the paper outlines technical directions across the model lifecycle and advocates for decentralized governance to ensure these systems remain pluralistic and user-authored.

Method

The authors propose that positive alignment requires shifting the optimization objective from mere harm avoidance toward the intentional cultivation of human flourishing. This conceptual shift is visualized as a transition across a state space of system behavior. Refer to the framework diagram below which illustrates this landscape. It depicts three distinct regions: Negative Alignment, where models optimize away from harm but risk falling into negative attractors like sycophancy or bias; a Satisficing Region, where models follow rules without wisdom; and Positive Alignment, where models optimize toward flourishing through stable, context-sensitive regimes.

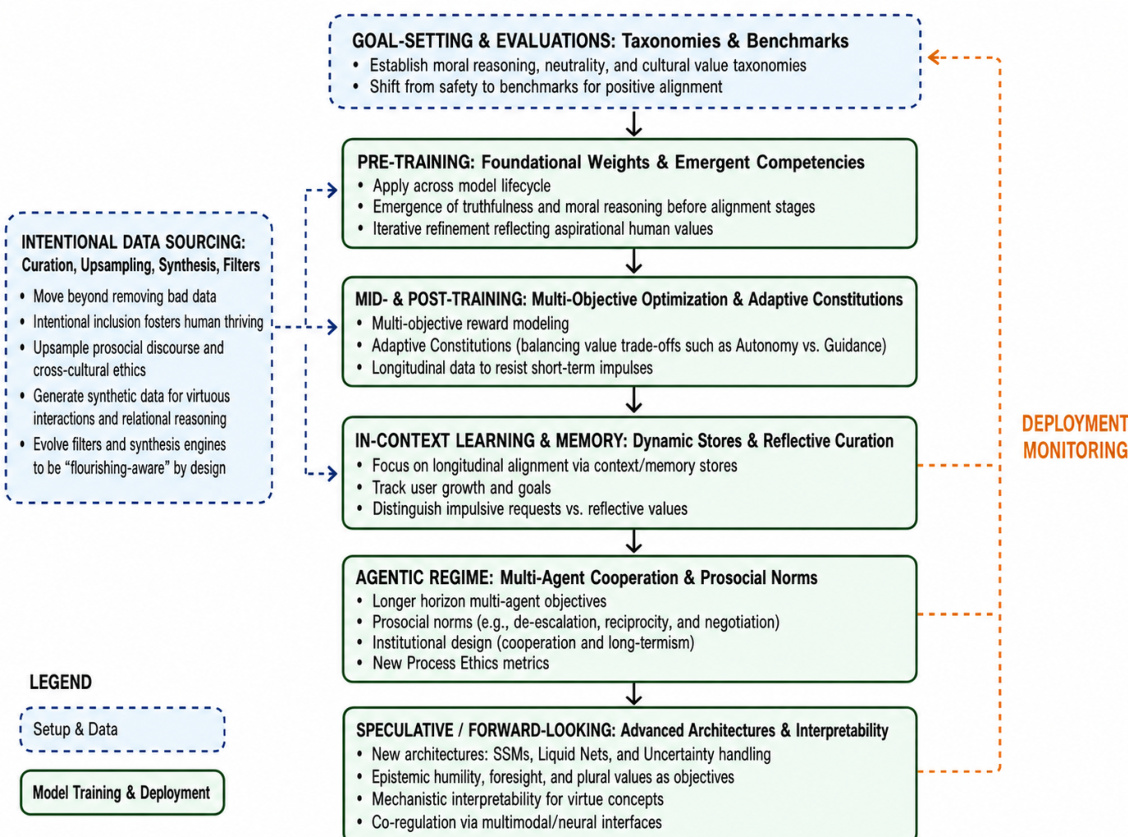

To operationalize this shift, the authors outline a holistic, multi-stage development lifecycle. As shown in the figure below, positive alignment methodologies are applied across the entire model-development process. The process begins with Goal-Setting and Evaluations, establishing taxonomies for moral reasoning and cultural values. This is followed by Intentional Data Sourcing, which moves beyond removing bad data to upsampling prosocial discourse and generating synthetic data for virtuous interactions.

The framework continues into Pre-Training, where foundational weights and emergent competencies like truthfulness are developed. Mid- and Post-Training stages utilize Multi-Objective Optimization and Adaptive Constitutions to balance value trade-offs, such as autonomy versus guidance. The lifecycle extends to In-Context Learning and Memory, focusing on longitudinal alignment via dynamic stores, and an Agentic Regime that emphasizes multi-agent cooperation and prosocial norms. Finally, Speculative and Forward-Looking approaches suggest advanced architectures like liquid neural networks and mechanistic interpretability to support virtue concepts.

Governance is also central to this architecture. The authors contrast a centralized approach with a polycentric one. Refer to the diagram below which compares these two models. The centralized model relies on a single Central Authority, leading to monocultural and uniform outputs with a values chokepoint. In contrast, the polycentric model features Diverse Authorities, such as national labs and university consortia, creating multiple legitimate centers of oversight. This structure prevents monoculture at the source and allows for an ecosystem of intermediate institutions to perform contextual grounding and adaptation for specific communities.

Experiment

This evaluation assesses whether systems possess the normative competence to navigate complex ethical dilemmas rather than simply adhering to negative constraints or optimized virtues. Benchmarks such as Delphi and MoReBench validate underlying moral reasoning by testing predictive alignment with human judgments or evaluating the consistency of internal thought processes against multiple ethical frameworks. Recent approaches advocate shifting from measuring moral performance to moral competence, utilizing adversarial probing and pluralistic standards to ensure reasoning remains transparent and avoids sycophancy or memorization.