Command Palette

Search for a command to run...

潜在空間:基盤、進化、メカニズム、能力、および展望

潜在空間:基盤、進化、メカニズム、能力、および展望

概要

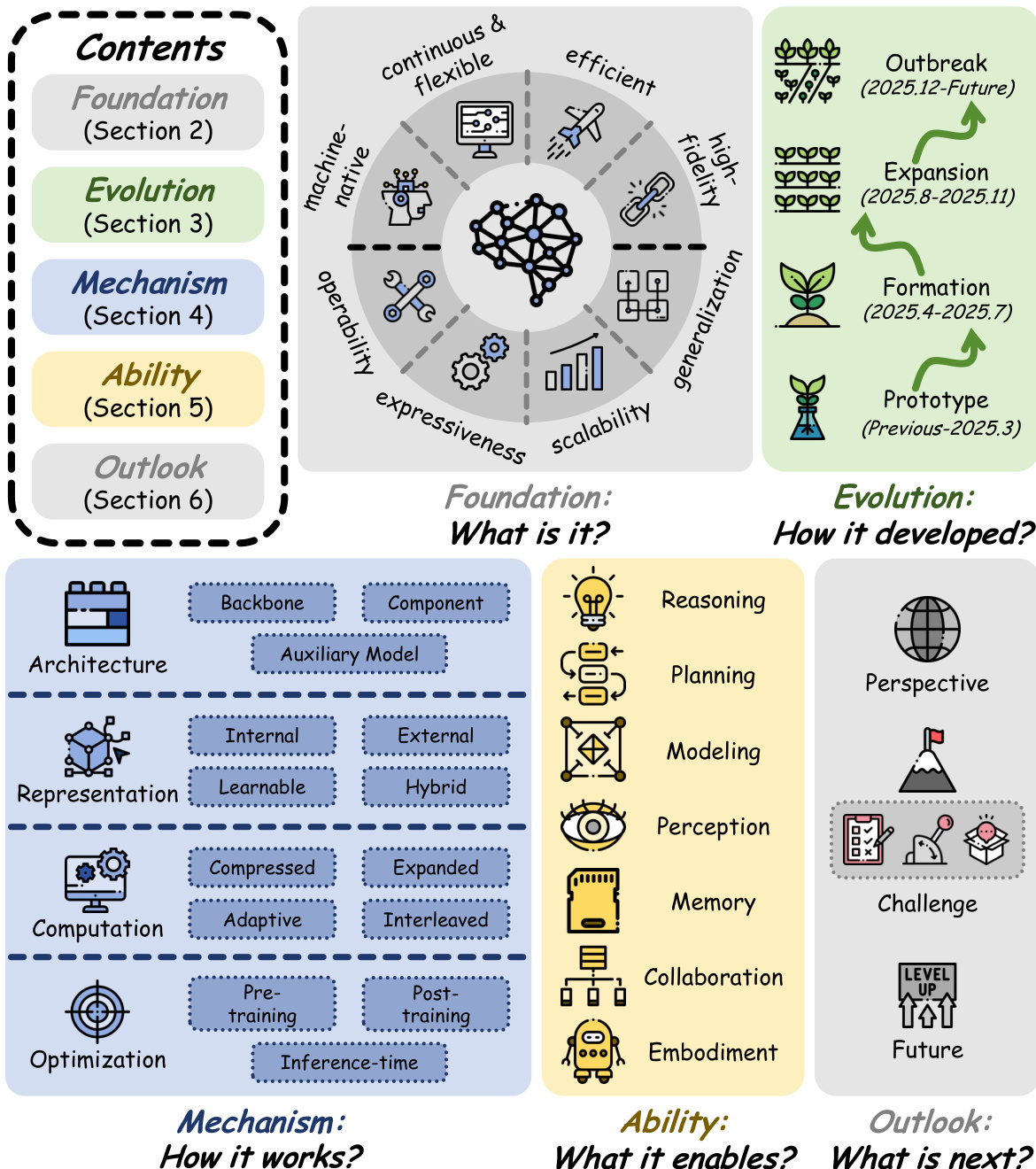

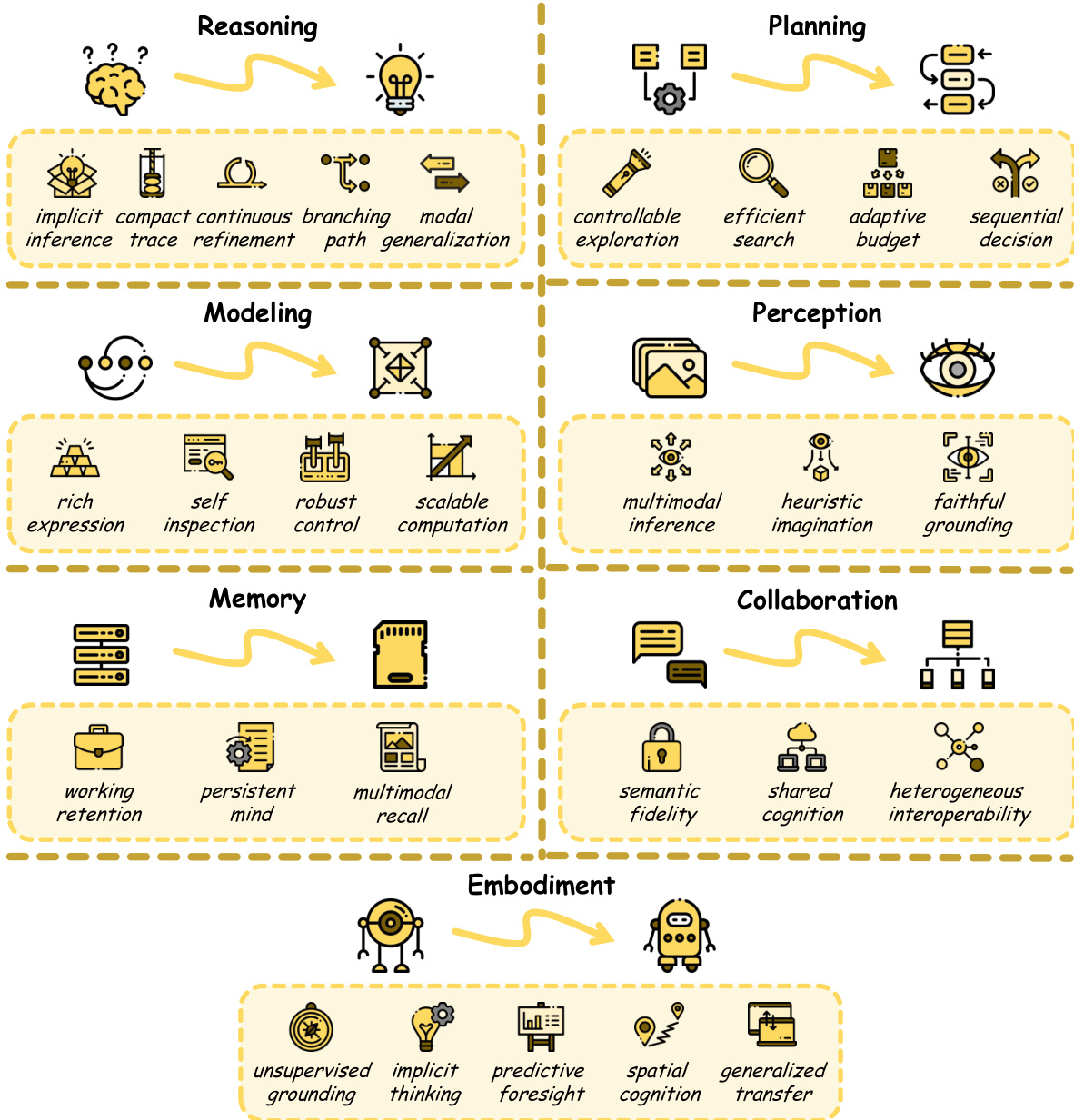

潜在空間は、言語ベースのモデルにおけるネイティブな基盤として急速に台頭しつつある。現代のシステムは依然として明示的なトークンレベルの生成によって理解されることが多いが、多くの重要な内部プロセスは、人間が読み取れる言語的痕跡よりも、連続的な潜在空間においてより自然に実行されることが示される研究が増加している。この転換は、言語的冗長性、離散化のボトルネック、逐次処理の非効率性、意味的損失といった、明示的空間計算の構造的限界によって駆動されている。本論文では、言語ベースのモデルにおける潜在空間の統合的かつ最新の実像を提示することを目的とする。本調査は、基盤(Foundation)、進化(Evolution)、メカニズム(Mechanism)、能力(Ability)、展望(Outlook)という5つの連続的な視点に整理されている。まず、潜在空間の範囲を定義し、明示的・言語的空間および生成型視覚モデルで一般的に研究される潜在空間との区別を明確にする。次に、分野の進化を初期の探索的試みから現在の大規模な拡大へと追跡する。技術的景観を整理するため、メカニズムと能力という相補的な視点を通じて既存の研究を検証する。メカニズムの観点からは、アーキテクチャ、表現、計算、最適化という4つの主要な発展路線を特定する。能力の観点からは、潜在空間が推論、計画、モデリング、知覚、記憶、協働、具身化にわたる広範な能力スペクトルをどのように支えているかを示す。既存研究の総括を超え、重要な未解決課題について議論し、将来の研究に向けた有望な方向性を概説する。本調査が、既存研究の参照資料であるだけでなく、次世代の知能に対する汎用的な計算およびシステムパラダイムとしての潜在空間の理解の基盤となることを期待する。

One-sentence Summary

Researchers from National University of Singapore, Fudan University, Tsinghua University, and other leading institutions propose a unified survey on latent space in language-based models, introducing a two-dimensional taxonomy of mechanisms and abilities to consolidate fragmented literature and guide future research in reasoning, perception, and embodied AI.

Key Contributions

- The paper introduces a unified survey framework organized around five sequential perspectives—Foundation, Evolution, Mechanism, Ability, and Outlook—to consolidate fragmented literature on latent space in language-based models.

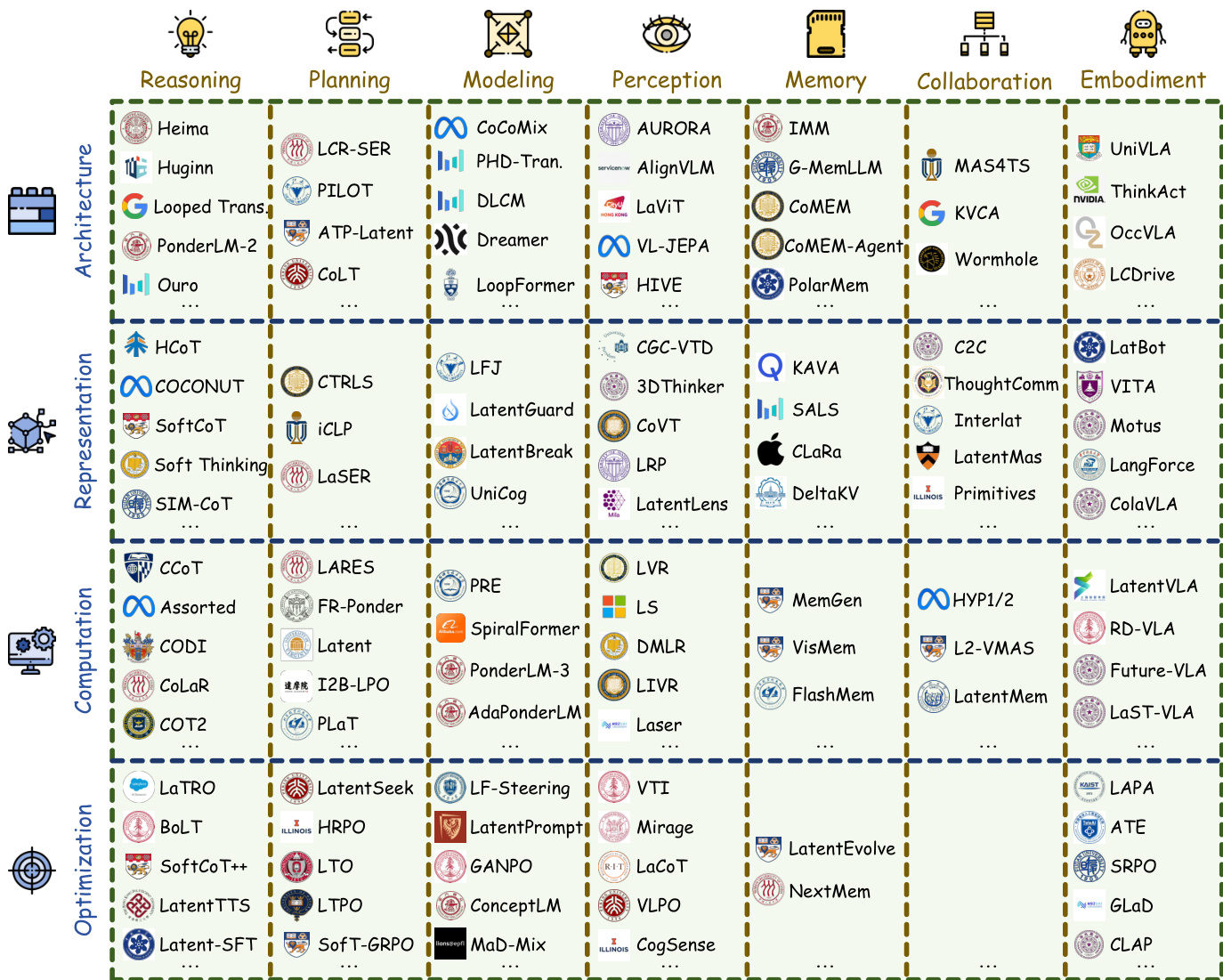

- This work presents a comprehensive technical taxonomy that classifies existing methods into four mechanism categories (Architecture, Representation, Computation, Optimization) and seven ability domains (Reasoning, Planning, Modeling, Perception, Memory, Collaboration, Embodiment).

- The study delineates the conceptual scope of latent space by distinguishing it from explicit token-level generation and visual generative models, while outlining open challenges and future research directions for next-generation intelligence.

Introduction

Language-based models are increasingly shifting from explicit token-level generation to continuous latent space as a native computational substrate, driven by the need to overcome linguistic redundancy, discretization bottlenecks, and sequential inefficiencies inherent in verbal traces. Prior research has largely remained fragmented across specific tasks like latent reasoning or visual understanding, lacking a unified framework to classify the diverse mechanisms and capabilities emerging in this field. The authors address this gap by providing a comprehensive survey that organizes the landscape into five sequential perspectives and introduces a two-dimensional taxonomy based on technical mechanisms and functional abilities to guide future research.

Method

The authors propose a unified framework to categorize how latent space is instantiated and operationalized within modern language-based systems. This mechanism-oriented taxonomy organizes diverse approaches along four complementary axes: Architecture, Representation, Computation, and Optimization. As illustrated in the framework diagram, these dimensions collectively define the design space for latent-space methods, clarifying how latent variables are constructed, processed, and refined.

The architectural axis characterizes the structural role of latent space in the model. Methods are classified into three categories based on where latent computation is embedded. First, Backbone-based approaches endow the main model with native latent capacity through recurrent, looping, or recursive structures, making latent operation a primitive of the architecture itself. Second, Component-based methods preserve the original backbone but augment it with functional modules that construct, transform, store, or retrieve latent representations. Third, Auxiliary Model-based paradigms utilize an extra model to provide supervision signals or intermediate features to guide or supplement the host model. The taxonomy of representative works across these architectural choices is detailed in the grid diagram.

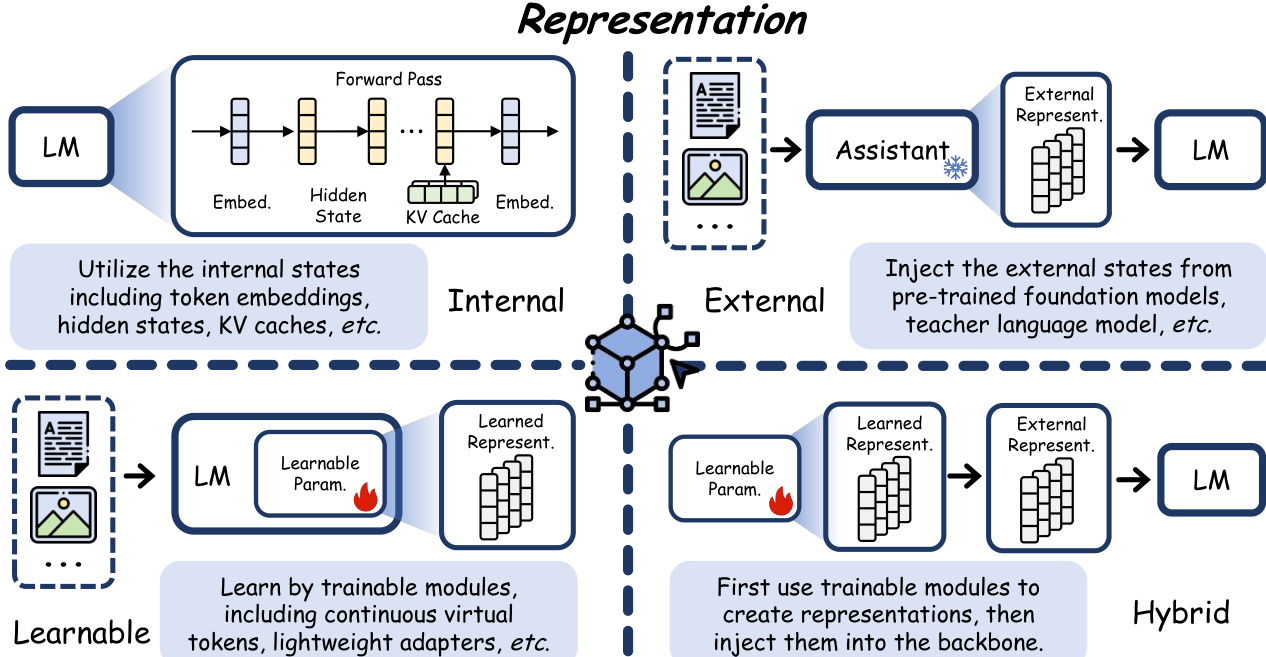

The representation axis describes the form of latent variables, distinguishing how information is encoded and integrated. Internal representations operate directly on activations produced during the backbone's forward pass, such as token embeddings or hidden states, without introducing additional parameters. External representations are derived from a structurally independent auxiliary system and injected into the backbone as conditioning inputs. Learnable representations are constructed by dedicated trainable modules embedded directly into the backbone and optimized end-to-end. Hybrid representations combine the Learnable and External paradigms by first using trainable modules to create specialized representations, then injecting them as exogenous signals. The schematic diagram illustrates these four sub-types and their data flow.

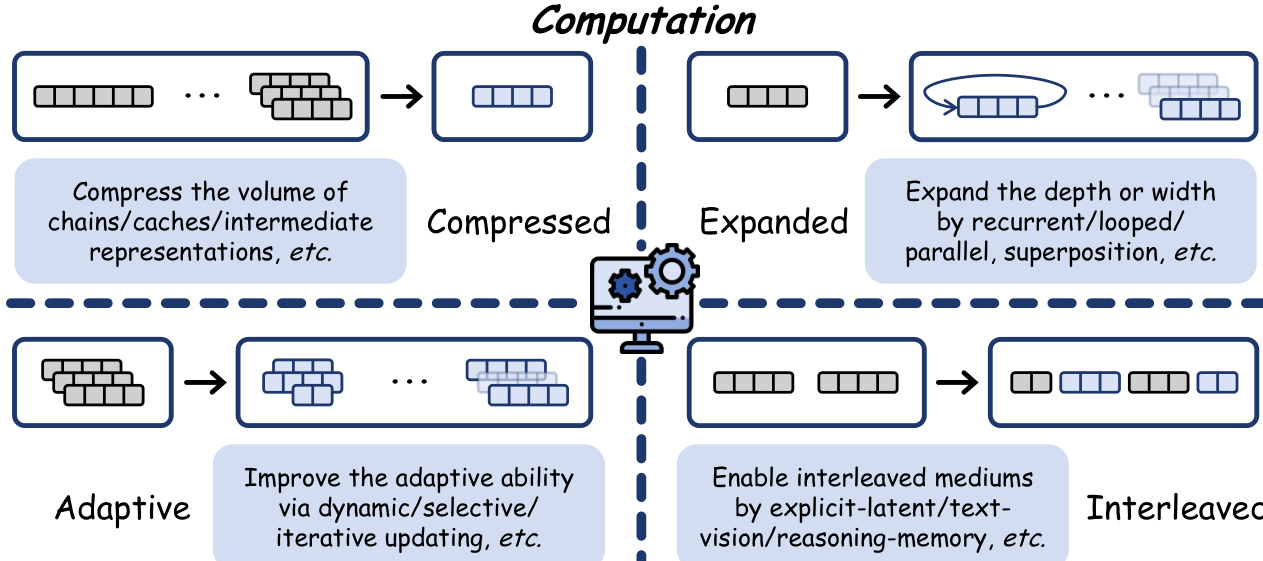

The computation axis captures how the latent space participates in information processing. Compressed computation reduces the volume of explicit traces or internal states to enhance efficiency while preserving expressiveness. Expanded computation increases effective capacity by extending latent processes along depth or width dimensions, such as through recurrent or parallel designs. Adaptive computation allocates resources dynamically based on input complexity, balancing capacity and efficiency flexibly. Interleaved computation bridges heterogeneous generation media, alternating between discrete tokens and continuous latents to combine explicit interpretability with implicit power. The corresponding schematic outlines these four computational strategies.

The optimization axis focuses on when and how latent space is induced, aligned, or refined. Pre-training methods start with a randomly initialized model and train it from scratch to enable native latent-level abilities. Post-training enhances the ability of pre-trained models using diverse supervision signals and objectives to learn the latent space. Inference-time methods focus on the manipulation of latent states during test time, allowing for dynamic adjustment without modifying model weights. The overview table summarizes the supervision, objective, and scenarios for each optimization stage.

Experiment

- A comparative analysis was conducted between the latent space and traditional explicit (verbal) space to clarify the unique characteristics of the latent representation.

- The experiment validates a paradigm shift in the representational properties and functional capabilities of language models when utilizing latent space versus explicit space.