Why Does Self-Distillation (Sometimes) Degrade the Reasoning Capability of LLMs?

Jeonghye Kim Xufang Luo Minbeom Kim Sangmook Lee Dohyung Kim Jiwon Jeon Dongsheng Li Yuqing Yang

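Abstract

Self-distillation has emerged as an effective post-training paradigm for LLMs, often improving performance while shortening reasoning traces. In mathematical reasoning, however, we find that it can shorten responses while degrading performance. We trace this degradation to the suppression of epistemic verbalization, the model's expression of uncertainty during reasoning. Through controlled experiments that systematically vary the richness of the conditioning context and the task coverage, we show that conditioning the teacher on rich information suppresses uncertainty expression. This enables rapid in-domain optimization even under limited task coverage, but it harms out-of-distribution (OOD) performance, where unseen problems benefit from expressing uncertainty and adjusting accordingly. Across Qwen3-8B, DeepSeek-Distill-Qwen-7B, and Olmo3-7B-Instruct, we observe performance drops of up to 40%. Our findings highlight that exposing an appropriate level of uncertainty is essential for robust reasoning, and they underscore the importance of optimizing reasoning behavior itself rather than merely reinforcing correct answer traces.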

One-sentence Summary

Researchers from Microsoft Research, KAIST, and Seoul National University reveal that self-distillation harms mathematical reasoning in LLMs by suppressing epistemic verbalization. They demonstrate that rich teacher conditioning reduces uncertainty expression, causing significant performance drops on out-of-distribution tasks despite shorter reasoning traces.

Key Contributions

- The paper identifies that self-distillation in mathematical reasoning degrades performance by suppressing epistemic verbalization, which is the model's expression of uncertainty during the reasoning process.

- Controlled experiments varying conditioning context richness and task coverage demonstrate that conditioning a teacher on rich information enables rapid in-domain optimization but harms out-of-distribution performance where uncertainty expression is beneficial.

- Empirical results across Qwen3-8B, DeepSeek-Distill-Qwen-7B, and Olmo3-7B-Instruct show performance drops of up to 40%, providing evidence that optimizing reasoning behavior requires preserving appropriate levels of uncertainty beyond reinforcing correct answer traces.

Introduction

Self-distillation is a popular post-training paradigm for LLMs that typically improves performance while shortening reasoning traces, yet it unexpectedly degrades mathematical reasoning capabilities in certain scenarios. Prior work often assumes that compressing reasoning into concise, confident outputs is universally beneficial, but this approach fails to account for the loss of epistemic verbalization where models express uncertainty to navigate complex problems. The authors identify that conditioning the teacher model on rich ground-truth information suppresses these uncertainty signals, leading to significant performance drops of up to 40% on out-of-distribution tasks. They demonstrate that preserving appropriate levels of uncertainty expression is critical for robust reasoning and propose that future training objectives must optimize for reasoning behavior beyond mere answer correctness.

Dataset

- The authors add the DAPO-Math-17k dataset to their experimental setup as a training set with broad task coverage.

- The dataset contains roughly 17,000 math problems. Over 100 training steps, 25,600 samples are drawn; because some problems are sampled repeatedly, these cover about 14,000 distinct problems, or 78% of the total.

- Unlike the Chemistry dataset, which relies on only six problem types, or LiveCodeBench v6, which has just 131 problems, DAPO-Math-17k exposes the model to a broad, non-overlapping range of problem types.

- The data is processed using a specific prompt format that instructs the model to solve problems step by step and format the final output as "Answer: $Answer" on its own line.

- Evaluation is conducted on unseen problem types to ensure the model generalizes beyond the training distribution.
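The prompt format described above can be sketched as follows. This is a minimal illustration, not the authors' code: only the "step by step" instruction and the `Answer: $Answer` final line come from the text, while the exact template wording and the helper names `build_prompt` and `extract_answer` are assumptions.

```python
import re

# Hypothetical template: the precise wording is assumed, not taken from the paper.
PROMPT_TEMPLATE = (
    "Solve the following math problem step by step. "
    "The last line of your response should be of the form "
    "Answer: $Answer, where $Answer is the answer to the problem.\n\n"
    "{problem}"
)

# Matches a line that starts with "Answer:" and captures the rest of that line.
ANSWER_LINE = re.compile(r"^Answer:[ \t]*(.+?)[ \t]*$", re.MULTILINE)


def build_prompt(problem: str) -> str:
    """Wrap a raw problem statement in the instruction template."""
    return PROMPT_TEMPLATE.format(problem=problem)


def extract_answer(response: str):
    """Return the content of the last 'Answer: ...' line, or None if absent."""
    matches = ANSWER_LINE.findall(response)
    return matches[-1] if matches else None


prompt = build_prompt("What is 2 + 2?")
answer = extract_answer("First, 2 + 2 = 4.\nAnswer: 4")
```

Taking the last matching line is a design choice: a response that revises itself mid-way may contain an earlier, superseded answer line.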

Method

The authors leverage a self-distillation framework to enhance the reasoning capabilities of language models. In this setup, the model πθ functions as both a student and a teacher under different conditioning contexts. The student generates a sequence y based solely on the input x, while the teacher policy is conditioned on a richer context c that provides additional information such as solutions or environment feedback. The training objective minimizes the divergence between the student and teacher next-token distributions:

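$$
\mathcal{L}_{\mathrm{SD}}(\theta) = \sum_{t} \mathrm{KL}\Big(\pi_\theta(\cdot \mid x, y_{<t}) \,\Big\|\, \mathrm{stopgrad}\big(\pi_\theta(\cdot \mid x, c, y_{<t})\big)\Big).
$$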

This objective encourages the student to match the teacher's predictions under the richer context, enabling the model to improve by distilling information available at training time without requiring an external teacher. A key component of this approach is the handling of uncertainty during the reasoning process. Math reasoning is treated as self-Bayesian reasoning where the model iteratively updates its belief over intermediate hypotheses.
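To make the self-distillation objective concrete, the sketch below evaluates the summed per-token KL divergence on toy logits. It is a minimal sketch under stated assumptions: the function names and all numeric values are hypothetical, and plain Python has no gradients, so the stop-gradient is represented only by treating the teacher logits as constants. In an autodiff framework the teacher term would be wrapped in something like `jax.lax.stop_gradient` or `Tensor.detach()`.

```python
import math


def softmax(logits):
    """Convert a list of logits into a probability distribution."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same vocabulary."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


def self_distillation_loss(student_logits, teacher_logits):
    """Sum over positions t of KL(student_t || teacher_t).

    student_logits[t]: logits of pi_theta(. | x, y_<t)
    teacher_logits[t]: logits of pi_theta(. | x, c, y_<t), treated as constants
                       (this stands in for the stop-gradient in the objective).
    """
    return sum(
        kl_divergence(softmax(s), softmax(t))
        for s, t in zip(student_logits, teacher_logits)
    )


# Toy example: 2 positions, vocabulary of 3 tokens (all values are made up).
student = [[2.0, 0.5, 0.1], [0.3, 0.3, 0.3]]
teacher = [[2.0, 0.5, 0.1], [3.0, 0.1, 0.1]]
loss = self_distillation_loss(student, teacher)
```

In this toy case the first position contributes nothing because the two distributions agree, while the second contributes a positive term because the context-conditioned teacher is far more confident than the student.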

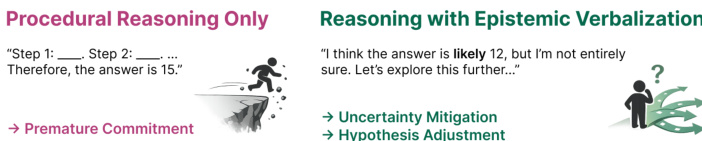

As the figure above illustrates, the authors distinguish between procedural reasoning and reasoning with epistemic verbalization.

Reasoning without epistemic signals often leads to premature commitment to incorrect hypotheses with limited opportunity for recovery. In contrast, epistemic verbalization allows the model to express uncertainty, which serves as an informative signal rather than mere stylistic redundancy. This approach helps maintain alternative hypotheses and supports gradual uncertainty reduction. The challenge lies in filtering out non-informative content while retaining epistemic expressions that enable iterative belief refinement, rather than blindly compressing the reasoning process.
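The contrast between premature commitment and maintained alternatives can be illustrated with a toy Bayesian update. This is only an illustration of the self-Bayesian framing, not the authors' formalism: the hypothesis labels, the prior values, and the likelihoods are all hypothetical.

```python
import math


def bayes_update(belief, likelihood):
    """Posterior over hypotheses: proportional to prior times likelihood."""
    unnorm = {h: belief[h] * likelihood[h] for h in belief}
    z = sum(unnorm.values())
    return {h: v / z for h, v in unnorm.items()}


def entropy(belief):
    """Shannon entropy in bits; lower means less remaining uncertainty."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


# Two candidate approaches to a problem (hypothetical labels and numbers).
hedged = {"approach_A": 0.6, "approach_B": 0.4}     # uncertainty kept explicit
committed = {"approach_A": 1.0, "approach_B": 0.0}  # premature commitment

# A later intermediate check is far more consistent with approach_B.
evidence = {"approach_A": 0.1, "approach_B": 0.9}

hedged_after = bayes_update(hedged, evidence)        # mass shifts toward approach_B
committed_after = bayes_update(committed, evidence)  # stays on approach_A
```

A belief that has already placed zero mass on an alternative cannot recover it regardless of the evidence, which mirrors the limited opportunity for recovery described above. The hedged belief moves toward the better-supported approach and its entropy falls as it does so.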

Experiment

- Experiments on LLM reasoning under varying information richness demonstrate that providing richer conditioning context (e.g., full solutions) significantly reduces response length and the usage of epistemic tokens, leading to more concise and confident outputs.

- Supervised fine-tuning using solution-guided responses, which lack epistemic markers, causes substantial performance degradation on math benchmarks, whereas training on unguided responses preserves reasoning capability, indicating that epistemic verbalization is critical for autonomous error correction.

- On-policy self-distillation (SDPO) consistently suppresses epistemic tokens and shortens responses compared to GRPO, resulting in severe out-of-distribution performance drops on challenging math tasks, particularly when the base model relies on uncertainty expression for complex reasoning.

- The negative impact of self-distillation is linked to task coverage; while concise reasoning improves efficiency on small, narrow datasets, it hinders generalization on larger, diverse problem sets where expressing uncertainty is necessary for adaptation.

- Ablation studies confirm that using a fixed teacher policy mitigates but does not eliminate the performance degradation caused by epistemic suppression, and these findings hold across multiple model families including DeepSeek, Qwen, and OLMo.
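Response length and epistemic-token usage, the two quantities tracked in the experiments above, can be measured with a simple counter. This is a minimal sketch: the marker list is a hypothetical example, since the text here does not enumerate the tokens the authors count, and the two sample responses are invented.

```python
import re

# Hypothetical marker list for illustration only.
EPISTEMIC_MARKERS = ("wait", "hmm", "maybe", "perhaps", "not sure", "let me check")


def epistemic_stats(response: str, markers=EPISTEMIC_MARKERS):
    """Count whole-word occurrences of each marker and report response length."""
    text = response.lower()
    counts = {
        m: len(re.findall(r"\b" + re.escape(m) + r"\b", text))
        for m in markers
    }
    n_words = len(text.split())
    total = sum(counts.values())
    return {
        "words": n_words,
        "epistemic_total": total,
        "per_100_words": 100.0 * total / n_words if n_words else 0.0,
        "counts": counts,
    }


# Invented responses contrasting unguided and solution-guided styles.
unguided = "Hmm, maybe factor first. Wait, that gives 12, not 10. Let me check: 3 * 4 = 12."
guided = "Factor the expression. The result is 12."

unguided_stats = epistemic_stats(unguided)
guided_stats = epistemic_stats(guided)
```

Normalizing by response length (`per_100_words`) separates a genuine drop in uncertainty expression from the mechanical effect of shorter responses.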