SkillNet: Create, Evaluate, and Connect AI Skills

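Abstract

Current AI agents can flexibly invoke tools and carry out complex tasks, yet their long-term progress is hindered by the lack of systematic skill accumulation and transfer. Without a unified mechanism for skill consolidation, agents frequently "reinvent the wheel," rediscovering solutions in isolated contexts without leveraging prior strategies. To overcome this limitation, we introduce SkillNet, an open infrastructure for creating, evaluating, and organizing AI skills at scale. SkillNet structures skills within a unified ontology that supports generating skills from diverse sources, establishing rich relational connections, and performing multi-dimensional evaluation across safety, completeness, executability, maintainability, and cost-awareness. The infrastructure integrates a repository of more than 200,000 skills, an interactive platform, and a versatile Python toolkit. Experimental evaluations on ALFWorld, WebShop, and ScienceWorld show that SkillNet substantially improves agent performance, raising average reward by 40% and reducing execution steps by 30% across multiple backbone models. By formalizing skills as evolvable, composable assets, SkillNet provides a robust foundation for agents to move from transient experience to lasting mastery.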

One-sentence Summary

Researchers from Zhejiang University and major industry partners introduce SkillNet, an open infrastructure that unifies over 200,000 AI skills into a structured ontology. This system enables systematic skill consolidation and multi-dimensional evaluation, significantly boosting agent performance in complex task environments by preventing redundant learning.

Key Contributions

- Current AI agents struggle to accumulate and transfer skills systematically, often rediscovering solutions in isolated contexts without leveraging prior strategies.

- SkillNet addresses this by introducing an open infrastructure with a unified ontology that organizes over 200,000 skills and evaluates them across five dimensions including Safety, Completeness, and Executability.

- Experimental results on ALFWorld, WebShop, and ScienceWorld show that SkillNet improves average agent rewards by 40% and reduces execution steps by 30% across multiple backbone models.
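The summary does not say whether the 40% and 30% figures are relative or absolute changes. Assuming they are relative changes measured against a baseline agent, the arithmetic can be sketched as follows. The function name and the input numbers are illustrative placeholders chosen to reproduce the percentages, not values reported by the authors.

```python
def relative_change(baseline: float, value: float) -> float:
    """Return the change from baseline to value as a fraction of the baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (value - baseline) / baseline


# Placeholder numbers only: a reward rising from 0.50 to 0.70 is a +40% relative change,
# and a step count falling from 20 to 14 is a -30% relative change.
reward_gain = relative_change(0.50, 0.70)
step_change = relative_change(20, 14)
print(f"reward: {reward_gain:+.0%}, steps: {step_change:+.0%}")
```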

Introduction

As AI agents evolve to handle complex, long-horizon tasks, their progress is currently stalled by an inability to systematically accumulate and transfer skills, forcing them to repeatedly rediscover solutions in isolated contexts. Prior approaches rely on manual engineering or transient in-context learning, while existing skill repositories suffer from static curation, lack of rigorous quality control, and poor composability that prevents scalable reuse. To address these gaps, the authors introduce SkillNet, an open infrastructure that structures over 200,000 skills into a unified ontology with rich relational connections and a multi-dimensional evaluation framework covering safety, executability, and cost. This system transforms fragmented experience into durable, composable assets, enabling agents to achieve significant performance gains by leveraging a robust foundation for cumulative learning rather than episodic trial and error.

Dataset

- Dataset Composition and Sources: The authors construct a versatile skill repository by aggregating heterogeneous data from four primary sources: execution trajectories and conversational logs, open-source GitHub repositories, semi-structured documents (PDF, PowerPoint, Word), and direct natural language user prompts.

- Key Details for Each Subset: The initial pool contains over 200,000 candidate skills derived from open internet resources, automated pipelines, and community contributions. A rigorous multi-stage filtering and evaluation process curates this pool into a final repository of more than 150,000 high-quality skills, which continues to expand.

- Data Usage and Processing: The authors employ a fully automated pipeline powered by Large Language Models to transform raw inputs into reusable structured agent skills. Users can customize the underlying models, and the system supports continuous expansion through open resources and community submissions.

- Quality Assurance and Metadata: To ensure reliability, the team implements automated checks across five dimensions: safety, completeness, executability, maintainability, and cost-awareness. They also conduct periodic manual audits via random sampling and use the data to analyze skill relations, uncovering dependencies, hierarchical compositions, and functional similarities.

Method

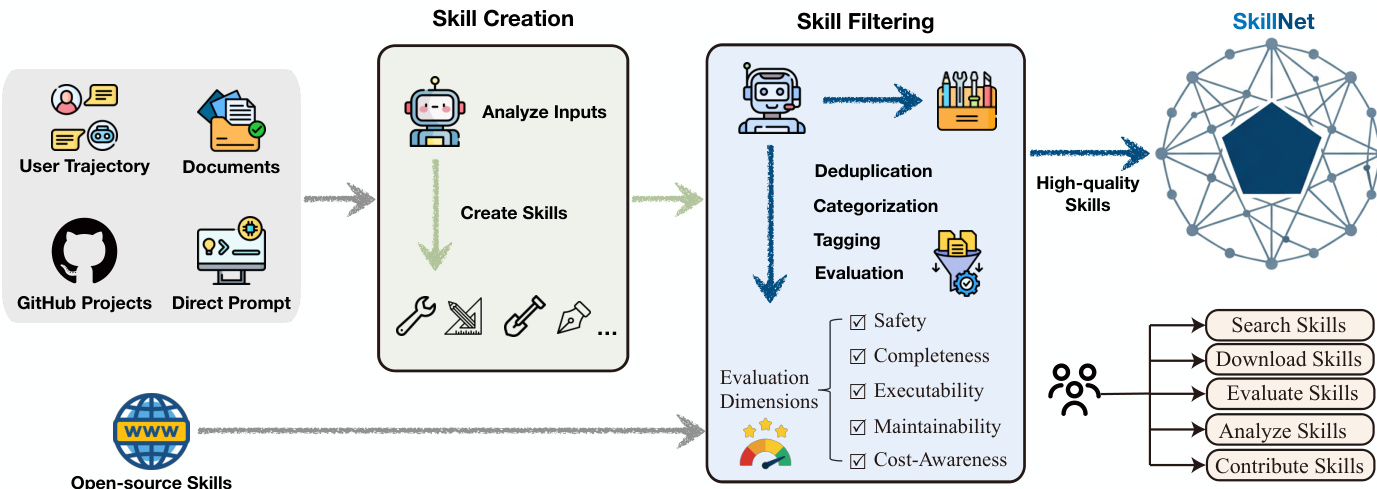

The authors propose SkillNet, a comprehensive framework designed to transform fragmented agent experiences and human knowledge into reusable, verifiable skill entities. The system operates through a systematic pipeline that encompasses skill creation, evaluation, and organization to support scalable and reliable capability growth. Refer to the framework diagram for the end-to-end architecture.

The framework begins with Skill Creation, where the system analyzes diverse inputs including user trajectories, documents, GitHub projects, and direct prompts to generate new skills. These generated skills undergo a rigorous Skill Filtering process involving deduplication, categorization, and a multi-dimensional evaluation mechanism. The evaluation dimensions include Safety, Completeness, Executability, Maintainability, and Cost-Awareness. Only high-quality skills that pass these checks are admitted into the repository, ensuring the system functions as a self-evolving ecosystem rather than a static collection.
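The filtering stage described above can be sketched as a small pipeline. This is a minimal illustration, not the SkillNet toolkit's API: the `CandidateSkill` class, the 0-to-1 score scale, the 0.7 threshold, and the text-normalization approach to deduplication are all assumptions, since the summary names the five dimensions but not how they are scored or combined.

```python
from dataclasses import dataclass, field

# The five evaluation dimensions named in the text.
DIMENSIONS = (
    "safety",
    "completeness",
    "executability",
    "maintainability",
    "cost_awareness",
)


@dataclass
class CandidateSkill:
    name: str
    description: str
    # Hypothetical per-dimension scores in [0, 1]; the real scale is not given.
    scores: dict = field(default_factory=dict)


def deduplicate(skills):
    """Keep the first skill for each case- and whitespace-normalized description."""
    seen = set()
    unique = []
    for skill in skills:
        key = " ".join(skill.description.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(skill)
    return unique


def passes_evaluation(skill, threshold=0.7):
    """Admit a skill only if every dimension meets the assumed threshold."""
    return all(skill.scores.get(dim, 0.0) >= threshold for dim in DIMENSIONS)


def filter_skills(skills, threshold=0.7):
    """Deduplicate first, then keep only skills that pass all five checks."""
    return [s for s in deduplicate(skills) if passes_evaluation(s, threshold)]
```

Requiring every dimension to clear the threshold, rather than averaging the scores, means a skill that fails on safety cannot be rescued by high scores elsewhere. Whether SkillNet aggregates scores this way is not stated in the summary.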

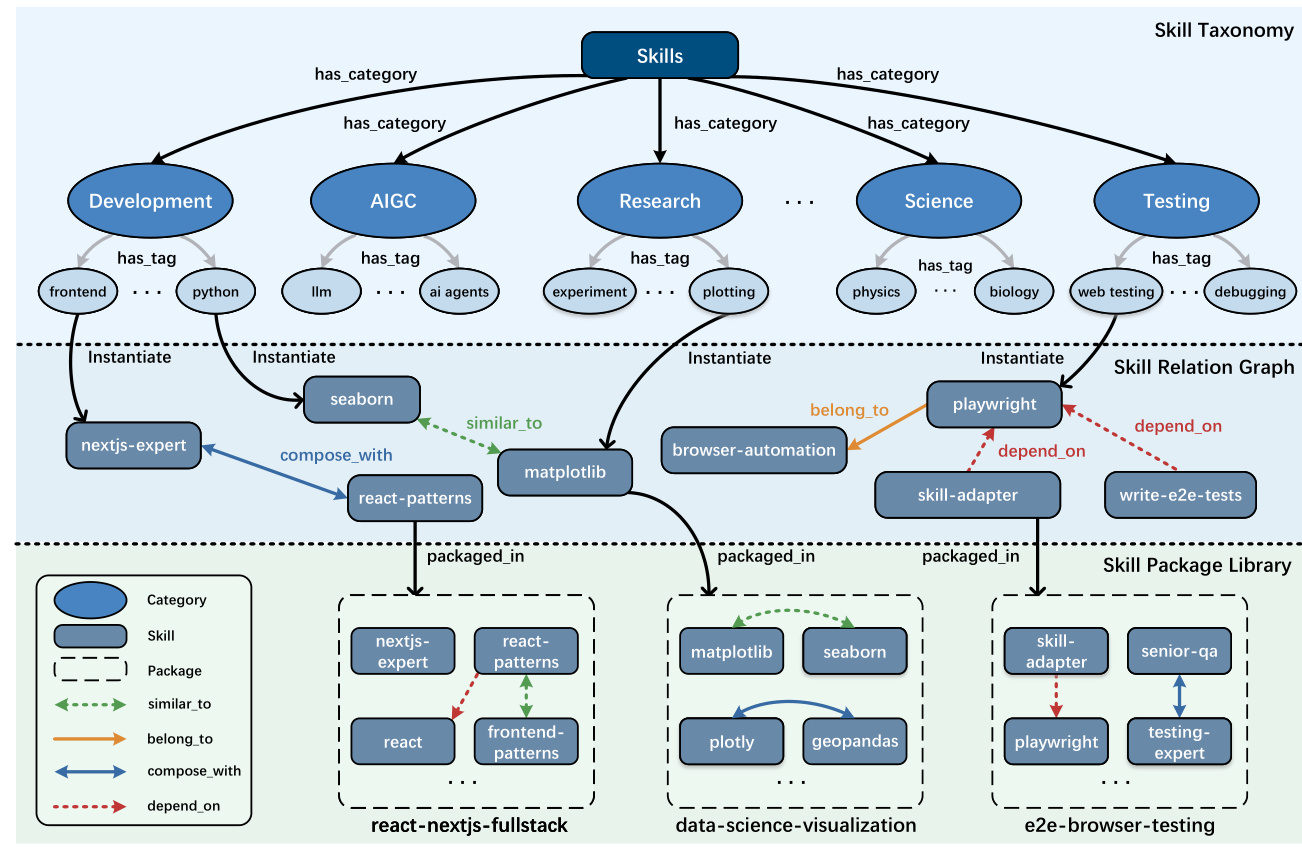

To manage the growing repository, SkillNet employs a structured ontology. As the ontology figure shows, it is organized into three progressive layers.

The top layer is the Skill Taxonomy, which categorizes skills into broad domains such as Development, AIGC, and Science, further refined by fine-grained tags. The middle layer is the Skill Relation Graph, which models inter-skill dependencies and semantic associations using relations such as similar_to, compose_with, belong_to, and depend_on. The bottom layer is the Skill Package Library, which groups individual skills into modular, task-oriented bundles for deployment.
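One way to picture the three layers is as a small in-memory data structure. The sketch below is an assumption-laden illustration: the class names, method names, and storage layout are hypothetical and are not taken from the SkillNet toolkit. Only the four relation names and the example domains come from the text.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Relation types listed in the text for the Skill Relation Graph.
RELATION_TYPES = {"similar_to", "compose_with", "belong_to", "depend_on"}


@dataclass
class Skill:
    name: str
    category: str                           # taxonomy domain, e.g. "Science"
    tags: set = field(default_factory=set)  # fine-grained tags


class SkillOntology:
    """Hypothetical container for taxonomy, relation graph, and packages."""

    def __init__(self):
        self.skills = {}                   # name -> Skill (taxonomy layer)
        self.relations = defaultdict(set)  # (source, relation) -> target names
        self.packages = defaultdict(set)   # package name -> skill names

    def add_skill(self, skill):
        self.skills[skill.name] = skill

    def relate(self, source, relation, target):
        if relation not in RELATION_TYPES:
            raise ValueError(f"unknown relation: {relation}")
        if source not in self.skills or target not in self.skills:
            raise KeyError("both skills must be registered first")
        self.relations[(source, relation)].add(target)

    def related(self, source, relation):
        """Return a copy of the targets linked from source by relation."""
        return set(self.relations.get((source, relation), set()))

    def add_to_package(self, package, skill_name):
        if skill_name not in self.skills:
            raise KeyError(skill_name)
        self.packages[package].add(skill_name)

    def by_category(self, category):
        return sorted(n for n, s in self.skills.items() if s.category == category)
```

For example, after registering `kegg-database` and a hypothetical `pathway-analysis` skill under `Science`, calling `relate("pathway-analysis", "depend_on", "kegg-database")` records a directed dependency edge that `related("pathway-analysis", "depend_on")` then returns.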

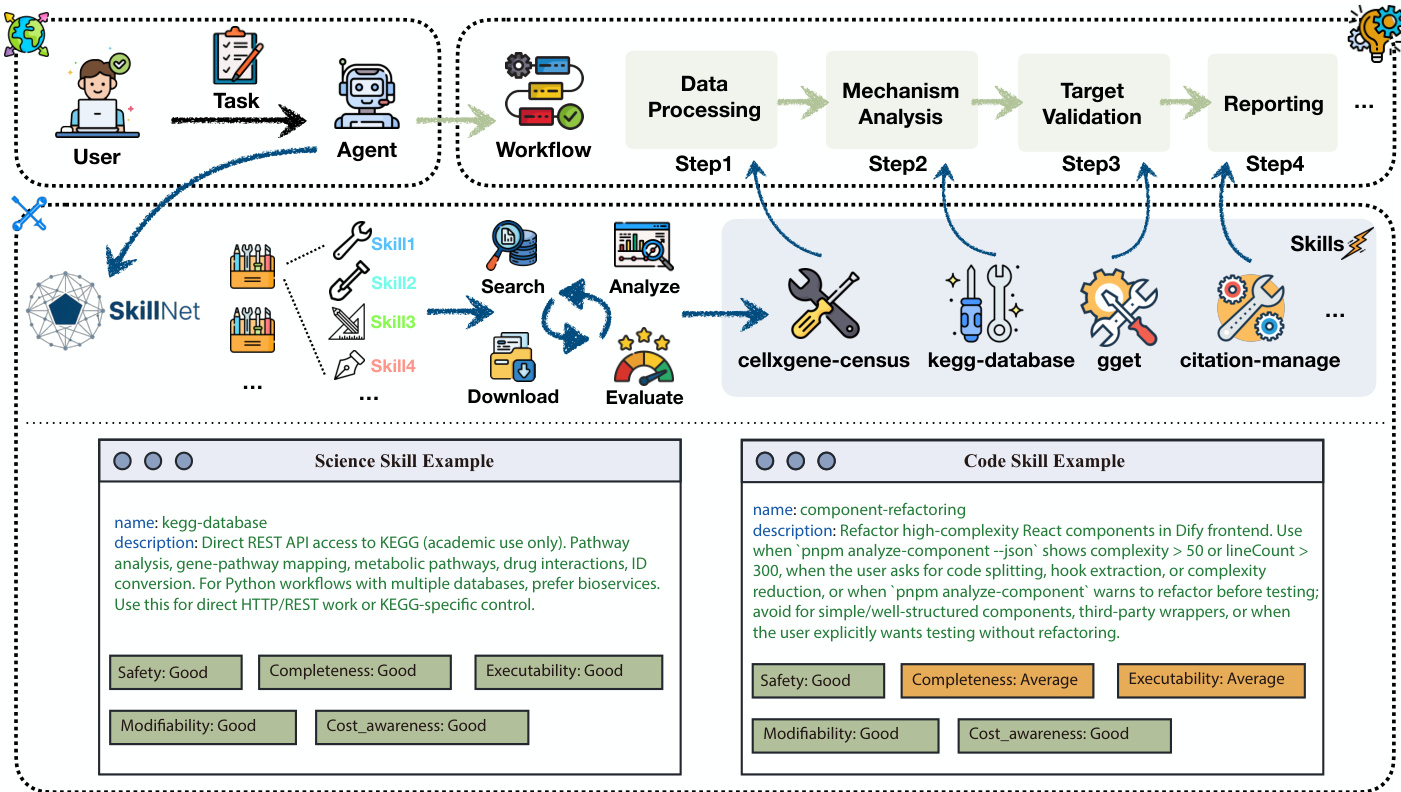

Beyond isolated skill creation, the system includes a Skill Analysis module that automatically discovers and models structural relations between skills. This enables global reasoning over large skill repositories and supports advanced downstream applications such as skill retrieval and workflow synthesis. In practical scenarios, such as autonomous scientific discovery or coding, the system decomposes user tasks into actionable steps. Refer to the practical use examples for specific instances of skill application and evaluation.

For instance, in a scientific workflow, the agent schedules data processing skills followed by mechanistic analysis and target validation. The system provides detailed skill cards, such as for kegg-database or component-refactoring, which include metadata and quality scores to guide the agent's selection and execution. This structured approach allows agents to bridge high-level user intentions with executable actions by organizing specialized skills into a coherent workflow.
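Scheduling skills so that each runs after the skills it depends on amounts to a topological ordering of the `depend_on` edges. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to show the idea. The skill names mirror the three workflow stages in the example above but are hypothetical identifiers, and the summary does not say how SkillNet actually orders a workflow.

```python
from graphlib import TopologicalSorter


def order_workflow(depends_on):
    """Return skill names ordered so every skill follows its dependencies.

    depends_on maps each skill name to the set of skills it depends on.
    Raises graphlib.CycleError (a ValueError subclass) on circular dependencies.
    """
    return list(TopologicalSorter(depends_on).static_order())


# Hypothetical skills for the scientific workflow described above.
dependencies = {
    "target-validation": {"mechanistic-analysis"},
    "mechanistic-analysis": {"data-processing"},
    "data-processing": set(),
}
print(order_workflow(dependencies))
# ['data-processing', 'mechanistic-analysis', 'target-validation']
```

Because this example is a simple chain, the ordering is unique. With branching dependencies, several valid orderings exist and `static_order` returns one of them.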

Experiment

- A multi-dimensional evaluation framework assesses skill reliability across safety, completeness, executability, maintainability, and cost-awareness. Validation against human expert judgments shows that the automated LLM-based evaluator achieves near-perfect alignment with them.

- Experiments in three simulated environments (ALFWorld, WebShop, and ScienceWorld) demonstrate that agents integrated with SkillNet significantly outperform baseline methods such as ReAct and Few-Shot, solving tasks more reliably and in fewer interaction steps.

- Results validate that SkillNet effectively transforms fragmented experiences into reusable procedural abstractions, providing robust performance gains across models of varying sizes and ensuring strong generalization to both seen and unseen tasks.