NVFP4 KV Cache Boosts Inference Efficiency: 50% Memory Reduction, 2x Context Length, and <1% Accuracy Loss on NVIDIA Blackwell GPUs

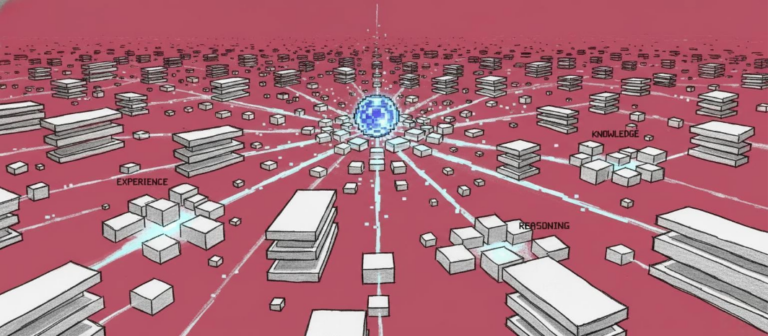

NVFP4 KV cache quantization is an optimization introduced by NVIDIA to improve inference performance for large language models on Blackwell GPUs. It stores the key-value (KV) cache in the 4-bit NVFP4 format instead of a 16-bit format. Relative to an FP8 KV cache, this cuts the cache's memory footprint by up to 50% and roughly doubles the context that fits in the same memory. The extra headroom allows larger batch sizes, longer sequences, and higher cache-hit rates, all of which matter for high-throughput, long-context workloads, while accuracy loss stays below 1% across benchmarks in code generation, knowledge, and long-context reasoning.

The KV cache is a core component of autoregressive transformer inference. It stores the key and value vectors of previously processed tokens so they are not recomputed during decoding. Without it, every new token would require reprocessing all prior tokens. With it, only the current token's keys and values are computed, and those of past tokens are read from memory. The cost is memory: the cache's footprint grows with context length and model size, and it can become the bottleneck.

NVFP4 addresses this by compressing the KV cache to 4-bit precision. FP8 KV caches already compress the cache relative to 16-bit formats, and NVFP4 goes further: it stores about twice as much context in the same amount of GPU memory as FP8, which directly increases the effective cache size. A larger effective cache raises the cache-hit rate, which reduces recomputation and lowers latency, particularly during the prefill phase, where the system ingests the full input sequence.

The performance gains are substantial. Compared to FP8, NVFP4 can improve time-to-first-token (TTFT) latency by up to 3x and increase cache-hit rates by around 20%.
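
The memory claim can be sanity-checked with back-of-the-envelope arithmetic. The sketch below is illustrative only: the model shape (80 layers, 8 KV heads, head dimension 128) is an assumed example, the function names are hypothetical, and the NVFP4 overhead assumes one 8-bit scale per 16-element block, which is how the format is commonly described. It is not taken from NVIDIA's measurements.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bits_per_element):
    """Bytes of KV cache one token occupies across all layers (keys + values)."""
    elements = 2 * num_layers * num_kv_heads * head_dim  # 2 = keys and values
    return elements * bits_per_element / 8


def block_scaled_bits(element_bits, block_size, scale_bits):
    """Effective bits per element when each block also stores one scale factor."""
    return element_bits + scale_bits / block_size


# Assumed model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

# Bits per cached element for each format under the assumptions above.
FORMATS = {
    "BF16": 16.0,
    "FP8": 8.0,
    "NVFP4 (payload only)": 4.0,
    "NVFP4 (with block scales)": block_scaled_bits(4, 16, 8),
}


def tokens_per_gib(bits_per_element):
    """How many tokens of KV cache fit in 1 GiB for the assumed model shape."""
    per_token = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM, bits_per_element)
    return int(2**30 // per_token)


fp8_tokens = tokens_per_gib(FORMATS["FP8"])
for name, bits in FORMATS.items():
    tokens = tokens_per_gib(bits)
    print(f"{name:26s} {bits:5.2f} bits/elem  {tokens:6d} tokens/GiB  "
          f"{tokens / fp8_tokens:.2f}x vs FP8")
```

Under these assumptions the payload-only ratio to FP8 is 2x, while counting one 8-bit scale per 16 elements gives roughly 1.8x, which is consistent with the "up to" wording of the memory claim.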
These TTFT and cache-hit gains vary with the amount of memory available to the cache. As the cache grows, it naturally captures more context, so the relative gain over FP8 diminishes, but NVFP4 still makes more efficient use of whatever memory is available.

Despite the aggressive compression, NVFP4 maintains high accuracy. Benchmarks on models such as Qwen3-Coder-480B and Llama 3.3 70B show near parity with BF16 and FP8 baselines across LiveCodeBench, MMLU-PRO, MBPP, and Ruler 64K. Notably, NVFP4 outperforms MXFP4 by 5% in accuracy on MMLU. The article attributes this to NVFP4's more precise block scaling and its E4M3 FP8 scaling factors, which reduce the error introduced when values are quantized and later dequantized.

The optimization is implemented through the NVIDIA TensorRT Model Optimizer, which supports both post-training quantization (PTQ) and quantization-aware training (QAT). Developers enable the NVFP4 KV cache by updating the quantization configuration, so it requires minimal code changes and fits into existing workflows. The model is quantized through the provided API, and the resulting model is ready for deployment with minimal accuracy degradation.

Looking ahead, the NVFP4 KV cache is part of NVIDIA's broader strategy of hardware-software co-design. It complements other techniques such as KV-aware routing and offload in NVIDIA Dynamo, and Wide Expert Parallelism in TensorRT-LLM. Together, these technologies enable efficient scaling across multi-GPU systems and support larger models, longer sequences, and higher concurrency.

For developers, NVIDIA provides code samples and notebooks to help adopt NVFP4 in custom workflows. As the ecosystem matures, this optimization is expected to play a key role in large-scale, high-performance inference on Blackwell GPUs.
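
The accuracy discussion above credits NVFP4's block scaling and E4M3 scale factors for its advantage over MXFP4. The NumPy sketch below simulates that idea on synthetic Gaussian data. It is a simplified illustration, not the Model Optimizer or hardware implementation: all function names are hypothetical, the block sizes (16 with an E4M3-like scale versus 32 with a power-of-two scale) follow public descriptions of NVFP4 and the MX formats, the E4M3 exponent range and NVFP4's per-tensor second-level scale are ignored, and random data is not a real KV cache, so the numbers say nothing about benchmark accuracy.

```python
import numpy as np

# Magnitudes representable by a 4-bit E2M1 float (the sign is handled separately).
E2M1_GRID = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0])


def round_to_e2m1(x):
    """Round to the nearest E2M1 magnitude (ties go to the smaller one), clamped to +/-6."""
    mag = np.minimum(np.abs(x), E2M1_GRID[-1])
    idx = np.abs(mag[..., None] - E2M1_GRID).argmin(axis=-1)
    return np.sign(x) * E2M1_GRID[idx]


def round_scale_e4m3_like(scale):
    """Keep 3 mantissa bits, as an E4M3 scale would (exponent range is ignored)."""
    mantissa, exponent = np.frexp(scale)  # scale = mantissa * 2**exponent, mantissa in [0.5, 1)
    return np.ldexp(np.round(mantissa * 16) / 16, exponent)


def round_scale_pow2(amax):
    """Power-of-two scale in the style of MX formats: 2**(floor(log2(amax)) - 2)."""
    return np.exp2(np.floor(np.log2(amax)) - 2)


def fake_quant(x, block_size, scale_fn):
    """Quantize then dequantize x block by block, returning the reconstructed values."""
    blocks = x.reshape(-1, block_size)
    # Guard against all-zero blocks so the scale is never zero.
    amax = np.maximum(np.abs(blocks).max(axis=1, keepdims=True), 1e-12)
    scale = scale_fn(amax)
    return (round_to_e2m1(blocks / scale) * scale).reshape(x.shape)


rng = np.random.default_rng(0)
kv = rng.standard_normal((1024, 128)).astype(np.float32)  # synthetic stand-in for K or V

# NVFP4-like: 16-element blocks, the block maximum maps to 6, scale keeps 3 mantissa bits.
nv = fake_quant(kv, 16, lambda amax: round_scale_e4m3_like(amax / 6.0))
# MXFP4-like: 32-element blocks, power-of-two scale.
mx = fake_quant(kv, 32, round_scale_pow2)

mse_nv = float(np.mean((kv - nv) ** 2))
mse_mx = float(np.mean((kv - mx) ** 2))
print(f"NVFP4-like MSE: {mse_nv:.5f}")
print(f"MXFP4-like MSE: {mse_mx:.5f}")
```

In this toy setup the smaller blocks and finer-grained scale let each block use more of the 4-bit grid, which is the mechanism the article describes. How much of that carries over to a real model depends on the actual KV distributions and the calibration used.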