Externalization in LLM Agents: A Unified Review of Memory, Skills, Protocols and Harness Engineering

Abstract

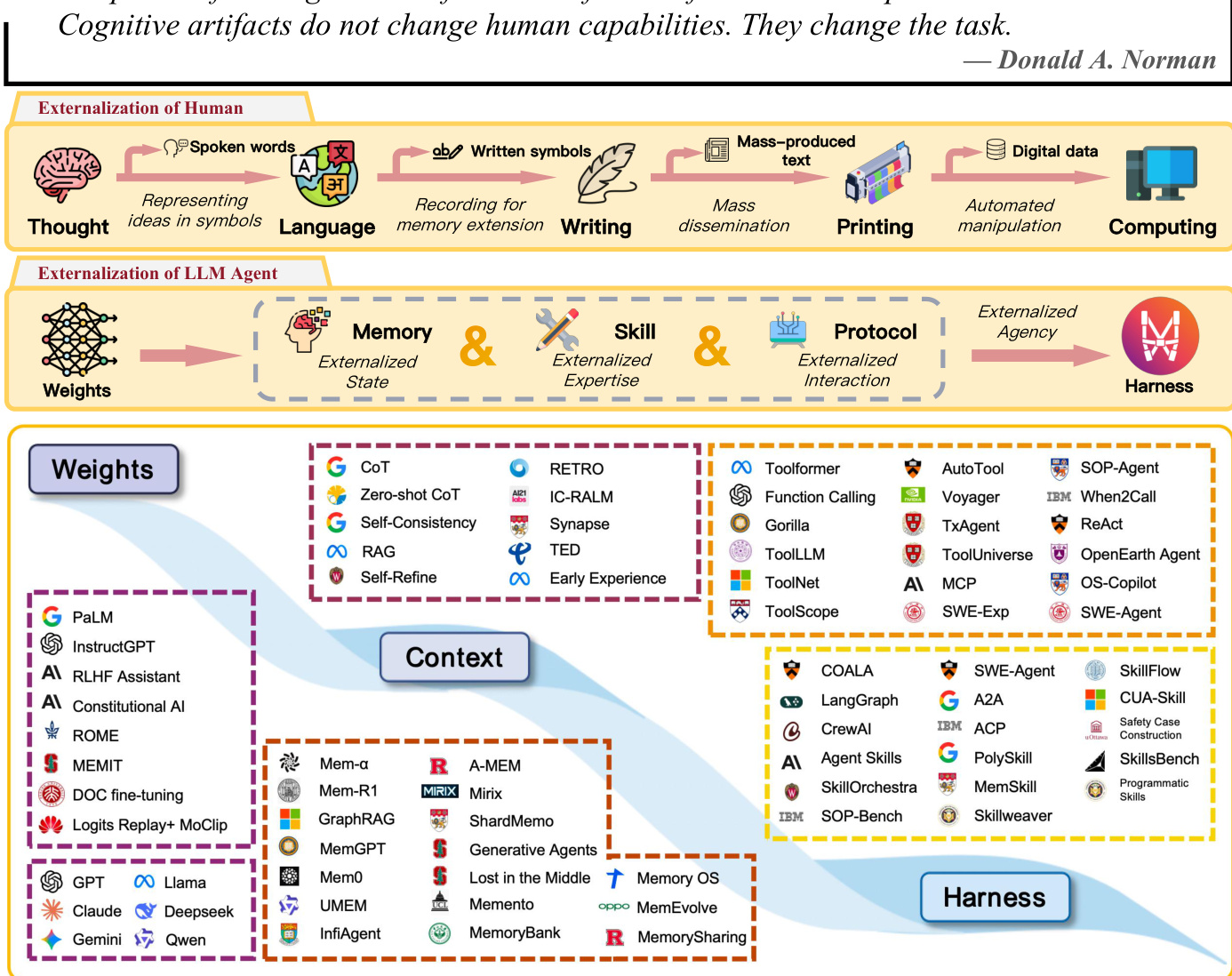

Large language model (LLM) agents are increasingly built less by changing model weights than by reorganizing the runtime around them. Capabilities that earlier systems expected the model to recover internally are now externalized into memory stores, reusable skills, interaction protocols, and the surrounding harness that makes these modules reliable in practice. This paper reviews that shift through the lens of externalization. Drawing on the idea of cognitive artifacts, we argue that agent infrastructure matters not merely because it adds auxiliary components, but because it transforms hard cognitive burdens into forms that the model can solve more reliably. Under this view, memory externalizes state across time, skills externalize procedural expertise, protocols externalize interaction structure, and harness engineering serves as the unification layer that coordinates them into governed execution. We trace a historical progression from weights to context to harness, analyze memory, skills, and protocols as three distinct but coupled forms of externalization, and examine how they interact inside a larger agent system.

One-sentence Summary

This paper provides a unified review of LLM agent development through the lens of externalization, arguing that shifting cognitive burdens from internal model weights to external memory, skills, protocols, and harness engineering transforms complex reasoning into reliable, structured execution processes.

Key Contributions

- This paper introduces the concept of externalization as a framework for understanding the shift in LLM agent development from model weight optimization to the reorganization of runtime infrastructure.

- The work categorizes agent capabilities into three distinct but coupled forms of externalization: memory for state management, skills for procedural expertise, and protocols for interaction structure.

- The research proposes a comprehensive taxonomy for evaluating agent systems through metrics such as maintainability, recovery robustness, context efficiency, and governance quality to better distinguish infrastructure achievements from model intelligence.

Introduction

As Large Language Model (LLM) agents evolve, the focus of development is shifting from changing model weights to reorganizing the runtime environments in which the models operate. Earlier approaches either internalized knowledge in model weights or managed it through ephemeral context windows; both struggle with long-term continuity, procedural consistency, and reliable coordination with external tools. The authors draw on the concept of cognitive artifacts to propose a systems-level framework centered on externalization. They argue that reliable agency is achieved by relocating cognitive burdens into three modules: memory for temporal state, skills for procedural expertise, and protocols for structured interaction. These modules are unified by harness engineering, which provides the orchestration, governance, and observability required to turn raw model reasoning into dependable, real-world execution.

Method

The authors propose a framework for agentic intelligence that shifts the burden of continuity from the model's internal weights to a structured cognitive environment known as a harness. This architecture decouples the agent's state across time from its transient context by externalizing cognition into three primary modules: Memory, Skills, and Protocols.

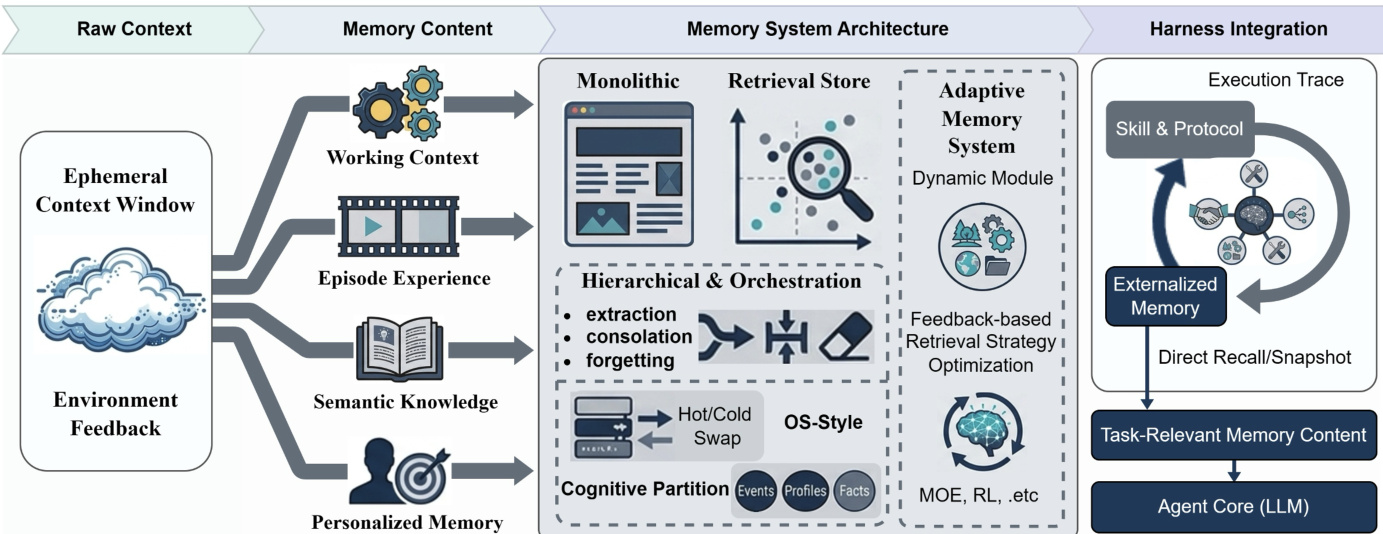

The memory system serves as the repository for externalized state, categorized into four distinct dimensions to manage temporal properties and retrieval needs. These include working context, which captures the live intermediate state of a task; episodic experience, which records specific prior runs and decision points; semantic knowledge, which stores abstracted domain facts and heuristics; and personalized memory, which tracks user-specific preferences and habits.
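The four dimensions can be made concrete with a small data-structure sketch. This is only an illustration under stated assumptions: the names `MemoryKind`, `MemoryRecord`, and `MemoryStore`, the tag-based lookup, and the in-memory list are all hypothetical and are not taken from the paper, which does not prescribe a storage format.

```python
from dataclasses import dataclass, field
from enum import Enum


class MemoryKind(Enum):
    """The four memory dimensions described above (identifier names are assumptions)."""
    WORKING = "working_context"
    EPISODIC = "episodic_experience"
    SEMANTIC = "semantic_knowledge"
    PERSONALIZED = "personalized_memory"


@dataclass(frozen=True)
class MemoryRecord:
    kind: MemoryKind
    content: str
    tags: frozenset = frozenset()


@dataclass
class MemoryStore:
    """Hypothetical store that keeps every record outside the model's context window."""
    records: list = field(default_factory=list)

    def write(self, kind, content, tags=()):
        record = MemoryRecord(kind, content, frozenset(tags))
        self.records.append(record)
        return record

    def read(self, kind, tag=None):
        # Return records of one dimension, optionally narrowed to a single tag.
        return [
            r for r in self.records
            if r.kind is kind and (tag is None or tag in r.tags)
        ]


store = MemoryStore()
store.write(MemoryKind.WORKING, "step 2 of 5: parse the input file", tags=["task-1"])
store.write(MemoryKind.EPISODIC, "previous run failed at the upload step", tags=["task-1"])
store.write(MemoryKind.PERSONALIZED, "user prefers concise answers", tags=["user"])
```

Keeping the dimension as an explicit field lets retrieval treat the kinds differently, for example by reading working context on every step but personalized memory only when composing a reply.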

As shown in the figure above, raw context from the ephemeral window and environmental feedback is converted into these four persistent dimensions. The architecture of these memory systems evolves in stages: from monolithic context, to retrieval stores, to hierarchical orchestration involving extraction and consolidation, and finally to adaptive memory systems that use dynamic modules and feedback-based optimization.

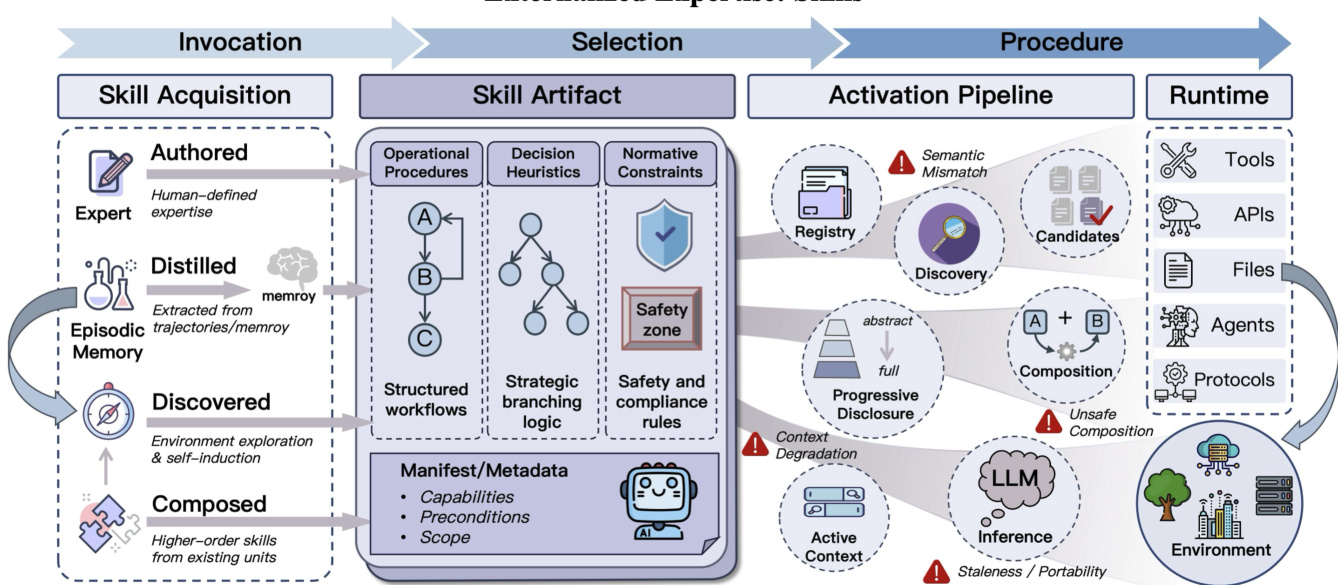

Skills represent externalized expertise, transforming procedural know-how into reusable, bounded capabilities. A skill is defined by its specification, which includes capability boundaries, scope, preconditions, execution constraints, and examples. This specification elevates a skill from an unstructured prompt to an explicit object that can be governed. Within a skill artifact, the authors distinguish three kinds of content: operational procedures, decision heuristics, and normative constraints, the last of which define the acceptable boundaries for execution.
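A skill specification of this kind could be represented as an explicit object rather than a free-form prompt. The sketch below is an assumption-laden illustration: the `SkillSpec` class, its field names, and the set-of-facts precondition check are hypothetical and are not the paper's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillSpec:
    """Hypothetical skill artifact; field names are illustrative assumptions."""
    name: str
    scope: str
    preconditions: tuple = ()   # facts that must hold before the skill may run
    procedure: tuple = ()       # ordered operational steps
    heuristics: tuple = ()      # decision heuristics for choosing among steps
    constraints: tuple = ()     # normative limits on acceptable execution
    examples: tuple = ()

    def unmet_preconditions(self, facts):
        # Preserve declaration order so the report is deterministic.
        return [p for p in self.preconditions if p not in facts]

    def is_applicable(self, facts):
        return not self.unmet_preconditions(facts)


release_notes = SkillSpec(
    name="draft_release_notes",
    scope="summarize merged changes for one release",
    preconditions=("changelog_available", "version_tagged"),
    procedure=("collect merged changes", "group by component", "write summary"),
    heuristics=("lead with user-visible changes",),
    constraints=("never include unreleased features",),
)

missing = release_notes.unmet_preconditions({"changelog_available"})
```

Because the specification is data, a harness can inspect it before execution, for instance by refusing to run a skill whose preconditions are unmet.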

Protocols provide the externalized interaction layer, translating high-level skill intent into deterministic, machine-readable action schemas. They ensure that skill execution is grounded through standardized interfaces such as tool schemas and subagent delegation contracts.
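One way to picture a tool schema is as a declared contract that every proposed call is checked against before execution. The following is a minimal sketch using only the standard library; the schema layout, the `read_file` tool, and `validate_call` are hypothetical and do not correspond to any specific protocol standard.

```python
# Hypothetical tool schema: argument names mapped to the Python type expected.
TOOL_SCHEMA = {
    "name": "read_file",
    "required": {"path": str},
    "optional": {"max_bytes": int},
}


def validate_call(schema, call):
    """Return a list of problems; an empty list means the call conforms."""
    if call.get("tool") != schema["name"]:
        return [f"unknown tool: {call.get('tool')!r}"]

    problems = []
    args = call.get("arguments", {})
    allowed = {**schema["required"], **schema["optional"]}

    # Every required argument must be present.
    for key in schema["required"]:
        if key not in args:
            problems.append(f"missing argument: {key}")

    # Every supplied argument must be declared and correctly typed.
    for key, value in args.items():
        if key not in allowed:
            problems.append(f"unexpected argument: {key}")
        elif not isinstance(value, allowed[key]):
            problems.append(
                f"wrong type for {key}: expected {allowed[key].__name__}"
            )
    return problems


good = validate_call(
    TOOL_SCHEMA, {"tool": "read_file", "arguments": {"path": "notes.txt"}}
)
bad = validate_call(
    TOOL_SCHEMA, {"tool": "read_file", "arguments": {"max_bytes": "10"}}
)
```

Rejecting a malformed call before it runs is what makes the interaction machine-checkable: the model's output is treated as a proposal that must satisfy the contract, not as an action in itself.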

These modules are integrated within the harness, which acts as a coordinated cognitive environment. In the framework diagram, the foundation model sits at the center, surrounded by the three externalization modules and three operational surfaces: Permission, Control, and Observability.
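The three operational surfaces can be illustrated as checks wrapped around every tool invocation. This sketch makes several assumptions that are not in the paper: permission is modeled as a tool allowlist, control as a step budget, and observability as an append-only trace. The `Harness` class and its method names are hypothetical.

```python
class Harness:
    """Hypothetical wrapper showing permission, control, and observability."""

    def __init__(self, allowed_tools, max_steps):
        self.allowed_tools = set(allowed_tools)  # permission surface
        self.max_steps = max_steps               # control surface
        self.steps = 0
        self.trace = []                          # observability surface

    def run_tool(self, name, fn, *args):
        # Control: stop once the step budget is spent.
        if self.steps >= self.max_steps:
            self.trace.append(("halted", name))
            raise RuntimeError("step budget exhausted")
        # Permission: only allowlisted tools may execute.
        if name not in self.allowed_tools:
            self.trace.append(("denied", name))
            raise PermissionError(f"tool not permitted: {name}")
        self.steps += 1
        result = fn(*args)
        # Observability: record each successful call for later inspection.
        self.trace.append(("ok", name))
        return result


harness = Harness(allowed_tools={"add"}, max_steps=1)
total = harness.run_tool("add", lambda a, b: a + b, 2, 3)
```

In this arrangement the model never calls a tool directly; every action passes through the harness, so denials and halts are recorded in the same trace as successes.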

The harness facilitates a continuous loop of interaction among these components. Memory supplies the situational evidence required for skill selection and protocol routing. Skills turn stored experiences into reusable procedures and invoke protocolized actions. Protocols, in turn, constrain execution and facilitate result assimilation by writing normalized outcomes back into memory. This creates a self-reinforcing cycle where execution traces and successes are continuously distilled to improve the agent's long-term capabilities.
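The loop described above can be sketched as a single cycle: memory informs skill selection, the skill emits a protocolized action, and the normalized outcome is written back to memory. Everything here is a simplifying assumption for illustration: memory is a list of strings, skills are plain dictionaries with a `trigger` keyword, and `select_skill` and `run_cycle` are hypothetical names.

```python
def select_skill(skills, memory):
    """Memory -> skill selection: pick the first skill whose trigger appears in memory."""
    for skill in skills:
        if any(skill["trigger"] in entry for entry in memory):
            return skill
    return None


def run_cycle(skills, tools, memory):
    """Run one memory -> skill -> protocol -> memory cycle; return the outcome or None."""
    skill = select_skill(skills, memory)
    if skill is None:
        return None

    # Skill -> protocol: the skill names a tool and its structured arguments.
    action = skill["action"]
    raw = tools[action["tool"]](**action["arguments"])

    # Protocol -> memory: normalize the result and write it back.
    outcome = {"skill": skill["name"], "tool": action["tool"], "result": raw}
    memory.append(f"outcome:{skill['name']}:{raw}")
    return outcome


memory = ["task: summarize report"]
skills = [{
    "name": "summarize",
    "trigger": "summarize",
    "action": {"tool": "word_count", "arguments": {"text": "a b c"}},
}]
tools = {"word_count": lambda text: len(text.split())}

outcome = run_cycle(skills, tools, memory)
```

After the cycle, the outcome sits in memory alongside the original task, where a later cycle (or a consolidation step, which this sketch omits) could draw on it.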