FlowScene: Style-Consistent Indoor Scene Generation with Multimodal Graph Rectified Flow

Zhifei Yang Guangyao Zhai Keyang Lu YuYang Yin Chao Zhang Zhen Xiao Jieyi Long Nassir Navab Yikai Wang

Abstract

Scene generation has extensive industrial applications, demanding both high realism and precise control over geometry and appearance. Language-driven retrieval methods compose plausible scenes from a large object database, but overlook object-level control and often fail to enforce scene-level style coherence. Graph-based formulations offer higher controllability over objects and inform holistic consistency by explicitly modeling relations, yet existing methods struggle to produce high-fidelity textured results, thereby limiting their practical utility. We present FlowScene, a tri-branch scene generative model conditioned on multimodal graphs that collaboratively generates scene layouts, object shapes, and object textures. At its core lies a tight-coupled rectified flow model that exchanges object information during generation, enabling collaborative reasoning across the graph. This enables fine-grained control of objects' shapes, textures, and relations while enforcing scene-level style coherence across structure and appearance. Extensive experiments show that FlowScene outperforms both language-conditioned and graph-conditioned baselines in terms of generation realism, style consistency, and alignment with human preferences.

One-sentence Summary

Researchers from Peking University and Technical University of Munich introduce FlowScene, a tri-branch generative model that leverages a tight-coupled rectified flow mechanism to collaboratively synthesize layouts, shapes, and textures from multimodal graphs, achieving superior style coherence and object-level control compared to existing language or graph-driven baselines.

Key Contributions

- The paper introduces FlowScene, a tri-branch generative model conditioned on multimodal graphs that collaboratively produces scene layouts, object shapes, and object textures to ensure fine-grained control and scene-level style coherence.

- A tight-coupled rectified flow mechanism is presented as the core engine, which exchanges node information during the sampling process to satisfy both individual object conditions and holistic scene constraints while accelerating generation compared to diffusion-based methods.

- Extensive experiments demonstrate that the method outperforms language-conditioned and graph-conditioned baselines in generation realism, style consistency, and alignment with human preferences, supported by a workflow for diverse input sources.

Introduction

Scene generation is critical for industries like interior design, VR/AR, and robotics, where applications demand high realism alongside precise control over geometry and appearance. Prior language-driven methods often fail to enforce scene-level style coherence or provide granular object control, while existing graph-based approaches struggle to produce high-fidelity textured results in an end-to-end manner. The authors introduce FlowScene, a tri-branch generative model that leverages Multimodal Graph Rectified Flow to collaboratively generate scene layouts, object shapes, and textures. By tightly coupling node information exchange during the sampling process, this approach enables fine-grained control over individual objects while ensuring consistent style across the entire scene structure and appearance.

Method

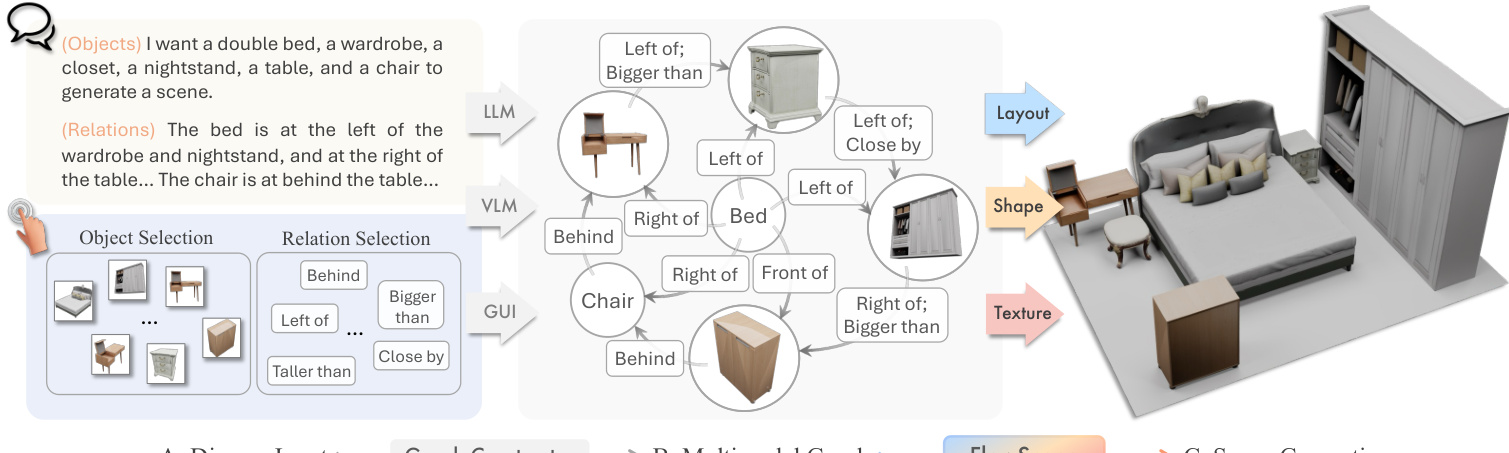

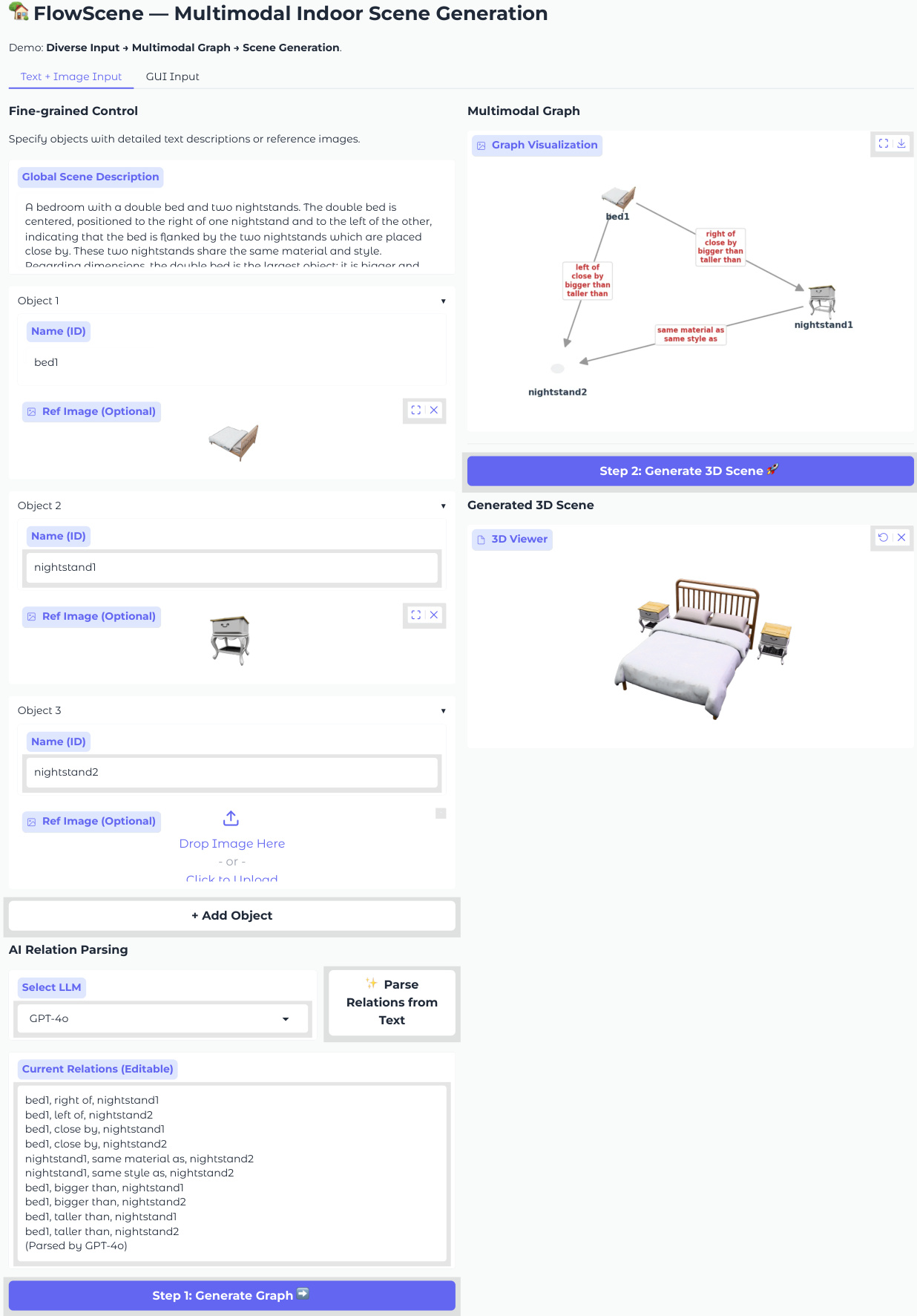

The proposed framework, FlowScene, operates as a tri-branch generator conditioned on a multimodal graph to synthesize indoor scenes. The system accepts diverse inputs, including natural language descriptions and reference images, which are parsed into a structured scene graph. This graph serves as the central conditioning mechanism for the generation process, ensuring consistency across object layouts, shapes, and textures.

The process begins with the construction of a multimodal scene graph $G_M = (V_M, E)$. Nodes in $V_M$ represent objects and can be text-only, image-only, or multimodal, aggregating learnable embeddings with foundation features from CLIP or DINOv2. Edges in $E$ encode spatial and semantic relationships such as "left of" or "bigger than." An LLM or VLM parses user inputs to populate this graph, allowing for fine-grained control over the scene configuration.
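To make the graph structure concrete, here is a minimal sketch of how such a multimodal graph could be represented. The class and field names (`Node`, `SceneGraph`, `text`, `image_feat`) are hypothetical and not taken from the paper, and the feature vector is a placeholder rather than a real CLIP or DINOv2 output.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Node:
    """One object in the scene graph (hypothetical structure)."""
    text: Optional[str] = None                # textual description, e.g. "bed"
    image_feat: Optional[List[float]] = None  # placeholder for an image embedding

    @property
    def modality(self) -> str:
        # Classify the node by which inputs are present.
        if self.text is not None and self.image_feat is not None:
            return "multimodal"
        if self.image_feat is not None:
            return "image-only"
        if self.text is not None:
            return "text-only"
        raise ValueError("a node needs text, an image feature, or both")


@dataclass
class SceneGraph:
    nodes: List[Node] = field(default_factory=list)
    # Each edge is a (subject index, predicate, object index) triplet.
    edges: List[Tuple[int, str, int]] = field(default_factory=list)

    def add_edge(self, subject: int, predicate: str, obj: int) -> None:
        n = len(self.nodes)
        if not (0 <= subject < n and 0 <= obj < n):
            raise IndexError("edge refers to a node that does not exist")
        self.edges.append((subject, predicate, obj))


graph = SceneGraph(nodes=[
    Node(text="bed"),
    Node(image_feat=[0.1, 0.2, 0.3]),
    Node(text="nightstand", image_feat=[0.4, 0.5, 0.6]),
])
graph.add_edge(2, "left of", 0)
graph.add_edge(0, "bigger than", 2)
```

Storing relations as index triplets keeps the graph easy to serialize, which suits a pipeline where an LLM or VLM emits the structure.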

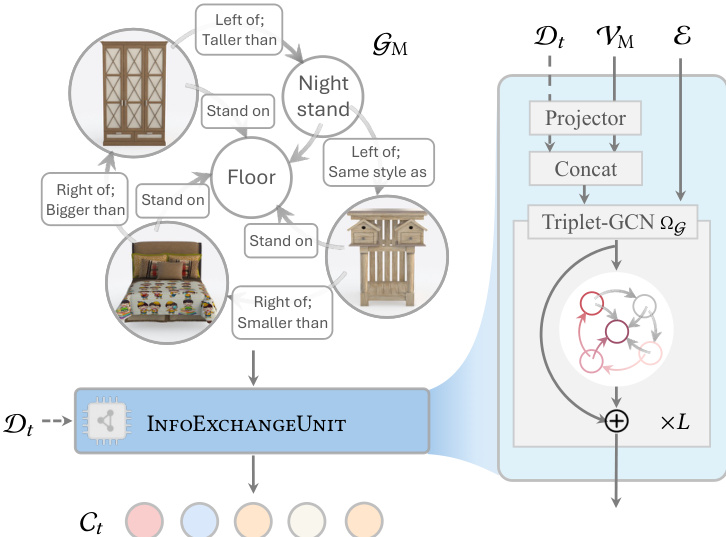

To generate the scene, the authors employ a Multimodal Graph Rectified Flow backbone. This module adapts rectified flow models to generate the content of multiple objects jointly. The core of this mechanism is the InfoExchangeUnit, which uses a Triplet-Graph Convolutional Network (Triplet-GCN) to perform message passing and feature aggregation across the graph edges. During the denoising process, this unit takes the noisy data $D_t$ at time step $t$ and projects it alongside the node features to produce time-dependent conditions $C_t$. This ensures that the generation of each object respects the global constraints imposed by the graph structure.
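The message-passing idea can be illustrated with a simplified stand-in. The sketch below is not the paper's InfoExchangeUnit: it replaces the learned networks of a Triplet-GCN with a fixed average over each (subject, predicate, object) triplet and omits the time-dependent projection of $D_t$. The function name `exchange_step` is hypothetical.

```python
def exchange_step(node_feats, edges, edge_feats):
    """One round of triplet-style message passing with mean aggregation.

    node_feats: list of feature vectors, one per node
    edges:      list of (subject index, object index) pairs
    edge_feats: list of predicate feature vectors, one per edge
    """
    dim = len(node_feats[0])
    # Every node starts from its own feature, so isolated nodes are unchanged.
    sums = [list(f) for f in node_feats]
    counts = [1] * len(node_feats)
    for (s, o), e in zip(edges, edge_feats):
        # Message built from the whole triplet; a learned MLP in a real model.
        msg = [(node_feats[s][k] + e[k] + node_feats[o][k]) / 3.0
               for k in range(dim)]
        # Both endpoints of the edge receive the message.
        for idx in (s, o):
            for k in range(dim):
                sums[idx][k] += msg[k]
            counts[idx] += 1
    # Mean over the node's own feature and all incoming messages.
    return [[v / c for v in row] for row, c in zip(sums, counts)]


updated = exchange_step(
    node_feats=[[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]],
    edges=[(0, 1)],
    edge_feats=[[0.0, 0.0]],
)
```

After one step, the two connected nodes have pulled toward each other while the unconnected third node keeps its feature, which is the basic effect that lets connected objects share information during generation.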

The training objective minimizes the least-squares error between the velocity predicted by the denoiser network and the target velocity $v$ derived from linear interpolation between data and noise. The loss function is defined as:

$$\mathcal{L}_{GRF} = \mathbb{E}_{D, C, t}\left[\left\lVert \Theta_D(D_t, C_t, t) - v \right\rVert_2^2\right]$$

where $\Theta_D$ is the denoiser network, $D_t$ is the noisy data at time $t$, and $C_t$ is the time-dependent condition. During inference, the model integrates the reverse-time ODE starting from Gaussian noise to recover the target data distribution.
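As a rough illustration of the objective and the sampling loop, the sketch below assumes one common rectified flow convention: the interpolation is $(1 - t)\,D_0 + t\,\epsilon$ with data at $t = 0$ and noise at $t = 1$, so the target velocity is $\epsilon - D_0$. The summary does not state the exact convention, and the function names are hypothetical. A fixed-step Euler solver stands in for whatever ODE solver the authors use.

```python
def interpolate(d0, eps, t):
    """Point on the straight line between data d0 (t=0) and noise eps (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(d0, eps)]


def target_velocity(d0, eps):
    """Time derivative of the interpolation; constant along the line."""
    return [b - a for a, b in zip(d0, eps)]


def flow_loss(pred_v, d0, eps):
    """Squared L2 distance between a predicted and the target velocity."""
    v = target_velocity(d0, eps)
    return sum((p - q) ** 2 for p, q in zip(pred_v, v))


def euler_sample(velocity_fn, eps, steps=10):
    """Integrate the ODE backwards in time from noise (t=1) towards data (t=0)."""
    x = list(eps)
    dt = 1.0 / steps
    for i in range(steps):
        t = 1.0 - i * dt
        v = velocity_fn(x, t)
        x = [xi - dt * vi for xi, vi in zip(x, v)]
    return x


d0 = [1.0, -2.0]
eps = [0.5, 0.5]
# An oracle that returns the exact target velocity for this single pair.
oracle = lambda x, t: target_velocity(d0, eps)
recovered = euler_sample(oracle, eps, steps=10)
```

With the oracle velocity the trajectory is a straight line, so the Euler steps land back on `d0` up to floating-point error. A trained network only approximates this field, which is why the number of steps still matters in practice.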

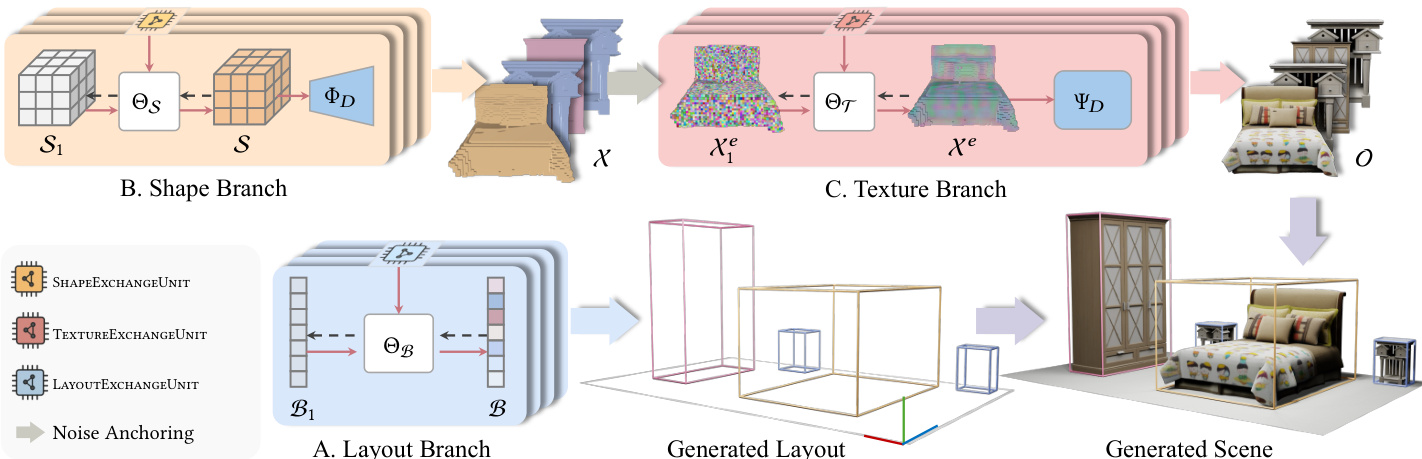

FlowScene decomposes the generation task into three coordinated branches, each backed by the graph rectified flow module. The Layout Branch generates 3D bounding boxes defined by location, size, and rotation, utilizing a specialized LayoutExchangeUnit to enforce spatial constraints. The Shape Branch operates in parallel to generate voxelized object shapes. It employs a Shape VQ-VAE to encode sparse voxel structures into compact latent codes, which are then denoised and decoded. The Texture Branch is subordinate to the shape branch, anchoring Gaussian noise to the geometric structure to generate textures. It uses a Texture VQ-VAE and a TextureExchangeUnit to ensure style consistency across objects, which is particularly crucial for text-only nodes where appearance is inferred from relational context.
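The layout representation described above can be sketched as a small data structure, together with a check of generated boxes against graph relations. This is illustrative only: the `Box` class, the `satisfies` helper, and the geometric definitions of each predicate (volume comparison for "bigger than", smaller x coordinate for "left of") are assumptions, not the paper's definitions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Box:
    location: Tuple[float, float, float]  # box center (x, y, z)
    size: Tuple[float, float, float]      # extents along the three axes
    rotation: float                       # rotation about the vertical axis, in radians

    def volume(self) -> float:
        w, h, d = self.size
        return w * h * d


def satisfies(subject: Box, predicate: str, obj: Box) -> bool:
    """Check one (subject, predicate, object) relation on generated boxes."""
    if predicate == "bigger than":
        return subject.volume() > obj.volume()
    if predicate == "left of":
        # Assumption: "left" means a smaller x coordinate of the box center.
        return subject.location[0] < obj.location[0]
    raise ValueError(f"unsupported predicate: {predicate}")


bed = Box(location=(0.0, 0.0, 0.0), size=(2.0, 0.5, 1.6), rotation=0.0)
nightstand = Box(location=(1.5, 0.0, 0.0), size=(0.5, 0.5, 0.4), rotation=0.0)
```

A check like this is useful for evaluating how well a generated layout adheres to the input graph, independent of how the layout was produced.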

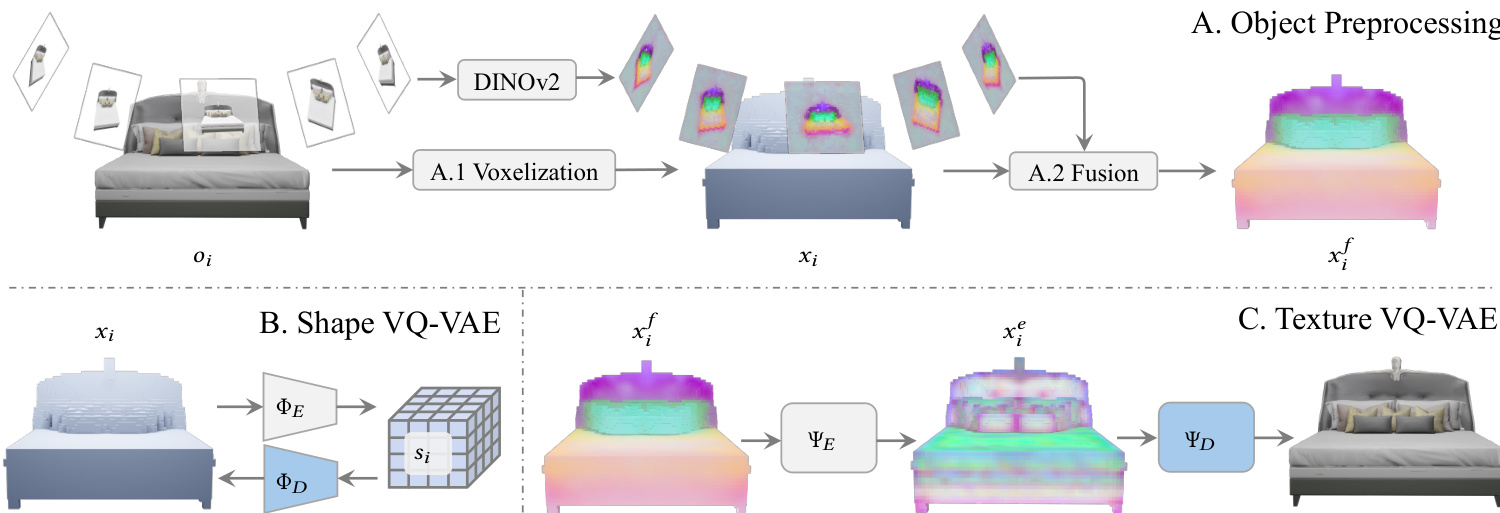

Prior to training the generative branches, objects undergo preprocessing to prepare the data for the VQ-VAEs. Objects are voxelized into sparse structures, and multi-view images are rendered to extract DINOv2 features. These features are reprojected onto the voxel grid and averaged to create feature voxels. The Shape VQ-VAE learns to reconstruct the voxelized geometry, while the Texture VQ-VAE learns to reconstruct the object appearance from the feature voxels. This preprocessing ensures that the generative models operate on efficient latent representations rather than raw high-dimensional data.
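The averaging step for feature voxels can be sketched as follows. The sketch assumes the reprojection has already been done, so each observation is a pair of a voxel index and the feature seen at that voxel from one view. The function name and the input format are hypothetical.

```python
def average_feature_voxels(observations):
    """Average per-view features that fall into the same voxel.

    observations: iterable of ((i, j, k), feature_vector) pairs, one per
    view in which the voxel is visible.
    Returns a dict mapping each occupied voxel index to its mean feature.
    """
    sums = {}
    counts = {}
    for voxel, feat in observations:
        if voxel not in sums:
            sums[voxel] = [0.0] * len(feat)
            counts[voxel] = 0
        for k, value in enumerate(feat):
            sums[voxel][k] += value
        counts[voxel] += 1
    return {voxel: [s / counts[voxel] for s in acc]
            for voxel, acc in sums.items()}


observations = [
    ((0, 0, 0), [1.0, 3.0]),  # voxel seen from view 1
    ((0, 0, 0), [3.0, 5.0]),  # same voxel seen from view 2
    ((1, 2, 3), [2.0, 2.0]),  # voxel seen from a single view
]
feature_voxels = average_feature_voxels(observations)
```

A dictionary keyed by voxel index stores only occupied voxels, which matches the sparse structure described above.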

The system supports two primary application modes for user interaction. In the language-driven mode, users provide natural language descriptions which are parsed into a scene graph by an LLM. In the interactive GUI mode, users select object candidates and define relations through a visual interface. Both modes feed into the multimodal graph, which drives the FlowScene backend to generate a high-fidelity textured 3D scene consistent with the specified configuration.

Experiment

- Experiments on SG-FRONT and 3D-FRONT datasets validate that FlowScene outperforms training-free language-based methods and graph-conditioned generative models in scene-level realism, object-level geometric fidelity, and style consistency.

- Quantitative and qualitative comparisons demonstrate that FlowScene achieves superior alignment with human preferences, producing scenes with higher visual quality and more accurate layout adherence to textual and graph constraints.

- Ablation studies confirm that the InfoExchangeUnit is critical for spatial coherence and appearance consistency, while the graph flow backbone significantly enhances shape generation quality compared to diffusion baselines.

- Robustness tests show that training with multi-view inputs enables flexible adaptation to varying visual conditions, and the model maintains high performance even with sparse relational information in the input graph.

- Additional evaluations highlight FlowScene's ability to preserve inter-shape consistency for identical objects and effectively propagate local graph updates to maintain holistic scene structure.