Complementary Reinforcement Learning

Abstract

Reinforcement Learning (RL) has emerged as a powerful paradigm for training LLM-based agents, yet it remains limited by low sample efficiency, which stems not only from sparse outcome feedback but also from the agent's inability to leverage prior experience across episodes. While augmenting agents with historical experience offers a promising remedy, existing approaches suffer from a critical weakness: the experience distilled from history is either stored statically or fails to co-evolve with the improving actor. This causes a progressive misalignment between the experience and the actor's evolving capability, diminishing the experience's utility over the course of training. Inspired by complementary learning systems in neuroscience, we present Complementary RL, which achieves seamless co-evolution of an experience extractor and a policy actor within the RL optimization loop. Specifically, the actor is optimized via sparse outcome-based rewards, while the experience extractor is optimized according to whether its distilled experiences demonstrably contribute to the actor's success, so that its experience management strategy evolves in lockstep with the actor's growing capabilities. Empirically, Complementary RL outperforms outcome-based agentic RL baselines that do not learn from experience, achieving a 10% performance improvement in single-task scenarios and exhibiting robust scalability in multi-task settings. These results establish Complementary RL as a paradigm for efficient experience-driven agent learning.

One-sentence Summary

Researchers from Alibaba Group and HKUST introduce Complementary RL, a framework that enables the seamless co-evolution of an experience extractor and a policy actor to overcome the limitations of static memory in reinforcement learning, significantly boosting sample efficiency and performance for LLM-based agents in both single- and multi-task scenarios.

Key Contributions

- The paper introduces Complementary RL, a framework that establishes a closed co-evolutionary loop between a policy actor and an experience extractor to ensure distilled knowledge evolves in lockstep with the agent's growing capabilities.

- The method optimizes the experience extractor based on the demonstrable utility of its distilled experiences in helping the actor succeed, using structured add, refine, and merge operations to automatically resolve conflicts and redundancies among stored experiences.

- Experimental results demonstrate that the approach achieves a 10% performance improvement in single-task scenarios and exhibits robust scalability in multi-task settings compared to outcome-based agentic RL baselines that do not learn from experience.
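The add, refine, and merge operations can be pictured as edits against a keyed store of experience entries. The sketch below is a minimal illustration under stated assumptions, not the paper's implementation: the `Operation` schema, the integer ids, and the rule that a merge deletes its source entries and inserts one consolidated entry are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Operation:
    """One edit proposed by the extractor (hypothetical schema, for illustration only)."""
    kind: str                                              # "add", "refine", or "merge"
    text: str                                              # new or rewritten experience text
    target_ids: List[int] = field(default_factory=list)    # entries being refined or merged


class ExperienceBank:
    """Hypothetical keyed store; each entry is a piece of distilled experience text."""

    def __init__(self) -> None:
        self.entries: Dict[int, str] = {}
        self._next_id = 0

    def _insert(self, text: str) -> int:
        entry_id = self._next_id
        self.entries[entry_id] = text
        self._next_id += 1
        return entry_id

    def apply(self, op: Operation) -> Optional[int]:
        if op.kind == "add":
            # Store a brand-new experience under a fresh id.
            return self._insert(op.text)
        if op.kind == "refine":
            # Rewrite exactly one existing entry in place, keeping its id.
            (entry_id,) = op.target_ids
            if entry_id not in self.entries:
                raise KeyError(entry_id)
            self.entries[entry_id] = op.text
            return entry_id
        if op.kind == "merge":
            # Replace several redundant or conflicting entries with one consolidated entry.
            missing = [i for i in op.target_ids if i not in self.entries]
            if missing:
                raise KeyError(missing)
            for entry_id in op.target_ids:
                del self.entries[entry_id]
            return self._insert(op.text)
        raise ValueError(f"unknown operation: {op.kind}")
```

For example, adding "Doors in KeyRoom are locked." and "Pick up the key first." as two entries and then merging them leaves a single consolidated entry under a new id. Keeping merge as an explicit operation, rather than silently appending, is one way to keep a growing bank from accumulating near-duplicates.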

Introduction

Reinforcement learning empowers Large Language Model agents but struggles with sample inefficiency due to sparse outcome feedback and an inability to effectively reuse prior experience. Existing methods that incorporate historical data often fail because they treat experience as a static resource or rely on extractors that do not adapt, leading to a misalignment between the guidance provided and the agent's growing capabilities. To address this, the authors introduce Complementary RL, a framework inspired by neuroscience that establishes a closed co-evolutionary loop between a policy actor and an experience extractor. The actor optimizes via outcome-based rewards while the extractor learns to distill high-utility experiences based on their actual contribution to the actor's success, ensuring both components evolve in lockstep to maximize learning efficiency.

Dataset

Dataset Composition and Sources

The authors curate a multi-environment dataset drawn from five distinct sources: MiniHack, WebShop, ALFWorld, SWE-Bench, and Sokoban. These environments span grid-based navigation, web interaction, household task simulation, software engineering, and combinatorial puzzle solving.

Key Details for Each Subset

- MiniHack: Adapted for LLM agents using text symbols to represent entities under a fog-of-war observation model. The authors evaluate on four specific variants of increasing difficulty: MiniHack Room (5x5 grid, directional actions), MiniHack Maze (9x9 grid, directional actions), MiniHack KeyRoom (requires key retrieval and door opening), and MiniHack River (requires pushing a boulder to cross water).

- WebShop: A simulated shopping benchmark where agents issue search queries or click buttons. The authors use the small variant configuration, restricting the product catalog to 1,000 items and sampling goals based on attribute frequency.

- ALFWorld: A text-based interactive environment for household tasks. The training split consists of 1,466 task instances, while 134 instances are held out for evaluation.

- SWE-Bench: A software engineering benchmark requiring codebase modifications to pass unit tests. The authors use the SWE-Bench-Verified subset but filter it to retain only 124 tasks on which the base model's pass@16 success rate lies between 0% and 80%, excluding instances that are trivial (solved too often) or impossible (never solved).

- Sokoban: A text-based puzzle game represented with structured symbols. Episodes are configured as 6x6 rooms containing two boxes and two targets to create a challenging combinatorial search space.
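The SWE-Bench difficulty filter amounts to sampling the base model several times per task and keeping tasks whose empirical success rate falls in a target band. The sketch below assumes 16 recorded pass/fail outcomes per task and treats the band as strictly above 0% and at most 80%; the summary does not state the exact boundary convention, and `filter_tasks` is a hypothetical helper.

```python
from typing import Dict, List


def success_rate(outcomes: List[bool]) -> float:
    """Fraction of sampled attempts whose patch passed the unit tests."""
    return sum(outcomes) / len(outcomes)


def filter_tasks(results: Dict[str, List[bool]], upper: float = 0.8, k: int = 16) -> List[str]:
    """Keep tasks the base model solves sometimes (rate > 0) but not too often (rate <= upper).

    Assumption: 0% is excluded (never solved = impossible) and `upper` is inclusive.
    """
    kept = []
    for task_id, outcomes in results.items():
        if len(outcomes) != k:
            raise ValueError(f"{task_id}: expected {k} samples, got {len(outcomes)}")
        rate = success_rate(outcomes)
        if 0.0 < rate <= upper:
            kept.append(task_id)
    return kept
```

Filtering this way concentrates training on tasks where outcome rewards actually vary across rollouts, which is where group-relative advantages carry signal.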

Model Usage and Task Mixtures

The paper defines two training mixtures drawn from the available environments:

- 3-Tasks Mixture: Combines MiniHack Room, WebShop, and ALFWorld.

- 6-Tasks Mixture: Expands the set to include MiniHack Maze, MiniHack KeyRoom, and Sokoban alongside the previous three.

During reinforcement learning, all environments use a binary reward scheme (1 for success, 0 for failure), with the exception of ALFWorld, which assigns -1 for failure.
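The two mixtures and the outcome reward fit in a few lines of configuration. In this sketch the environment names and reward values follow the summary, while the `MIXTURES` dictionary layout and the `outcome_reward` function are hypothetical.

```python
from typing import Dict, List

# Task names follow the summary; the dictionary layout itself is illustrative.
MIXTURES: Dict[str, List[str]] = {
    "3-tasks": ["MiniHack Room", "WebShop", "ALFWorld"],
    "6-tasks": ["MiniHack Room", "WebShop", "ALFWorld",
                "MiniHack Maze", "MiniHack KeyRoom", "Sokoban"],
}


def outcome_reward(env_name: str, success: bool) -> float:
    """Sparse outcome reward: 1 on success, 0 on failure, except ALFWorld, which gives -1 on failure."""
    if success:
        return 1.0
    return -1.0 if env_name == "ALFWorld" else 0.0
```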

Processing and Implementation Details

- Symbolic Representation: MiniHack and Sokoban environments are converted into text-based formats using specific symbols for agents, goals, obstacles, and objects to align with LLM input requirements.

- Action Spaces: Action definitions vary by environment, ranging from simple directional movements in MiniHack Room to complex tool usage (Bash, str_replace_editor) in SWE-Bench and natural language commands in ALFWorld.

- Reward Logic: The authors implement a standard binary reward system across most tasks, while ALFWorld utilizes a negative reward for failure to provide stronger learning signals.
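The symbolic conversion of grid environments can be sketched as rendering each cell type to a character and masking cells outside the agent's view. This is a minimal sketch under loudly stated assumptions: the symbol table, the square view window of a fixed `radius`, and the choice to show only currently visible cells (no memory of previously seen cells) are all hypothetical simplifications, not the paper's actual encoding.

```python
from typing import List, Tuple

# Hypothetical symbol table for illustration; the paper's actual symbols are not given here.
SYMBOLS = {"floor": ".", "wall": "#", "goal": ">", "key": "k", "door": "+", "boulder": "0", "water": "}"}
AGENT = "@"
UNSEEN = "?"


def render_observation(grid: List[List[str]], agent: Tuple[int, int], radius: int = 1) -> str:
    """Render a grid of cell types as text, hiding cells outside a square view window (fog of war)."""
    ar, ac = agent
    lines = []
    for r, row in enumerate(grid):
        chars = []
        for c, cell in enumerate(row):
            if (r, c) == agent:
                chars.append(AGENT)
            elif max(abs(r - ar), abs(c - ac)) <= radius:
                # Visible cell: Chebyshev distance to the agent is within the view radius.
                chars.append(SYMBOLS[cell])
            else:
                chars.append(UNSEEN)
        lines.append("".join(chars))
    return "\n".join(lines)
```

With the agent in the top-left corner of a 2×4 grid and `radius=1`, only the two nearest columns are revealed and the rest render as `?`; enlarging the radius reveals the goal symbol.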

Method

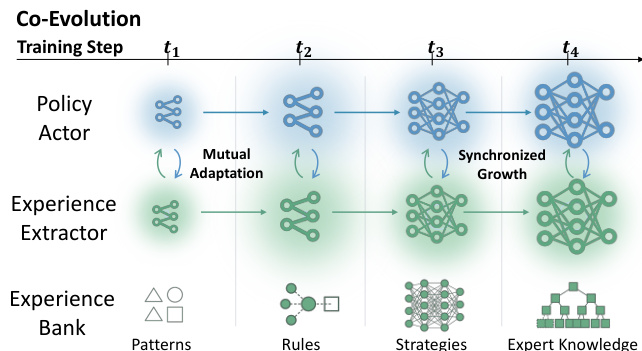

The authors propose Complementary RL, a unified framework that establishes a co-evolutionary relationship between a policy actor and an experience extractor. Unlike traditional approaches, where experience is static or collected by a fixed extractor, this method jointly optimizes both components so that they adapt to each other. As illustrated in the figure, the system evolves through distinct training steps in which the Policy Actor and the Experience Extractor grow in capability in tandem.

At the initial stage t1, both the Policy Actor and Experience Extractor are relatively simple. As training progresses through t2 and t3, the Policy Actor generates higher-quality trajectories, which in turn allows the Experience Extractor to distill more sophisticated patterns and rules into the Experience Bank. By t4, the system achieves synchronized growth, where the extractor provides expert knowledge that guides the actor, and the actor's improved performance refines the quality of the experience bank. This dynamic prevents the distributional misalignment often seen in static experience systems, where the guidance becomes stale as the policy improves.

The algorithmic design relies on a separate optimization objective for each component. The Experience Extractor πϕ distills structured experience m from completed trajectories τ and is optimized with the CISPO objective, using a binary reward determined by the outcome of the trajectory that the distilled experience subsequently guided; experience that helps the actor succeed is therefore reinforced. The Policy Actor πθ is optimized with a modified Group Relative Policy Optimization (GRPO) objective. To prevent over-reliance on external guidance, the authors partition the rollouts into experience-guided and experience-free subgroups and compute advantages independently within each subgroup. This preserves the integrity of the learning signal, so the actor internalizes capabilities of its own while still benefiting from retrieved knowledge.
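The subgroup-wise advantage computation can be illustrated with standard GRPO normalization, (r - mean) / (std + eps), applied separately to the experience-guided and experience-free rollouts of one group. This is a sketch, not the authors' code: it uses the population standard deviation, and it assumes the extractor's binary reward takes values in {0, 1}; `split_group_advantages` and `extractor_reward` are hypothetical names, and the CISPO loss itself is not shown.

```python
import statistics
from typing import List, Sequence


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantage within one group: (r - mean) / (std + eps)."""
    if not rewards:
        return []
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def split_group_advantages(rewards: Sequence[float], guided: Sequence[bool]) -> List[float]:
    """Normalize experience-guided and experience-free rollouts separately, then restore rollout order."""
    adv = [0.0] * len(rewards)
    for flag in (True, False):
        idx = [i for i, g in enumerate(guided) if g == flag]
        for i, a in zip(idx, group_advantages([rewards[i] for i in idx])):
            adv[i] = a
    return adv


def extractor_reward(guided_success: bool) -> float:
    """Assumed binary reward for a distilled experience: 1 if the trajectory it guided succeeded, else 0."""
    return 1.0 if guided_success else 0.0
```

For rewards `[1, 0, 1, 1, 0, 0]` where the first three rollouts are guided, each subgroup is centered on its own mean, so a success in the weaker experience-free subgroup receives a larger advantage (about 1.41) than a success in the guided subgroup (about 0.71). Pooling both subgroups would instead let guided successes dominate the baseline.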

To support this co-evolution efficiently, the authors implement a dual-loop infrastructure that decouples rollout collection from experience distillation. Refer to the framework diagram for the detailed architecture of the Primary Training Loop and Background Track. In the Primary Training Loop, the Policy Actor continuously interacts with the environment to collect trajectories and updates its parameters based on outcome rewards. Concurrently, the Background Track manages the Experience Manager H, which oversees the Experience Bank M. The Experience Manager coordinates the asynchronous flow of data by receiving distillation requests from completed episodes, processing them through the Experience Extractor, and updating the bank using write locks to ensure consistency. For retrieval, it employs query batching and parallel search workers under read locks to minimize latency for the actor. This asynchronous design eliminates synchronization barriers, allowing the actor and extractor to optimize on independent schedules while maintaining a globally consistent experience bank.
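The dual-loop idea can be sketched with a background thread that drains distillation requests and a readers-writer lock guarding the bank. Everything here is an illustrative assumption rather than the authors' system: `ExperienceManager` and `ReadWriteLock` are hypothetical names, the extractor is a plain callable, and retrieval is a naive substring match standing in for real search. Parallel search workers are not modeled, although several threads may call `retrieve_batch` concurrently because readers share the lock.

```python
import queue
import threading
from typing import Callable, List


class ReadWriteLock:
    """Many concurrent readers or a single writer (no writer priority, for simplicity)."""

    def __init__(self) -> None:
        self._cond = threading.Condition()
        self._readers = 0
        self._writing = False

    def acquire_read(self) -> None:
        with self._cond:
            while self._writing:
                self._cond.wait()
            self._readers += 1

    def release_read(self) -> None:
        with self._cond:
            self._readers -= 1
            if self._readers == 0:
                self._cond.notify_all()

    def acquire_write(self) -> None:
        with self._cond:
            while self._writing or self._readers > 0:
                self._cond.wait()
            self._writing = True

    def release_write(self) -> None:
        with self._cond:
            self._writing = False
            self._cond.notify_all()


class ExperienceManager:
    """Hypothetical manager: background distillation writes, batched retrieval reads."""

    def __init__(self, distill: Callable[[str], str]) -> None:
        self.bank: List[str] = []
        self.lock = ReadWriteLock()
        self.requests: queue.Queue = queue.Queue()
        self._distill = distill
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, trajectory: str) -> None:
        """Called by the actor loop when an episode finishes; returns without waiting."""
        self.requests.put(trajectory)

    def _run(self) -> None:
        while True:
            trajectory = self.requests.get()
            if trajectory is None:  # shutdown sentinel
                self.requests.task_done()
                return
            experience = self._distill(trajectory)  # slow extractor call runs outside the lock
            self.lock.acquire_write()
            try:
                self.bank.append(experience)
            finally:
                self.lock.release_write()
            self.requests.task_done()

    def retrieve_batch(self, queries: List[str]) -> List[List[str]]:
        """Answer a batch of queries under one read lock (substring match stands in for search)."""
        self.lock.acquire_read()
        try:
            return [[e for e in self.bank if q in e] for q in queries]
        finally:
            self.lock.release_read()

    def close(self) -> None:
        self.requests.put(None)
        self._worker.join()
```

The actor only pays for `submit` (a queue put) and a shared read lock during retrieval, which mirrors the summary's point that distillation runs off the rollout critical path.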

Experiment

- Complementary RL is validated across four open-ended environments, demonstrating that co-evolving a policy actor with an experience extractor consistently outperforms static baselines and methods lacking experience integration.

- The approach achieves higher success rates and improved action efficiency by guiding the actor toward more effective decision-making through distilled experience.

- Multi-task training experiments confirm that co-evolution is essential for performance, as static extractors fail to adapt to the evolving actor, leading to distributional misalignment and noisy retrieval.

- Increasing the capacity of the experience extractor further amplifies benefits, indicating that stronger models extract more generalizable and informative experience.

- The framework scales robustly as the number of training tasks increases, maintaining significant performance gains over baselines in complex, multi-task settings.

- Latency analysis shows that the asynchronous training framework introduces no appreciable overhead to rollout collection compared to standard baselines.