Attention Residuals

Abstract

Residual connections with PreNorm are standard in modern LLMs, yet they accumulate all layer outputs with fixed unit weights. This uniform aggregation causes uncontrolled hidden-state growth with depth, progressively diluting each layer's contribution. We propose Attention Residuals (AttnRes), which replaces this fixed accumulation with softmax attention over preceding layer outputs, allowing each layer to selectively aggregate earlier representations with learned, input-dependent weights. To address the memory and communication overhead of attending over all preceding layer outputs for large-scale model training, we introduce Block AttnRes, which partitions layers into blocks and attends over block-level representations, reducing the memory footprint while preserving most of the gains of full AttnRes. Combined with cache-based pipeline communication and a two-phase computation strategy, Block AttnRes becomes a practical drop-in replacement for standard residual connections with minimal overhead. Scaling law experiments confirm that the improvement is consistent across model sizes, and ablations validate the benefit of content-dependent depth-wise selection. We further integrate AttnRes into the Kimi Linear architecture (48B total / 3B activated parameters) and pre-train on 1.4T tokens, where AttnRes mitigates PreNorm dilution, yielding more uniform output magnitudes and gradient distribution across depth, and improves downstream performance across all evaluated tasks.

One-sentence Summary

The Kimi Team proposes Attention Residuals (AttnRes), a mechanism that replaces fixed unit-weight residual accumulation with learned softmax attention over preceding layer outputs to mitigate hidden-state dilution in large language models. The Block AttnRes variant reduces memory overhead while preserving most of the gains of the full method, and improves downstream task performance across model scales.

Key Contributions

- The paper introduces Attention Residuals (AttnRes), a mechanism that replaces fixed unit-weight accumulation with learned softmax attention over preceding layer outputs to enable selective, content-dependent aggregation of representations across depth.

- To address scalability, the work presents Block AttnRes, which partitions layers into blocks and attends over block-level summaries to reduce memory and communication complexity from O(Ld) to O(Nd) while preserving performance gains.

- Comprehensive experiments on a 48B-parameter model pre-trained on 1.4T tokens demonstrate that the method mitigates hidden-state dilution, yields more uniform gradient distributions, and consistently improves downstream task performance compared to standard residual connections.

Introduction

The research addresses how information is aggregated across depth in large language models. Standard residual connections with PreNorm accumulate all layer outputs with fixed unit weights, so hidden-state magnitudes grow with depth and each layer's contribution is progressively diluted. To overcome this limitation, the authors introduce Attention Residuals, which let each layer aggregate earlier representations with learned, input-dependent weights, along with a block-level variant that keeps memory and communication overhead low enough for large-scale training.

Method

The authors propose Attention Residuals (AttnRes) to address the limitations of standard residual connections in deep networks. In standard architectures, the hidden state update follows a fixed recurrence $h_l = h_{l-1} + f_{l-1}(h_{l-1})$, which unrolls to a uniform sum of all preceding layer outputs. This fixed aggregation causes hidden-state magnitudes to grow linearly with depth, diluting the contribution of individual layers. AttnRes replaces this fixed accumulation with a learned, input-dependent attention mechanism over depth.
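The fixed recurrence can be illustrated with a small NumPy sketch. The toy layer (a random linear map followed by `tanh`), the gain-free RMS normalization, and the function name `run_standard_residual` are all assumptions made for illustration and are not taken from the paper. The sketch only shows that the final hidden state equals the unweighted sum of the embedding and every layer output, and records the norm at each depth so its growth can be inspected.

```python
import numpy as np

def run_standard_residual(num_layers, dim, seed=0):
    """Apply h <- h + f(norm(h)) with toy layers (illustrative only)."""
    rng = np.random.default_rng(seed)
    h = rng.standard_normal(dim)           # stand-in for the token embedding
    outputs = [h.copy()]                   # embedding plus every layer output
    norms = [np.linalg.norm(h)]
    for _ in range(num_layers):
        # Hypothetical layer: random linear map + tanh, applied after PreNorm.
        W = rng.standard_normal((dim, dim)) / np.sqrt(dim)
        normed = h / np.sqrt(np.mean(h ** 2) + 1e-6)   # RMS norm without gain
        out = np.tanh(W @ normed)
        outputs.append(out)
        h = h + out                        # fixed unit-weight accumulation
        norms.append(np.linalg.norm(h))
    return h, outputs, norms

h, outputs, norms = run_standard_residual(num_layers=8, dim=16)
# The unrolled recurrence is a uniform sum of all stored outputs.
print(np.allclose(h, np.sum(outputs, axis=0)))
print([round(float(n), 2) for n in norms])
```

Because every term enters the sum with weight one, a single layer's share of the hidden state shrinks as more terms are added, which is the dilution effect described above.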

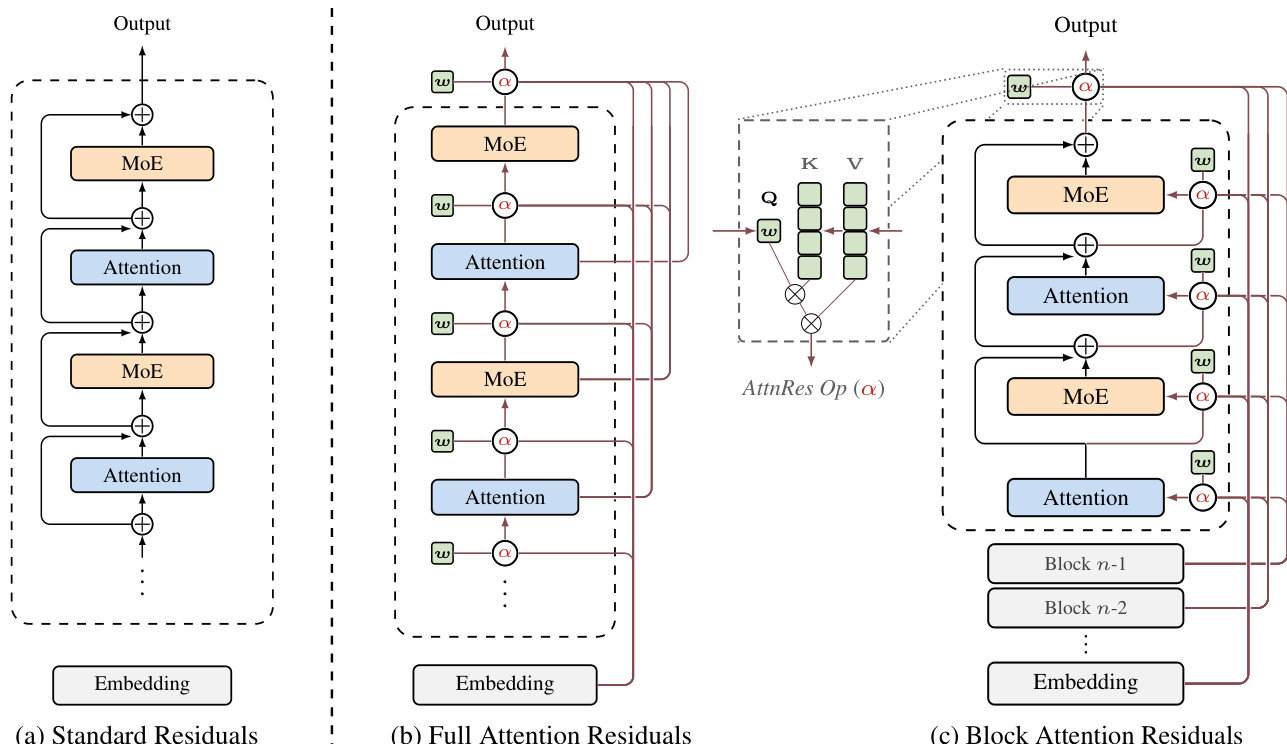

Refer to the framework diagram for a visual comparison of the residual connection variants.

As shown in the figure, the standard approach (a) simply adds the previous layer output. In contrast, Full AttnRes (b) allows each layer to selectively aggregate all previous layer outputs via learned attention weights. Specifically, the input to layer $l$ is computed as $h_l = \sum_{i=0}^{l-1} \alpha_{i \to l} \cdot v_i$, where $v_i$ represents the output of layer $i$ (or the embedding for $i = 0$). The attention weights $\alpha_{i \to l}$ are derived from a softmax over a kernel function $\phi(q_l, k_i)$, where $q_l = w_l$ is a learnable pseudo-query vector specific to layer $l$, and $k_i = v_i$ are the keys derived from previous outputs. This mechanism enables content-aware retrieval across depth with minimal parameter overhead.
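A minimal single-token sketch of this aggregation follows. It assumes the kernel is a plain dot product, so the weights are a softmax over $w_l \cdot v_i$; the source does not specify the kernel, and any normalization of keys is omitted. The names `attn_res_input` and `full_attn_res_forward` are hypothetical.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def attn_res_input(prev_outputs, w_l):
    """Compute h_l = sum_i alpha_{i->l} v_i for one token.

    prev_outputs: list of vectors v_0 .. v_{l-1} (v_0 is the embedding).
    w_l: learnable pseudo-query of layer l (here just a given vector).
    Assumption: the kernel is a dot product, so logits are w_l . v_i.
    """
    V = np.stack(prev_outputs)     # shape (l, d); keys and values coincide
    alpha = softmax(V @ w_l)       # depth-wise attention weights, sum to 1
    return alpha @ V, alpha

def full_attn_res_forward(embedding, layers, queries):
    """Run toy layers, feeding each one the attention-weighted mix of all
    earlier outputs instead of their unweighted sum."""
    outputs = [embedding]
    for f, w in zip(layers, queries):
        h, _ = attn_res_input(outputs, w)
        outputs.append(f(h))
    return outputs

v = [np.array([1.0, 0.0]), np.array([0.0, 1.0]), np.array([1.0, 1.0])]
h_uniform, a_uniform = attn_res_input(v, np.zeros(2))      # zero query
h_peaked, a_peaked = attn_res_input(v, np.array([10.0, 10.0]))
print(a_uniform, a_peaked)
```

With a zero query the weights are uniform and the result is the mean of the earlier outputs; a query aligned with one output concentrates the weight on it. Although the query is a per-layer parameter, the weights depend on the input because the keys are the layer outputs themselves.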

To make this approach scalable for large models, the authors introduce Block AttnRes (c). This variant partitions the $L$ layers into $N$ blocks. Within each block, layer outputs are reduced to a single representation via summation, and attention is applied only over the $N$ block-level representations. This reduces the memory and communication complexity from $O(Ld)$ to $O(Nd)$. The intra-block accumulation is defined as $b_n = \sum_{j \in B_n} f_j(h_j)$, where $B_n$ is the set of layers in block $n$. Inter-block attention then operates on these block summaries, allowing layers within a block to attend to previous blocks and the partial sum of the current block.
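The block variant can be sketched the same way. This is a single-token illustration under several assumptions that the text does not confirm: blocks have equal size, the embedding is treated as an initial block representation, and the kernel is a dot product. The function name `block_attn_res_forward` is hypothetical.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def block_attn_res_forward(embedding, layers, queries, block_size):
    """Toy Block AttnRes pass for one token.

    Each layer attends over the completed block sums plus the partial sum
    of its own block, so at most N + 1 vectors are kept instead of L.
    """
    blocks = [embedding]   # assumption: the embedding acts as block 0
    partial = None         # running sum b_n of the current block
    for idx, (f, w) in enumerate(zip(layers, queries)):
        sources = blocks + ([partial] if partial is not None else [])
        V = np.stack(sources)
        h = softmax(V @ w) @ V          # attention over block-level sources
        out = f(h)
        partial = out if partial is None else partial + out
        if (idx + 1) % block_size == 0: # block finished: store its sum
            blocks.append(partial)
            partial = None
    if partial is not None:             # trailing, incomplete block
        blocks.append(partial)
    return blocks

dim = 2
layers = [lambda h: np.ones(dim)] * 5   # hypothetical layers with constant output
queries = [np.zeros(dim)] * 5
blocks = block_attn_res_forward(np.zeros(dim), layers, queries, block_size=2)
print(len(blocks), blocks[1], blocks[-1])
```

With five layers and a block size of two, the stored sources are the embedding, two full block sums, and one trailing partial sum, rather than six per-layer vectors.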

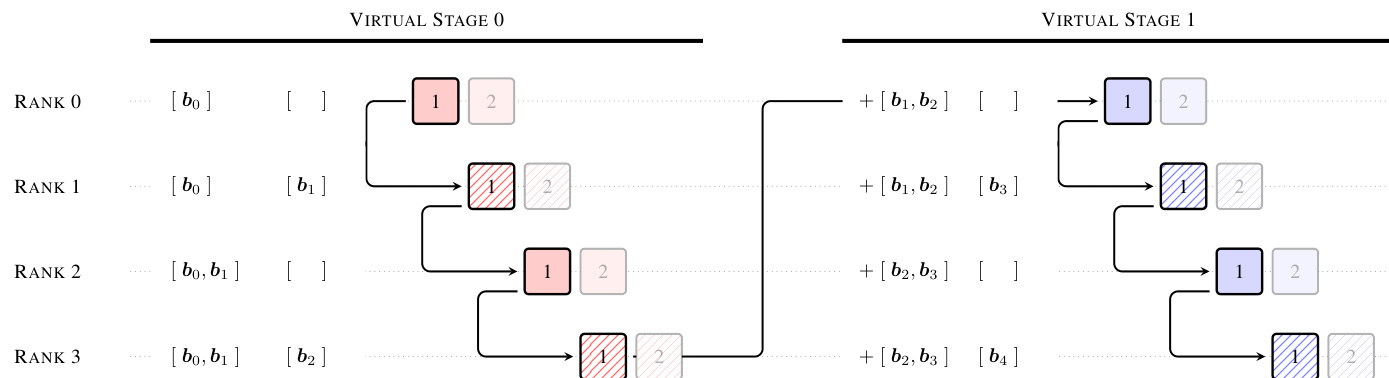

For efficient training at scale, the method incorporates specific infrastructure optimizations to handle the communication of block representations across pipeline stages. As illustrated in the pipeline communication example, cross-stage caching is employed to eliminate redundant data transfers.

In this setup, blocks received during earlier virtual stages are cached locally. Consequently, stage transitions only transmit incremental blocks accumulated since the receiver's corresponding chunk in the previous virtual stage, rather than the full history. This caching strategy reduces the peak per-transition communication cost significantly, enabling the method to function as a practical drop-in replacement for standard residual connections with marginal training overhead. Additionally, a two-phase computation strategy is used during inference to amortize cross-block attention costs, further minimizing latency.
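The caching idea can be pictured with a toy sketch in which a receiving stage keeps the block representations it has already seen and the sender transmits only the missing ones. The class `StageBlockCache`, the function `blocks_to_send`, and the use of integer block indices as keys are hypothetical; a real pipeline schedule would determine the increment from the virtual-stage layout rather than by inspecting the receiver's cache.

```python
class StageBlockCache:
    """Receiver-side cache of block representations (hypothetical helper)."""

    def __init__(self):
        self.blocks = {}                       # block index -> representation

    def receive(self, incremental):
        """Merge newly transmitted blocks and return the full history in order."""
        self.blocks.update(incremental)
        return [self.blocks[i] for i in sorted(self.blocks)]

def blocks_to_send(sender_blocks, receiver_indices):
    """Select only the blocks the receiver has not cached yet."""
    return {i: b for i, b in sender_blocks.items() if i not in receiver_indices}

# Placeholder payloads stand in for block tensors.
receiver = StageBlockCache()
receiver.receive({0: "b0", 1: "b1"})           # cached during an earlier virtual stage

sender_blocks = {0: "b0", 1: "b1", 2: "b2"}    # full history held by the sender
delta = blocks_to_send(sender_blocks, receiver.blocks.keys())
history = receiver.receive(delta)
print(sorted(delta), history)
```

In this toy case only block 2 crosses the stage boundary, while the receiver still reconstructs the full three-block history from its cache.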

Experiment

- Scaling law experiments validate that both Full and Block Attention Residuals (AttnRes) consistently outperform the PreNorm baseline across all model sizes, with Block AttnRes recovering most of the performance gains of the full variant while maintaining lower memory overhead.

- Main training results demonstrate that AttnRes mitigates the PreNorm dilution problem by bounding hidden-state growth and achieving a more uniform gradient distribution across depth, leading to improved performance on multi-step reasoning, code generation, and knowledge benchmarks.

- Ablation studies confirm that input-dependent weighting via softmax attention is critical for performance, while block-wise aggregation offers an effective trade-off between memory efficiency and the ability to access distant layers compared to sliding-window or full cross-layer access.

- Architecture sweeps reveal that AttnRes shifts the optimal design preference toward deeper and narrower networks compared to standard Transformers, indicating that the method more effectively leverages increased depth for information flow.

- Analysis of learned attention patterns shows that AttnRes preserves locality while establishing learned skip connections to early layers and the token embedding, with Block AttnRes successfully maintaining these structural benefits through implicit regularization.