Gravity Falls: A Comparative Analysis of Domain-Generation Algorithm (DGA) Detection Methods for Mobile Device Spearphishing

Adam Dorian Wong, John D. Hastings

Abstract

Mobile devices are frequent targets of eCrime threat actors through SMS spearphishing (smishing) links that leverage Domain Generation Algorithms (DGA) to rotate hostile infrastructure. Despite this, DGA research and evaluation largely emphasize malware C2 and email phishing datasets, leaving limited evidence on how well detectors generalize to smishing-driven domain tactics outside enterprise perimeters. This work addresses that gap by evaluating traditional and machine-learning DGA detectors against Gravity Falls, a new semi-synthetic dataset derived from smishing links delivered between 2022 and 2025. Gravity Falls captures a single threat actor's evolution across four technique clusters, shifting from short randomized strings to dictionary concatenation and themed combo-squatting variants used for credential theft and fee/fine fraud. Two string-analysis approaches (Shannon entropy and Exp0se) and two ML-based detectors (an LSTM classifier and COSSAS DGAD) are assessed using Top-1M domains as benign baselines. Results are strongly tactic-dependent: performance is highest on randomized-string domains but drops on dictionary concatenation and themed combo-squatting, with low recall across multiple tool/cluster pairings. Overall, both traditional heuristics and recent ML detectors are ill-suited for consistently evolving DGA tactics observed in Gravity Falls, motivating more context-aware approaches and providing a reproducible benchmark for future evaluation.

One-sentence Summary

Adam Dorian Wong and John D. Hastings of Dakota State University introduce Gravity Falls, a semi-synthetic smishing-derived DGA dataset spanning 2022–2025, revealing that both traditional heuristics and ML detectors (including LSTM and COSSAS DGAD) fail against evolving tactics like themed combo-squatting, urging context-aware defenses for mobile threat landscapes.

Key Contributions

- The paper introduces Gravity Falls, a new semi-synthetic DGA dataset derived from real-world SMS spearphishing campaigns (2022–2025), capturing a threat actor’s evolving tactics across four technique clusters—from randomized strings to themed combo-squatting—filling a gap in mobile-targeted DGA research previously dominated by malware C2 and email datasets.

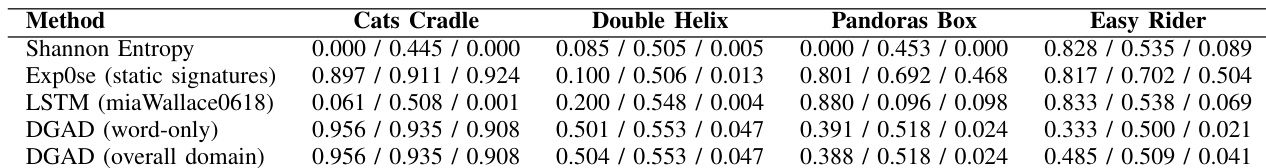

- It evaluates four DGA detectors (Shannon entropy, Exp0se, LSTM, COSSAS DGAD) against Gravity Falls using Top-1M domains as benign baselines, revealing that all methods struggle with dictionary-based and themed domains, showing tactic-dependent performance and low recall in multiple tool-cluster pairings.

- The findings demonstrate that both traditional heuristics and recent ML-based detectors are ill-suited for the dynamic, context-rich DGA patterns in smishing, motivating context-aware detection methods and providing a reproducible benchmark for future evaluation of mobile threat infrastructure.

Introduction

The authors leverage the Gravity Falls dataset—a semi-synthetic collection of smishing-driven DGA domains from 2022 to 2025—to evaluate how well traditional and machine-learning DGA detectors perform against real-world, evolving attack tactics outside enterprise networks. While prior work focuses on malware C2 or email phishing, smishing targets individuals with fewer protections and rapidly rotating domains, making detection critical yet understudied. The authors find that both entropy-based heuristics and modern ML models like LSTM and COSSAS DGAD struggle with dictionary concatenation and themed combo-squatting variants, revealing a gap in detector adaptability to tactic shifts. Their main contribution is a new benchmark dataset and evidence that current tools are insufficient for smishing-specific DGA evolution, urging context-aware detection methods.

Dataset

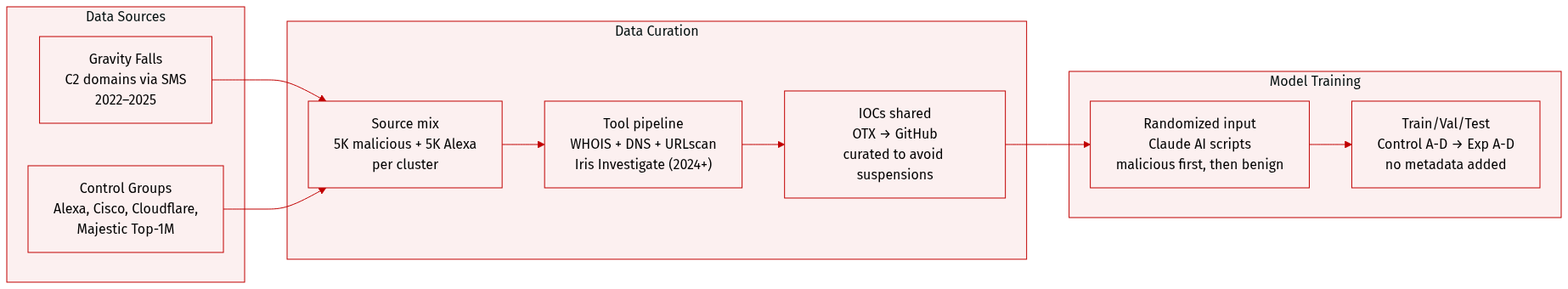

The authors use the Gravity Falls dataset, composed of domains taken from smishing links delivered via SMS between 2022 and 2025 and organized into four technique clusters that reflect the roughly annual evolution of a single threat actor's TTPs. The data is semi-synthetic, blending observed malicious domains with predicted ones used for sinkholing and measurement.

Each cluster has distinct characteristics:

- Cats Cradle (2022): Short randomized 7-character domains with common TLDs; landing pages mimicked CAPTCHA portals.

- Double Helix (2023): Dictionary-based concatenations with newer gTLDs; occasional truncations suggest encoding constraints.

- Pandoras Box (2024): Professional package-delivery lures; combo-squatting with random suffixes; heavy use of Chinese infrastructure.

- Easy Rider (2025): Government/toll-themed lures; shifted to email-to-iMessage/SMS with foreign numbers; combo-squatting stabilized.
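
The structural difference between the clusters can be illustrated with a minimal sketch. This is not the threat actor's algorithm or the authors' tooling: the word list, the brand tokens, the hyphen separator, and the suffix length are all hypothetical choices made only to show the three label shapes described above.

```python
import random
import string

# Hypothetical word and brand lists for illustration; not taken from the dataset.
WORDS = ["river", "stone", "maple", "cloud", "amber", "field"]
BRANDS = ["parcel", "tollpay"]


def random_label(rng, length=7):
    """Cats Cradle-style shape: a short randomized lowercase string."""
    return "".join(rng.choice(string.ascii_lowercase) for _ in range(length))


def dictionary_label(rng, words=WORDS):
    """Double Helix-style shape: two dictionary words concatenated."""
    return rng.choice(words) + rng.choice(words)


def combosquat_label(rng, brands=BRANDS, suffix_len=3):
    """Pandoras Box / Easy Rider-style shape: themed token plus a random suffix.

    The hyphen and the 3-character suffix are assumptions, not observed values.
    """
    suffix = "".join(rng.choice(string.ascii_lowercase) for _ in range(suffix_len))
    return f"{rng.choice(brands)}-{suffix}"


rng = random.Random(0)  # fixed seed so the sketch is repeatable
for make in (random_label, dictionary_label, combosquat_label):
    print(make.__name__, make(rng))
```

Only the first shape is dominated by random characters; the other two are built mostly from recognizable words, which is the property that the later detector results turn on.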

Control groups (10,000 domains each) were drawn from Alexa, Cisco, Cloudflare, and Majestic Top-1M lists (2017–2025), treated as benign baselines. Experimental groups combined 5,000 malicious domains from each cluster with 5,000 from Alexa Top-1M to maintain consistent size; Alexa was used for padding due to its static nature.

Data was collected via recipient-side SMS observation, followed by WHOIS lookups (via DomainTools), passive DNS queries (SecurityTrails), and URL snapshots (URLscan). From 2024 onward, Iris Investigate replaced manual workflows, enabling link graphs and structured CSV exports. IOCs were initially shared via OTX, later migrated to GitHub with curation to avoid platform suspensions.

For model evaluation, domains were shuffled using Claude AI scripts and the groups were fed into each tool in a fixed order (Control A–D, then Experimental A–D). Within the experimental groups, malicious samples were stacked before benign ones to test for potential model assimilation. No cropping or metadata construction was applied beyond the tool outputs; the authors suggest retroactive standardization via DomainTools as future work for higher fidelity.
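
The construction of one experimental group can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' scripts: the function name, the seed, and the placeholder domains are hypothetical, and sampling without replacement is an assumption about how the 5,000-domain halves were drawn.

```python
import random


def build_experimental_group(malicious, benign, n_each=5000, seed=0):
    """Return (domain, label) pairs: n_each malicious first, then n_each benign.

    Labels: 1 = malicious, 0 = benign. Each half is drawn in random order,
    but the malicious half is stacked ahead of the benign padding.
    """
    rng = random.Random(seed)
    mal = rng.sample(malicious, n_each)  # requires len(malicious) >= n_each
    ben = rng.sample(benign, n_each)     # requires len(benign) >= n_each
    return [(d, 1) for d in mal] + [(d, 0) for d in ben]


# Tiny demonstration with placeholder domains (not real dataset entries).
cluster = [f"mal{i}.example" for i in range(20)]
padding = [f"benign{i}.example" for i in range(20)]
group = build_experimental_group(cluster, padding, n_each=5)
print(group[:2], group[-2:])
```

Keeping the two halves separate in the output makes it straightforward to check whether a tool's behavior changes once the benign padding begins.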

Method

The techniques under evaluation are domain-generation tactics, not CAPTCHA generators. The CAPTCHA connection is indirect: as noted in the Dataset section, landing pages in the early clusters mimicked CAPTCHA portals, and the source describes these fake CAPTCHAs as a means of target validation.

In the first technique, Cats Cradle (2022), the generated domain labels are short randomized sequences of alphabetical characters. This summary's source gives the length as five to eight characters here and as seven characters in the dataset description. The strings carry no semantic meaning, so they resemble the high-randomness output that lexical detectors are traditionally built to catch.

The second technique, Double Helix (2023), concatenates pairs of dictionary words. The two-word structure keeps each label readable while greatly enlarging the space of possible domains, which makes the labels harder to separate from legitimate names on lexical features alone. Both techniques served the same objective: delivering victims to landing pages fronted by fake CAPTCHAs.

No architectural diagrams or training workflows are provided in the source material; the focus remains on the design and intent of the generation tactics rather than on detector implementation or evaluation infrastructure.

Experiment

- Evaluated traditional and ML-based detectors against four domain-generation tactics (Cats Cradle, Double Helix, Pandoras Box, Easy Rider), revealing strong performance only on randomized domains (Cats Cradle) and poor detection on dictionary-based or combo-squatting variants.

- Traditional detectors like Exp0se excelled at high-entropy domains but struggled with structured, dictionary-driven tactics, confirming their role as high-throughput sieves rather than comprehensive solutions.

- ML-based tools (LSTM, DGAD) showed limited generalization beyond randomized domains, indicating current models are not robust against blended, real-world smishing tactics that mix brand tokens and minor randomization.

- Defenders should adopt layered strategies: use lexical heuristics for obvious random domains, and supplement with contextual signals (message content, infrastructure, brand abuse policies) for more sophisticated tactics.

- LLMs demonstrated potential in identifying thematic patterns across clusters, suggesting future integration could enhance detection capabilities.

- Experimental limitations include semi-synthetic data, sampling duplicates, skewed benign/malicious ratios, and outdated benign baselines, all of which constrain generalizability and should be addressed in future work.
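
The lexical heuristic referred to in the bullets above rests on character-level Shannon entropy, $H = -\sum_i p_i \log_2 p_i$, where $p_i$ is the relative frequency of character $i$ in the label. A minimal sketch follows; the threshold value and the choice to score only the leftmost label are assumptions for illustration, not settings reported by the authors.

```python
import math
from collections import Counter


def shannon_entropy(label: str) -> float:
    """Character-level Shannon entropy of a string, in bits per character."""
    if not label:
        return 0.0
    n = len(label)
    counts = Counter(label)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def flag_by_entropy(domain: str, threshold: float = 2.5) -> bool:
    """Flag a domain when its leftmost label meets the entropy threshold.

    The threshold of 2.5 is a hypothetical value chosen for this sketch.
    """
    label = domain.split(".")[0]
    return shannon_entropy(label) >= threshold


for name in ("aaaaaaa.example", "abcdefg.example"):
    print(name, round(shannon_entropy(name.split(".")[0]), 3), flag_by_entropy(name))
```

Entropy of a label is bounded by $\log_2$ of its length, so a short random string cannot score far above a longer label built from ordinary words. That is one reason a single threshold serves as a coarse sieve rather than a complete detector.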

The authors evaluate four domain detection methods across four distinct domain-generation tactics, finding that performance varies significantly by tactic type. Traditional and ML-based detectors achieve high precision and accuracy on randomized domains but struggle with dictionary-based and themed combo-squatting domains. Results indicate that current tools are not robust against real-world smishing tactics that blend recognizable words with minor randomization.
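
Precision, recall, and accuracy for a single tool/cluster pairing can be computed from binary labels as in the sketch below. The function name and the toy labels are hypothetical; they are not results from the paper.

```python
def confusion_metrics(y_true, y_pred):
    """Precision, recall, and accuracy for binary labels (1 = malicious)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
    }


# Toy example: the detector flags only one of four malicious domains
# and raises no false alarms on the four benign ones.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0]
print(confusion_metrics(y_true, y_pred))
# -> {'precision': 1.0, 'recall': 0.25, 'accuracy': 0.625}
```

The toy case shows how a detector can report perfect precision while missing most malicious domains, which is why recall is reported alongside precision and accuracy.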