Command Palette

Search for a command to run...

Active Context Compression: Autonomous Memory Management in LLM Agents

Active Context Compression: Autonomous Memory Management in LLM Agents

Nikhil Verma

Abstract

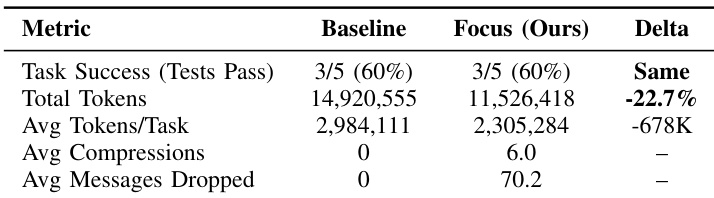

Large Language Model (LLM) agents struggle with long-horizon software engineering tasks due to "Context Bloat." As interaction history grows, computational costs explode, latency increases, and reasoning capabilities degrade due to distraction by irrelevant past errors. Existing solutions often rely on passive, external summarization mechanisms that the agent cannot control. This paper proposes Focus, an agent-centric architecture inspired by the biological exploration strategies of Physarum polycephalum (slime mold). The Focus Agent autonomously decides when to consolidate key learnings into a persistent "Knowledge" block and actively withdraws (prunes) the raw interaction history. Using an optimized scaffold matching industry best practices (persistent bash + string-replacement editor), we evaluated Focus on N=5 context-intensive instances from SWE-bench Lite using Claude Haiku 4.5. With aggressive prompting that encourages frequent compression, Focus achieves 22.7% token reduction (14.9M → 11.5M tokens) while maintaining identical accuracy (3/5 = 60% for both agents). Focus performed 6.0 autonomous compressions per task on average, with token savings up to 57% on individual instances. We demonstrate that capable models can autonomously self-regulate their context when given appropriate tools and prompting, opening pathways for cost-aware agentic systems without sacrificing task performance.

One-sentence Summary

Nikhil Verma proposes Focus, an agent-centric architecture inspired by the biological exploration strategies of Physarum polycephalum that enables LLM agents to autonomously manage context through active consolidation and pruning, achieving a 22.7% token reduction on SWE-bench Lite instances using Claude Haiku 4.5 while maintaining 60% accuracy.

Key Contributions

- The paper introduces Focus, an agent-centric architecture inspired by the biological exploration strategies of slime mold that enables intra-trajectory context management. This method allows an agent to autonomously decide when to consolidate key learnings into a persistent knowledge block while actively pruning raw interaction history.

- This work implements an optimized scaffold using industry best practices, such as a persistent bash interface and a string-replacement editor, to facilitate autonomous self-regulation of context. This approach enables the agent to summarize recent trajectories into high-level insights and physically delete redundant logs during a single task.

- Evaluations on context-intensive SWE-bench Lite instances demonstrate that the Focus agent achieves a 22.7% total token reduction while maintaining identical task accuracy compared to standard agents. Results show that the method performs an average of 6.0 autonomous compressions per task, with individual instance token savings reaching up to 57%.

Introduction

As Large Language Model (LLM) agents tackle complex, long-horizon software engineering tasks, they face significant hurdles known as context bloat. Naive use of large context windows leads to quadratic cost increases, higher latency, and context poisoning where irrelevant trial-and-error logs distract the model from the primary task. While existing solutions use external memory hierarchies or separate compression models, they often rely on passive mechanisms that the agent cannot control during a continuous task trajectory. The authors propose Focus, an agent-centric architecture inspired by the biological exploration strategies of slime mold. This approach enables the agent to perform intra-trajectory compression by autonomously summarizing key learnings into a persistent knowledge block and actively pruning raw interaction history to maintain a lean, effective context.

Method

The authors introduce a novel architecture termed the Focus Loop, which augments the standard ReAct agent loop with two specialized primitives: start_focus and complete_focus. Unlike traditional approaches that rely on external timers or fixed heuristics to manage context length, this architecture grants the agent full autonomy to determine when to initiate and conclude a focus cycle.

The process begins when the agent invokes start_focus to declare a specific investigation objective, such as debugging a database connection. This action establishes a formal checkpoint within the conversation history. Following this checkpoint, the agent enters the exploration phase, where it utilizes standard tools like reading, editing, and executing code to perform its tasks.

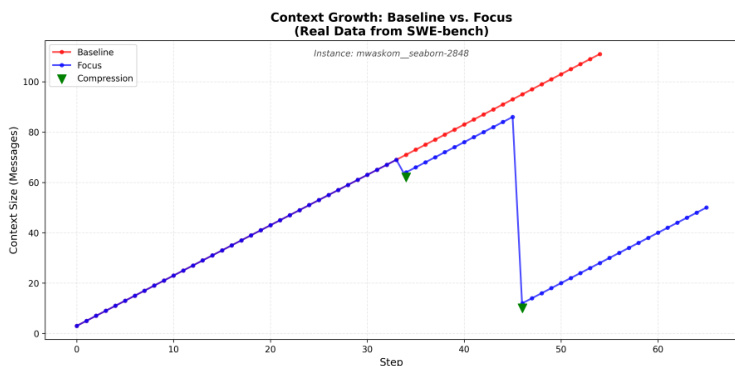

As the agent reaches a natural conclusion to a sub-task or encounters a dead end, it invokes complete_focus. During this consolidation phase, the agent generates a structured summary that captures the attempted actions, the facts or bugs learned, and the final outcome. The system then executes a withdrawal process: the generated summary is appended to a persistent "Knowledge" block located at the top of the context, and all intermediate messages between the initial checkpoint and the current step are deleted. This mechanism transforms the context from a monotonically increasing log into a "Sawtooth" pattern, where the context expands during exploration and collapses during consolidation. This allows the model to manage its own context based on the inherent structure of the task rather than arbitrary step counts.

To support this loop, the authors implement an optimized scaffold designed for software engineering tasks. This scaffold consists of two primary tools: a Persistent Bash session and a String-Replace Editor. The Persistent Bash tool provides a stateful shell environment where the working directory and environment remain consistent across multiple calls, mimicking a real-world developer terminal. To ensure precise file manipulation, the String-Replace Editor allows for targeted edits through exact string replacement, including operations such as viewing, creating, replacing, and inserting text. This approach avoids the common errors associated with full-file rewrites. The agent is guided by a system prompt to utilize these tools extensively, with a maximum limit of 150 steps, and is encouraged to implement tests before attempting to solve the primary problem.

Experiment

The Focus architecture was evaluated on five context-intensive SWE-bench Lite instances using an A/B comparison to determine if aggressive context compression could reduce token usage without sacrificing task accuracy. The experiments demonstrate that directive prompting, which enforces frequent and structured compression phases, enables significant token savings while maintaining the same success rate as a baseline agent. While the architecture is highly effective for exploration-heavy tasks, the results also indicate that compression overhead can occasionally exceed benefits in tasks requiring continuous iterative refinement.

The authors compare a baseline agent against their Focus architecture on context-intensive software engineering tasks. Results show that the Focus agent achieves significant token reductions while maintaining the same level of task success as the baseline. The Focus agent achieves a substantial reduction in total token consumption compared to the baseline. Task success rates remain identical between the baseline and the Focus architecture. The Focus approach utilizes frequent compressions and message dropping to manage context efficiency.

The authors evaluate the Focus architecture against a baseline agent on context-intensive software engineering tasks to test its ability to manage context efficiency. By utilizing frequent compressions and message dropping, the Focus approach significantly reduces total token consumption. Ultimately, the architecture maintains the same level of task success as the baseline while operating much more efficiently.