Quadratic Gradient: A Unified Framework Bridging Gradient Descent and Newton-Type Methods by Synthesizing Hessians and Gradients

John Chiang

Abstract

Accelerating the convergence of second-order optimization, particularly Newton-type methods, remains a pivotal challenge in algorithmic research. In this paper, we extend previous work on the Quadratic Gradient (QG) and rigorously validate its applicability to general convex numerical optimization problems. We introduce a novel variant of the Quadratic Gradient that departs from the conventional fixed Hessian Newton framework. Although this variant does not satisfy the convergence conditions of the fixed Hessian Newton's method, it maintains a positive-definite Hessian proxy and demonstrates comparable, or in some cases superior, empirical convergence rates relative to the original. Furthermore, we demonstrate that both the original and the proposed QG variants can be effectively applied to non-convex optimization landscapes. A key motivation of our work is the limitation of traditional scalar learning rates: we argue that a diagonal matrix can more effectively accelerate gradient elements at heterogeneous rates. We also integrate Hutchinson's Estimator to estimate the Hessian diagonal efficiently via Hessian-vector products. Notably, we demonstrate that the proposed Quadratic Gradient variant is highly effective for Deep Learning architectures, providing a robust second-order alternative to standard adaptive optimizers. Our findings establish the Quadratic Gradient as a versatile and potent framework for modern optimization.

One-sentence Summary

John Chiang proposes a novel Quadratic Gradient variant that replaces fixed Hessian Newton frameworks with a diagonal matrix approach to accelerate convergence in Deep Learning. By integrating Hutchinson's Estimator for efficient Hessian diagonal approximation, this method outperforms traditional scalar learning rates in non-convex optimization landscapes.

Key Contributions

- The paper introduces a novel variant of the Quadratic Gradient that departs from the fixed Hessian Newton framework by maintaining a positive-definite Hessian proxy, which allows for effective application to non-convex optimization landscapes where traditional methods often fail.

- This work integrates Hutchinson's Estimator to efficiently approximate the Hessian diagonal via Hessian-vector products, reducing the computational complexity of second-order information from O(n^2) to O(n) and enabling scalability for Deep Learning architectures.

- Experimental results demonstrate that the proposed Quadratic Gradient variants achieve comparable or superior convergence rates compared to original methods and standard adaptive optimizers, validating their utility as a robust second-order alternative for general convex and non-convex problems.
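The Hutchinson-style diagonal estimate mentioned in the contributions can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes the standard estimator diag(H) ≈ E[z ⊙ (Hz)] with Rademacher vectors z, and it uses a small explicit matrix `H` with a hypothetical `hvp` helper as a stand-in for the Hessian-vector products that an autodiff framework would supply. The names `hvp`, `hutchinson_diag`, `num_samples`, and `seed` are illustrative only.

```python
import random

def hvp(H, v):
    """Hessian-vector product for an explicit matrix H (stand-in for autodiff)."""
    return [sum(h_ij * v_j for h_ij, v_j in zip(row, v)) for row in H]

def hutchinson_diag(hvp_fn, n, num_samples=2000, seed=0):
    """Estimate diag(H) as the average of z * (H z) over Rademacher vectors z."""
    rng = random.Random(seed)
    est = [0.0] * n
    for _ in range(num_samples):
        # Rademacher probe: each entry is +1 or -1 with equal probability.
        z = [rng.choice((-1.0, 1.0)) for _ in range(n)]
        Hz = hvp_fn(z)  # one Hessian-vector product per probe
        for i in range(n):
            est[i] += z[i] * Hz[i]
    return [e / num_samples for e in est]

# Toy symmetric "Hessian" (assumed for illustration only).
H = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 0.5],
     [0.0, 0.5, 2.0]]

diag_est = hutchinson_diag(lambda v: hvp(H, v), n=3)
print(diag_est)  # should be close to the true diagonal [4.0, 3.0, 2.0]
```

Each probe needs only one Hessian-vector product, so the full Hessian is never formed. The off-diagonal terms average out because the probe entries are independent with zero mean.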

Introduction

Modern numerical optimization struggles to balance the scalability of first-order methods like SGD with the rapid convergence of second-order approaches like Newton's method: the former converge slowly on ill-conditioned problems, while the latter incur prohibitive computational costs and are sensitive to Hessian indefiniteness. Prior work on the Quadratic Gradient (QG) has shown promise in specific tasks like logistic regression but often relies on fixed Hessian approximations that limit general applicability and theoretical convergence guarantees. The authors propose a novel QG variant that synthesizes curvature information into a diagonal matrix to replace scalar learning rates, enabling dimension-wise acceleration without satisfying the strict constraints of traditional fixed Hessian Newton methods. By integrating Hutchinson's Estimator for efficient Hessian diagonal approximation, they establish a unified framework that extends to general convex and non-convex landscapes, offering a robust second-order alternative to standard adaptive optimizers in Deep Learning.
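To make the idea of replacing a scalar learning rate with a diagonal matrix concrete, here is a minimal sketch on an ill-conditioned quadratic. The scaling rule `1 / (eps + |h_ii|)` is an assumption chosen for illustration and is not confirmed by this summary as the authors' exact construction. The function names, the test problem, and the values of `lr` and `eps` are likewise hypothetical.

```python
def grad(x, h):
    """Gradient of f(x) = 0.5 * sum(h[i] * x[i]**2)."""
    return [hi * xi for hi, xi in zip(h, x)]

def scalar_step(x, g, lr):
    """Plain gradient descent: one learning rate shared by all coordinates."""
    return [xi - lr * gi for xi, gi in zip(x, g)]

def diagonal_step(x, g, h_diag, lr=1.0, eps=1e-8):
    """Per-coordinate step scaled by 1 / (eps + |h_ii|) (assumed form)."""
    return [xi - lr * gi / (eps + abs(hi)) for xi, gi, hi in zip(x, g, h_diag)]

h = [100.0, 1.0]      # curvatures differ by a factor of 100
x_gd = [1.0, 1.0]
x_qg = [1.0, 1.0]
for _ in range(50):
    # lr = 0.01 is limited by the stiff coordinate (stability needs lr < 2/100).
    x_gd = scalar_step(x_gd, grad(x_gd, h), lr=0.01)
    x_qg = diagonal_step(x_qg, grad(x_qg, h), h)

print("scalar lr:  ", x_gd)   # the flat coordinate is still far from 0
print("diagonal lr:", x_qg)   # both coordinates are essentially 0
```

With a single scalar rate, the stiff coordinate dictates the step size and the flat coordinate shrinks by only 1% per iteration. The diagonal scaling gives each coordinate a step matched to its own curvature.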

Dataset

The provided text only lists the titles "mnist" and "credi" under the section "Large-Scale Datasets" without offering any descriptive content. Consequently, it is not possible to draft a dataset description covering composition, sources, filtering rules, training splits, or processing strategies based on this input. No further details regarding dataset size, metadata construction, or model usage are available in the source material.

Experiment

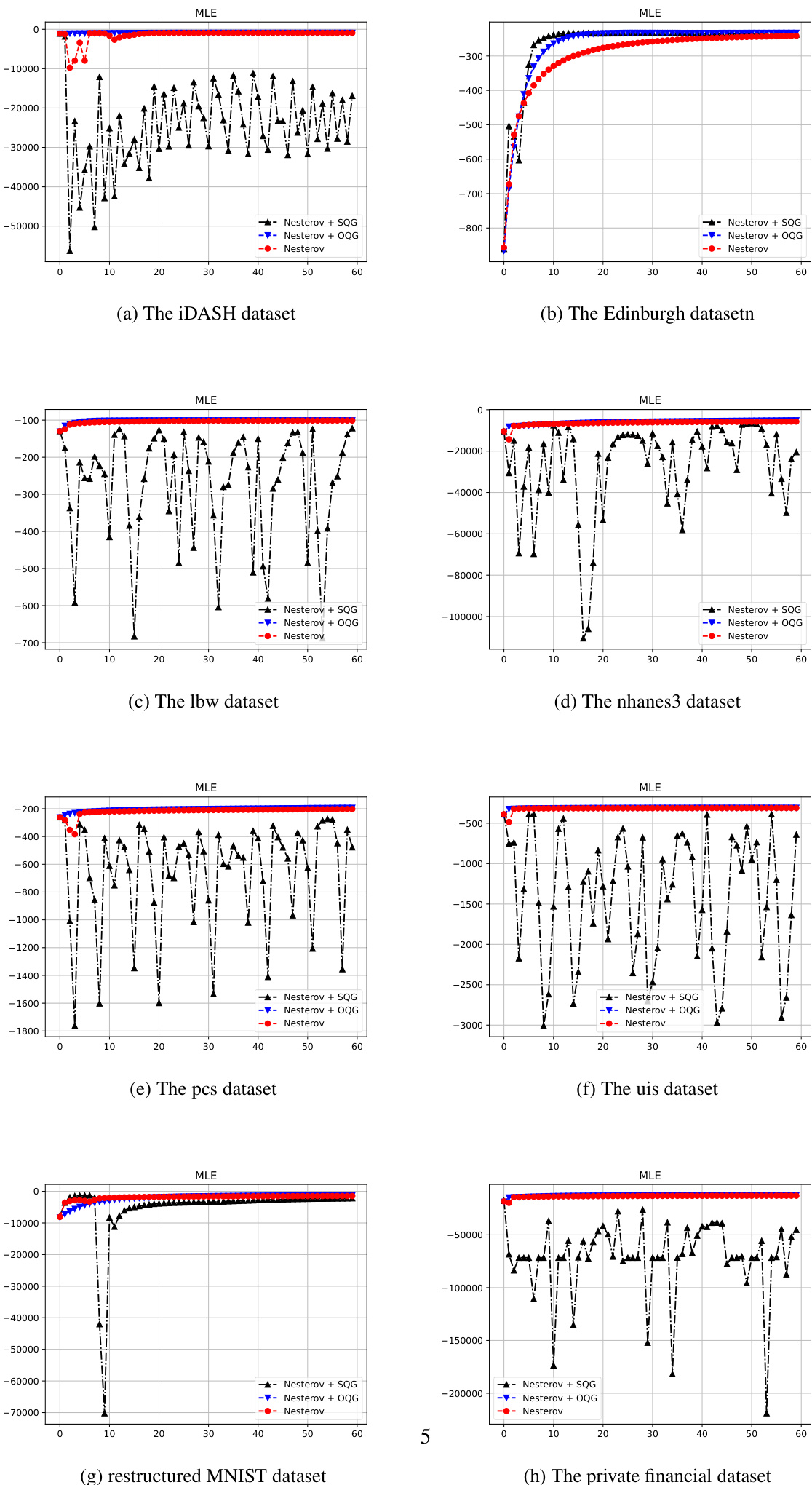

- Logistic regression experiments on multiple datasets validate that the Simplified Quadratic Gradient (SQG) framework maintains high computational efficiency and convergence speed comparable to the original method while offering a simpler construction, though it requires conservative learning rate decay when combined with Nesterov's Accelerated Gradient (NAG) to ensure stability.

- Deep learning tests on ResNet-18 demonstrate that the new QG variant achieves faster convergence than Adam and exhibits superior stability in non-convex regions compared to AdaHessian, with computational overhead comparable to that of other second-order methods.

- Benchmark evaluations on convex and non-convex functions confirm the algorithm's ability to handle dimensional scaling and navigate ill-conditioned valleys effectively.

- Saddle point analysis reveals that the QG variant overcomes the stagnation typical of first-order methods by utilizing spectral information to assign large adaptive steps along directions of minimal curvature, allowing the optimizer to escape saddle points efficiently.

- Overall, the framework successfully bridges first and second-order optimization by integrating Hessian approximations to accelerate convergence and robustly manage complex topological features in high-dimensional spaces.
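The saddle-point behavior described above can be illustrated with a toy comparison. This is a sketch under stated assumptions, not the experiment from the paper: the function f(x, y) = 0.5 * (x^2 - 0.01 * y^2), the starting point, the step counts, and the scaling rule `1 / (eps + |h_ii|)` are all chosen here for illustration.

```python
def grad(p):
    """Gradient of f(x, y) = 0.5 * (x**2 - 0.01 * y**2), a saddle at the origin."""
    x, y = p
    return [x, -0.01 * y]

H_DIAG = [1.0, -0.01]  # exact Hessian diagonal of the toy function

def gd_step(p, lr=0.1):
    """Plain gradient descent with a scalar learning rate."""
    g = grad(p)
    return [pi - lr * gi for pi, gi in zip(p, g)]

def scaled_step(p, eps=1e-8):
    """Step scaled per coordinate by 1 / (eps + |h_ii|) (assumed form)."""
    g = grad(p)
    return [pi - gi / (eps + abs(hi)) for pi, gi, hi in zip(p, g, H_DIAG)]

p_gd = [1.0, 1e-3]   # start close to the saddle along the escape direction y
p_qg = [1.0, 1e-3]
for _ in range(20):
    p_gd = gd_step(p_gd)
    p_qg = scaled_step(p_qg)

print("gradient descent y:", p_gd[1])  # barely moved away from the saddle
print("diagonal scaling y:", p_qg[1])  # grew by roughly 2x per step
```

Along the low-curvature direction y, the raw gradient is tiny, so plain gradient descent moves away from the saddle very slowly. Dividing by the absolute curvature keeps the sign of the descent direction but enlarges the step, which is why the scaled iterate escapes quickly in this toy setting.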