AI Weekly Paper Report: A Quick Look at Multimodal Memory Agents, Visual Foundation Models, Reasoning Models, and More

A key challenge in building multimodal intelligent agents is storing and using long-term memory as efficiently as humans do.

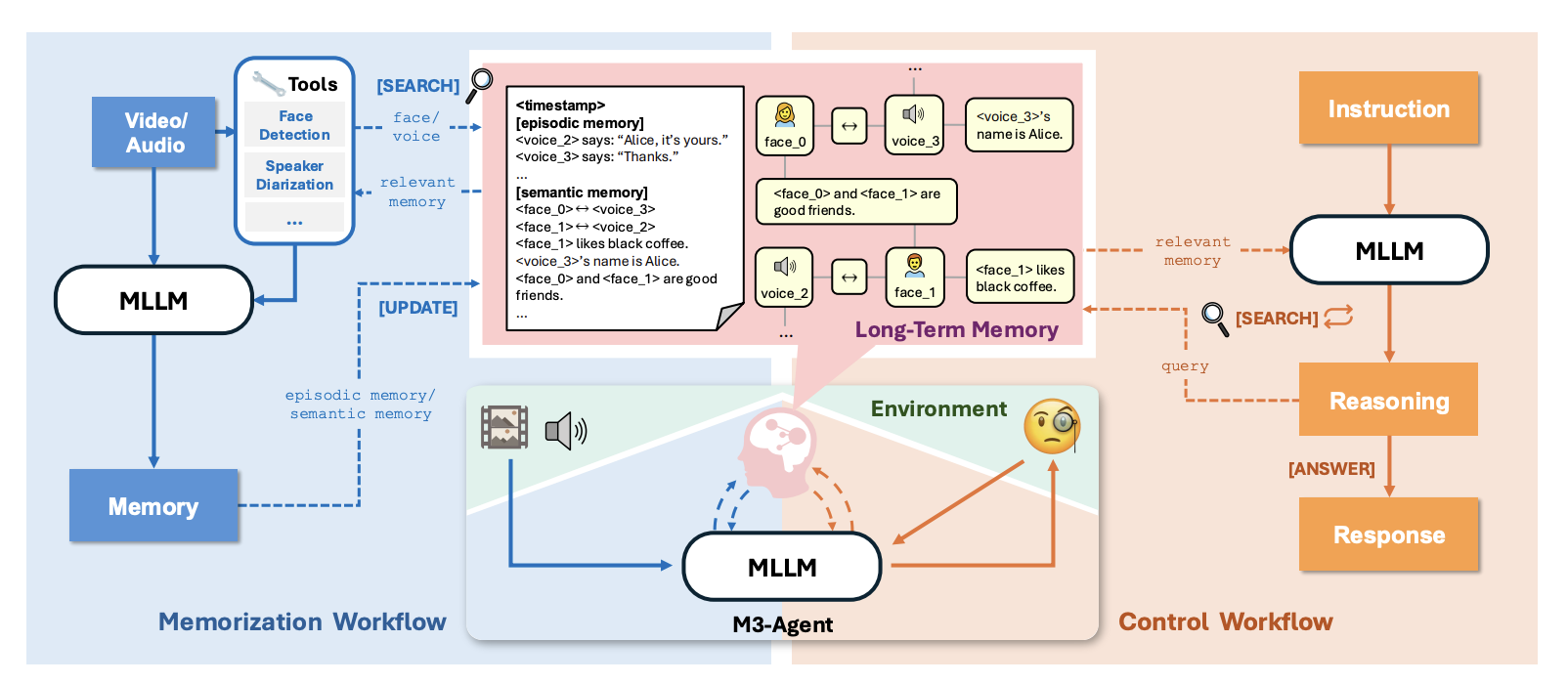

The M3-Agent framework offers a novel solution to this problem: it receives and processes real-time visual and auditory input, transforming this information into an entity-centric, multimodal long-term memory graph. It also incorporates a hierarchical mechanism for episodic and semantic memory. Compared to traditional approaches, it exhibits characteristics closer to human intelligence in terms of long-term information retention, multimodal reasoning, and memory consistency.

Paper link: https://go.hyper.ai/lGKm9

Latest AI Papers: https://hyper.ai/papers

To help more users keep up with the latest academic developments in artificial intelligence, HyperAI's official website (hyper.ai) has launched a "Latest Papers" section, which is updated daily with cutting-edge AI research papers. Here are 5 popular AI papers we recommend. We have also summarized each paper's structure as a mind map. Let’s take a quick look at this week’s cutting-edge AI achievements ⬇️

This week's paper recommendations

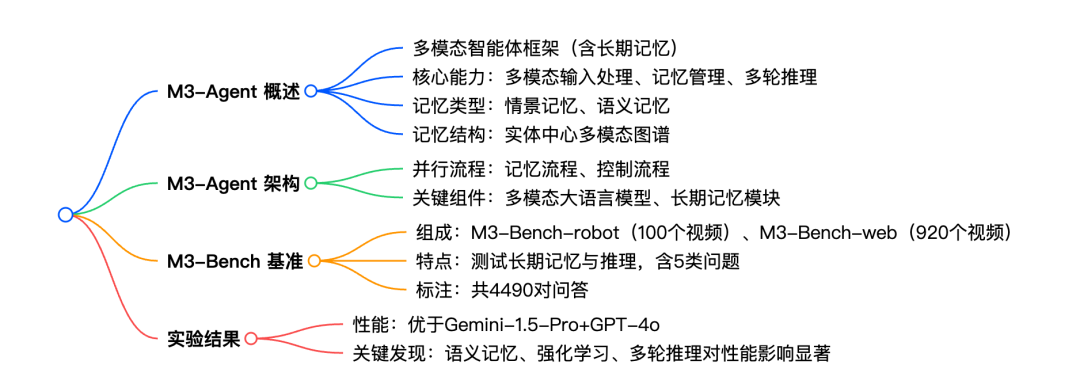

1. Seeing, Listening, Remembering, and Reasoning: A Multimodal Agent with Long-Term Memory

This paper introduces M3-Agent, a novel multimodal agent framework with long-term memory. M3-Agent processes real-time visual and auditory input and uses this information to build and update its long-term memory. In addition to episodic memory, it also develops semantic memory, accumulating world knowledge about its environment over time. Experimental results show that M3-Agent, trained with reinforcement learning, surpasses the strongest baseline, a prompting-based agent that combines Gemini-1.5-pro and GPT-4o.
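To make the idea of an entity-centric memory with separate episodic and semantic stores more concrete, here is a minimal sketch. It is not M3-Agent's implementation: the class names `EntityNode` and `MemoryGraph`, their methods, and the example identifiers such as `person_1` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class EntityNode:
    """One entity (for example a person) and everything remembered about it."""
    entity_id: str
    # Evidence grouped by modality, e.g. {"face": [...], "voice": [...]}.
    modalities: dict = field(default_factory=lambda: defaultdict(list))
    # Episodic memory: (timestamp, event description) pairs.
    episodic: list = field(default_factory=list)
    # Semantic memory: general facts accumulated about the entity.
    semantic: list = field(default_factory=list)


class MemoryGraph:
    """Hypothetical entity-centric memory: nodes are entities, edges link co-occurrences."""

    def __init__(self):
        self.nodes = {}
        self.edges = defaultdict(set)

    def _node(self, entity_id):
        # Create the node on first mention so later observations attach to it.
        if entity_id not in self.nodes:
            self.nodes[entity_id] = EntityNode(entity_id)
        return self.nodes[entity_id]

    def observe(self, entity_id, modality, evidence):
        """Attach a piece of visual or auditory evidence to an entity."""
        self._node(entity_id).modalities[modality].append(evidence)

    def add_episode(self, timestamp, description, entity_ids):
        """Record a concrete event and link the entities that took part in it."""
        for eid in entity_ids:
            self._node(eid).episodic.append((timestamp, description))
        for a in entity_ids:
            for b in entity_ids:
                if a != b:
                    self.edges[a].add(b)

    def add_fact(self, entity_id, fact):
        """Record a general fact, skipping exact duplicates."""
        node = self._node(entity_id)
        if fact not in node.semantic:
            node.semantic.append(fact)

    def recall(self, entity_id):
        """Return everything known about an entity, episodes ordered by time."""
        node = self.nodes.get(entity_id)
        if node is None:
            return {"episodic": [], "semantic": [], "related": []}
        return {
            "episodic": sorted(node.episodic),
            "semantic": list(node.semantic),
            "related": sorted(self.edges[entity_id]),
        }


# Usage with made-up observations.
graph = MemoryGraph()
graph.observe("person_1", "face", "frame_0012")
graph.observe("person_1", "voice", "clip_0003")
graph.add_episode(12.0, "person_1 hands a cup to person_2", ["person_1", "person_2"])
graph.add_fact("person_1", "prefers coffee")
memory = graph.recall("person_1")
```

Keying everything by entity means face and voice evidence for the same person land on one node, which is one plausible way to keep memories consistent across modalities.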

Paper link: https://go.hyper.ai/lGKm9

M3-Bench long-video question answering benchmark dataset: https://go.hyper.ai/FPR7q

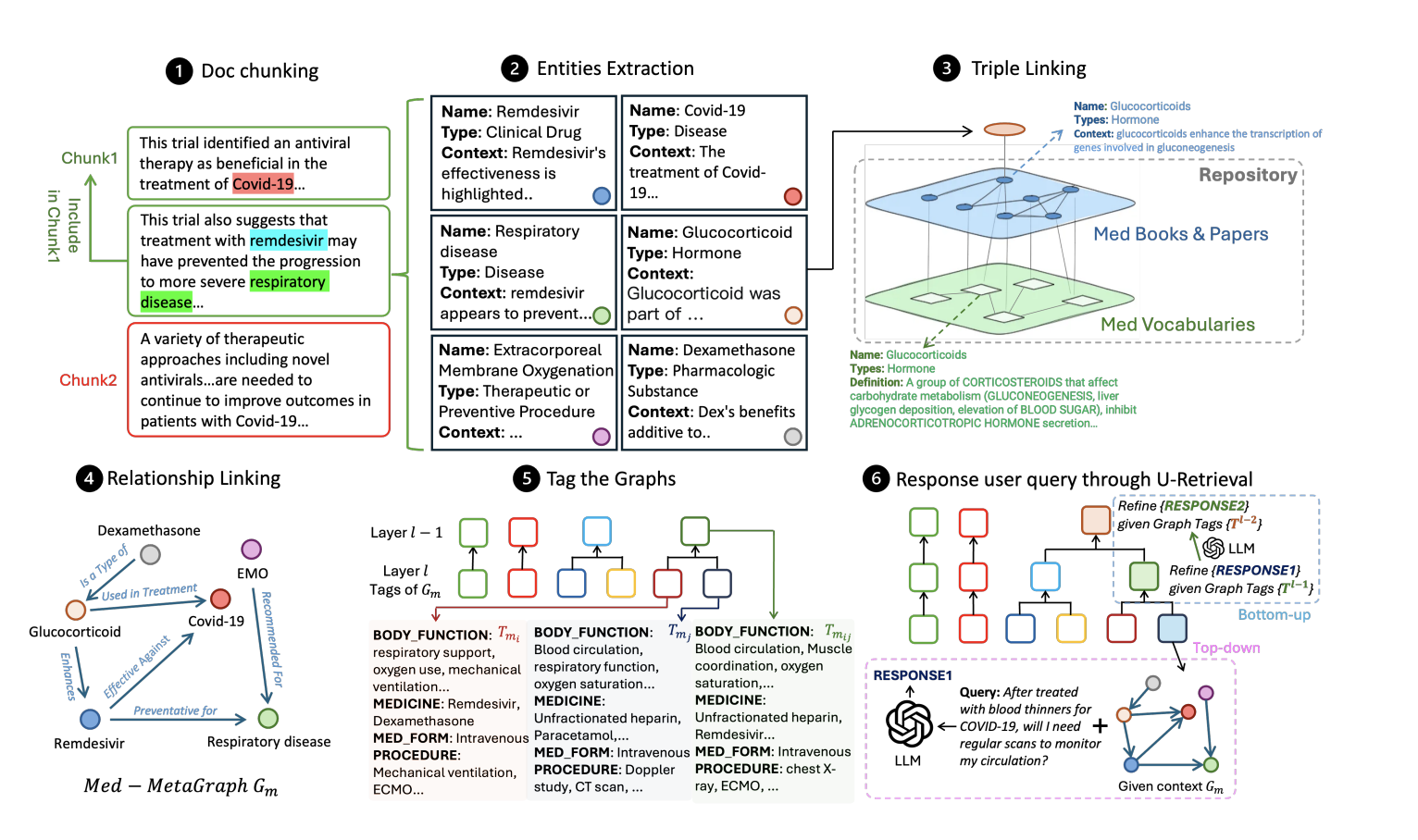

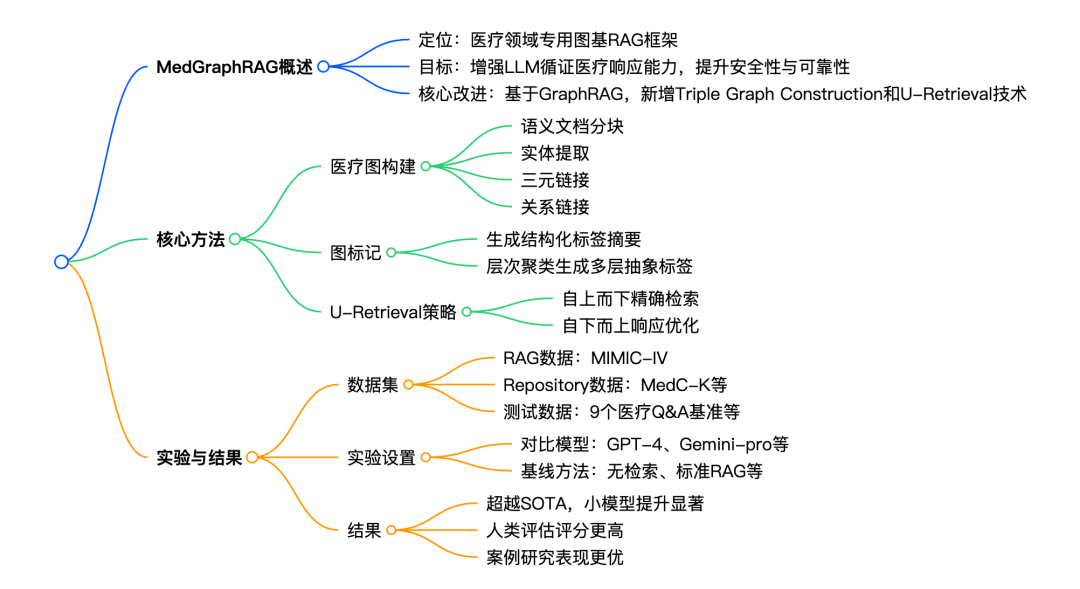

2. Medical Graph RAG: Towards Safe Medical Large Language Model via Graph Retrieval-Augmented Generation

This paper proposes MedGraphRAG, a novel graph-based retrieval-augmented generation (RAG) framework for the medical field. The framework aims to improve the ability of large language models to generate evidence-based medical answers while strengthening safety and reliability when handling private medical data. The research team introduces two techniques in the paper: triple graph construction and the U-Retrieval mechanism.

Paper link: https://go.hyper.ai/FIuKc

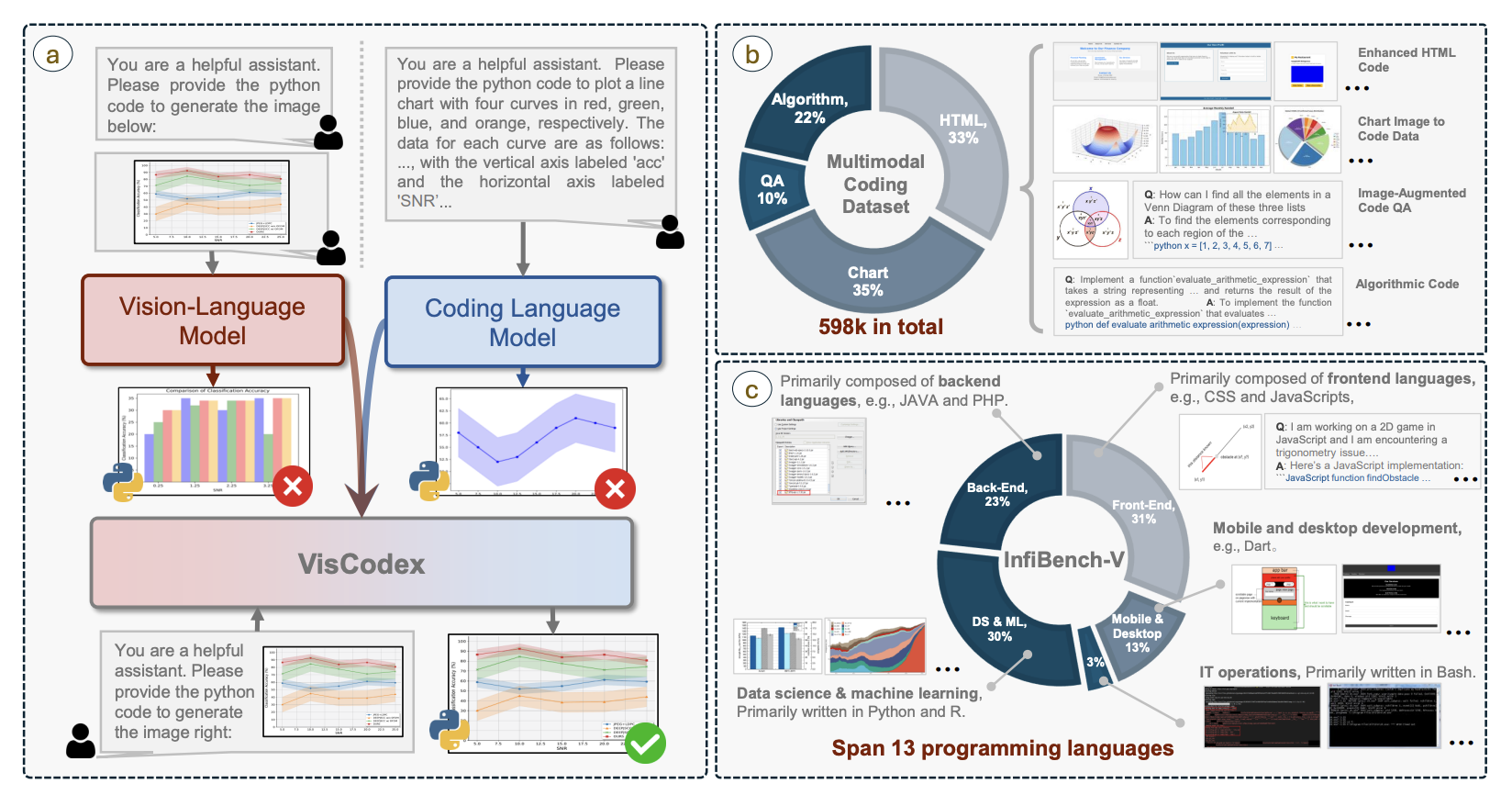

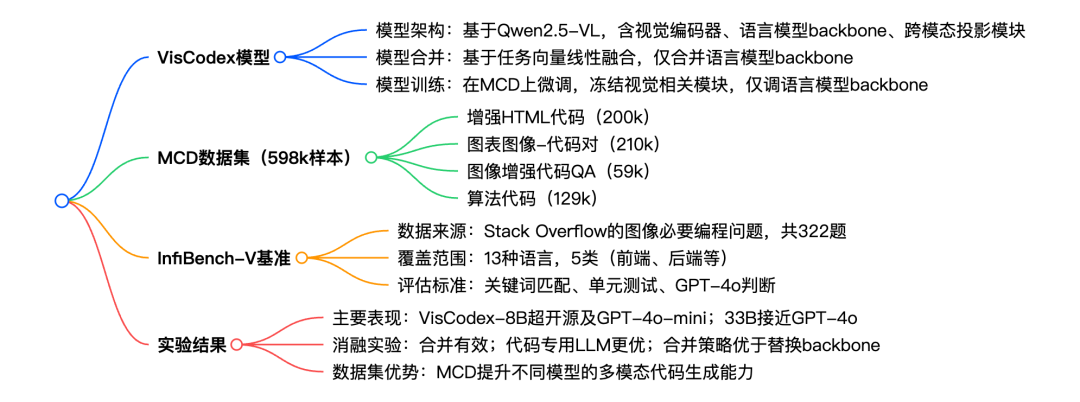

3. VisCodex: Unified Multimodal Code Generation via Merging Vision and Coding Models

This paper introduces VisCodex, a novel framework that enhances the code generation capabilities of multimodal large language models (MLLMs) by merging vision and coding models. The research team also constructed a large-scale, diverse dataset called the Multimodal Coding Dataset (MCD), which includes high-quality HTML code, chart image-code pairs, image-augmented Stack Overflow Q&A, and algorithmic problems. Experimental results show that VisCodex performs well across multiple evaluations, surpassing other open-source MLLMs and approaching the performance of the leading proprietary model GPT-4o.
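As a rough illustration of what merging two models can mean, the sketch below uses task-vector arithmetic, one common merging technique: each fine-tuned model is expressed as a difference from a shared base, and the differences are blended. Whether this matches the exact recipe in the paper is an assumption; the function names, the `weight` parameter, and the toy parameter values are hypothetical.

```python
def task_vector(finetuned, base):
    """Per-parameter difference between a fine-tuned model and its shared base."""
    return {
        name: [f - b for f, b in zip(finetuned[name], base[name])]
        for name in base
    }


def merge(base, vision_lm, code_lm, weight=0.5):
    """Blend two fine-tuned models: base + weight * tau_vision + (1 - weight) * tau_code."""
    tau_v = task_vector(vision_lm, base)
    tau_c = task_vector(code_lm, base)
    return {
        name: [
            b + weight * v + (1.0 - weight) * c
            for b, v, c in zip(base[name], tau_v[name], tau_c[name])
        ]
        for name in base
    }


# Toy "models": one parameter tensor each, flattened to a list of floats.
base = {"layer.weight": [0.0, 1.0, 2.0]}
vision_lm = {"layer.weight": [1.0, 1.0, 2.0]}  # changed the first value
code_lm = {"layer.weight": [0.0, 1.0, 4.0]}    # changed the last value

merged = merge(base, vision_lm, code_lm, weight=0.5)
print(merged)  # {'layer.weight': [0.5, 1.0, 3.0]}
```

Setting `weight` to 1.0 recovers the vision model and 0.0 recovers the coding model, so the parameter trades off how much of each model's specialization is kept.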

Paper link: https://go.hyper.ai/JJtbR

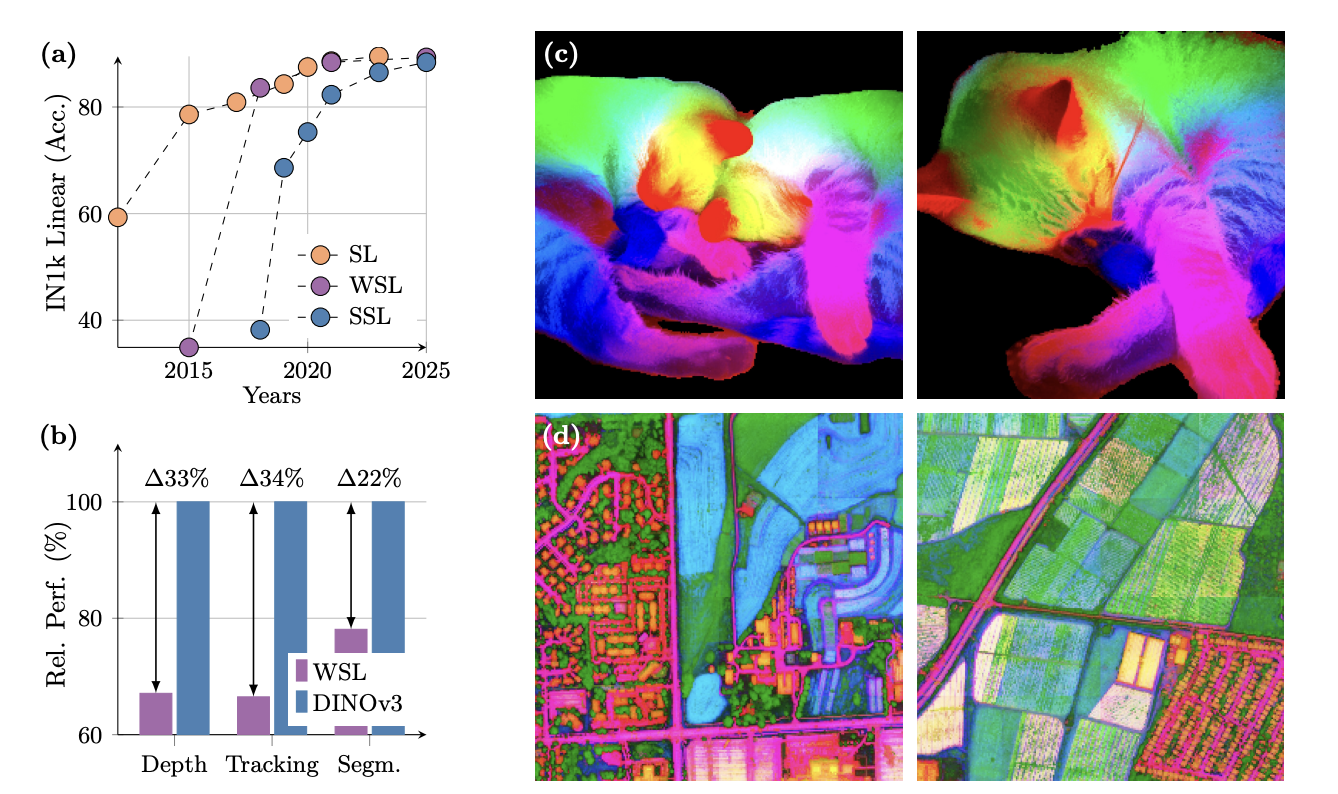

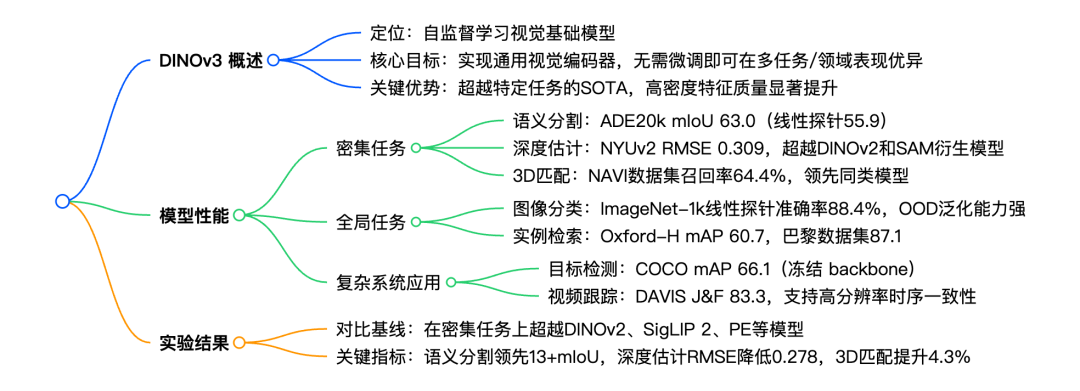

4. DINOv3

This paper proposes DINOv3, a versatile self-supervised vision foundation model designed to produce high-quality dense features. The model achieves excellent performance on a variety of vision tasks, significantly outperforming previous self-supervised and weakly supervised foundation models. The research team also released the DINOv3 model suite, which aims to provide scalable solutions for diverse resource constraints and deployment scenarios.

Paper link: https://go.hyper.ai/lUNDj

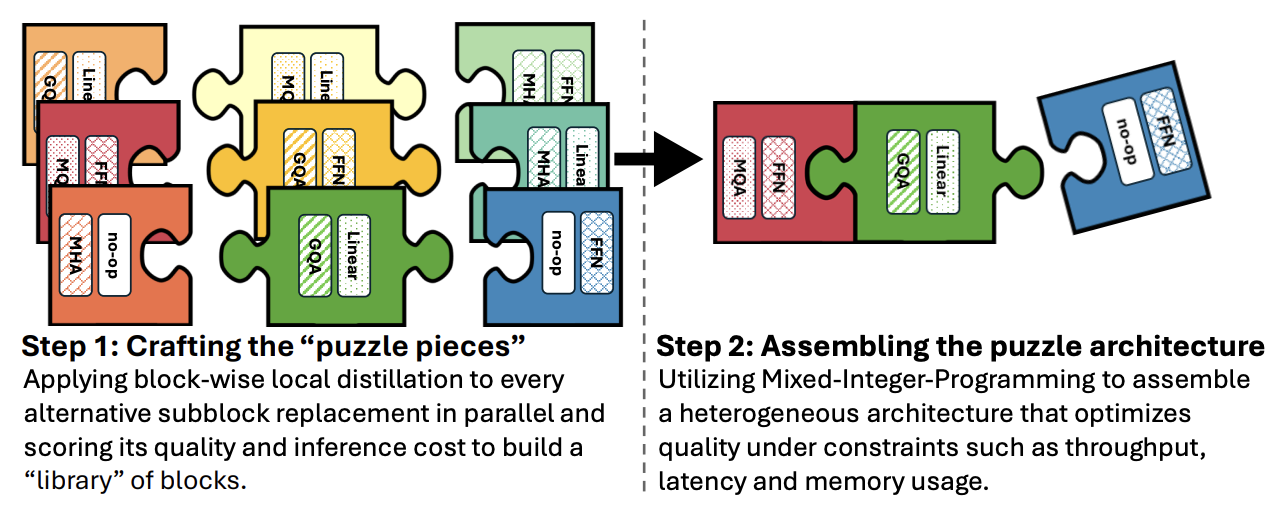

5. Llama-Nemotron: Efficient Reasoning Models

This paper introduces the Llama-Nemotron family, an open family of heterogeneous reasoning models that combines strong reasoning capabilities with efficient inference and is available under an open license for enterprise use. The family comes in three sizes: Nano (8B), Super (49B), and Ultra (253B). Their performance rivals that of state-of-the-art reasoning models, while offering superior inference throughput and memory efficiency.

Paper link: https://go.hyper.ai/3INVh

That wraps up this week’s paper recommendations. For more cutting-edge AI research papers, please visit the “Latest Papers” section of hyper.ai’s official website.

We also welcome research teams to submit high-quality results and papers to us. Those interested can add the NeuroStar WeChat account (WeChat ID: Hyperai01).

See you next week!