Command Palette

Search for a command to run...

ExoActor: Exozentrische Video-Generierung als generalisierbare interaktive Kontrolle humanoider Roboter

ExoActor: Exozentrische Video-Generierung als generalisierbare interaktive Kontrolle humanoider Roboter

Yanghao Zhou Jingyu Ma Yibo Peng Zhenguo Sun Yu Bai Börje F. Karlsson

Zusammenfassung

Humanoidere Steuerungssysteme haben in den letzten Jahren bedeutende Fortschritte erzielt. Dennoch bleibt die Modellierung flüssiger, interaktionsreicher Verhaltensweisen zwischen einem Roboter, seiner umgebenden Umwelt und aufgabenrelevanten Objekten eine grundlegende Herausforderung. Diese Schwierigkeit ergibt sich aus der Notwendigkeit, räumlichen Kontext, zeitliche Dynamik, Roboteraktionen und Aufgabenabsichten gleichzeitig in großem Maßstab zu erfassen, was sich nur unzureichend mit konventionellen Überwachungsansätzen (supervised learning) vereinbaren lässt.Wir präsentieren ExoActor, einen neuartigen Ansatz, der die Verallgemeinerungsfähigkeit großer Modelle zur Video-Generierung nutzt, um dieses Problem zu adressieren. Die zentrale Erkenntnis von ExoActor besteht darin, die Generierung von Videos aus der Third-Person-Perspektive als einheitliche Schnittstelle zur Modellierung interaktionsdynamischer Prozesse zu verwenden. Basierend auf einer Aufgabenanweisung und dem Szenenkontext synthetisiert ExoActor plausible Ausführungsabläufe, die implizit koordinierte Interaktionen zwischen Roboter, Umgebung und Objekten kodieren. Diese Videoausgabe wird anschließend durch eine Pipeline in ausführbare humanoidere Bewegungen transformiert, die menschliche Bewegungsdaten schätzt und diese über einen allgemeinen Bewegungsgenerator ausführt, wodurch eine aufgabengebundene Bewegungssequenz entsteht.Zur Validierung des vorgeschlagenen Ansatzes haben wir ihn als End-to-End-System implementiert und seine Verallgemeinerungsfähigkeit auf neue Szenarien ohne zusätzliche Datenerhebung in der realen Welt nachgewiesen. Abschließend diskutieren wir die Grenzen der aktuellen Implementierung und leiten vielversprechende Richtungen für zukünftige Forschungsarbeiten ab. Dabei veranschaulichen wir, wie ExoActor einen skalierbaren Ansatz zur Modellierung interaktionsreicher humanoider Verhaltensweisen bietet und potenziell neue Wege aufzeigt, wie generative Modelle die Entwicklung allgemeiner, humanoider Intelligenz vorantreiben können.

One-sentence Summary

The authors propose ExoActor, a framework that leverages exocentric video generation as a unified interface to implicitly encode coordinated robot-environment-object interactions, converting synthesized execution videos into executable humanoid behaviors via human motion estimation and a general motion controller to demonstrate generalization to new scenarios without additional real-world data collection.

Key Contributions

- ExoActor is an end-to-end framework that leverages large-scale video generation models to capture spatial, temporal, and task-driven dynamics between humanoid robots, environments, and objects.

- The method employs third-person video generation as a unified interface to synthesize execution processes that implicitly encode coordinated interactions, which are subsequently converted into executable behaviors through a pipeline combining human motion estimation and a general motion controller.

- The implemented system demonstrates generalization to novel scenarios without requiring additional real-world data collection.

Introduction

Humanoid robots must execute fluent, interaction-rich behaviors across unstructured environments to enable general-purpose intelligence. Prior approaches struggle to jointly model spatial context, temporal dynamics, and task intent at scale, often relying on costly real-world data collection, domain-specific tuning, or curated demonstrations that fail to generalize outside controlled settings. To overcome these bottlenecks, the authors leverage large-scale third-person video generation as a unified interface for modeling complex interaction dynamics. Their ExoActor framework synthesizes plausible execution videos from task instructions and scene context, then translates these imagined demonstrations into executable whole-body behaviors through a unified motion estimation and tracking pipeline. This design decouples high-level interaction planning from low-level control, allowing humanoid systems to adapt to novel scenarios without additional real-world data collection.

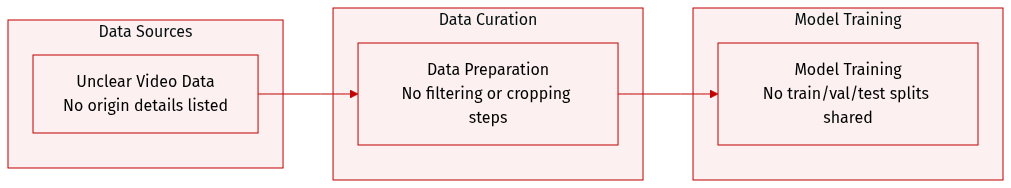

Dataset

- Dataset composition and sources: The authors do not specify the dataset composition or data sources in the provided excerpt.

- Key details for each subset: No information is given regarding subset sizes, origins, or filtering rules.

- Model usage and training configuration: The excerpt does not describe how the authors split the data, set mixture ratios, or process it for training.

- Processing and metadata construction: No cropping strategies, metadata construction steps, or additional preprocessing details are mentioned.

Method

The ExoActor framework operates as a unified pipeline that translates high-level task instructions into executable humanoid behaviors by leveraging third-person video generation as an intermediate representation. The overall system is structured into three primary stages: video generation, motion estimation, and motion execution. As shown in the framework diagram, the process begins with an initial third-person observation of the robot in the environment and a task instruction. This information is first processed through a task-to-action decomposition module, which converts the abstract goal into a temporally ordered sequence of atomic actions, providing a structured plan for the subsequent stages. The decomposed action chain, combined with the initial observation, serves as a scene- and task-aware description that guides the video generation process.

Refer to the framework diagram to understand the flow of information through the system. The next stage involves transforming the robot-centered observation into a human-compatible representation, a step essential for overcoming the embodiment mismatch between the robot and the human-centric priors of video generation models. This robot-to-human embodiment transfer, illustrated in Figure 3, converts the robot into a human subject while strictly preserving the original scene layout, camera viewpoint, body pose, orientation, scale, and robot-body proportions. This transformation ensures that the input to the video generation model aligns with its learned data distribution, thereby improving temporal consistency, reducing visual artifacts, and enhancing motion estimation accuracy. The transfer is implemented using a prompt-based image editing interface, which enforces the necessary constraints on pose, orientation, and scene preservation.

Following the embodiment transfer, the system generates a sequence of third-person action videos that are both task-consistent and compatible with the embodiment constraints of the target robot. This is achieved through a structured prompt template that explicitly encodes scene constraints, motion requirements, and task execution details. The prompt takes the initial observation and the enriched action description as inputs, organizing them into structured fields such as Shot, Scene, Motion, Execution, and End State. These fields act as explicit constraints that enforce a fixed camera viewpoint, preserve scene geometry, and encourage natural, physically plausible, and robot-aligned motion patterns, thereby reducing hallucinations and improving temporal consistency. The video generation model used is Kling, chosen for its superior stability and consistency.

The generated videos are then fed into an interaction-aware whole-body motion estimation module to recover the 3D kinematic trajectories of the human actor. This stage aims to bridge the gap between pixel-level video synthesis and structured robot control. The authors employ GENMO, a diffusion-based model, to perform whole-body motion estimation, which formulates motion estimation as a constrained generation process. Instead of direct pose regression, the model leverages video features and 2D keypoints as conditioning signals to produce temporally consistent and physically plausible 3D motion sequences. The estimated motion is represented using SMPL parameters, including joint rotations and global positions, and the model performs temporal in-filling for partially occluded frames, producing a smooth and physically consistent motion sequence. This interaction-aware motion serves as a structured representation of the generated videos and provides a reliable interface for downstream robot execution commands.

To capture the fine-grained manipulation required for object interaction, the system applies WiLoR frame by frame to the generated third-person video to estimate bilateral hand poses. This hand motion estimation, illustrated in Figure 5, tracks the left and right hand poses at the original video frame rate, yielding one prediction per video frame. Each hand is assigned a discrete interaction state, corresponding to open, half-open, and closed grasp states. The smoothed hand poses and interaction states are synchronized with the whole-body motion and mapped frame-wise to robot end-effector commands. The final interaction-aware motion representation combines the global body motion, bilateral hand poses, and discrete manipulation states, providing a comprehensive motion trajectory.

The final stage of the pipeline is the general motion tracking deployment, where the estimated motions are transformed into physically consistent and dynamically feasible control policies. The system leverages SONIC as its motion tracking controller, which takes the current robot state and a temporal window of reference motions as input. By utilizing SONIC's scaled-up architecture, the system effectively "physics-filters" the "imagined" demonstrations from the video generation model, ensuring dynamic stability and stylistic fidelity without requiring task-specific reward engineering. For hand motion deployment, the estimated hand states are mapped to Unitree's Dex3-1-compatible 7-DoF joint targets and streamed together with the SMPL body trajectory through a queue input interface to the robot. This allows the robot to execute the generated behaviors in a physically realistic manner.

Experiment

The framework is evaluated through real-world zero-shot tasks spanning basic navigation to fine-grained multi-step manipulation, validating its capacity to translate video-driven prompts into stable whole-body humanoid behaviors. Qualitative analysis indicates that while the system reliably executes complex locomotion and interaction sequences, it encounters inherent limitations in video generation fidelity, motion estimation under occlusion, and precise height alignment during object manipulation. Ablation studies further demonstrate that bypassing intermediate retargeting preserves critical spatial accuracy, task-aware camera perspectives optimize performance, and selecting specific generative and estimation models yields the most robust trade-offs between physical plausibility and computational efficiency.

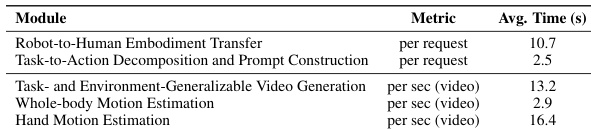

The authors evaluate ExoActor through a series of real-world experiments designed to assess its ability to generate and execute interaction-rich humanoid behaviors across tasks of increasing difficulty. The system's performance is analyzed in terms of computational efficiency and the time required for different modules, with a focus on video generation, motion estimation, and task decomposition. The system's overall performance is influenced by the time required for video generation and motion estimation, with hand motion estimation being the most time-consuming module. Task decomposition and prompt construction are relatively fast compared to other modules, indicating efficient processing for high-level task planning. Video generation and whole-body motion estimation require more time per video, suggesting higher computational demands for these stages.

The authors evaluate ExoActor through a series of real-world experiments designed to validate its capacity to generate and execute complex humanoid interactions across tasks of escalating difficulty. Performance analysis reveals that while high-level task planning remains computationally efficient, hand motion estimation serves as the primary processing bottleneck. Ultimately, the findings indicate that generating detailed video sequences and whole-body movements demands significantly more resources, highlighting a clear trade-off between behavioral complexity and system efficiency.