Image Generators are Generalist Vision Learners

Abstract

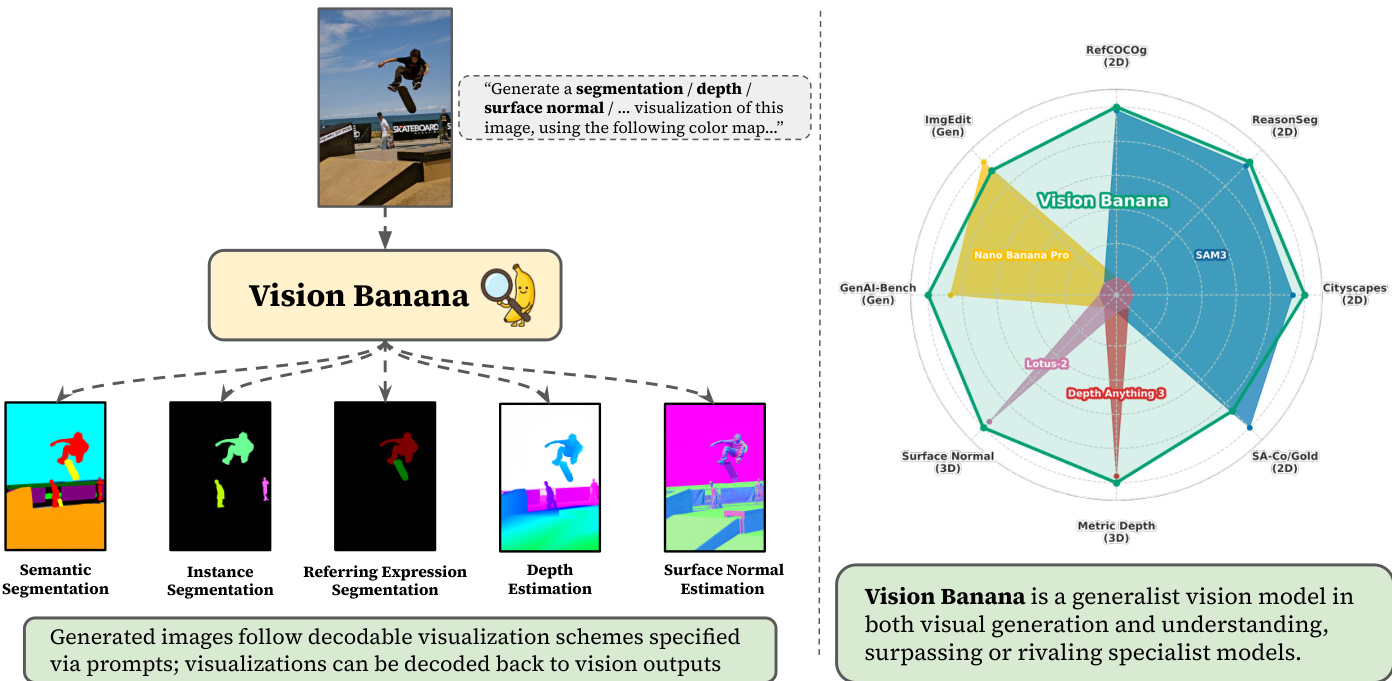

Recent work shows that image and video generators exhibit zero-shot visual understanding behaviors, reminiscent of how large language models (LLMs) develop emergent language understanding and reasoning capabilities through generative pretraining. While it has long been conjectured that the ability to create visual content implies an ability to understand it, there has so far been limited evidence that generative vision models have developed strong understanding capabilities. In this work, we demonstrate that image generation training plays a role similar to LLM pretraining, enabling models to learn powerful and general visual representations that yield state-of-the-art performance on various vision tasks.

We introduce Vision Banana, a generalist model built by instruction-tuning Nano Banana Pro (NBP) on a mixture of its original training data and a small amount of vision task data. By parameterizing the output space of vision tasks as RGB images, we seamlessly reframe perception as image generation. Our generalist model, Vision Banana, achieves state-of-the-art results on a variety of vision tasks involving both 2D and 3D understanding, beating or rivaling zero-shot domain specialists, including Segment Anything Model 3 on segmentation tasks and the Depth Anything series on metric depth estimation.

We show that these results can be achieved with lightweight instruction-tuning without sacrificing the base model's image generation capabilities. The superior results suggest that image generation pretraining acts as a generalist vision learner. They also show that image generation serves as a unified and universal interface for vision tasks, comparable to the role of text generation in language understanding and reasoning. We could be witnessing a major paradigm shift in computer vision, in which generative vision pretraining takes a central role in building foundational vision models for both generation and understanding.

One-sentence Summary

This work introduces Vision Banana, a generalist model built by lightweight instruction-tuning Nano Banana Pro to parameterize vision task outputs as RGB images and reframe perception as image generation, achieving state-of-the-art results on 2D and 3D tasks by rivaling zero-shot domain-specialists such as Segment Anything Model 3 and the Depth Anything series without sacrificing generation capabilities, demonstrating that generative pretraining serves as a unified interface for foundational vision models.

Key Contributions

- The paper introduces Vision Banana, a generalist model built by instruction-tuning Nano Banana Pro on a mixture of original training data and vision task data. This approach parameterizes the output space of vision tasks as RGB images to seamlessly reframe perception as image generation.

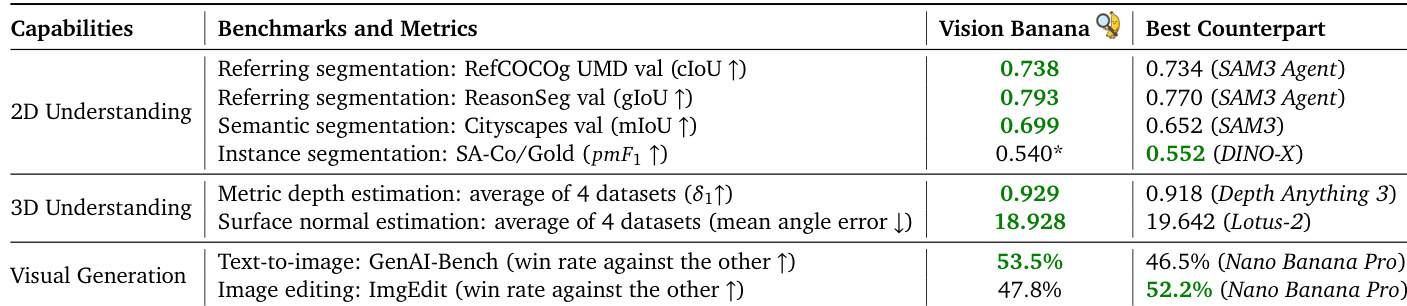

- Experiments demonstrate that Vision Banana achieves state-of-the-art results on a variety of vision tasks involving both 2D and 3D understanding. The model beats or rivals zero-shot domain specialists, including Segment Anything Model 3 on segmentation tasks and the Depth Anything series on metric depth estimation.

- The work shows that image generation training serves a role similar to LLM pretraining, allowing models to learn powerful and general visual representations. These results indicate that image generation acts as a unified interface for vision tasks while preserving the base model's image generation capabilities through lightweight instruction-tuning.

Introduction

Recent image and video generators exhibit emergent visual understanding behaviors reminiscent of large language models, yet generative vision models have historically lagged behind specialized discriminative approaches. Previous efforts to adapt these generators to specific tasks often fell short of state-of-the-art results or required architectural modifications that compromised the model's generality. To address this, the authors introduce Vision Banana, a generalist model built by instruction-tuning a pretrained image generator to parameterize vision task outputs as RGB images. This lightweight tuning enables state-of-the-art performance on diverse 2D and 3D understanding tasks without sacrificing the base model's image generation capabilities, positioning generative pretraining as a unified foundation for visual intelligence.

Method

The authors construct Vision Banana by instruction-tuning their base model, Nano Banana Pro, to rigorously investigate and benchmark zero-shot capabilities in generating visualizations for visual understanding tasks. The core objective is to align the model to generate visualizations that can be decoded back to visual task outputs for quantitative evaluation. For instance, a generated depth heatmap must be invertible back to physical depth values. To achieve this, the authors mix vision task data into Nano Banana Pro's training mixture at a very low ratio. This lightweight instruction-tuning strategy aligns the model's emergent generative representations into measurable physical geometry and semantic labels while preserving the original generative priors.
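The mixing step can be pictured as drawing each training example from one of two pools. The sketch below is illustrative only: `sample_mixture`, the two pools, and the `vision_ratio` parameter are hypothetical names, and the source states only that the ratio is very low, not its value.

```python
import random


def sample_mixture(generation_data, vision_task_data, vision_ratio, n, seed=0):
    """Draw n examples, taking a vision-task example with probability vision_ratio.

    vision_ratio is a hypothetical knob; the source does not report its value.
    """
    rng = random.Random(seed)
    batch = []
    for _ in range(n):
        # Pick the pool first, then a uniformly random example from that pool.
        pool = vision_task_data if rng.random() < vision_ratio else generation_data
        batch.append(rng.choice(pool))
    return batch


# Usage: mostly original generation data, with a small share of vision-task data.
batch = sample_mixture(["gen_a", "gen_b"], ["depth_a", "seg_a"], vision_ratio=0.01, n=1000)
```

Keeping the vision-task share small is what the source credits with preserving the original generative priors.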

The framework covers two fundamental categories of visual understanding: 2D scene understanding and 3D structure inference. The 2D suite includes referring expression, semantic, and instance segmentation, testing the capability to ground natural language and segment objects. For 3D understanding, the model focuses on monocular metric depth and surface normal estimation, which require geometric reasoning and internal knowledge about object scales.

As illustrated in the framework diagram above, Vision Banana accepts an image and a prompt specifying the desired visualization (e.g., segmentation, depth, surface normal) and generates the corresponding output. The model is evaluated against specialist models across various benchmarks, including RefCOCOg, ReasonSeg, Cityscapes, and metric depth benchmarks, demonstrating its ability to rival or surpass task-specific experts.

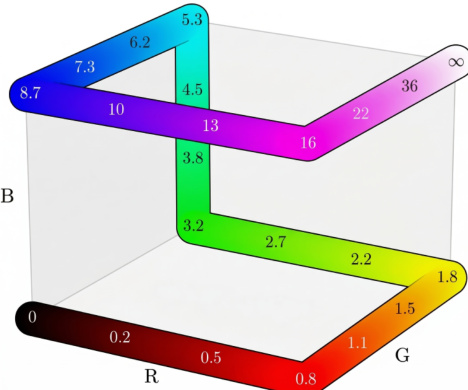

To ensure quantitative assessment, the generated images follow decodable visualization schemes specified via prompts. These visualizations are designed to be decoded back to vision outputs using specific color maps.
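For segmentation, decoding a generated image could work by snapping each pixel to the nearest color in the palette named in the prompt. The following is a minimal sketch under that assumption; the palette, class names, and `decode_segmentation` are hypothetical, and the source does not describe the actual decoding procedure in this detail.

```python
def decode_segmentation(image, palette):
    """Map each RGB pixel to the label whose palette color is nearest.

    image: list of rows, each a list of (r, g, b) tuples.
    palette: dict mapping label -> (r, g, b); hypothetical example values.
    """
    def nearest_label(pixel):
        # Squared Euclidean distance in RGB space; tolerant of small color drift.
        return min(
            palette,
            key=lambda label: sum((p - c) ** 2 for p, c in zip(pixel, palette[label])),
        )

    return [[nearest_label(pixel) for pixel in row] for row in image]


# Usage: generated colors are slightly off the exact palette values.
palette = {"road": (128, 64, 128), "car": (0, 0, 142)}
labels = decode_segmentation([[(130, 60, 125), (2, 3, 140)]], palette)
```

Nearest-color matching is one simple way to absorb the small color errors a generator may introduce; a real pipeline would likely vectorize this over arrays.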

The figure above depicts a sample color map used for decoding, in which specific colors correspond to numerical values ranging from 0 to infinity, so that visual outputs can be inverted back to physical measurements. Data collection for instruction tuning uses in-house model annotations for web-crawled 2D images and synthetic data from rendering engines for 3D tasks. Crucially, no training data from the evaluation benchmarks is included in the instruction-tuning mixture, so the results reflect true generalist capability. The authors further check that image generation capabilities are preserved by benchmarking Vision Banana against the base Nano Banana Pro on text-to-image generation and image editing tasks, obtaining competitive win rates that indicate the model retains its generative abilities.
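The color-map inversion described above can be sketched as a two-step mapping: compress depth from [0, ∞) into [0, 1), then place that value along a color ramp. Everything specific here is an assumption for illustration, not the authors' scheme: the blue-to-red `ANCHORS` ramp, the `d / (d + scale)` compression, and the 10 m scale.

```python
# Hypothetical blue -> cyan -> green -> yellow -> red ramp.
ANCHORS = [(0, 0, 255), (0, 255, 255), (0, 255, 0), (255, 255, 0), (255, 0, 0)]


def depth_to_unit(depth_m, scale_m=10.0):
    """Compress a finite depth in [0, inf) to [0, 1); scale_m is an assumed constant."""
    return depth_m / (depth_m + scale_m)


def unit_to_depth(t, scale_m=10.0):
    """Invert depth_to_unit; the end of the ramp (t = 1) stands for infinity."""
    return float("inf") if t >= 1.0 else scale_m * t / (1.0 - t)


def unit_to_color(t):
    """Linearly interpolate along the ramp for t in [0, 1]."""
    pos = t * (len(ANCHORS) - 1)
    i = min(int(pos), len(ANCHORS) - 2)
    frac = pos - i
    a, b = ANCHORS[i], ANCHORS[i + 1]
    return tuple(a[k] + frac * (b[k] - a[k]) for k in range(3))


def color_to_unit(rgb):
    """Project a color onto the nearest ramp segment and return its position in [0, 1]."""
    best_t, best_dist = 0.0, float("inf")
    for i in range(len(ANCHORS) - 1):
        a, b = ANCHORS[i], ANCHORS[i + 1]
        ab = [b[k] - a[k] for k in range(3)]
        ap = [rgb[k] - a[k] for k in range(3)]
        frac = sum(x * y for x, y in zip(ap, ab)) / sum(x * x for x in ab)
        frac = max(0.0, min(1.0, frac))  # clamp to the segment
        proj = [a[k] + frac * ab[k] for k in range(3)]
        dist = sum((rgb[k] - proj[k]) ** 2 for k in range(3))
        if dist < best_dist:
            best_t, best_dist = (i + frac) / (len(ANCHORS) - 1), dist
    return best_t


def encode_depth(depth_m):
    return unit_to_color(depth_to_unit(depth_m))


def decode_depth(rgb):
    return unit_to_depth(color_to_unit(rgb))


# Usage: a round trip recovers the depth (up to float error here; 8-bit
# quantization of real images would reduce precision, especially at long range).
recovered = decode_depth(encode_depth(2.5))
```

Projecting onto the ramp, rather than requiring an exact color match, lets the decoder tolerate generated colors that fall slightly off the color map.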

Experiment

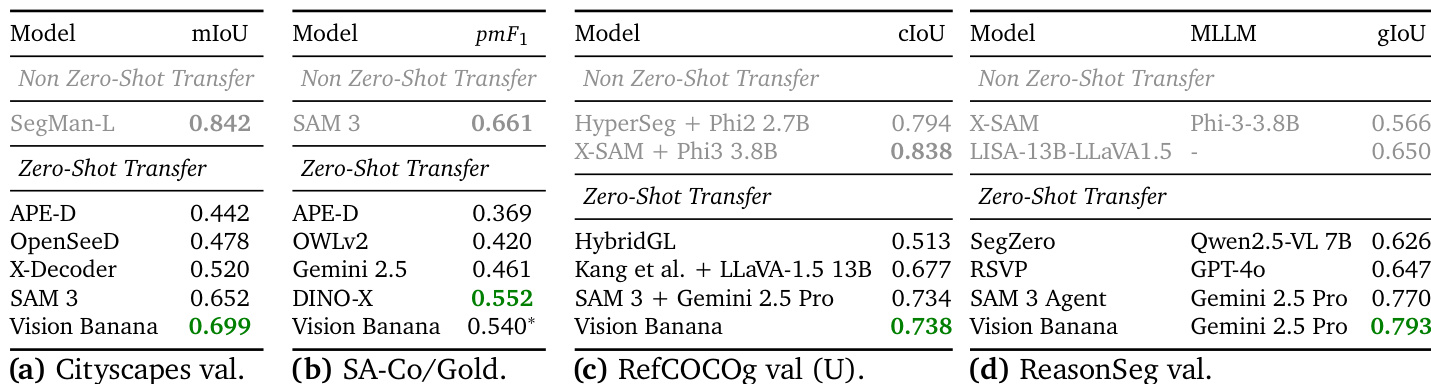

The evaluation compares Vision Banana against task-specific specialist models across 2D semantic understanding and 3D monocular understanding tasks under a zero-shot transfer setting without specialized architectures or custom training losses. Results indicate the model achieves state-of-the-art performance in semantic and referring expression segmentation by leveraging generative pre-training to reason about natural language queries. Additionally, the approach successfully infers metric depth and surface normals from single images without camera intrinsics, producing geometrically consistent reconstructions and superior visual fidelity that surpasses existing specialist methods on multiple benchmarks.

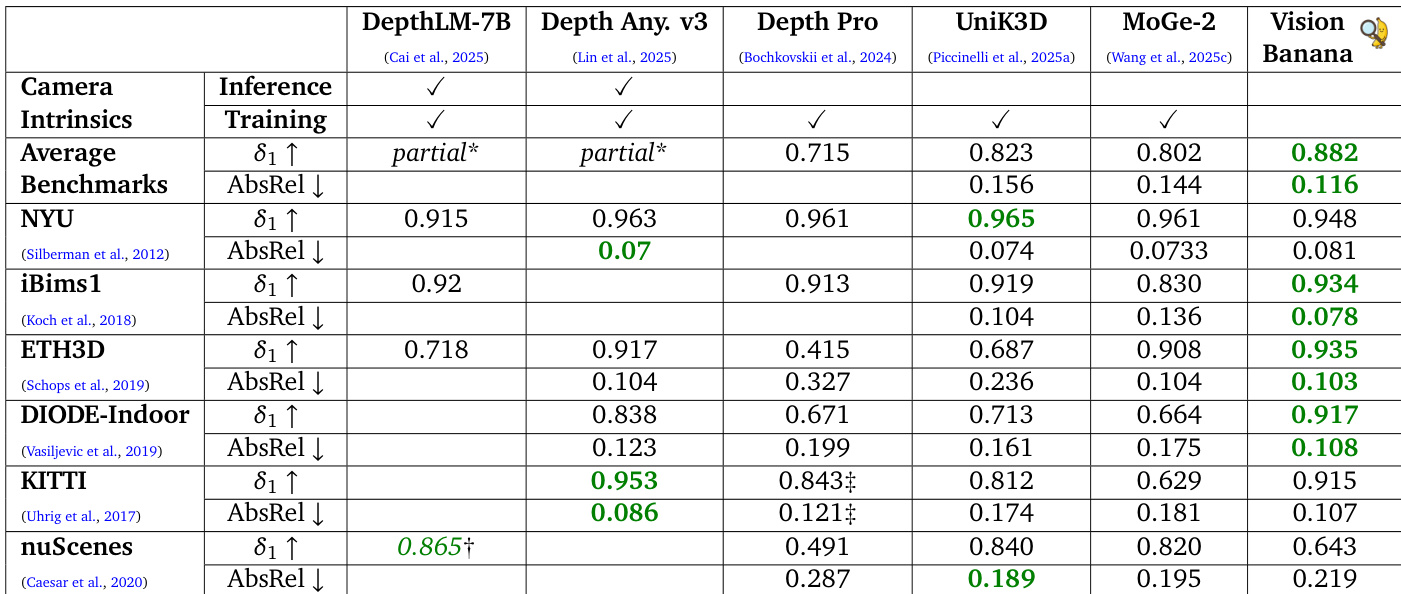

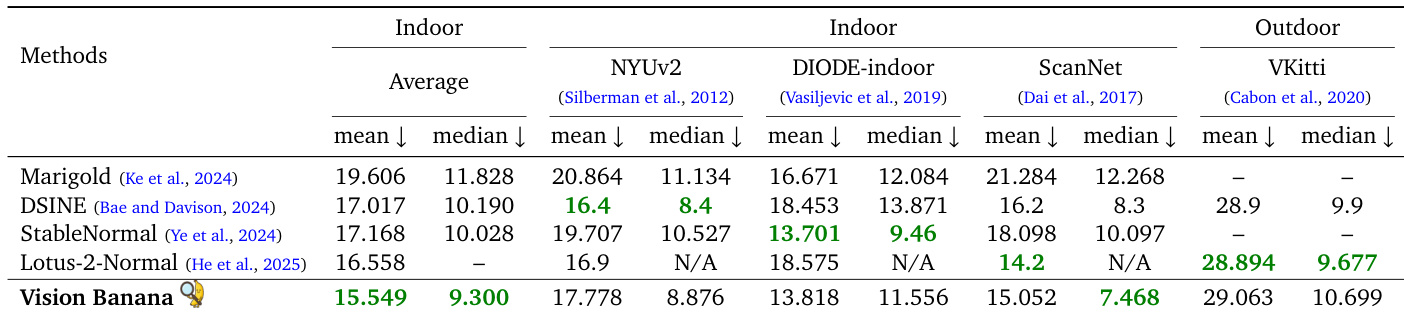

The authors present Vision Banana, a generalist vision model built from an image generator that achieves state-of-the-art zero-shot performance across a broad range of visual understanding tasks. In reasoning and referring expression segmentation, it surpasses specialized agents and models, and it is competitive in semantic and instance segmentation. In metric depth estimation, it outperforms dedicated depth models without relying on camera intrinsics during training or inference. In surface normal estimation, it achieves the lowest error rates on indoor datasets and produces higher visual fidelity than leading specialist methods.

The authors evaluate Vision Banana on monocular metric depth estimation, comparing it against specialized models that often rely on camera intrinsics. Vision Banana achieves the highest average accuracy and the lowest average error across benchmarks, leads on several individual datasets, and outperforms competitors such as Depth Anything V3 on average, all without using camera intrinsics during training or inference. Because its depth training data is entirely synthetic, these results indicate robust zero-shot generalization to real-world scenes.
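The source does not name the accuracy and error metrics. Two that are commonly reported for metric depth are the threshold accuracy δ1 (the share of pixels whose prediction is within a factor of 1.25 of the ground truth) and the absolute relative error (AbsRel). Assuming these are the relevant ones, a minimal sketch over flat lists of positive depths:

```python
def abs_rel(pred, gt):
    """Mean absolute relative error: mean(|pred - gt| / gt). Lower is better."""
    return sum(abs(p - g) / g for p, g in zip(pred, gt)) / len(gt)


def delta1(pred, gt, threshold=1.25):
    """Fraction of pixels with max(pred/gt, gt/pred) < threshold. Higher is better."""
    hits = sum(1 for p, g in zip(pred, gt) if max(p / g, g / p) < threshold)
    return hits / len(gt)


# Usage: depths in meters; the third prediction is off by a factor of two.
pred = [1.0, 2.0, 4.0]
gt = [1.0, 2.0, 2.0]
error = abs_rel(pred, gt)
accuracy = delta1(pred, gt)
```

Both metrics compare absolute values, so they penalize scale errors; this is why metric depth without camera intrinsics is a demanding setting.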

The authors evaluate surface normal estimation across multiple benchmarks. Vision Banana, evaluated zero-shot, achieves the lowest mean and median errors averaged across indoor datasets and outperforms state-of-the-art specialists on the ScanNet benchmark, although specialized models lead on some individual indoor datasets and on outdoor data. Its results on the outdoor VKitti dataset remain competitive despite the model not being trained on that data.
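Mean and median errors for surface normals are typically angular errors between predicted and reference unit vectors. The sketch below assumes the common visualization convention in which each RGB channel in [0, 255] maps linearly to a normal component in [-1, 1]; the source does not state which convention Vision Banana uses, and the function names are hypothetical.

```python
import math
from statistics import mean, median


def rgb_to_normal(rgb):
    """Decode an RGB triple to a unit normal, assuming n = 2 * c / 255 - 1 per channel."""
    v = [2.0 * c / 255.0 - 1.0 for c in rgb]
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("mid-gray pixel decodes to a zero vector")
    return tuple(x / norm for x in v)


def angular_error_deg(pred, target):
    """Angle in degrees between two unit vectors."""
    dot = sum(p * t for p, t in zip(pred, target))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))  # clamp for float safety


def normal_errors(preds, targets):
    """Return (mean, median) angular error in degrees over paired unit normals."""
    errors = [angular_error_deg(p, t) for p, t in zip(preds, targets)]
    return mean(errors), median(errors)


# Usage: one of three predicted normals is perpendicular to its reference.
mean_err, median_err = normal_errors(
    [(1, 0, 0), (0, 1, 0), (1, 0, 0)],
    [(1, 0, 0), (1, 0, 0), (1, 0, 0)],
)
```

Reporting both statistics is useful because the median is insensitive to a few badly wrong pixels, while the mean is not.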

The authors demonstrate that Vision Banana achieves state-of-the-art results across a broad range of visual understanding tasks without specialized architectures. It outperforms leading specialist models in semantic segmentation, referring expression segmentation, metric depth estimation, and surface normal estimation, while performing slightly below specific competitors in instance segmentation. On visual generation, it attains a higher win rate in text-to-image generation but lower performance in image editing.

Vision Banana is evaluated as a generalist vision model to assess its zero-shot performance on segmentation, reasoning, and 3D understanding tasks against specialized methods. The experiments validate that the model achieves superior accuracy in metric depth and surface normal estimation without relying on camera intrinsics, while also surpassing specialists in reasoning and referring expression segmentation. Although the model excels in text-to-image generation and semantic segmentation, it performs slightly below specific competitors in image editing and instance segmentation.