Command Palette

Search for a command to run...

AnyRecon: Beliebige 3D-Rekonstruktion mittels eines Video-Diffusionsmodells

AnyRecon: Beliebige 3D-Rekonstruktion mittels eines Video-Diffusionsmodells

Yutian Chen Shi Guo Renbiao Jin Tianshuo Yang Xin Cai Yawen Luo Mingxin Yang Mulin Yu Linning Xu Tianfan Xue

Zusammenfassung

Die 3D-Rekonstruktion aus spärlichen Ansichten (Sparse-view 3D reconstruction) ist essenziell für die Modellierung von Szenen aus Gelegenheitsaufnahmen, stellt jedoch für nicht-generative Rekonstruktionsverfahren weiterhin eine Herausforderung dar. Bestehende Diffusions-basierte Ansätze mildern dieses Problem durch die Synthese neuer Ansichten ab, basieren jedoch häufig nur auf ein bis zwei Aufnahmerahmen (capture frames). Dies schränkt die geometrische Konsistenz ein und begrenzt die Skalierbarkeit auf große oder diverse Szenen.Wir schlagen AnyRecon vor, ein skalierbares Framework für die Rekonstruktion aus beliebigen und ungeordneten spärlichen Eingaben, das eine explizite geometrische Kontrolle bewahrt und gleichzeitig eine flexible Anzahl an Bedingungsfaktoren (conditioning cardinality) unterstützt. Um eine weitreichende Konditionierung (long-range conditioning) zu ermöglichen, konstruiert unsere Methode ein persistentes globales Szenengedächtnis über einen vorgelagerten Cache der Aufnahmemansichten (capture view cache). Zudem wird die zeitliche Kompression entfernt, um die Korrespondenz auf Frame-Ebene auch bei großen Ansichtswechseln aufrechtzuerhalten.Über die Verbesserung generativer Modelle hinaus stellen wir fest, dass das Zusammenspiel zwischen Generierung und Rekonstruktion für großflächige 3D-Szenen entscheidend ist. Daher führen wir eine geometrie-bewusste Konditionierungsstrategie ein, die Generierung und Rekonstruktion durch ein explizites 3D-Geometriegedächtnis und eine geometriegetriebene Abfrage der Aufnahmemansichten (capture-view retrieval) koppelt. Um die Effizienz zu gewährleisten, kombinieren wir eine 4-Schritt-Diffusions-Distillation mit einem Context-Window Sparse Attention, um die quadratische Komplexität zu reduzieren. Umfangreiche Experimente belegen eine robuste und skalierbare Rekonstruktion über unregelmäßige Eingaben, große Ansichtswinkelunterschiede und lange Trajektorien hinweg.

One-sentence Summary

AnyRecon is a scalable framework for 3D reconstruction from arbitrary and unordered sparse views that utilizes a persistent global scene memory and a geometry-aware conditioning strategy to maintain long-range geometric consistency and frame-level correspondence, overcoming the scalability and consistency limitations of existing diffusion-based methods.

Key Contributions

- The paper introduces AnyRecon, a scalable framework for 3D reconstruction from arbitrary and unordered sparse inputs that utilizes a video diffusion architecture with a global scene memory cache to maintain frame-level correspondence across large viewpoint changes.

- A geometry-aware conditioning strategy is presented to couple generation and reconstruction through a closed-loop system involving an explicit 3D geometric memory and a geometry-driven capture-view retrieval mechanism.

- The method incorporates 4-step diffusion distillation combined with context-window sparse attention to ensure computational efficiency, with experiments demonstrating superior performance in view interpolation, extrapolation, and large-scale scene consistency compared to existing baselines.

Introduction

Sparse-view 3D reconstruction is essential for transforming casual, irregular captures into immersive digital environments. While recent diffusion-based methods attempt to bridge the gap by synthesizing novel views, they often rely on only one or two reference frames, which limits their ability to maintain global geometric consistency and scale to large scenes. Furthermore, existing video diffusion frameworks are typically designed for sequential data, making them ill-suited for the unordered and non-sequential nature of arbitrary sparse inputs.

The authors leverage a scalable framework called AnyRecon to enable high-quality reconstruction from an arbitrary number of unordered views. They introduce a video diffusion architecture that utilizes a global scene memory cache and removes temporal compression to maintain frame-level correspondence across large viewpoint gaps. To support large-scale environments, the authors implement a geometry-aware conditioning strategy that creates a closed loop between generation and reconstruction through an explicit 3D geometry memory and geometry-driven view retrieval.

Dataset

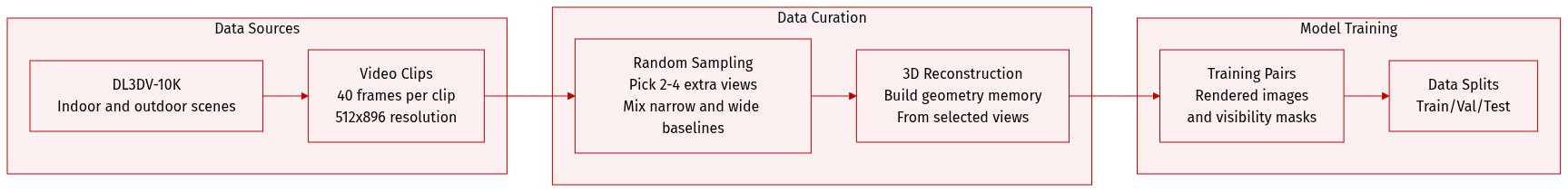

The authors utilize the DL3DV-10K dataset, a large-scale collection of high-quality 3D indoor and outdoor scenes, to train AnyRecon. The dataset processing and usage are summarized below:

- Dataset Composition and Partitioning: Original video sequences are partitioned into clips consisting of 40 frames each. Each frame is processed at a resolution of 512 by 896.

- Conditioning Strategy: To enhance generative priors and simulate irregular input scenarios, the authors implement a randomized conditioning sampling strategy. For every clip, the first frame is fixed as a base reference, while an additional N views (where N is between 2 and 4) are randomly selected.

- Sampling Distribution: To balance narrow-baseline interpolation with wide-baseline synthesis, the additional conditioning views are sampled using a dual-probability approach. There is a 50% probability that indices are selected from the first 20 frames and a 50% probability they are selected from the full 40-frame window.

- Data Processing and Training Pairs: The selected conditioning views are passed through a feed-forward reconstruction module to establish an initial 3D geometry memory. The authors then project the resulting point-cloud observations onto target novel viewpoints to generate rendered images and visibility masks, which constitute the final training pairs for the geometry-controlled generative model.

Method

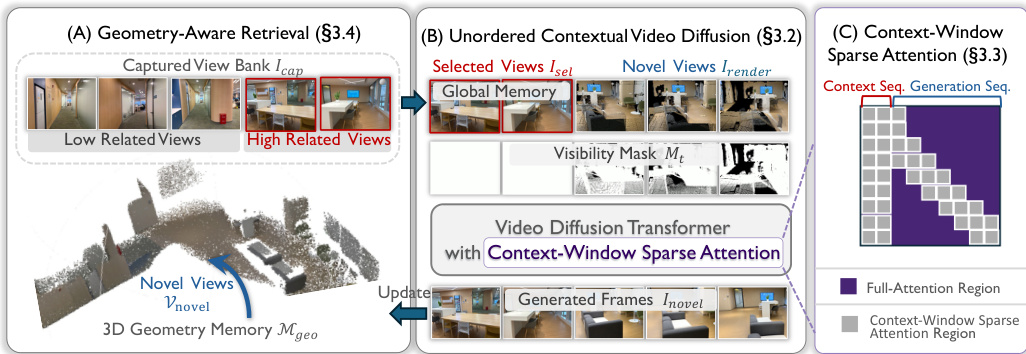

The AnyRecon framework operates as a closed-loop system for sparse-view 3D reconstruction, designed to handle arbitrary and unordered input sequences while maintaining geometric consistency across long trajectories. The overall architecture consists of three primary stages: initial geometry construction, novel view generation, and geometry updating, which collectively form an iterative refinement loop. As illustrated in the framework diagram, the process begins with the construction of an initial 3D geometry memory Mgeo from the input views, which are organized into a captured view bank Icap. This initial geometry is established using a feed-forward point map estimation method such as VGGT or π3, providing a foundational representation of the scene.

The second stage involves the synthesis of novel views along a user-specified trajectory Vnovel. To manage computational complexity, the trajectory is segmented, and for each segment, a geometry-aware retrieval process selects a subset of relevant views Isel from Icap. This retrieval is guided by the current 3D geometry memory Mgeo, ensuring that only views with significant geometric contribution to the target perspective are considered. The selected views, along with point-cloud renderings Irender and visibility masks Mt derived from Mgeo, serve as contextual inputs to the unordered contextual video diffusion model. This diffusion module, as detailed in Section 3.2, employs a global scene memory to store and query the retrieved reference views, enabling flexible context injection independent of temporal order. This mechanism decouples the generation process from strict temporal dependencies, allowing for robust synthesis across arbitrary viewpoint gaps. Furthermore, the model uses non-compressive latent encoding, where a frame-wise 2D VAE preserves the one-to-one mapping between latent tokens and pixel coordinates, avoiding the feature entanglement that arises from temporal compression in standard video diffusion models.

To ensure computational efficiency, the framework incorporates two key optimizations. First, a context-window sparse attention mechanism limits the receptive field of each frame in the target trajectory to a local temporal window and a selectively retrieved subset of geometry-aligned reference views Isel. This reduces the quadratic complexity associated with long sequences by focusing the model's attention on visually relevant regions. Second, a 4-step diffusion sampling strategy accelerates inference by distilling the pre-trained model into a student network capable of high-quality generation in just four steps. This is achieved through distribution matching distillation, which minimizes the Kullback-Leibler divergence between the student's and teacher's distributions, using a pseudo-regression objective with a stop-gradient operator to stabilize training.

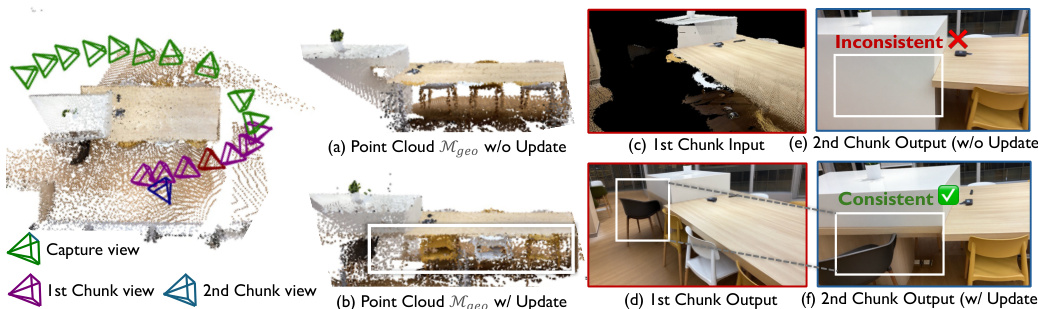

The final stage is the geometry updating process, where the 3D geometry reconstructed from the newly synthesized views is used to update the global memory Mgeo. This update is critical for maintaining scene-level consistency, as it ensures that newly generated trajectory segments are integrated into the global scene representation. Without this update, the reconstructed geometry becomes incomplete and inconsistent across trajectory segments, leading to visual mismatches. The explicit memory update mechanism prevents error accumulation and geometric drift, anchoring each new segment to the evolving global structure. This recursive loop—where novel views inform geometry and updated geometry guides subsequent generation—enables scalable processing of long trajectories and large-scale inputs. The geometry-aware retrieval strategy, which selects conditioning views based on their geometric contribution to the target perspective, further enhances the robustness of the system under occlusion and complex spatial layouts.

Experiment

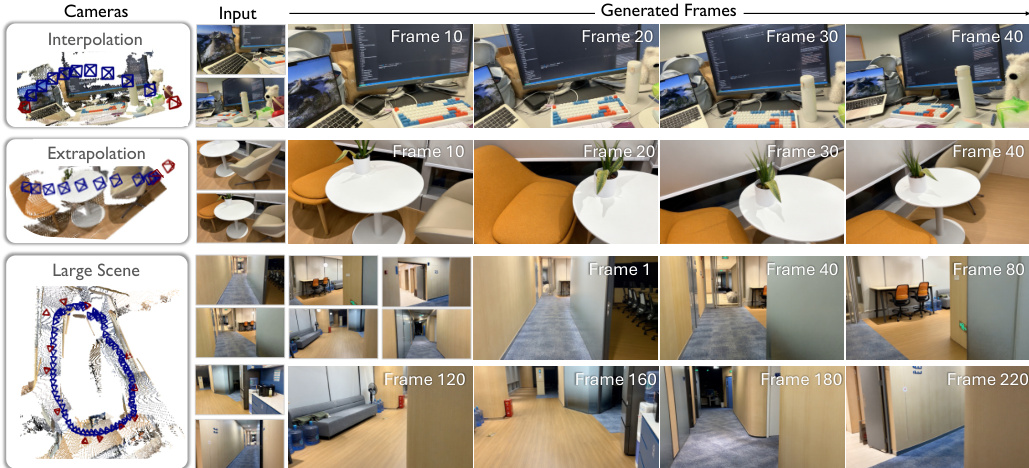

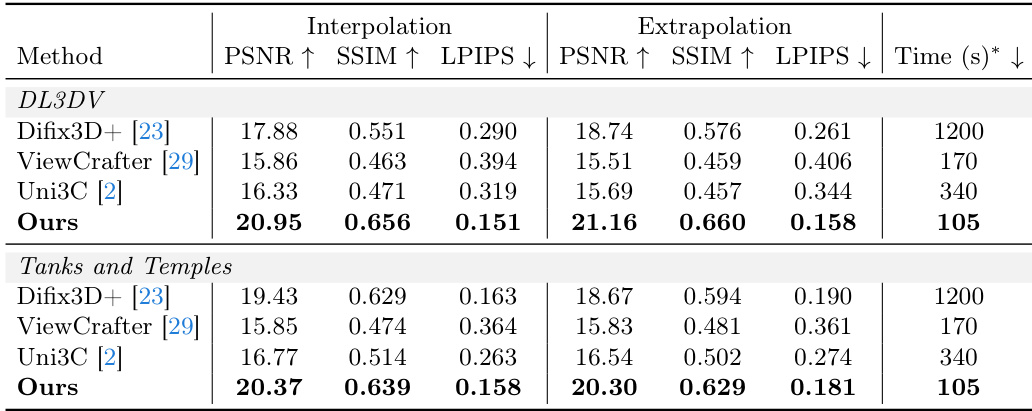

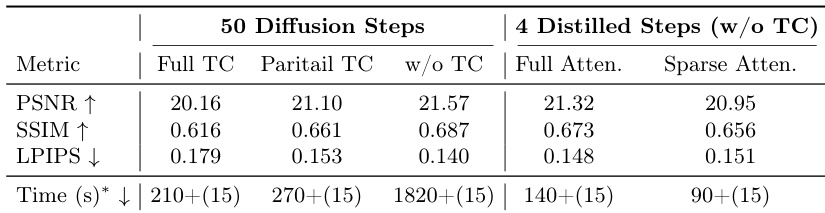

The evaluation compares AnyRecon against state-of-the-art diffusion-based methods using interpolation and extrapolation tasks on the DL3DV and Tanks and Temples datasets to assess reconstruction fidelity and generative capability. Results demonstrate that AnyRecon achieves superior structural integrity and appearance consistency by leveraging global scene memory to suppress geometric artifacts and hallucinate plausible content. Ablation studies further validate that avoiding temporal compression preserves essential high-frequency details, while the combination of model distillation and sparse attention significantly enhances inference efficiency without compromising competitive visual quality.

The authors evaluate their method against state-of-the-art diffusion-based 3D reconstruction models on two datasets, assessing performance in interpolation and extrapolation settings. Results show that the proposed method achieves superior quality across all metrics and significantly faster inference times compared to baselines, which exhibit structural inconsistencies and higher latency. The proposed method outperforms all baselines in both interpolation and extrapolation tasks, achieving higher quality across all evaluation metrics. The method demonstrates significantly faster inference times compared to other approaches, with the lowest latency observed in both datasets. Baselines exhibit structural inconsistencies and lower quality, particularly in handling large viewpoint gaps and maintaining cross-view coherence.

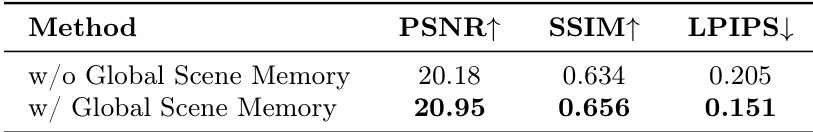

The authors conduct an ablation study to evaluate the impact of global scene memory on reconstruction quality. Results show that incorporating global scene memory leads to improvements across all metrics, with higher PSNR and SSIM values and lower LPIPS scores, indicating better pixel-level accuracy, structural integrity, and perceptual quality. The presence of global scene memory enhances the model's ability to preserve fine details and reduce artifacts in synthesized views. Incorporating global scene memory improves PSNR and SSIM while reducing LPIPS, indicating enhanced reconstruction quality. The model with global scene memory achieves better structural integrity and perceptual quality compared to the version without it. Global scene memory helps preserve fine-grained details and reduces artifacts in synthesized views.

The authors conduct an ablation study to evaluate the impact of different temporal compression strategies, inference efficiency techniques, and the role of global scene memory on reconstruction quality and speed. Results show that full temporal compression degrades visual fidelity, while the combination of distillation and sparse attention significantly reduces inference time with minimal quality loss, and maintaining raw captured views in global memory improves structural and textural accuracy. The study highlights trade-offs between efficiency and quality, emphasizing the importance of preserving high-frequency details and using memory-based conditioning for robust 3D reconstruction. Full temporal compression leads to a noticeable degradation in visual fidelity, particularly in fine-grained structural details. The integration of distillation and sparse attention reduces inference time substantially while maintaining competitive reconstruction quality. Maintaining raw captured views in global memory improves structural integrity and texture recovery compared to baselines relying on rendered point-cloud maps.

The authors evaluate their method against state-of-the-art diffusion-based models through comparative testing on interpolation and extrapolation tasks, alongside ablation studies on scene memory and temporal compression strategies. The results demonstrate that the proposed approach provides superior reconstruction quality and faster inference times while maintaining better cross-view coherence than existing baselines. Furthermore, the findings highlight that utilizing global scene memory and combining distillation with sparse attention optimizes the balance between computational efficiency and the preservation of fine-grained structural details.