Audio-Omni: Extending Multi-modal Understanding to Versatile Audio Generation and Editing

Abstract

Recent progress in multimodal models has driven rapid advances in audio understanding, generation, and editing. However, these capabilities are typically handled by specialized models, so a truly unified framework that seamlessly integrates all three tasks remains underexplored. Although some pioneering works have attempted to unify audio understanding and generation, they are often confined to specific domains. To address this, we introduce Audio-Omni, the first end-to-end framework that unifies generation and editing across the general sound, music, and speech domains while integrating multimodal understanding capabilities. Our architecture synergizes a frozen Multimodal LLM for high-level reasoning with a trainable Diffusion Transformer for high-fidelity synthesis. To overcome the critical data scarcity in audio editing, we construct AudioEdit, a new large-scale dataset of over one million meticulously curated editing pairs. Extensive experiments show that Audio-Omni achieves state-of-the-art performance across a suite of benchmarks, outperforming prior unified approaches while matching or exceeding specialized expert models. Beyond its core capabilities, Audio-Omni exhibits remarkable inherited abilities, including knowledge-augmented reasoning generation, in-context generation, and zero-shot cross-lingual control for audio generation, which points to a promising direction toward universal generative audio intelligence. The code, model, and dataset will be publicly released at https://zeyuet.github.io/Audio-Omni.

One-sentence Summary

The authors propose Audio-Omni, the first end-to-end framework to unify audio understanding, generation, and editing across sound, music, and speech domains by synergizing a frozen Multimodal Large Language Model with a trainable Diffusion Transformer and utilizing the new large-scale AudioEdit dataset to achieve state-of-the-art performance and versatile zero-shot control.

Key Contributions

- The paper introduces Audio-Omni, an end-to-end framework that unifies audio understanding, generation, and editing across the sound, music, and speech domains. This architecture combines a frozen Multimodal Large Language Model for high-level reasoning with a trainable Diffusion Transformer and a hybrid conditioning mechanism to separate semantic and signal features.

- This work presents AudioEdit, a large-scale dataset consisting of over one million meticulously curated instruction-guided editing pairs designed to overcome data scarcity in audio editing.

- Experimental results demonstrate that Audio-Omni achieves state-of-the-art performance on multiple benchmarks, matching or exceeding the capabilities of specialized expert models while exhibiting inherited abilities like zero-shot cross-lingual control and knowledge-augmented reasoning.

Introduction

Modern audio processing relies on specialized models for understanding, generation, and editing, which prevents a seamless integration of these tasks. Existing unified approaches often lack end-to-end optimization or are restricted to a single domain like speech or music, while audio editing remains particularly difficult due to a lack of large-scale, instruction-guided datasets. The authors leverage a decoupled architecture that connects a frozen Multimodal Large Language Model for reasoning with a trainable Diffusion Transformer for high-fidelity synthesis. To enable versatile performance, they introduce Audio-Omni, the first end-to-end framework to unify understanding, generation, and editing across general sound, music, and speech, supported by their new large-scale AudioEdit dataset.

Dataset

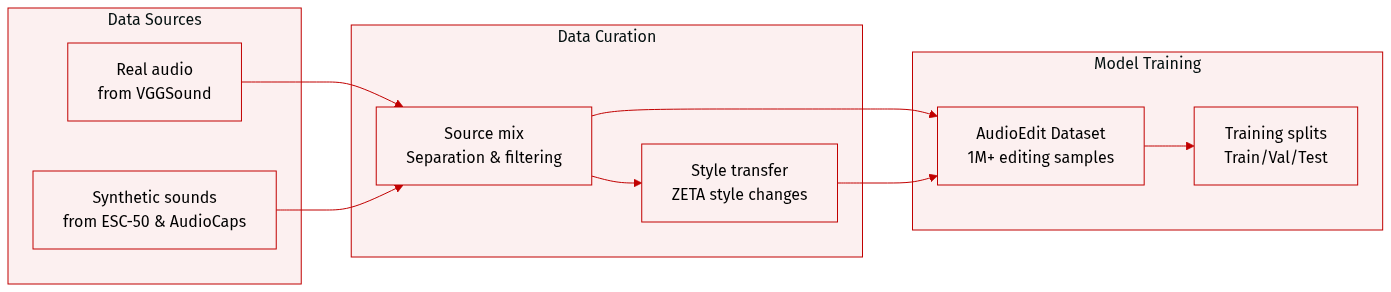

The authors introduce AudioEdit, a large-scale dataset containing over 1 million samples designed for instruction-guided audio editing. The dataset is constructed through a hybrid pipeline consisting of two main branches:

- Real Data Branch: This branch focuses on acoustic fidelity by mining authentic editing pairs from the VGGSound dataset. The authors use Gemini 2.5 Pro to identify primary sound categories and SAM-Audio for source separation. This process disentangles audio into a target track and a residual track. To ensure high quality, the authors apply a multi-stage filtering process:

  - Add, Remove, and Extract tasks: Starting from 540,000 labeled samples, the authors use Voice Activity Detection (VAD) to retain approximately 347,000 pairs, followed by CLAP-based semantic alignment to reach a final set of approximately 50,000 high-quality pairs.

  - Style Transfer tasks: The authors expand the filtered targets by using Gemini to generate semantically related keywords. After applying CLAP filtering, they obtain approximately 500,000 pairs. These are processed using ZETA to transform the audio style while preserving pitch and temporal structure, then mixed back with the residual track.

- Synthesis Data Branch: This branch provides scale and diversity for add, remove, and extract tasks using the Scaper toolkit. The authors programmatically generate soundscapes by mixing foreground events from ESC-50 into 10-second backgrounds from AudioCaps. To increase complexity, they apply randomized parameters including onset time, SNR (0 to 3 dB), pitch shifts (-3 to +3 semitones), and time-stretch factors (0.8 to 1.2).

- Dataset Usage: The resulting AudioEdit dataset provides a diverse mixture of tasks, including add, remove, extract, and style transfer, to support robust model training with both real-world acoustic characteristics and large-scale synthetic variety.
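The randomized mixing parameters of the synthesis branch can be illustrated with a small sampler. This is a minimal sketch using only the Python standard library, not the authors' Scaper configuration. The `MixSpec` name, its fields, the uniform sampling, the 2-second event duration, and the treatment of the time-stretch factor as a duration multiplier are all assumptions. Only the parameter ranges and the 10-second background length come from the description above.

```python
import random
from dataclasses import dataclass


@dataclass
class MixSpec:
    """Hypothetical container for one synthetic foreground event."""
    onset_s: float       # start time of the event inside the background
    snr_db: float        # foreground level relative to the background
    pitch_shift: float   # in semitones
    time_stretch: float  # assumed to act as a duration multiplier


def sample_mix_spec(rng, background_s=10.0, event_s=2.0):
    """Draw one set of mixing parameters from the ranges quoted in the text.

    Uniform sampling and the default event duration are assumptions.
    """
    stretch = rng.uniform(0.8, 1.2)
    # Keep the stretched event fully inside the background clip.
    latest_onset = background_s - event_s * stretch
    return MixSpec(
        onset_s=rng.uniform(0.0, latest_onset),
        snr_db=rng.uniform(0.0, 3.0),
        pitch_shift=rng.uniform(-3.0, 3.0),
        time_stretch=stretch,
    )


rng = random.Random(0)  # fixed seed so the sketch is reproducible
specs = [sample_mix_spec(rng) for _ in range(1000)]
print(specs[0])
```

Each sampled specification would then be handed to the mixing toolkit to place one foreground event. Drawing a fresh specification per soundscape is what gives the synthetic branch its variety.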

Method

The authors leverage the Rectified Flow framework as the generative backbone for their Audio-Omni system, which models a deterministic straight-line trajectory between noise and data samples through a constant velocity field. This approach contrasts with traditional diffusion models by using an ordinary differential equation (ODE) defined as $\frac{dx_t}{dt} = v$, where $v = x_1 - x_0$ represents the velocity between a data sample $x_0$ and a noise sample $x_1 \sim \mathcal{N}(0, I)$. The solution along this path is given by $x_t = (1 - t)x_0 + t x_1$ for $t \in [0, 1]$. A neural network $v_\theta(x_t, t, c)$ is trained to predict this velocity field conditioned on the noisy state $x_t$, time $t$, and conditioning signals $c$. During inference, generation proceeds by solving the ODE backward from $t = 1$ using predictions from $v_\theta$, with the final output reconstructed via a VAE decoder.
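The backward ODE solve can be sketched with a plain Euler integrator. This is a toy illustration under stated assumptions, not the authors' sampler: the function names, the step count, and the three-dimensional latent are hypothetical, and an oracle that returns the true velocity stands in for the trained network. The paper's choice of solver is not given here.

```python
import random


def interpolate(x0, x1, t):
    """x_t = (1 - t) * x0 + t * x1, element-wise."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def euler_sample(velocity_fn, x1, num_steps=10):
    """Integrate dx/dt = v from t = 1 (noise) back to t = 0 (data)."""
    x = list(x1)
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = 1.0 - i * dt
        v = velocity_fn(x, t)
        # Stepping backward in time: x_{t - dt} = x_t - dt * v
        x = [xi - dt * vi for xi, vi in zip(x, v)]
    return x


rng = random.Random(0)
x0 = [0.5, -1.0, 2.0]                    # toy "data" latent
x1 = [rng.gauss(0.0, 1.0) for _ in x0]   # noise sample from N(0, I)


def oracle_velocity(x, t):
    """Stand-in for the trained network: returns the true velocity x1 - x0."""
    return [b - a for a, b in zip(x0, x1)]


recovered = euler_sample(oracle_velocity, x1, num_steps=10)
print(recovered)
```

Because the velocity is constant along a straight path, Euler steps are exact up to floating-point error when the velocity is known. With a learned network the predictions are only approximate, so the number of steps becomes a quality and speed trade-off.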

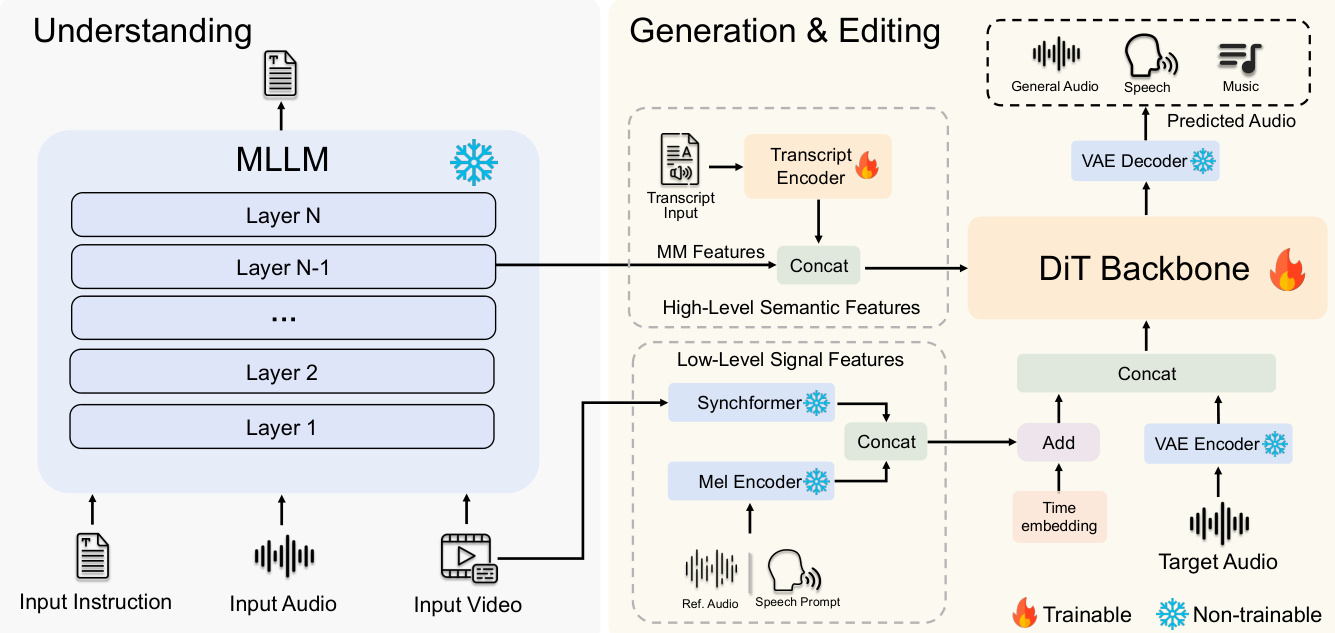

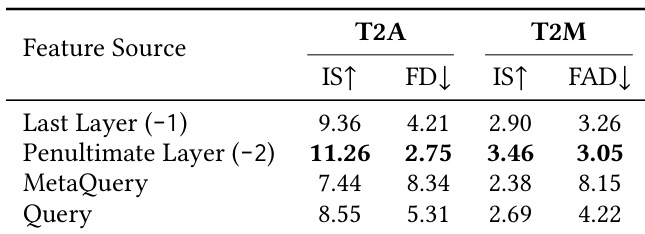

The overall framework consists of two primary components: a frozen multimodal large language model (MLLM) serving as the understanding core and a trainable DiT-based backbone for audio generation and editing. The MLLM processes textual instructions, audio waveforms, and video inputs after they are tokenized by their respective encoders. It performs two key functions: generating textual responses for understanding tasks and producing a multimodal feature representation $F_{\text{mm}} \in \mathbb{R}^{L_{\text{mm}} \times D_{\text{mm}}}$ from its penultimate layer, which serves as a conditioning signal for generative tasks. This feature is combined with a transcript-derived feature $F_{\text{trans}}$, obtained from a character-level encoding of the input text using a ConvNeXtV2-based Transcript Encoder, to form the High-Level Semantic Features stream $c_{\text{high}} = \text{Concat}(F_{\text{mm}}, F_{\text{trans}})$.

For tasks requiring precise temporal alignment, such as editing and synchronization, a second conditioning stream is introduced: the Low-Level Signal Features. This stream is constructed by concatenating a mel-spectrogram feature $F_{\text{mel}}$, extracted from a reference audio or speech prompt using a Mel Encoder, with a synchronization feature $F_{\text{sync}}$, derived from the input video via a pre-trained Synchformer model, resulting in $c_{\text{low}} = \text{Concat}(F_{\text{sync}}, F_{\text{mel}})$. These two conditioning streams are injected into the DiT backbone through distinct mechanisms. The High-Level Semantic Features are injected as context via cross-attention, enabling the model to attend to abstract instructions throughout the generation process. In contrast, the Low-Level Signal Features are fused with a time embedding through element-wise addition and then concatenated with the VAE-encoded noisy audio latent $x_t$ to form the primary input to the DiT, providing strong frame-by-frame guidance.
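The two conditioning streams can be traced at the level of tensor shapes. The sketch below uses nested Python lists in place of tensors. It is an illustration under loud assumptions: the concatenation axes (sequence axis for the high-level stream, channel axis for the low-level stream and for the join with the noisy latent), the matching feature widths, and every size are hypothetical choices, since the text does not specify them.

```python
def zeros(length, width, fill=0.0):
    """Build a length x width 'tensor' as a list of per-frame vectors."""
    return [[fill] * width for _ in range(length)]


def concat_sequence(a, b):
    """Concatenate two token sequences along the length axis (assumed axis)."""
    assert len(a[0]) == len(b[0]), "feature widths must match"
    return a + b


def concat_channels(a, b):
    """Concatenate two frame-aligned sequences along the channel axis (assumed axis)."""
    assert len(a) == len(b), "sequences must be frame-aligned"
    return [fa + fb for fa, fb in zip(a, b)]


def add_broadcast(frames, vec):
    """Element-wise addition of one vector to every frame."""
    assert all(len(f) == len(vec) for f in frames)
    return [[x + y for x, y in zip(f, vec)] for f in frames]


# High-level stream: used as cross-attention context (toy sizes).
F_mm = zeros(6, 8)      # 6 MLLM tokens, width 8
F_trans = zeros(3, 8)   # 3 transcript tokens, assumed projected to width 8
c_high = concat_sequence(F_mm, F_trans)

# Low-level stream: frame-aligned features for T latent frames (toy sizes).
T = 4
F_sync = zeros(T, 2, fill=1.0)
F_mel = zeros(T, 3, fill=2.0)
c_low = concat_channels(F_sync, F_mel)

t_emb = [0.5] * 5       # time embedding, assumed to match the width of c_low
x_t = zeros(T, 4)       # VAE-encoded noisy latent
dit_input = concat_channels(x_t, add_broadcast(c_low, t_emb))

print(len(c_high), len(c_high[0]))        # context shape
print(len(dit_input), len(dit_input[0]))  # DiT input shape
```

The point of the split is visible in the shapes: the high-level context may have any length because attention handles the alignment, while the low-level stream must share the latent's frame count so that each frame receives its own guidance.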

The training objective is a unified Rectified Flow loss, which minimizes the mean squared error between the predicted velocity $v_\theta(x_t, t, c)$ and the ground-truth velocity $v = x_1 - x_0$. The loss function is defined as:

$$\mathcal{L} = \mathbb{E}_{t \sim \mathcal{U}(0,1),\, x_0,\, x_1,\, c}\left[\left\lVert v_\theta(x_t, t, c) - (x_1 - x_0) \right\rVert^2\right]$$

where $t$ is a randomly sampled timestep from a uniform distribution, $x_t = (1 - t)x_0 + t x_1$ is the interpolated latent state, and $c$ encompasses the full conditioning signals for the training sample. This objective enables the model to learn a single, unified representation for a wide range of audio generation and editing tasks.
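A single-sample Monte Carlo estimate of this loss can be written in a few lines. This is a minimal sketch in plain Python rather than the authors' training code. The function names, the toy latent, and the two stand-in predictors are hypothetical, and the squared norm is computed as a sum over dimensions, which differs from a per-element mean only by a constant factor.

```python
import random


def rectified_flow_loss(velocity_fn, x0, cond, rng):
    """One-sample estimate of || v_theta(x_t, t, c) - (x1 - x0) ||^2."""
    t = rng.random()                                      # t ~ U(0, 1)
    x1 = [rng.gauss(0.0, 1.0) for _ in x0]                # x1 ~ N(0, I)
    xt = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]  # interpolated state
    target = [b - a for a, b in zip(x0, x1)]              # ground-truth velocity
    pred = velocity_fn(xt, t, cond)
    return sum((p - g) ** 2 for p, g in zip(pred, target))


x0 = [0.5, -1.0, 2.0]  # toy data latent


def oracle(xt, t, cond):
    """Perfect predictor for this toy x0: (x_t - x0) / t equals x1 - x0."""
    return [(x - a) / t for x, a in zip(xt, x0)]


def zero_predictor(xt, t, cond):
    """Untrained stand-in that always predicts zero velocity."""
    return [0.0 for _ in xt]


loss_oracle = rectified_flow_loss(oracle, x0, None, random.Random(0))
loss_zero = rectified_flow_loss(zero_predictor, x0, None, random.Random(0))
print(loss_oracle, loss_zero)
```

In training, this quantity would be averaged over a batch and minimized with respect to the network parameters. The same expression applies to every task because only the conditioning `c` changes between generation and editing samples.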

Experiment

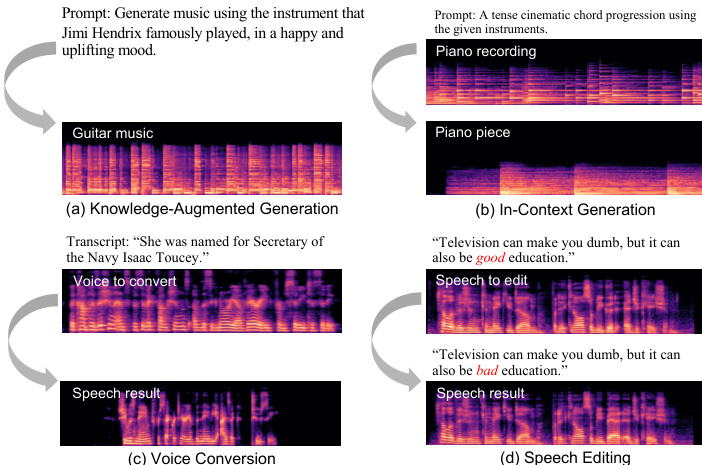

Audio-Omni is evaluated through a comprehensive suite of benchmarks designed to test its understanding, generation, and editing capabilities across the full spectrum of sound, music, and speech. The experiments validate that the decoupled architecture allows the model to inherit strong reasoning and multilingual abilities from a frozen MLLM while achieving state-of-the-art performance in generative and editing tasks. Qualitative results further demonstrate emergent zero-shot capabilities, such as knowledge-augmented generation and voice conversion, proving that a single unified framework can serve as a versatile generalist for diverse audio domains.

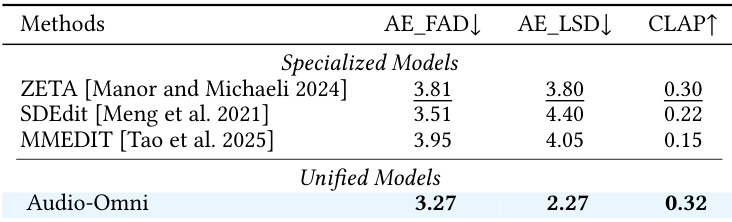

Results show that Audio-Omni achieves superior performance across audio editing metrics compared to specialized models. The model outperforms others in fidelity and instruction adherence, demonstrating strong capabilities in editing tasks.

- Audio-Omni outperforms specialized models on all editing metrics.

- Audio-Omni achieves the best results in fidelity and instruction adherence.

- The model demonstrates strong performance across multiple editing tasks.

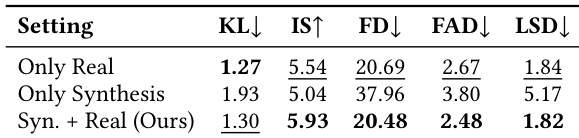

The table presents an ablation study on the impact of dataset composition for audio editing training, comparing performance across different training configurations. Results show that combining synthetic and real-world data achieves the best overall performance, with the mixed approach outperforming either data type used alone.

- Combining synthetic and real-world data yields the best performance for audio editing.

- Training on real-world data alone achieves better results than using synthetic data alone.

- The mixed data approach consistently outperforms single-data configurations across all evaluation metrics.

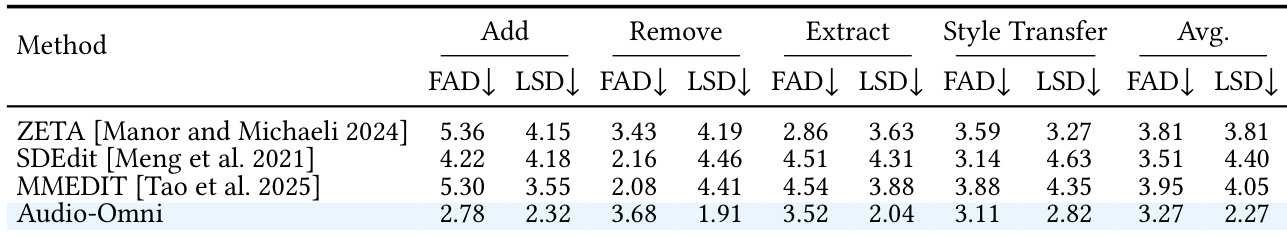

The results compare audio editing models across multiple tasks, with the proposed method achieving the best overall performance. Audio-Omni demonstrates superior results in both fidelity and quality metrics across all editing operations compared to existing models.

- Audio-Omni outperforms all baseline models in average performance across editing tasks.

- The proposed method achieves the lowest scores in both FAD and LSD metrics, indicating higher fidelity and quality.

- Audio-Omni shows consistent improvement over baselines in all individual editing operations.

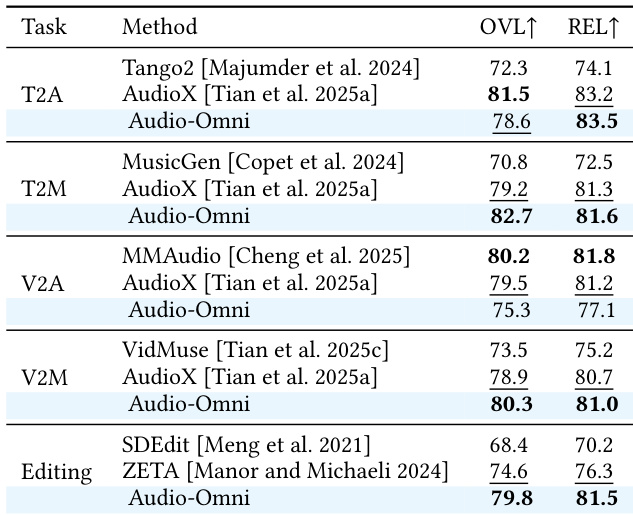

Results show that Audio-Omni achieves strong performance across multiple audio tasks, consistently outperforming other unified models and matching or exceeding specialized models in various benchmarks. The framework demonstrates superior results in both understanding and generation tasks, highlighting its effectiveness as a comprehensive audio system.

- Audio-Omni surpasses other unified models in understanding and generation tasks.

- The model achieves competitive results compared to specialized expert models.

- It demonstrates strong performance across diverse audio domains including speech, music, and sound.

The study compares different feature sources from a frozen MLLM for audio generation tasks, evaluating their impact on text-to-audio and text-to-music performance. Results show that using features from the penultimate layer consistently outperforms other methods across both tasks.

- Using features from the penultimate layer of the MLLM achieves the best performance for both text-to-audio and text-to-music tasks.

- The last layer features perform worse than the penultimate layer, indicating over-specialization for text prediction.

- Complex query mechanisms like MetaQuery and Query degrade performance compared to direct feature extraction from the penultimate layer.

Evaluations demonstrate that Audio-Omni achieves superior fidelity and instruction adherence across diverse audio editing and general tasks, often outperforming both specialized models and existing unified frameworks. Ablation studies reveal that training with a combination of synthetic and real-world data yields the best results, while extracting features from the penultimate layer of a frozen MLLM provides optimal performance for generation tasks. Collectively, these findings highlight the effectiveness of the proposed model and its robust capabilities across speech, music, and sound domains.