Intern-S1-Pro: A Scientific Multimodal Foundation Model at Trillion Scale

Abstract

We introduce Intern-S1-Pro, the first scientific multimodal foundation model with one trillion parameters. Scaling to this unprecedented size gives the model a comprehensive performance improvement in both general and scientific domains. Beyond stronger reasoning and image-text understanding, the model's intelligence is extended with advanced agent capabilities. At the same time, its scientific expertise has been substantially expanded, so that it now masters over 100 specialized tasks in critical scientific fields such as chemistry, materials science, life sciences, and earth sciences. This massive scale is made possible by the robust infrastructure of XTuner and LMDeploy, which supports highly efficient Reinforcement Learning (RL) training at the one-trillion-parameter level while ensuring strict precision consistency between training and inference. By seamlessly integrating these advances, Intern-S1-Pro further strengthens the fusion of general and specialized intelligence and acts as a "Specializable Generalist". It ranks in the top tier of open-source models for general capabilities and outperforms proprietary models in the depth of specialized scientific tasks.

One-sentence Summary

The Intern-S1-Pro Team from Shanghai AI Laboratory introduces Intern-S1-Pro, a trillion-parameter scientific multimodal foundation model that employs Grouped Routing and Straight-Through Estimators to stabilize massive Mixture-of-Experts training. This approach enables the model to master over 100 specialized scientific tasks, ranking in the top tier of open-source models on general capabilities while outperforming proprietary systems in the depth of specialized scientific tasks.

Key Contributions

- The paper introduces Intern-S1-Pro, the first one-trillion-parameter scientific multimodal foundation model that integrates advanced agent capabilities and masters over 100 specialized tasks across chemistry, materials, life sciences, and earth sciences.

- A novel group routing mechanism and a gradient estimation scheme are proposed to address training instability and router optimization challenges in massive Mixture-of-Experts architectures, ensuring efficient load balancing and accelerated expert updates.

- The work demonstrates state-of-the-art performance on scientific benchmarks through a specialized caption pipeline for scientific imagery and co-designed infrastructure using XTuner and LMDeploy, which enables efficient Reinforcement Learning training at the trillion-parameter scale with strict precision consistency.

Introduction

The rapid growth of Large Language Models and Vision-Language Models has created a need for unified systems capable of accelerating scientific discovery across diverse fields such as chemistry, biology, and materials science. Prior approaches often struggle with the diversity of scientific domains, where specialized knowledge and unique reasoning patterns require model capacity that smaller or single-purpose models cannot provide. Scaling to the trillion-parameter level also introduces significant engineering hurdles, including training instability caused by expert load imbalance and the difficulty of optimizing router embeddings in Mixture-of-Experts architectures. To address these challenges, the authors introduce Intern-S1-Pro, the first one-trillion-parameter scientific multimodal foundation model, which uses a novel Grouped Routing mechanism and a co-designed training framework to ensure stability and efficiency. By integrating advanced agent capabilities with joint training on general and specialized tasks, the model achieves state-of-the-art performance on more than 100 scientific tasks while outperforming proprietary models in specialized depth.

Dataset

- Dataset Composition and Sources: The authors construct a 6T-token pre-training corpus for Intern-S1-Pro, combining general image-text data with a specialized 270B-token scientific subset. This scientific data is derived primarily from PDF corpora across life sciences, chemistry, earth sciences, and materials science, addressing the scarcity of high-quality, aligned scientific image-text pairs found in standard web sources.

- Key Details for the Scientific Subset:

  - Source: Massive PDF corpora containing high-information-density figures such as experimental results, statistical plots, and structural diagrams.

  - Extraction: The team uses MinerU2.5 for layout analysis to detect and localize figures, formulas, and tables, cropping them into standardized sub-images.

  - Deduplication: Perceptual hashing (pHash) is applied to eliminate redundant visual content at scale.

  - Captioning Strategy: A model routing mechanism assigns scientific sub-images to InternVL3.5-241B for domain-specific descriptions, while non-scientific sub-images are processed by CapRL-32B, a model trained with Reinforcement Learning with Verifiable Rewards (RLVR) to generate dense captions.

  - Quality Control: A 0.5B-parameter text quality discriminator filters out garbled text, repetitive expressions, and low-information-density content.

- Data Usage in Training: The authors employ the full 6T-token mixture for continued pre-training. The pipeline emphasizes the integration of the newly generated scientific captions to enhance the model's ability to understand and reason about complex visual content, moving beyond the brief or misaligned captions typical of original literature.

- Processing and Metadata Details:

  - Prompting: A multi-template randomized prompting strategy is adopted to ensure linguistic diversity in the generated captions.

  - Alignment Focus: The pipeline specifically targets the creation of dense captions where text explicitly refers to visual elements, correcting the common issue where original literature text serves as an extension rather than a description.

  - Evaluation Context: While the training data focuses on scientific and general multimodal alignment, the resulting model is evaluated against a suite of benchmarks including SciReasoner, SFE, and MMMU-Pro to test reasoning and perception capabilities.
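The pHash deduplication step listed above can be illustrated with a small, dependency-free sketch. It is not the authors' pipeline: the 8x8 low-frequency DCT hash, the median threshold, the Hamming-distance cutoff `max_distance`, and all function names are assumptions chosen for illustration, and images are assumed to be square grayscale grids that have already been resized.

```python
import math
import random
from statistics import median
from typing import List

Image = List[List[float]]  # square grayscale grid, assumed already resized


def phash_bits(gray: Image, hash_size: int = 8) -> int:
    """DCT-based perceptual hash: one bit per low-frequency coefficient."""
    n = len(gray)
    # Cosine basis of the DCT-II for the lowest `hash_size` frequencies.
    basis = [[math.cos((2 * x + 1) * u * math.pi / (2 * n)) for x in range(n)]
             for u in range(hash_size)]
    coeffs = []
    for u in range(hash_size):
        for v in range(hash_size):
            coeffs.append(sum(gray[x][y] * basis[u][x] * basis[v][y]
                              for x in range(n) for y in range(n)))
    # Threshold at the median of the non-DC coefficients.
    threshold = median(coeffs[1:])
    bits = 0
    for c in coeffs:
        bits = (bits << 1) | int(c > threshold)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def deduplicate(images: List[Image], max_distance: int = 4) -> List[int]:
    """Return indices of images kept after dropping near-duplicates."""
    kept_hashes: List[int] = []
    kept: List[int] = []
    for idx, img in enumerate(images):
        h = phash_bits(img)
        if all(hamming(h, k) > max_distance for k in kept_hashes):
            kept_hashes.append(h)
            kept.append(idx)
    return kept


def make_noise_image(seed: int, size: int = 32) -> Image:
    """Toy test data only: a reproducible random grayscale grid."""
    rng = random.Random(seed)
    return [[float(rng.randint(0, 255)) for _ in range(size)] for _ in range(size)]
```

Because a uniform brightness change only affects the DC coefficient, a brightened copy of an image hashes almost identically to the original and is dropped, while an unrelated image is kept. The pairwise comparison here is quadratic; deduplication "at scale" would need an index over hashes, which this sketch does not attempt.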

Method

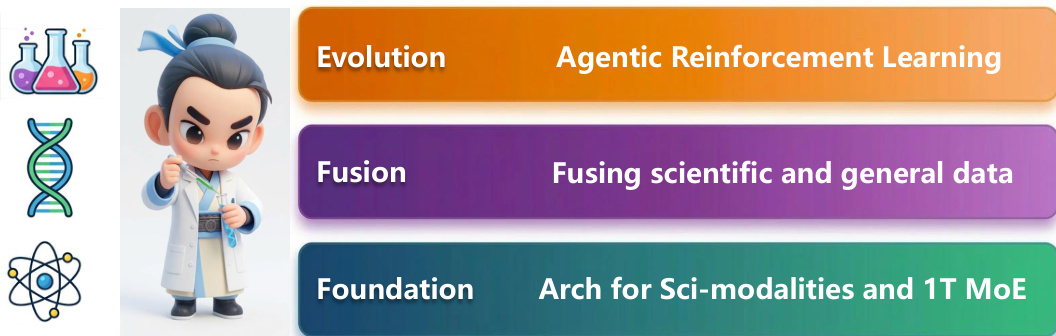

The Intern-S1-Pro model is constructed upon a foundation designed for scientific modalities and a 1T Mixture-of-Experts (MoE) architecture. The overall framework integrates three core pillars: a foundation for scientific modalities, fusion of scientific and general data, and evolution through Agentic Reinforcement Learning.

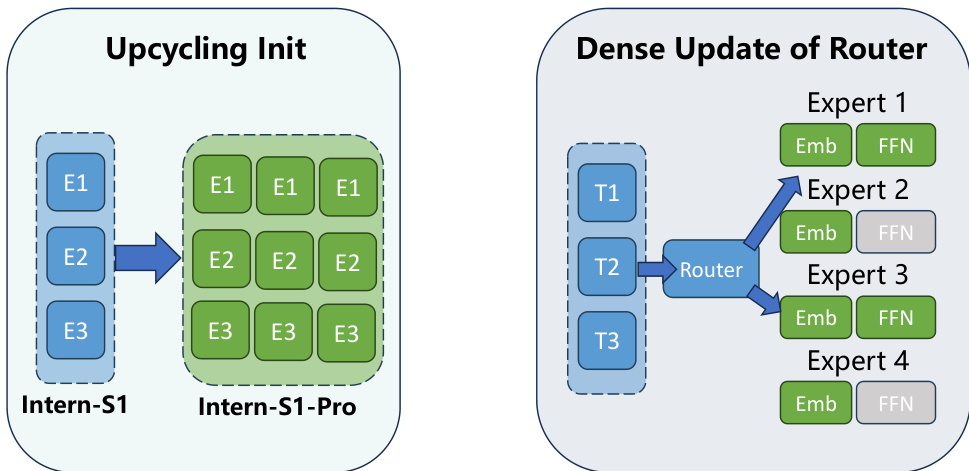

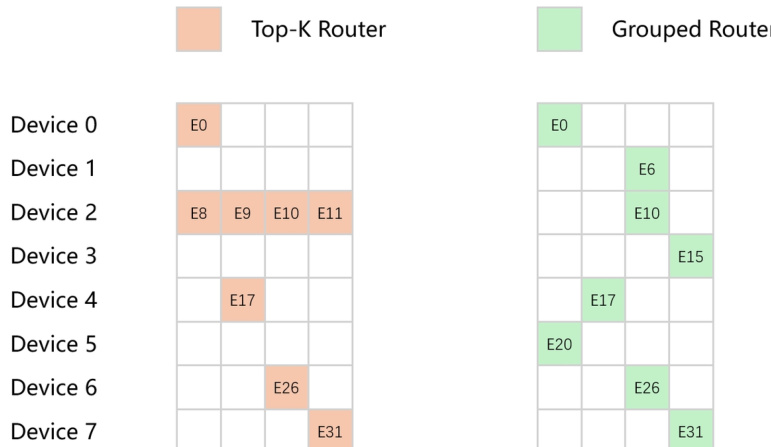

The model architecture is derived from Intern-S1 through an expert expansion process. This upcycling initialization ensures that well-trained experts from the base model are distributed across groups to maintain stability. To address load imbalance in ultra-large-scale MoE models, the authors replace the traditional Top-K Router with a Grouped Router. This design partitions experts into mutually disjoint groups based on device mapping, selecting the top experts within each group to achieve absolute load balancing across devices under an 8-way expert parallelism strategy.
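The difference between a conventional Top-K router and a grouped router can be shown with a toy example. This is a minimal sketch under stated assumptions, not the authors' implementation: groups are taken to be contiguous blocks of expert indices (standing in for the device mapping), the same number of experts `k_per_group` is picked in every group, and all function names are hypothetical.

```python
from typing import List


def top_k_indices(scores: List[float], candidates: List[int], k: int) -> List[int]:
    """Return the k candidates with the highest scores (ties: lower index first)."""
    ranked = sorted(candidates, key=lambda e: (-scores[e], e))
    return sorted(ranked[:k])


def plain_top_k(scores: List[float], k: int) -> List[int]:
    """Conventional routing: pick the k best experts globally."""
    return top_k_indices(scores, list(range(len(scores))), k)


def grouped_top_k(scores: List[float], num_groups: int, k_per_group: int) -> List[int]:
    """Grouped routing: pick the k_per_group best experts inside every group."""
    num_experts = len(scores)
    if num_experts % num_groups != 0:
        raise ValueError("number of experts must be divisible by number of groups")
    group_size = num_experts // num_groups
    if not 0 < k_per_group <= group_size:
        raise ValueError("k_per_group must be between 1 and the group size")
    selected: List[int] = []
    for g in range(num_groups):
        # Assumption: group g owns a contiguous block of experts (one device).
        members = list(range(g * group_size, (g + 1) * group_size))
        selected.extend(top_k_indices(scores, members, k_per_group))
    return selected


def load_per_group(selected: List[int], num_experts: int, num_groups: int) -> List[int]:
    """Count how many selected experts fall into each group."""
    group_size = num_experts // num_groups
    load = [0] * num_groups
    for e in selected:
        load[e // group_size] += 1
    return load


# Toy router scores for one token over 8 experts split into 4 groups of 2.
scores = [0.9, 0.8, 0.7, 0.6, 0.1, 0.2, 0.05, 0.3]
print(load_per_group(plain_top_k(scores, 4), 8, 4))       # global top-4
print(load_per_group(grouped_top_k(scores, 4, 1), 8, 4))  # one expert per group
```

With these toy scores the global top-4 lands entirely on the first two groups (loads `[2, 2, 0, 0]`), whereas the grouped selection activates exactly one expert per group (loads `[1, 1, 1, 1]`). That per-token guarantee is what makes the per-device load identical by construction.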

To enable effective training of the sparse routing mechanism, a Straight-Through Estimator (STE) is employed. This technique decouples the forward and backward passes, allowing gradients to flow through the full dense softmax distribution during backpropagation while preserving sparse selection in the forward pass. This ensures that all router embeddings receive informative learning signals, improving load balancing and convergence.
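One common way to write a straight-through estimator for a sparse router is given below. The notation is an assumption introduced for illustration and is not taken from the source: $z$ denotes the router logits, $S$ the set of experts chosen in the forward pass, and $\operatorname{sg}(\cdot)$ a stop-gradient operator. The authors' exact formulation may differ, for example in how the sparse weights are renormalized.

```latex
% Assumed notation: z = router logits, S = selected experts, sg = stop-gradient.
\begin{aligned}
p &= \operatorname{softmax}(z), \qquad
\tilde{p}_i = \begin{cases} p_i, & i \in S \\ 0, & i \notin S \end{cases} \\
\hat{p} &= p + \operatorname{sg}(\tilde{p} - p) \\
\text{forward:}\quad \hat{p} &= \tilde{p} \\
\text{backward:}\quad \frac{\partial \hat{p}_i}{\partial z_j}
  &= \frac{\partial p_i}{\partial z_j} = p_i\,(\delta_{ij} - p_j)
\end{aligned}
```

Since $p_i(\delta_{ij} - p_j)$ is generally nonzero even when expert $j$ was not selected, every router logit receives a gradient, which matches the behavior described above.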

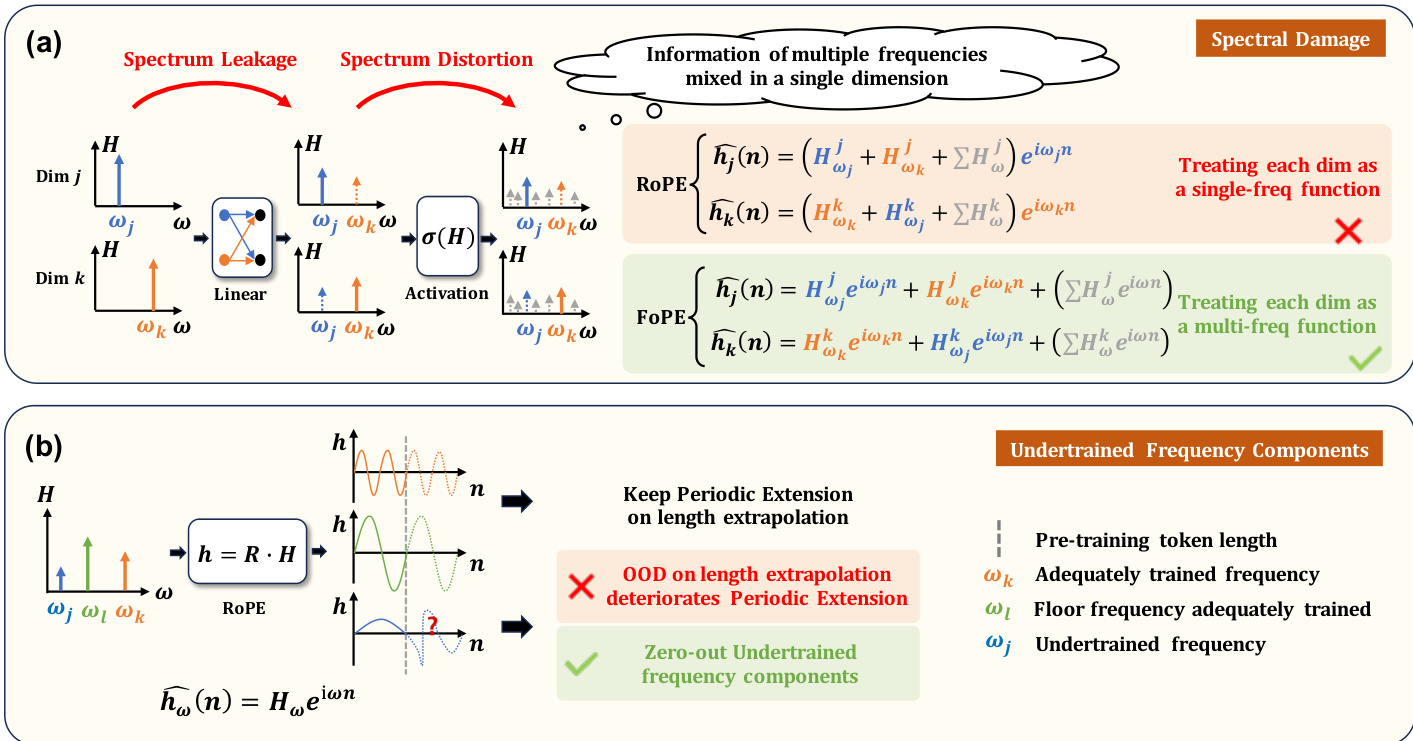

For positional encoding, the model introduces Fourier Position Encoding (FoPE) to better handle the continuous, wave-like nature of physical signals. Unlike Rotary Position Embedding (RoPE), which treats each dimension as a single-frequency function and can suffer from spectral damage, FoPE models each dimension as a multi-frequency function. This approach separates information more effectively and mitigates issues related to undertrained frequency components during length extrapolation.
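The contrast between the two encodings can be written schematically as follows. This is a sketch under assumed notation, not the authors' definition: $n$ is the token position, $\omega_d$ is the single frequency RoPE assigns to dimension $d$, $\Omega$ is a hypothetical set of additional frequencies, and $a_{\omega,d}$ are hypothetical mixing coefficients. How those coefficients are obtained and how undertrained frequencies are treated is not specified in this summary.

```latex
% Assumed notation: n = position, d = dimension index, \omega_d = RoPE frequency,
% \Omega = extra frequencies, a_{\omega,d} = mixing coefficients (all illustrative).
\begin{aligned}
\text{single frequency (RoPE):}\quad & r_d(n) = e^{\,i\,\omega_d n} \\
\text{multi-frequency form:}\quad & f_d(n) = e^{\,i\,\omega_d n}
  + \sum_{\omega \in \Omega} a_{\omega,d}\, e^{\,i\,\omega n}
\end{aligned}
```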

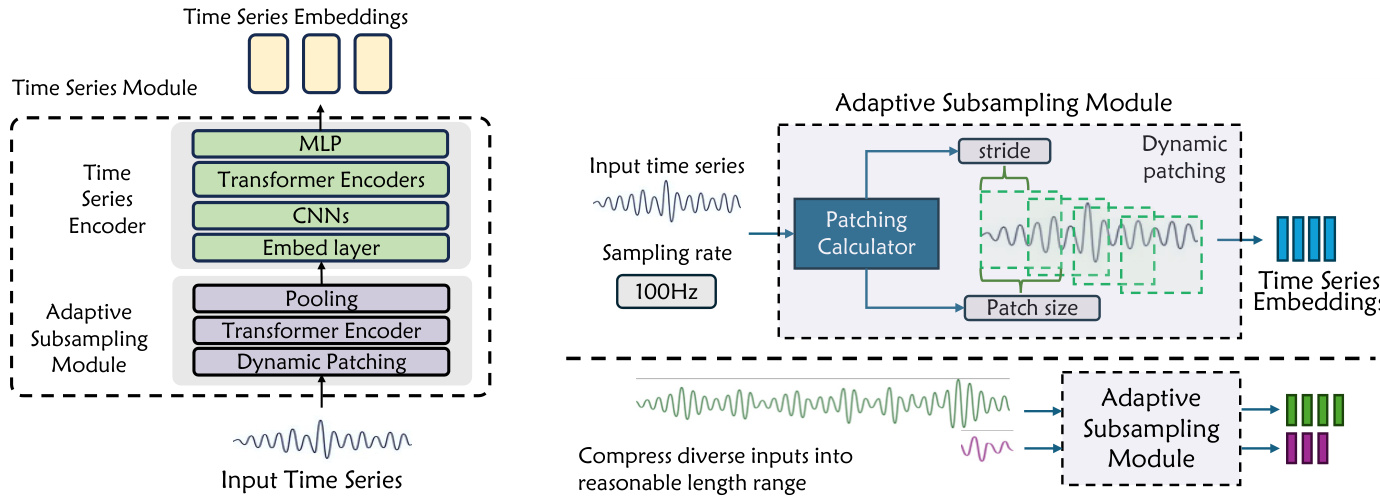

The architecture also includes a dedicated Time Series Module to handle diverse scientific data. This module features an Adaptive Subsampling Module that dynamically determines patch size and stride based on the signal and sampling rate. This allows the model to compress heterogeneous time series into a uniform representation space, handling sequences ranging from 10^0 to 10^6 time steps while preserving structural features.
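The idea of choosing patch size and stride per input can be sketched as below. This is an illustrative rule, not the module described in the paper: it picks non-overlapping patches so that any series fits a fixed token budget (`max_tokens`, a hypothetical parameter), it ignores the sampling rate, and it uses mean pooling as a stand-in for a learned patch encoder.

```python
import math
from typing import List, Tuple


def choose_patching(length: int, max_tokens: int = 256) -> Tuple[int, int]:
    """Pick (patch_size, stride) so that `length` steps yield at most max_tokens patches.

    Assumption: non-overlapping patches, so stride equals patch size.
    """
    if length < 1:
        raise ValueError("series must contain at least one time step")
    patch = max(1, math.ceil(length / max_tokens))
    return patch, patch


def patchify(series: List[float], patch: int, stride: int) -> List[float]:
    """Summarize each window by its mean (placeholder for a learned encoder)."""
    tokens = []
    for start in range(0, len(series), stride):
        window = series[start:start + patch]
        tokens.append(sum(window) / len(window))
    return tokens


# Series of very different lengths end up within the same token budget.
for n in (1, 100, 10**6):
    patch, stride = choose_patching(n)
    tokens = patchify([0.0] * n, patch, stride)
    print(n, patch, len(tokens))
```

Short series are passed through unchanged (patch size 1), while a series of 10^6 steps is compressed with a patch size of 3907 so that it still fits within 256 tokens.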

Training involves resolving conflicts between scientific and general data through strategies like Structured Scientific Data Transformation and System Prompt Isolation. Additionally, Stable Mixed-Precision Reinforcement Learning is implemented for the sparse MoE models, utilizing FP8 quantization for expert layers and FP32 for the language modeling head to ensure numerical fidelity and training stability.

Experiment

- Comprehensive evaluations across scientific and general-purpose benchmarks, in both text-only and multimodal settings, indicate that Intern-S1-Pro is competitive with top-tier open-source models and surpasses leading closed-source models in scientific reasoning.

- Comparisons with the previous Intern-S1 generation demonstrate substantial improvements in general capabilities, expanded coverage of diverse scientific domains, and enhanced agent skills for multi-step planning and environmental grounding.

- Time series experiments confirm that incorporating a dedicated time series encoder and dynamic subsampling process allows the model to significantly outperform existing text and vision-language models in capturing complex temporal dynamics.

- A case study in biology shows a larger generalist model trained on specialized data outperforming dedicated domain-specific models, suggesting that combining general reasoning with specialized knowledge has a synergistic effect on problem-solving capacity.