Command Palette

Search for a command to run...

Langschwanz-Fahrszenarien mit Reasoning Traces: Das KITScenes LongTail-Dataset

Langschwanz-Fahrszenarien mit Reasoning Traces: Das KITScenes LongTail-Dataset

Zusammenfassung

In real-world Anwendungsbereichen wie dem autonomen Fahren bleibt die Generalisierung auf seltene Szenarien eine fundamentale Herausforderung. Um dies zu adressieren, stellen wir einen neuen Datensatz für end-to-end-Steuerung vor, der sich auf Long-Tail-Fahrszenarien konzentriert. Wir liefern mehransichtige Videodaten, Trajektorien, hochrangige Anweisungen sowie detaillierte Reasoning-Traces, was In-Context-Learning und Few-Shot-Generalisierung ermöglicht. Der daraus abgeleitete Benchmark für multimodale Modelle wie VLMs und VLAs geht über herkömmliche Sicherheits- und Komfortmetriken hinaus, indem er die Befolgung von Anweisungen sowie die semantische Kohärenz zwischen den Modelloutputs bewertet. Die mehrsprachigen Reasoning-Traces in Englisch, Spanisch und Chinesisch stammen von Domänenexperten mit unterschiedlichem kulturellem Hintergrund. Unser Datensatz stellt somit eine einzigartige Ressource dar, um zu untersuchen, wie verschiedene Formen des Reasonings die Fahrfähigkeit beeinflussen. Der Datensatz ist verfügbar unter: https://hf.co/datasets/kit-mrt/kitscenes-longtail

One-sentence Summary

Researchers from KIT and FZI introduce the KITScenes LongTail dataset, featuring multilingual expert reasoning traces to address rare driving scenarios. Unlike prior benchmarks, it evaluates semantic coherence and proposes the lightweight Multi-Maneuver Score to assess instruction following and safety in end-to-end autonomous driving.

Key Contributions

- The paper introduces the KITScenes LongTail dataset, which provides long-tail driving scenarios paired with reasoning traces to enable task-aligned reasoning and generalization for vision-language models.

- A new Multi-Maneuver Score (MMS) metric is presented to evaluate planned trajectories by ranking them against five reference categories while applying comfort penalties based on jerk and tortuosity calculations.

- The work proposes a semantic coherence evaluation method that uses Rocchio classification on sentence embeddings to verify if driving actions described in reasoning traces align with the actual planned trajectory, offering robustness against synonym variations.

Introduction

Vision-language models (VLMs) and vision-language-action models (VLAs) extend language capabilities to interpret visual inputs and execute actions, where intermediate reasoning steps like chain-of-thought significantly improve reliability in complex tasks. While prior work has successfully applied sampling, tree-based search, and domain-specific fine-tuning to stabilize few-shot behavior, these approaches often struggle to consistently ground reasoning in executable policies or generalize across diverse domains without high-quality data. The authors leverage advanced reasoning mechanisms to bridge the gap between visual understanding and action planning, ensuring that VLAs can perform multi-step tasks with greater accuracy and policy alignment.

Dataset

-

Dataset Composition and Sources The authors introduce the KITScenes LongTail Dataset, a resource designed for end-to-end driving that focuses on rare, long-tail scenarios. The data was collected over two years in urban, suburban, and highway environments, specifically filtering for adverse weather, road closures, accidents, and construction zones. Unlike standard perception datasets, this collection integrates multi-view video, high-level instructions, and human-labeled reasoning traces to support decision-making research.

-

Key Details for Each Subset

- Total Size: The dataset comprises 1,000 scenarios, each lasting 9 seconds.

- Splits: The data is divided into a training set (500 scenarios), a test set (400 scenarios), and a validation set (100 scenarios).

- Scenario Types: Distribution is balanced across splits, featuring a mix of specifically selected rare events (e.g., protests, crashes) and long-tail data identified via the Pareto principle using nuScenes as a reference (e.g., nighttime driving, overtaking, lane changes).

- Instructions: High-level commands are manually annotated by experts, ranging from simple directions like "drive straight" to complex overtaking instructions specifying object types and relative positions.

- Reasoning Traces: Annotations are provided in English, Chinese, and Spanish by domain experts with diverse cultural backgrounds, capturing intuitive reasoning in their native or fluent languages.

-

Data Usage in the Paper The authors utilize the dataset to evaluate Vision-Language Models (VLMs) and Vision-Language Agents (VLAs) through in-context learning mechanisms.

- Training and Evaluation: The training split supports few-shot prompting and few-shot Chain-of-Thought (CoT) prompting, where models adapt from a handful of examples containing reasoning traces.

- Metrics: The paper introduces the Multi-Maneuver Score (MMS) to evaluate safety, comfort, and instruction following across multiple possible futures rather than a single ground-truth trajectory.

- Analysis: The data enables the measurement of semantic coherence between model outputs and expert reasoning, as well as the study of how different reasoning styles and languages affect driving competence.

-

Processing and Metadata Construction

- Video Processing: The dataset provides synchronized six-view video data with a 360° horizontal field of view. Frames are offered in raw and pinhole formats, with pinhole parameters optimized for ViT patch processing.

- Stitching Strategy: A frame-wise stitching method is applied to generate 360° views. Instead of a single homography, the method divides images into vertical sections and blends homography with identity transformations to handle overlaps smoothly.

- Annotation Workflow: Experts answer five structured questions regarding situational awareness and specific acceleration/steering commands for 0–3s and 3–5s intervals. Responses are recorded verbally or in writing and transcribed using Whisper, ensuring diverse and culturally grounded explanations.

- Instruction Granularity: Instructions are fine-grained to enable precise evaluation of context-aware decision-making, including cases where the instructed maneuver is intentionally impossible due to external factors.

Method

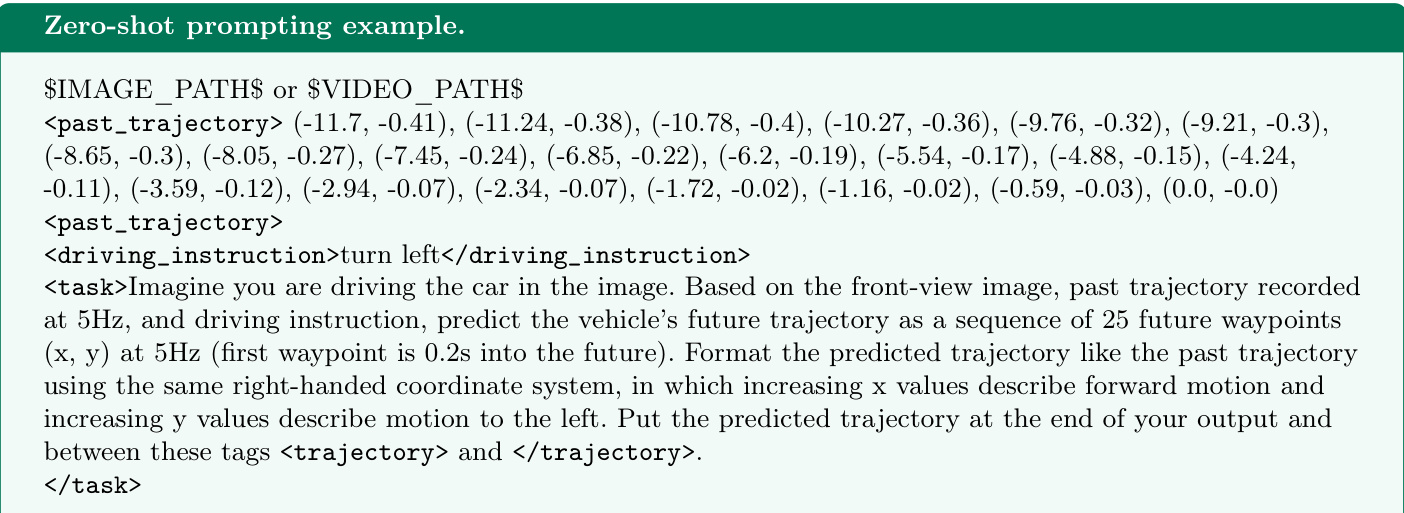

The authors leverage a zero-shot prompting approach to define the driving task. As shown in the figure below, the input consists of the image path, past trajectory coordinates, and a specific driving instruction, which guides the model to predict future waypoints.

To ensure the model's reasoning aligns with its actions, the authors measure semantic coherence. They apply heuristics to classify driving actions from the planned trajectory and generate embeddings for the reasoning traces using EmbeddingGemma 0.3B. Rocchio classification is then performed to compare these embeddings against reference embeddings representing all possible driving actions. The classification is defined as:

y^=argc∈Cmaxcos(z,μc)where C is the set of all classes, z is an embedding, μc is the reference embedding of class c, and cos(⋅) computes the cosine similarity.

For trajectory evaluation, the authors propose a Multi-maneuver Score (MMS) that ranks planned trajectories based on similarity to reference trajectories and comfort levels. Refer to the table below for the reference categories used in this metric.

The MMS considers five categories: expert-like, wrong speed, neglect instruction, driving off road without crashing, and crash. Comfort is accounted for by subtracting a penalty based on jerk and tortuosity relative to reference trajectories. The authors compute jerk using:

average jerk=T1t∑Δt3Δ3Yt,:where Y∈RT×2 is a trajectory as temporal sequence of waypoints. Moreover, they compute tortuosity using:

tortuosity=∥YT:−Y1:∥∑t=2T∥Yt:−Yt−1:∥To compute the similarity between planned and reference trajectories, they leverage a threshold-based similarity metric:

sim={1,min(simlat,simlon),if dlat≤λlat and dlon≤λlon,otherwise.The final MMS is calculated based on four cases involving the similarity score s, the reference score MMSref, and the comfort penalty CP:

MMS=⎩⎨⎧0,MMSref,s⋅MMSref,3.5−CP,if ⟨vplan(0),vref(0)⟩≤0.5vref(0),else if MMSref∈{0,1} and s≥0.4,else if s⋅MMSref≥3.5−CP,otherwise,The system visualizes the predicted trajectory overlaid on the driving scene. Refer to the figure below for an example of the predicted path visualization.

Experiment

- Comparison of the MMS metric against L2 errors and closed-loop DrivingScores validates that MMS correlates significantly better with overall driving quality, particularly in detecting trajectory inconsistencies that L2 errors miss.

- Zero-shot evaluations reveal that closed-source VLMs and classic end-to-end driving models outperform open-source VLMs, though open-source models show substantial improvement with few-shot and few-shot CoT prompting.

- Analysis of semantic coherence demonstrates a frequent mismatch between the driving actions described in model reasoning traces and the final predicted trajectories, indicating issues with hallucination or domain gaps.

- Integrating a kinematic model to convert model-predicted driving actions into trajectories yields the best performance for open-source models, confirming that intermediate reasoning traces often contain more accurate driving behavior than direct trajectory generation.

- Qualitative results highlight that models struggle most in complex scenarios like snow and intersections, often failing to follow instructions or generating unsafe maneuvers.