RACAS: Controlling Diverse Robots with a Single Agentic System

Dylan R. Ashley Jan Przepióra Yimeng Chen Ali Abualsaud Nurzhan Yesmagambet Shinkyu Park Eric Feron Jürgen Schmidhuber

Abstract

Many robotic platforms expose an application programming interface (API) through which external software can command their actuators and read their sensors. Moving from these low-level interfaces to high-level autonomous behavior, however, requires a complex processing pipeline whose components demand distinct areas of expertise. Existing approaches to bridging this gap either require retraining for every new robot platform or have been validated only on structurally similar platforms. We introduce RACAS (Robot-Agnostic Control via Agentic Systems), a cooperative agentic architecture in which three modules based on large language models (LLMs) or vision-language models (VLMs), namely Monitors, a Controller, and a Memory Curator, communicate exclusively through natural language to provide closed-loop control of the robot. RACAS requires only a natural language description of the robot, a definition of the available actions, and a task specification; no source code, model weights, or reward functions need to be modified to move between platforms. We evaluate RACAS on several tasks using a wheeled ground robot, a recently published novel multi-jointed robotic limb, and an underwater vehicle. RACAS consistently solved all assigned tasks across these radically different platforms, demonstrating the potential of agentic AI to substantially lower the barrier to prototyping robotic solutions.

One-sentence Summary

Researchers from KAUST and collaborating Swiss and Polish institutes introduce RACAS, a cooperative agentic architecture using LLM and VLM modules that achieves zero-training generalization across radically different robots by relying solely on natural language prompts instead of retraining or code modifications.

Key Contributions

- Current robotic control pipelines struggle to bridge low-level APIs and high-level autonomy across diverse platforms, often requiring retraining or validation only on structurally similar robots.

- RACAS introduces a cooperative agentic architecture where LLM/VLM-based modules communicate exclusively via natural language to enable closed-loop control using only declarative prompt configurations.

- The system achieves zero-training generalization across three radically heterogeneous platforms, including a wheeled ground robot, a novel multi-jointed limb, and an underwater vehicle, without modifying source code or model weights.

Introduction

Modern robotics relies on complex pipelines that bridge low-level sensor APIs with high-level autonomous behavior, yet this process demands expertise from several separate disciplines and scales poorly with the growing diversity of robotic platforms. Prior solutions either require extensive retraining and data collection for every new robot embodiment or have only been validated across structurally similar machines in controlled settings, leaving a gap in zero-training generalization for radically different systems. The authors introduce RACAS, a cooperative agentic architecture that uses three natural language-based modules to achieve closed-loop control without modifying source code, model weights, or reward functions. By confining all embodiment-specific knowledge to declarative prompts and leveraging a dynamic memory curator, the system executes tasks across a wheeled ground robot, a novel multi-jointed limb, and an underwater vehicle, demonstrating that a single control architecture can adapt to heterogeneous morphologies and environments.

Dataset

- The authors evaluate their approach across three distinct robot platforms: the Limb multi-jointed manipulator, the Clearpath Dingo wheeled ground robot, and the BlueROV2 underwater vehicle.

- The Limb platform features a single-finger end effector and six onboard cameras, though the authors disabled two side-facing units to increase task difficulty.

- For the Limb robot, low-resolution 100×100 px camera inputs are upsampled to 768×768 px using a two-stage Swin2SR super-resolution pipeline before VLM inference.

- The Clearpath Dingo operates in both NVIDIA Isaac Sim and physical environments with action sets restricted to ground plane movements including forward, backward, and rotation commands.

- Simulation for the Dingo utilizes three cameras at 640×480 px resolution, while the real-world setup relies on a single forward-facing camera at 1280×800 px.

- Success for the Dingo is defined by a proximity threshold of 1 meter to the target object.

- The BlueROV2 is a thruster-driven vehicle controlled via the MAVLink protocol with ArduSub firmware, utilizing a single forward-facing 1920×1080 px camera.

- Although the BlueROV2 is fully actuated, the study restricts control to three axes (surge, heave, and yaw), resulting in a discrete action set of six commands.

- Thrust magnitudes for the BlueROV2 are configured between 300 and 600 on a 0–1000 scale with a fixed 2-second command duration.
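
The BlueROV2 action set described above can be written down as a small table of commands. The following is a minimal sketch, not the authors' implementation: the `Command` structure, the command names, and the per-axis magnitudes in the example are assumptions made for illustration. Only the three axes, the count of six commands, the 300 to 600 range on a 0–1000 scale, and the 2-second duration come from the text.

```python
from dataclasses import dataclass

# Values taken from the text: three controlled axes, thrust magnitudes
# between 300 and 600 (on a 0-1000 scale), and a fixed 2-second duration.
AXES = ("surge", "heave", "yaw")
THRUST_MIN, THRUST_MAX = 300, 600
COMMAND_DURATION_S = 2.0


@dataclass(frozen=True)
class Command:
    """One discrete command (hypothetical structure, not the authors' API)."""
    axis: str
    direction: int      # +1 or -1 along the axis
    thrust: int         # magnitude on the 0-1000 scale
    duration_s: float = COMMAND_DURATION_S


def build_action_set(thrust_by_axis):
    """Return two commands per axis, rejecting thrusts outside 300-600."""
    actions = {}
    for axis in AXES:
        thrust = thrust_by_axis[axis]
        if not THRUST_MIN <= thrust <= THRUST_MAX:
            raise ValueError(
                f"{axis} thrust {thrust} outside {THRUST_MIN}-{THRUST_MAX}"
            )
        for direction, label in ((1, "pos"), (-1, "neg")):
            actions[f"{axis}_{label}"] = Command(axis, direction, thrust)
    return actions


# Illustrative magnitudes only; the source does not give per-axis values.
ACTIONS = build_action_set({"surge": 600, "heave": 400, "yaw": 300})
```

Two directions on each of three axes give the six commands mentioned in the text; how each command maps onto MAVLink messages is outside the scope of this sketch.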

Method

The authors present a framework for language-driven robotic control designed to operate across heterogeneous robot embodiments without retraining. The core methodology decomposes closed-loop control into three cooperative LLM pipelines: perception, decision, and memory, connected through a unified natural language interface. All embodiment-specific knowledge is encapsulated in declarative prompt configurations rather than learned parameters.

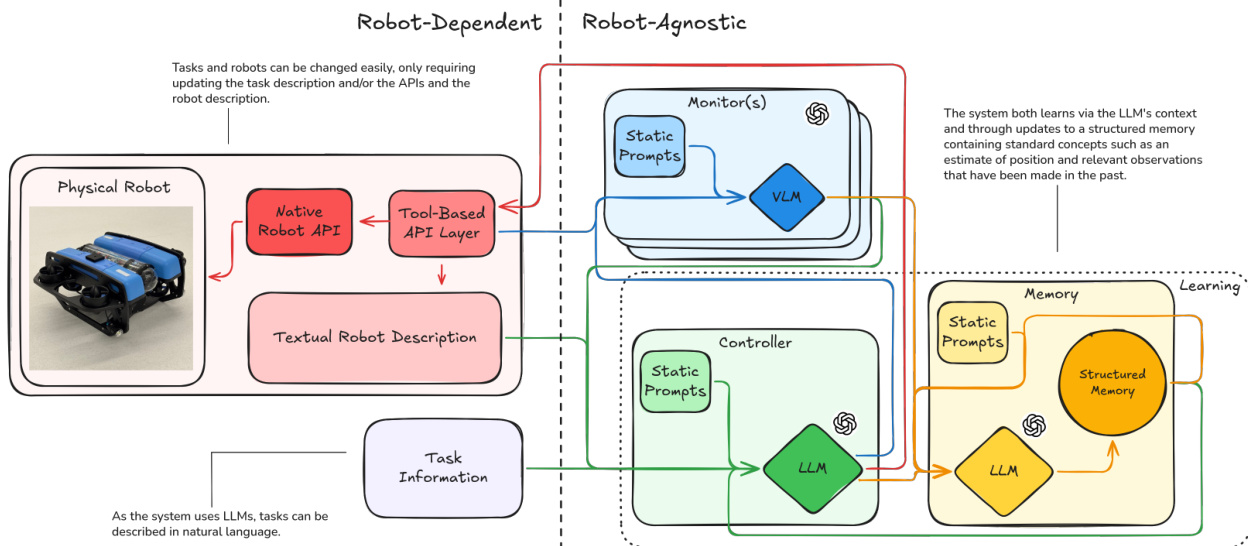

The overall system architecture separates robot-dependent components from robot-agnostic intelligence. Refer to the framework diagram for a visual breakdown of these interactions. The robot-dependent side manages the physical interface, including the Native Robot API and Tool-Based API Layer, alongside a Textual Robot Description. The robot-agnostic side houses the intelligence modules: Monitors, a Controller, and a Memory Curator. This separation allows tasks and robots to be changed easily by updating the task description and APIs without modifying the control logic.

The control loop proceeds in discrete time steps $t$. At each step, the Controller generates a targeted visual query $q_t$ based on the current task state. This query is dispatched to each Monitor $m_c$, which processes its camera image $I_t^{(c)}$ through a vision-language model (VLM) to return a natural language scene description $o_t^{(c)}$. The Controller then receives all Monitor observations, reasons over them, and selects an action $a_t$ from the admissible action set $A$. This action is dispatched to the robot through the hardware abstraction layer. Finally, the Memory Curator integrates the interaction record into a persistent environment memory $M_t$.
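
One time step of this loop can be sketched as a single function that wires the three modules together. This is a minimal illustration under stated assumptions: the function name `control_step`, its parameters, and the stub lambdas are hypothetical, and the real modules would be LLM or VLM calls rather than plain Python callables.

```python
from typing import Callable, Dict, Sequence, Tuple


def control_step(
    memory: str,
    images: Dict[str, object],
    make_query: Callable[[str], str],
    describe: Callable[[object, str], str],
    choose_action: Callable[[str, Dict[str, str]], str],
    execute: Callable[[str], None],
    curate: Callable[[str, dict], str],
    admissible: Sequence[str],
) -> Tuple[str, str]:
    """Run one time step and return the chosen action and the new memory."""
    query = make_query(memory)                       # Controller: visual query
    observations = {                                 # Monitors: one text per camera
        camera: describe(image, query) for camera, image in images.items()
    }
    action = choose_action(memory, observations)     # Controller: action choice
    if action not in admissible:
        raise ValueError(f"action {action!r} is not in the admissible set")
    execute(action)                                  # hardware abstraction layer
    record = {"query": query, "observations": observations, "action": action}
    return action, curate(memory, record)            # Memory Curator: new memory


# Stub modules standing in for the LLM/VLM calls and the robot.
sent = []
action, memory = control_step(
    memory="nothing seen yet",
    images={"front": "<image bytes>"},
    make_query=lambda m: "Is the target visible?",
    describe=lambda img, q: "target visible ahead",
    choose_action=lambda m, obs: "forward",
    execute=sent.append,
    curate=lambda m, rec: f"last action: {rec['action']}",
    admissible=("forward", "backward", "rotate_left", "rotate_right"),
)
```

Every value that crosses a module boundary here is a string or a dictionary of strings, which mirrors the point that the modules communicate only through natural language.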

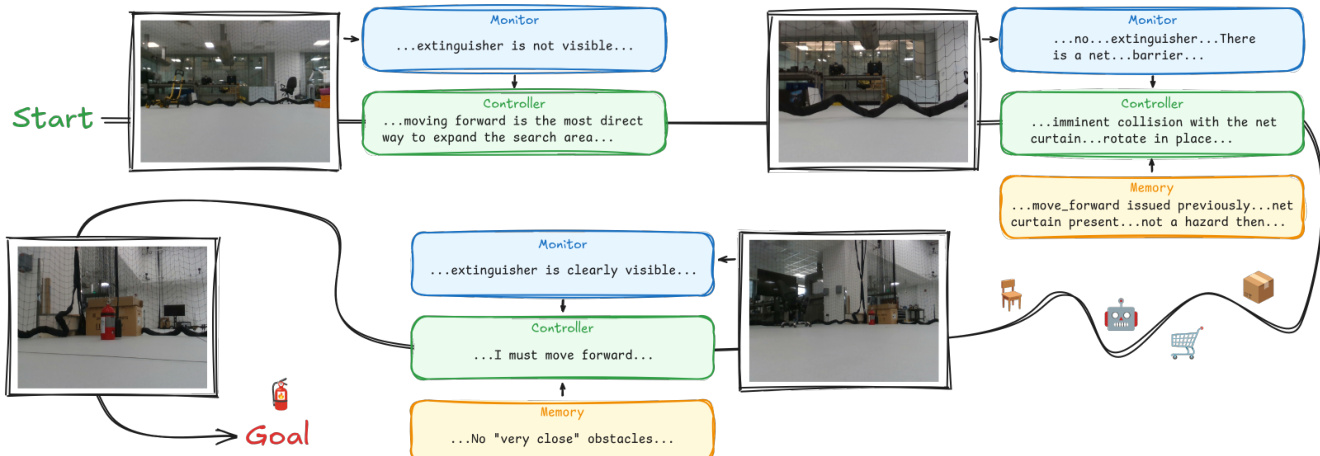

A detailed view of the reasoning process during a real run is shown in the accompanying figure.

The Controller is re-initialized at each step with a dynamically composed system prompt. This prompt assembles six components: the robot description $D$, the action interface $A$, the environment memory $M_{t-1}$, the proprioceptive state $s_t$, the action history $H_t$, and the task specification $\tau$. In the first stage of a step, the Controller reasons over this context to produce the visual query $q_t$. In the second stage, it receives the Monitor observations and outputs a reasoning trace followed by a single action $a_t$.
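
The prompt assembly can be illustrated with a short function that concatenates the six components in a fixed order. This is a sketch only: the section titles, the `##` heading layout, and the function name `compose_system_prompt` are assumptions, since the source does not specify how the prompt is laid out.

```python
def compose_system_prompt(
    robot_description,
    action_interface,
    memory,
    proprioception,
    action_history,
    task,
):
    """Join the six prompt components into one system prompt string."""
    sections = [
        ("Robot description", robot_description),
        ("Available actions", action_interface),
        ("Environment memory", memory),
        ("Proprioceptive state", proprioception),
        ("Action history", "\n".join(action_history) or "(none)"),
        ("Task", task),
    ]
    return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


# Example values are invented for illustration.
prompt = compose_system_prompt(
    robot_description="A wheeled ground robot with one forward-facing camera.",
    action_interface="forward, backward, rotate_left, rotate_right",
    memory="No objects located yet.",
    proprioception="Heading unknown; stationary.",
    action_history=[],
    task="Approach the target object.",
)
```

Because the prompt is rebuilt from these inputs at every step, the Controller itself carries no hidden state between steps; everything it knows arrives through the memory and history fields.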

Perception is handled by the Monitor module, which reformulates perception as a language-conditioned visual question-answering process. Unlike conventional pipelines that produce fixed-schema numerical outputs, this design ensures perception is task-adaptive. The query qt varies with the execution stage, allowing the Monitor to attend to different scene aspects without architectural change. The output resides in the same representational space as the Controller's input, eliminating the need for hand-designed encoders.
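
The idea of perception as language-conditioned question answering can be shown with a Monitor that wraps any callable taking an image and a text prompt. This is a minimal sketch: the `Monitor` class, its `observe` method, and the canned `stub_vlm` are hypothetical stand-ins, and a real deployment would call an actual VLM instead.

```python
from typing import Callable


class Monitor:
    """Wraps one camera and answers free-form queries about its image."""

    def __init__(self, camera: str, vlm: Callable[[object, str], str]):
        self.camera = camera
        self.vlm = vlm

    def observe(self, image: object, query: str) -> str:
        # The query changes with the task stage; the Monitor code does not.
        prompt = f"You are watching the '{self.camera}' camera. {query}"
        return f"[{self.camera}] {self.vlm(image, prompt).strip()}"


def stub_vlm(image, prompt):
    """Canned answers in place of a real VLM call."""
    if "obstacle" in prompt.lower():
        return "No obstacles within view."
    return "A red ball is near the left edge of the frame."


monitor = Monitor("front", stub_vlm)
frame = object()  # placeholder for a camera image
search = monitor.observe(frame, "Where is the red ball?")
safety = monitor.observe(frame, "Are there obstacles ahead?")
```

The same frame yields two different descriptions because only the query changed, and both outputs are plain text that the Controller can read without a dedicated encoder.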

To manage long-term interactions, the system employs an LLM-Curated Persistent Memory. Naively appending observations leads to unbounded context growth, so the Memory Curator performs incremental rewriting. It receives the current memory $M_{t-1}$ and the latest interaction record to produce an updated memory $M_t$. This operation compresses redundant information, resolves contradictions, and discards irrelevant details. The Curator organizes knowledge into four categories: physical environment, robot state, curated history of significant commands, and task state. Additionally, the system performs cross-modal position inference by intersecting camera observations with robot actions to estimate object locations relative to the robot.
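
Incremental rewriting can be sketched as a function that asks an LLM to produce a replacement memory rather than appending to the old one. The prompt wording, the function names, and the `stub_llm` below are assumptions for illustration; only the four categories and the rewrite-instead-of-append behavior come from the text.

```python
from typing import Callable

# The four categories named in the text.
CATEGORIES = (
    "Physical environment",
    "Robot state",
    "Significant command history",
    "Task state",
)


def build_curation_prompt(previous_memory: str, record: str) -> str:
    """Compose the instruction given to the curating LLM (wording is assumed)."""
    headings = "\n".join(f"- {name}" for name in CATEGORIES)
    return (
        "Rewrite the environment memory so that it stays concise.\n"
        "Merge redundant facts, resolve contradictions, and drop details "
        "irrelevant to the task.\n"
        f"Organize the result under these headings:\n{headings}\n\n"
        f"Current memory:\n{previous_memory}\n\n"
        f"Latest interaction record:\n{record}\n"
    )


def curate(previous_memory: str, record: str, llm: Callable[[str], str]) -> str:
    """Return the new memory, which replaces the old one instead of extending it."""
    return llm(build_curation_prompt(previous_memory, record)).strip()


# Stub LLM that always returns the same short summary.
stub_llm = lambda prompt: "Task state: target not yet found.\n"
memory = ""
for step in range(50):
    memory = curate(memory, f"step {step}: rotated left, saw nothing", stub_llm)
```

With the stub, the memory has the same length after fifty steps as after one. A real LLM gives no hard bound, but rewriting keeps the size tied to what is worth remembering rather than to the number of steps.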

The physical embodiment used in the experiments, such as the mobile manipulator shown below, is defined entirely through natural language descriptions and API specifications.

Adapting the framework to a new platform requires authoring three text files: a robot description $D$, a tool definition file specifying actions $A$, and the task description. No source code, model weights, or reward functions are modified. The system learns via the LLM's context and through updates to the structured memory containing standard concepts such as position estimates and relevant past observations.
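
The three-file configuration could be loaded along the following lines. This is a sketch under assumptions: the file names `robot.txt`, `tools.json`, and `task.txt`, the JSON format for the tool definitions, and the `PlatformConfig` class are all hypothetical, since the source states only that three text files are needed.

```python
import json
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class PlatformConfig:
    """Everything embodiment-specific, held as plain text and tool specs."""
    robot_description: str
    tools: list
    task: str


def load_platform(directory) -> PlatformConfig:
    """Read the three files for one platform from a directory."""
    root = Path(directory)
    # Assumed format: a JSON list of objects, each with at least a "name" key.
    tools = json.loads((root / "tools.json").read_text(encoding="utf-8"))
    names = [tool["name"] for tool in tools]
    if len(names) != len(set(names)):
        raise ValueError("tool names must be unique")
    return PlatformConfig(
        robot_description=(root / "robot.txt").read_text(encoding="utf-8").strip(),
        tools=tools,
        task=(root / "task.txt").read_text(encoding="utf-8").strip(),
    )
```

Under this layout, switching robots means pointing `load_platform` at a different directory while the control logic stays untouched, which is the property the paragraph above describes.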

Experiment

- The framework was evaluated across three distinct robotic platforms (a robotic limb, a ground robot, and an underwater vehicle) to validate its ability to generalize across radically different morphologies and environments using identical control logic.

- Experiments in object localization, target approach, and underwater navigation demonstrated that the system successfully completed tasks in a reasonable number of steps, significantly outperforming random action baselines where applicable.

- Results indicate that the system effectively leverages the generalization capabilities of large language and vision models to solve navigation tasks without requiring platform-specific code modifications or training data.

- Performance analysis revealed that task completion time is primarily limited by sensor fidelity and the time required to locate objects, rather than by the underlying control architecture or model reasoning.

- The study confirms that a unified, zero-training control framework can be adapted to new robots using only natural language descriptions and existing APIs, though current limitations include high inference latency and a lack of direct depth information.