Real-Time AI Service Economy: A Framework for Agentic Computing Across the Continuum

Lauri Lovén, Alaa Saleh, Reza Farahani, Ilir Murturi, Miguel Bordallo López, Praveen Kumar Donta, Schahram Dustdar

Abstract

Real-time AI services increasingly operate across the device-edge-cloud continuum, where autonomous AI agents generate latency-sensitive workloads, orchestrate multi-stage processing pipelines, and compete for shared resources under policy and governance constraints. This article shows that the structure of service-dependency graphs, modeled as directed acyclic graphs (DAGs) whose nodes represent compute stages and whose edges encode execution order, is a primary determinant of whether decentralized, price-based resource allocation can work reliably at scale. When dependency graphs are hierarchical (trees or series-parallel structures), prices converge to stable equilibria, optimal allocations can be computed efficiently, and, under appropriate mechanism design (with quasilinear utilities and discrete slice items), agents have no incentive to misreport their valuations within each decision epoch. When dependencies are more complex, with cross-cutting links between pipeline stages, prices oscillate, allocation quality degrades, and the system becomes difficult to manage. To bridge this gap, we propose a hybrid management architecture in which cross-domain integrators encapsulate complex sub-graphs into resource slices that present a simpler, well-structured interface to the rest of the market.
A systematic ablation study over six experiments (1,620 runs in total, 10 seeds each) confirms that (i) dependency-graph topology is a primary determinant of price stability and scalability; (ii) the hybrid architecture reduces price volatility by up to 70–75% without sacrificing throughput; (iii) governance constraints create quantifiable efficiency-compliance trade-offs that depend on both topology and load; and (iv) under truthful bidding, the decentralized market equilibrium matches a centralized value-optimal baseline, showing that decentralized coordination can replicate the allocation quality of a centralized solution.

One-sentence Summary

Authors from the University of Oulu and other European institutions propose a hybrid management architecture that encapsulates complex service dependencies into polymatroidal slices, enabling stable, incentive-compatible decentralized resource allocation for real-time AI agents across the device-edge-cloud continuum.

Key Contributions

- Real-time AI services across the device-edge-cloud continuum face instability when complex service-dependency graphs create cross-resource complementarities that prevent price convergence and efficient allocation.

- The proposed hybrid management architecture encapsulates complex sub-graphs into resource slices to enforce hierarchical topologies, ensuring the feasible allocation set forms a polymatroid that guarantees market-clearing prices and truthful bidding.

- Systematic ablation studies across 1,620 runs demonstrate that this approach reduces price volatility by up to 75% without sacrificing throughput while matching the value-optimal quality of centralized baselines under truthful bidding.

Introduction

Real-time AI services increasingly operate across device-edge-cloud environments where autonomous agents must coordinate latency-sensitive workloads under strict governance constraints. Prior approaches struggle because centralized orchestration is impractical across trust boundaries, while naive decentralized markets fail when complex service dependencies create resource complementarities that destabilize prices and make optimal allocation computationally intractable. The authors leverage service-dependency graph topology to identify stable regimes where tree or series-parallel structures guarantee market equilibrium and truthful bidding, then propose a hybrid architecture that encapsulates complex sub-graphs into simplified resource slices to restore stability without sacrificing throughput.

Method

The authors propose a framework for distributed service computing where autonomous AI agents generate tasks, compose services, and interact economically across a continuum of devices, edge platforms, and cloud infrastructure. The system operates in discrete time periods $t$, with agents conditioning their valuations on a commonly accepted system state $s_t$. The overall model integrates agentic behavior, resource dependencies, governance constraints, and mechanism design to facilitate efficient allocation.

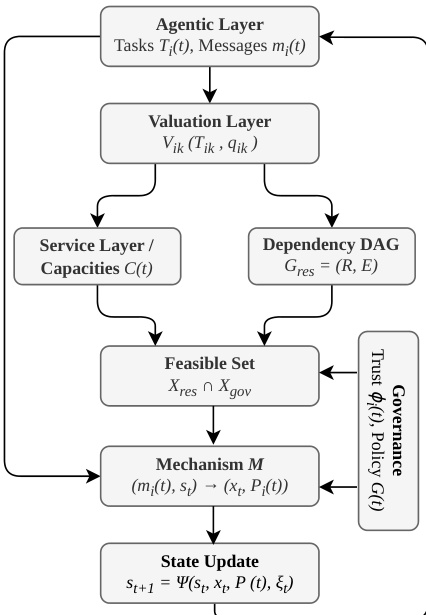

Refer to the framework diagram for a high-level overview of the system components and their interactions. The process begins at the Agentic Layer, where agents issue tasks $T_i(t)$ and send messages $m_i(t)$. These inputs flow into the Valuation Layer, which computes latency-aware valuations defined as $V_{ik}(T_{ik}, q_{ik}) = v_{ik}(q_{ik})\,\delta_{ik}(T_{ik})$. Here, $v_{ik}(q)$ represents the base value of completing a task at quality $q$, while $\delta_{ik}$ captures latency decay. The valuation therefore depends on the latency $T_{ik}$ and the quality $q_{ik}$ associated with the task.
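The product form of the valuation can be illustrated with a minimal sketch. The source does not specify the shape of the decay term, so the deadline-then-exponential form below, along with the names `latency_decay`, `valuation`, `deadline_ms`, and `rate`, are assumptions made purely for illustration.

```python
import math


def latency_decay(latency_ms: float, deadline_ms: float, rate: float = 0.01) -> float:
    """Hypothetical decay term: full value up to the deadline, exponential fall-off after it."""
    if latency_ms <= deadline_ms:
        return 1.0
    return math.exp(-rate * (latency_ms - deadline_ms))


def valuation(base_value: float, latency_ms: float, deadline_ms: float, rate: float = 0.01) -> float:
    """Latency-aware valuation: base value at the chosen quality times the decay factor."""
    return base_value * latency_decay(latency_ms, deadline_ms, rate)


on_time = valuation(base_value=10.0, latency_ms=50.0, deadline_ms=100.0)
late = valuation(base_value=10.0, latency_ms=200.0, deadline_ms=100.0)
print(on_time, late)
```

Any decay function that is non-increasing in latency would fit the same multiplicative structure; the exponential is only one convenient choice.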

Concurrently, the Service Layer defines available capacities $C(t)$, while the Dependency DAG models structural dependencies among resources as $G_{\mathrm{res}} = (R, E)$. These factors converge at the Feasible Set, which is the intersection of resource constraints $X_{\mathrm{res}}$ and governance constraints $X_{\mathrm{gov}}$. Governance inputs, including trust scores $\phi(t)$ and policies $G(t)$, further restrict the feasible allocations. The Mechanism $M$ then maps the messages and current state to an allocation $x_t$ and payments $P_i(t)$. Finally, the State Update module evolves the system state according to $s_{t+1} = \Psi(s_t, x_t, P(t), \xi_t)$, incorporating exogenous events and realized allocations.
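The feasible set as an intersection of resource and governance constraints can be sketched as two independent membership checks. The data layout (allocations keyed by agent and resource) and the trust-threshold form of the governance rule are assumptions for illustration; the source does not fix either, and all function names here are hypothetical.

```python
def in_resource_set(alloc, capacity):
    """Resource check: total allocation on each resource stays within its capacity."""
    used = {}
    for (_agent, resource), amount in alloc.items():
        used[resource] = used.get(resource, 0.0) + amount
    return all(total <= capacity.get(resource, 0.0) for resource, total in used.items())


def in_governance_set(alloc, trust, min_trust):
    """Governance check (assumed form): an agent may use a resource only if its
    trust score meets that resource's minimum; resources without a minimum are open."""
    return all(
        trust.get(agent, 0.0) >= min_trust.get(resource, 0.0)
        for (agent, resource), amount in alloc.items()
        if amount > 0
    )


def is_feasible(alloc, capacity, trust, min_trust):
    """An allocation is feasible only if it lies in both constraint sets."""
    return in_resource_set(alloc, capacity) and in_governance_set(alloc, trust, min_trust)


capacity = {"gpu": 4.0, "link": 2.0}
trust = {"a1": 0.9, "a2": 0.4}
min_trust = {"gpu": 0.5}
print(is_feasible({("a1", "gpu"): 3.0, ("a2", "link"): 2.0}, capacity, trust, min_trust))
print(is_feasible({("a2", "gpu"): 1.0}, capacity, trust, min_trust))
```

Keeping the two checks separate mirrors the text: governance can shrink the feasible set without changing the underlying resource constraints.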

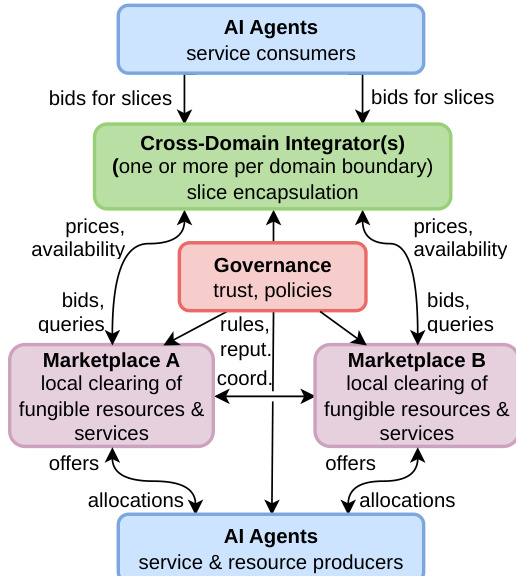

To ensure tractability in the presence of complex dependencies, the authors introduce a hybrid market architecture. As shown in the architecture figure, it consists of three primary layers: Cross-Domain Integrators, Local Marketplaces, and AI Agents.

Cross-Domain Integrators form the agent-facing layer. Each integrator encapsulates a complex multi-resource service path into a governance-compliant slice. Internally, the integrator manages the dependency DAG of its sub-system and exposes a simplified, substitutable capacity interface. The capacity of this slice is set equal to the maximum flow of the internal sub-DAG. This encapsulation absorbs complementarities that would otherwise destabilize market-based coordination.
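Setting a slice's capacity to the maximum flow of its internal sub-DAG can be sketched with a small Edmonds-Karp implementation. The node names and edge capacities below are invented for illustration, and the source does not say which max-flow algorithm the integrators use.

```python
from collections import deque


def max_flow(capacity, source, sink):
    """Edmonds-Karp max flow on a graph given as {u: {v: capacity}}."""
    # Build a residual graph with zero-capacity reverse edges; the input is not mutated.
    residual = {u: dict(vs) for u, vs in capacity.items()}
    for u, vs in capacity.items():
        for v in vs:
            residual.setdefault(v, {}).setdefault(u, 0)
    residual.setdefault(source, {})
    residual.setdefault(sink, {})

    flow = 0
    while True:
        # Breadth-first search for a shortest augmenting path.
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return flow

        # Find the bottleneck along the path, then push that much flow.
        bottleneck = float("inf")
        v = sink
        while parent[v] is not None:
            bottleneck = min(bottleneck, residual[parent[v]][v])
            v = parent[v]
        v = sink
        while parent[v] is not None:
            u = parent[v]
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
            v = u
        flow += bottleneck


# Hypothetical internal sub-DAG of one integrator, including a cross link gpu -> cpu.
internal_dag = {
    "ingest": {"gpu": 4, "cpu": 3},
    "gpu": {"egress": 2, "cpu": 1},
    "cpu": {"egress": 3},
}
slice_capacity = max_flow(internal_dag, "ingest", "egress")
print(slice_capacity)  # 5, limited by the two edges into "egress"
```

Agents bidding on this slice would see only the single number `slice_capacity`, not the internal cross link.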

Beneath the integrators, Local Marketplaces operate at the device or edge scope to coordinate fungible services and resources, such as compute cycles and bandwidth. These markets clear via lightweight auctions or posted prices and enforce local governance policies. For simple, single-domain services, agents may interact directly with a local marketplace. Inter-Market Coordination ensures consistency through the exchange of coarse-grained signals, such as aggregate demand and congestion indicators, without requiring full system-wide optimization.

The mechanism design relies on the structural properties of the feasible allocation set. The authors demonstrate that when the service-dependency DAG is a tree or series-parallel network, the capacity constraints form a polymatroid. By using architectural encapsulation, the integrators ensure that the quotient graph seen by agents maintains this tree or series-parallel structure, even if the underlying infrastructure DAG is arbitrary. This preserves the polymatroidal structure of the agent-facing feasible region.
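A polymatroid is defined by a set function that is normalized, monotone, and submodular, so the structural claim can be checked by brute force on small examples. The sketch below does this for two toy capacity functions that are assumptions, not taken from the source: a two-level tree in which leaf slices share one root link, and a function with an explicit complementarity.

```python
from itertools import combinations


def subsets(items):
    """Yield every subset of items as a frozenset."""
    items = list(items)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def is_polymatroid_rank(f, ground):
    """Check that f is normalized, monotone, and submodular (exponential-time; small sets only)."""
    all_sets = list(subsets(ground))
    if f(frozenset()) != 0:
        return False
    for a in all_sets:
        for b in all_sets:
            if a <= b and f(a) > f(b):
                return False  # monotonicity violated
            if f(a | b) + f(a & b) > f(a) + f(b):
                return False  # submodularity violated
    return True


# Toy tree: leaf slices a, b, c with their own capacities share a root link of capacity 5.
leaf_cap = {"a": 3, "b": 3, "c": 2}


def tree_capacity(s):
    return min(5, sum(leaf_cap[x] for x in s))


# Toy complementarity: capacity is usable only when a and b are held together.
def entangled_capacity(s):
    return 4 if {"a", "b"} <= s else 0


print(is_polymatroid_rank(tree_capacity, leaf_cap))       # True
print(is_polymatroid_rank(entangled_capacity, leaf_cap))  # False
```

The second function fails because holding both resources is worth more than the sum of holding each alone, which is the kind of complementarity encapsulation is meant to hide from agents.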

Furthermore, the latency-aware valuations satisfy the gross-substitutes (GS) condition under slice encapsulation. This is achieved because integrators expose discrete, indivisible slices with fixed internal routing and deterministic latency within each mechanism epoch. With a polymatroidal feasible set and GS valuations, the system admits a Walrasian equilibrium. Consequently, efficient allocation is computable in polynomial time via ascending auctions, and the outcome is implementable in a dominant-strategy incentive-compatible (DSIC) manner using mechanisms such as VCG or polymatroid clinching auctions.
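As a simplified illustration of dominant-strategy incentive compatibility, consider the special case of identical slices and agents who each want at most one. Here VCG reduces to a uniform-price auction in which every winner pays the highest losing bid. This is a sketch of that special case only, not the polymatroid clinching auction or the general VCG computation; the function name and bid values are hypothetical.

```python
def vcg_identical_slices(bids, num_slices):
    """VCG for unit-demand agents and identical slices.

    The top `num_slices` bidders win, and each pays the highest losing bid,
    which is the externality it imposes on the others.
    Returns {winner: payment}.
    """
    ranked = sorted(bids.items(), key=lambda kv: (-kv[1], kv[0]))
    winners = ranked[:num_slices]
    price = ranked[num_slices][1] if len(ranked) > num_slices else 0.0
    return {agent: price for agent, _ in winners}


true_values = {"a": 9.0, "b": 7.0, "c": 4.0, "d": 2.0}
truthful = vcg_identical_slices(true_values, num_slices=2)
print(truthful)  # {'a': 4.0, 'b': 4.0}

# Agent "a" cannot gain by shading its bid: it either pays the same price or loses the slice.
shaded = vcg_identical_slices({**true_values, "a": 3.0}, num_slices=2)
print("a" in shaded)  # False
```

Because a winner's payment does not depend on its own bid, misreporting can only change whether it wins, never the price it pays when it does.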

Experiment

- Structural discipline experiments validate that polymatroidal topologies (tree and linear) ensure market stability with zero price volatility, whereas entangled dependency graphs cause severe degradation and market failure under high load.

- Hybrid architecture experiments confirm that encapsulating complex services into slices significantly reduces price volatility, with EMA smoothing acting as the primary stabilizer and efficiency factors improving latency and welfare in congested regimes.

- Governance experiments demonstrate that strict trust-gated capacity partitioning trades service coverage for quality by reducing latency, though it can induce price volatility in otherwise stable topologies due to smaller resource pools.

- Interaction studies reveal that the hybrid architecture effectively mitigates the volatility penalties introduced by strict governance, with synergy effects varying by topology from additive to super-additive.

- Market mechanism experiments show that under truthful bidding, price-based coordination yields welfare outcomes nearly identical to value-greedy allocation, indicating the mechanism's primary value lies in incentive alignment rather than informational superiority.
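The stabilizing effect attributed to EMA smoothing in the hybrid architecture experiments can be illustrated with a minimal sketch. The smoothing factor, the oscillating price series, and the use of population standard deviation as a volatility proxy are all assumptions; the source does not state how the authors define or measure volatility.

```python
from statistics import pstdev


def ema(prices, alpha=0.2):
    """Exponential moving average; the first value seeds the average."""
    smoothed = []
    for price in prices:
        previous = smoothed[-1] if smoothed else price
        smoothed.append(alpha * price + (1 - alpha) * previous)
    return smoothed


# Hypothetical raw clearing prices that oscillate between two levels.
raw_prices = [1.0, 3.0, 1.0, 3.0, 1.0, 3.0]
smoothed_prices = ema(raw_prices)

print(pstdev(raw_prices), pstdev(smoothed_prices))
```

A smaller `alpha` damps oscillations more strongly but makes posted prices slower to track genuine shifts in demand.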