Command Palette

Search for a command to run...

LoGeR: Lange Kontext-Geometrische Rekonstruktion mit Hybrid-Speicher

LoGeR: Lange Kontext-Geometrische Rekonstruktion mit Hybrid-Speicher

Junyi Zhang Charles Herrmann Junhwa Hur Chen Sun Ming-Hsuan Yang Forrester Cole Trevor Darrell Deqing Sun

Zusammenfassung

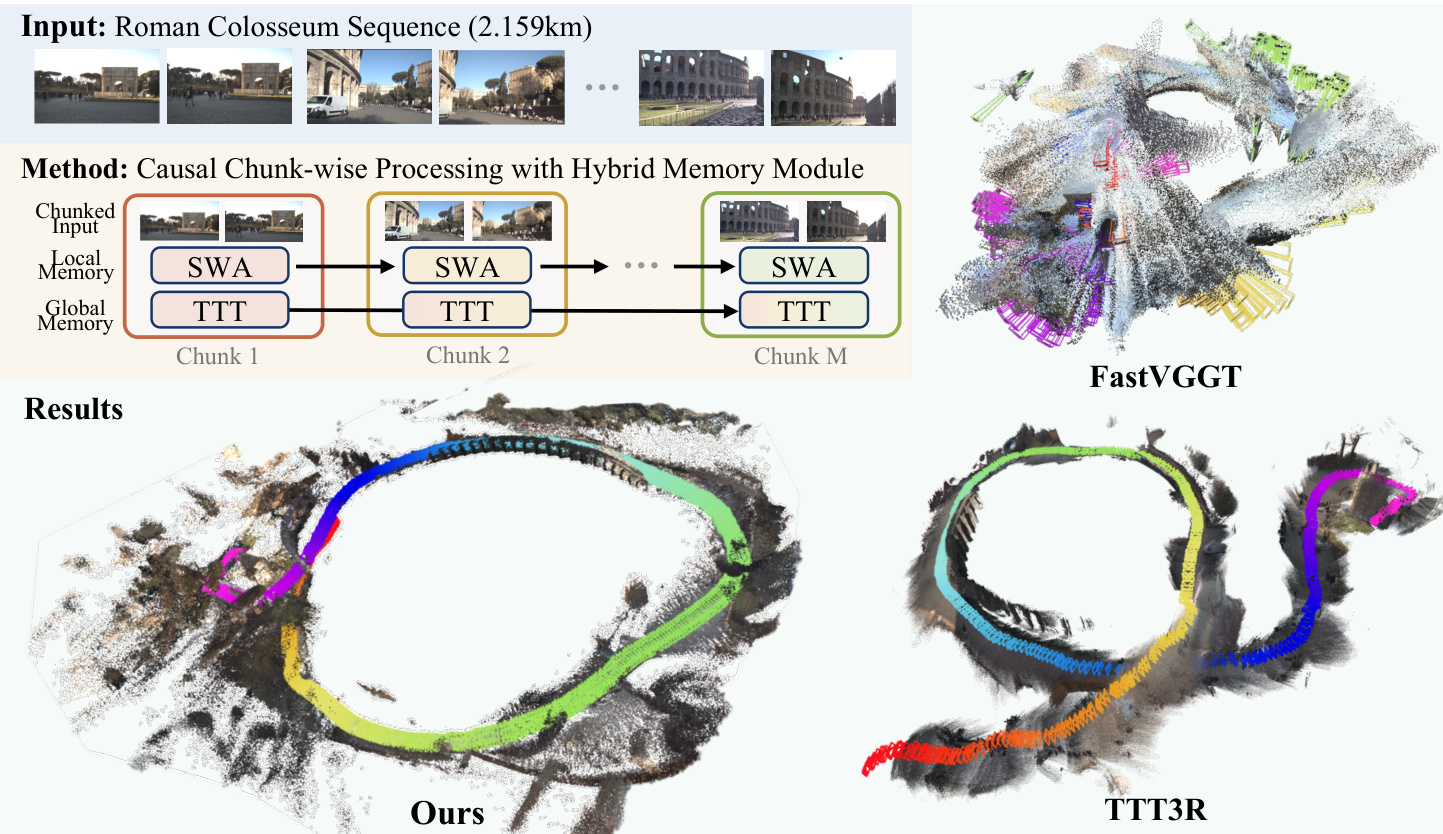

Feedforward-geometrische Fundamentmodelle erzielen zwar eine robuste Rekonstruktion über kurze Zeitfenster, doch ihre Skalierung auf mehrere Minuten lange Videos wird entweder durch eine quadratische Komplexität der Aufmerksamkeitsmechanismen oder durch die begrenzte effektive Speicherkapazität rekurrenter Architekturen behindert. Wir stellen LoGeR (Long-context Geometric Reconstruction) vor, eine neuartige Architektur, die eine dichte 3D-Rekonstruktion auf extrem lange Sequenzen ohne nachgelagerte Optimierung skaliert. LoGeR verarbeitet Videostreams in Blöcken und nutzt starke bidirektionale Priors für eine hochgenaue Inferenz innerhalb dieser Blöcke. Um die kritische Herausforderung der Kohärenz über Blockgrenzen hinweg zu bewältigen, schlagen wir einen lernbasierten hybriden Speichermodule vor. Dieses duale System kombiniert einen parametrischen Testzeit-Trainings-Speicher (Test-Time Training, TTT), der das globale Koordinatensystem verankert und eine Skalendrift verhindert, mit einem nicht-parametrischen Sliding-Window-Attention-Mechanismus (SWA), der unkomprimierten Kontext für eine präzise benachbarte Ausrichtung bewahrt. Bemerkenswerterweise ermöglicht diese Speicherarchitektur es LoGeR, auf Sequenzen mit 128 Bildern trainiert zu werden und während der Inferenz auf Tausende von Bildern zu generalisieren. Evaluiert auf Standard-Benchmarks sowie auf einem neu aufbereiteten VBR-Datensatz mit Sequenzen von bis zu 19.000 Bildern, übertrifft LoGeR bestehende feedforward-basierte State-of-the-Art-Methoden deutlich – die mittlere absolute Trajektorienfehler (ATE) auf dem KITTI-Datensatz wird um mehr als 74 % reduziert – und erzielt eine robuste, global konsistente Rekonstruktion über beispiellose Zeithorizonte.

One-sentence Summary

Researchers from Google DeepMind and UC Berkeley present LoGeR, a feedforward model that scales 3D reconstruction to long videos by combining Test-Time Training for global consistency with Sliding Window Attention for local precision, eliminating the need for post-optimization while achieving superior accuracy on datasets with thousands of frames.

Key Contributions

- Feedforward geometric models currently struggle to scale to minute-long videos due to quadratic attention complexity and limited memory, creating a critical gap between short-window reconstruction and the need for global consistency over long sequences.

- LoGeR introduces a novel chunk-wise architecture with a hybrid memory module that combines parametric Test-Time Training to anchor the global coordinate frame and non-parametric Sliding Window Attention to preserve high-precision local alignment.

- Trained on sequences of only 128 frames, the model generalizes to thousands of frames and achieves state-of-the-art performance by reducing Absolute Trajectory Error on KITTI by over 74% and improving results by 30.8% on a new 19k-frame VBR benchmark.

Introduction

Large-scale dense 3D reconstruction is essential for applications ranging from autonomous driving to generative world-building, yet current methods struggle to balance computational efficiency with long-range consistency. While classical optimization pipelines can handle city-scale scenes, they rely on slow offline processes and fail on sparse inputs, whereas modern feedforward geometric models offer speed but are limited to short, bounded scenes due to quadratic attention complexity and a lack of training data for long sequences. To bridge this gap, the authors propose LoGeR, a feedforward framework that utilizes a hybrid memory module combining non-parametric sliding window attention for high-fidelity local details and parametric associative memory for global structural integrity. This approach allows the model to process massive sequences of up to 19,000 frames with linear computational cost, effectively overcoming the context and data walls that previously prevented feedforward models from scaling to real-world, long-horizon trajectories.

Dataset

-

Dataset Composition and Sources: The authors utilize a diverse mixture of 14 large-scale datasets containing both real-world and synthetic scenes across indoor, outdoor, and autonomous driving environments to support long-context geometric reconstruction.

-

Key Details for Each Subset:

- Navigation and large-scale scene datasets like TartanAirV2 and VKITTI2 are heavily weighted to encourage long-range geometric reasoning.

- DL3DV receives a high sampling weight due to its exceptional real-world scene diversity, which aids model generalization.

- Smaller or object-centric datasets are down-weighted in the mixture.

- The OmniWorld-Game contribution is limited to a subset of 5,000 sequences based on the publicly released data at the time of training.

-

Data Usage and Mixture Ratios: The training configuration employs relative sampling percentages as summarized in Table 4, where the mixture is specifically tuned to provide sufficient long-horizon signals and diverse scene priors.

-

Processing and Filtering Strategies:

- All datasets are standardized into multi-view sequences with 48 views (or 128 views for H200 GPU training) at a uniform resolution of 504 × 280.

- The sampling strategy follows the CUT3R approach.

- Rigorous depth filtering is applied to ensure geometric supervision quality.

- Metric-scale datasets like ARKitScenes and ScanNet use a maximum depth threshold (e.g., 80.0 meters), while others like DL3DV and TartanAir use percentile-based clipping (e.g., 90th or 98th percentile) to mask noisy or invalid depth values.

Method

The authors propose LoGeR, a novel architecture designed to scale dense 3D reconstruction to extremely long video sequences without post-optimization. To overcome the quadratic complexity of global attention and the scarcity of long-horizon training data, the method processes video streams sequentially by chunk. This approach tightly bounds computational cost while ensuring that local inferences remain within the distribution of existing short-context training data.

Refer to the framework diagram for an overview of the proposed chunk-wise processing pipeline and its performance on long sequences.

The core innovation lies in a learning-based hybrid memory module that manages coherence across chunk boundaries. This dual-component system combines a parametric Test-Time Training (TTT) memory to anchor the global coordinate frame and prevent scale drift, alongside a non-parametric Sliding Window Attention (SWA) mechanism to preserve uncompressed context for high-precision adjacent alignment.

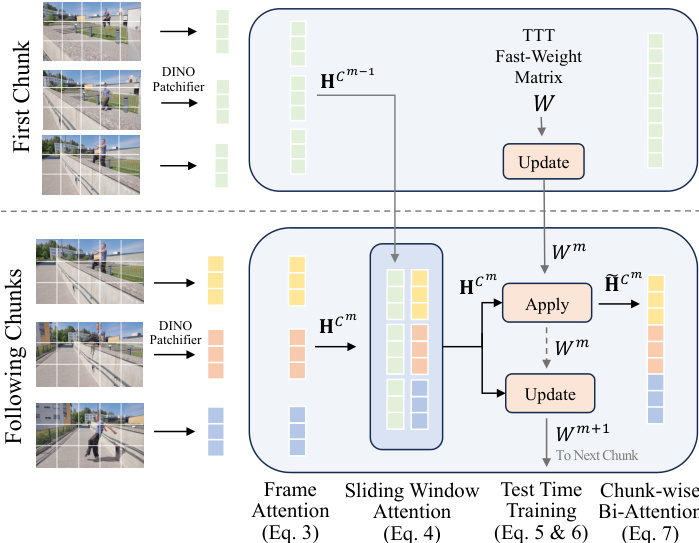

As shown in the figure below, the architecture processes input sequences in consecutive chunks, utilizing specific mechanisms for the first chunk versus following chunks to propagate information effectively.

Within each residual block of the geometry backbone, the authors introduce the hybrid memory system. The process begins with per-frame attention to extract spatial features independently for each frame. To align adjacent chunks, sparse sliding-window attention layers are inserted at a subset of network depths. These layers attend to tokens from both the previous chunk Cm−1 and the current chunk Cm, establishing a lossless information highway for high-fidelity feature propagation.

To integrate global context, the model maintains a set of fast weights Wm that summarize information up to chunk m. The TTT layer performs an apply-then-update procedure at the chunk level. In the apply operation, the TTT layers use historical information stored in the weights to modulate the network's processing of the current chunk. In the update operation, the weights are edited to store information from the current chunk, conceptually compressing important but redundant geometric information. The mathematical formulation for the TTT update and apply operations is defined as:

W←W−η∇WL(fW(k),v) Apply operation:o=fW(q)where η is the learning rate and L is a loss function encouraging the function fW to link keys with corresponding values. Finally, within each chunk, a bidirectional attention module is employed for powerful geometric reasoning under a bounded context window.

For training, the authors employ a progressive curriculum strategy to stabilize the optimization of recurrent TTT layers. The schedule begins with shorter sequences and gradually increases complexity, forcing the model to shift reliance from local Sliding Window Attention to the global TTT hidden state. Additionally, to mitigate prediction errors in very long streams, a variant called LoGeR* incorporates a purely feedforward alignment step. This step aligns raw predictions into a consistent global coordinate system by computing a rigid SE(3) transformation using overlapping frames between chunks.

Experiment

- Long-sequence evaluation on KITTI and VBR benchmarks demonstrates that LoGeR effectively mitigates accumulated drift over thousands of frames, maintaining global scale and trajectory consistency where prior methods fail.

- Short-sequence tests on 7-Scenes, ScanNet, and TUM-Dynamics confirm that the proposed model and baseline significantly outperform existing feedforward and optimization-based approaches in 3D reconstruction and camera pose estimation.

- Ablation studies validate that the hybrid architecture is essential, with the Test-Time Training layer ensuring global consistency and the Sliding Window Attention layer preserving local geometric smoothness.

- Experiments on data mixture and curriculum training prove that incorporating large-scale navigation datasets and a progressive training schedule are critical for generalization and stabilizing recurrent layer optimization.