Panini: Continual Learning in Token Space via Structured Memory

Shreyas Rajesh, Pavan Holur, Mehmet Yigit Turali, Chenda Duan, Vwani Roychowdhury

Abstract

Language models are increasingly used to reason over content they were not trained on, such as new documents, evolving knowledge bases, or user-specific data. A common approach is retrieval-augmented generation (RAG), which stores documents verbatim in external storage (as chunks) and retrieves only a relevant subset at inference time for the LLM to reason over. However, this makes inefficient use of inference-time compute (the LLM repeatedly reasons over the same documents), and chunk retrieval can also inject irrelevant context that leads to unsupported generations. We propose a human-like, non-parametric continual learning framework in which the base model stays fixed and learning happens by integrating each new experience into an external semantic memory state that continually accumulates and consolidates. We present Panini, which realizes this framework by representing documents as Generative Semantic Workspaces (GSW): an entity- and event-aware network of question-answer pairs that is sufficient for an LLM to reconstruct the experienced situations and to surface latent knowledge through reasoning-grounded inference chains over the network. Given a query, Panini traverses only the continually updated GSW (not the verbatim documents or chunks) and retrieves the most likely inference chains. Across six QA benchmarks, Panini achieves the highest average performance, 5–7 percentage points above other competitive approaches, while using 2–30x fewer answer-context tokens.

Panini also supports fully open-source pipelines and reduces unsupported answers on curated unanswerable questions. The results show that structuring experiences efficiently and accurately at write time, as the GSW framework does, yields both efficiency and reliability at read time. The code is available at https://github.com/roychowdhuryresearch/gsw-memory.

One-sentence Summary

Shreyas Rajesh, Pavan Holur, and colleagues from UCLA propose PANINI, a non-parametric continual learning method that keeps the base LLM frozen and accumulates new knowledge in token space as structured memory (Generative Semantic Workspaces), so it adapts to new documents without retraining or catastrophic forgetting and answers questions with fewer context tokens and fewer unsupported answers than chunk-based RAG.

Key Contributions

- PANINI introduces a non-parametric continual learning framework that avoids retraining LLMs by storing new knowledge in structured Generative Semantic Workspaces (GSW), which encode documents as entity- and event-aware QA networks to support reasoning over accumulated experiences.

- It employs Reasoning Inference Chain Retrieval (RICR) to traverse GSW at query time, enabling efficient and targeted access to inference chains without reprocessing verbatim text, thereby reducing compute overhead and minimizing irrelevant context injection.

- Evaluated across six QA benchmarks, PANINI achieves the highest average score, 5–7 percentage points above competing baselines, while using 2–30× fewer answer-context tokens and significantly reducing unsupported generations on unanswerable queries, demonstrating gains in both efficiency and reliability.

Introduction

The authors leverage non-parametric continual learning to address the inefficiency and unreliability of retrieval-augmented generation (RAG) systems, which store verbatim document chunks and force large language models to reprocess the same text repeatedly at inference time. Prior structured memory approaches like RAPTOR and GraphRAG focus on summarization rather than reasoning, while parametric methods suffer from catastrophic forgetting and high retraining costs. PANINI introduces Generative Semantic Workspaces (GSW)—an entity- and event-aware network of QA pairs—and a lightweight retrieval method called Reasoning Inference Chain Retrieval (RICR) that traverses this structured memory to answer queries without iterative LLM calls. This design achieves state-of-the-art performance across six QA benchmarks, using 2–30x fewer tokens and improving reliability on unanswerable questions, demonstrating that investing compute at write time to build structured memory pays off in efficiency and accuracy at read time.

Dataset

The authors use a mix of single-hop and multi-hop QA benchmarks to evaluate PANINI’s non-parametric continual learning capabilities, focusing on factual memory and associativity across documents.

- Multi-hop datasets: MuSiQue, 2WikiMultihopQA, HotpotQA, and LV-Eval (hotpotwikiqa-mixup 256k) test multi-step reasoning. MuSiQue emphasizes compositional reasoning; 2WikiMultihopQA covers diverse reasoning patterns over Wikipedia; HotpotQA requires identifying supporting facts from multiple sources. LV-Eval is a large-scale variant used for scalability testing.

- Single-hop datasets: NQ and PopQA assess basic factual retrieval, ensuring the model maintains performance on direct queries.

- Platinum variants (MUSIQUE-PLATINUM, 2WIKI-PLATINUM): Constructed to evaluate reliability under missing evidence. Built using a multi-agent LLM system to generate answers from source documents, followed by manual review to label examples as answerable or unanswerable. Final splits: MuSiQue (766 answerable / 153 unanswerable), 2Wiki (906 / 94). Models are prompted to output “N/A” when evidence is insufficient; performance is measured via F1 on answerable examples and binary refusal accuracy on unanswerable ones.

- Training and evaluation setup: The authors adopt the same dataset splits as HippoRAG2 for a fair baseline comparison. In the main experiments, GPT-4.1-mini generates the Generative Semantic Workspace (GSW) memories (structured semantic networks capturing entities, roles, states, and bidirectional QA pairs), which are then consumed by Qwen3-8B (thinking mode) as the reader, with Qwen3-8B + LoRA handling question decomposition.

- Processing details: GSW extraction follows a structured prompt that guides the model to identify entities, roles, states, aliases, and factual relationships in raw text. Abbreviations are preserved unless explicitly expanded in the source. Documents are processed whole (no cropping is described), each yielding one structured semantic graph.
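The Platinum protocol scores answerable and unanswerable items differently. The sketch below shows one way to compute both numbers. It assumes the common SQuAD-style token F1 and treats only an exact "N/A" output as a refusal; the function names (`token_f1`, `platinum_scores`) are illustrative and are not taken from the authors' evaluation code.

```python
import re
import string
from collections import Counter
from typing import List, Optional, Tuple

def normalize(text: str) -> List[str]:
    # SQuAD-style normalization: lowercase, strip punctuation, drop articles
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()

def token_f1(prediction: str, gold: str) -> float:
    pred, ref = normalize(prediction), normalize(gold)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

def is_refusal(prediction: str) -> bool:
    # Assumption: only an exact "N/A" (case-insensitive) counts as abstaining
    return prediction.strip().upper() == "N/A"

def platinum_scores(examples: List[Tuple[str, Optional[str]]]) -> dict:
    """examples: (prediction, gold) pairs; gold is None for unanswerable items."""
    answerable = [(p, g) for p, g in examples if g is not None]
    unanswerable = [p for p, g in examples if g is None]
    f1 = (sum(token_f1(p, g) for p, g in answerable) / len(answerable)
          if answerable else 0.0)
    refusal = (sum(is_refusal(p) for p in unanswerable) / len(unanswerable)
               if unanswerable else 0.0)
    return {"answerable_f1": f1, "refusal_accuracy": refusal}
```

Reporting the two numbers separately makes the accuracy/refusal trade-off visible: a system that always answers gets zero refusal accuracy, and one that always says "N/A" gets zero F1.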

Method

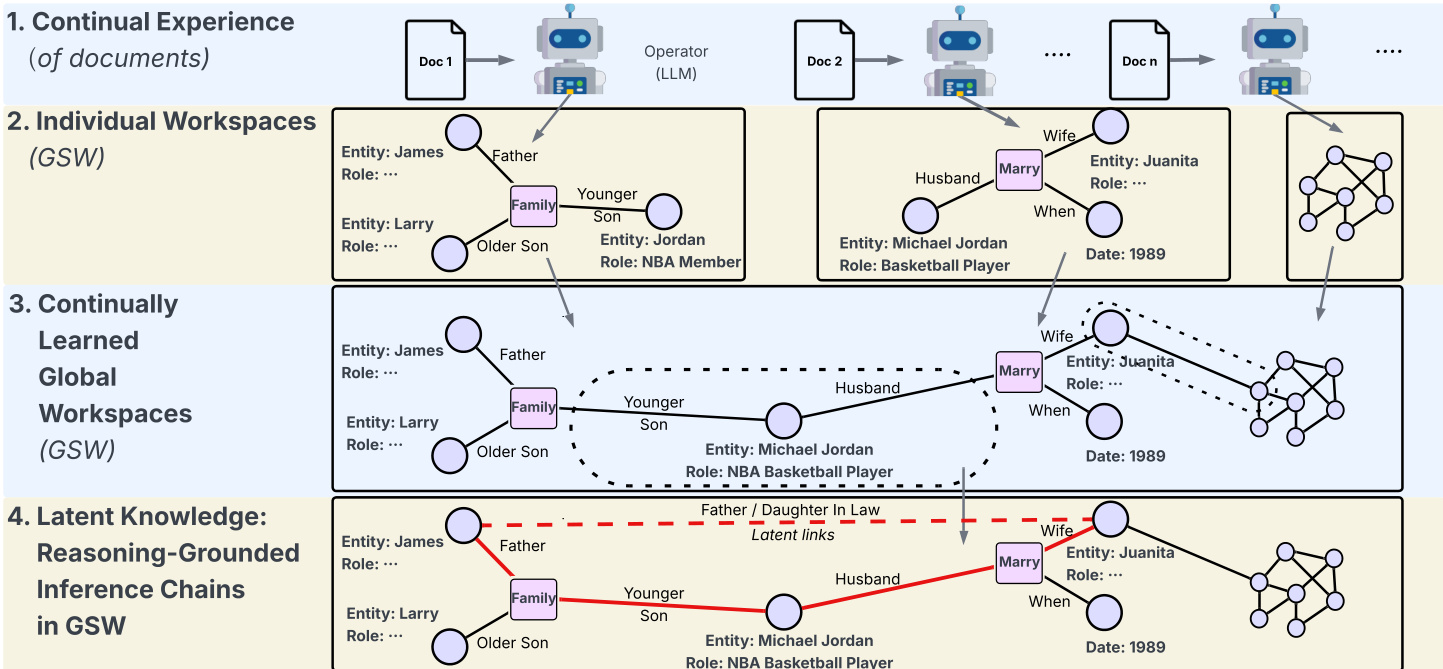

The authors leverage a structured, non-parametric memory framework called the Generative Semantic Workspace (GSW) to enable efficient, reasoning-grounded question answering. The system, PANINI, operates in three core stages: structured memory construction, reasoning inference chain retrieval (RICR), and answer generation. Each stage is designed to minimize parametric reliance during inference, instead grounding responses in explicitly retrieved, atomic QA evidence.

The framework begins with the construction of per-document GSWs. Each document $d_i$ is processed by an LLM to generate a semantic graph $G_i = (E_i, V_i, Q_i)$, where $E_i$ represents entity nodes annotated with roles and states, $V_i$ represents verb-phrase or event nodes, and $Q_i$ consists of question-answer pairs that form directed edges from verb phrases to entities. For example, the statement “Barack Obama was born on August 4, 1961” yields an entity node for Obama, a verb-phrase node “was born,” and QA pairs such as “When was Barack Obama born?” → “August 4, 1961.” This structure explicitly encodes event-attribute relationships in atomic, retrievable units. Refer to the framework diagram for a visual representation of how documents are transformed into individual GSWs and then reconciled into a continually learned global workspace.
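As an illustration of the $G_i = (E_i, V_i, Q_i)$ structure, here is a minimal sketch that stores the Obama example as one entity node, one verb-phrase node, and one QA edge. The class and field names are hypothetical, and the real GSW schema (for instance, how attribute values such as dates are represented) may differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

@dataclass
class Entity:
    name: str
    roles: List[str] = field(default_factory=list)   # e.g. "person"
    states: List[str] = field(default_factory=list)  # e.g. time-bound conditions

@dataclass(frozen=True)
class QAPair:
    verb_phrase: str  # event node the edge starts from
    entity: str       # entity node the edge points to
    question: str
    answer: str

@dataclass
class GSW:
    """One document's semantic graph G_i = (E_i, V_i, Q_i)."""
    entities: Dict[str, Entity] = field(default_factory=dict)  # E_i
    verb_phrases: Set[str] = field(default_factory=set)        # V_i
    qa_pairs: List[QAPair] = field(default_factory=list)       # Q_i

    def add_entity(self, entity: Entity) -> None:
        self.entities[entity.name] = entity

    def add_qa(self, qa: QAPair) -> None:
        # Every QA edge must land on a known entity node
        if qa.entity not in self.entities:
            raise KeyError(f"unknown entity: {qa.entity}")
        self.verb_phrases.add(qa.verb_phrase)
        self.qa_pairs.append(qa)

    def edges(self) -> List[Tuple[str, str]]:
        """Directed (verb phrase -> entity) edges, one per QA pair."""
        return [(qa.verb_phrase, qa.entity) for qa in self.qa_pairs]

# The example from the text: "Barack Obama was born on August 4, 1961."
gsw = GSW()
gsw.add_entity(Entity("Barack Obama", roles=["person"]))
gsw.add_qa(QAPair("was born", "Barack Obama",
                  "When was Barack Obama born?", "August 4, 1961"))
```

Keeping each QA pair as a separate, immutable record is what makes the units individually indexable and retrievable later.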

To enable efficient retrieval, the authors implement a dual indexing strategy over the corpus. A sparse BM25 index is built over entity nodes, incorporating both surface forms and their associated roles or states. A dense vector index is constructed over all QA pairs extracted from all GSWs. During retrieval, a query is simultaneously scored against both indices: the entity index provides entry points into local semantic graphs, while the QA index enables semantic matching to candidate facts. This dual approach ensures that evidence is composed solely of compact, targeted QA pairs rather than long passages or graph neighborhoods.
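To make the dual-index idea concrete, the sketch below pairs a from-scratch Okapi BM25 over entity descriptions (name plus roles/states) with a dense index over QA pairs, and returns the union of both candidate sets for later reranking. The hashed bag-of-words `embed` function is only a stand-in for a neural text encoder, and the union-then-rerank merge rule, the helper names, and the parameter values (`k1=1.5`, `b=0.75`, `k=2`) are assumptions rather than the paper's configuration.

```python
import math
import zlib
from collections import Counter
from typing import List, Tuple

def tokenize(text: str) -> List[str]:
    return [t.strip("?.,!").lower() for t in text.split() if t.strip("?.,!")]

class BM25:
    """Plain Okapi BM25 over short entity descriptions."""
    def __init__(self, docs: List[str], k1: float = 1.5, b: float = 0.75):
        self.docs = [tokenize(d) for d in docs]
        self.k1, self.b = k1, b
        self.avgdl = sum(len(d) for d in self.docs) / len(self.docs)
        self.df = Counter(t for d in self.docs for t in set(d))

    def score(self, query: str, i: int) -> float:
        n, tf, dl = len(self.docs), Counter(self.docs[i]), len(self.docs[i])
        total = 0.0
        for t in tokenize(query):
            if t in tf:
                idf = math.log(1 + (n - self.df[t] + 0.5) / (self.df[t] + 0.5))
                norm = tf[t] + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
                total += idf * tf[t] * (self.k1 + 1) / norm
        return total

def embed(text: str, dim: int = 256) -> List[float]:
    # Stand-in for a neural text encoder: hashed bag of words
    vec = [0.0] * dim
    for t in tokenize(text):
        vec[zlib.crc32(t.encode()) % dim] += 1.0
    return vec

def cosine(u: List[float], v: List[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    nu, nv = math.sqrt(sum(a * a for a in u)), math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

QA = Tuple[str, str, str]  # (entity the pair attaches to, question, answer)

def dual_retrieve(query: str, entities: List[Tuple[str, str]],
                  qa_pairs: List[QA], k: int = 2) -> List[QA]:
    # Sparse: BM25 over entity name + roles/states picks entry-point entities
    bm25 = BM25([f"{name} {desc}" for name, desc in entities])
    ranked = sorted(range(len(entities)), key=lambda i: bm25.score(query, i), reverse=True)
    entry = {entities[i][0] for i in ranked[:k] if bm25.score(query, i) > 0}
    from_entities = [j for j, qa in enumerate(qa_pairs) if qa[0] in entry]
    # Dense: similarity between the query and each QA pair's text
    q_vec = embed(query)
    dense = sorted(range(len(qa_pairs)),
                   key=lambda j: cosine(q_vec, embed(qa_pairs[j][1] + " " + qa_pairs[j][2])),
                   reverse=True)[:k]
    # Union of both candidate sets, order-preserving, ready for reranking
    return [qa_pairs[j] for j in dict.fromkeys(from_entities + dense)]

entities = [("Lothair II", "king"),
            ("Ermengarde of Tours", "mother of Lothair II"),
            ("Barack Obama", "politician")]
qa_pairs = [("Ermengarde of Tours", "When did Ermengarde of Tours die?", "851"),
            ("Lothair II", "Who was the mother of Lothair II?", "Ermengarde of Tours"),
            ("Barack Obama", "When was Barack Obama born?", "August 4, 1961")]
candidates = dual_retrieve("Who was the mother of Lothair II?", entities, qa_pairs)
```

Here both Lothair-related entities become BM25 entry points, so their attached QA pairs come first, followed by any extra dense matches; a cross-encoder would then rerank this short candidate list.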

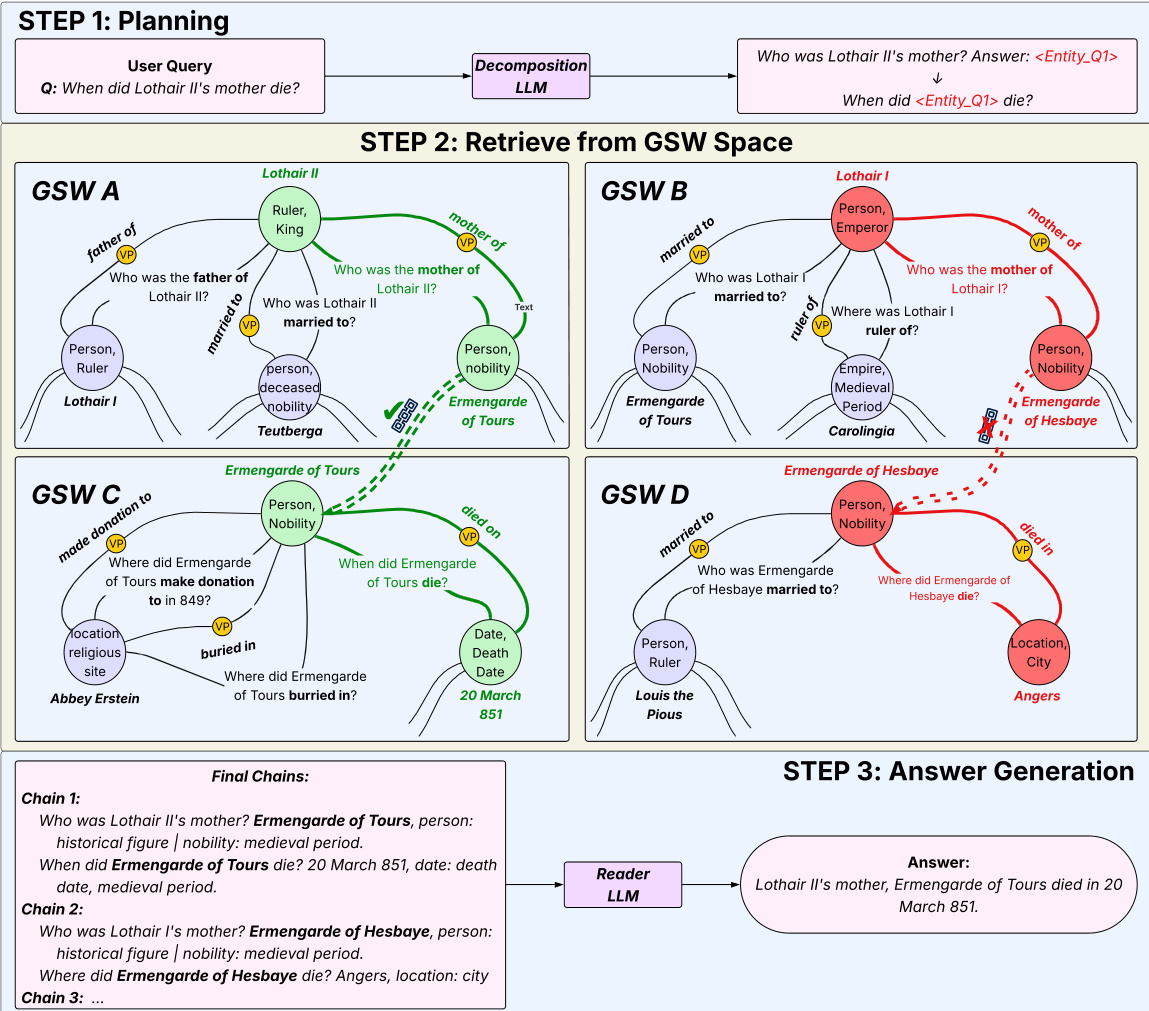

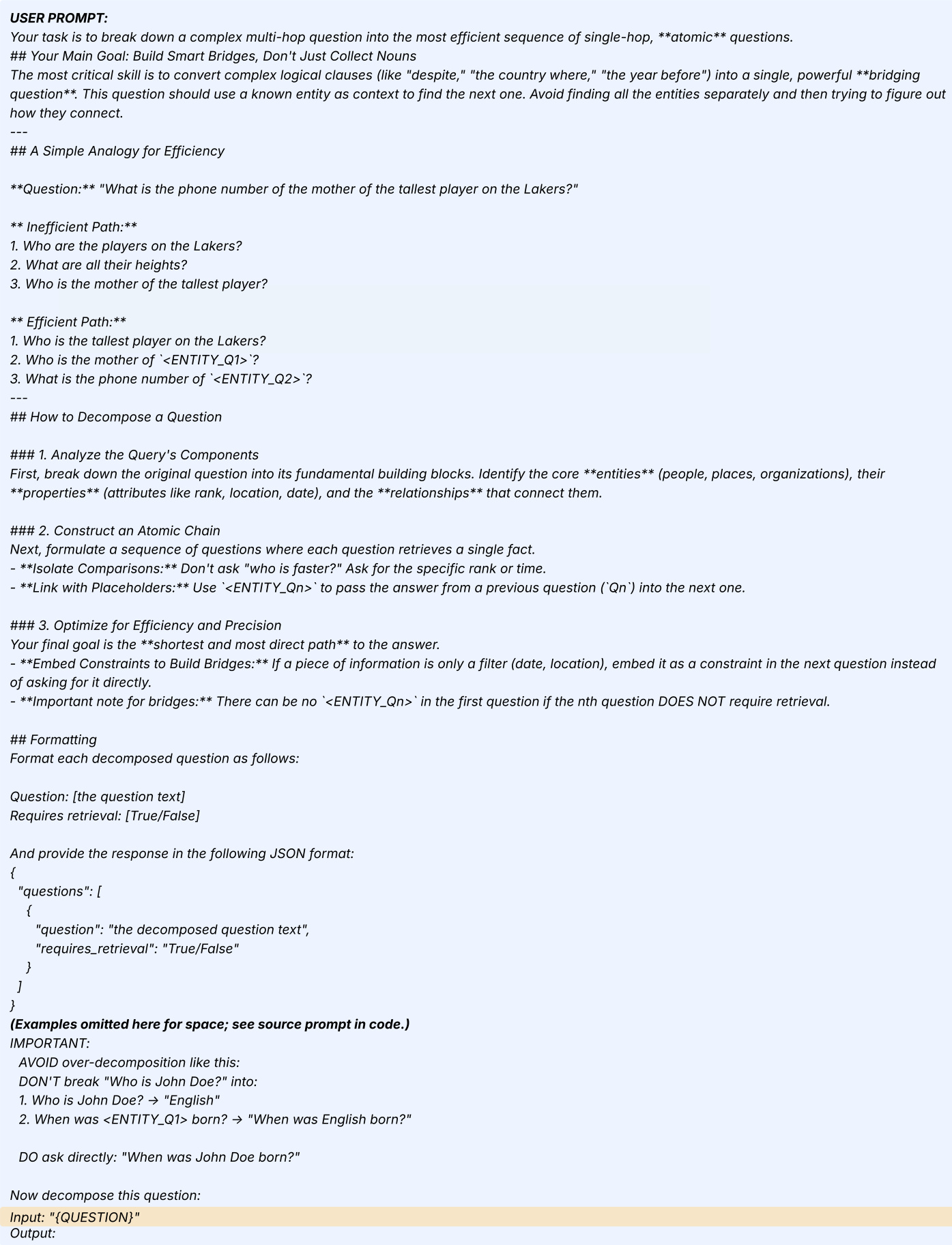

At inference time, PANINI processes a user query through a three-step pipeline. First, a decomposition LLM rewrites the query into a sequence of atomic, single-hop sub-questions. For instance, “When did Lothair II’s mother die?” becomes “Who was the mother of Lothair II?” followed by “When did <Entity_Q1> die?”. Decomposition runs once per query, and the retrieval that follows is non-parametric. As shown in the system overview, this planning step is followed by RICR, a beam-search procedure that navigates the GSWs to assemble evidence chains.
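The placeholder mechanism in the Lothair II example can be sketched as simple string substitution: once a hop is answered, its answer replaces the matching `<Entity_Qk>` token in later sub-questions. This assumes placeholders always follow the `<Entity_Qk>` pattern shown above; `instantiate` is a hypothetical helper, and the hop-1 answer is supplied by hand here rather than retrieved.

```python
import re
from typing import List

# Assumption: placeholders follow the <Entity_Qk> pattern from the example
PLACEHOLDER = re.compile(r"<Entity_Q(\d+)>")

def instantiate(sub_question: str, answers: List[str]) -> str:
    """Fill each <Entity_Qk> with the answer found for sub-question k (1-indexed)."""
    def fill(match):
        k = int(match.group(1))
        if not 1 <= k <= len(answers):
            raise ValueError(f"sub-question {k} has not been answered yet")
        return answers[k - 1]
    return PLACEHOLDER.sub(fill, sub_question)

plan = ["Who was the mother of Lothair II?", "When did <Entity_Q1> die?"]
print(instantiate(plan[1], ["Ermengarde of Tours"]))
# -> When did Ermengarde of Tours die?
```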

RICR operates hop by hop for each sub-question sequence. At each hop $t$, given a sub-question $q_t(x_t)$, the system retrieves candidate QA pairs using the dual index: BM25 over entities and dense retrieval over QA pairs. The candidates are reranked using a cross-encoder, and the top-$k$ pairs are retained. A chain $C$ is an ordered sequence of QA pairs $(q^G_1, a^G_1) \Rightarrow (q^G_2, a^G_2) \Rightarrow \cdots \Rightarrow (q^G_T, a^G_T)$, where the answer $a^G_t$ from one hop instantiates the next sub-question. To mitigate error propagation, RICR maintains $B$ parallel chains, scoring each by the geometric mean of its constituent relevance scores: $\mathrm{score}\big(C_t^{(j)}\big) = \left(\prod_{l=1}^{t} s_{l,j}\right)^{1/t}$. At each hop, chains are pruned to retain only the top $B$ with unique answers, ensuring diverse exploration of entity paths. After $T$ hops, the top chains are deduplicated, and their QA pairs are passed to the answer model.
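The following is a minimal sketch of RICR-style beam search under stated assumptions: the retriever is a caller-supplied function that already returns reranked `(QA pair, relevance)` tuples with scores in (0, 1], placeholders use the `<Entity_Qk>` pattern, and the names `ricr`, `Chain`, and `evidence` are hypothetical. It implements the geometric-mean chain score, top-B pruning with unique answers, and final deduplication described above; it is not the authors' code, and the toy scores are made up.

```python
import math
import re
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

QA = Tuple[str, str]                                 # (question, answer)
Retriever = Callable[[str], List[Tuple[QA, float]]]  # (QA pair, relevance) hits

@dataclass
class Chain:
    pairs: List[QA] = field(default_factory=list)
    scores: List[float] = field(default_factory=list)

    @property
    def score(self) -> float:
        # Geometric mean (prod_l s_l)^(1/t), computed in log space
        return math.exp(sum(math.log(max(s, 1e-12)) for s in self.scores) / len(self.scores))

    @property
    def answer(self) -> str:
        return self.pairs[-1][1]

def fill(sub_q: str, chain: Chain) -> str:
    # <Entity_Qk> is replaced by this chain's answer at hop k
    return re.sub(r"<Entity_Q(\d+)>", lambda m: chain.pairs[int(m.group(1)) - 1][1], sub_q)

def ricr(sub_questions: List[str], retrieve: Retriever,
         beam: int = 3, top_k: int = 5) -> List[Chain]:
    chains = [Chain()]
    for sub_q in sub_questions:
        expanded = []
        for chain in chains:
            hits = sorted(retrieve(fill(sub_q, chain)), key=lambda h: h[1], reverse=True)
            for qa, s in hits[:top_k]:
                expanded.append(Chain(chain.pairs + [qa], chain.scores + [s]))
        expanded.sort(key=lambda c: c.score, reverse=True)
        # Keep the top-B chains whose current answers are all distinct
        chains, seen = [], set()
        for c in expanded:
            if c.answer not in seen:
                seen.add(c.answer)
                chains.append(c)
            if len(chains) == beam:
                break
        if not chains:
            return []  # no supporting evidence for this hop
    return chains

def evidence(chains: List[Chain]) -> List[QA]:
    # Deduplicate QA pairs across surviving chains before answer generation
    return list(dict.fromkeys(qa for c in chains for qa in c.pairs))

# Toy reranked retriever; relevance scores are illustrative only
kb = {
    "Who was the mother of Lothair II?": [
        (("Who was the mother of Lothair II?", "Ermengarde of Tours"), 0.9),
        (("Who was the father of Lothair II?", "Lothair I"), 0.4)],
    "When did Ermengarde of Tours die?": [(("When did Ermengarde of Tours die?", "851"), 0.8)],
    "When did Lothair I die?": [(("When did Lothair I die?", "855"), 0.9)],
}
plan = ["Who was the mother of Lothair II?", "When did <Entity_Q1> die?"]
chains = ricr(plan, lambda q: kb.get(q, []), beam=2)
```

With `beam=2`, the weaker hop-1 branch survives to hop 2; ranking complete chains by geometric mean then puts the mother branch first, since $\sqrt{0.9 \cdot 0.8} \approx 0.85$ beats $\sqrt{0.4 \cdot 0.9} = 0.6$.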

The final stage generates a grounded response using an LLM conditioned on the retrieved evidence. The answer model synthesizes the final output from the selected QA pairs, ensuring that responses are directly supported by the retrieved facts. For multi-sequence queries, RICR executes independently for each sequence, and the resulting evidence is combined before answer generation.
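A sketch of how per-sequence evidence might be merged and handed to the reader follows. It assumes, for illustration, that a comparison question decomposes into two independent sequences, that evidence is a list of `(question, answer)` tuples, and that the reader is prompted to output "N/A" when the facts are insufficient (as in the Platinum setup). The prompt wording and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

QA = Tuple[str, str]  # (question, answer)

def combine_evidence(per_sequence: Sequence[Sequence[QA]]) -> List[QA]:
    """Merge QA evidence from independently run sequences, dropping duplicates."""
    merged: List[QA] = []
    seen = set()
    for evidence in per_sequence:
        for qa in evidence:
            if qa not in seen:
                seen.add(qa)
                merged.append(qa)
    return merged

def build_reader_prompt(question: str, evidence: Sequence[QA]) -> str:
    # Compact context: one line per atomic QA fact instead of full passages
    facts = "\n".join(f"Q: {q} A: {a}" for q, a in evidence)
    return (
        "Answer using only the facts below. "
        "If they are insufficient, answer N/A.\n"
        f"{facts}\nQuestion: {question}\nAnswer:"
    )

seq_a = [("When did Lothair I die?", "855")]
seq_b = [("When did Ermengarde of Tours die?", "851"), ("When did Lothair I die?", "855")]
prompt = build_reader_prompt("Who died first, Lothair I or Ermengarde of Tours?",
                             combine_evidence([seq_a, seq_b]))
```

The context the reader sees grows with the number of distinct facts, not with passage length, which is the source of the token savings reported later.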

To improve GSW quality, especially when using smaller models, the authors introduce a two-pass refinement strategy. After an initial GSW is generated, a second LLM pass inspects and repairs structural deficiencies—adding missing entities, correcting malformed QA pairs, or ensuring bidirectional question coverage. The refinement prompt explicitly instructs the model to preserve correct elements, add missing entities, and enforce QA inversion rules. This lightweight refinement significantly improves downstream performance without requiring larger models for construction.
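The refinement itself is an LLM pass, but the kinds of deficiency it targets can be illustrated with a small rule-based checker. The sketch below is a hypothetical stand-in, not the paper's prompt: it flags malformed QA pairs, QA pairs whose subject is missing from the entity list, and entity-valued answers that lack an inverse question on the same verb phrase (one possible reading of the QA inversion rule).

```python
from dataclasses import dataclass
from typing import List, Set

@dataclass(frozen=True)
class QA:
    verb_phrase: str
    subject: str   # entity the question asks about
    question: str
    answer: str

def find_deficiencies(entities: Set[str], qa_pairs: List[QA]) -> List[str]:
    """Flag the kinds of problems a refinement pass would repair."""
    issues = []
    present = {(qa.verb_phrase, qa.subject, qa.answer) for qa in qa_pairs}
    for qa in qa_pairs:
        if not qa.question.strip().endswith("?") or not qa.answer.strip():
            issues.append(f"malformed QA: {qa.question!r}")
        if qa.subject not in entities:
            issues.append(f"missing entity: {qa.subject}")
        # Entity-valued answers need an inverse question pointing back
        if qa.answer in entities and (qa.verb_phrase, qa.answer, qa.subject) not in present:
            issues.append(f"missing inverse: {qa.question!r}")
    return issues

entities = {"Lothair II", "Ermengarde of Tours"}
qas = [QA("mother of", "Lothair II", "Who was the mother of Lothair II?", "Ermengarde of Tours"),
       QA("died", "Ermengarde of Tours", "When did Ermengarde of Tours die?", "851"),
       QA("was born", "Barack Obama", "When was Barack Obama born", "August 4, 1961")]
issues = find_deficiencies(entities, qas)
```

Adding the inverse pair ("Whose mother was Ermengarde of Tours?" → "Lothair II") clears the inversion issue, mirroring the "add missing entities, enforce QA inversion" repairs described above.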

The entire pipeline is designed to be modular and adaptable. GSW construction, question decomposition, and answer generation can each be implemented with different LLMs, while the retrieval and scoring components are non-parametric, enabling robust performance across model sizes and configurations. The system’s strength lies in constructing inference chains dynamically at read time rather than precomputing dense cross-document graphs, which avoids spurious connections and lets each reasoning step be scored against its own sub-question.
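The modularity claim can be expressed as a set of narrow interfaces, so that each stage can be backed by a different model. The sketch below uses `typing.Protocol` for this; the `Pipeline`, `Decomposer`, and `Reader` names are hypothetical, and the stub components only demonstrate the wiring, not real model behavior.

```python
from typing import Callable, List, Protocol, Tuple

QA = Tuple[str, str]  # (question, answer)

class Decomposer(Protocol):
    def decompose(self, question: str) -> List[str]: ...

class Reader(Protocol):
    def answer(self, question: str, evidence: List[QA]) -> str: ...

class Pipeline:
    """Wires interchangeable components; each one can be swapped independently."""
    def __init__(self, decomposer: Decomposer,
                 retrieve: Callable[[List[str]], List[QA]], reader: Reader):
        self.decomposer, self.retrieve, self.reader = decomposer, retrieve, reader

    def __call__(self, question: str) -> str:
        sub_questions = self.decomposer.decompose(question)  # e.g. a LoRA-tuned model
        evidence = self.retrieve(sub_questions)               # retrieval over the GSW
        return self.reader.answer(question, evidence)         # e.g. a local reader LLM

# Stubs standing in for real models, to show the wiring only
class EchoDecomposer:
    def decompose(self, question: str) -> List[str]:
        return [question]

class FirstFactReader:
    def answer(self, question: str, evidence: List[QA]) -> str:
        return evidence[0][1] if evidence else "N/A"

facts = {"When was Barack Obama born?": ("When was Barack Obama born?", "August 4, 1961")}
pipe = Pipeline(EchoDecomposer(),
                lambda subs: [facts[s] for s in subs if s in facts],
                FirstFactReader())
```

Because `Protocol` uses structural typing, any object with a matching `decompose` or `answer` method fits, whether it wraps a hosted API model or a locally served open-source one.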

Experiment

- PANINI outperforms chunk-based, structure-augmented, and agentic retrieval baselines on multi-hop QA tasks, achieving top average performance while using significantly fewer inference tokens.

- It breaks the typical trade-off between answering accuracy and refusal reliability under missing evidence, maintaining high accuracy on answerable questions while effectively abstaining on unanswerable ones.

- PANINI’s gains persist with fully open-source models, showing robustness to noisy or imperfect GSW construction and preserving advantages even when all components run locally on a single GPU.

- Ablations confirm that question decomposition, dual-index retrieval, QA reranking, and cumulative chain scoring are critical to its performance, especially for multi-hop reasoning.

- PANINI’s structured GSW memory improves not only its own retrieval but also boosts agentic systems like Search-R1 when substituted for their default chunk retrieval.

- It demonstrates superior resilience to corpus growth, maintaining stable performance as distractor documents increase, unlike embedding- or BM25-based methods.

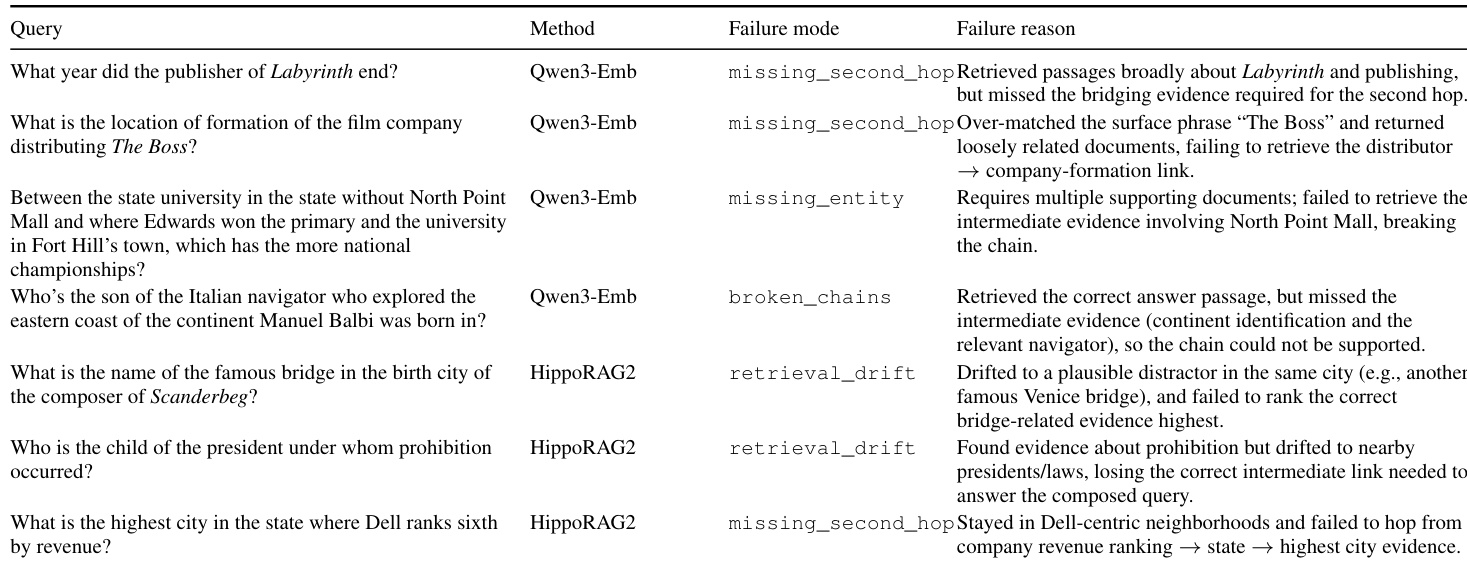

- Qualitative analysis reveals PANINI avoids common baseline failures—such as retrieval drift or broken chains—by enforcing explicit, context-aware, hop-conditioned evidence retrieval.

- Key failure modes stem from imperfect GSW construction (missing verb phrases or QA links) or flawed decomposition, but performance degrades gracefully, supported by beam search resilience.

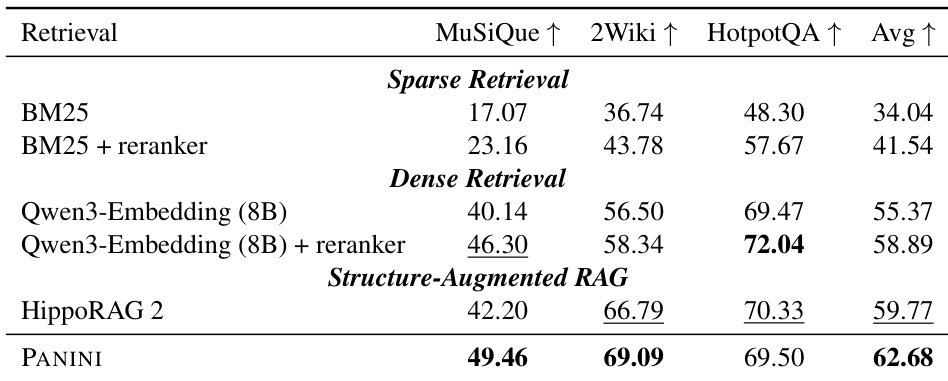

PANINI outperforms both sparse and dense retrieval baselines as well as structure-augmented methods like HippoRAG2 across multiple multi-hop QA benchmarks, achieving the highest average score. The gains are particularly evident on complex tasks such as MuSiQue and 2Wiki, where PANINI’s structured, chain-following retrieval design enables more accurate evidence assembly than traditional chunk-based or graph-based approaches. Results also show that PANINI maintains efficiency advantages while delivering stronger performance, indicating its framework effectively balances precision and computational cost.

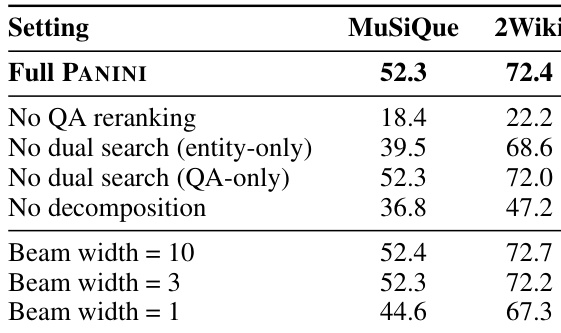

The authors use ablation studies to isolate the impact of key components in PANINI, showing that disabling QA reranking or question decomposition significantly reduces performance, while dual search and beam width adjustments have more nuanced effects. Results show that maintaining QA reranking and decomposition is critical for multi-hop accuracy, whereas reducing beam width to 3 preserves performance while cutting token usage. The framework remains robust across beam widths, with only minor degradation at width 1, indicating flexibility in computational trade-offs.

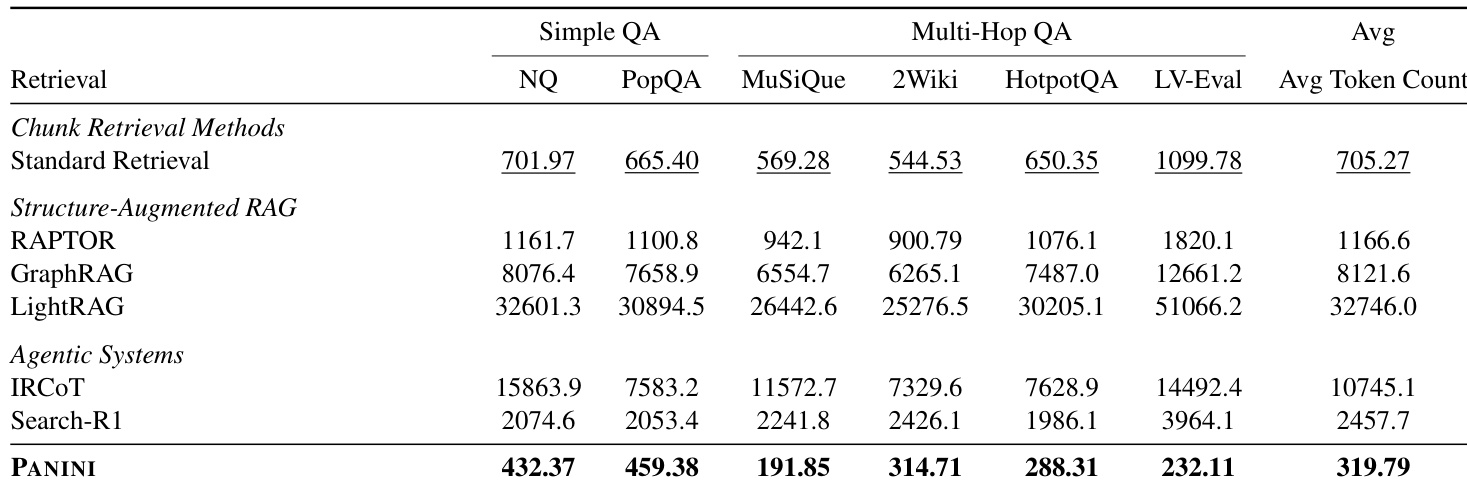

PANINI significantly reduces inference-time token usage compared to chunk-based, structure-augmented, and agentic retrieval methods, achieving the lowest average token count across all evaluated benchmarks. This efficiency stems from its design of conditioning the answer model on concise, targeted QA pairs rather than full document chunks or iterative retrieval traces. The results confirm that PANINI’s lightweight retrieval approach maintains strong performance while drastically cutting computational overhead during inference.

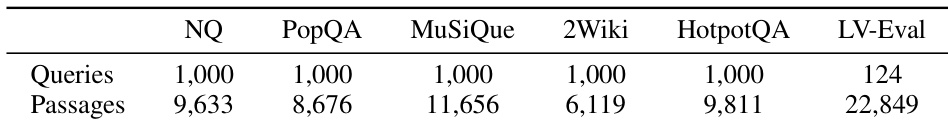

The authors use this table to define the scale of evaluation across six QA benchmarks, specifying the number of queries and passages per dataset. Results across these benchmarks show that PANINI consistently outperforms baselines in multi-hop reasoning while using significantly fewer inference tokens. The framework also maintains strong reliability under missing evidence, breaking the typical trade-off between answering correctly and refusing appropriately.

The authors use qualitative failure analysis to show that dense retrieval systems often miss bridging evidence or break reasoning chains, while graph-based methods like HippoRAG2 suffer from retrieval drift toward semantically related but incorrect entities. PANINI avoids these issues by enforcing explicit, hop-conditioned retrieval through structured QA pairs and dual indexing, which maintains chain integrity and reduces reliance on graph proximity. Results indicate that PANINI’s design improves multi-hop accuracy by aligning retrieval with evolving reasoning context rather than surface similarity or neighborhood structure.