Command Palette

Search for a command to run...

CollabVR : Raisonnement vidéo collaboratif avec des modèles de vision-langage et de génération vidéo

CollabVR : Raisonnement vidéo collaboratif avec des modèles de vision-langage et de génération vidéo

Joowon Kim Seungho Shin Joonhyung Park Eunho Yang

Résumé

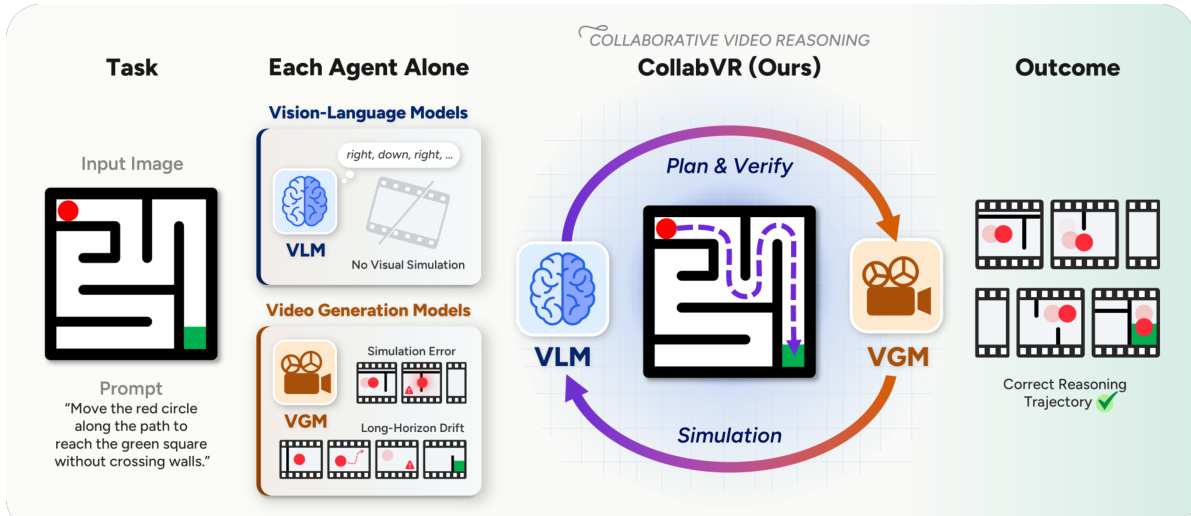

Les approches récentes de « Réflexion par la vidéo » (Thinking with Video) utilisent les modèles de génération vidéo (VGM) pour le raisonnement visuel en produisant des « chaînes d’images » (Chain-of-Frames) cohérentes dans le temps en tant qu’artefacts du raisonnement. Cependant, même les VGM les plus performants présentent deux modes d’échec récurrents sur les tâches orientées vers un objectif : une dérive à long terme sur les tâches multi-étapes et des erreurs de simulation au milieu de la séquence vidéo qui s’accumulent. Ces problèmes découlent tous deux de l’absence d’un raisonnement explicite fondé sur l’prior visuel à court horizon des VGM, un rôle naturellement dévolu aux modèles vision-langage (VLM), mais dont l’intégration précise n’est pas triviale : une planification préalable engage le système avant qu’aucune image ne soit générée, tandis que les critiques a posteriori sur l’ensemble de la vidéo interviennent trop tard.Nous proposons le Raisonnement Vidéo Collaboratif VLM-VGM (CollabVR), un cadre en boucle fermée qui couple le VLM au VGM avec une granularité au niveau de chaque étape : le VLM planifie l’action immédiate suivante, inspecte la séquence vidéo générée par le VGM, et intègre directement le diagnostic du vérificateur dans l’invite de prompt pour l’action suivante afin de réparer les défaillances détectées. Sur les jeux de données Gen-ViRe et VBVR-Bench, CollabVR améliore les performances des VGM open-source et closed-source par rapport aux méthodes d’inférence unique, à Pass@k, et aux solutions de référence basées sur l’augmentation du temps de test pour une quantité de calcul équivalente, avec les gains les plus significatifs sur les tâches les plus difficiles. Il apporte également des améliorations supplémentaires sur un VGM déjà affiné pour le raisonnement, indiquant que la supervision VLM au niveau des étapes est orthogonale et combinable avec l’affinage orienté raisonnement. Nous fournissons des exemples vidéo et des résultats qualitatifs supplémentaires sur la page de notre projet : https://joow0n-kim.github.io/collabvr-project-page.

One-sentence Summary

CollabVR couples vision-language and video generation models at step-level granularity within a closed-loop framework that plans immediate actions, inspects generated clips, and integrates diagnostic feedback to repair failures, thereby outperforming single-inference, Pass@k, and test-time scaling baselines on the Gen-ViRe and VBVR-Bench benchmarks while remaining fully stackable with reasoning-fine-tuned video generation models.

Key Contributions

- An adaptive planning module dynamically determines task step counts and generates only the immediate next action conditioned on previously generated frames, effectively mitigating long-horizon drift in multi-step video reasoning.

- A closed-loop collaborative mechanism employs a vision-language model to verify each generated clip and inject diagnostic feedback directly into the subsequent action prompt, isolating execution errors to individual segments for targeted repair.

- Evaluations on Gen-ViRe and VBVR-Bench demonstrate consistent improvements over single-inference, Pass@k, and VideoTPO baselines across open- and closed-source video generation models at matched compute, with orthogonal performance gains on reasoning-fine-tuned variants.

Introduction

The shift from static image-based reasoning to video generation has unlocked dynamic, temporally grounded AI applications like scientific visualization, educational demonstrations, and embodied navigation. Despite this progress, current Video Generation Models excel only at short-horizon visual simulation and lack the logical planning required for complex, multi-step tasks. This gap produces two recurring failure modes: overloaded prompts that collapse long sequences into inaccurate short rollouts, and localized mid-clip errors that propagate and corrupt entire trajectories. Existing test-time scaling methods struggle to fix these issues because valid reasoning paths are tightly constrained and often fall outside the generator's native distribution. The authors address these challenges by introducing CollabVR, a closed-loop framework that couples Vision-Language and Video Generation Models at a step-level granularity. The authors leverage a VLM as a progressive planner and verifier that inspects each generated clip, diagnoses failures in real time, and dynamically adjusts subsequent prompts to correct errors before they compound. This stepwise collaboration yields higher reasoning fidelity and interpretability across multiple benchmarks without requiring additional model training.

Method

The authors present CollabVR, a closed-loop framework for video reasoning that integrates a Vision-Language Model (VLM) with a Video Generation Model (VGM) at step-level granularity to address systematic failures in goal-directed video generation tasks. The overall architecture operates as a construction process, where the correct trajectory is assembled incrementally through alternating planning and generation steps, rather than being sampled from the VGM’s output distribution. The framework is composed of two core modules: VLM-Driven Progressive Planning and VLM-VGM Collaborative Reasoning, which collectively address long-horizon drift and mid-clip simulation errors, respectively.

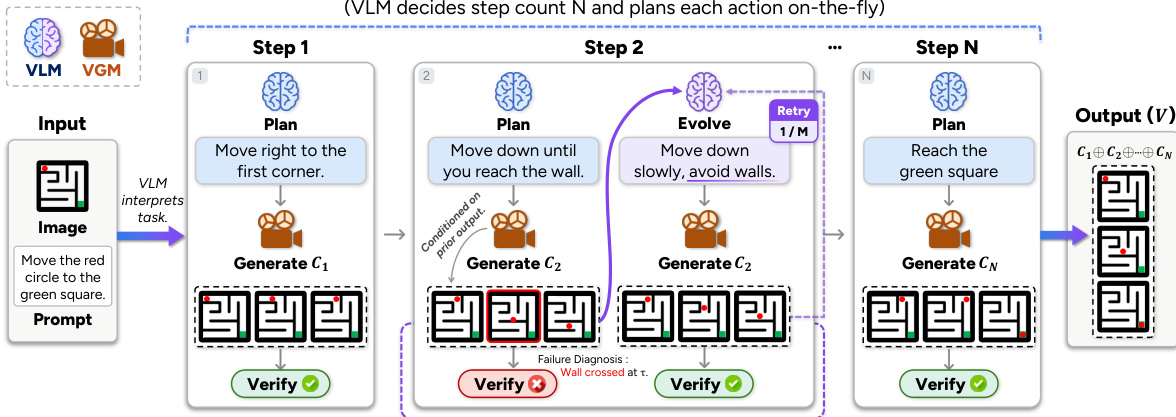

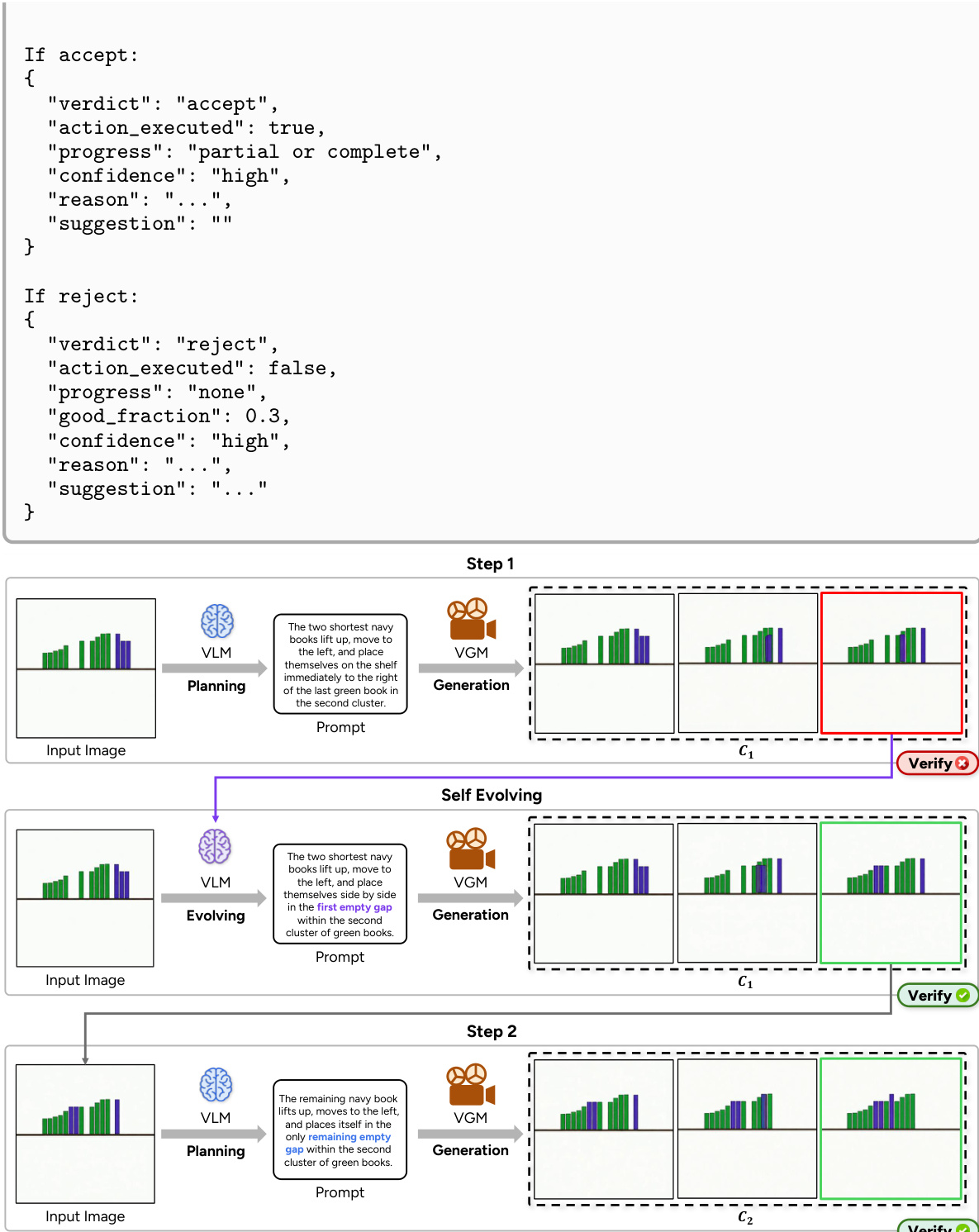

The process begins with an input image and a task prompt, which are used to initialize the reasoning loop. At each step, the VLM acts as a supervisor, first planning the immediate next action based on the current state and the task objective. This planning is performed incrementally, with the VLM determining only the next sub-action rather than committing to a full sequence upfront. The VGM then generates a short clip conditioned on the current frame and the planned action. The generated clip is subsequently verified by the VLM, which produces a structured judgment consisting of an accept/reject verdict and a diagnostic report detailing the failure mode and a repair suggestion. If the clip is accepted, it is appended to the history, and the process continues with the last frame as the new conditioning input. If rejected, the action prompt is evolved using the diagnostic suggestion, and the VGM is re-invoked to generate a new clip, up to a maximum number of retries per step.

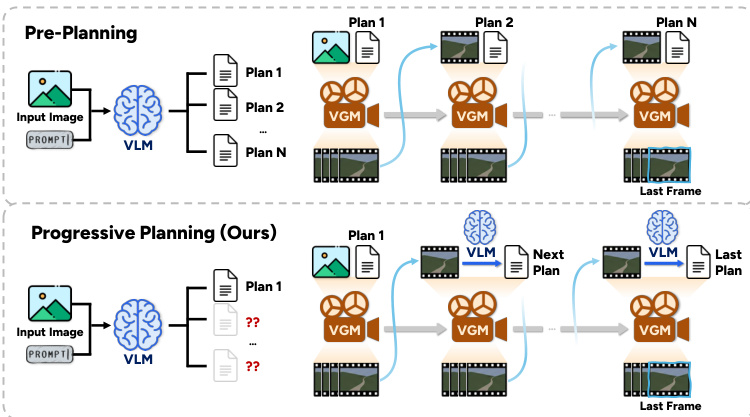

The VLM-Driven Progressive Planning module mitigates the issue of overloaded prompts and long-horizon drift by decoupling the planning phase from the generation phase. Instead of pre-decomposing the entire task into a sequence of actions at the outset, the VLM plans one action at a time, adapting its plan based on the actual output of the VGM. This adaptive planning allows the system to dynamically adjust the number of steps and subsequent actions in response to the realized generation, leading to a more efficient performance-cost trade-off compared to pre-planning approaches. The maximum number of planning steps is capped by a hyperparameter, ensuring termination.

The VLM-VGM Collaborative Reasoning module addresses execution failures by introducing a verification step after each clip generation. The VLM verifier analyzes the generated clip against the planned action, detecting specific failure modes such as incorrect direction, wrong target, or scene collapse. The diagnostic output, which includes a textual reason and an actionable suggestion, is then used to evolve the action prompt for the next generation attempt. This closed-loop feedback mechanism enables the system to repair detected failures directly, rather than relying on post-hoc critique or sampling multiple trajectories. The evolution of the prompt is designed to be efficient, reusing the verifier's output without requiring an additional VLM call.

The framework is designed to be agnostic to the specific VGM used, operating as a test-time scaling method that can be applied to any off-the-shelf generator. The overall process is formalized in Algorithm 1, which outlines the iterative loop of planning, generating, verifying, and evolving, with the final output being the concatenation of all accepted clips. The system can also incorporate auxiliary recovery strategies, such as partial re-generation in navigation tasks, where the VGM is re-invoked from the first failing frame to preserve previously correct progress, thereby making test-time compute more effective by focusing on the failed suffix rather than the entire trajectory.

Experiment

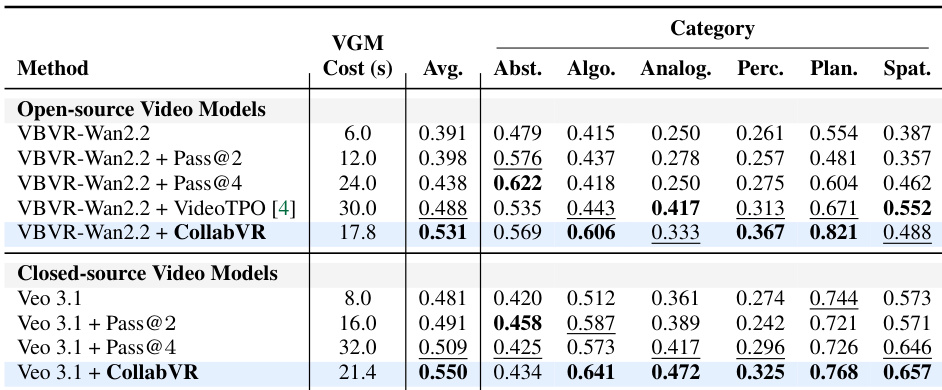

Evaluated across complementary video reasoning benchmarks and multiple generation models, the experiments demonstrate that CollabVR consistently improves task accuracy and human preference ratings while maintaining lower computational costs than standard sampling baselines. Ablation studies validate that progressive task decomposition and failure-aware verification operate as complementary mechanisms, with their relative contributions dynamically adapting to the complexity and structure of each reasoning category. Additional analyses confirm that the framework reliably aligns with human judgment in planning and verification, generalizes effectively across different model architectures, and ultimately highlights that test-time orchestration complements rather than replaces the need for stronger underlying video generation capabilities.

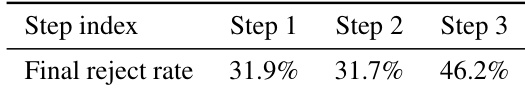

The authors analyze the verifier's performance across different steps in the CollabVR pipeline, showing that the final reject rate increases with each subsequent step, indicating a rise in failure detection as the task progresses. This trend suggests that cumulative errors or visual drift across steps make later stages more challenging for the verifier to accept outputs. The final reject rate rises significantly from Step 1 to Step 3, indicating increasing difficulty in accepting outputs as the task progresses. The verifier is exercised aggressively, with a notable proportion of steps triggering re-generation attempts. The increasing reject rate at deeper steps suggests that errors compound over time, making later stages more prone to failure.

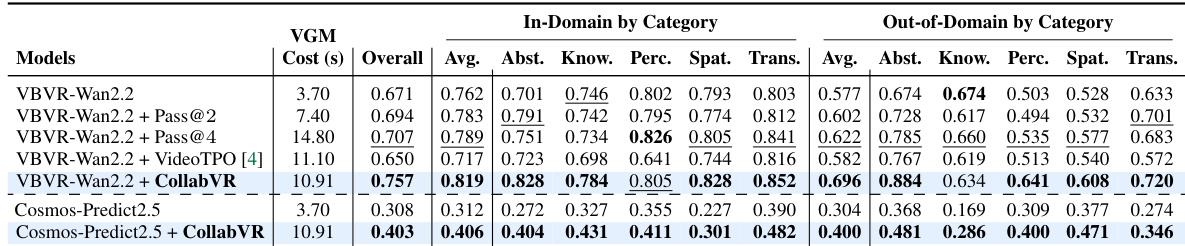

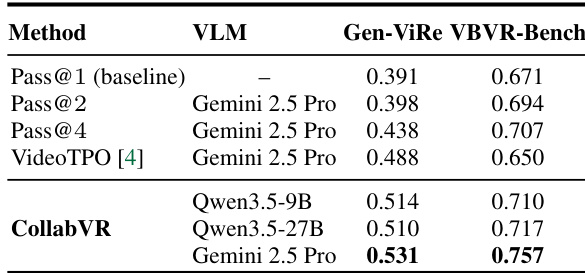

The authors evaluate CollabVR on two video reasoning benchmarks, showing that the framework consistently improves over baselines by combining progressive planning and failure-aware recovery. Results demonstrate that the effectiveness of each module varies by benchmark, with planning more impactful on multi-step tasks and verification more effective on single-step tasks, while the full pipeline achieves gains across all categories. The framework is shown to be robust across different video generation models and verifier choices, with performance scaling with the quality of the verifier. CollabVR improves over baselines by combining progressive planning and failure-aware recovery, with gains across all categories on both benchmarks. The dominant module shifts between planning and verification depending on the benchmark's task complexity, indicating adaptive behavior. The framework's effectiveness is sensitive to verifier quality, with better verifiers leading to more accurate outputs and recovery.

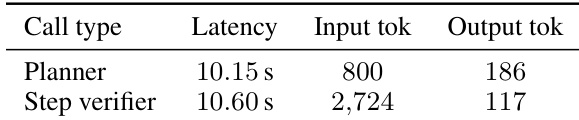

The authors analyze the computational cost of VLM calls in their framework, showing that both planner and step verifier calls have similar latency and input token usage, with the verifier requiring significantly more input tokens due to video content. The output tokens are minimal for both types of calls. This supports the claim that VLM compute is negligible compared to VGM compute. Planner and step verifier calls have comparable latency and output token usage. The step verifier requires substantially more input tokens than the planner due to video content. VLM compute is negligible relative to VGM compute, supporting the use of VGM generation time as a cost proxy.

The authors evaluate CollabVR on two video reasoning benchmarks, demonstrating consistent improvements over baseline methods across different video generation models. Results show that CollabVR achieves higher accuracy with lower generation cost compared to resampling-based approaches, and its effectiveness varies depending on the task complexity and the video generation model used. CollabVR outperforms baseline methods on both benchmarks, with gains most pronounced on tasks requiring multi-step reasoning. The framework achieves higher accuracy at lower per-sample generation cost compared to full-video resampling methods. Performance improvements are sensitive to the video generation model, with larger gains observed on models that benefit from progressive planning and verification.

The authors evaluate CollabVR on two video reasoning benchmarks, demonstrating consistent improvements over baseline methods across both open-source and closed-source video generation models. Results show that CollabVR achieves higher accuracy with lower generation costs, particularly on tasks requiring multi-step reasoning, and that the framework's effectiveness varies by task type and video generation model. The performance gains are attributed to adaptive planning and failure-aware recovery mechanisms, with the dominant module depending on the benchmark's task complexity profile. CollabVR achieves higher accuracy than baselines on both open-source and closed-source video models, with improvements most pronounced on complex reasoning tasks. The framework's effectiveness varies by task category, with different modules contributing more on different types of reasoning problems. CollabVR reduces generation cost while improving performance, indicating that adaptive planning and recovery are more efficient than full-video resampling.

Evaluated across two video reasoning benchmarks and diverse generation models, the experiments validate how progressive planning and failure-aware recovery collaboratively enhance video reasoning quality. Step-wise analysis demonstrates that rejection rates naturally accumulate as tasks progress due to compounding errors, while module comparisons reveal that planning drives performance on complex multi-step tasks and verification excels in simpler scenarios. Computational assessments further confirm that the vision-language model overhead remains negligible, ultimately proving that the adaptive framework achieves superior accuracy and efficiency compared to traditional resampling baselines.