Command Palette

Search for a command to run...

EVENT TENSOR : UNE ABSTRACTION UNIFIÉE POUR LA COMPILATION DE MÉGAKERNELS DYNAMIQUES

EVENT TENSOR : UNE ABSTRACTION UNIFIÉE POUR LA COMPILATION DE MÉGAKERNELS DYNAMIQUES

Résumé

Voici la traduction de votre texte en français, réalisée selon les standards de la rédaction scientifique et technologique :Les charges de travail modernes sur GPU, en particulier l'inférence des grands modèles de langage (LLM), souffrent de surcoûts liés au lancement de kernels (kernel launch overheads) et d'une synchronisation grossière qui limitent le parallélisme inter-kernel. Les techniques récentes de « megakernel » fusionnent plusieurs opérateurs en un seul kernel persistant afin d'éliminer les temps morts entre les lancements et d'exposer le parallélisme inter-kernel ; toutefois, elles peinent à gérer les formes dynamiques (dynamic shapes) et les calculs dépendants des données (data-dependent computation) rencontrés dans les charges de travail réelles. Nous présentons Event Tensor, une abstraction de compilateur unifiée pour les megakernels dynamiques. Event Tensor encode les dépendances entre des tâches pavées (tiled tasks) et permet une prise en charge native du dynamisme lié tant à la forme qu'aux données. S'appuyant sur cette abstraction, notre compilateur Event Tensor Compiler (ETC) applique des transformations d'ordonnancement statiques et dynamiques pour générer des kernels persistants de haute performance. Les évaluations démontrent qu'ETC atteint des performances de pointe (state-of-the-art) en termes de latence pour le service de LLM, tout en réduisant considérablement le surcoût lié à la phase de démarrage du système (system warmup overhead).

One-sentence Summary

The authors present Event Tensor, a unified compiler abstraction for dynamic megakernels that encodes dependencies between tiled tasks to enable shape and data-dependent dynamism, and the Event Tensor Compiler applies static and dynamic scheduling transformations to generate high-performance persistent kernels achieving state-of-the-art LLM serving latency while significantly reducing system warmup overhead.

Key Contributions

- Event Tensor is introduced as a unified compiler abstraction that encodes dependencies between tiled tasks to enable first-class support for shape and data-dependent dynamism. This abstraction compactly represents fine-grained dependencies across operator sub-tasks for GPU streaming multiprocessors.

- Built atop this abstraction, the Event Tensor Compiler applies static and dynamic scheduling transformations to generate high-performance persistent kernels. The pipeline fuses operator implementations into megakernels to unlock inter-kernel parallelism beyond single operator patterns.

- Evaluations demonstrate that the system achieves state-of-the-art large language model serving latency while significantly reducing system warmup overhead. These results validate the effectiveness of the proposed scheduling transformations in real workloads.

Introduction

Modern GPU workloads like Large Language Model inference suffer from kernel launch overheads and coarse synchronization that limit inter-kernel parallelism. While megakernel techniques fuse operators to address launch costs, they struggle with the dynamic shapes and data-dependent computation required by real workloads. To solve this, the authors present Event Tensor, a unified compiler abstraction that encodes task dependencies as first-class symbolic objects to support shape and data-dependent dynamism. Their Event Tensor Compiler uses this abstraction to generate high-performance persistent kernels that achieve state-of-the-art latency with significantly reduced warmup overhead.

Method

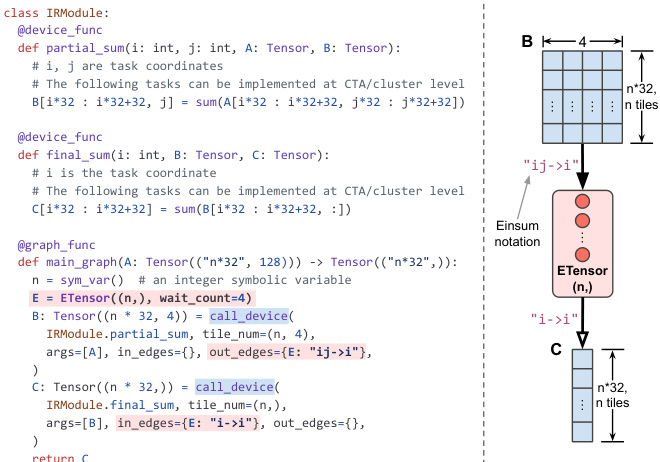

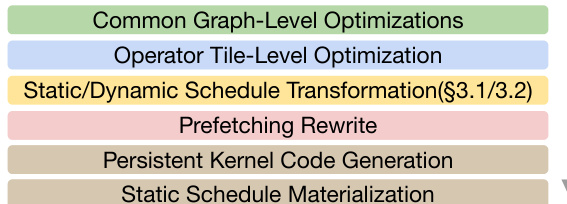

The Event Tensor Compilation (ETC) framework optimizes GPU workloads through a specialized programming model and advanced compilation strategies. The system is built on three primary language constructs: Device Functions, Event Tensors, and Graph Functions. Device functions define parallel task grids, while Event Tensors serve as multi-dimensional structures representing task completion events with operations like notify() and wait(). Graph functions orchestrate these components, explicitly managing dependencies.

Refer to the example below which demonstrates an IRModule where a summation task is partitioned, and dependencies are tracked using Event Tensors with specific coordinate mappings.

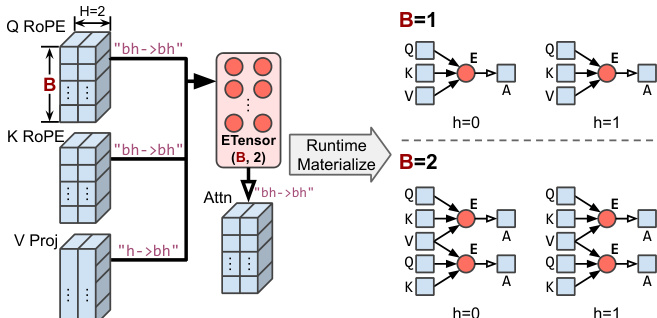

Handling Dynamism A key feature of ETC is its ability to handle both shape and data-dependent dynamism without recompilation. For shape dynamism, the system utilizes symbolic-shape Event Tensors that act as templates for different runtime shapes.

Refer to the diagram below which illustrates how a symbolic batch size B allows the dependency graph to adapt dynamically to different input sizes.

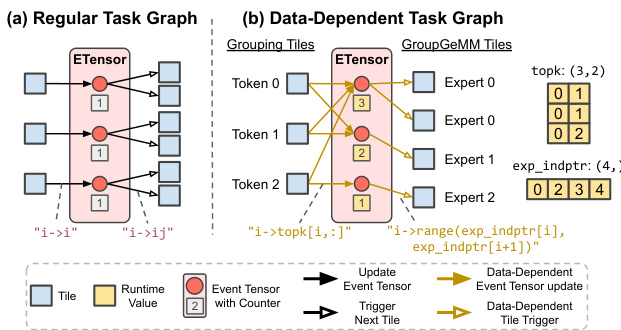

For data-dependent dynamism, such as in Mixture-of-Experts (MoE) layers, the system supports irregular task graphs where dependencies are determined at runtime.

Refer to the comparison below which contrasts a regular static task graph with a data-dependent task graph where runtime tensors define dynamic event updates and task triggers.

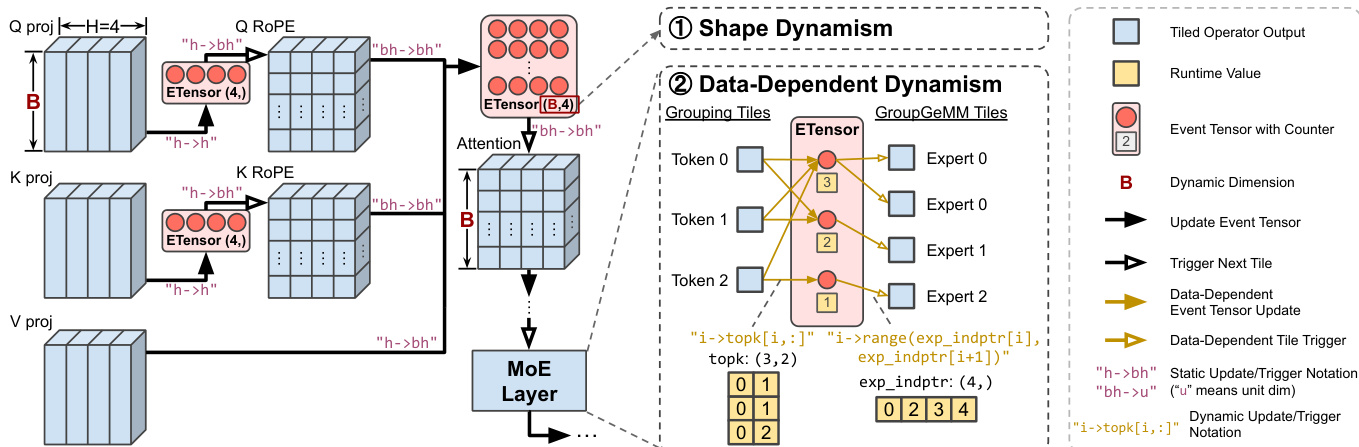

This capability is essential for complex workloads like Attention and MoE layers, where dynamic routing and variable shapes are common.

Refer to the high-level workflow below which depicts the flow from projections to MoE layers, highlighting the points where shape and data-dependent dynamism are managed.

Scheduling Strategies

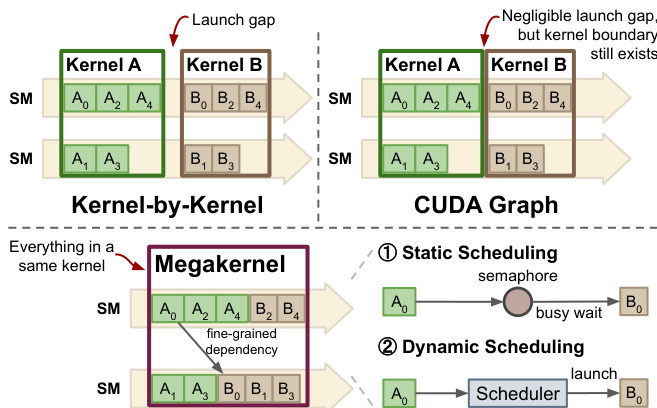

The compilation process transforms the computational graph into optimized megakernels using either static or dynamic scheduling. The figure below contrasts traditional kernel launches and CUDA Graphs with the proposed megakernel approach, which minimizes launch overhead and manages dependencies within the kernel.

In static scheduling, multiple device functions are fused into a single persistent kernel with tasks pre-assigned to specific SMs.

Refer to the code transformation below which shows GEMM and Reduce-Scatter functions being fused, with explicit notify and wait calls inserted to enforce execution order.

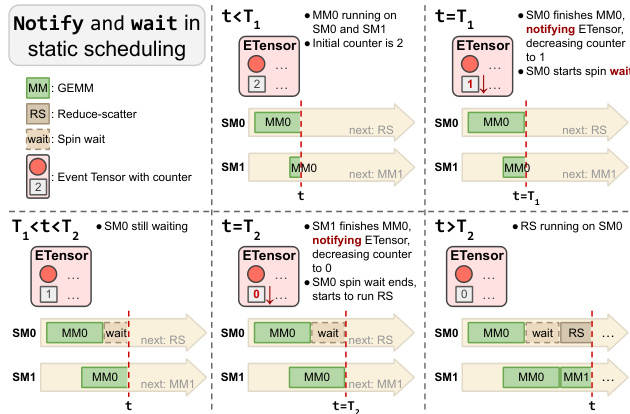

The mechanism relies on low-level synchronization where tasks wait for event counters to reach zero before proceeding.

Refer to the diagram below which visualizes the notify-and-wait mechanism, showing how an SM waits for dependent tasks on other SMs to complete.

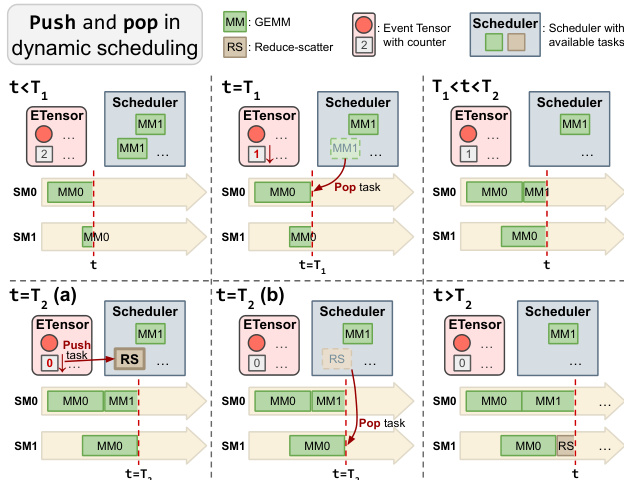

For unpredictable workloads, dynamic scheduling is employed, utilizing an on-GPU task scheduler where SMs atomically pop ready tasks.

Refer to the code transformation below which illustrates the push-pop mechanism where tasks are pushed to the scheduler upon dependency resolution.

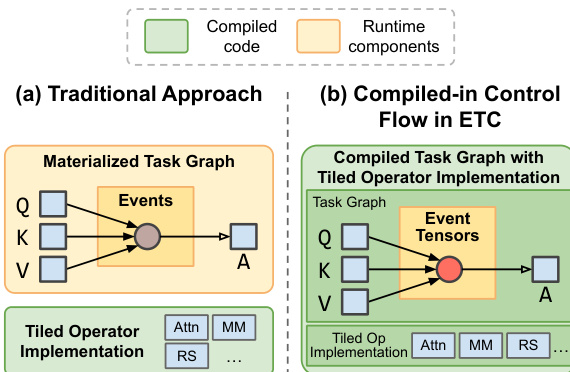

Runtime Architecture Finally, ETC minimizes runtime overhead by compiling scheduling logic directly into the megakernels, avoiding the need to materialize the entire task graph in memory.

Refer to the comparison below which contrasts the traditional runtime executor with ETC's approach of embedding scheduling logic into the compiled kernel.

Experiment

Conducted on NVIDIA B200 GPUs, the evaluation benchmarks ETC against leading systems to assess how the Event Tensor abstraction manages fine-grained dependencies in both static and dynamic task graphs. Experiments demonstrate that fused megakernels lower end-to-end latency in low-batch serving and Mixture-of-Experts layers through improved pipelining and load balancing. Additionally, the framework eliminates runtime warmup overhead via ahead-of-time compilation and reveals distinct performance trade-offs between static and dynamic scheduling strategies for different workload types.

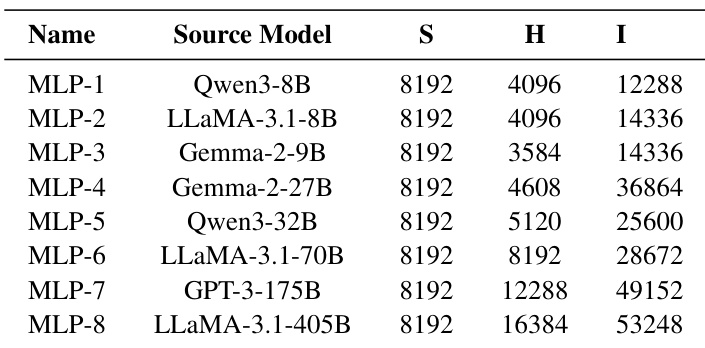

The the the table presents MLP configurations derived from a diverse set of modern large language models to evaluate fused communication and computation performance. While the sequence length remains constant across all entries, the hidden dimensions and intermediate sizes scale significantly to represent models ranging from smaller to massive architectures. This variety allows for assessing the system's effectiveness across different computational complexities and model scales. Configurations span a wide spectrum of model sizes, from smaller to massive architectures. A consistent sequence length is maintained across all tested model configurations. Hidden dimensions and intermediate sizes increase progressively as the model scale grows.

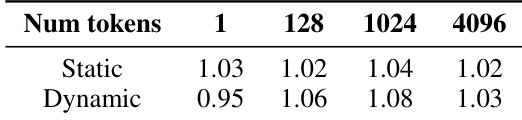

The authors analyze the trade-offs between static and dynamic scheduling strategies on a data-dependent Mixture-of-Experts (MoE) workload. The results demonstrate that dynamic scheduling generally yields higher relative performance for larger workloads due to better load balancing, while static scheduling is slightly more efficient for the smallest workload size. Dynamic scheduling provides superior relative performance compared to static scheduling for all token counts except the smallest. Static scheduling maintains a consistent performance advantage over dynamic scheduling when processing a single token. The dynamic scheduler achieves its maximum relative gain over the baseline at intermediate token counts before converging with the static method at higher counts.

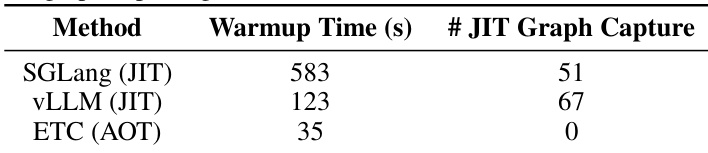

The authors evaluate the warmup overhead of ETC against JIT-based systems like SGLang and vLLM to assess deployment impact. Results demonstrate that ETC's ahead-of-time compilation strategy eliminates the need for runtime graph capture, leading to a substantial reduction in initialization time compared to baselines that require capturing multiple static graphs. ETC achieves significantly faster warmup performance compared to both SGLang and vLLM. The proposed method requires zero runtime JIT graph captures, whereas baselines require numerous captures. Baseline systems incur high warmup costs due to the necessity of capturing static graphs to handle shape variations.

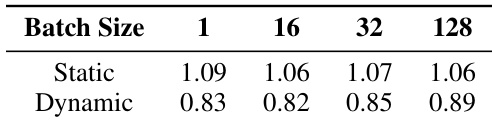

The authors evaluate the performance trade-offs between static and dynamic scheduling strategies on a regular, dense transformer workload with tensor parallelism. The results show that static scheduling consistently delivers higher relative performance than dynamic scheduling across all batch sizes, suggesting that the overhead of dynamic task management negatively impacts efficiency for regular workloads. Static scheduling consistently outperforms dynamic scheduling across all batch sizes. Dynamic scheduling shows reduced relative performance compared to the static approach. The performance advantage of static scheduling remains stable as batch size increases.

The study evaluates system effectiveness across diverse model scales and scheduling strategies to assess fused communication and computation performance. Results indicate that dynamic scheduling benefits data-dependent Mixture-of-Experts workloads through improved load balancing, while static scheduling yields higher efficiency for regular dense transformer workloads. Additionally, the proposed ETC strategy significantly reduces initialization overhead by avoiding the runtime graph captures required by baseline JIT systems.