PersonaVLM: Long-Term Personalized Multimodal LLMs

Chang Nie, Chaoyou Fu, Yifan Zhang, Haihua Yang, Caifeng Shan

Abstract

Multimodal large language models (MLLMs) serve as daily assistants for millions of people. However, their ability to generate responses aligned with individual preferences remains limited. Prior approaches support only static, single-turn personalization through input augmentation or output alignment, and therefore fail to capture how users' preferences and personalities evolve over time (see Fig. 1). In this paper, we present PersonaVLM, a novel personalized multimodal agent framework designed for long-term personalization. It transforms a general-purpose MLLM into a personalized assistant by integrating three key capabilities: (a) Remembering: it proactively extracts and summarizes chronological multimodal memories from interactions and consolidates them into a personalized database. (b) Reasoning: it performs multi-turn reasoning by retrieving and integrating relevant memories from the database. (c) Response Alignment: it infers the user's evolving personality throughout long-term interactions so that outputs remain aligned with the user's unique characteristics. For evaluation, we establish Persona-MME, a comprehensive benchmark of more than 2,000 curated interaction cases, designed to assess long-term MLLM personalization across seven key aspects and 14 fine-grained tasks. Extensive experiments validate the effectiveness of our method, which improves over the baseline by 22.4% (Persona-MME) and 9.8% (PERSONAMEM) under a 128k-token context, while surpassing GPT-4o by 5.2% and 2.0%, respectively. Project page: https://PersonaVLM.github.io.

One-sentence Summary

To address the limitations of static personalization in multimodal large language models, the authors propose PersonaVLM, a framework that enables long-term user alignment through proactive multimodal memory extraction, multi-turn reasoning, and evolving personality inference, significantly outperforming baselines on the newly established Persona-MME benchmark.

Key Contributions

- The paper introduces PersonaVLM, a unified agent framework that enables long-term, dynamic personalization for Multimodal Large Language Models through the integration of proactive memory extraction, multi-turn reasoning via memory retrieval, and evolving response alignment.

- This work presents Persona-MME, a comprehensive benchmark consisting of over 2,000 curated interaction cases designed to evaluate long-term personalization across seven key aspects and 14 fine-grained tasks.

- Experimental results demonstrate that PersonaVLM significantly improves personalization capabilities: under a 128k-token context, it improves over its baseline by 22.4% on Persona-MME and 9.8% on PERSONAMEM, and surpasses GPT-4o by 5.2% and 2.0%, respectively.

Introduction

As Multimodal Large Language Models (MLLMs) become daily assistants, there is a growing need for them to move beyond general problem solving toward personalized, long-term interactions. Current personalization strategies are largely limited to static, single-turn approaches through input augmentation or output alignment. These methods fail to capture evolving user preferences and cannot adapt to personality shifts that occur over extended periods of interaction.

The authors propose PersonaVLM, an agent framework for dynamic, long-term personalization. The framework integrates three core capabilities: proactive multimodal memory management, multi-turn reasoning through retrieval, and response alignment that adapts to a user's evolving personality. To support this, the authors introduce a specialized memory architecture and a comprehensive benchmark named Persona-MME to evaluate multi-faceted personalization performance.

Dataset

Dataset Overview

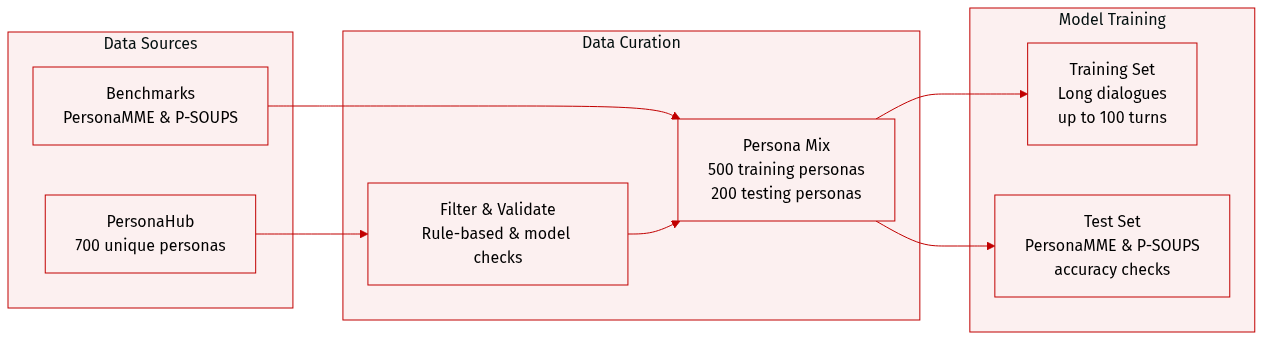

The authors utilize a combination of synthesized long-term multimodal dialogue data and established benchmarks to train and evaluate their model:

- Synthesized Multimodal Dialogue Dataset

  - Composition and Sources: The authors synthesized a large-scale dataset by sampling 700 unique personas from PersonaHub.

  - Subsets and Distribution: The data is split into 500 personas for training and 200 personas for testing. Training dialogues consist of 20 to 100 turns and simulate a timeframe of up to one month.

  - Processing and Validation: To ensure quality, accuracy, and safety, the authors implemented a two-stage filtering process involving rule-based checks and model-based validation. During synthesis, the model generates structured metadata, including timestamps and dialogue turn indices for episodic topics, which are used for data validation.

- Evaluation Benchmarks

  - PERSONAMEM: This benchmark evaluates timeline-aware conversational abilities through synthetic, multi-session data. The authors conduct evaluations using two context-length settings: 32k and 128k. The 128k setting is created by sampling half of the original 2,728 personas, resulting in 1,362 multiple-choice questions, while the 32k setting includes 589 questions.

  - P-SOUPS: This benchmark measures personalization across three dimensions: Expertise, Informativeness, and Style. It contains 1,800 total test cases (600 per dimension). Each case provides a user prompt, a profile, and a pair of responses (one chosen and one rejected), requiring the model to select the profile-aligned response. For few-shot experiments, the authors augment inputs with a single example of Pair-wise Comparative Feedback.
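The pairwise selection protocol described for P-SOUPS can be illustrated with a small scoring routine. This is a minimal sketch, not the authors' evaluation code: the `PairwiseCase` fields, the `pick_first` judge callback, and the alternation of response order (a common guard against position bias) are all assumptions introduced here for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class PairwiseCase:
    """One test case: a prompt, a user profile, and a chosen/rejected pair (hypothetical schema)."""
    dimension: str  # "Expertise", "Informativeness", or "Style"
    prompt: str
    profile: str
    chosen: str     # the profile-aligned response
    rejected: str   # the response that does not fit the profile


def pairwise_accuracy(
    cases: List[PairwiseCase],
    pick_first: Callable[[str, str, str, str], bool],
) -> Dict[str, float]:
    """Return per-dimension accuracy.

    pick_first(prompt, profile, a, b) stands in for the model under test and
    returns True when it prefers response `a` over response `b`.
    """
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for i, case in enumerate(cases):
        # Assumption: alternate which slot holds the chosen response.
        chosen_first = i % 2 == 0
        a, b = (case.chosen, case.rejected) if chosen_first else (case.rejected, case.chosen)
        if pick_first(case.prompt, case.profile, a, b) == chosen_first:
            correct[case.dimension] += 1
        total[case.dimension] += 1
    return {dim: correct[dim] / total[dim] for dim in total}
```

In practice `pick_first` would wrap a call to the model being evaluated; any callable with the same signature works for testing the scoring logic.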

Method

The PersonaVLM framework is designed to enable long-term personalization through a dual-stage process comprising a Response stage and an Update stage, both operating within a personalized memory architecture. The overall system architecture, as illustrated in the framework diagram, centers on a multimodal input that includes a user query, a profile, and conversation context. This input is processed by the PersonaVLM model, which interacts with a personalized memory system composed of four distinct memory types: Core Memory, Semantic Memory, Episodic Memory, and Procedural Memory. These memories are stored and managed to maintain a comprehensive, long-term user profile. The system's operation is structured around two collaborative phases: the Response stage, which generates context-aware, personalized responses, and the Update stage, which evolves the user's personality profile and proactively updates the memory database during idle periods.

The Response stage generates a personalized response $R_m$ at turn $m$ by performing multi-step reasoning and timeline-based retrieval. Its inputs are the user's current query $Q_m$ (a text instruction, an optional image, and a timestamp), the dialogue context $C_m$, and the state of the personalized memory database $M_{m-1}$.

The stage is implemented as a multi-step interaction between the PersonaVLM agent and its memory system. In the initial step, the model is prompted with the user's instruction, the context, and a consolidated profile, and it outputs a detailed reasoning process and an action result. If the model determines that retrieval is necessary, it generates retrieval conditions, consisting of a time period and keywords, which are used to search the memory database. The agent isolates memories within the inferred time period and performs a parallel search across the semantic, episodic, and procedural memory types. The top-$k$ results from each type are collected and fed back to the model to initiate the next reasoning step. This iterative process continues until the model outputs the final response.

This design enables precise retrieval because the model decides not only what to retrieve, but also whether retrieval is necessary and from which time period. It thereby addresses two limitations of direct semantic retrieval: retrieving unconditionally and neglecting temporal cues.
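The retrieve-then-reason loop described above can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: `MemoryEntry`, `Action`, `retrieve`, `respond`, and the `model_step` callback are hypothetical names, keyword overlap stands in for whatever similarity search the real system uses, and the three memory types are searched sequentially here rather than in parallel.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class MemoryEntry:
    kind: str            # "semantic", "episodic", or "procedural"
    timestamp: datetime
    text: str


@dataclass
class Action:
    """One model step: reasoning plus either a final answer or retrieval conditions."""
    reasoning: str
    answer: Optional[str] = None
    period: Optional[Tuple[datetime, datetime]] = None
    keywords: Optional[List[str]] = None


def keyword_score(entry: MemoryEntry, keywords: List[str]) -> int:
    # Assumption: simple keyword overlap as a placeholder relevance score.
    text = entry.text.lower()
    return sum(1 for kw in keywords if kw.lower() in text)


def retrieve(memory: List[MemoryEntry], period: Tuple[datetime, datetime],
             keywords: List[str], k: int = 2) -> Dict[str, List[MemoryEntry]]:
    """Filter by time window first, then take the top-k matches per memory type."""
    start, end = period
    in_window = [e for e in memory if start <= e.timestamp <= end]
    results: Dict[str, List[MemoryEntry]] = {}
    for kind in ("semantic", "episodic", "procedural"):
        scored = [(keyword_score(e, keywords), e) for e in in_window if e.kind == kind]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        results[kind] = [e for _, e in scored[:k]]
    return results


def respond(query: str, profile: str, memory: List[MemoryEntry],
            model_step: Callable[[str, str, List[str]], Action],
            max_steps: int = 4) -> str:
    """Iterate until the model emits an answer or the step budget runs out."""
    evidence: List[str] = []
    for _ in range(max_steps):
        action = model_step(query, profile, evidence)
        if action.answer is not None:
            return action.answer
        hits = retrieve(memory, action.period, action.keywords)
        for entries in hits.values():
            evidence.extend(e.text for e in entries)
    return "(no answer within step budget)"
```

Filtering by time window before scoring is the point of the design: an entry that matches the keywords but falls outside the inferred period never reaches the model.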

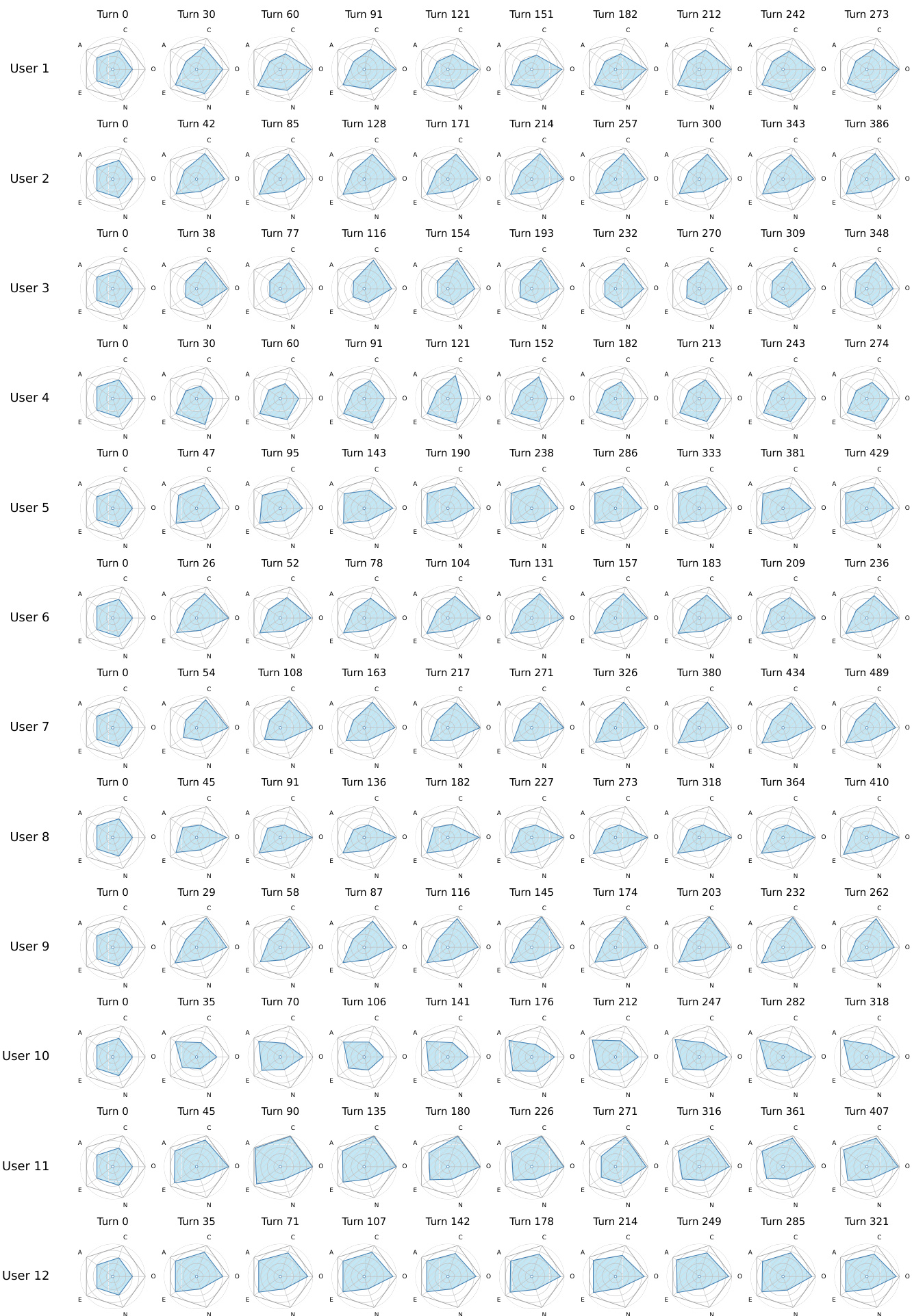

The Update stage, which executes automatically after a response is generated, evolves the user's personality profile and proactively updates the memories. The personality profile $P_m$ is updated with the proposed Personality Evolving Mechanism (PEM). The PEM maintains a long-term personality profile as a vector $\mathbf{p} \in \mathbb{R}^5$, corresponding to the Big Five dimensions. At each turn $m$, the PEM infers a temporary personality vector $\mathbf{p}'_m$ from the user's latest query and updates the long-term profile using an exponential moving average (EMA) with a dynamic smoothing factor $\lambda$. To ensure high adaptability in early conversations while promoting stability over time, a cosine decay schedule is employed for $\lambda$. The updated numerical vector $\mathbf{p}_m$ is then converted back into a descriptive textual summary, $P_m$, for use in the Response stage.

The memory types are updated selectively: semantic memory is updated after each turn by extracting and storing key information; core and procedural memory are updated at the end of each session through automated CRUD operations; and episodic memory is constructed by segmenting dialogues into topic-based entries, each containing a summary, keywords, and relevant dialogue turns.
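The EMA update with a cosine-decayed smoothing factor can be sketched numerically. The exact formula is not given here, so this sketch rests on assumptions: the smoothing factor is taken to be the weight on the newly inferred vector (so that decaying it makes the profile more stable over time), and `lam_max`, `lam_min`, and `total_turns` are hypothetical parameters with arbitrary values.

```python
import math
from typing import List, Sequence

# Big Five dimensions, in an arbitrary fixed order (assumption).
TRAITS = ("openness", "conscientiousness", "extraversion", "agreeableness", "neuroticism")


def smoothing_factor(turn: int, total_turns: int,
                     lam_max: float = 0.5, lam_min: float = 0.05) -> float:
    """Cosine decay from lam_max at turn 0 down to lam_min at total_turns."""
    progress = min(max(turn / total_turns, 0.0), 1.0)
    return lam_min + 0.5 * (lam_max - lam_min) * (1.0 + math.cos(math.pi * progress))


def update_profile(long_term: Sequence[float], observed: Sequence[float],
                   lam: float) -> List[float]:
    """EMA step: p_m = (1 - lam) * p_{m-1} + lam * p'_m, applied per trait."""
    return [(1.0 - lam) * p + lam * o for p, o in zip(long_term, observed)]


if __name__ == "__main__":
    profile = [0.5] * len(TRAITS)
    observed = [0.9, 0.4, 0.7, 0.5, 0.2]  # made-up per-turn inference
    for turn in range(3):
        lam = smoothing_factor(turn, total_turns=100)
        profile = update_profile(profile, observed, lam)
    print(dict(zip(TRAITS, (round(v, 3) for v in profile))))
```

Early turns use a large weight and move the profile quickly toward what was just observed; later turns use a small weight, so a single unusual query shifts the long-term profile only slightly.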

The training of PersonaVLM is conducted in two stages using the Qwen2.5-VL-7B model as the backbone.

The first stage is Supervised Fine-Tuning (SFT) on a curated synthetic dataset of 78k samples to equip the model with foundational memory management and multi-turn reasoning skills. The training data consists of examples for memory mechanisms, including personality inference and the four types of memory CRUD operations, as well as QA pairs with complete multi-step reasoning trajectories. After SFT, the model is capable of generating well-formed reasoning and retrieval actions.

The second stage is Reinforcement Learning (RL), which aims to further enhance the model's multi-turn reasoning capability. This stage employs Group Relative Policy Optimization (GRPO), an improved PPO algorithm, to train the policy model $\pi_\theta$. During generation, a strictly structured output format is enforced, requiring the model to first output its reasoning process within `<think>` tags, followed by either retrieval conditions in `<retrieve>` tags or the final response in `<answer>` tags. For each training sample, a group of multi-turn trajectories is sampled, and the reward is calculated based on accuracy, logical consistency, and format adherence. The advantage for each trajectory is computed by standardizing its reward within the sampled group.
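Two pieces of the RL stage lend themselves to a short illustration: checking that a step follows the required tag format, and standardizing rewards within a sampled group. The sketch below is not the authors' training code. The function names, the regular expression, the use of the population standard deviation, and the `eps` stabilizer are assumptions, and the way accuracy, logical consistency, and format adherence are combined into a scalar reward is not shown because the weighting is not specified.

```python
import re
import statistics
from typing import List, Sequence

# Loose check: one <think> block followed by a <retrieve> or an <answer> block.
STEP_PATTERN = re.compile(
    r"\s*<think>.+?</think>\s*(<retrieve>.+?</retrieve>|<answer>.+?</answer>)\s*",
    re.DOTALL,
)


def is_well_formed(step_output: str) -> bool:
    """Return True if the step output matches the think-then-act layout."""
    return STEP_PATTERN.fullmatch(step_output) is not None


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Standardize each trajectory's reward against its own sampled group.

    A_i = (r_i - mean(r)) / (std(r) + eps); eps avoids division by zero
    when every trajectory in the group receives the same reward.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    print(is_well_formed("<think>check dates</think><answer>It was in May.</answer>"))
    print(group_relative_advantages([1.0, 0.0, 1.0, 0.0]))
```

Because the advantage is relative to the group, a trajectory is rewarded for being better than its siblings sampled from the same prompt, and a group in which all trajectories score the same contributes no learning signal.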

Experiment

The evaluation utilizes the newly constructed Persona-MME benchmark and the existing PERSONAMEM dataset to assess multimodal large language models across dimensions such as memory, intent, and personality alignment. Experiments validate the PersonaVLM framework by testing its ability to recall long-term user information, align with evolving personality traits, and perform personalized open-ended generation. Findings demonstrate that PersonaVLM significantly outperforms strong open-source models and achieves competitive results against proprietary models like GPT-4o, particularly in complex tasks involving growth modeling and behavioral awareness. Qualitative analysis further confirms that the framework's multi-step reasoning and integrated memory components effectively prevent hallucinations and maintain consistent tonal alignment during long-term interactions.

Persona-MME dataset statistics. The table presents key statistics for the Persona-MME benchmark: the average dialogue length, the proportion of multimodal content, and question and answer lengths. The average dialogue spans over 140 turns, providing an extensive conversational history for evaluation. Approximately 15.87% of turns are multimodal, and 34.02% of questions are image-related, which reflects the benchmark's design for evaluating long-term personalization in multimodal interactions.

The figure visualizes the dynamic evolution of personality traits for multiple users across a series of conversation turns. Each user's personality profile is represented as a radar chart that changes over time, showing shifts in traits such as openness, conscientiousness, and extraversion as interactions progress. The charts show distinct patterns of trait evolution across different users.

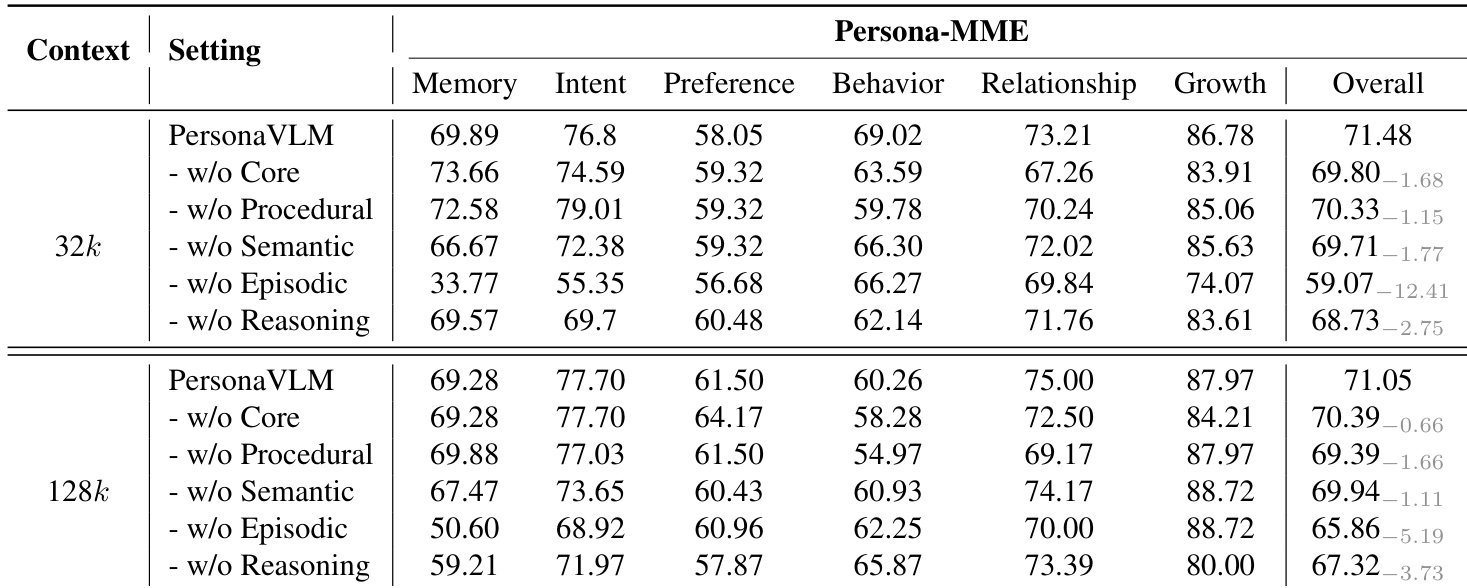

The table presents an ablation study on the Persona-MME benchmark, evaluating the impact of removing key components of the PersonaVLM framework. The full model achieves the highest performance across all context settings. Removing episodic memory causes the largest performance drop in both context settings, and the reasoning component contributes to improved performance, especially in the 128k context. Removing any memory type or the reasoning component degrades overall performance, indicating that each plays a role in long-term personalization.

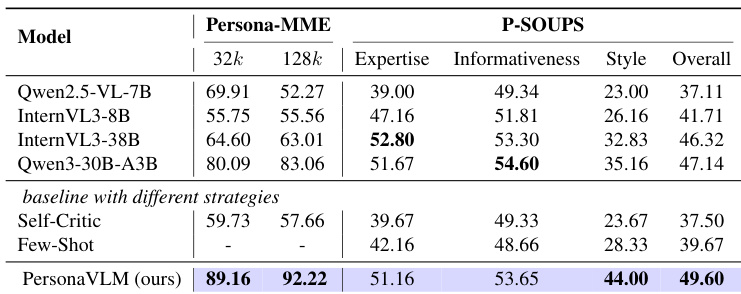

The table presents a comparison of various models on the Persona-MME and P-SOUPS benchmarks, focusing on performance across different context lengths and alignment dimensions. PersonaVLM achieves the highest scores in the 128k context setting and on the overall alignment metrics. It outperforms baseline models that use other strategies, including Self-Critic and Few-Shot, and shows significant improvements in expertise and style alignment compared to other models.

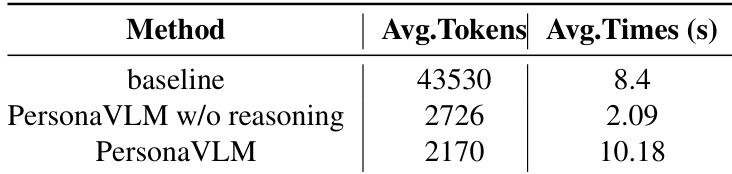

The table compares the efficiency of PersonaVLM, with and without reasoning, against a baseline model, measuring average token consumption and response time. Without reasoning, PersonaVLM achieves a 93.7% reduction in token consumption and a 4.8x speedup over the baseline. Enabling reasoning further reduces token usage but increases response time compared to the non-reasoning version.

The Persona-MME benchmark evaluates long-term multimodal personalization through extensive dialogue histories and evolving user personality traits. Ablation studies and comparative evaluations demonstrate that the PersonaVLM framework excels in expertise and style alignment, particularly in long-context settings, by leveraging critical components such as episodic memory and reasoning. While incorporating reasoning enhances performance and reduces token consumption, it introduces a trade-off by increasing response latency.