Command Palette

Search for a command to run...

KnowRL : Améliorer le raisonnement des LLM via l'apprentissage par renforcement avec un guidage de connaissances minimal-suffisant

KnowRL : Améliorer le raisonnement des LLM via l'apprentissage par renforcement avec un guidage de connaissances minimal-suffisant

Résumé

Voici la traduction de votre texte en français, respectant les standards de la communication scientifique et technique :Le RLVR (Reinforcement Learning from Verifiable Rewards) améliore le raisonnement des Large Language Models, mais son efficacité est souvent limitée par une sévère parcimonie de la récompense (reward sparsity) sur les problèmes complexes. Les méthodes récentes de RL basées sur des indices (hint-based RL) atténuent cette parcimonie en injectant des solutions partielles ou des templates abstraits ; cependant, elles augmentent généralement l'apport de guidage en ajoutant davantage de tokens, ce qui introduit de la redondance, de l'incohérence et une charge d'entraînement supplémentaire. Nous proposons KnowRL (Knowledge-Guided Reinforcement Learning), un framework d'entraînement par RL qui traite la conception des indices comme un problème de guidage "minimal-suffisant". Lors de l'entraînement par RL, KnowRL décompose le guidage en points de connaissance atomiques (KPs, Knowledge Points) et utilise la recherche de sous-ensembles contraints (CSS, Constrained Subset Search) pour construire des sous-ensembles compacts et conscients de l'interaction (interaction-aware) pour l'entraînement. Nous identifions en outre un paradoxe d'interaction d'élagage (pruning interaction paradox) — où la suppression d'un seul KP peut être bénéfique, tandis que la suppression de plusieurs KPs de ce type peut être préjudiciable — et nous optimisons explicitement la sélection de sous-ensembles robustes sous cette structure de dépendance.Nous avons entraîné KnowRL-Nemotron-1.5B à partir de OpenMath-Nemotron-1.5B. Sur huit benchmarks de raisonnement à l'échelle de 1.5B, KnowRL-Nemotron-1.5B surpasse systématiquement les modèles de référence (baselines) de RL et de hinting performants. Sans l'utilisation d'indices KP lors de l'inference, KnowRL-Nemotron-1.5B atteint une précision moyenne de 70,08, dépassant déjà Nemotron-1.5B de +9,63 points ; avec la sélection de KPs, la performance s'élève à 74,16, établissant un nouvel état de l'art (state of the art) à cette échelle. Le modèle, les données d'entraînement sélectionnées et le code sont disponibles publiquement à l'adresse suivante : https://github.com/Hasuer/KnowRL.

One-sentence Summary

The authors propose KnowRL, a reinforcement learning framework that enhances large language model reasoning by treating hint design as a minimal-sufficient knowledge problem, utilizing Constrained Subset Search to select compact, interaction-aware knowledge points that allow KnowRL-Nemotron-1.5B to achieve state-of-the-art performance across eight reasoning benchmarks.

Key Contributions

- The paper introduces KnowRL, a reinforcement learning training framework that treats hint design as a minimal-sufficient guidance problem by decomposing guidance into atomic knowledge points.

- This work presents Constrained Subset Search (CSS), a selection strategy that constructs compact, interaction-aware subsets of knowledge points to address the pruning interaction paradox where removing specific combinations of points can degrade performance.

- Experimental results across eight reasoning benchmarks demonstrate that the KnowRL-Nemotron-1.5B model achieves a new state of the art at the 1.5B scale, reaching 74.16 average accuracy when using selected knowledge point hints.

Introduction

Reinforcement Learning from Verifiable Rewards (RLVR) is essential for improving reasoning in large language models, but it often struggles with reward sparsity when models fail to generate correct answers on difficult tasks. While existing hint-based methods attempt to mitigate this by injecting partial solutions or reasoning templates, they often rely on excessive guidance that introduces redundancy, conceptual ambiguity, and increased computational overhead. The authors propose KnowRL, a framework that treats hint design as a minimal-sufficient guidance problem by decomposing information into atomic knowledge points (KPs). They introduce a Constrained Subset Search (CSS) strategy to identify the smallest, most effective subsets of KPs required to unlock rewards, specifically addressing a pruning interaction paradox where KPs exhibit complex dependencies. This approach allows the model to achieve state-of-the-art reasoning performance at the 1.5B scale while maintaining significantly more compact and efficient training guidance.

Dataset

Dataset Description

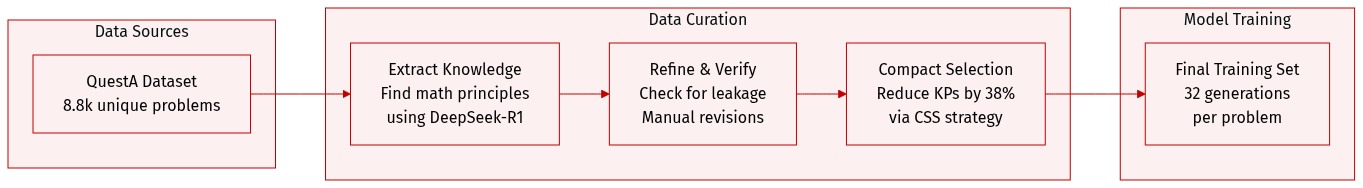

The authors construct the KnowRL training dataset through a multi-stage curation and processing pipeline:

-

Dataset Composition and Sources

- The core training data is derived from the open-source QuestA dataset.

- After deduplication, the authors retained 8.8k unique training instances.

-

Knowledge Point (KP) Extraction and Refinement

- Grounding: To ensure reasoning accuracy, the authors first sample responses from DeepSeek-R1 for each problem until a correct solution is obtained.

- Extraction: Using the problem and the verified solution, DeepSeek-R1 is prompted to extract only the essential mathematical principles, creating an initial set of candidate KPs.

- Verification: To prevent data leakage and ensure generalizability, DeepSeek-R1 acts as an automated reviewer to verify each KP. Any KPs that are instance-bound rather than generalizable are manually revised.

-

Data Processing and Selection

- Compactness Strategy: Rather than using all raw KPs, which can lead to cross-hint inconsistency, the authors apply a Compact Subset Selection (CSS) strategy. This process reduces the number of KPs by approximately 38% to create more efficient training hints.

- Sampling Procedure: For each training instance, the authors sample 32 generations using a top_p of 0.9 and a temperature of 0.9. This procedure is repeated over 8 independent runs to build the final training set.

Method

The authors present KnowRL, a framework designed to enhance mathematical reasoning through structured knowledge point (KP) curation and selection. At a high level, KnowRL follows an end-to-end workflow: for each training problem, it first constructs a set of candidate KPs, then filters out leakage and redundancy to obtain a compact, problem-specific subset, and finally uses this curated subset as hint data for reinforcement learning (RL) training only when necessary. The core technical contribution of KnowRL lies in the construction and selection of high-quality KP data, which is performed offline before any RL training begins to ensure reproducibility and efficiency.

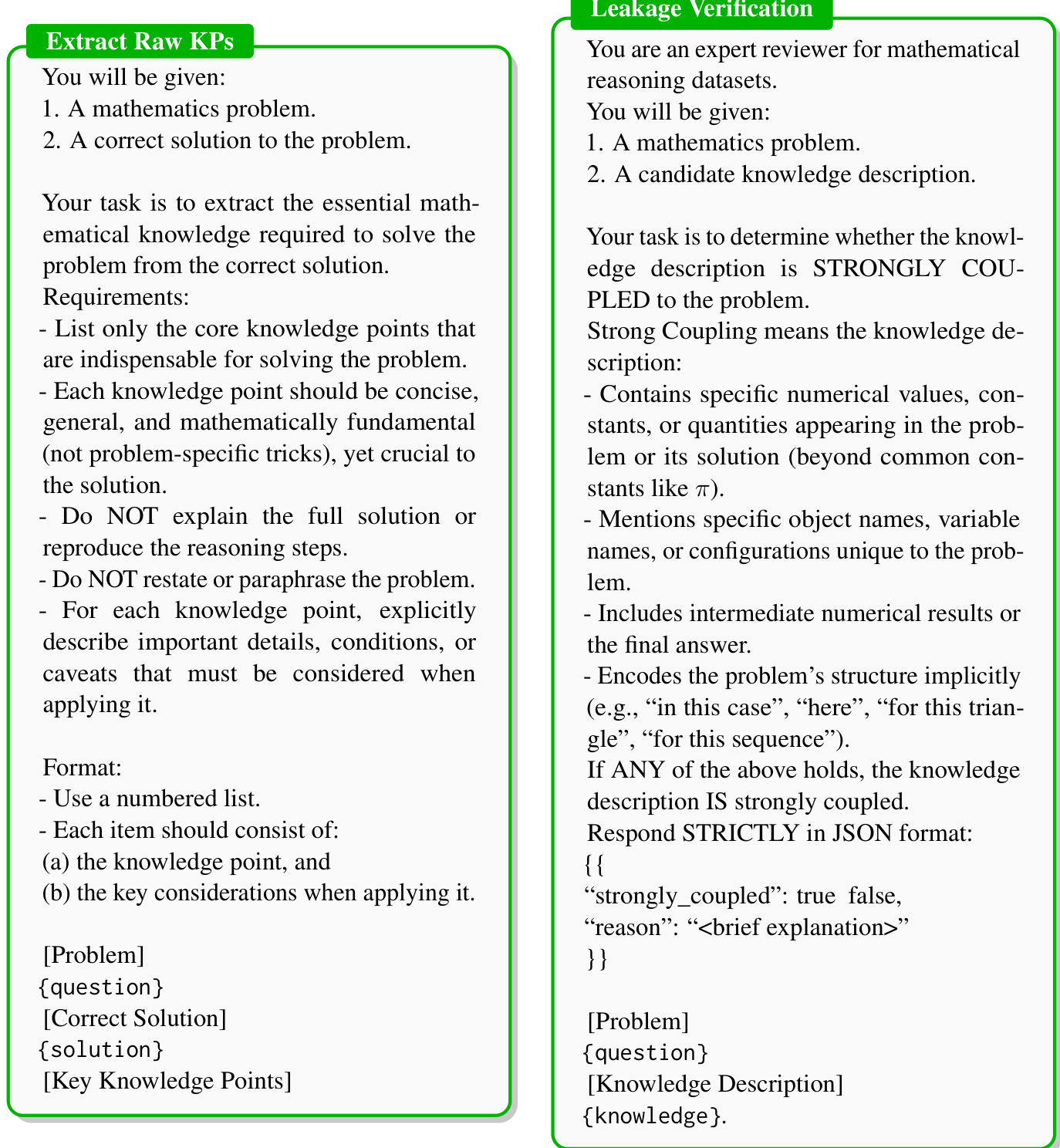

The KP construction process begins with the extraction of raw knowledge points from correct solutions. This stage, illustrated in the framework diagram, involves a prompt-based extraction step where the system is given a problem and its correct solution. The task is to identify the essential mathematical knowledge required to solve the problem, focusing on core concepts that are indispensable, general, and mathematically fundamental. The extracted KPs are not meant to reproduce the full solution or explain reasoning steps but to capture the key principles and conditions that must be applied. As shown in the figure below, the output is a concise, numbered list of knowledge points, each accompanied by key considerations that are crucial for their application.

Following extraction, a leakage verification step ensures the quality and independence of the KPs. This stage treats the system as an expert reviewer for mathematical reasoning datasets. Given a problem and a candidate knowledge description, the task is to determine whether the description is strongly coupled to the problem. A knowledge point is deemed strongly coupled if it contains specific numerical values, unique variable names, or configurations that are tied to the problem's structure. The goal is to filter out KPs that are overly specific or leak information from the problem itself, ensuring that the resulting KPs are generalizable and can be used effectively as hints for similar problems. The verification process requires a JSON-formatted response indicating whether the knowledge is strongly coupled and provides a brief explanation.

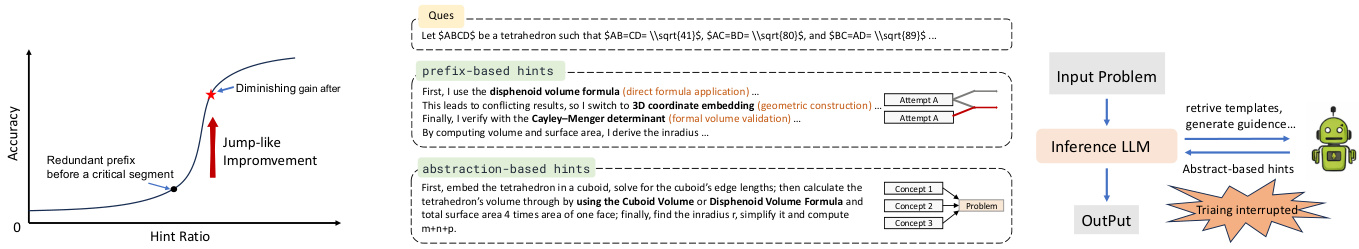

The resulting curated KP set undergoes a problem-wise selection process to determine the optimal subset to use as hints. This involves estimating offline accuracies for various configurations: using no KPs (A∅), using the full set (AK), and performing leave-one-out ablations (A−i). The authors evaluate several selection strategies, including Max-Score, Strict Leave-One-Out (S-LOO), and Tolerant Leave-One-Out (T-LOO), which are formalized as parameterized decision operators. These strategies aim to reduce dependency on KPs while preserving performance. However, a key challenge identified is the pruning interaction paradox, where removing individual KPs may improve performance, but removing them jointly can lead to significant degradation due to cross-hint inconsistency. To address this, the authors introduce Constrained Subset Search (CSS), which first prunes non-degrading and near-optimal KPs, then conducts a global search over the remaining candidate space, achieving a better balance of accuracy and compactness. Additionally, Consensus-Based Robust Selection (CBRS) aggregates results from multiple independent evaluation runs to identify robust, high-performing configurations, further enhancing the selection quality.

Experiment

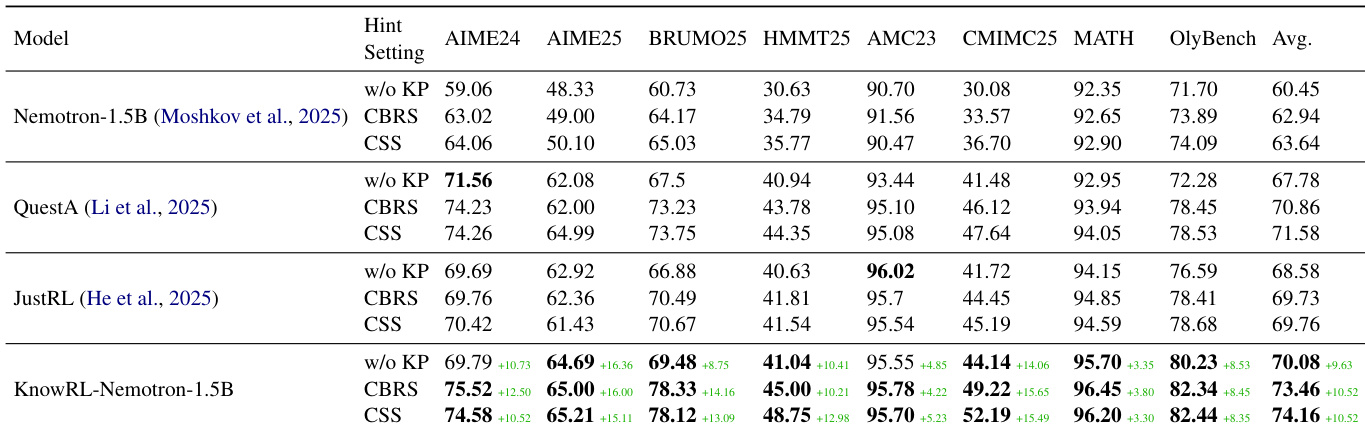

The experiments evaluate the KnowRL framework through various training configurations, selection strategies, and evaluation protocols to validate its ability to internalize structured reasoning. Results demonstrate that the model significantly improves its underlying policy rather than merely relying on test-time hints, showing particular strength in complex, competition-style reasoning tasks. Furthermore, the CSS selection strategy proves more robust and stable than CBRS, while techniques like entropy annealing effectively accelerate convergence and optimize performance.

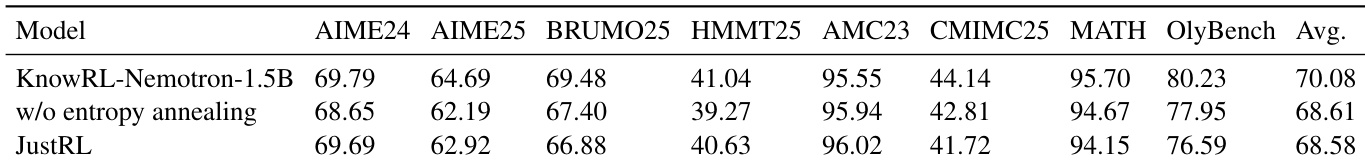

The authors compare KnowRL-Nemotron-1.5B against baseline models on multiple reasoning benchmarks, showing that KnowRL achieves superior performance both with and without knowledge point hints. Results indicate that the model's improvements stem from enhanced policy learning rather than reliance on test-time hinting. KnowRL-Nemotron-1.5B outperforms baseline models across all evaluated benchmarks, with notable gains on challenging competition-style datasets. The model achieves strong performance even without knowledge point hints, demonstrating that the training process improves the underlying reasoning capability. Using CSS-selected knowledge points leads to higher average accuracy compared to CBRS, indicating more effective hint construction.

The authors compare KnowRL-Nemotron-1.5B with variants and baselines across multiple reasoning benchmarks. Results show that KnowRL achieves superior performance, especially when using entropy annealing, and outperforms other models without relying on test-time hints. KnowRL-Nemotron-1.5B achieves the highest average performance across all benchmarks compared to other models. The model with entropy annealing outperforms the variant without it, demonstrating improved convergence and final accuracy. KnowRL consistently surpasses baseline models, indicating enhanced reasoning capabilities beyond simple hint injection.

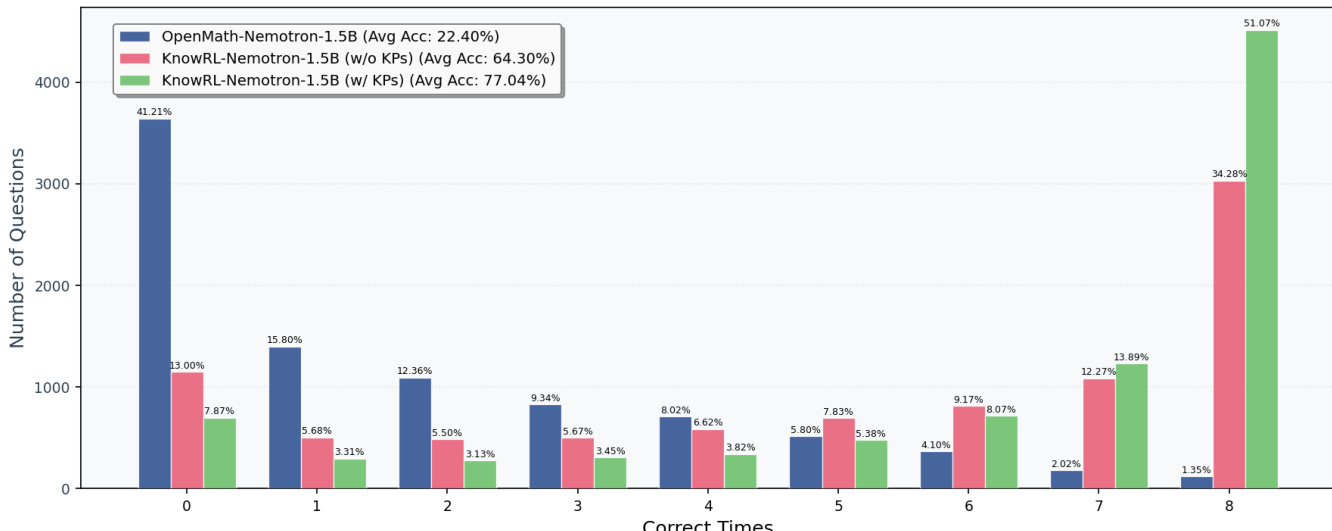

The authors compare the per-query correct count distribution for three models on the training set, showing how performance improves with training and the use of knowledge points. The distribution shifts significantly to the right when moving from the baseline model to the trained models, with the greatest improvement seen when knowledge points are used at inference. The baseline model shows a high frequency of zero correct answers and a low average accuracy. Training with KnowRL improves the distribution, reducing zero-correct queries and increasing the proportion of fully correct answers. Using knowledge points at inference further shifts the distribution toward higher correct counts, with a substantial increase in the highest bucket.

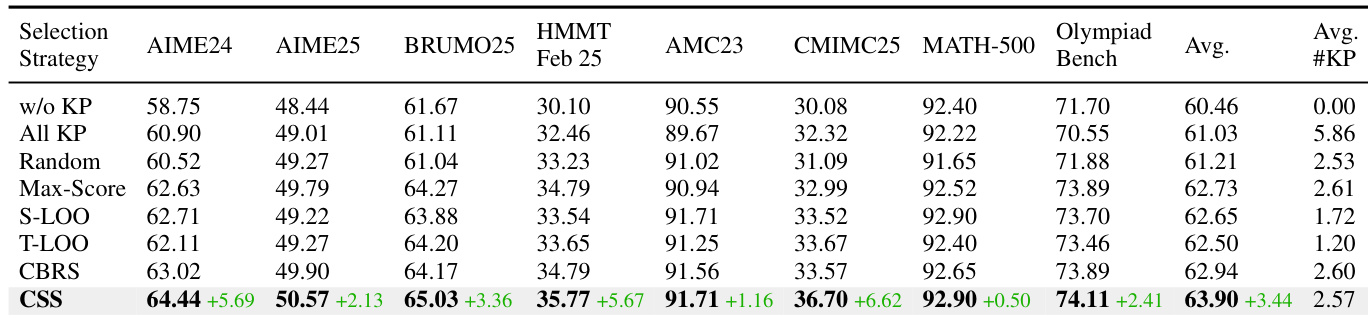

The authors compare different knowledge point selection strategies in a reinforcement learning setup, evaluating their impact on model performance across multiple reasoning benchmarks. Results show that the CSS strategy consistently outperforms other methods, particularly on challenging competition-style datasets, and achieves the highest average accuracy. CSS selection strategy achieves the highest performance across all benchmarks compared to other methods Performance improvements are most pronounced on challenging competition-style reasoning tasks The CSS method demonstrates consistent superiority over CBRS and other baseline selection strategies

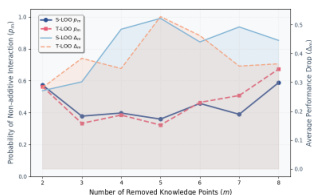

The authors analyze the impact of removing knowledge points on model performance during training. Results show that removing knowledge points reduces both the probability of non-additive interaction and average performance, with different removal strategies affecting these metrics in distinct ways. The model's performance degrades as more knowledge points are removed, indicating the importance of these points for effective reasoning. Removing knowledge points decreases the probability of non-additive interaction Performance drops as more knowledge points are removed Different removal strategies lead to varying impacts on model performance

The authors evaluate KnowRL-Nemotron-1.5B against various baselines and configurations across multiple reasoning benchmarks to validate its performance and the effectiveness of its training components. The results demonstrate that the model achieves superior reasoning capabilities through enhanced policy learning rather than a simple reliance on test-time hints, with entropy annealing further improving convergence and accuracy. Additionally, the experiments show that the CSS knowledge point selection strategy is highly effective for challenging tasks and that the inclusion of knowledge points is essential for maintaining high performance and reducing non-additive interactions.