Command Palette

Search for a command to run...

FlowInOne : Unifier la génération multimodale sous la forme d'un Flow Matching de type Image-in, Image-out.

FlowInOne : Unifier la génération multimodale sous la forme d'un Flow Matching de type Image-in, Image-out.

Junchao Yi Rui Zhao Jiahao Tang Weixian Lei Linjie Li Qisheng Su Zhengyuan Yang Lijuan Wang Xiaofeng Zhu Alex Jinpeng Wang

Résumé

Voici la traduction de votre texte en français, réalisée selon les standards de rigueur scientifique et de précision terminologique requis :La génération multimodale est depuis longtemps dominée par des pipelines pilotés par le texte, où le langage dicte la vision sans pour autant pouvoir raisonner ou créer au sein de celle-ci. Nous remettons en question ce paradigme en nous demandant si toutes les modalités — y compris les descriptions textuelles, les mises en page spatiales (spatial layouts) et les instructions d'édition — peuvent être unifiées en une représentation visuelle unique. Nous présentons FlowInOne, un framework qui reformule la génération multimodale comme un flux purement visuel, convertissant toutes les entrées en visual prompts et permettant un pipeline épuré de type « image-in, image-out » régi par un unique modèle de flow matching. Cette formulation centrée sur la vision élimine naturellement les goulots d'étranglement de l'alignement cross-modal, la planification du bruit (noise scheduling) et les branches architecturales spécifiques à chaque tâche, unifiant ainsi la génération text-to-image, l'édition guidée par le layout et le suivi d'instructions visuelles sous un paradigme cohérent. Pour soutenir cette approche, nous introduisons VisPrompt-5M, un dataset à grande échelle comprenant 5 millions de paires de visual prompts couvrant diverses tâches, notamment la dynamique des forces sensible à la physique et la prédiction de trajectoire, ainsi que VP-Bench, un benchmark rigoureusement élaboré pour évaluer la fidélité aux instructions, la précision spatiale, le réalisme visuel et la cohérence du contenu. Des expériences approfondies démontrent que FlowInOne atteint des performances de pointe (state-of-the-art) sur l'ensemble des tâches de génération unifiées, surpassant à la fois les modèles open-source et les systèmes commerciaux compétitifs, établissant ainsi une nouvelle base pour une modélisation générative entièrement centrée sur la vision, où la perception et la création coexistent au sein d'un espace visuel continu unique.

One-sentence Summary

By converting all inputs into visual prompts, FlowInOne unifies text-to-image generation, layout-guided editing, and visual instruction following into a single image-in, image-out flow matching paradigm that eliminates cross-modal alignment bottlenecks through the use of the VisPrompt-5M dataset and evaluation via the VP-Bench benchmark.

Key Contributions

- The paper introduces FlowInOne, a unified flow matching framework that reformulates multimodal generation as a vision-centric image-in, image-out paradigm. This approach converts all inputs into visual prompts to eliminate text encoders and modality-specific bridges, enabling a single model to handle text-to-image generation, layout-guided editing, and visual instruction following.

- This work presents VisPrompt-5M, a large-scale dataset consisting of 5 million visual prompt pairs that cover diverse tasks such as physics-aware force dynamics and trajectory prediction. The dataset provides supervision through continuous visual evolution, allowing for unified training and strong generalization across multiple generative tasks.

- The researchers developed VP-Bench, a curated evaluation benchmark designed to assess model performance across four key dimensions: instruction faithfulness, spatial precision, visual realism, and content consistency. Experiments using this benchmark demonstrate that FlowInOne achieves state-of-the-art performance, surpassing existing open-source and competitive commercial systems.

Introduction

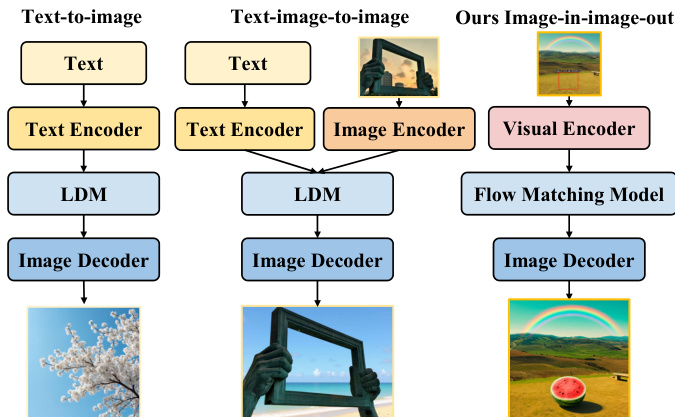

Multimodal generation currently relies on text-dominant pipelines where linguistic embeddings dictate visual output, creating a fundamental asymmetry where vision cannot reason or generate independently. These traditional architectures often suffer from cross-modal alignment bottlenecks and require complex, task-specific branches to handle different types of conditioning. The authors leverage a vision-centric approach called FlowInOne to reformulate multimodal generation as a pure image-in, image-out pipeline. By converting all inputs, including text and spatial layouts, into visual prompts and using a single flow matching model, they unify text-to-image generation, layout-guided editing, and visual instruction following into one continuous visual space.

Dataset

The authors developed VisPrompt-5M, a large-scale dataset of approximately 5 million image-to-image pairs designed to enable a unified vision-centric instruction-following paradigm. Instead of using separate text channels, the authors embed all instructions, such as text, bounding boxes, or arrows, directly onto the input image canvas.

Dataset Composition and Sources The dataset is organized into eight task categories grouped into three major capabilities:

- Fundamental Generation: Includes Text-to-Image (2M pairs from text-to-image-2M) and Class-to-Image (860K high-quality ImageNet subset) to establish basic semantic-to-visual mapping.

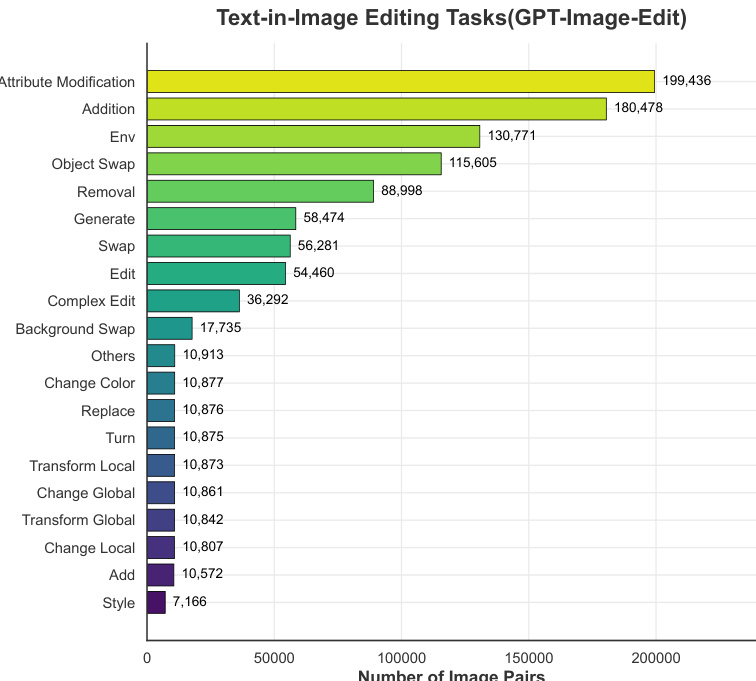

- Unified Image Editing: The largest component, covering semantic operations, attribute changes, and structural tasks. Sources include GPT-Image-Edit, Pico-Banana, UnicEdit (yielding 1.6M filtered semantic pairs), and PixWizard (315K structured pairs for tasks like inpainting and depth-to-image).

- Physics Understanding: A specialized subset for motion and dynamics, including Trajectory Understanding (1.5K Blender-rendered pairs) and Force Understanding (leveraging the Force Prompting dataset).

- Specialized Geometric Editing: Includes Text Bounding Box Editing (24K high-quality pairs synthesized via Qwen3-VL), Visual Marker Editing (250K pairs using arrow annotations), and Doodles Editing (1K high-fidelity pairs derived from web images).

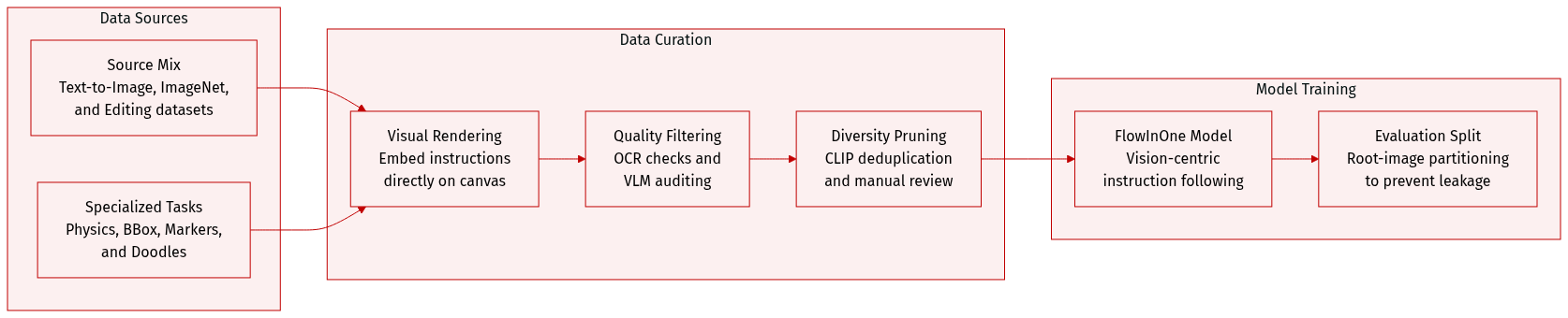

Processing and Quality Control The authors implemented a rigorous multi-stage pipeline to ensure high data fidelity:

- Visual Instruction Rendering: For text-based tasks, instructions are rendered onto the canvas with randomized fonts, sizes, colors, and positions to prevent typographical overfitting.

- OCR-based Verification: An OCR engine checks the legibility of rendered text, discarding pairs with high Character Error Rates (CER).

- VLM-based Auditing: Advanced Multimodal Large Language Models (e.g., Qwen3-VL) act as judges to verify semantic alignment, spatial precision (e.g., checking if objects match bounding boxes), and visual realism.

- Diversity Deduplication: The authors use CLIP embeddings and cosine similarity thresholds to prune redundant concepts and prevent mode collapse.

- Manual Inspection: For highly complex tasks like Doodles Editing, the authors perform manual curation to ensure absolute structural alignment and the absence of generative artifacts.

Data Usage and Leakage Prevention The dataset is used to train the FlowInOne model under a purely vision-centric paradigm. To ensure the integrity of the VP-Bench evaluation, the authors implemented a strict two-fold leakage prevention protocol:

- Root-Image Partitioning: The dataset is split based on underlying unedited base images rather than individual instruction pairs, ensuring the model never sees the benchmark backgrounds or layouts.

- Visual Deduplication: The authors use CLIP embeddings to perform feature-level filtering, aggressively discarding any training pairs that exhibit high visual similarity to the benchmark images.

Method

The authors leverage a flow matching framework to model image generation as a continuous transport process within a shared latent space, eliminating the need for complex noise scheduling and explicit conditioning branches. The core of the approach is a unified visual encoding strategy that integrates textual instructions and diverse visual cues directly onto the image canvas, thereby preserving spatial layouts and structural priors without relying on cross-modal alignment modules. This unified image is processed by a SigLIP Vision Transformer to extract patch-level semantic features, which are then projected into the target embedding space via an MLP projector. The resulting fused representation, Xfuse, encapsulates both textual semantics and visual geometry.

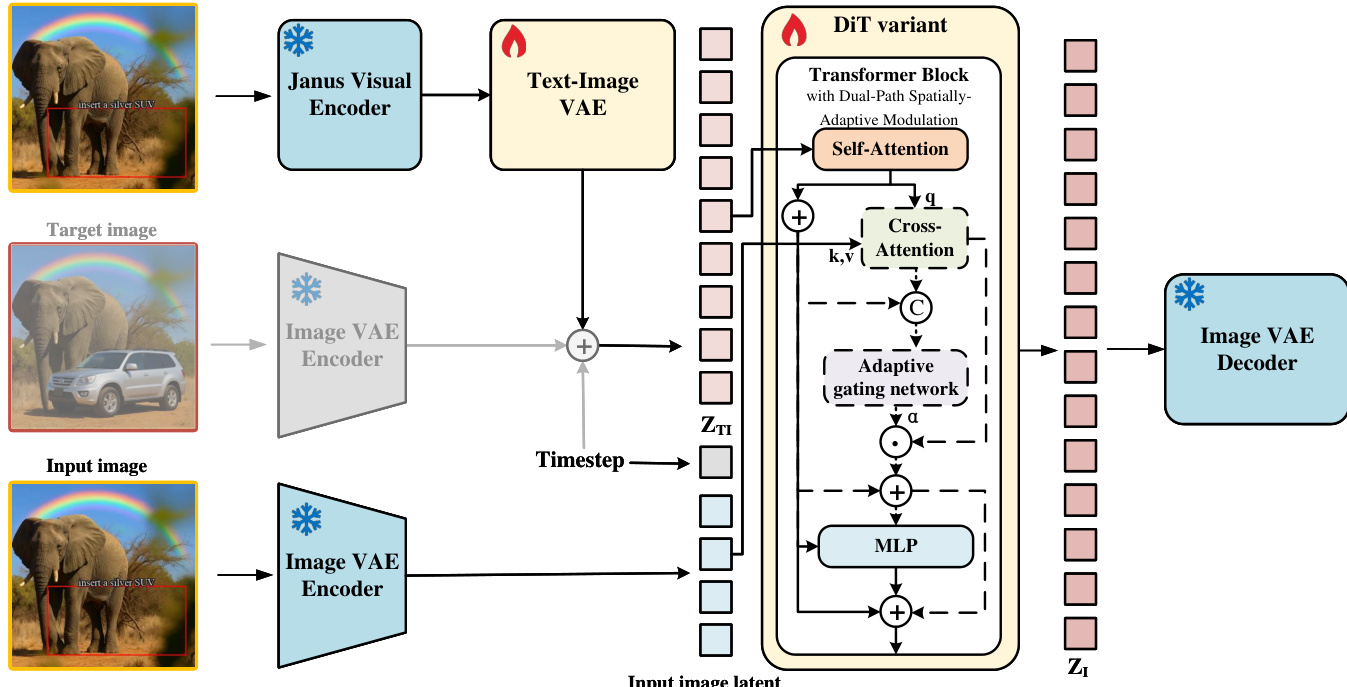

As shown in the figure below, the architecture employs a Dual-Path Spatially-Adaptive Modulation mechanism within a DiT variant to balance structural preservation and instruction adherence. The process begins with the input image and visual instruction being encoded into a shared latent space. The visual instruction is first processed by a Janus Visual Encoder and a Text-Image VAE, while the target and input images are encoded by a frozen Image VAE. These latent representations, ZTI and ZI, serve as the source and target distributions for the flow matching process.

During training, the model learns a time-dependent velocity field vθ(zt,t) by minimizing the Mean Squared Error (MSE) against the ground-truth velocity vt∗, which is derived from the linear interpolation zt=tz1+(1−(1−σmin)t)z0. Inference involves solving the Ordinary Differential Equation dtdzt=vθ(zt,t) from t=0 to t=1, deterministically evolving the visual instruction latent into the final target image.

The Dual-Path Spatially-Adaptive Modulation mechanism is designed to dynamically compensate for the missing structural manifold. For text-to-image generation, the model bypasses the cross-attention layer to prevent irrelevant noise, ensuring the generation trajectory strictly follows the text semantics. For image editing, a source image is mapped into the latent space, and a structural increment ΔHstruct is computed via cross-attention. A lightweight adaptive gating network predicts a token-level weight vector Λ, which controls the infiltration of the structural manifold. The final layer output is integrated via a conditional formulation, where the modulation term is activated only for editing tasks, effectively mitigating editing conflicts and reducing evolution error.

Experiment

The researchers evaluated FlowInOne using the curated VP-Bench benchmark, employing Vision-Language Models and human experts to assess instruction faithfulness, content consistency, visual realism, and spatial precision. Comparative experiments demonstrate that the model achieves state-of-the-art performance in the image-in, image-out paradigm, particularly excelling in fine-grained spatial control and physical reasoning compared to both open-source and commercial baselines. Ablation studies further confirm that joint training and spatially-adaptive modulation are critical for unifying diverse generation and editing tasks within a single framework.

The bar chart shows the number of image pairs across various text-in-image editing tasks, with attribute modification and addition being the most frequent categories. The data indicates a significant imbalance, where a few task types dominate the dataset while others have considerably fewer instances. Attribute modification and addition are the most common text-in-image editing tasks. A majority of tasks have fewer than 20,000 image pairs. The dataset is highly imbalanced, with several categories having significantly fewer samples than others.

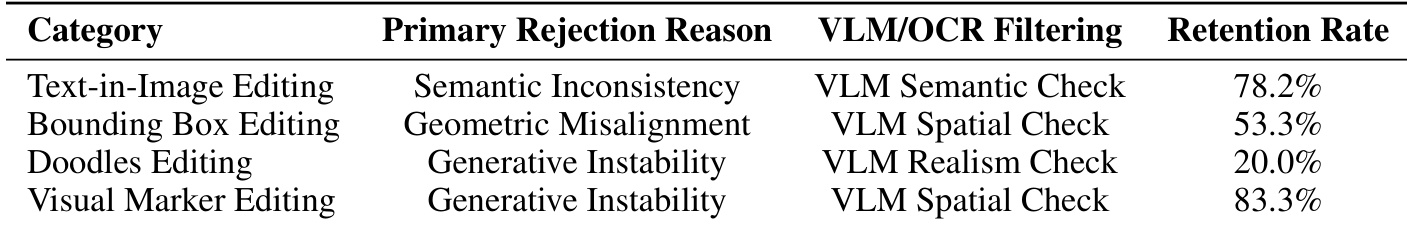

The the the table shows retention rates across different visual editing categories, with the primary rejection reasons and the corresponding VLM/OCR filtering methods used. The retention rate varies significantly by category, indicating differences in task difficulty and model performance. Retention rates differ across editing categories, with text-in-image editing showing the highest retention. Primary rejection reasons vary by category, including semantic inconsistency and geometric misalignment. Different VLM/OCR filtering methods are used for each category to assess specific failure modes.

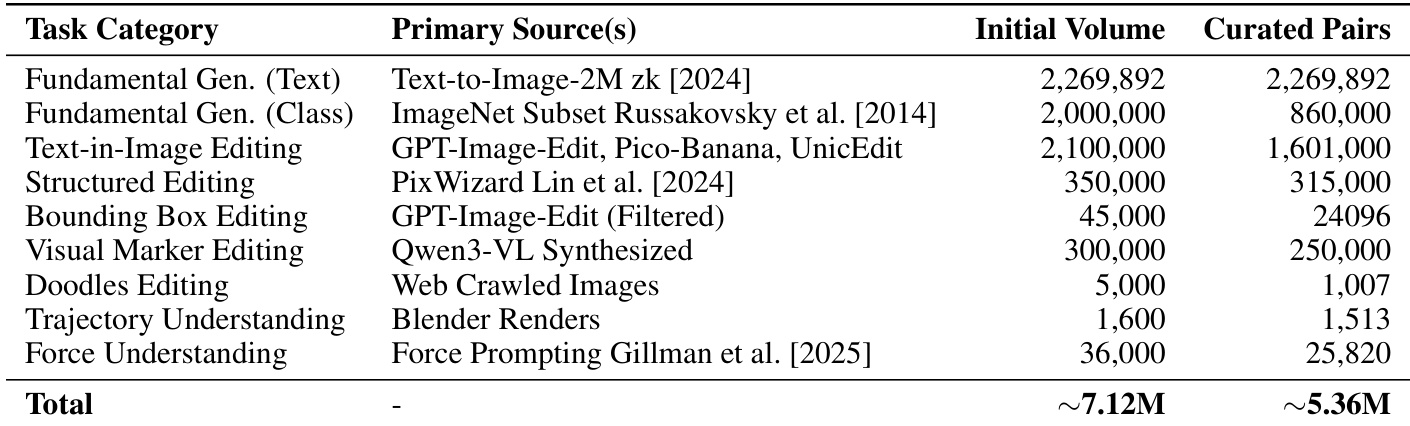

The the the table outlines the composition of a benchmark dataset, detailing the primary sources and the number of initial and curated pairs for various task categories. The dataset covers fundamental generation and editing tasks, with the total size of the curated dataset being approximately 5.36 million pairs. The dataset includes diverse task categories such as text-to-image generation and various forms of image editing. Sources for the dataset range from large-scale text-to-image datasets to specialized collections for specific editing tasks. The total number of curated pairs across all categories is approximately 5.36 million.

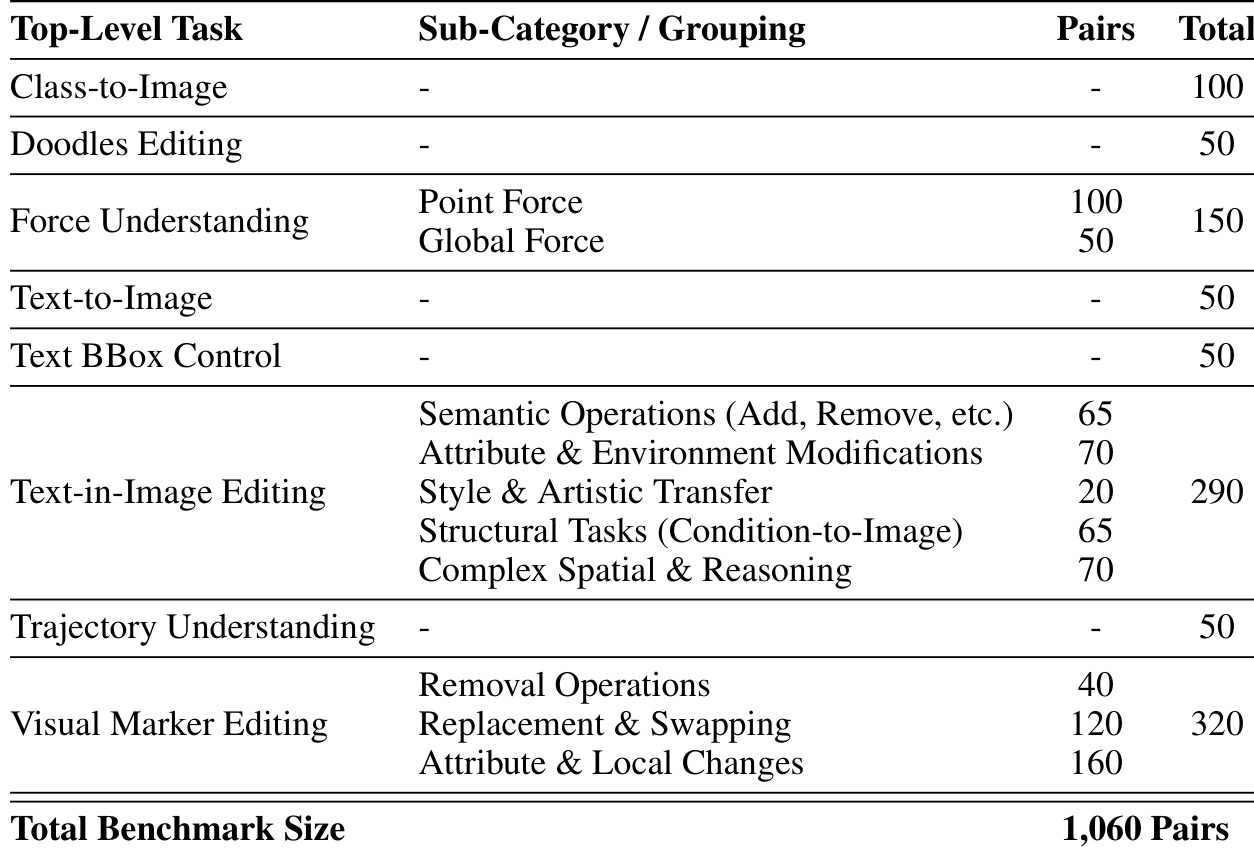

The the the table outlines the structure of the VP-Bench benchmark, detailing its top-level tasks and sub-categories with corresponding pair counts. It shows a diverse range of visual instruction tasks, including text-to-image, text-in-image editing, and spatial reasoning, with the total benchmark size comprising 1,060 pairs. The benchmark includes diverse visual instruction tasks such as text-to-image and text-in-image editing. Text-in-image editing is the largest category with 290 pairs, covering various sub-tasks. The total benchmark size is 1,060 pairs, with tasks distributed across different categories.

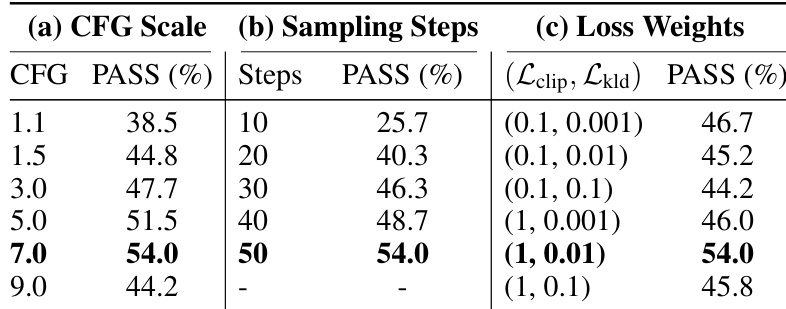

The study evaluates the impact of different hyperparameters on model performance, showing that optimal settings yield the highest pass rate. Performance varies significantly with changes in CFG scale, sampling steps, and loss weights, with specific configurations achieving peak results. Pass rate peaks at a CFG scale of 7 and 50 sampling steps. Performance declines with excessively high CFG scale or low sampling steps. Optimal loss weights balance CLIP alignment and KL divergence penalties.

The evaluation examines the composition and quality of the VP-Bench benchmark, alongside an ablation study to optimize model performance. The analysis reveals a highly imbalanced task distribution dominated by attribute modification and highlights that retention rates vary across editing categories due to specific semantic and geometric challenges. Furthermore, the hyperparameter study demonstrates that model success depends on finding a precise balance between sampling steps, CFG scale, and loss weights.