DataFlex: A Unified Framework for Data-Centric Dynamic Training of Large Language Models (LLMs)

Abstract

Data-centric training has emerged as a promising direction for improving large language models (LLMs) by optimizing not only model parameters but also the selection, composition, and weighting of training data during optimization. However, existing approaches to data selection, data mixture optimization, and data reweighting are often developed in isolated codebases with inconsistent interfaces, which hinders reproducibility, fair comparison, and practical integration. In this paper, we present DataFlex, a unified data-centric dynamic training framework built on LLaMA-Factory. DataFlex supports three major paradigms of dynamic data optimization: sample selection, domain mixture adjustment, and sample reweighting, while remaining fully compatible with the original training workflow. It provides extensible trainer abstractions and modular components that allow a drop-in replacement of standard LLM training procedures, and it unifies key model-dependent operations such as embedding extraction, inference, and gradient computation, with support for large-scale settings including DeepSpeed ZeRO-3. We conduct comprehensive experiments covering multiple data-centric methods. Dynamic data selection consistently outperforms static full-data training on the MMLU benchmark for both Mistral-7B and Llama-3.2-3B. For data mixture, DoReMi and ODM improve both MMLU accuracy and corpus-level perplexity over the default proportions when pretraining Qwen2.5-1.5B on SlimPajama at the 6B and 30B token scales. DataFlex also achieves consistent runtime improvements over the original implementations. These results demonstrate that DataFlex provides an effective, efficient, and reproducible infrastructure for data-centric dynamic training of LLMs.

One-sentence Summary

Researchers from Peking University and Shanghai Artificial Intelligence Laboratory present DATAFLEX, a unified framework built on LLaMA-Factory that integrates data selection, mixture optimization, and reweighting into a single dynamic training paradigm, enabling scalable, reproducible large language model optimization with superior performance over static baselines.

Key Contributions

- The paper introduces DATAFLEX, a unified framework built on LLaMA-Factory that supports dynamic sample selection, domain mixture adjustment, and sample reweighting through extensible trainer abstractions and modular algorithm components.

- This work unifies common model-dependent operations such as embedding extraction, model inference, and gradient computation while maintaining full compatibility with large-scale training settings like DeepSpeed ZeRO-3.

- Experiments demonstrate that the framework achieves consistent performance gains over static training and default data proportions across multiple backbones and datasets, while also delivering runtime improvements over original method-specific implementations.

Introduction

Large language model training is increasingly shifting toward data-centric approaches that optimize data selection, mixture, and weighting alongside model parameters to improve efficiency and performance. However, prior work suffers from significant fragmentation, as existing methods are often isolated in separate codebases with inconsistent interfaces that hinder reproducibility, fair comparison, and integration into scalable pipelines. The authors leverage the widely adopted LLaMA-Factory to introduce DATAFLEX, a unified framework that standardizes data-centric dynamic training by supporting selection, mixture adjustment, and reweighting through modular trainer abstractions. This system unifies shared model-dependent operations like embedding extraction and gradient computation while remaining compatible with large-scale training setups such as DeepSpeed ZeRO-3, enabling both systematic research evaluation and practical deployment.

Dataset

- Dataset Composition and Sources: The authors utilize SlimPajama, a large-scale deduplicated English pretraining corpus derived from RedPajama. This dataset aggregates seven text domains: CommonCrawl (CC), C4, GitHub, Book, ArXiv, Wikipedia, and StackExchange (SE).

- Subset Details and Filtering: Two subsets are employed to evaluate training budgets at different token scales: SlimPajama-6B and SlimPajama-30B. Both subsets are randomly sampled to strictly preserve the natural token-level domain proportions of the original corpus, which are CommonCrawl (54.1%), C4 (28.7%), GitHub (4.2%), Book (3.7%), ArXiv (3.4%), Wikipedia (3.1%), and StackExchange (2.8%). These natural proportions serve as the default baseline mixture and the initial weights for dynamic optimization methods.

- Training Usage and Mixture Ratios: The authors train Qwen2.5 models from scratch with random initialization to isolate the effects of data mixture strategies. The baseline uses a static mixer with default SlimPajama proportions. The DoReMi method follows a three-step procedure in which a reference model and a proxy model are trained to compute per-domain excess losses, yielding optimized static weights for the target model that notably increase high-quality domains like Book and Wikipedia while reducing CommonCrawl dominance. The Online Data Mixing (ODM) approach dynamically adjusts domain weights during a single training pass using the Exp3 multi-armed bandit algorithm, without a separate reference model.

- Processing and Configuration Details: All experiments train for one full epoch with a linear learning rate decay and a 5% warmup ratio. The Qwen tokenizer is applied to all models, and a fixed random seed of 42 is used. Training runs utilize BFloat16 mixed precision, DeepSpeed ZeRO Stage-3 for memory efficiency, and FlashAttention-2 for attention computation. The 6B-token experiments run on a single node with 8 GPUs, while the 30B-token experiments scale to 4 nodes with 32 H20 GPUs in total.
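To make the ODM idea above more concrete, the following is a minimal sketch of a textbook Exp3 bandit over the seven SlimPajama domains, starting from the natural proportions listed earlier. It is an illustration under stated assumptions, not the DATAFLEX or ODM implementation: the class name `Exp3DomainMixer`, the exploration rate `gamma`, and the convention that the reward is a value scaled into [0, 1] (for example a normalized training loss) are all hypothetical choices made for this example.

```python
import math
import random

# Natural SlimPajama token proportions quoted in the text, used as the starting mixture.
INITIAL_MIX = {
    "CC": 0.541, "C4": 0.287, "GitHub": 0.042, "Book": 0.037,
    "ArXiv": 0.034, "Wikipedia": 0.031, "SE": 0.028,
}


class Exp3DomainMixer:
    """Hypothetical Exp3-style domain sampler; not the DataFlex ODM Mixer API."""

    def __init__(self, initial_mix, gamma=0.1, seed=42):
        self.domains = list(initial_mix)
        self.gamma = gamma  # assumed exploration rate in (0, 1]
        self.rng = random.Random(seed)
        # Log-weights start at log(proportion), so the exploitation term
        # of the policy initially matches the natural mixture.
        self.log_w = {d: math.log(p) for d, p in initial_mix.items()}

    def probabilities(self):
        """Mix the normalized weights with a uniform exploration term."""
        top = max(self.log_w.values())  # subtract the max for numerical stability
        w = {d: math.exp(v - top) for d, v in self.log_w.items()}
        total = sum(w.values())
        k = len(self.domains)
        return {d: (1 - self.gamma) * w[d] / total + self.gamma / k
                for d in self.domains}

    def sample(self):
        """Draw the domain that supplies the next training batch."""
        probs = self.probabilities()
        return self.rng.choices(self.domains,
                                weights=[probs[d] for d in self.domains])[0]

    def update(self, domain, reward):
        """Exp3 update: importance-weight the reward of the sampled domain only."""
        if not 0.0 <= reward <= 1.0:
            raise ValueError("reward is assumed to be scaled into [0, 1]")
        estimated = reward / self.probabilities()[domain]
        self.log_w[domain] += self.gamma * estimated / len(self.domains)


mixer = Exp3DomainMixer(INITIAL_MIX)
before = mixer.probabilities()["Book"]
mixer.update("Book", reward=1.0)  # pretend a Book batch produced the maximum reward
after = mixer.probabilities()["Book"]
```

In a training loop, `sample()` would pick the domain for each batch and `update()` would be called with that batch's reward. Because the reward is divided by the sampling probability, rarely sampled domains such as Book receive a larger boost per observation than CommonCrawl would for the same reward.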

Method

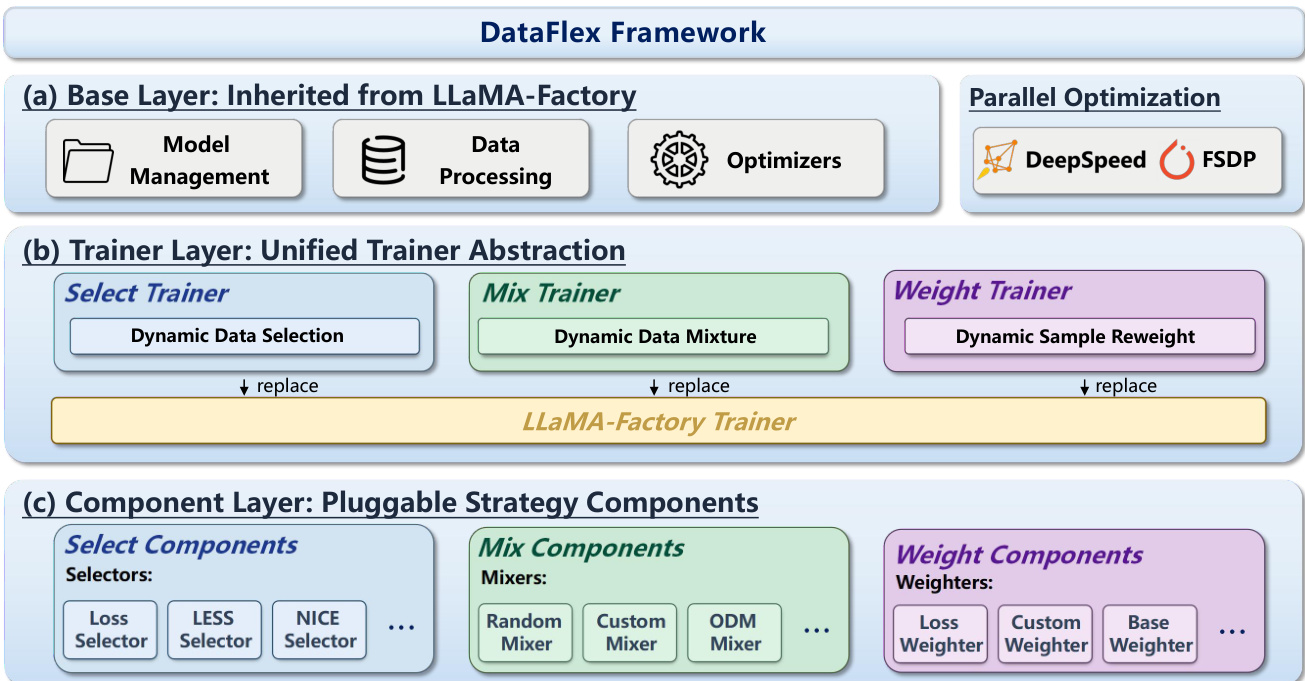

The authors propose DATAFLEX, a unified data-centric dynamic training framework designed to treat data as a first-class optimization variable. The system is built upon LLaMA-Factory and is structured into three primary layers to support dynamic control over data selection, mixture, and weighting.

Refer to the framework diagram for the high-level architecture. The Base Layer, labeled as (a), is inherited directly from LLaMA-Factory, providing standard infrastructure for model management, data processing, and optimizers. It also integrates parallel optimization strategies such as DeepSpeed and FSDP to ensure scalability.

At the core of the system is the Trainer Layer, labeled as (b), which implements a unified trainer abstraction. This layer replaces the original LLaMA-Factory trainer with three specialized dynamic training modes: the Select Trainer for dynamic data selection, the Mix Trainer for dynamic data mixture, and the Weight Trainer for dynamic sample reweighting. Each mode corresponds to a specific paradigm of data-centric optimization.

Beneath the trainer layer lies the Component Layer, labeled as (c), which consists of pluggable strategy components. The Select Trainer utilizes Selectors (e.g., Loss Selector, LESS Selector), the Mix Trainer employs Mixers (e.g., Random Mixer, ODM Mixer), and the Weight Trainer uses Weighters (e.g., Loss Weighter). These components encapsulate algorithm-specific logic while sharing a common interface. During training, the trainer invokes its associated component to generate control signals, such as sample subsets, domain mixing ratios, or per-sample weights, which are then fed back into the optimization process. This modular design allows researchers to implement and compare new data-centric algorithms with minimal engineering overhead while maintaining compatibility with existing large-scale training workflows.
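The pluggable-component idea can be sketched as follows. This is only an illustration of the pattern, not the DATAFLEX API: the names `Component`, `control_signal`, `TopKLossSelector`, `SoftmaxLossWeighter`, and `batch_objective` are hypothetical, and the rules shown (keep the highest-loss samples; weight samples by a softmax over their losses) are assumed readings of what a loss-based selector or weighter might do.

```python
import math
from abc import ABC, abstractmethod


class Component(ABC):
    """Hypothetical common interface: per-sample losses in, control signal out."""

    @abstractmethod
    def control_signal(self, losses):
        ...


class TopKLossSelector(Component):
    """Selection: keep the indices of the k highest-loss samples (an assumed rule)."""

    def __init__(self, k):
        self.k = k

    def control_signal(self, losses):
        ranked = sorted(range(len(losses)), key=lambda i: losses[i], reverse=True)
        return sorted(ranked[: self.k])


class SoftmaxLossWeighter(Component):
    """Reweighting: softmax over losses, rescaled so the weights average to 1."""

    def __init__(self, temperature=1.0):
        self.temperature = temperature

    def control_signal(self, losses):
        top = max(losses)  # subtract the max for numerical stability
        exps = [math.exp((x - top) / self.temperature) for x in losses]
        total = sum(exps)
        return [len(losses) * e / total for e in exps]


def batch_objective(losses, component):
    """Stand-in for a trainer step: apply the component's signal to the batch loss."""
    signal = component.control_signal(losses)
    if isinstance(component, TopKLossSelector):
        kept = [losses[i] for i in signal]  # signal is a list of sample indices
        return sum(kept) / len(kept)
    # Otherwise the signal is a list of per-sample weights.
    return sum(w * x for w, x in zip(signal, losses)) / len(losses)


losses = [0.2, 1.5, 0.9, 0.1]
selected = TopKLossSelector(k=2).control_signal(losses)
weights = SoftmaxLossWeighter().control_signal(losses)
```

Because both components expose the same method, the trainer stand-in can swap one strategy for another without changing the surrounding loop, which is the property the component layer is described as providing.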

Experiment

- Experiments on data selection and reweighting validate that dynamic, model-aware methods (such as LESS and Reweight) consistently outperform static full-data training baselines on both Mistral-7B and Llama-3.2-3B, with the performance gap widening for smaller models where dynamic selection is critical.

- Offline selection methods demonstrate faster early convergence due to precomputed high-value samples, while online methods achieve superior final accuracy by adapting to evolving model gradients.

- Data mixture experiments confirm that dynamic optimization strategies (DoReMi and ODM) improve both MMLU accuracy and perplexity compared to static baselines, with DoReMi excelling in high-resource domains and ODM effectively upweighting specialized, underrepresented domains.

- Efficiency evaluations show that the DATAFLEX framework reduces runtime for both online (LESS) and offline (TSDS) selection algorithms compared to original implementations, while uniquely enabling multi-GPU parallel scalability for online selection to handle large-scale datasets.