Command Palette

Search for a command to run...

GameplayQA : Un cadre d'évaluation pour la compréhension multimédia synchronisée à la perspective subjective de vidéos multiples, dense en décisions, appliquée aux agents virtuels 3D.

GameplayQA : Un cadre d'évaluation pour la compréhension multimédia synchronisée à la perspective subjective de vidéos multiples, dense en décisions, appliquée aux agents virtuels 3D.

Yunzhe Wang Runhui Xu Kexin Zheng Tianyi Zhang Jayavibhav Niranjan Kogundi Soham Hans Volkan Ustun

Résumé

Les LLM multimodaux sont de plus en plus déployés comme épine dorsale perceptive pour des agents autonomes évoluant dans des environnements 3D, allant de la robotique aux mondes virtuels. Ces applications exigent que les agents perçoivent des changements d'état rapides, attribuent les actions aux entités correctes et raisonnent sur des comportements multi-agents simultanés depuis une perspective à la première personne ; des capacités que les benchmarks existants n'évaluent pas de manière adéquate. Nous présentons GameplayQA, un cadre d'évaluation de la perception et du raisonnement centrés sur l'agent, fondé sur la compréhension vidéo. Plus précisément, nous annotons densément des vidéos de jeux en ligne 3D multijoueurs à raison de 1,22 étiquettes par seconde, avec des légendes synchronisées dans le temps et simultanées décrivant les états, les actions et les événements, structurées autour d'un système triadique : Soi, Autres Agents et Monde, une décomposition naturelle pour les environnements multi-agents. À partir de ces annotations, nous avons raffiné 2 400 paires de questions-réponses diagnostiques, organisées en trois niveaux de complexité cognitive, accompagnées d'une taxonomie structurée de leurres permettant une analyse fine des points où les modèles génèrent des hallucinations. L'évaluation des MLLMs de pointe révèle un écart substantiel par rapport aux performances humaines, avec des échecs récurrents dans l'ancrage temporel et inter-vidéo, l'attribution des rôles d'agent et la gestion de la densité décisionnelle du jeu. Nous espérons que GameplayQA stimulera les recherches futures à l'intersection de l'IA incarnée, de la perception agentique et de la modélisation du monde.

One-sentence Summary

Researchers from the University of Southern California introduce GAMEPLAYQA, a novel benchmark densely annotating multiplayer 3D gameplay to evaluate multimodal LLMs on agentic perception. This framework uniquely employs a triadic Self-Other-World system to expose critical failures in temporal grounding and agent attribution that prior benchmarks miss.

Key Contributions

- The paper introduces GAMEPLAYQA, a framework for evaluating agentic-centric perception and reasoning that densely annotates multiplayer 3D gameplay videos at 1.22 labels per second with time-synced captions structured around a triadic system of Self, Other Agents, and the World.

- This work presents 2.4K diagnostic QA pairs organized into three levels of cognitive complexity and a structured distractor taxonomy designed to enable fine-grained analysis of where models hallucinate.

- Experiments demonstrate a substantial performance gap between frontier MLLMs and human benchmarks, revealing specific failures in temporal grounding, agent-role attribution, and handling the high decision density of the game environment.

Introduction

Multimodal LLMs are increasingly deployed as perceptual backbones for autonomous agents in 3D environments like robotics and virtual worlds, where they must track rapid state changes and reason about concurrent multi-agent behaviors from a first-person perspective. Existing benchmarks fail to evaluate these capabilities because they rely on slow-paced, passive observations that lack the high-frequency decision loops and dense embodiment required for real-world agency. To address this gap, the authors introduce GAMEPLAYQA, a framework that densely annotates multiplayer 3D gameplay videos with time-synced captions structured around a triadic system of Self, Other Agents, and the World. They refine these annotations into 2.4K diagnostic QA pairs organized by cognitive complexity and a structured distractor taxonomy to pinpoint specific failure modes such as temporal grounding errors and agent-role attribution mistakes.

Dataset

-

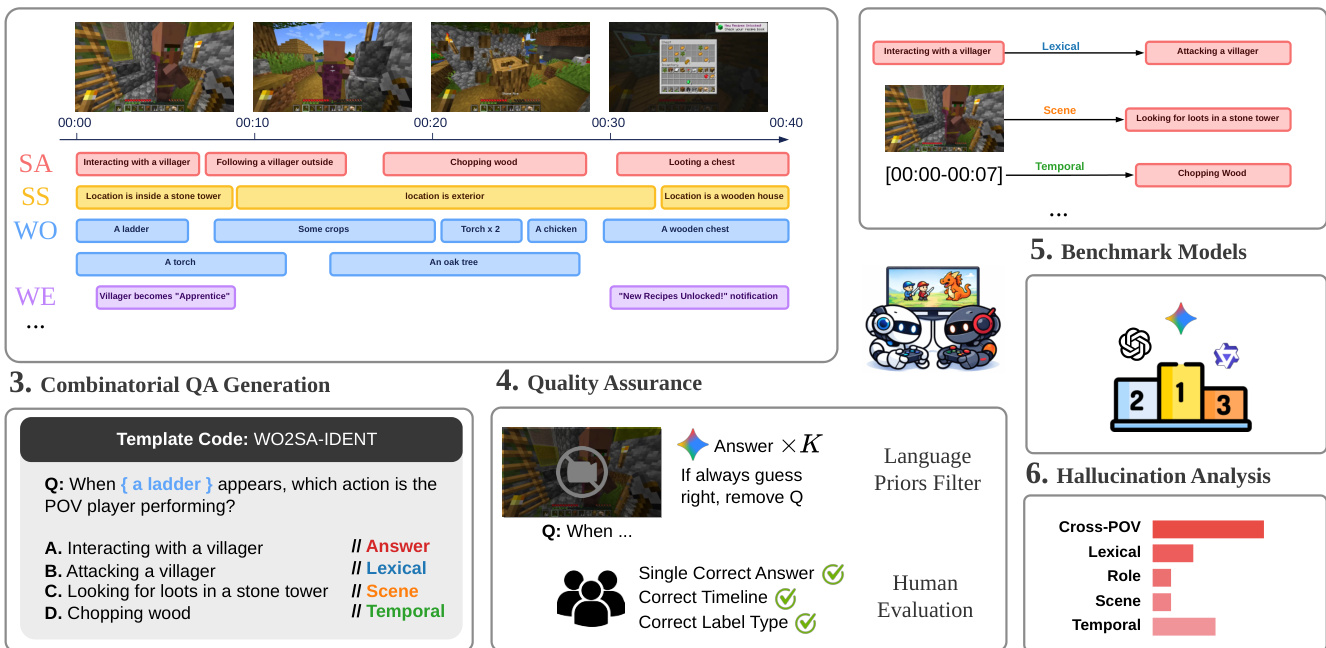

Dataset Composition and Sources The authors introduce GAMEPLAYQA, a benchmark derived from synchronized gameplay footage of 9 commercial multiplayer games spanning diverse genres. Data sources include YouTube, Twitch streams, and existing datasets. For multi-POV scenarios, the team identified streamer groups playing in the same match and manually aligned their individual recordings to create temporally synchronized video sets.

-

Key Details for Each Subset The final benchmark consists of 2,365 high-quality QA pairs generated from 2,709 true labels and 1,586 distractor labels across 2,219.41 seconds of footage. The data is organized around a Self-Other-World entity decomposition covering six primitive types: Self-Action, Self-State, Other-Action, Other-State, World-Object, and World-Event. Questions are categorized into three cognitive levels: Level 1 for basic perception, Level 2 for temporal reasoning, and Level 3 for cross-video understanding.

-

Data Usage and Generation Strategy The authors employ a combinatorial template-based algorithm to generate the dataset, initially producing 399,214 candidate pairs before downsampling to 4,000 to ensure balanced category coverage. This process systematically combines verified labels across five dimensions including video count, entity type, and distractor type. The resulting benchmark is used to evaluate MLLMs on fine-grained hallucination analysis, with distractors specifically designed to diagnose failures in lexical, temporal, role, or cross-video reasoning.

-

Processing and Annotation Workflow The pipeline utilizes a dense multi-track timeline captioning approach with a decision density of approximately 1.22 labels per second. Annotation follows a two-stage human-in-the-loop workflow where Gemini-3-Pro generates initial candidates that graduate student annotators verify and refine. The process includes a language prior filtering step where questions solvable without video input are removed, followed by human evaluation to resolve ambiguities and ensure semantic accuracy.

Method

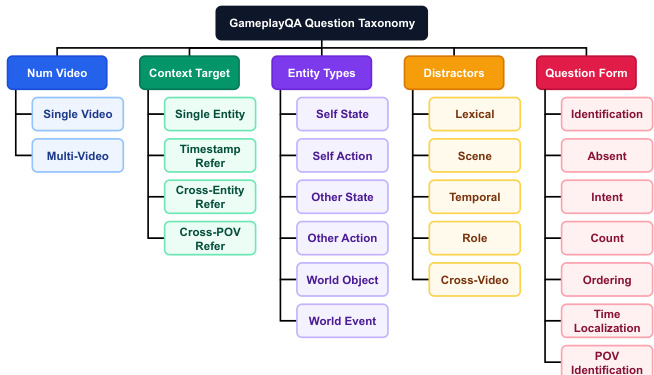

The authors establish a structured methodology for gameplay question answering, beginning with a detailed question taxonomy. Refer to the taxonomy diagram which outlines five orthogonal dimensions that define each question: Number of Videos, Context Target, Entity Types, Distractors, and Question Form. This hierarchical structure allows for the systematic generation of diverse query types ranging from simple identification to complex temporal localization.

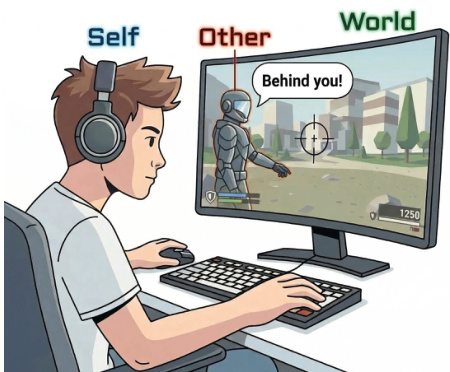

Central to the entity definition is the Self-Other-World perspective. As shown in the figure below, this framework categorizes entities into three groups: the player (Self), teammates or NPCs (Other), and the game environment (World). This tripartite division ensures that questions cover a broad spectrum of gameplay interactions and observations.

The dataset construction employs a combinatorial QA generation pipeline. Refer to the generation pipeline diagram which details the process from temporal event annotation to final question templating. The system extracts semantic segments such as Self Action and World Objects, then applies template codes to formulate multiple-choice questions. To maintain data integrity, a rigorous Quality Assurance phase is implemented. This includes an automated Language Priors Filter to eliminate questions solvable without visual input, alongside Human Evaluation to validate the answer key and timeline accuracy. For model evaluation, the authors utilize an LLM as a Judge to parse and extract the selected option from the model's raw output, ensuring consistent scoring across different benchmark models.

Experiment

- Evaluation of 16 open-source and proprietary MLLMs on GAME-PLAYQA validates a three-level cognitive hierarchy where performance consistently degrades from basic perception to temporal reasoning and cross-video understanding.

- Experiments identify Occurrence Count and Cross-Video Ordering as critical bottlenecks, confirming that current architectures struggle with sustained temporal attention and aligning events across multiple perspectives.

- Error analysis reveals that models handle static visual inputs better than temporal or cross-video distractors, with performance dropping significantly in fast-paced, decision-dense environments and when tracking other agents.

- Ablation studies demonstrate that genuine visual grounding is essential for task success, while temporal ordering is specifically critical for higher-level reasoning tasks but less so for basic perception.

- Cross-domain transfer experiments on autonomous driving and human collaboration datasets confirm that the benchmark framework generalizes to real-world spatiotemporal tasks while preserving relative difficulty rankings and model performance trends.