From Static Templates to Dynamic Runtime Graphs: A Survey of Workflow Optimization for LLM Agents


Ling Yue Kushal Raj Bhandari Ching-Yun Ko Dhaval Patel Shuxin Lin Nianjun Zhou Jianxi Gao Pin-Yu Chen Shaowu Pan

Résumé

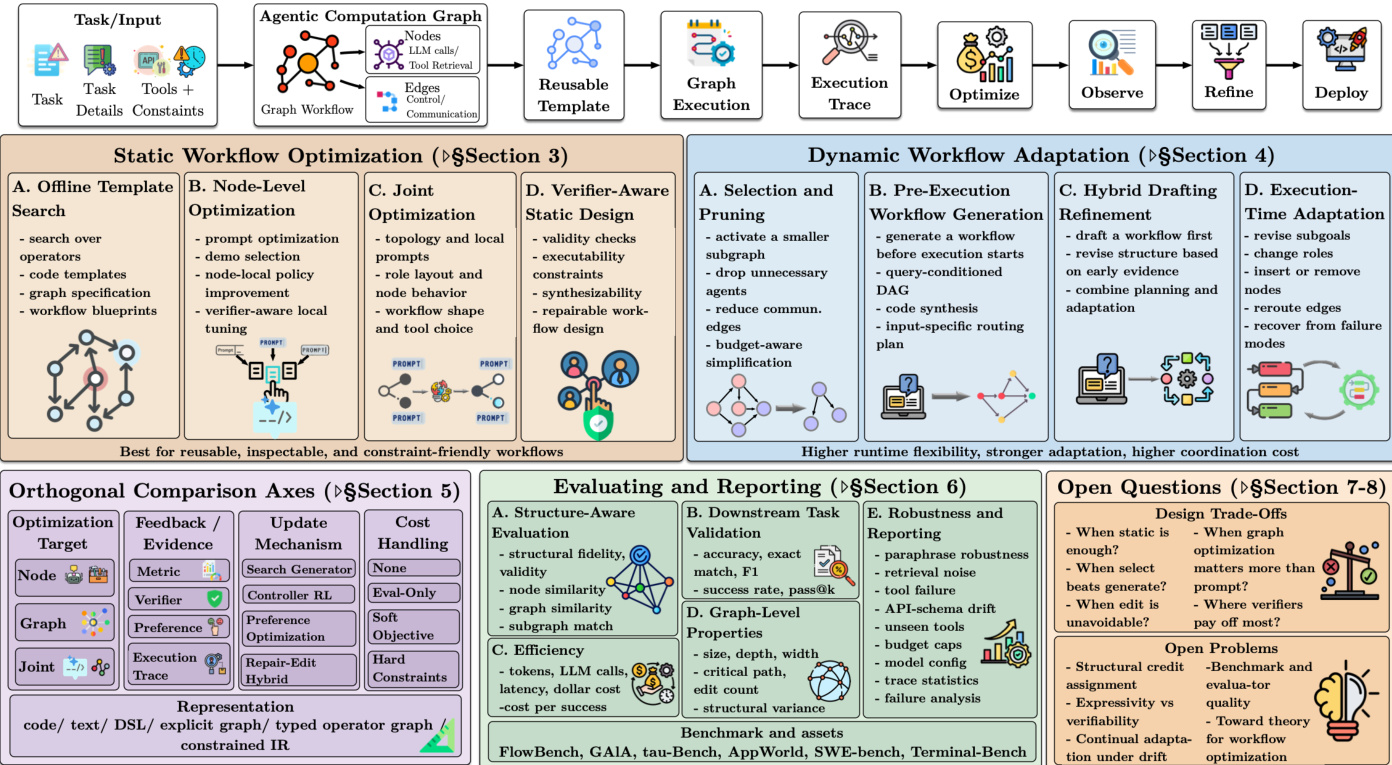

Les systèmes fondés sur les grands modèles de langage (LLM) gagnent en popularité pour résoudre des tâches en construisant des flux de travail exécutables qui intercalent des appels LLM, la récupération d'informations, l'utilisation d'outils, l'exécution de code, la mise à jour de mémoires et la vérification. Cette étude de revue examine les méthodes récentes de conception et d'optimisation de tels flux de travail, que nous considérons comme des graphes de calcul agentiques (ACG). Nous organisons la littérature selon le moment où la structure du flux de travail est déterminée ; par « structure », nous entendons quels composants ou agents sont présents, leurs dépendances mutuelles et la manière dont l'information circule entre eux. Cette perspective distingue les méthodes statiques, qui fixent un squelette de flux de travail réutilisable avant le déploiement, des méthodes dynamiques, qui sélectionnent, génèrent ou révisent le flux de travail pour une exécution donnée, avant ou pendant son exécution. Nous classons par ailleurs les travaux antérieurs selon trois dimensions : le moment de la détermination de la structure, la partie du flux de travail optimisée et les signaux d'évaluation guidant l'optimisation (par exemple, métriques de tâche, signaux de vérificateur, préférences ou feedback dérivé des traces). Nous distinguons également les modèles de flux de travail réutilisables, les graphes instantanés propres à chaque exécution et les traces d'exécution, séparant ainsi les choix de conception réutilisables des structures effectivement déployées lors d'une exécution donnée et des comportements d'exécution réalisés. Enfin, nous esquissons une perspective d'évaluation sensible à la structure, qui complète les métriques de tâche en aval par des propriétés au niveau du graphe, le coût d'exécution, la robustesse et la variation structurelle selon les entrées. 
Notre objectif est de fournir un vocabulaire clair, un cadre unifié pour positionner de nouvelles méthodes, une vision plus comparable du corpus existant et une norme d'évaluation plus reproductible pour les travaux futurs sur l'optimisation des flux de travail pour les agents LLM.

One-sentence Summary

Researchers from Rensselaer Polytechnic Institute and IBM Research propose a unified framework for agentic computation graphs, distinguishing static and dynamic workflow structures to optimize LLM agent systems. This survey introduces a structure-aware evaluation perspective that improves reproducibility and clarifies design choices for complex, tool-using workflows.

Key Contributions

- The paper introduces agentic computation graphs (ACGs) as a unifying abstraction for executable LLM workflows, distinguishing between static methods that fix scaffolds before deployment and dynamic methods that generate or revise structures during execution.

- A three-dimensional taxonomy is presented to organize existing literature based on when structure is determined, which workflow components are optimized, and the specific evaluation signals that guide the optimization process.

- A structure-aware evaluation perspective is outlined that complements downstream task metrics with graph-level properties, execution cost, robustness, and structural variation to establish a more reproducible standard for future research.

Introduction

Large language model (LLM) systems are evolving from simple chatbots into complex agentic computation graphs that coordinate tools, code execution, and verification to solve tasks. The overall workflow structure, which dictates component dependencies and information flow, often determines system effectiveness and cost more than individual model capabilities alone. However, prior research and surveys have largely treated workflow design as a fixed implementation detail or focused on adjacent topics like tool selection and agent collaboration, leaving the optimization of the workflow structure itself as a first-class object largely unaddressed. To fill this gap, the authors introduce a unified framework that treats workflows as agentic computation graphs and categorizes methods based on when the structure is determined, ranging from static offline template search to dynamic runtime generation and editing. They further synthesize the literature across optimization targets, feedback signals, and update mechanisms while proposing a new evaluation protocol that separates downstream task metrics from graph-level properties and execution costs.

Dataset

The survey does not describe a dataset. The material available for this section is an appendix (42. A.1) that catalogs supporting materials such as tables for node-level prompt optimizers, adjacent routing methods, and background frameworks. Consequently, there is no information on dataset composition, sources, subset details, training splits, or data processing strategies.

Method

The authors introduce the Agentic Computation Graph (ACG) as a unifying abstraction for executable LLM-centered workflows. In this framework, nodes perform atomic actions such as LLM calls, information retrieval, or tool use, while edges encode control, data, or communication dependencies. The overall optimization process follows a cycle where a task input is mapped to an ACG, which is then instantiated as a reusable template. This template is executed to produce a trace, which is subsequently analyzed to optimize, observe, and refine the workflow before deployment.
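The node-and-edge view described above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the node names, the stub functions standing in for LLM, retrieval, and verification calls, and the `run_acg` helper are hypothetical and do not come from the survey.

```python
from graphlib import TopologicalSorter

# Hypothetical node actions; real nodes would call an LLM, a retriever, or a tool.
def retrieve(inputs):
    return f"docs for: {inputs['query']}"

def draft(inputs):
    return f"answer using [{inputs['retrieve']}]"

def verify(inputs):
    return "ok" if "docs for" in inputs["draft"] else "fail"

NODES = {"retrieve": retrieve, "draft": draft, "verify": verify}

# Data dependencies: each node maps to the set of nodes it depends on.
EDGES = {"retrieve": set(), "draft": {"retrieve"}, "verify": {"draft"}}

def run_acg(query):
    """Execute nodes in dependency order and record a simple trace."""
    outputs = {"query": query}
    trace = []
    for name in TopologicalSorter(EDGES).static_order():
        outputs[name] = NODES[name](outputs)
        trace.append((name, outputs[name]))
    return outputs, trace

if __name__ == "__main__":
    _, trace = run_acg("what is an ACG?")
    for name, output in trace:
        print(name, "->", output)
```

The trace returned by `run_acg` is the kind of record an optimizer could inspect before revising node prompts or the dependency structure.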

As shown in the figure below:

The framework distinguishes between three key objects: the ACG template, the realized graph, and the execution trace. The template is a reusable executable specification defined as $\bar{G} = (V, E, \Phi, \Sigma, A)$, where $V$ and $E$ represent nodes and edges, $\Phi$ contains node parameters like prompts and tools, $\Sigma$ is the scheduling policy, and $A$ defines admissible actions. The realized graph $G_{\mathrm{run}}$ is the specific structure actually used for a particular run, which may differ from the template through selection or editing. The execution trace $\tau = \{(s_t, a_t, o_t, c_t)\}_{t=1}^{T}$ records the sequence of states, actions, observations, and costs produced during execution.
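One way to keep the template, the realized graph, and the trace apart in code is to give each its own type. The sketch below is a hypothetical data model, not the authors' implementation: the class and field names are assumptions, and `realize` shows only the simplest case, where a run keeps a subset of the template's nodes.

```python
from dataclasses import dataclass

@dataclass
class ACGTemplate:
    nodes: set        # V
    edges: set        # E, as (source, target) pairs
    params: dict      # Phi: per-node prompts, tools, and similar settings
    schedule: str     # Sigma: scheduling policy (only a label in this sketch)
    actions: set      # A: admissible action names

@dataclass
class RealizedGraph:
    nodes: set
    edges: set

@dataclass
class TraceStep:
    state: str
    action: str
    observation: str
    cost: float

def realize(template, keep):
    """Derive a run-specific graph by keeping a subset of the template nodes."""
    nodes = set(template.nodes) & set(keep)
    edges = {(u, v) for (u, v) in template.edges if u in nodes and v in nodes}
    return RealizedGraph(nodes, edges)

def total_cost(trace):
    """Sum the per-step costs recorded in an execution trace."""
    return sum(step.cost for step in trace)
```

Keeping the types separate makes it explicit whether a reported change affects the reusable design, the structure used in one run, or only the observed behavior.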

Workflow optimization methods are categorized based on when the structure is determined. Static methods optimize a reusable template before deployment, focusing on offline template search, node-level optimization, or joint optimization of structure and local configuration. Dynamic methods determine part of the workflow at inference time, allowing for runtime adaptation. This includes selection and pruning of a fixed super-graph, pre-execution workflow generation based on query difficulty, or in-execution editing where the structure is revised during execution in response to feedback. The optimization objective generally balances task quality $R(\tau; x)$ against execution cost $C(\tau)$, formulated as maximizing $\mathbb{E}[R(\tau; x) - \lambda C(\tau)]$.
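The quality-versus-cost objective can be made concrete with a small sketch. It assumes each candidate workflow has already been run on a few inputs and that each run is summarized as a (reward, cost) pair; the function names and the numbers in the usage example are invented for illustration.

```python
from statistics import mean

def penalized_score(runs, lam):
    """Monte Carlo estimate of E[R - lam * C] from (reward, cost) pairs."""
    return mean([reward - lam * cost for reward, cost in runs])

def pick_workflow(candidates, lam):
    """Return the name of the candidate with the highest penalized score."""
    return max(candidates, key=lambda name: penalized_score(candidates[name], lam))

if __name__ == "__main__":
    # Made-up numbers: the dynamic workflow is more accurate but costs more per run.
    candidates = {
        "static": [(0.7, 1.0), (0.8, 1.0)],
        "dynamic": [(0.9, 3.0), (0.95, 4.0)],
    }
    for lam in (0.01, 0.1):
        print(lam, pick_workflow(candidates, lam))
```

With these illustrative numbers, a small cost weight favors the dynamic workflow and a larger one favors the static workflow, which is the trade-off the weight on cost is meant to control.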

The framework also outlines orthogonal comparison axes such as optimization target (node, graph, joint), feedback mechanisms (metric, verifier, preference), and update mechanisms (search, generator, controller, RL). Evaluation involves structure-aware assessment, downstream task validation, and efficiency metrics. Finally, the authors identify open questions regarding design trade-offs, such as when static optimization suffices versus when dynamic adaptation is necessary, and the role of verifiers in ensuring workflow validity.
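The comparison axes lend themselves to a small structured record per method. The sketch below is a hypothetical encoding: the enum and class names are assumptions, only axis values named in the text are included, and the method names in the usage example are placeholders rather than classifications of real systems.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum

class Timing(Enum):
    STATIC = "static"
    DYNAMIC = "dynamic"

class Target(Enum):
    NODE = "node"
    GRAPH = "graph"
    JOINT = "joint"

class Feedback(Enum):
    METRIC = "metric"
    VERIFIER = "verifier"
    PREFERENCE = "preference"

@dataclass(frozen=True)
class MethodCard:
    name: str
    timing: Timing
    target: Target
    feedback: Feedback

def group_by_timing(cards):
    """Group method cards by when their workflow structure is determined."""
    groups = defaultdict(list)
    for card in cards:
        groups[card.timing].append(card)
    return dict(groups)

if __name__ == "__main__":
    cards = [
        MethodCard("method_a", Timing.STATIC, Target.NODE, Feedback.METRIC),
        MethodCard("method_b", Timing.DYNAMIC, Target.GRAPH, Feedback.VERIFIER),
    ]
    for timing, members in group_by_timing(cards).items():
        print(timing.value, [card.name for card in members])
```

Using enums rather than free text keeps the axes comparable across methods, since every card must pick from the same fixed set of values.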

Experiment

- A standardized classification card is used to compare methods across stable dimensions like structural settings, optimization levels, and update mechanisms, ensuring consistent evaluation rather than relying on paper-specific descriptions.

- Experiments validate that specific algorithm choices depend heavily on the available signals and evidence; for instance, search works best with trusted evaluators and discrete action spaces, while reinforcement learning suits sequential generation but requires careful reward design.

- Evaluation protocols are shown to require a separation between structure-aware assessment of workflow quality and downstream task validation to distinguish between plausible graph generation and actual task success.

- Studies demonstrate that reporting graph-level properties and robustness under perturbations, such as tool failures or schema drift, is essential to differentiate genuine structural improvements from brute-force compute or uncontrolled cost growth.
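Graph-level properties of the kind mentioned in the last point can be computed directly from a realized graph's edge list. The sketch below is one possible choice of properties (node count, edge count, and depth as the longest path measured in nodes); the function name and the selection of properties are assumptions, and the graph is assumed to be acyclic.

```python
from functools import lru_cache

def graph_stats(edges):
    """Return node count, edge count, and depth of a DAG given (source, target) edges.

    Depth is the number of nodes on the longest path. The graph is assumed to be
    acyclic; a cycle would make the recursion below fail to terminate normally.
    """
    nodes = {n for edge in edges for n in edge}
    children = {n: [] for n in nodes}
    for source, target in edges:
        children[source].append(target)

    @lru_cache(maxsize=None)
    def depth(node):
        return 1 + max((depth(child) for child in children[node]), default=0)

    return {
        "nodes": len(nodes),
        "edges": len(edges),
        "depth": max((depth(n) for n in nodes), default=0),
    }

if __name__ == "__main__":
    diamond = [("plan", "search"), ("plan", "code"),
               ("search", "answer"), ("code", "answer")]
    print(graph_stats(diamond))
```

Reporting such numbers alongside task accuracy and cost helps show whether a gain comes from a different structure or simply from a larger graph.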