Command Palette

Search for a command to run...

Dans quelle mesure l'apprentissage par renforcement non supervisé avec vérification des résultats (RLVR) peut-il mettre à l'échelle l'entraînement des LLM ?

Dans quelle mesure l'apprentissage par renforcement non supervisé avec vérification des résultats (RLVR) peut-il mettre à l'échelle l'entraînement des LLM ?

Résumé

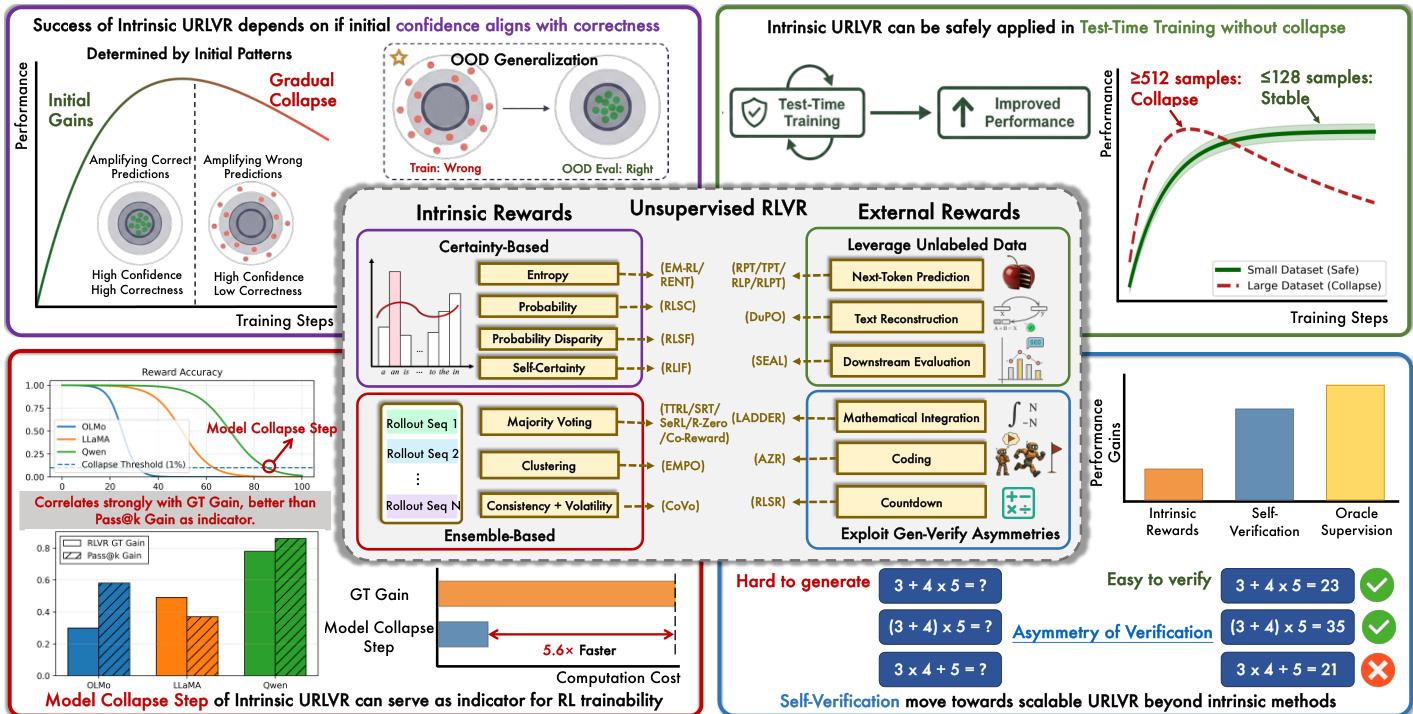

L'apprentissage par renforcement non supervisé avec récompenses vérifiables (URLVR) offre une voie pour dépasser le goulot d'étranglement de la supervision dans l'entraînement des grands modèles de langage (LLM), en générant des récompenses sans recourir à des étiquettes de vérité terrain. Des travaux récents exploitent des signaux intrinsèques au modèle, montrant des gains prometteurs dès les premières étapes ; pourtant, leur potentiel et leurs limites restent mal définis. Dans cet article, nous revisitons l'URLVR et proposons une analyse approfondie couvrant une taxonomie, des fondements théoriques et des expériences exhaustives. Nous classifions d'abord les méthodes d'URLVR selon l'origine des récompenses : intrinsèques ou externes. Ensuite, nous établissons un cadre théorique unifié révélant que toutes les méthodes intrinsèques convergent vers un affinement de la distribution initiale du modèle. Ce mécanisme d'affinement s'avère efficace lorsque la confiance initiale du modèle est alignée avec la justesse des réponses, mais échoue de manière catastrophique en cas de désalignement. Grâce à des expériences systématiques, nous montrons que les récompenses intrinsèques suivent systématiquement, quelle que soit la méthode, une dynamique de type « hausse puis chute », dont le moment de l'effondrement est déterminé par les a priori du modèle plutôt que par des choix d'ingénierie. Malgré ces limites de mise à l'échelle, nous constatons que les récompenses intrinsèques demeurent utiles pour l'entraînement au moment de l'inférence sur de petits jeux de données. Nous proposons également une mesure, le « pas d'effondrement du modèle » (Model Collapse Step), pour quantifier les a priori du modèle et servir d'indicateur pratique de la traçabilité en apprentissage par renforcement. Enfin, nous explorons des méthodes de récompense externe qui ancrent la vérification dans des asymétries computationnelles, fournissant des preuves préliminaires suggérant qu'elles pourraient échapper au plafond imposé par l'alignement confiance–justesse. Nos résultats délimitent les frontières de l'URLVR intrinsèque tout en ouvrant la voie à des alternatives évolutives.

One-sentence Summary

Researchers from Tsinghua University and collaborating institutes reveal that intrinsic unsupervised RLVR methods inevitably cause model collapse by sharpening initial distributions, proposing the Model Collapse Step metric to predict trainability while advocating external rewards for scalable LLM training.

Key Contributions

- The paper establishes a taxonomy for unsupervised RLVR and provides a unified theoretical framework showing that all intrinsic reward methods converge toward sharpening the model's initial distribution rather than discovering new knowledge.

- Extensive experiments reveal that intrinsic URLVR consistently follows a rise-then-fall pattern where performance collapses when the model's initial confidence misaligns with correctness, regardless of specific engineering choices.

- The authors propose the Model Collapse Step metric to predict RL trainability and demonstrate that intrinsic rewards remain effective for test-time training on small datasets, while external rewards grounded in computational asymmetries offer a potential path to escape these scaling limits.

Introduction

Large language models currently rely on reinforcement learning with verifiable rewards to enhance reasoning, but this approach faces a critical bottleneck as obtaining human-verified ground truth labels becomes prohibitively expensive and infeasible for superintelligent systems. Unsupervised RLVR aims to solve this by deriving rewards without labels, yet prior work relying on intrinsic model signals suffers from a fundamental rise-then-fall pattern where training initially improves performance before collapsing due to reward hacking and model degradation. The authors provide a comprehensive theoretical and empirical analysis revealing that intrinsic methods merely sharpen the model's initial distribution, which fails when confidence misaligns with correctness, while proposing the Model Collapse Step as a practical metric to predict trainability and advocating for external reward methods that leverage computational asymmetries to achieve scalable, stable improvement.

Dataset

- The training dataset consists of M prompt-answer pairs, where each entry includes a prompt xi and its corresponding ground-truth answer ai∗.

- For every prompt in the set, the authors generate N rollout responses using the current policy πθ.

- Each generated response contains a full reasoning trajectory and an extracted answer derived from that trajectory.

- The data serves as the foundation for training, where the model learns from the relationship between the initial prompts, the generated trajectories, and the verified ground-truth answers.

Method

The authors leverage a framework for Unsupervised Reinforcement Learning with Verifiable Rewards (URLVR) that eliminates the need for ground-truth labels by utilizing proxy intrinsic rewards generated solely by the model. This approach distinguishes between two primary paradigms for constructing rewards: Certainty-Based and Ensemble-Based methods.

Certainty-Based rewards derive signals from the policy's confidence, such as logits or entropy, operating on the assumption that higher confidence correlates with correctness. These methods include estimators like Self-Certainty, which measures the KL divergence from a uniform distribution, and Token-Level Entropy, which penalizes uncertainty at each generation step. Conversely, Ensemble-Based rewards leverage the wisdom of the crowd by generating multiple rollouts for the same prompt. They assume that consistency across these diverse candidate solutions, often formalized through majority voting or semantic clustering, serves as a robust proxy for correctness.

The underlying mechanism driving these methods is a sharpening process where the model converges towards its initial distribution. Theoretically, the training dynamics follow a KL-regularized RL objective. The optimal policy for this objective has the closed form:

πθ∗(y∣x)=Z(x)1πref(y∣x)exp(β1r(x,y))where Z(x) is the partition function and β controls regularization strength. For intrinsic rewards like majority voting, this creates a "rich-get-richer" dynamic. If the model's initial confidence aligns with correctness, the sharpening mechanism amplifies these correct predictions, leading to performance gains. However, if the initial confidence is misaligned, the same mechanism systematically reinforces errors, leading to a gradual collapse in performance.

To monitor this stability, the authors introduce the Model Collapse Step as an indicator of trainability. This metric tracks the training step where reward accuracy drops below a specific threshold, such as 1%. Models with stronger priors sustain intrinsic URLVR for longer periods before collapsing, allowing for efficient base model selection without the computational cost of full reinforcement learning training. This framework highlights the critical dependency of intrinsic URLVR success on the alignment between initial confidence and correctness.

Experiment

- Intrinsic URLVR methods universally exhibit a rise-then-fall pattern where early gains from aligning confidence with correctness eventually collapse into reward hacking, regardless of hyperparameter tuning or specific reward design.

- Fine-grained analysis reveals that training primarily amplifies the model's initial preferences rather than correcting errors on specific problems, yet this sharpening can still generalize to improve performance on unseen out-of-distribution tasks if initial confidence aligns with correctness.

- Model collapse is prevented when training on small, domain-specific datasets or during test-time training, as these conditions induce localized overfitting rather than the systematic policy shifts that cause failure in large-scale training.

- The "Model Collapse Step," measuring when reward accuracy drops during intrinsic training, serves as a rapid and accurate predictor of a model's potential for RL gains, outperforming static metrics like pass@k.

- External reward methods leveraging generation-verification asymmetry, such as self-verification, offer a more scalable path than intrinsic rewards by providing signals grounded in computational procedures rather than the model's internal confidence.