Trust Your Model: Distribution-Guided Confidence Calibration

Xizhong Yang Haotian Zhang Huiming Wang Mofei Song

Abstract

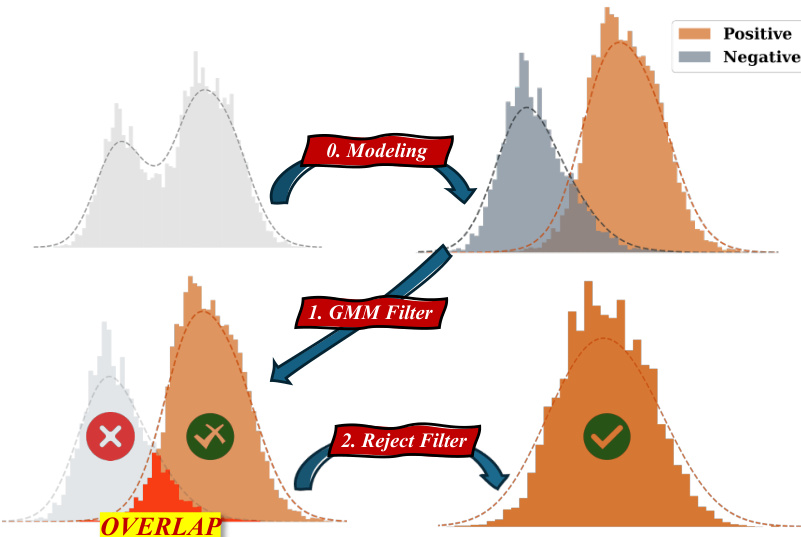

Large Reasoning Models have demonstrated remarkable performance thanks to advances in test-time scaling techniques, which improve prediction accuracy by generating multiple candidate responses and selecting the most reliable one. Although prior work has shown that internal model signals such as confidence scores can partially indicate whether a response is correct, and that they exhibit a distributional correlation with correctness, this distributional information has not been fully exploited to guide answer selection. Motivated by this, we propose DistriVoting, a method that incorporates distributional priors as a signal complementary to the confidence score during voting. Specifically, our approach (1) first decomposes the mixed distribution of confidence scores into positive and negative components using Gaussian Mixture Models, and then (2) applies a reject filter based on positive and negative samples drawn from these components to reduce the overlap between the two distributions. In addition, to further mitigate this overlap from the perspective of the distribution itself, we introduce SelfStepConf, a method that uses step-level confidence to dynamically adjust the inference process, increasing the separation between the two distributions and improving the reliability of the confidence scores used in voting. Experiments on 16 models and 5 benchmarks show that our method significantly outperforms state-of-the-art approaches.

One-sentence Summary

Researchers from Southeast University and Kuaishou Technology propose DistriVoting, a novel framework that enhances Large Reasoning Models by integrating distributional priors with confidence scores. By decomposing confidence distributions and introducing SelfStepConf for dynamic inference adjustment, this method significantly improves answer selection reliability across diverse benchmarks compared to existing state-of-the-art approaches.

Key Contributions

- Large Reasoning Models struggle to evaluate answer quality during test-time scaling due to a lack of external reward signals, leaving distributional correlations between confidence scores and correctness underutilized for answer selection.

- The proposed DistriVoting method decomposes mixed confidence distributions into positive and negative components using Gaussian Mixture Models and applies a reject filter to mitigate overlap, while SelfStepConf dynamically adjusts the inference process using step-level confidence to further separate these distributions.

- Experiments across 16 models and 5 benchmarks, including HMMT2025 and AIME, demonstrate that this approach significantly outperforms state-of-the-art methods by improving the reliability of confidence-based voting.

Introduction

Large Reasoning Models rely on test-time scaling to generate multiple candidate answers, yet selecting the most accurate response remains difficult due to the absence of external labels during inference. While prior methods utilize internal confidence scores to guide this selection, they often fail to fully exploit the distinct statistical distributions that separate correct answers from incorrect ones, leading to significant overlap between high-confidence errors and low-confidence correct responses. To address this, the authors propose DistriVoting, a method that decomposes confidence distributions into positive and negative components using Gaussian Mixture Models to filter out unreliable samples before voting. They further introduce SelfStepConf, which dynamically adjusts the inference process using step-level confidence to increase the separation between correct and incorrect distributions, thereby significantly improving answer selection accuracy across various models and benchmarks.

Method

The proposed framework consists of two primary components: SelfStepConf (SSC) for dynamic confidence adjustment during inference, and DistriVoting for post-inference trajectory filtering and aggregation.

SelfStepConf (SSC). The authors leverage token negative log-probabilities to assess trajectory quality in real time. For a trajectory containing $N$ tokens, the trajectory confidence $C_{\text{traj}}$ is defined as the average negative log-probability of the top-$k$ tokens in the final answer segment:

$$C_{\text{traj}} = -\frac{1}{N_G \times k} \sum_{i \in G} \sum_{j=1}^{k} \log P_i(j)$$

where $G$ represents the subset of tokens used for confidence computation and $N_G$ is the number of tokens in $G$. During inference, the system monitors the step-wise confidence $C_{G_m}$. If the relative change $\Delta_{\text{conf}}$ falls below a threshold $\delta$ and the confidence is declining, the system triggers self-reflection. This involves injecting reflection tokens by swapping the highest-probability token's probability with that of a reflection token, which forces the model to reconsider its reasoning path without disrupting the confidence calculation mechanism.
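To make the confidence definition and the reflection trigger concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. The function names are hypothetical, the input layout (one list of top-$k$ log-probabilities per token in $G$) is an assumption, and since the summary does not give the exact form of $\Delta_{\text{conf}}$, the sketch assumes it is the relative change between consecutive step confidences, with a negative threshold $\delta$.

```python
import math
from typing import Sequence


def trajectory_confidence(topk_logprobs: Sequence[Sequence[float]]) -> float:
    """Average negative log-probability over the top-k candidates of each token in G.

    topk_logprobs[i][j] holds log P_i(j): the log-probability of the j-th
    ranked candidate at the i-th token of G (assumed input layout).
    """
    n_g = len(topk_logprobs)          # N_G: number of tokens in G
    k = len(topk_logprobs[0])         # k: candidates kept per token
    total = sum(sum(row) for row in topk_logprobs)
    return -total / (n_g * k)


def should_reflect(prev_conf: float, curr_conf: float, delta: float = -0.1) -> bool:
    """Hypothetical trigger: confidence is declining AND the relative change
    (curr - prev) / |prev| is below the threshold delta (assumed definition)."""
    if prev_conf == 0:
        return False
    rel_change = (curr_conf - prev_conf) / abs(prev_conf)
    return curr_conf < prev_conf and rel_change < delta


# Two tokens in G, top-2 candidates each, with probabilities 0.5 and 0.25.
logprobs = [[math.log(0.5), math.log(0.25)], [math.log(0.5), math.log(0.25)]]
conf = trajectory_confidence(logprobs)
```

In this toy input every token contributes the same pair of log-probabilities, so the result is simply the mean of $-\log 0.5$ and $-\log 0.25$. The default `delta` value is illustrative only; the summary does not report the threshold the authors use.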

DistriVoting. Following inference, the method employs a distribution-based voting strategy to select the final answer from the generated trajectories. This process involves modeling the confidence distribution, filtering out low-quality samples, and performing hierarchical voting.

The core of this pipeline involves modeling the confidence scores of the sampled trajectories as a mixture of two Gaussian distributions, representing positive (correct) and negative (incorrect) reasoning paths. The authors apply a Gaussian Mixture Model (GMM) to approximate this bimodal distribution:

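$$p(x) = \pi_1 \, \mathcal{N}(x \mid \mu_1, \sigma_1^2) + \pi_2 \, \mathcal{N}(x \mid \mu_2, \sigma_2^2)$$

where $\pi_1$ and $\pi_2$ are mixing weights. The component with the higher mean is mapped to the positive distribution, while the component with the lower mean corresponds to the negative distribution.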
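The two-component fit and the higher-mean-is-positive rule can be sketched as follows. This is an illustrative, dependency-free EM loop, not the authors' code. The initialization (splitting the sorted scores at the median), the fixed iteration count, the variance floor, and the hard assignment by posterior are all assumptions made for the example; in practice a library implementation of Gaussian mixtures would be the more usual choice.

```python
import math
from typing import List, Sequence, Tuple


def normal_pdf(x: float, mu: float, var: float) -> float:
    return math.exp(-((x - mu) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def fit_two_component_gmm(
    xs: Sequence[float], iters: int = 200
) -> Tuple[List[float], List[float], List[float]]:
    """Fit p(x) = pi_1 N(x|mu_1, var_1) + pi_2 N(x|mu_2, var_2) with plain EM."""
    s = sorted(xs)
    half = len(s) // 2
    # Assumed initialization: means of the lower and upper halves.
    mu = [sum(s[:half]) / half, sum(s[half:]) / (len(s) - half)]
    mean = sum(xs) / len(xs)
    var0 = max(sum((x - mean) ** 2 for x in xs) / len(xs), 1e-6)
    var = [var0, var0]
    pi = [0.5, 0.5]
    for _ in range(iters):
        # E-step: posterior responsibility of each component for each score.
        resp = []
        for x in xs:
            w = [pi[c] * normal_pdf(x, mu[c], var[c]) for c in range(2)]
            z = w[0] + w[1]
            resp.append([0.5, 0.5] if z == 0 else [w[0] / z, w[1] / z])
        # M-step: re-estimate weights, means, and variances.
        for c in range(2):
            nk = sum(r[c] for r in resp)
            if nk < 1e-12:
                continue
            mu[c] = sum(r[c] * x for r, x in zip(resp, xs)) / nk
            var[c] = max(sum(r[c] * (x - mu[c]) ** 2 for r, x in zip(resp, xs)) / nk, 1e-6)
            pi[c] = nk / len(xs)
    return pi, mu, var


def split_by_component(
    xs: Sequence[float], pi: List[float], mu: List[float], var: List[float]
) -> Tuple[List[int], List[int]]:
    """Return (positive indices, negative indices); higher-mean component is positive."""
    pos = 0 if mu[0] >= mu[1] else 1
    neg = 1 - pos
    pos_idx, neg_idx = [], []
    for i, x in enumerate(xs):
        p_pos = pi[pos] * normal_pdf(x, mu[pos], var[pos])
        p_neg = pi[neg] * normal_pdf(x, mu[neg], var[neg])
        (pos_idx if p_pos >= p_neg else neg_idx).append(i)
    return pos_idx, neg_idx


scores = [1.0, 1.1, 0.9, 1.05, 0.95, 3.0, 3.1, 2.9, 3.05, 2.95]
pi, mu, var = fit_two_component_gmm(scores)
pos_idx, neg_idx = split_by_component(scores, pi, mu, var)
```

On these synthetic scores the two clusters are far apart, so the split is clean. Real confidence scores overlap, which is the situation the subsequent filtering stage is meant to handle.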

As shown in the figure below:

The framework proceeds through three distinct stages illustrated in the diagram. First, in the Modeling stage, the raw confidence histograms are fitted to the GMM components. Second, the GMM Filter separates the trajectories into potentially correct ($V_{\text{pos}}$) and potentially incorrect ($V_{\text{neg}}$) sets based on the fitted distributions. However, significant overlap often exists between these distributions. To address this, the Reject Filter stage uses the negative set to identify and eliminate false positive samples from the candidate pool. Specifically, the method identifies the most likely incorrect answer from the negative set and removes trajectories from the positive set that agree with this incorrect answer, yielding the refined candidate pool $\hat{V}_{\text{pos}}$.
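The Reject Filter step described above can be sketched in a few lines. This is a hedged illustration rather than the authors' procedure: the summary does not say how the "most likely incorrect answer" is chosen, so the sketch assumes it is the most frequent answer in the negative set. The fallback that keeps the original pool when everything would be removed is also an added safeguard, not something stated in the source. The `Trajectory` type and `reject_filter` name are hypothetical.

```python
from collections import Counter
from typing import List, Tuple

Trajectory = Tuple[str, float]  # (final answer, confidence score)


def reject_filter(v_pos: List[Trajectory], v_neg: List[Trajectory]) -> List[Trajectory]:
    """Drop positive-set trajectories whose answer matches the dominant negative answer."""
    if not v_neg:
        return list(v_pos)
    # Assumption: the most frequent answer in V_neg is the likely incorrect one.
    rejected, _ = Counter(answer for answer, _ in v_neg).most_common(1)[0]
    refined = [t for t in v_pos if t[0] != rejected]
    # Added safeguard (not from the source): never return an empty pool.
    return refined if refined else list(v_pos)


v_pos = [("42", 3.1), ("17", 2.9), ("42", 3.0)]
v_neg = [("17", 1.0), ("17", 1.2), ("8", 0.9)]
refined_pos = reject_filter(v_pos, v_neg)
```

Here the answer `"17"` dominates the negative set, so the one positive-set trajectory that also answers `"17"` is treated as a likely false positive and removed.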

Finally, the method employs Hierarchical Voting (HierVoting) on the refined positive pool. The confidence scores are divided into $N_C$ sub-intervals. Within each interval, a weighted majority vote selects a sub-answer. These sub-answers are then aggregated with a final weighted majority vote to determine the final answer $A_{\text{final}}$. This hierarchical approach compensates for potential performance deficiencies in the filtering stages by using confidence intervals to make the vote more robust.
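A minimal sketch of the two-level vote follows. Several details are assumptions, since the summary does not specify them: the sub-intervals are taken to be equal-width bins over the observed confidence range, each trajectory's vote is weighted by its confidence, and each sub-answer enters the final vote with the total weight it received in its interval. The function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Trajectory = Tuple[str, float]  # (final answer, confidence score)


def weighted_vote(items: List[Tuple[str, float]]) -> Tuple[str, float]:
    """Return the answer with the largest summed weight, and that weight."""
    totals: Dict[str, float] = defaultdict(float)
    for answer, weight in items:
        totals[answer] += weight
    best = max(totals, key=totals.get)
    return best, totals[best]


def hier_voting(trajectories: List[Trajectory], n_c: int) -> str:
    """Vote inside each of n_c confidence sub-intervals, then vote across sub-answers."""
    confs = [conf for _, conf in trajectories]
    lo, hi = min(confs), max(confs)
    # Assumed binning: equal-width intervals; fall back to width 1.0 if all scores are equal.
    width = (hi - lo) / n_c or 1.0
    bins: Dict[int, List[Trajectory]] = defaultdict(list)
    for answer, conf in trajectories:
        idx = min(int((conf - lo) / width), n_c - 1)  # clamp the maximum into the last bin
        bins[idx].append((answer, conf))
    # First level: one (sub-answer, weight) pair per non-empty interval.
    sub_answers = [weighted_vote(items) for items in bins.values()]
    # Second level: weighted vote over the sub-answers.
    final_answer, _ = weighted_vote(sub_answers)
    return final_answer


pool = [("A", 1.0), ("A", 1.1), ("B", 1.2), ("B", 2.0), ("B", 2.1), ("A", 2.2)]
a_final = hier_voting(pool, n_c=2)
```

With two intervals, the lower bin elects `"A"` and the upper bin elects `"B"`; the upper bin carries more total weight, so `"B"` wins the final vote.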

Experiment

- DistriVoting and SelfStepConf (SSC) are validated as superior to existing test-time scaling methods like Self-Consistency and Best-of-N, demonstrating that distribution-aware voting and enhanced confidence differentiation significantly improve reasoning accuracy.

- The adaptive GMM Filter consistently outperforms fixed Top-50 filtering by effectively modeling the bimodal distribution of correct and incorrect trajectories, leading to higher quality candidate pools for voting.

- SSC enhances voting performance by increasing the separation between confidence distributions of correct and incorrect samples, which improves the reliability of the filtering process without expanding the model's fundamental reasoning limits.

- Ablation studies confirm that the GMM Filter is the most critical component of DistriVoting, while the Reject Filter provides additional gains only when built upon a robust distribution split.

- The proposed methods show robustness across various model sizes and budgets, with performance gains becoming more pronounced as sample sizes increase to provide reliable distributional information.

- Step splitting using paragraph-level delimiters and reflection triggers based on confidence drops are identified as effective strategies for maintaining logical integrity and improving trajectory quality during inference.