Command Palette

Search for a command to run...

Fish-Speech : Exploiter les Large Language Models pour une synthèse Text-to-Speech multilingue avancée

Fish-Speech : Exploiter les Large Language Models pour une synthèse Text-to-Speech multilingue avancée

Shijia Liao Yuxuan Wang Tianyu Li Yifan Cheng Ruoyi Zhang Rongzhi Zhou Yijin Xing

Résumé

Voici la traduction de votre texte en français, réalisée selon les standards de la communication scientifique et technologique :Les systèmes de Text-to-Speech (TTS) sont confrontés à des défis persistants concernant le traitement de caractéristiques linguistiques complexes, la gestion des expressions polyphoniques et la production d'une parole multilingue naturelle — des capacités cruciales pour les futures applications de l'IA. Dans cet article, nous présentons Fish-Speech, un nouveau framework qui implémente une architecture sérielle rapide-lente « Dual Autoregressive » (Dual-AR) afin d'améliorer la stabilité du Grouped Finite Scalar Vector Quantization (GFSQ) dans les tâches de génération de séquences. Cette architecture optimise l'efficacité du traitement du codebook tout en maintenant des sorties de haute fidélité, ce qui la rend particulièrement efficace pour les interactions d'IA et le voice cloning. Fish-Speech s'appuie sur des Large Language Models (LLMs) pour l'extraction de caractéristiques linguistiques, éliminant ainsi la nécessité de la conversion traditionnelle grapheme-to-phoneme (G2P), ce qui permet de rationaliser le pipeline de synthèse et de renforcer la prise en charge multilingue. De plus, nous avons développé FF-GAN via le GFSQ pour atteindre des taux de compression supérieurs et une utilisation du codebook proche de 100 %. Notre approche remédie aux limitations majeures des systèmes TTS actuels tout en posant les bases d'une synthèse vocale plus sophistiquée et sensible au contexte. Les résultats expérimentaux démontrent que Fish-Speech surpasse significativement les modèles de référence (baselines) dans la gestion de scénarios linguistiques complexes et les tâches de voice cloning, prouvant ainsi son potentiel pour faire progresser la technologie TTS dans les applications d'IA.

One-sentence Summary

The authors propose Fish-Speech, a novel multilingual text-to-speech framework that utilizes a serial fast-slow Dual-AR architecture to enhance Grouped Finite Scalar Vector Quantization stability and leverages Large Language Models for linguistic feature extraction to eliminate traditional G2P conversion, achieving high-fidelity voice cloning and superior compression through FF-GAN.

Key Contributions

- The paper introduces Fish-Speech, a framework featuring a serial fast-slow Dual Autoregressive (Dual-AR) architecture designed to improve the stability of Grouped Finite Scalar Vector Quantization (GFSQ) during sequence generation.

- This work implements a non-grapheme-to-phoneme (non-G2P) structure that utilizes Large Language Models (LLMs) for linguistic feature extraction, which streamlines the synthesis pipeline and enhances multilingual support.

- The researchers developed FF-GAN through GFSQ to achieve superior compression ratios and near 100% codebook utilization, resulting in a system that outperforms baseline models in complex linguistic scenarios and voice cloning tasks.

Introduction

High-quality Text-to-Speech (TTS) systems are essential for advancing AI interactions, voice cloning, and multilingual virtual assistants. Traditional architectures often rely on grapheme-to-phoneme (G2P) conversion, which struggles with context-dependent polyphonic words and requires complex, language-specific phonetic rules that hinder scalability. Furthermore, many existing solutions must trade off semantic understanding for acoustic stability, which can limit their effectiveness in voice cloning. The authors leverage a novel Fish-Speech framework that utilizes Large Language Models (LLMs) for direct linguistic feature extraction, effectively eliminating the need for G2P conversion. Their main contribution is a serial fast-slow Dual Autoregressive (Dual-AR) architecture paired with a new Firefly-GAN (FFGAN) vocoder, which achieves high-fidelity synthesis, near 100% codebook utilization, and significantly lower latency than traditional DiT or Flow-based structures.

Dataset

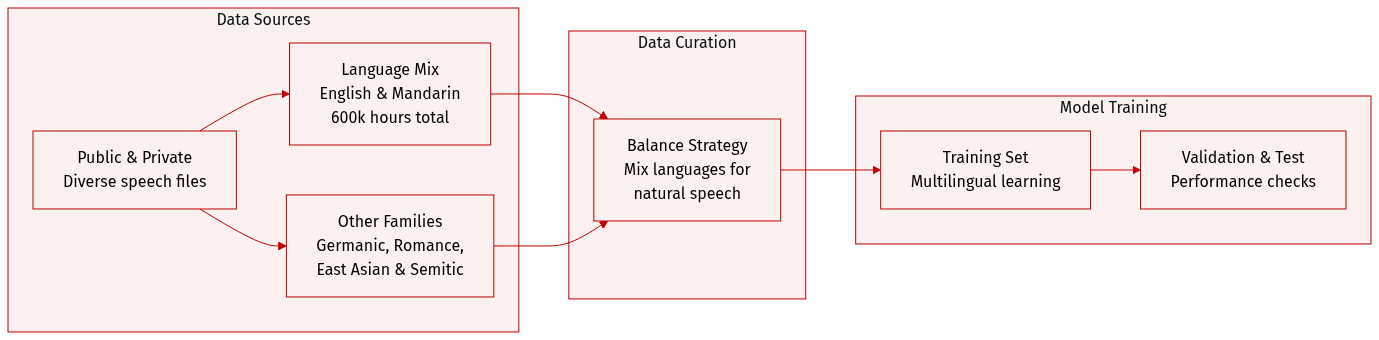

The authors constructed a massive multilingual speech dataset totaling approximately 720,000 hours, sourced from a combination of public repositories and proprietary data collection.

- Dataset Composition and Sources: The collection is built from diverse public sources and internal data collection processes to ensure high variety.

- Subset Details:

- English and Mandarin Chinese: These serve as the primary components, with 300,000 hours allocated to each language.

- Other Language Families: The authors included 20,000 hours each for Germanic (German), Romance (French, Italian), East Asian (Japanese, Korean), and Semitic (Arabic) language families.

- Data Processing and Strategy: The authors implemented a careful balancing strategy across different languages. This specific distribution is designed to facilitate simultaneous multilingual learning and improve the model's ability to generate natural mixed-language content.

Method

The Fish-Speech framework is designed as a high-performance Text-to-Speech (TTS) system capable of handling multi-emotional and multilingual speech synthesis. The authors leverage a hierarchical approach that combines a dual autoregressive architecture with an advanced vector quantization technique to ensure both stability and efficiency.

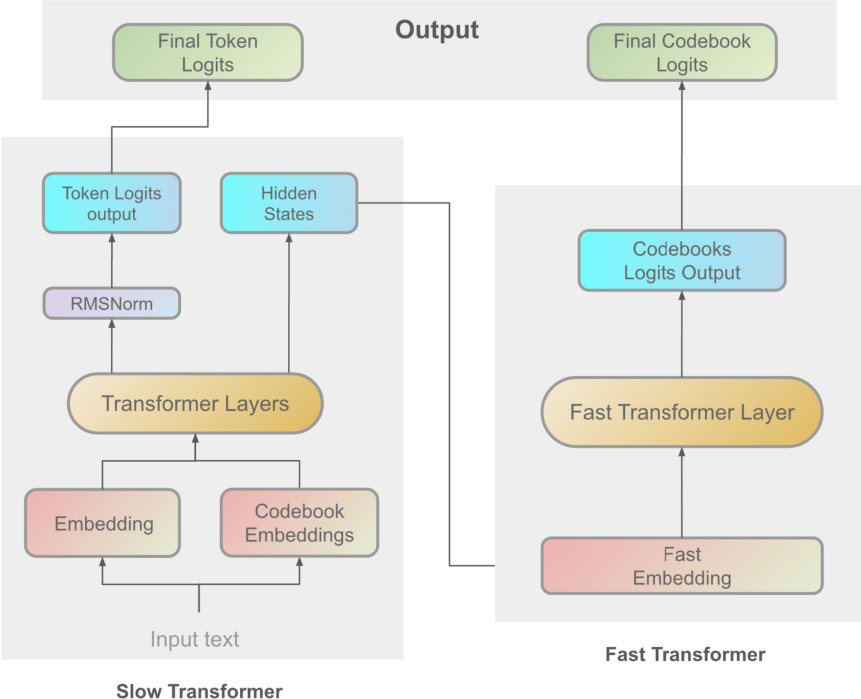

The core of the system is the Dual Autoregressive (Dual-AR) architecture, which processes speech information through two sequential transformer modules: the Slow Transformer and the Fast Transformer. As shown in the architectural overview of the Dual-AR framework:

The Slow Transformer operates at a high level of abstraction, taking input text embeddings to capture global linguistic structures and semantic content. It generates intermediate hidden states h and predicts semantic token logits z via the following transformations:

h=SlowTransformer(x)z=Wtok⋅Norm(h)where Norm(⋅) represents layer normalization and Wtok denotes the learnable parameters of the token prediction layer. Following this, the Fast Transformer refines the output by processing detailed acoustic features. It takes a concatenated sequence of the hidden states h and codebook embeddings c as input:

h~=[h;c],(hfast)

hfast=FastTransformer(h~,(hfast))

y=Wcbk⋅Norm(hfast)

where Wcbk contains the learnable parameters for codebook prediction, and y represents the resulting codebook logits. This hierarchical design enhances sequence generation stability and optimizes codebook processing.

To facilitate efficient quantization, the authors introduce Grouped Finite Scalar Vector Quantization (GFSQ). This method divides the input feature matrix Z into G groups:

Z=[Z(1),Z(2),…,Z(G)]

Each scalar within a group undergoes quantization, and the resulting indices are used to decode the quantized vectors. The quantized vectors from all groups are then concatenated along the channel dimension to reconstruct the quantized downsampled tensor zqd:

zqd(b,:,l)=[zqd(1)(b,:,l);zqd(2)(b,:,l);…;zqd(G)(b,:,l)]

Finally, an upsampling function fup restores the tensor to its original size.

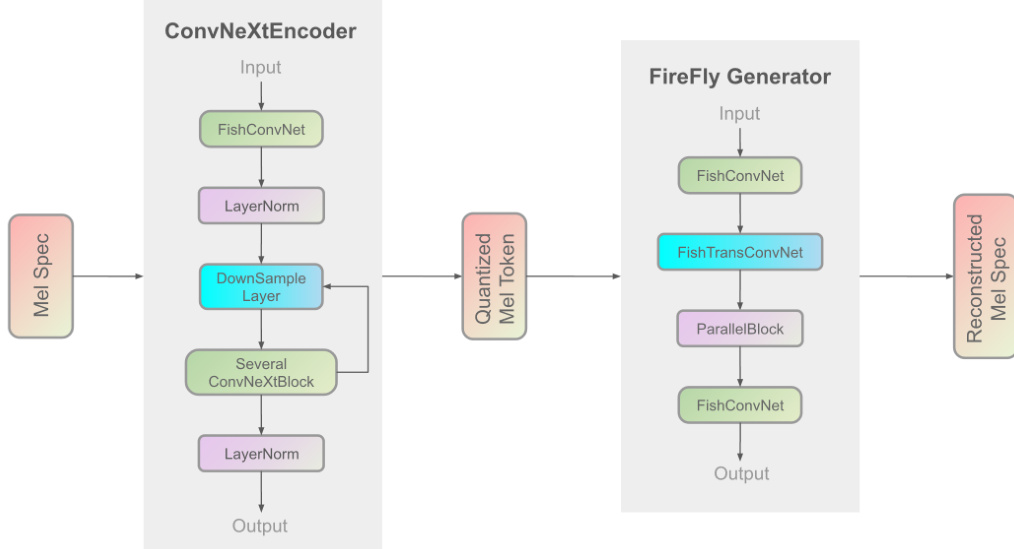

The speech synthesis is completed by the Firefly-GAN (FF-GAN) vocoder. This component improves upon traditional architectures by replacing the Multi-Receptive Field (MRF) module with a ParallelBlock, which utilizes a stack-and-average mechanism and configurable convolution kernel sizes. The internal structure of the FireFly Generator is illustrated below:

The overall training process follows a three-stage pipeline: initial pre-training on large-scale standard data, Supervised Fine-Tuning (SFT) on high-quality datasets, and Direct Preference Optimization (DPO) using manually labeled positive and negative sample pairs. The training infrastructure is divided into separate components for the AR model and the vocoder to optimize resource utilization.

Experiment

The evaluation framework utilizes objective metrics, subjective listening tests, and inference speed benchmarks to validate the model's performance in voice cloning and real-time processing. Results demonstrate that the GFSQ and typo-codebook strategies significantly enhance codebook utilization, speaker similarity, and content fidelity compared to baseline models. Ultimately, the system achieves high speech naturalness and efficient real-time inference, making it a robust architecture for high-quality speech synthesis and AI agent applications.

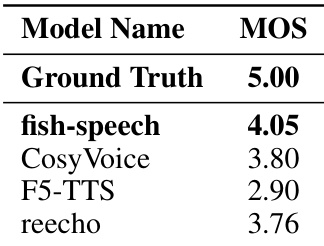

The authors conduct a subjective evaluation of synthesized audio quality using Mean Opinion Score (MOS) ratings. The results demonstrate that the proposed fish-speech model achieves higher perceptual quality scores compared to the tested baseline models. Fish-speech outperforms baseline models like CosyVoice, F5-TTS, and reecho in subjective quality ratings The proposed method shows superior performance in terms of speech naturalness and speaker similarity The results indicate that the model better captures and reproduces natural human speech characteristics

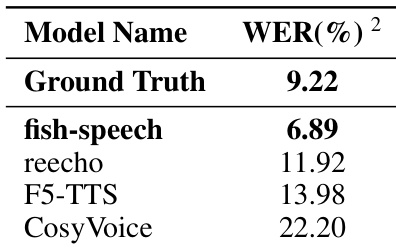

The authors evaluate the performance of the fish-speech model in voice cloning tasks by measuring the Word Error Rate. The results demonstrate that the proposed model achieves higher intelligibility compared to several baseline models. The fish-speech model achieves a lower word error rate than all listed baseline models The model's performance in terms of content fidelity even surpasses the ground truth recordings The proposed methodology shows a significant improvement in synthetic stability over competing models

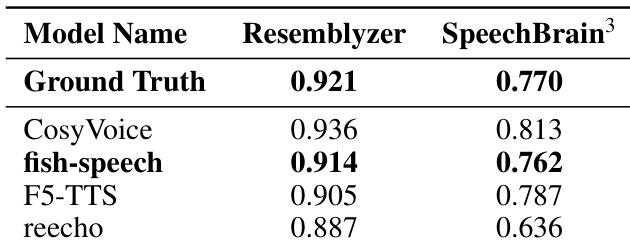

The authors evaluate speaker similarity across different models using Resemblyzer and SpeechBrain metrics. The results demonstrate that the proposed fish-speech model closely approximates the ground truth performance in both evaluation frameworks. The fish-speech model achieves speaker similarity scores that are remarkably close to the ground truth. The proposed method outperforms baseline models such as F5-TTS and reecho in capturing speaker characteristics. Consistent performance across both evaluation metrics validates the model's ability to preserve speaker identity.

The authors evaluate the Fish-Speech model through subjective quality assessments, voice cloning intelligibility tests, and speaker similarity measurements. The results demonstrate that the proposed model superiorly captures natural human speech characteristics and maintains high content fidelity compared to several baseline models. Furthermore, the model shows a remarkable ability to preserve speaker identity and provides enhanced synthetic stability across different evaluation frameworks.