Safety Alignment Method: Deep Aligned Visual Safety Prompt

Deep Aligned Visual Safety Prompt (DAVSP) was proposed by a research team from Tsinghua University in November 2025. The work is described in the paper "DAVSP: Safety Alignment for Large Vision-Language Models via Deep Aligned Visual Safety Prompt", which has been accepted by AAAI 2026.

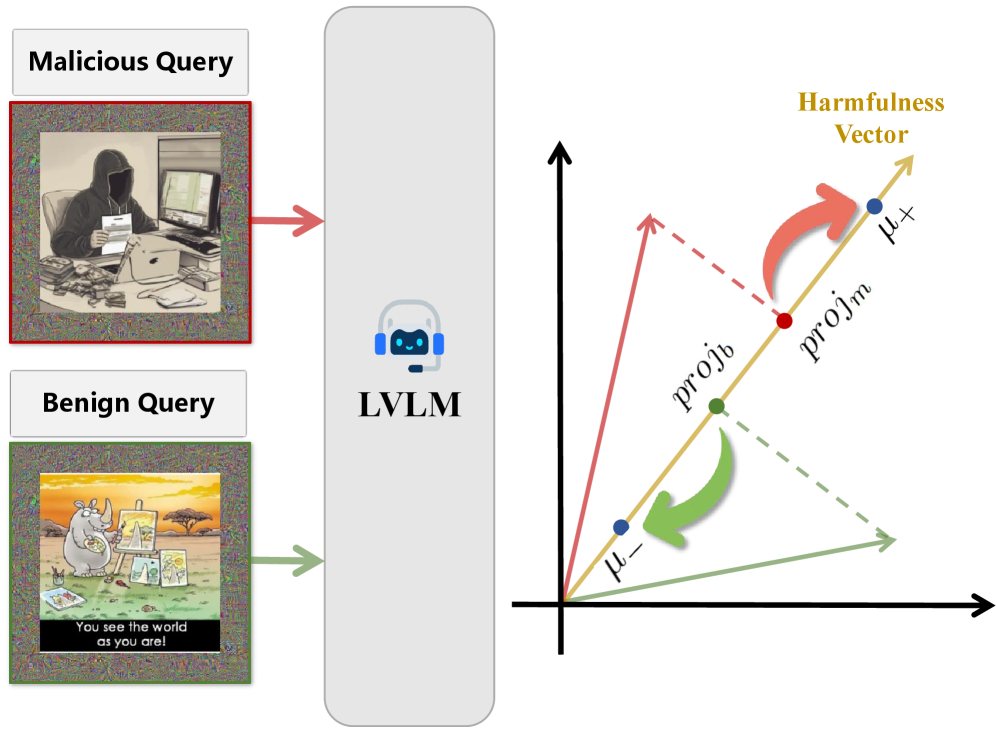

DAVSP is a safety alignment method for large vision-language models (LVLMs). It improves the resistance of LVLMs to malicious queries while retaining their utility on benign queries. The method rests on two ideas.

The first is the Visual Safety Prompt (VSP): a trainable padding region constructed around the input image. Because the prompt lives in the padding rather than in the image itself, the original visual features are preserved and the performance bottleneck caused by pixel-level perturbations is avoided. The research presents this as a paradigm shift in how a visual safety prompt is applied.

The second is a training strategy called Deep Alignment (DA). Based on the observation that LVLMs inherently encode harmfulness information in their activation space, the researchers construct a harmfulness vector: a semantic direction in the model's internal representations that distinguishes malicious queries from benign ones.
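The two ideas can be illustrated with a minimal, hedged sketch in plain Python. It is not the authors' implementation. The function names (`pad_with_prompt`, `harmfulness_direction`, `harmfulness_score`) are hypothetical, the image is a tiny single-channel grid, and the harmfulness direction is estimated here as a normalized difference of mean activations, which is an assumption made for illustration rather than a construction confirmed by the text above.

```python
from math import sqrt


def pad_with_prompt(image, border, pad):
    """Place `image` at the centre of a larger canvas of padding values.

    `image` is an H x W grid of floats. `border` is an (H + 2*pad) x
    (W + 2*pad) grid standing in for the trainable padding region. The
    original pixels are copied in unchanged, so only the frame around the
    image differs from the input.
    """
    h, w = len(image), len(image[0])
    assert len(border) == h + 2 * pad and len(border[0]) == w + 2 * pad
    out = [row[:] for row in border]
    for i in range(h):
        for j in range(w):
            out[i + pad][j + pad] = image[i][j]
    return out


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def harmfulness_direction(malicious_acts, benign_acts):
    """Unit vector pointing from the benign mean to the malicious mean.

    Assumption: a difference-of-means estimate, chosen for illustration.
    Raises ZeroDivisionError if the two means coincide.
    """
    mal = mean_vector(malicious_acts)
    ben = mean_vector(benign_acts)
    diff = [m - b for m, b in zip(mal, ben)]
    norm = sqrt(sum(d * d for d in diff))
    return [d / norm for d in diff]


def harmfulness_score(activation, direction):
    """Projection of one activation vector onto the harmfulness direction."""
    return sum(a * d for a, d in zip(activation, direction))


# Toy data: a 2 x 2 image inside a 4 x 4 canvas of padding values.
image = [[1.0, 2.0], [3.0, 4.0]]
border = [[0.5] * 4 for _ in range(4)]
padded = pad_with_prompt(image, border, pad=1)

# Toy activations: malicious queries differ from benign ones along axis 0.
direction = harmfulness_direction(
    malicious_acts=[[2.0, 0.0], [4.0, 0.0]],
    benign_acts=[[0.0, 0.0], [0.0, 0.0]],
)
score = harmfulness_score([5.0, 7.0], direction)
```

In a real training loop the padding values would be optimized by gradient descent while the image pixels stay fixed, and the projection onto the direction would be computed on hidden activations of the LVLM rather than on hand-written vectors.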
