"Streamlining LLM Workflows with Apache Airflow: A Beginner’s Guide"

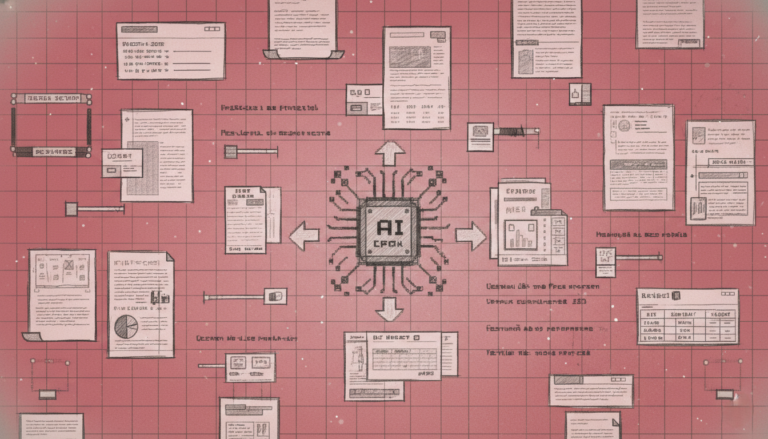

Large Language Models (LLMs) have become a cornerstone of artificial intelligence, transforming the way we approach natural language processing tasks. However, managing the workflows and agents that interact with these models can be complex and daunting. This is where Apache Airflow comes in: it provides a robust framework for orchestrating and automating these processes. In this article, we explore how to get started with Apache Airflow, specifically in the context of LLM workflows and agents.

### What is Apache Airflow?

Apache Airflow is an open-source platform for programmatically authoring, scheduling, and monitoring workflows. Originally developed at Airbnb, it has since become a widely used tool in the data engineering and machine learning communities. Airflow lets you define workflows as Directed Acyclic Graphs (DAGs): collections of tasks connected by dependencies, with no cycles. Tasks that do not depend on each other can run in parallel, while dependent tasks run in sequence. This makes Airflow a good fit for managing the multi-step pipelines involved in training, deploying, and maintaining LLMs.

### Key Features of Apache Airflow

1. **Scalability**: Airflow can run on a single machine for small personal projects and can be scaled out to distribute tasks across many workers (for example, with the CeleryExecutor or KubernetesExecutor) for large enterprise workloads.
2. **Flexibility**: Workflows are defined in Python, making it easy to integrate with existing codebases and libraries.
3. **Reliability**: Failed tasks can be retried automatically (configured per task with settings such as `retries` and `retry_delay`), and Airflow can send alerts, such as failure emails or failure callbacks, when issues arise.
4. **Visibility**: The Airflow web interface provides a clear, intuitive way to monitor the status of workflows and tasks.
5. **Extensibility**: Airflow can be extended with custom operators, sensors, and hooks to support a wide range of use cases.

### Setting Up Apache Airflow

To get started with Apache Airflow, install it and set up a basic local environment. The commands below target Airflow 2.x; Airflow 3 changed some of them, so check the documentation for the version you install.

1. **Install Airflow**: You can install Airflow using pip, the Python package manager.
   Check which Python versions the Airflow release you install supports (recent releases no longer support Python 3.7), then open a terminal and run:

   ```
   pip install apache-airflow
   ```

   For reproducible installs, the Airflow project recommends pinning dependencies with the constraints file published for your Airflow and Python versions; see the official installation guide.

2. **Initialize the Database**: Airflow uses a metadata database to store information about workflows and their runs. Initialize it by running:

   ```
   airflow db migrate
   ```

   On Airflow releases older than 2.7, the equivalent command is `airflow db init`.

3. **Create a User**: To access the Airflow web interface, create a user with administrative privileges. Replace the example email address with your own; the command prompts you for a password:

   ```
   airflow users create --username admin --firstname Admin --lastname User --role Admin --email admin@example.com
   ```

4. **Start the Web Server**: Launch the Airflow web server, then open http://localhost:8080 in your browser:

   ```
   airflow webserver --port 8080
   ```

5. **Start the Scheduler**: The scheduler triggers workflows according to their schedules and queues their tasks for execution. In a second terminal, start it with:

   ```
   airflow scheduler
   ```

### Defining a Workflow

Once Airflow is set up, you can start defining workflows. Each workflow is a Python script placed in Airflow’s DAGs folder, which by default is `$AIRFLOW_HOME/dags` (with `AIRFLOW_HOME` defaulting to `~/airflow`). The scheduler scans this folder periodically, so new files are picked up automatically. Here’s a simple example of a workflow that trains an LLM and then deploys it; the two task functions are placeholders for your own training and deployment code:

```python
from datetime import datetime, timedelta

from airflow import DAG
from airflow.operators.python import PythonOperator

# Default arguments applied to every task in the DAG
default_args = {
    'owner': 'airflow',
    'depends_on_past': False,
    'start_date': datetime(2023, 10, 1),
    'email_on_failure': False,
    'email_on_retry': False,
    'retries': 1,
    'retry_delay': timedelta(minutes=5),
}

# Define the DAG
dag = DAG(
    'llm_training_pipeline',
    default_args=default_args,
    description='A simple pipeline to train and deploy an LLM',
    schedule=timedelta(days=1),  # on Airflow < 2.4, use schedule_interval= instead
    catchup=False,  # don't create a backlog of runs for every day since start_date
)

# Task callables (placeholders for real training and deployment code)
def train_llm():
    # Code to train the LLM
    print("Training the LLM...")

def deploy_llm():
    # Code to deploy the trained LLM
    print("Deploying the LLM...")

# Create the tasks
train_task = PythonOperator(
    task_id='train_llm',
    python_callable=train_llm,
    dag=dag,
)

deploy_task = PythonOperator(
    task_id='deploy_llm',
    python_callable=deploy_llm,
    dag=dag,
)

# Set the task dependencies: deployment runs after training
train_task >> deploy_task
```

In this example, the `train_llm` function is responsible for training the LLM, and the `deploy_llm` function handles the deployment. The `>>` operator makes `deploy_task` downstream of `train_task`, so with the default trigger rule, deployment runs only after training has completed successfully. Setting `catchup=False` stops Airflow from creating one run for every day between the 2023 `start_date` and today when the DAG is first enabled.

### Managing Dependencies

One of Airflow’s strengths is managing dependencies between tasks. This is crucial in LLM workflows, where the output of one step often serves as the input to the next. For instance, a data preprocessing step must complete before training can begin. You can express this by adding a preprocessing task to the same DAG file:

```python
def preprocess_data():
    # Code to preprocess data
    print("Preprocessing data...")

preprocess_task = PythonOperator(
    task_id='preprocess_data',
    python_callable=preprocess_data,
    dag=dag,
)

# Set the dependencies: preprocess, then train, then deploy
preprocess_task >> train_task >> deploy_task
```

Because this chain also includes the `train_task >> deploy_task` relationship, it can replace the dependency line from the earlier example.

### Monitoring and Debugging

Airflow’s web interface is a powerful tool for monitoring and debugging workflows. You can view the status of each task, read its logs, and rerun a task by clearing it. The interface also provides a graph view of your DAG, making the flow of your workflow easy to follow.

### Extending Airflow

While Airflow’s built-in operators are sufficient for many use cases, more complex tasks may call for extending it. You can create custom operators, sensors, and hooks to integrate with specific tools and services; for example, you might create a custom operator to interact with a cloud-based machine learning platform.

### Best Practices

1. **Modularize Your Code**: Break your workflow into smaller, reusable functions to improve maintainability.
2. **Use Variables and Connections**: Store configuration details in Airflow Variables and credentials in Connections instead of hard-coding them, making your DAGs more flexible and secure.
3. **Document Your Workflows**: Add comments and documentation to your DAGs to make them easier to understand and maintain.
4. **Test Your DAGs**: Test your DAGs regularly to confirm they work as expected and to catch issues early. For example, `airflow dags test llm_training_pipeline` executes a single run of the example DAG locally.

### Conclusion

Apache Airflow is a powerful tool for managing and automating LLM workflows and agents. By defining your workflows as DAGs, you make the dependencies between steps explicit, and Airflow takes care of scheduling, retries, and monitoring. The web interface gives you insight into and control over your workflows, making them easier to monitor and debug. With its scalability, flexibility, and extensibility, Airflow is well suited to the complexities of modern AI projects. Whether you’re a data scientist, machine learning engineer, or DevOps professional, Airflow can significantly improve how you manage your workflows.
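The DAGs in this article declare dependencies with `>>`, and Airflow starts a task only after its upstream tasks have finished. As a rough mental model (this is not how Airflow’s scheduler is implemented), the sketch below uses Python’s standard-library `graphlib` module (Python 3.9+) to group the example tasks into "waves" whose members could run in parallel. The extra `fetch_base_model` task is hypothetical, added only to show two independent branches; actual parallelism in Airflow also depends on the executor and concurrency settings.

```python
from graphlib import CycleError, TopologicalSorter

# Each task maps to the set of tasks it depends on (its upstream tasks),
# mirroring preprocess_task >> train_task >> deploy_task.
# "fetch_base_model" is a hypothetical task, not part of the article's DAG.
dependencies = {
    "preprocess_data": set(),
    "fetch_base_model": set(),
    "train_llm": {"preprocess_data", "fetch_base_model"},
    "deploy_llm": {"train_llm"},
}


def execution_waves(graph):
    """Group tasks into waves; tasks in the same wave depend only on
    earlier waves, so they could run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises CycleError if the graph contains a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for deterministic output
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave as finished
    return waves


for number, wave in enumerate(execution_waves(dependencies), start=1):
    print(f"wave {number}: {wave}")

# A graph with a loop has no valid execution order.
try:
    execution_waves({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("cycle detected: not a valid DAG")
```

Running it prints three waves (the two independent tasks, then training, then deployment) followed by the cycle message. The cycle check illustrates why Airflow requires workflows to be acyclic: a loop leaves no task that can start first.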
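The example DAG combines a `start_date` of October 1, 2023 with a daily schedule. In Airflow 2.x, catchup is enabled by default (unless `catchup_by_default` is changed in the scheduler configuration), so when such a DAG is first enabled the scheduler creates a run for every completed interval since `start_date`. The hypothetical helper below is a simplified model of that calculation for timedelta schedules; it is not Airflow’s own code and ignores `end_date`, timezones, and concurrency limits.

```python
from datetime import datetime, timedelta


def backfill_intervals(start_date, interval, now):
    """Return the (start, end) data intervals that a timedelta-scheduled DAG
    with catchup enabled would create runs for: every interval that has
    fully ended by `now`. Simplified model for illustration only."""
    intervals = []
    start = start_date
    while start + interval <= now:  # a run fires only after its interval ends
        intervals.append((start, start + interval))
        start += interval
    return intervals


runs = backfill_intervals(
    start_date=datetime(2023, 10, 1),  # start_date from the example DAG
    interval=timedelta(days=1),        # daily schedule from the example DAG
    now=datetime(2023, 10, 5),         # hypothetical "current" time
)
for start, end in runs:
    print(f"{start:%Y-%m-%d} -> {end:%Y-%m-%d}")
```

With a `now` four days after the start date, this yields four intervals. Against a date months or years later, the same function returns hundreds of intervals, each of which would be a full training-and-deployment run. Passing `catchup=False` to the DAG makes Airflow schedule only the most recent interval instead.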
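The example’s `default_args` set `retries` to 1 and `retry_delay` to five minutes. In Airflow, `retries` counts retries rather than total attempts, so a task that keeps failing is tried at most twice, and each retry becomes eligible `retry_delay` after the previous attempt ends. The sketch below models that timing under stated assumptions: each attempt fails after a hypothetical fixed duration, the delay is constant (no `retry_exponential_backoff`), and scheduler latency is ignored.

```python
from datetime import datetime, timedelta


def attempt_start_times(first_start, attempt_duration, retries, retry_delay):
    """Earliest start time of each attempt for a task that fails every time.

    Simplified model of fixed-delay retries: retries + 1 attempts in total,
    each retry eligible retry_delay after the previous attempt ends.
    """
    starts = []
    start = first_start
    for _ in range(retries + 1):
        starts.append(start)
        # next attempt: previous attempt runs, fails, then waits retry_delay
        start = start + attempt_duration + retry_delay
    return starts


# retries and retry_delay come from the example's default_args;
# the 10-minute attempt duration is a hypothetical assumption.
times = attempt_start_times(
    first_start=datetime(2023, 10, 2, 0, 0),
    attempt_duration=timedelta(minutes=10),
    retries=1,
    retry_delay=timedelta(minutes=5),
)
print([t.strftime("%H:%M") for t in times])
```

Under these assumptions the task starts at 00:00 and, after failing, once more at 00:15; if the second attempt also fails, the task is marked as failed.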