A Post-00s Tsinghua University Student Uses AI to Defeat the Atmosphere's "Magic Attack" and Restore the True Appearance of the Universe

The atmosphere is vital to life on Earth, but for astronomical observation it causes problems such as poor visibility, poor seeing, and atmospheric extinction. Even the best ground-based telescopes in the world produce blurry images. This blur sometimes leads to erroneous physical measurements and slows astronomers' research.

To meet this challenge, researchers including Li Tianao of Tsinghua University and Emma Alexander of Northwestern University in the United States recently trained a computer vision algorithm on simulated data to sharpen and “restore” astronomical images. The algorithm was also matched to the imaging parameters of the Vera C. Rubin Observatory, making the tool directly compatible with the observatory when it opens next year.

The research results, titled "Galaxy image deconvolution for weak gravitational lensing with unrolled plug-and-play ADMM", have been published in Monthly Notices of the Royal Astronomical Society.

The research results were published in Monthly Notices of the Royal Astronomical Society

Paper address:

https://academic.oup.com/mnrasl/article/522/1/L31/7075894

Experiment details: Using AI algorithms to "demystify" astronomical images

In this paper, the research team benchmarked classical and modern deblurring methods on realistic simulated data, comparing ellipticity error, computation time, and sensitivity to errors in the point spread function (PSF). The results show that unrolled-ADMM reduces the error by 38.6% compared with the traditional deblurring method and by 7.4% compared with the modern method.

Modern Methods GitHub Address:

https://github.com/lukeli0425/galaxy-deconv
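To make the idea behind plug-and-play ADMM more concrete, here is a minimal NumPy sketch. It is not the authors' network: it assumes a simple quadratic data term with circular (FFT) convolution, a fixed step size `rho` where the paper learns it with a CNN, and a trivial non-negativity projection standing in for the ResUNet denoiser. The names `pnp_admm` and `nonneg_denoiser` are hypothetical.

```python
import numpy as np

def nonneg_denoiser(v):
    """Stand-in 'denoiser' (assumption): project onto non-negative images."""
    return np.maximum(v, 0.0)

def pnp_admm(y, psf, denoise=nonneg_denoiser, n_iters=8, rho=0.1):
    """Plug-and-play ADMM for y = psf * x + noise (circular convolution).

    psf must have the same shape as y and be centered at pixel (n // 2).
    """
    H = np.fft.fft2(np.fft.ifftshift(psf))   # PSF transfer function
    Hty = np.conj(H) * np.fft.fft2(y)        # H^T y in Fourier space
    z = y.copy()                             # denoised (split) variable
    u = np.zeros_like(y)                     # scaled dual variable
    for _ in range(n_iters):
        # x-update: closed-form solution of the quadratic subproblem
        rhs = Hty + rho * np.fft.fft2(z - u)
        x = np.real(np.fft.ifft2(rhs / (np.abs(H) ** 2 + rho)))
        # z-update: the plugged-in denoiser replaces the prior's proximal step
        z = denoise(x + u)
        # dual update
        u = u + x - z
    return z
```

"Unrolling" means fixing the number of iterations (the paper trains separate networks for N = 2, 4, 8) and training the denoiser and step size end to end through this loop.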

Image Processing

To benchmark the classical methods and train the neural network, each clean ground-truth image must be paired with the true PSF and the noisy blurred image. The researchers used GalSim (a modular galaxy image simulation toolkit) and the COSMOS real galaxy dataset to simulate ground-based observations and create the experimental datasets.

GalSim:

https://github.com/GalSim-developers/GalSim

COSMOS real galaxy dataset:

https://doi.org/10.5281/zenodo.3242143

The original images are randomly cropped and rotated to simulate weak lensing effects, and then convolved with random atmospheric and optical PSFs and scaled in brightness before adding Gaussian noise. Finally, all images are downsampled to the pixel scale of LSST.
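The degradation steps described above (convolve with a PSF, scale the brightness, add Gaussian noise, downsample) can be sketched in plain NumPy as follows. This is only an illustration under stated assumptions, not the GalSim-based pipeline the researchers used: the function name `simulate_observation`, the circular FFT convolution, the flux-based brightness scaling, and the integer block-sum downsampling are all choices made for this sketch, and the random cropping and rotation are left out.

```python
import numpy as np

def simulate_observation(clean, psf, flux, noise_sigma, factor, rng):
    """Blur, rescale, add noise, then downsample a clean galaxy image.

    clean, psf  : 2-D arrays of the same shape, psf centered at pixel (n // 2)
    flux        : total flux of the blurred image after scaling (assumption)
    noise_sigma : standard deviation of the additive Gaussian noise
    factor      : integer downsampling factor (hypothetical parameter)
    """
    # 1. Convolve with the PSF (circular convolution via the FFT)
    H = np.fft.fft2(np.fft.ifftshift(psf))
    blurred = np.real(np.fft.ifft2(np.fft.fft2(clean) * H))
    # 2. Scale the brightness to the requested total flux
    scaled = blurred * (flux / blurred.sum())
    # 3. Add Gaussian noise
    noisy = scaled + rng.normal(0.0, noise_sigma, size=scaled.shape)
    # 4. Downsample by summing factor x factor pixel blocks
    h2, w2 = noisy.shape[0] // factor, noisy.shape[1] // factor
    cropped = noisy[:h2 * factor, :w2 * factor]
    return cropped.reshape(h2, factor, w2, factor).sum(axis=(1, 3))
```

Summing blocks, rather than averaging them, keeps the total flux unchanged when the pixel scale becomes coarser.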

The dataset is now available on HyperAI's official website:

https://hyper.ai/datasets/23544

Neural network training

The researchers trained the networks on 40,000 samples with the Adam optimizer on an NVIDIA GeForce RTX 2080 Ti GPU. Training ran for 50 epochs with a multi-scale L1 loss between the ground-truth images and the reconstructed images. A separate network was trained for each number of iterations (N = 2, 4, 8), with ResUNet as the plug-in denoiser (17,007,744 parameters). Skip connections were introduced into the network to avoid the vanishing or exploding gradients caused by more iterations and a deeper network. A CNN (80,236 parameters) was trained to learn the step-size hyperparameter.

The researchers implemented the neural network training in PyTorch and also provide dataset generation and benchmarking tools.

GitHub address:

https://github.com/Lukeli0425/Galaxy-Deconv
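As a rough illustration of what a multi-scale L1 loss measures, the NumPy sketch below averages the L1 difference between two images at several resolutions. The exact scales and weighting used in the paper are not given in this article, so the average pooling, the scales `(1, 2, 4)`, and the equal weights are assumptions, and the function names are hypothetical. The actual training code is written in PyTorch.

```python
import numpy as np

def avg_pool(img, k):
    """Average-pool a 2-D image over non-overlapping k x k blocks."""
    h, w = img.shape
    h2, w2 = h // k, w // k
    return img[:h2 * k, :w2 * k].reshape(h2, k, w2, k).mean(axis=(1, 3))

def multiscale_l1(pred, target, scales=(1, 2, 4)):
    """Mean absolute error averaged over several pooling scales."""
    losses = [
        np.mean(np.abs(avg_pool(pred, s) - avg_pool(target, s)))
        for s in scales
    ]
    return sum(losses) / len(losses)
```

With these assumptions, a pixel-level checkerboard error is penalized only at the finest scale, while a constant brightness offset is penalized equally at every scale.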

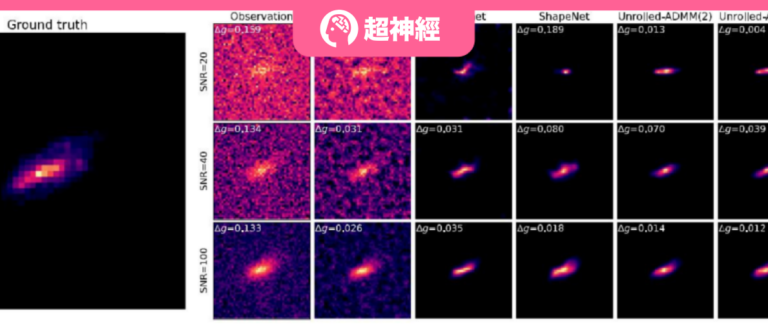

Performance at different SNR levels

The researchers tested the proposed method at different signal-to-noise ratio (SNR) levels and compared its performance with other algorithms (as shown in the figure below). At low SNR, the Richardson-Lucy algorithm produces a noisier reconstruction, in which the galaxy is gradually lost in the noisy background pattern.
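For readers unfamiliar with the Richardson-Lucy algorithm, a minimal NumPy version of the standard multiplicative update is sketched below. It is not taken from the paper's benchmark code; it assumes circular (FFT) convolution, a PSF normalized to unit sum, and a flat starting image.

```python
import numpy as np

def richardson_lucy(y, psf, n_iters=30, eps=1e-12):
    """Richardson-Lucy deconvolution of a non-negative image y.

    psf must have the same shape as y, sum to 1, and be centered at (n // 2).
    """
    H = np.fft.fft2(np.fft.ifftshift(psf))

    def conv(img, kernel_ft):
        return np.real(np.fft.ifft2(np.fft.fft2(img) * kernel_ft))

    # Start from a flat image with the same mean as the data
    x = np.full_like(y, max(y.mean(), eps))
    for _ in range(n_iters):
        # Ratio of the data to the current re-blurred estimate
        ratio = y / np.maximum(conv(x, H), eps)
        # Correlate the ratio with the PSF (conjugate transfer function)
        x = x * conv(ratio, np.conj(H))
    return x
```

Because the update multiplies the estimate by a data-to-model ratio and has no explicit regularization, noise in `y` is amplified as the iterations proceed, which is consistent with the noisy reconstructions reported at low SNR.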

Although the modern methods can suppress background noise, they distort the shape of the galaxy, which is especially noticeable at low SNR. The researchers used the FPFS suite for ellipticity estimation, either combined with a known PSF for inverse filtering or with a single-point PSF as a stable estimator without inverse filtering, and applied it to the outputs of all deconvolution methods compared in the paper.
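To show what an ellipticity estimate is, the sketch below computes the two ellipticity components from unweighted second moments of an image. This is a simple textbook stand-in, not the FPFS estimator used in the paper, and the function name `ellipticity` is hypothetical.

```python
import numpy as np

def ellipticity(img):
    """Ellipticity (e1, e2) from unweighted second moments of a 2-D image."""
    h, w = img.shape
    yy, xx = np.mgrid[:h, :w]
    flux = img.sum()
    # Flux-weighted centroid
    xc = (img * xx).sum() / flux
    yc = (img * yy).sum() / flux
    # Central second moments
    qxx = (img * (xx - xc) ** 2).sum() / flux
    qyy = (img * (yy - yc) ** 2).sum() / flux
    qxy = (img * (xx - xc) * (yy - yc)).sum() / flux
    size = qxx + qyy
    return (qxx - qyy) / size, 2.0 * qxy / size
```

For a Gaussian galaxy with widths of 4 and 2 pixels along x and y, this gives e1 = (16 - 4) / (16 + 4) = 0.6 and e2 = 0. Unweighted moments are very sensitive to background noise, which is one reason dedicated estimators are used in practice.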

As the SNR decreases, all methods except the unrolled-ADMM proposed in this paper gradually become ineffective.

As shown in the figure above, the 8-iteration unrolled-ADMM network proposed in the paper performs best across the different SNR levels.

Time-Performance Tradeoff

As shown in the figure below, the galaxy image signal-to-noise ratios corresponding to the three images are 20, 40, and 100. The error bar shows 5 times the standard error, and the number in brackets indicates the number of iterations.
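For reference, an error bar of 5 times the standard error can be computed from a set of per-galaxy errors as sketched below. The use of the sample standard deviation (`ddof=1`) is an assumption, since the article does not say which convention the paper follows, and `error_bar` is a hypothetical helper.

```python
import numpy as np

def error_bar(errors, scale=5.0):
    """Return the mean error and the half-length of a scaled error bar."""
    errors = np.asarray(errors, dtype=float)
    # Standard error of the mean: sample standard deviation / sqrt(n)
    sem = errors.std(ddof=1) / np.sqrt(errors.size)
    return errors.mean(), scale * sem
```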

The 8-iteration unrolled-ADMM method proposed in the paper shows better performance and lower variance than the other methods at different SNR levels, but at the cost of longer computation time.

Robustness to systematic errors in PSF

In actual observations, the PSF at the center of a galaxy is calculated by interpolating the PSFs measured from stars. However, the spatial variation of the PSF across the field of view is often difficult to model, so interpolation cannot accurately reconstruct the effective PSF for each galaxy. This requires the deconvolution algorithm to be robust to systematic errors in the PSF.

As shown in the figure below, the left figure corresponds to the shear error in the assumed PSF, and the right figure corresponds to the size error (FWHM) in the PSF. The cartoon bar below the horizontal axis can intuitively display the systematic error of the PSF.
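The two kinds of PSF error can be illustrated with a toy elliptical Gaussian PSF, as in the sketch below. The paper's simulated PSFs combine atmospheric and optical components, so the Gaussian shape here is only a stand-in, and the names `gaussian_psf` and `perturbed_psf`, as well as treating the size error as a relative change in FWHM, are assumptions of this sketch.

```python
import numpy as np

def gaussian_psf(size, fwhm, g1=0.0, g2=0.0):
    """Normalized Gaussian PSF with FWHM in pixels and shear (g1, g2)."""
    sigma = fwhm / (2.0 * np.sqrt(2.0 * np.log(2.0)))
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    # Map pixel coordinates back through the inverse shear matrix
    det = 1.0 - g1 ** 2 - g2 ** 2
    u = ((1.0 - g1) * xx - g2 * yy) / det
    v = (-g2 * xx + (1.0 + g1) * yy) / det
    psf = np.exp(-(u ** 2 + v ** 2) / (2.0 * sigma ** 2))
    return psf / psf.sum()

def perturbed_psf(size, fwhm, shear_err=(0.0, 0.0), fwhm_err=0.0):
    """PSF with a shear error and a relative FWHM error applied."""
    return gaussian_psf(size, fwhm * (1.0 + fwhm_err), *shear_err)
```

A robustness test of this kind blurs the images with the true PSF but hands the perturbed PSF to the deconvolution method, then tracks how the ellipticity error grows with the size of the perturbation.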

The proposed unrolled-ADMM, like the classical methods, is more sensitive to model mismatch. Even so, when the PSF error is large, unrolled-ADMM performs comparably to or better than the modern methods.

Ablation studies

By changing the network structure and retraining, the researchers isolated the effects of individual design choices on unrolled-ADMM. As shown in the figure below, changing the loss function from the proposed multi-scale loss to shape loss (cyan dashed line) or MSE (cyan solid line) slightly degrades performance; removing the hyperparameter SubNet (purple dashed line) or the later iteration layers degrades performance at high SNR; and training the denoiser without deconvolution (ADMMNet-style training) significantly degrades performance.

Through these experiments, the study achieved the results above. However, the researchers suggest that future improvements can still be made in the following areas:

* Consider and resolve higher-order errors in the PSF to achieve better performance;

* The simulation data can be improved with more accurate LSST PSF optical models and more realistic generative models;

* PSF interpolation can be included in the pipeline and combined with low-rank deconvolution.

At present, the code to regenerate the simulated data for this study and the dataset link have been open sourced.

Tsinghua University's top student perfectly combines interests with scientific research

It is worth noting that the first author of this paper, Li Tianao, is an undergraduate in the Department of Electronic Engineering at Tsinghua University. According to his personal website, he worked as a research assistant at the Visual Intelligence and Computational Imaging Laboratory at Tsinghua University and is currently a research intern at the Biologically Inspired Vision Laboratory at Northwestern University, studying under Emma Alexander (the second author of this paper).

According to reports, he mainly focuses on the intersection of computational imaging, computer vision, signal processing, optimization and machine learning, especially on solving inverse problems in imaging, physics-guided machine learning and astronomical imaging research.

Nowadays, AI for Science is emerging in all walks of life, and astronomy is no exception. The increase in computing power and the advent of the big data era have brought more possibilities to astronomy, a field that has always required enormous computing power.

Japanese scientists are using smart AI-driven telescopes to classify objects in space, helping physicists write and test hypotheses. Indian astronomers have created a new AI algorithm that has identified about 60 potentially habitable planets out of 5,000 known planets. A group of astronomers used AI for the first time in a study of galaxy mergers, confirming that galaxy mergers lead to starbursts. Tencent and the National Astronomical Observatory have launched the "Star Exploration Project" to improve the efficiency of star exploration...

More and more astronomers are using AI as a powerful exploration tool. As Li Di, a researcher at the National Astronomical Observatory of the Chinese Academy of Sciences and chief scientist of FAST, said, asking whether astronomy uses machine learning, deep learning and other technologies is like asking whether astronomy used computers 20 years ago.