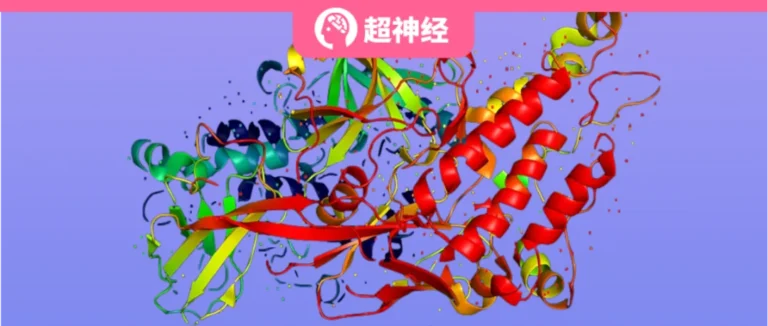

Guiding Directed Protein Evolution Without Experimental Data: Shanghai Jiao Tong University Research Group Publishes ProtLGN, a Microenvironment-Aware Graph Neural Network

Protein engineering plays a vital role in modern biotechnology and pharmaceutical research. By modifying the amino acid sequence of a protein, protein engineering can improve its existing biochemical properties or give it new ones, such as enhancing the catalytic efficiency of an enzyme, increasing the affinity of a drug, or improving its thermal stability. These improvements are critical for developing new drugs, treating diseases, and improving the efficiency of biomanufacturing.

Protein engineering requires screening out the best mutants from tens of thousands of candidates. Beneficial mutations are genetic variations that improve one or more biochemical properties of a protein, such as stability, affinity, selectivity, or catalytic efficiency, and make it more suitable for specific applications. However, experimentally verifying high-fitness mutants is costly and time-consuming. In addition, combinations of multiple beneficial mutations are often affected by negative epistatic effects, so that stacking individually beneficial mutations can reduce the function of the protein. These factors increase the complexity of efficient protein design to varying degrees.

In recent years, prediction and screening methods based on deep learning have been validated and put into practice: by analyzing large amounts of data and learning the relationship between protein sequence, structure, and function, they can improve the accuracy and efficiency of protein design. However, most methods rely on multiple sequence alignment (MSA) or protein language models (PLMs) to extract features from protein sequences, which has many limitations. For example, the quality of a multiple sequence alignment is limited by the available homology information, while language models require large amounts of data and complex architectures, so their training cost is high. In addition, directly applying pre-trained models to new tasks places heavy demands on the generalization and expressive power of the model.

To this end, Hong Liang's research group at Shanghai Jiao Tong University developed PROTLGN, a new microenvironment-aware graph neural network. It can learn and predict beneficial amino acid mutation sites from protein 3D structures and guide the design of single-site and multi-site mutations for different functions. More than 40% of the single-point mutant proteins designed by PROTLGN outperform their wild-type counterparts. The results have been published in the Journal of Chemical Information and Modeling (JCIM).

Paper address:

https://pubs.acs.org/doi/10.1021/acs.jcim.4c00036

PROTLGN: Building a Lightweight Graph Neural Denoising Network

PROTLGN framework: Protein learning network based on graph neural network

PROTLGN is a protein representation learning model based on graph neural network. Its core architecture is as follows:

PROTLGN Architecture

* kNN graph (k-Nearest Neighbors Graph):

The amino acid residues of the input protein are used as the nodes of the graph, and the edges are determined from the spatial distances between residues using the k-nearest neighbor algorithm. This builds the topological structure of the protein and provides the basis for subsequent graph neural network processing.

* Equivariant GNN (Equivariant Graph Neural Network):

In three-dimensional space, the structure of a protein may be rotated or reflected. As the core network layer, the equivariant GNN is designed to respect this symmetry: no matter how the protein graph is rotated, the output of the network should be consistent for the same protein structure.

* Node Embedding:

In the graph representation of a protein, each amino acid residue is a node, and each node is given a learned embedding so that the model can capture and understand the complex relationships between nodes.

* Output layer and score (read-out layer & score):

The node representations learned by the equivariant GNN are used to identify beneficial mutation sites and to predict the potential impact of mutations on protein function or structure. As the last layer of the model, the read-out layer converts these predictions into quantitative scores.

* Validation:

Wet-lab methods such as enzyme-linked immunosorbent assay (ELISA) and differential scanning fluorimetry (DSF), a thermal stability assay, were used to experimentally verify the mutants predicted by the model and test their biological functions.
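The kNN graph construction described above can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the authors' implementation: the function names, the toy random coordinates, and the choice of `k=10` are all assumptions. It also checks that the resulting graph is unchanged when the structure is rotated and translated, which is the symmetry the equivariant layers are meant to respect.

```python
import numpy as np

def pairwise_distances(coords):
    """Euclidean distance between every pair of residues, shape (N, N)."""
    diff = coords[:, None, :] - coords[None, :, :]
    return np.linalg.norm(diff, axis=-1)

def knn_edges(coords, k):
    """Row i holds the indices of the k residues closest to residue i."""
    dist = pairwise_distances(coords)
    np.fill_diagonal(dist, np.inf)  # a residue is not its own neighbor
    return np.argsort(dist, axis=1)[:, :k]

rng = np.random.default_rng(0)
coords = rng.normal(size=(50, 3)) * 10.0  # toy stand-in for 50 residue positions
edges = knn_edges(coords, k=10)           # k=10 is an arbitrary choice here

# Build a random proper rotation from a QR decomposition, then add a shift.
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
if np.linalg.det(q) < 0:
    q[:, 0] *= -1  # flip one axis so the determinant is +1
moved = coords @ q.T + np.array([5.0, -3.0, 2.0])

# Distances are preserved, so the neighbor lists should be identical.
same_graph = np.array_equal(edges, knn_edges(moved, k=10))
print(edges.shape, same_graph)
```

Because the graph depends only on inter-residue distances, any rigid motion of the input leaves the topology untouched; only coordinate-valued features need equivariant handling.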

PROTLGN training process: training-prediction-fine-tuning

The training process of PROTLGN is shown in the figure below, which includes training, prediction, and model fine-tuning:

PROTLGN pre-training and prediction process

* Self-supervised Pretraining:

PROTLGN is first pre-trained in a self-supervised manner on wild-type proteins, using amino acid (AA) type denoising as the task.

The three-dimensional coordinate information contained in the input graph is part of the node attributes and is used to more accurately represent the positions of amino acid residues in the three-dimensional space of the protein.

The three-dimensional coordinate information and the physical and biochemical properties of amino acids (such as amino acid type, SASA, B-factor, etc.) together constitute the attributes of the nodes and edges of the input graph. These attributes are used to construct the kNN graph, in which each node (amino acid residue) is connected to its nearest neighbors according to spatial distance.

Self-supervised learning process of PROTLGN

* Equivariant graph convolution layer (EGC):

Equivariant graph convolution (EGC) layers are used in pre-training to process the input protein graph. Through these layers, the model learns node embeddings that remain unchanged under rotation and translation, which helps it handle the structures of different proteins.

The EGC layer is the core of the graph neural network. It processes graph-structured data while remaining sensitive to changes in the spatial structure of proteins, which is crucial for understanding their three-dimensional structure.

In the self-supervised learning process, the EGC layer receives a noisy wild-type protein graph as input and outputs embedding representations of the nodes that take into account the spatial relationships between amino acid residues.

* Noisy Input Attributes:

During training, noise is injected into the input attributes of the wild-type protein to simulate random mutations in nature.

* Zero-shot Prediction:

The blue arrows indicate that when a protein mutation is considered, the model uses the knowledge learned during the pre-training phase to predict the likely impact of the mutation on protein function.

* Wet Biochemical Assessments:

Combining mutant predictions with wet experimental evaluation allows updating pre-trained models to better fit specific proteins and functions.

* Fine-tuning:

As shown in the green arrow part of the diagram, combined with the evaluation of wet experiments, the pre-trained model can be updated and optimized according to specific proteins and functions to improve the accuracy and adaptability of the prediction.
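The noise injection step of the denoising pre-training can be illustrated with a short sketch. The article does not give the noise distribution or rate, so the uniform replacement scheme, the 25% rate, and the function name `corrupt_aa_types` are assumptions made purely for illustration.

```python
import numpy as np

NUM_AA = 20  # size of the standard amino acid alphabet

def corrupt_aa_types(aa_types, noise_rate, rng):
    """With probability noise_rate, replace a residue type by a uniformly drawn one.

    Returns the noisy sequence and the boolean mask of corrupted positions.
    A corrupted position may by chance receive its original type again.
    """
    aa_types = np.asarray(aa_types)
    mask = rng.random(aa_types.shape) < noise_rate
    random_types = rng.integers(0, NUM_AA, size=aa_types.shape)
    return np.where(mask, random_types, aa_types), mask

rng = np.random.default_rng(0)
wild_type = rng.integers(0, NUM_AA, size=200)  # toy wild-type sequence as integer codes
noisy, mask = corrupt_aa_types(wild_type, noise_rate=0.25, rng=rng)

# A denoising objective would train the network to recover `wild_type`
# from `noisy` together with the (unchanged) structural attributes.
print(int(mask.sum()), "positions were corrupted")
```

The network never sees labels from experiments at this stage; the wild-type sequence itself is the training target.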

To further exploit biological prior information and improve the generalization and expressiveness of the model, the researchers also took three additional measures:

* Adding noise to the input amino acid types to simulate random mutations in nature;

* Introducing label smoothing into the loss function for amino acid node prediction, to encourage substitutions between similar amino acids;

* Using a multi-task learning strategy so that the pre-trained model learns multiple prediction targets, yielding a single graph representation learning model that serves multiple purposes.
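Label smoothing, one of the measures listed above, can be sketched as follows. This sketch uses the simplest uniform variant with an assumed smoothing factor `eps = 0.1`; the article suggests the actual scheme favors similar amino acids, which would replace the uniform term with a similarity-weighted one. All names here are illustrative.

```python
import numpy as np

def smoothed_targets(labels, num_classes, eps):
    """Move a fraction eps of each one-hot target uniformly onto all classes."""
    onehot = np.eye(num_classes)[labels]
    return onehot * (1.0 - eps) + eps / num_classes

def cross_entropy(logits, targets):
    """Mean cross-entropy between soft targets and softmax(logits)."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-(targets * log_probs).sum(axis=1).mean())

rng = np.random.default_rng(0)
labels = np.array([0, 5, 19])        # true amino acid types at three residues
logits = rng.normal(size=(3, 20))    # toy network outputs over 20 amino acids

targets = smoothed_targets(labels, num_classes=20, eps=0.1)
loss_hard = cross_entropy(logits, np.eye(20)[labels])
loss_smooth = cross_entropy(logits, targets)
print(loss_hard, loss_smooth)
```

With smoothed targets the model is no longer pushed to assign zero probability to every non-wild-type residue, which is what makes its output usable for scoring substitutions.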

Exploring the potential of directed protein evolution: PROTLGN provides effective strategies

To verify the accuracy of PROTLGN in predicting the activity of protein mutants, the study carried out extensive validation across several biological functions of multiple proteins, in order to establish the generality of the model. The proteins tested include VHH antibodies, several fluorescent proteins (such as green, blue, and orange fluorescent proteins), and an endonuclease (KmAgo). Together they cover common optimization targets in protein engineering, such as thermal stability, binding affinity, fluorescence brightness, and single-stranded DNA cleavage activity.

The results show that even without experimental data for the target protein or for similar proteins, PROTLGN still achieves a success rate of more than 40% for its predicted single-point mutations, and in some cases enhances multiple biological functions simultaneously.

PROTLGN and fluorescent proteins: transferability of the predictive model

The researchers fine-tuned the PROTLGN model on green fluorescent protein (GFP) to develop a scoring function optimized specifically for fluorescence intensity. For fine-tuning, 1,000 labeled GFP mutants were randomly selected from a deep mutational scanning (DMS) dataset, which improved the accuracy of the model in predicting changes in fluorescence intensity.

Fluorescent protein experimental results

* The protein structure is shown on the left, with the red spheres highlighting the mutated amino acid residues

* Fluorescence intensity data are shown on the right, comparing different mutants with WT

Figure a evaluates the utility of a function-specific fitness scoring function learned from a small number of labeled green fluorescent protein (GFP) variants. Of the 10 mutants tested, five showed higher fluorescence intensity than the wild type (WT), and the best-performing mutant had twice the fluorescence intensity of the WT.

In addition, the experiment tested the same scoring function on orange fluorescent protein (orangeFP), which comes from a different protein family, has a different active region, and has a sequence homology of about 21% to GFP. PROTLGN ranked the single-point mutants of orangeFP, and the top 10 variants were selected for wet-lab expression and testing. Seven of them showed higher fluorescence intensity than the WT, a result that highlights the strong transferability of the model.

PROTLGN and VHH antibodies: zero-shot performance of PROTLGN

Without any experimental data, the researchers used a PROTLGN model pre-trained on approximately 30,000 unlabeled protein structures to score variants of a VHH antibody, and selected the 10 mutants with the highest predicted fitness for wet-lab evaluation.

Results of PROTLGN-designed VHH antibodies
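The zero-shot selection step can be pictured with a small ranking sketch. The article does not state how PROTLGN converts its predictions into a fitness score, so the log-odds rule used here (log probability of the mutant residue minus that of the wild-type residue) is an assumption borrowed from common practice with denoising models, and the sequence and probability matrix are random toy data.

```python
import numpy as np

AA = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids

def rank_single_mutants(probs, wt_seq, top_n=10):
    """Rank all single-point mutants by an assumed log-odds score.

    probs: array of shape (L, 20) with predicted amino acid probabilities
    per position. Returns the top_n (score, mutation_name) pairs.
    """
    scored = []
    for pos, wt in enumerate(wt_seq):
        wt_idx = AA.index(wt)
        for mut_idx, mut in enumerate(AA):
            if mut_idx == wt_idx:
                continue  # skip the wild-type residue itself
            score = np.log(probs[pos, mut_idx]) - np.log(probs[pos, wt_idx])
            scored.append((float(score), f"{wt}{pos + 1}{mut}"))
    scored.sort(reverse=True)
    return scored[:top_n]

rng = np.random.default_rng(0)
wt_seq = "MKTAYIAKQR"                                  # hypothetical 10-residue sequence
probs = rng.dirichlet(np.ones(20), size=len(wt_seq))   # stand-in for model output
top10 = rank_single_mutants(probs, wt_seq)
for score, name in top10:
    print(f"{name}\t{score:.3f}")
```

A positive score means the model considers the substituted residue more compatible with the local microenvironment than the wild-type one; only the top-ranked candidates would go on to wet-lab testing.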

(a) The structure of the VHH antibody is shown on the left, and the binding affinity of the VHH antibody and its single-point mutants is shown on the right.

(b) The structure of the VHH antibody, with mutations at different sites, is shown on the left, and the melting temperature of the VHH antibody and its single-point mutants is shown on the right.

Three mutants showed excellent performance in both binding affinity and thermal stability. This confirms the effectiveness of PROTLGN in guiding the design of VHH antibody mutations, especially in improving antibody performance. The self-supervised learning strategy of PROTLGN provides a powerful tool for protein engineering, enabling accurate mutation prediction in the absence of experimental data.

PROTLGN and Ago proteins: finding the optimal single-point mutation combination

The researchers used PROTLGN to score combinations of 12 known single-point mutations and selected the top 5 higher-order candidates at each order from 2 to 7 mutation sites, for a total of 30 mutants, in order to find better-performing Ago protein variants through wet-lab evaluation.

KmAgo mutants designed by PROTLGN and experimental results
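The size of this combinatorial search is easy to work out: choosing 2 to 7 sites out of 12 single-point mutations gives 3,289 possible combinations, of which the top 5 per order yield the 30 candidates. The sketch below reproduces that arithmetic; the mutation names and the additive `combined_score` are placeholders (a real model score would also capture epistasis, which an additive sum cannot).

```python
import heapq
from itertools import combinations
from math import comb

singles = [f"mut{i}" for i in range(1, 13)]  # 12 placeholder mutation names
# Hypothetical per-mutation scores; a trained model would supply these.
single_scores = {m: 0.1 * i for i, m in enumerate(singles, start=1)}

def combined_score(combo):
    """Placeholder score: plain sum of single-mutation scores (no epistasis)."""
    return sum(single_scores[m] for m in combo)

# Number of candidate combinations at each order (2 to 7 mutation sites).
candidates_per_order = {k: comb(len(singles), k) for k in range(2, 8)}
total_candidates = sum(candidates_per_order.values())

# Keep the 5 best-scoring combinations at each order.
selected = []
for k in range(2, 8):
    selected.extend(heapq.nlargest(5, combinations(singles, k), key=combined_score))

print(candidates_per_order)
print(total_candidates, len(selected))
```

Testing 30 variants instead of 3,289 is what makes the wet-lab step tractable, which is why the quality of the combined ranking matters so much.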

* Top left: Structure of KmAgo protein

* Top right: Optimal activity of KmAgo mutants with different numbers of mutation sites. This may indicate how the activity changes with the number of mutation sites.

* Middle and lower: Cleavage activity of KmAgo and its multiple mutation site mutants

The experimental results show:

* Activity enhancement: Compared with the wild type (WT), 90% of the mutants showed enhanced DNA cleavage activity.

* Best mutant: The best mutant was a 7-site mutant with an activity 8-fold higher than that of the WT.

* Advantages of higher-order mutants: Higher-order mutants tend to show higher activity than lower-order mutants, in terms of both maximum and average activity improvement.

The PROTLGN model successfully identified strong gain-of-function mutants and positive epistatic effects when combining single mutation sites. This confirms the effectiveness of PROTLGN in guiding the design of Ago protein mutations, especially in improving DNA cleavage activity.

Comparison of PROTLGN with other self-supervised models: more efficient and more accurate

The researchers also used PROTLGN to predict protein fitness on deep mutational scanning (DMS) datasets and compared it with other self-supervised learning models.

Protein prediction effects of different models

a: Inference efficiency and effectiveness of zero-shot deep learning models

b: Prediction performance of multiple mutation site effects

c: Improved performance of high-order mutation prediction

The experimental results show that PROTLGN performs best among all compared models. It not only accurately predicts the fitness of proteins, but also uses the smallest number of trainable parameters. This is important because fewer parameters mean the model is cheaper to train and fine-tune, and also that it can learn effectively from less labeled data.

In the final stage of the experiment, the researchers used some of the available experimental labels to fine-tune the model, further improving the accuracy of the predictions. The results show that PROTLGN significantly outperforms other methods, especially when dealing with high-order mutants.

PROTLGN prediction of protein subcellular localization: comprehensive analysis of protein three-dimensional structure

The researchers also applied the PROTLGN model to predicting the subcellular localization of proteins (PSL), that is, the specific location of a protein within the cell, which is closely related to its function.

Model prediction of protein subcellular localization

The research team first used the PROTLGN model to analyze 9,366 labeled proteins, each described by its amino acid-level representation. The model was then evaluated on 2,738 test proteins, predicting which of 10 possible locations within the cell each protein occupies. The experimental results showed that PROTLGN significantly surpasses existing baseline methods based on amino acid sequence or homology information in prediction accuracy.

Conclusion: The “AI Revolution” in Biomedicine Has No Boundaries

Starting with AlphaFold, artificial intelligence has continuously pushed back the boundaries of knowledge in biomedical engineering, but deep learning is still limited by the availability of high-quality data. The zero-shot learning approach of PROTLGN may provide an answer to this limitation. Once the field enters an AGI era that needs no experimental data, the next generation of structural biologists will likely no longer be experts in experimental methods, but will be more responsible for interpreting, designing, and executing structure-based experiments to prove or disprove mechanisms in biology, or for designing new protein functions and clinical treatments.