Knowing in Advance When Sows Will Farrow: Nanjing Agricultural University Turns to NVIDIA Jetson Edge AI

Contents at a glance: For the pig farming industry, sow farrowing is a critical stage, so improving piglet survival and keeping sows safe during farrowing are important problems. Existing AI monitoring methods suffer from high equipment costs and unstable data transmission. Researchers from Nanjing Agricultural University used a lightweight deep learning method to provide early warning and effective monitoring of sow farrowing, reducing cost while improving monitoring accuracy.

Keywords: Embedded Development Board, Lightweight Deep Learning

This article was first published on the HyperAI WeChat public platform.

China's pig farming industry is the largest in the world, but overall farming standards remain low. For many large pig farms, the priority is to cut costs while raising the survival rate of piglets. Traditional methods rely on manual supervision, which is laborious and subjective. As a result, problems such as dystocia during farrowing and piglet suffocation are hard to handle promptly and effectively.

In recent years, AI monitoring has become an important way to address this problem. Most approaches run deep learning models in the cloud. However, this places high demands on equipment and network bandwidth, which makes such systems restrictive and unstable.

According to China Pig Farming Network, within 12-24 hours before giving birth, sows often show nesting behavior and change posture more frequently because of oxytocin or prolactin. Based on this, the experimental team built a model that uses the YOLOv5 algorithm to monitor sow posture and piglet birth, and deployed it on the NVIDIA Jetson Nano development board. This allows the process to be monitored and analyzed in complex scenes, at low cost and low latency, with high efficiency and easy deployment.

This achievement was published in the journal "Sensors" in January 2023, titled "Sow Farrowing Early Warning and Supervision for Embedded Board Implementations".

Paper address:

Experiment Overview

Data and processing

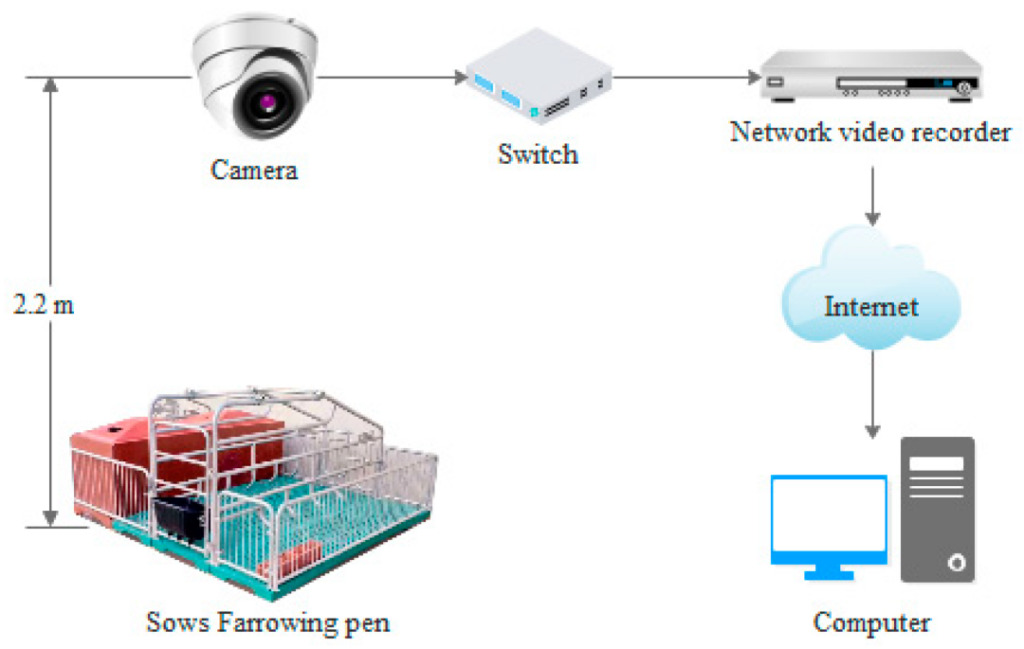

The video data come from two farms in Suqian and Jingjiang, Jiangsu, and cover 35 periparturient sows. Data for 11 sows at the Jingjiang farm were recorded from April 27 to May 13, 2017, and data for 24 sows at the Suqian farm were recorded from June 9 to 15, 2020. Sows were randomly placed in farrowing beds of a fixed size (2.2 m × 1.8 m), and cameras recorded video continuously, 24 hours a day.

The process is as follows:

Next, the data was preprocessed. The experimental team first selected videos recorded one day before and after sow farrowing, then converted them into images using Python and OpenCV. Labeling software was used to manually annotate sow postures and newborn piglets in the 12,450 images acquired. After data augmentation, 32,541 images were obtained to form the dataset.

Data augmentation: here this refers to cropping, translation, rotation, mirroring, changing brightness, adding noise, and shearing.
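As a rough illustration of two of these operations, the sketch below mirrors an image together with its annotations and scales its brightness. It assumes YOLO-style normalized labels (class, x_center, y_center, width, height) and represents a grayscale image as a nested list; the function names are hypothetical and are not taken from the paper.

```python
from typing import List, Tuple

# One label: (class_id, x_center, y_center, width, height).
# Box values are assumed to be normalized to [0, 1] (YOLO-style labels).
Box = Tuple[int, float, float, float, float]


def mirror_image(pixels: List[List[int]]) -> List[List[int]]:
    """Flip a row-major grayscale image left to right."""
    return [list(reversed(row)) for row in pixels]


def mirror_boxes(boxes: List[Box]) -> List[Box]:
    """Mirror normalized boxes horizontally; only x_center changes."""
    return [(c, 1.0 - x, y, w, h) for (c, x, y, w, h) in boxes]


def adjust_brightness(pixels: List[List[int]], factor: float) -> List[List[int]]:
    """Scale pixel intensities by `factor`, clamping to the 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in pixels]
```

The point of the sketch is that geometric augmentations such as mirroring must be applied to the bounding boxes as well as the pixels, while photometric ones such as brightness changes leave the labels untouched.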

The dataset has 5 categories: 4 sow postures (lateral recumbency, sternal recumbency, standing, and sitting) plus piglets. The ratio of the training, validation, and test sets is 7:1:2.
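A 7:1:2 split of this kind can be sketched as follows. This is a minimal example, assuming a simple seeded random shuffle; the article does not say how the team assigned images to each set, and `split_dataset` is a hypothetical helper.

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(
    items: Sequence[str],
    ratios: Tuple[int, int, int] = (7, 1, 2),
    seed: int = 0,
) -> Tuple[List[str], List[str], List[str]]:
    """Shuffle items reproducibly and split them into train/val/test."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)

    total = sum(ratios)
    n_train = len(shuffled) * ratios[0] // total
    n_val = len(shuffled) * ratios[1] // total

    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # the remainder goes to the test set
    return train, val, test


# Hypothetical file names standing in for the 32,541 images.
images = [f"img_{i:05d}.jpg" for i in range(32541)]
train, val, test = split_dataset(images)
print(len(train), len(val), len(test))
```

With integer rounding this gives 22,778 / 3,254 / 6,509 images, which may differ slightly from the exact counts used in the paper.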

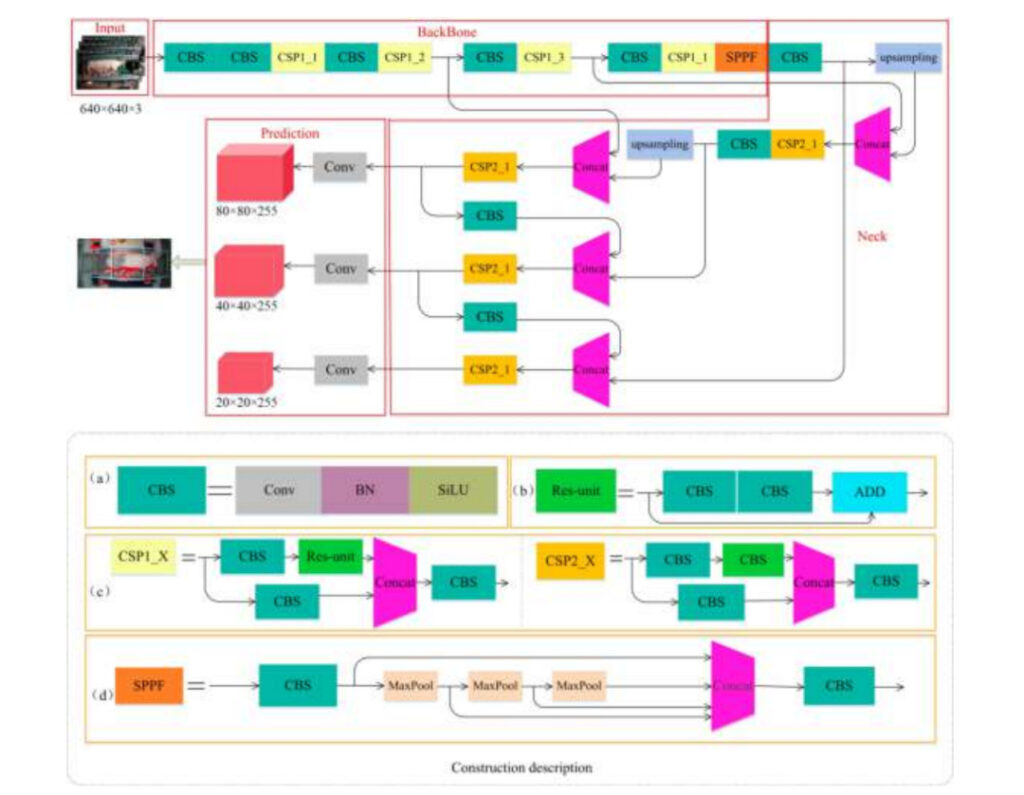

Experimental model

The experimental team used YOLOv5s-6.0 to build a model that detects sow posture and piglets. The model consists of 4 parts:

Input: image input

Backbone: extracts image features of sows and piglets

Neck: fuses image features

Prediction: makes predictions (because sows and piglets differ greatly in size, this part uses 3 different feature maps to detect large, medium, and small targets)
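To make the three feature maps concrete, the sketch below computes their grid sizes. It assumes the standard YOLOv5s head strides of 8, 16, and 32 and a 640×640 input; the input size is an assumption, since the article does not state the resolution the team used.

```python
from typing import Dict, Tuple


def detection_grids(
    input_size: int = 640,                      # assumed input resolution
    strides: Tuple[int, ...] = (8, 16, 32),     # standard YOLOv5s head strides
) -> Dict[int, Tuple[int, int]]:
    """Return the grid size of each detection feature map, keyed by stride."""
    return {s: (input_size // s, input_size // s) for s in strides}


for stride, grid in detection_grids().items():
    print(f"stride {stride}: {grid[0]}x{grid[1]} grid")
```

Under these assumptions the finest grid (stride 8) is the one best suited to small targets such as newborn piglets, while the coarsest (stride 32) covers large targets such as the sow.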

a: CBS module details

b: Res-unit module details

c: CSP1_X and CSP2_X module details

d: SPPF module details

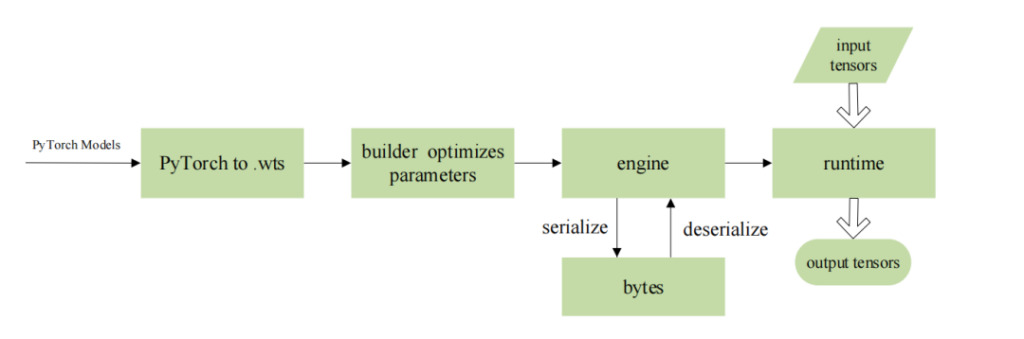

The experimental team deployed the algorithm on NVIDIA's Jetson Nano embedded AI computing platform and used TensorRT to optimize the model. This gives the model higher throughput and lower latency on the embedded development board, and avoids the risk of data leakage during network transmission.

The specific parameters are as follows:

Model training environment: Ubuntu 18.04 operating system, Intel(R) Xeon(R) Gold 5118 @ 2.30 GHz CPU, NVIDIA Quadro P4000 GPU, 8 GB video memory, 64 GB memory, 2 TB hard disk, PyTorch 1.7.1 and Torchvision 0.8.2 deep learning frameworks, CUDA 10.1.

Model deployment environment: ARM-adapted Ubuntu 16.04 operating system, 4-core ARM A57 @ 1.43 GHz CPU, 128-core Maxwell architecture GPU, 4 GB memory, JetPack 4.5, CUDA 10.2.89, Python 3.6, TensorRT 7.1, OpenCV 4.1.1, CMake 3.21.2 deep learning environment.

Model parameters: (1) for YOLOv5 training, epochs is set to 300, learning_rate to 0.0001, and batch_size to 16; (2) for the TensorRT-optimized network, batch_size is 1 and precision is fp16.

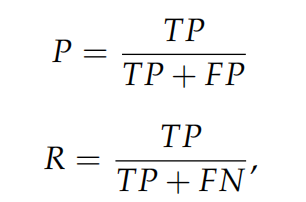

Finally, the experimental team used indicators such as precision, recall rate, and detection speed to evaluate the performance of different algorithms.

Here, precision and recall measure the algorithm's ability to detect all categories of data, including the 4 sow postures (lateral recumbency, sternal recumbency, standing, and sitting) and newborn piglets. Model size and detection speed measure whether the algorithm is suitable for deployment on embedded devices.

The calculation formula is as follows:

TP: the number of positive samples correctly predicted as positive

FP: the number of negative samples incorrectly predicted as positive

FN: the number of positive samples incorrectly predicted as negative
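For reference, the standard definitions of precision and recall in terms of these counts are shown below. They are the usual textbook forms, written out here because no formula appears in the text above.

```latex
\[
\text{Precision} = \frac{TP}{TP + FP},
\qquad
\text{Recall} = \frac{TP}{TP + FN}
\]
```

As a purely illustrative example with made-up counts, TP = 90, FP = 10, and FN = 30 give a precision of 90 / 100 = 0.90 and a recall of 90 / 120 = 0.75.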

Experimental Results

Model performance

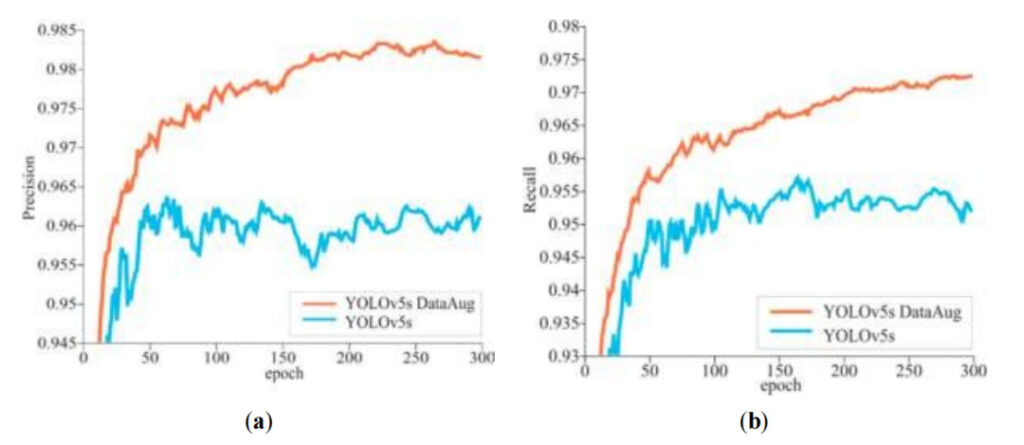

The experimental team found that over the 300 training epochs, precision and recall generally trended upward as training progressed. The YOLOv5s model trained with data augmentation also had consistently higher precision and recall.

a: Precision

b: Recall

Orange line: Precision/recall of the YOLOv5s model with data augmentation

Blue line: Precision/recall of the YOLOv5s model without data augmentation

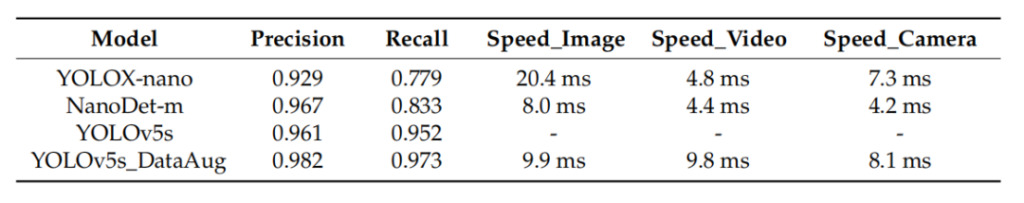

In the experiments, mean average precision (mAP) is used to evaluate the algorithm's ability to detect all categories. While evaluating the YOLOv5s algorithm, the experimental team also compared it with the YOLOX-nano and NanoDet-m algorithms. The results showed that YOLOX-nano and NanoDet-m were slightly faster than YOLOv5s at detection but less accurate, with missed and false detections of piglets. The YOLOv5s algorithm performed well on targets of different sizes, and its average detection speed on images, local videos, and camera streams was comparable to the other two. Moreover, the data-augmented YOLOv5s model had the highest precision and recall, at 0.982 and 0.937 respectively.

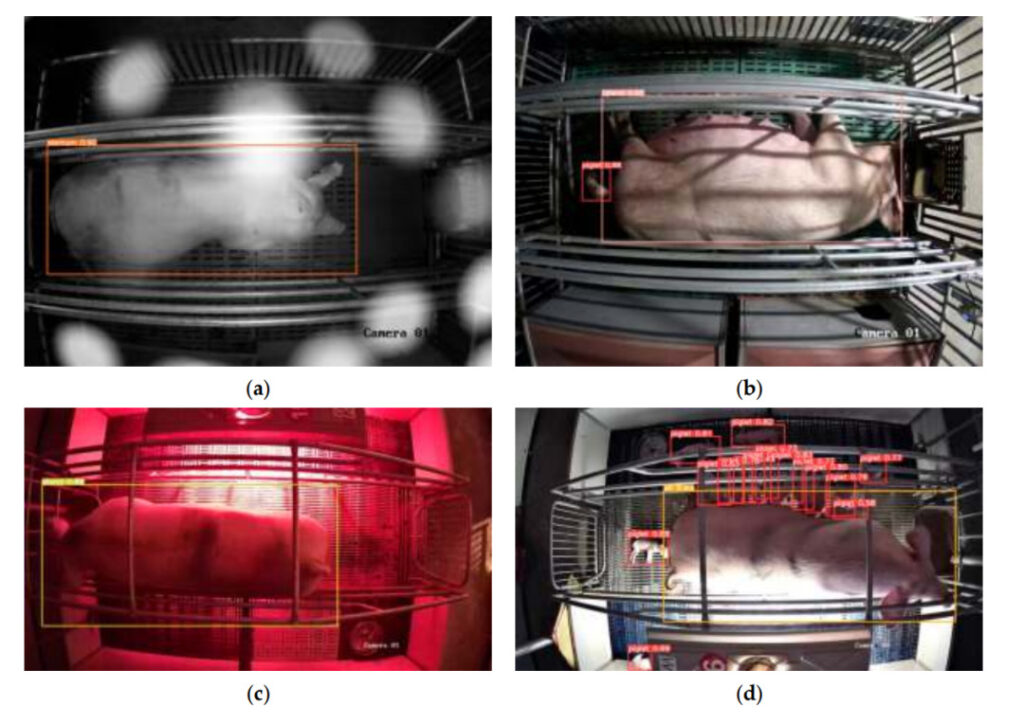

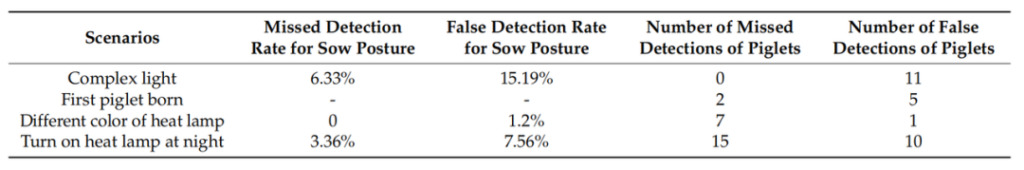

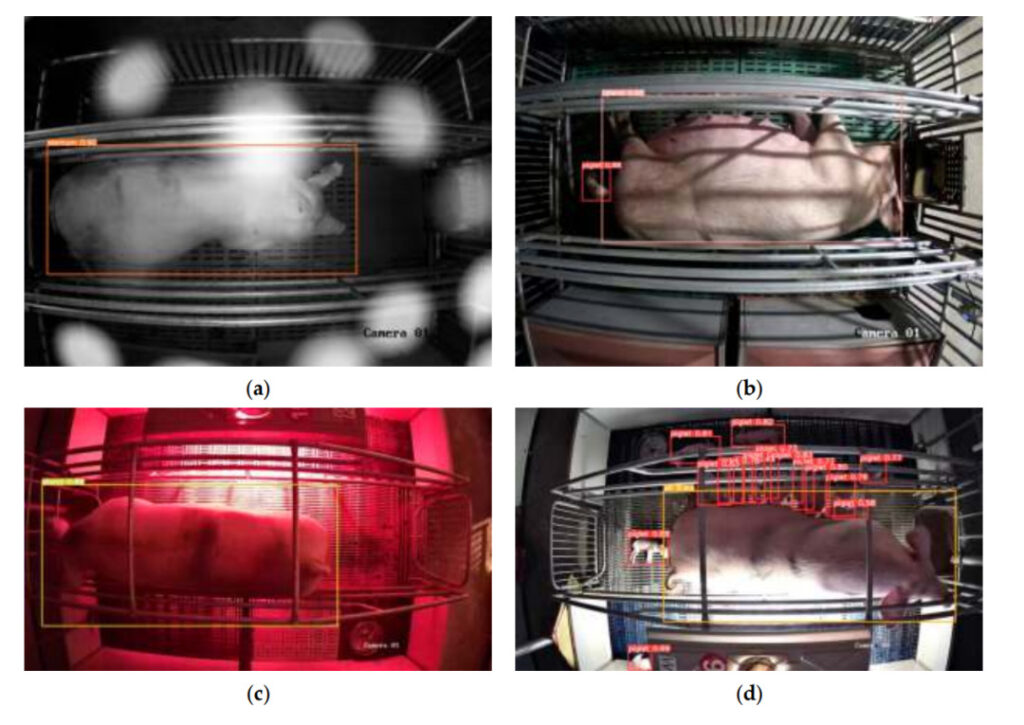

To test the model's generalization and robustness to interference, the experimental team held out one sow as a "new sample" during training and selected 410 images containing different complex scenes to test the model. The results showed that missed and false detections of sow posture are mainly caused by changes in lighting, while piglet detection is mainly affected by heat lamps being turned on, since piglets are hard to identify under strong light. The birth of the first piglet and heat lamps of different colors had little effect on the model's detection ability.

Second column from left: The missed detection rate of sow posture is highest under complex lighting conditions

Third column from left: The false detection rate of sow posture is higher under complex lighting conditions and when heat lamps are turned on at night

Fourth column from left: The number of false positives is higher under complex lighting conditions and at night when heat lamps are on

Fifth column from left: The number of missed piglets is higher at night when heat lamps are on

a: Under complex lighting

b: First piglet born

c: Different colors of heat lamps

d: Heat lamp on at night

Before and after deployment

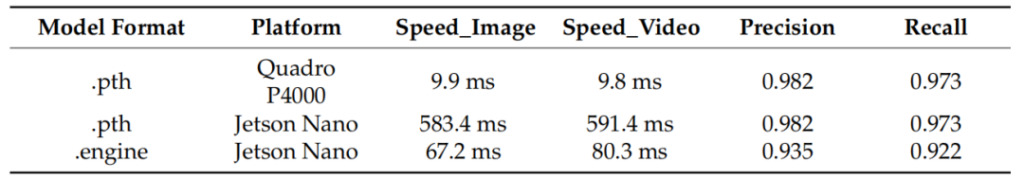

After deploying the model on the NVIDIA Jetson Nano, the experimental team was able to accurately detect the posture of sows and piglets. Comparing the test results, they found that although the model's accuracy dropped slightly after deployment on the NVIDIA Jetson Nano, its speed increased by more than 8 times.

Left column: Model format

Second column from left: Model deployment platform; the Quadro P4000 is the platform used for comparative testing.

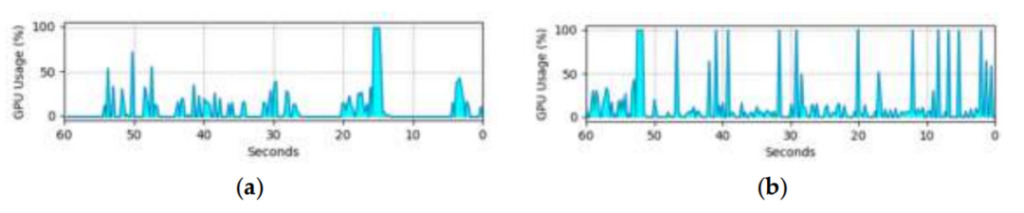

GPU utilization on the embedded development board can limit the model's practical use. The figure below shows the model's GPU utilization when detecting targets in images and videos on the embedded development board. Because the video stream must be decoded, GPU utilization is higher for video detection than for image detection, but this does not affect the model's performance. The test results show that the model in the study can be applied to different production scenarios.

(a) GPU utilization in image detection

(b) GPU utilization in video detection

Test results

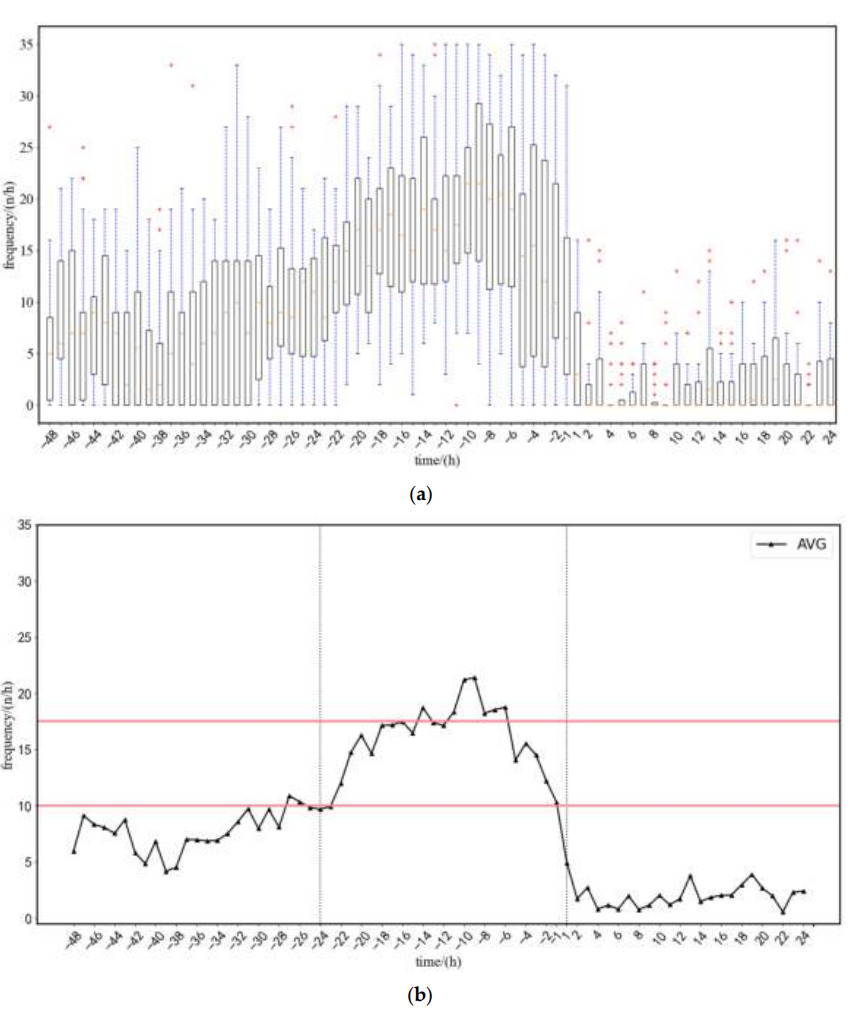

The experimental team tested and analyzed the data of 22 sows to obtain the average frequency of posture changes from 48 hours before farrowing to 24 hours after farrowing. Based on this frequency (as shown in the figure below), the team summarized the model's early warning strategy as follows:

1. An alarm is issued when the posture transition frequency exceeds the upper limit (17.5 times/hour) or falls below the lower limit (10 times/hour).

2. To reduce the impact of the sow's daily activities on the warning, the frequency must stay above the upper limit or below the lower limit for more than 5 hours.
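The two rules above can be sketched as follows. This is a minimal illustration, not the team's implementation: it assumes posture labels arrive as time-stamped detections, counts a transition whenever the label changes, and treats "more than 5 hours" as at least six consecutive hourly readings on the same side of the limits. The function names and input formats are hypothetical.

```python
from typing import Dict, Optional, Sequence, Tuple

UPPER_LIMIT = 17.5  # transitions per hour, from the article
LOWER_LIMIT = 10.0  # transitions per hour, from the article
MIN_HOURS = 5       # the condition must hold for more than this many hours


def transitions_per_hour(
    timed_postures: Sequence[Tuple[float, str]],
) -> Dict[int, int]:
    """Count posture changes per hour.

    `timed_postures` is a time-sorted sequence of (seconds, posture label).
    """
    counts: Dict[int, int] = {}
    previous: Optional[str] = None
    for seconds, posture in timed_postures:
        if previous is not None and posture != previous:
            hour = int(seconds // 3600)
            counts[hour] = counts.get(hour, 0) + 1
        previous = posture
    return counts


def first_alarm_hour(
    hourly_frequencies: Sequence[float],
    upper: float = UPPER_LIMIT,
    lower: float = LOWER_LIMIT,
    min_hours: int = MIN_HOURS,
) -> Optional[int]:
    """Return the index of the hour at which the alarm fires, or None."""
    run_side: Optional[str] = None  # "high", "low", or None when in range
    run_length = 0
    for hour, freq in enumerate(hourly_frequencies):
        if freq > upper:
            side: Optional[str] = "high"
        elif freq < lower:
            side = "low"
        else:
            side = None

        if side is None:
            run_side, run_length = None, 0
        elif side == run_side:
            run_length += 1
        else:
            run_side, run_length = side, 1

        if run_length > min_hours:
            return hour
    return None
```

Requiring the run to stay on one side of the limits is a design assumption; a variant that counts any out-of-range hour toward the same run would fire earlier when the frequency swings from high to low.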

Tests on the samples showed that the model was able to issue an alarm 5 hours before farrowing began, with an error of 1.02 hours between the warning time and the actual farrowing time.

(a) Average posture transition rate range

(b) Average posture transition frequency

48 hours to 24 hours before delivery: sow activity is normal during this period

24 hours to 1 hour before delivery: the frequency of posture changes gradually increases and then gradually decreases

1 hour to 24 hours after delivery: the frequency of posture transitions is close to 0, and then increases slightly

When the first newborn piglet is detected, the delivery alarm will be triggered, displaying "Delivery started! Starting time: XXX". In addition, the flashing LED light can also help breeders quickly locate the sow in labor and determine whether manual intervention is needed.

However, when the detection speed is too high, piglets are often detected incorrectly. To achieve real-time detection while reducing false positives, the experimental team adopted a "three consecutive detections" method: a target is judged to be a newborn piglet only when it is detected three times in a row. This method produced 1.59 false positives, compared with 9.55 for the traditional single-detection method. The number of false positives dropped significantly, and the overall average accuracy was 92.9%.
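The idea of confirming a piglet only after three consecutive detections can be sketched as a simple streak counter. This is an illustrative example that assumes one boolean per processed frame indicating whether a newborn piglet was detected; `confirmed_birth_frame` is a hypothetical name, not code from the paper.

```python
from typing import Optional, Sequence


def confirmed_birth_frame(
    piglet_detected: Sequence[bool],
    required: int = 3,
) -> Optional[int]:
    """Return the index of the frame that completes `required` consecutive
    piglet detections, or None if no such streak occurs."""
    streak = 0
    for index, detected in enumerate(piglet_detected):
        streak = streak + 1 if detected else 0  # any miss resets the streak
        if streak >= required:
            return index
    return None


# Isolated single-frame detections (likely false positives) are ignored.
frames = [False, True, False, True, True, True]
print(confirmed_birth_frame(frames))
```

In this sketch the isolated detection at index 1 never triggers the alarm; only the run ending at index 5 does, which trades a short delay for fewer false alarms.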

AI Pig Farming: A New Era of Smart Farming

As one of the world's major pig-breeding countries, China had an annual pig output of about 700 million head from 2015 to 2018. In recent years, however, swine fever and other factors have caused the pig inventory and the number of pigs slaughtered to fluctuate widely. According to published industry research data, the proportion of individual pig farmers has continued to decline in recent years while the scale of production has continued to grow, which calls for more efficient and intensive breeding technologies in the pig farming industry.

In China, AI pig farming already has reliable products. Alibaba Cloud, together with Aibo Machinery and Qishuo Technology, launched AI pig farming solutions to meet the needs of multiple scenarios. JD Agriculture and Animal Husbandry's smart farming solution is based on AI, the Internet of Things and other technologies, and has achieved "pig face recognition and full-chain traceability." The smarter and more sophisticated farming model brought by AI is gradually being promoted.

However, the promotion of AI pig farming still faces pressing problems such as high cost and complex operation. There is probably still a long way to go before more pig farms can embrace AI.

