Columbia University Launches an Upgraded Version of the Neural Network Org-NN to Accurately Predict Extreme Precipitation

Contents at a Glance: As environmental change intensifies, extreme weather events have become more frequent around the world in recent years. Accurately predicting precipitation intensity matters for both people and the natural environment. Traditional models, however, predict precipitation with too little variance: they are biased toward light rain and underpredict extreme precipitation.

Keywords: Implicit Learning, Neural Networks, Extreme Weather

This article was first published on HyperAI WeChat public platform~

Affected by Typhoon Doksuri, Beijing experienced continuous heavy rainfall starting July 29, with extremely heavy rainstorms in some areas. The rainfall caused major flooding in the Haihe River Basin, and Mentougou, Zhuozhou, and other places suffered serious flood disasters.

According to a CCTV.com report on July 31, Beijing drained more than 10 million cubic meters of water during this rainfall, roughly the volume of five Kunming Lakes in the Summer Palace. Timely, accurate, and effective prediction of extreme precipitation can minimize casualties and reduce the losses caused by meteorological disasters.

Traditional climate model parameterizations lack information about subgrid-scale cloud structure and organization. This affects the intensity and stochasticity of precipitation at coarse resolution, so extreme precipitation cannot be predicted accurately. Columbia University's LEAP Lab used global storm-resolving simulations and machine learning to create a new algorithm that recovers the missing information and provides a more accurate prediction method.

The research has been published in PNAS under the title "Implicit learning of convective organization explains precipitation stochasticity".

The paper has been published in PNAS

Paper address: https://www.pnas.org/doi/10.1073/pnas.2216158120#abstract

Preparation: 10 days of weather data + 2 neural networks

Data and processing

The dataset used by the team comes from the second phase of the DYAMOND (DYnamics of the Atmospheric general circulation Modeled On Non-hydrostatic Domains) intercomparison project, which simulates the dynamics of the atmospheric circulation. This phase simulated 40 days of Northern Hemisphere winter. The researchers treated the first 10 days as model spin-up and randomly selected 10 of the remaining 30 days for their experiments.

The selected data were coarse-grained and divided into subdomains whose grid size is equal or comparable to that of a general circulation model (GCM).

Next, to obtain training, validation, and test sets, the team split the 10 days into 6 days for training, 2 days for validation, and 2 days for testing. Only samples with precipitation above a threshold (0.05 mm/h) were retained, so that the model focuses on the intensity of precipitation rather than on what triggers it. Finally, the total number of samples was 108.
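The split and threshold filter described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the day indices, the random shuffling, the function names, and the toy sample records are hypothetical, and only the 6/2/2 split and the 0.05 mm/h threshold come from the article.

```python
import random

PRECIP_THRESHOLD_MM_PER_H = 0.05  # threshold quoted in the article


def split_days(days, n_train=6, n_val=2, n_test=2, seed=0):
    """Shuffle the selected days and split them 6/2/2 (random order is an assumption)."""
    if len(days) != n_train + n_val + n_test:
        raise ValueError("number of days must match the split sizes")
    shuffled = list(days)
    random.Random(seed).shuffle(shuffled)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


def keep_precipitating(samples, threshold=PRECIP_THRESHOLD_MM_PER_H):
    """Keep only samples whose coarse-grained precipitation exceeds the threshold."""
    return [s for s in samples if s["precip"] > threshold]


# Hypothetical day indices: 10 days drawn from the 30 days after spin-up (days 11..40).
selected_days = random.Random(42).sample(range(11, 41), 10)
train_days, val_days, test_days = split_days(selected_days)

# Toy sample records (made-up values) to show the filter.
samples = [
    {"day": 12, "precip": 0.00},
    {"day": 12, "precip": 0.05},
    {"day": 15, "precip": 0.30},
    {"day": 20, "precip": 4.20},
]
kept = keep_precipitating(samples)
```

Splitting by whole days rather than by individual samples keeps samples from the same day out of both the training and test sets, which is one plausible reason for a day-level split.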

Neural Network Architecture

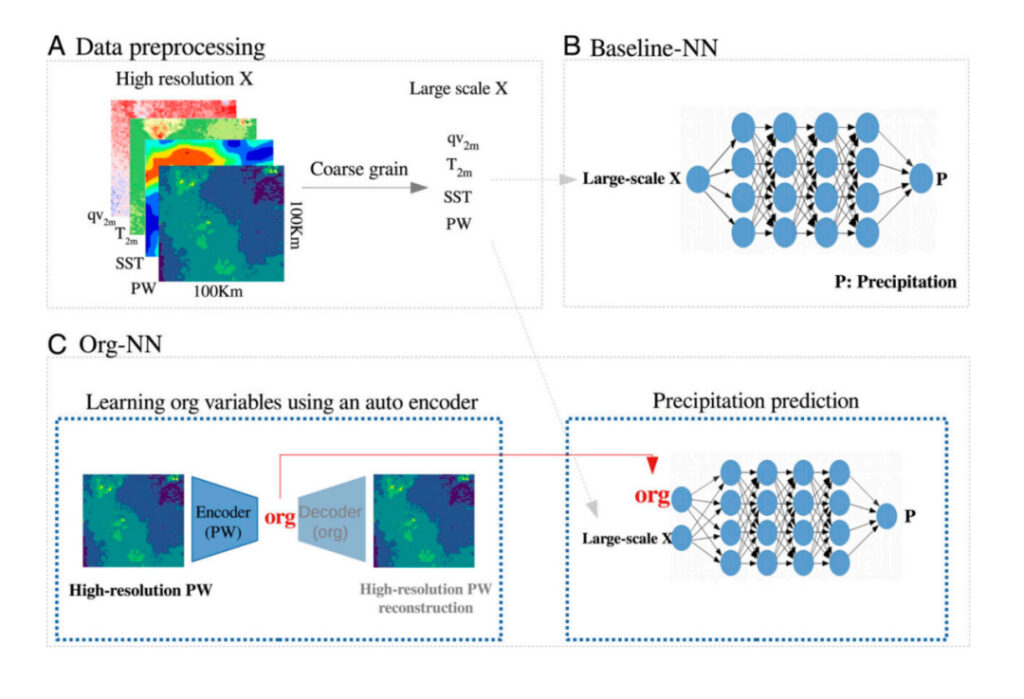

In their experiments, the researchers used two neural networks: the traditional model Baseline-NN (baseline neural network) and the newly proposed Org-NN.

Baseline-NN is a fully connected feed-forward network whose learning rate is adjusted every epoch. As the traditional model, Baseline-NN has access only to large-scale variables when predicting precipitation.

Org-NN contains an autoencoder whose encoder consists of three one-dimensional convolutional layers and two fully connected layers. The encoder takes a high-resolution PW (precipitable water) anomaly field of size 32 x 32 as input and outputs an org variable. The dimension of org is a hyperparameter of the network, which the researchers set to 4. The decoder mirrors the encoder: it takes the org variable and reconstructs the original high-resolution field. The prediction part of Org-NN is similar to Baseline-NN, except that it also receives the organization latent variable (org) as input.
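To make the data flow concrete, here is a minimal NumPy sketch of a forward pass through an Org-NN-like model. It is not the authors' implementation (which uses TensorFlow): a single dense layer stands in for the convolutional and fully connected encoder stack, the weights are random, and the hidden width, the number of coarse inputs, and all names are assumptions. Only the 32 x 32 input size and the org dimension of 4 come from the article.

```python
import numpy as np

rng = np.random.default_rng(0)

FIELD_SHAPE = (32, 32)  # high-resolution PW anomaly (from the article)
ORG_DIM = 4             # size of the org latent variable (from the article)
N_COARSE = 4            # assumed coarse inputs, e.g. PW, SST, qv2m, T2m
HIDDEN = 16             # hypothetical hidden width


def dense(n_in, n_out):
    """Random weights and zero bias for one fully connected layer."""
    return rng.normal(0.0, 0.1, size=(n_in, n_out)), np.zeros(n_out)


# Simplification: one dense layer replaces the conv + dense encoder/decoder stacks.
n_pixels = FIELD_SHAPE[0] * FIELD_SHAPE[1]
W_enc, b_enc = dense(n_pixels, ORG_DIM)
W_dec, b_dec = dense(ORG_DIM, n_pixels)
W_h, b_h = dense(N_COARSE + ORG_DIM, HIDDEN)
W_out, b_out = dense(HIDDEN, 1)


def org_nn_forward(pw_anomaly, coarse_vars):
    """Return (predicted precipitation, reconstructed field, org) for a batch."""
    batch = pw_anomaly.shape[0]
    flat = pw_anomaly.reshape(batch, -1)
    org = flat @ W_enc + b_enc                                   # encoder -> bottleneck
    recon = (org @ W_dec + b_dec).reshape(batch, *FIELD_SHAPE)   # decoder
    features = np.concatenate([coarse_vars, org], axis=1)        # coarse inputs + org
    hidden = np.maximum(features @ W_h + b_h, 0.0)               # ReLU
    precip = (hidden @ W_out + b_out)[:, 0]
    return precip, recon, org


# Random stand-in data: 8 samples.
pw = rng.normal(size=(8, *FIELD_SHAPE))
coarse = rng.normal(size=(8, N_COARSE))
precip, recon, org = org_nn_forward(pw, coarse)
```

The point of the sketch is the wiring: the same org vector feeds both the decoder and the precipitation predictor, so the bottleneck has to serve both tasks.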

Both were implemented using TensorFlow version 2.9, and the hyperparameters were tuned using the Sherpa optimization library.

Experimental Results

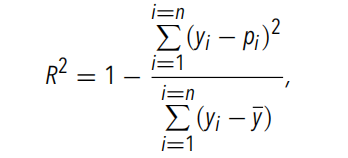

The experimental team pre-trained two models. To evaluate the predictive performance of the neural networks, the researchers chose R2, a metric commonly used to quantify the performance of regression models. The calculation formula is as follows:
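In its standard form, the coefficient of determination is R2 = 1 - SS_res / SS_tot, where SS_res is the sum of squared differences between true and predicted values and SS_tot is the sum of squared differences between the true values and their mean. The short function below computes this standard definition; the function name and the toy numbers are illustrative and not taken from the paper.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R2 is undefined when all true values are equal")
    return 1.0 - ss_res / ss_tot


y_true = [1.0, 2.0, 3.0, 4.0]
r2_perfect = r_squared(y_true, y_true)               # perfect prediction
r2_mean_only = r_squared(y_true, [2.5, 2.5, 2.5, 2.5])  # always predicting the mean
```

A model that always predicts the mean scores 0 and a perfect model scores 1, which is why values around 0.45 are read as weak and values around 0.9 as strong.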

Traditional Model Baseline-NN

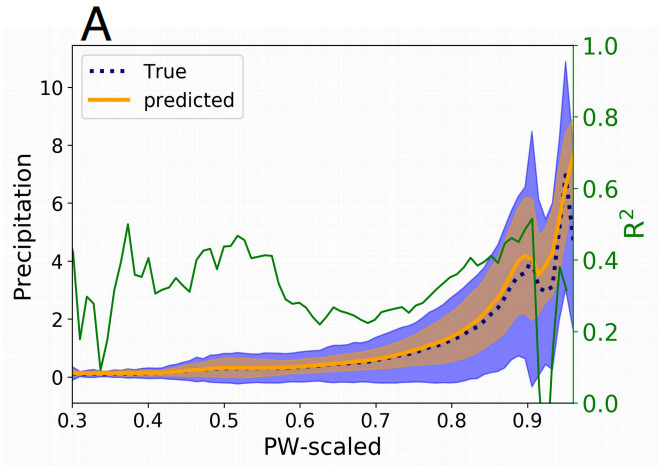

The experimental team first used Baseline-NN. The figure below shows how well precipitation can be predicted when coarse-grained PW, SST, qv2m, and T2m are used as inputs. Of these, qv2m and T2m provide Baseline-NN with information about boundary-layer conditions. The team grouped the samples into bins of coarse-grained PW and averaged the predicted and true coarse-grained precipitation within each bin. The variance of the coarse-grained precipitation within each bin was also calculated.

PW: precipitable water

SST: sea surface temperature

qv2m: near-surface specific humidity

T2m: near-surface air temperature (2 m above the surface)

Figure 1: Average value of coarse-grained precipitation on PW bin

Dotted line: True average precipitation

Orange line: Predicted average precipitation

Green line: R2 calculated in each PW bin

Shading: Variance within each bin
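The binning behind Figure 1 can be sketched as follows: samples are grouped by coarse-grained PW, then the true and predicted precipitation are averaged per bin and the variance of the true values is computed per bin. The bin width, the function name, and the sample values are hypothetical; the article does not state the bin edges used.

```python
from statistics import mean, pvariance


def bin_by_pw(pw, precip_true, precip_pred, bin_width=10.0):
    """Group samples into PW bins; return per-bin means and true-value variance."""
    groups = {}
    for x, t, p in zip(pw, precip_true, precip_pred):
        left_edge = (x // bin_width) * bin_width  # left edge of the bin containing x
        groups.setdefault(left_edge, []).append((t, p))
    summary = {}
    for left_edge in sorted(groups):
        true_vals = [t for t, _ in groups[left_edge]]
        pred_vals = [p for _, p in groups[left_edge]]
        summary[left_edge] = {
            "true_mean": mean(true_vals),
            "pred_mean": mean(pred_vals),
            "true_variance": pvariance(true_vals),
        }
    return summary


# Made-up values: the predictions match each bin's mean but not its spread.
pw = [41.0, 43.5, 52.0, 58.0]
precip_true = [0.2, 0.4, 2.0, 6.0]
precip_pred = [0.3, 0.3, 4.0, 4.0]
summary = bin_by_pw(pw, precip_true, precip_pred)
```

In this toy data the predicted mean equals the true mean in both bins even though every individual prediction is off, which is the kind of behavior the figure reveals: good bin means can hide poor sample-level skill.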

Baseline-NN accurately recovers the key behavior of the mean precipitation (the average within each bin) as a function of PW, including the rapid transition near the critical point. However, the team found that it cannot explain the precipitation variability seen in the global storm-resolving simulations: its R2 over all samples is about 0.45, and the R2 computed within each PW bin never exceeds 0.5. Such low values indicate that, although the model captures some precipitation variability, it finds no strong relationship between its inputs and precipitation.

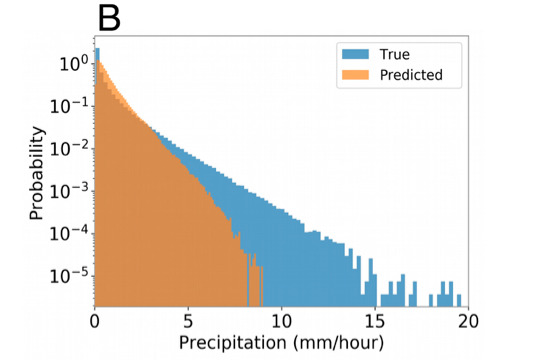

The team also compared the probability density function of the precipitation predicted by Baseline-NN with that of the true precipitation. The comparison shows that the model cannot reproduce the tail of the precipitation distribution, that is, it cannot predict extreme precipitation.

Figure 2: Schematic diagram of the probability density function of precipitation

Blue part: Probability density function of actual precipitation

Orange part: The probability density function of the predicted precipitation
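A comparison like the one in Figure 2 can be approximated with a histogram-based density estimate. The sketch below is an illustration only: the bin edges, the function name, and the precipitation values are made up, and the paper may estimate the density differently.

```python
def empirical_pdf(values, edges):
    """Histogram density: share of in-range samples per bin divided by bin width."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        for i in range(n_bins):
            is_last = i == n_bins - 1
            # Bins are half-open [left, right), except the last one, which is closed.
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)  # samples outside the edges are ignored
    if total == 0:
        raise ValueError("no samples fall inside the bin edges")
    return [c / (total * (edges[i + 1] - edges[i])) for i, c in enumerate(counts)]


edges = [0.0, 1.0, 2.0, 4.0, 8.0]  # hypothetical bins in mm/h
precip_true = [0.5, 0.7, 1.5, 3.0, 6.0, 7.5, 0.2, 1.2]
precip_pred = [0.6, 0.8, 1.4, 1.6, 1.8, 2.5, 0.9, 1.1]

pdf_true = empirical_pdf(precip_true, edges)
pdf_pred = empirical_pdf(precip_pred, edges)
```

Here the last bin plays the role of the tail: the true values put some density there while the toy predictions put none, mirroring the failure mode described for Baseline-NN.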

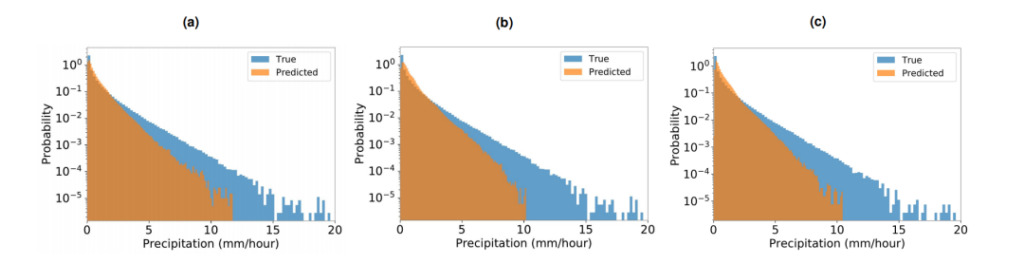

The researchers further tested Baseline-NN by adding coarse-grained total cloud cover as an input to the network. Total cloud cover is a parameterized variable in climate models; although it is not directly related to precipitation, it may provide clues about condensation, which is used directly to parameterize precipitation. In practice, adding it barely improved the predictions, which underscores that mean cloud cover does not carry the information needed to predict precipitation accurately. Further analysis by the team confirmed that adding CAPE (convective available potential energy) and CIN (convective inhibition) as predictors does not improve the results either.

Figure 3: Precipitation probability density function

Blue part: True precipitation probability density function

Orange part: Predicted precipitation probability density function

a: input is [PW, SST, qv2m, T2m, sensible heat flux, latent heat flux]

b: input is [PW, SST, qv2m, T2m, total cloud cover]

c: input is [PW, SST, qv2m, T2m, CAPE, CIN]

The conclusion is that Baseline-NN has limited ability to predict precipitation and its variability accurately.

New Model Org-NN

The team then departed from the traditional approach and used Org-NN for prediction. Because the autoencoder is part of Org-NN, it receives feedback directly from the network's objective function through backpropagation. As a result, the autoencoder can implicitly extract the information that is relevant for improving precipitation prediction.
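One common way to let an autoencoder receive feedback from a prediction objective is to minimize a single combined loss, so that gradients from the precipitation error also flow into the encoder. The article does not give the exact loss, so the additive form, the `recon_weight` parameter, and the function names below are assumptions made for illustration.

```python
def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def joint_loss(precip_true, precip_pred, field_true, field_recon, recon_weight=1.0):
    """Precipitation error plus weighted reconstruction error (assumed form)."""
    prediction_term = mse(precip_true, precip_pred)
    reconstruction_term = mse(field_true, field_recon)
    return prediction_term + recon_weight * reconstruction_term


# Toy numbers: two precipitation samples and one flattened 2 x 2 "field".
loss = joint_loss(
    precip_true=[1.0, 3.0],
    precip_pred=[1.0, 2.0],
    field_true=[0.0, 0.0, 0.0, 0.0],
    field_recon=[1.0, 1.0, 1.0, 1.0],
    recon_weight=0.5,
)
```

With a loss of this shape, the latent org variable is pushed to keep whatever part of the high-resolution field helps predict precipitation, rather than only what helps reconstruct the field.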

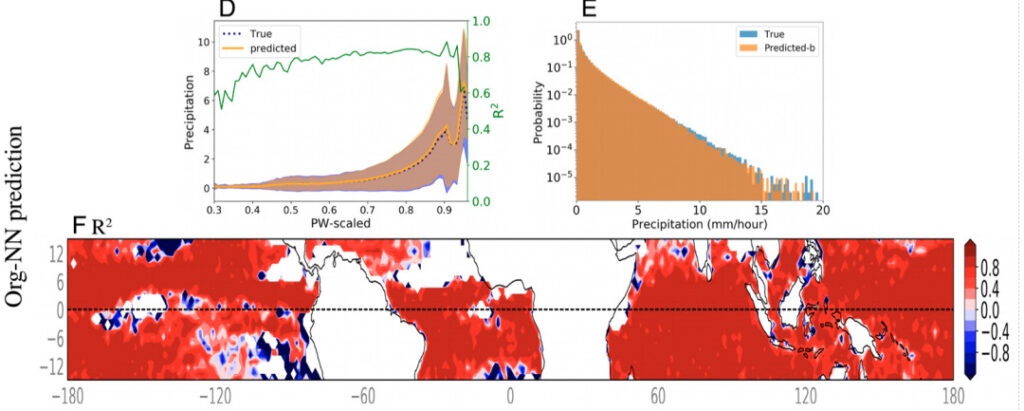

The figure below shows the precipitation predicted by Org-NN with the coarse-grained variables and org as inputs. Compared with Baseline-NN, Org-NN is a significant improvement. Computed over all data points, the R2 of the prediction rises to 0.9. For every PW bin except those with little precipitation, the R2 value is close to 0.80.

Figure 4: Org-NN prediction results

D: Average value of coarse-grained precipitation on PW bin

E: Schematic diagram of the probability density function of precipitation

F: R2 values calculated over the time steps at each latitude-longitude location in Figure D. White areas indicate precipitation below 0.05 mm/h, which is excluded from the model input. Except near locations that did not reach the precipitation threshold, the R2 values of Org-NN are well above 0.8 in most areas.

The team further quantified the performance of Org-NN by comparing the probability density function of the precipitation it predicts with that of the true precipitation from the high-resolution model. The results show that Org-NN fully captures the probability density function, including the tail of the distribution, which corresponds to extreme precipitation. This indicates that Org-NN can accurately predict extreme precipitation.

The team's results show that precipitation predictions improve significantly when org is included as an input. This suggests that subgrid-scale structure may be important information that is missing from the convection and precipitation parameterizations in current climate models.

Experimental process summary

Figure 5: Overview of the experimental process

A: Data processing: coarse-graining the high-resolution data

B: Baseline-NN: This network receives coarse-scale variables (such as SST and PW) as input and predicts coarse-scale precipitation.

C: Org-NN: The left panel shows the autoencoder, which receives high-resolution PW as input and reconstructs it after passing it through the bottleneck. The right panel shows the neural network that predicts coarse-scale precipitation.

Traditional climate models are about to change

The team behind this work is from Learning the Earth with Artificial Intelligence and Physics (LEAP), an NSF Science and Technology Center launched by Columbia University in 2021. Its main research strategy is to combine physical modeling with machine learning, pairing expertise in climate science and climate simulation with cutting-edge machine learning algorithms to improve near-term climate predictions. This benefits both climate science and data science.

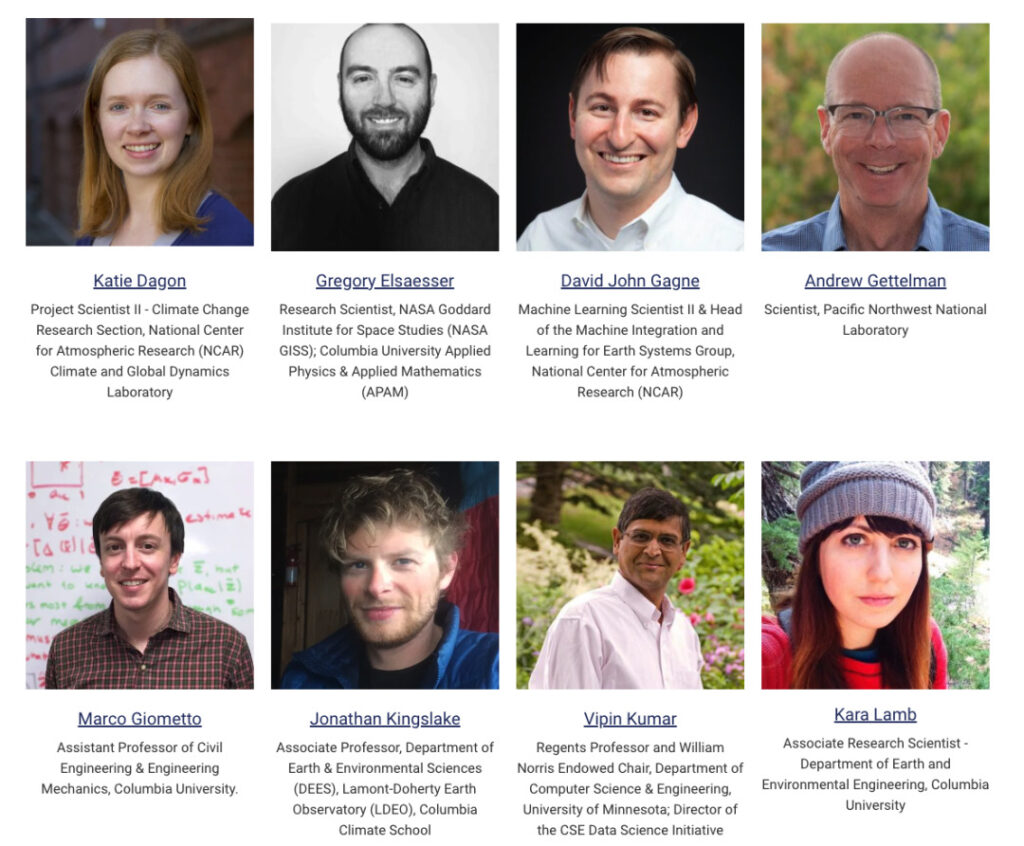

Brief introduction of some members of LEAP Lab

Laboratory official website: https://leap.columbia.edu

The researchers are currently applying their machine learning approach to climate models in order to improve predictions of precipitation intensity and variability, so that scientists can more accurately project changes in the water cycle and in extreme weather patterns under global warming.

At the same time, this study also opens up new research directions, such as exploring the possibility that precipitation has a memory effect, that is, the atmosphere retains information about recent weather conditions, which in turn affects subsequent atmospheric conditions. This new method may have a wide range of applications beyond precipitation simulations, such as better simulations of ice sheets and ocean surfaces.
