By Super Neuro

The title is a bit of an exaggeration. A few days ago we wrote about some anecdotes from Turing's life, and there is no doubt that the industry regards him as the "Father of Artificial Intelligence".

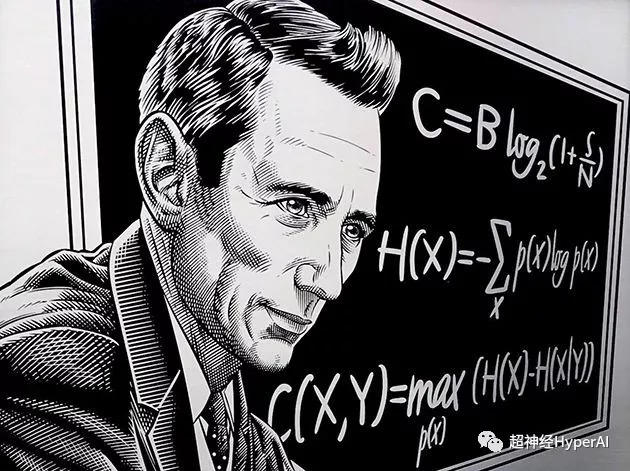

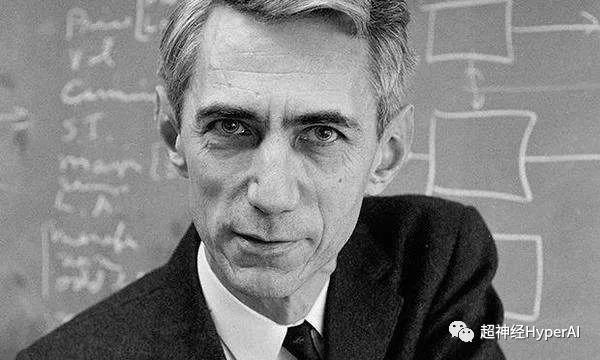

Today's subject is another remarkable figure: Claude Shannon, honored by the academic community as the "Father of Information Theory". Because information theory has done so much to advance artificial intelligence, we call Shannon the "Uncle of Artificial Intelligence".

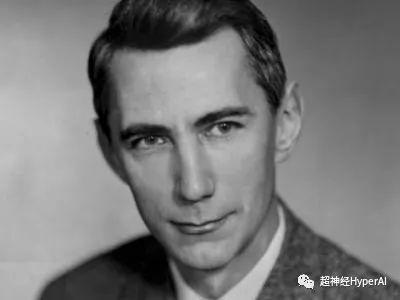

Shannon was handsome and confident as a young man, and he was also a distant relative of the inventor Thomas Edison.

Claude Shannon was born in Michigan on April 30, 1916, and grew up in the small town of Gaylord. At that time, Turing, in England, was three years old. Shannon received bachelor's degrees in mathematics and electrical engineering from the University of Michigan in 1936. In 1940 he received a master's degree in electrical engineering and a doctorate in mathematics from MIT, and in 1941 he joined Bell Labs.

Compared to Turing, Shannon's life was smoother. He died on February 24, 2001, at the age of 84.

The uncle's great support for AI

The information theory pioneered by Shannon is not only the cornerstone of information and communication science, but also remains an important theoretical basis for deep learning.

Information theory combines calculus, probability theory, statistics and other disciplines, and plays a very important role in deep learning:

- Cross-entropy loss functions are in common use;
- Decision trees are built by maximizing information gain;
- The Viterbi algorithm is widely used in NLP and speech processing;
- The encoder-decoder concept is common in machine translation RNNs and in many other types of models.

A brief history of the development of information theory

Let's start with a simple example. The following two sentences contain different amounts of information.

"Bruno is a dog."

"Bruno is a big brown dog."

It is obvious that the two sentences convey different amounts of information. Compared with the first sentence, the second sentence contains more information. It not only tells us that Bruno is a dog, but also tells us the dog's coat color and body shape.

Yet sentences as simple as these gave scientists and engineers in the early 20th century a real headache.

They wanted to quantify the difference in information between such messages and describe it mathematically.

Unfortunately, no analytical or mathematical method for doing so was available at the time.

Researchers kept looking for an answer, hoping to find it in aspects such as the semantics of the data. That line of research, however, did little except make the problem more complicated.

It was not until Shannon, a mathematician and engineer, introduced the concept of "entropy" that the problem of measuring information quantitatively was finally solved, which also marked the beginning of the "digital information age".

Shannon did not focus on the semantics of data. Instead, he quantified information in terms of probability distributions and "uncertainty", and introduced the "bit" as the unit for measuring information.

He held that the semantics of data do not matter when it comes to information content.
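To make the idea of measuring information in bits concrete, here is a minimal sketch. It assumes the standard definition of the information content of a single outcome, the negative base-2 logarithm of its probability; the function name `self_information` is chosen for illustration and does not come from the text above.

```python
import math

def self_information(p):
    """Information content, in bits, of an outcome that occurs with probability p."""
    return -math.log2(p)

coin_flip = self_information(1 / 2)      # 1 bit
one_in_eight = self_information(1 / 8)   # 3 bits
near_certain = self_information(0.99)    # about 0.0145 bits
```

The rarer the outcome, the more bits it carries. What the message means plays no role in the calculation.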

This revolutionary idea not only laid the foundation for information theory, but also opened up new paths for the development of fields such as artificial intelligence. Therefore, Claude Shannon is also recognized as the father of the information age.

Common Elements in Deep Learning: Entropy

Information theory has many application scenarios. Here we look at four of its concepts that are most common in deep learning and data science.

Entropy

Also known as information entropy or Shannon entropy, it measures the uncertainty of an outcome. We can understand it through the following two experiments:

- Experiment 1: toss a fair coin, where each outcome has probability 0.5;
- Experiment 2: toss a biased coin, where one outcome has probability 0.99.

Obviously, it is easier for us to predict the results of Experiment 2 than Experiment 1. Therefore, from the results, Experiment 1 is more uncertain than Experiment 2, and entropy is specifically used to measure this uncertainty.

The more uncertain the outcome of an experiment, the higher its entropy, and vice versa.

In a deterministic experiment, where the outcome is completely certain, the entropy is zero. In a completely random experiment, such as rolling a fair die, every outcome is highly uncertain, so the entropy is large.

Another way to define entropy is as the average information gained from observing the outcome of a random experiment. The more predictable the outcome, the less information an observation provides, and the lower the entropy.

For example, in a deterministic experiment, we always know the outcome, so no new information is gained from observing the outcome, and thus the entropy is zero.

Mathematical formula

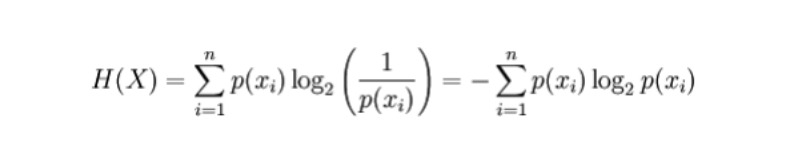

For a discrete random variable X with possible outcomes x_1, ..., x_n, the entropy (denoted H, in bits) is:

$$H(X) = -\sum_{i=1}^{n} p(x_i) \log_2 p(x_i)$$

where p(x_i) is the probability that X takes the outcome x_i.
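As a rough illustration of the coin and die experiments described earlier, the sketch below computes entropy as the negative sum of p(x_i) times the base-2 logarithm of p(x_i). The function name `entropy` and the example distributions are chosen here for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy, in bits, of a discrete distribution given as a list of probabilities."""
    # Outcomes with p == 0 are skipped: they contribute nothing to the sum.
    return sum(-p * math.log2(p) for p in probs if p > 0)

fair_coin = entropy([0.5, 0.5])        # 1 bit
biased_coin = entropy([0.99, 0.01])    # about 0.08 bits
certain = entropy([1.0])               # 0 bits: a deterministic experiment
fair_die = entropy([1 / 6] * 6)        # log2(6), about 2.585 bits
```

The biased coin is far easier to predict than the fair one, and its entropy is correspondingly much lower.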

Application

- Building decision trees automatically. During construction, feature selection at each step can be done using an entropy criterion.
- Model selection. Following the principle of maximum entropy, of two competing models the one with the higher entropy is considered the better choice.
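The entropy criterion for decision trees can be sketched as follows: for each candidate feature, measure how much the label entropy drops after splitting on it (the information gain), and split on the feature with the largest drop. The toy data, feature names, and helper functions are hypothetical and only serve the illustration.

```python
import math
from collections import Counter

def label_entropy(labels):
    """Entropy, in bits, of the empirical distribution of the labels."""
    total = len(labels)
    return sum(-(c / total) * math.log2(c / total) for c in Counter(labels).values())

def information_gain(rows, labels, feature):
    """Reduction in label entropy obtained by splitting the data on `feature`."""
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(row[feature], []).append(label)
    # Weighted average entropy of the groups produced by the split.
    remainder = sum(len(g) / len(labels) * label_entropy(g) for g in groups.values())
    return label_entropy(labels) - remainder

# Hypothetical toy data: does the dog get a walk?
rows = [
    {"weather": "sunny", "weekend": "yes"},
    {"weather": "sunny", "weekend": "no"},
    {"weather": "rainy", "weekend": "yes"},
    {"weather": "rainy", "weekend": "no"},
]
labels = ["walk", "walk", "stay", "stay"]

gains = {f: information_gain(rows, labels, f) for f in ("weather", "weekend")}
best_feature = max(gains, key=gains.get)
```

Here `weather` separates the labels perfectly (a gain of 1 bit), while `weekend` tells us nothing (a gain of 0), so a tree builder using this criterion would split on `weather` first.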

Common and important elements in deep learning: cross entropy

Cross-Entropy

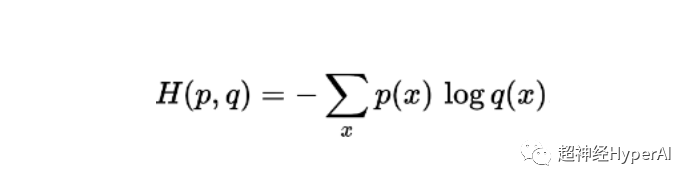

Definition: Cross entropy measures the difference between two probability distributions. It tells us how similar the two distributions are.

Mathematical formula

For two probability distributions p and q defined over the same set of outcomes, the cross entropy (denoted H, in bits) is:

$$H(p, q) = -\sum_{x} p(x) \log_2 q(x)$$

application

-

Cross entropy loss function is widely used in classification models like Logistic Regression. When the prediction deviates from the true output, the cross entropy loss function increases.

-

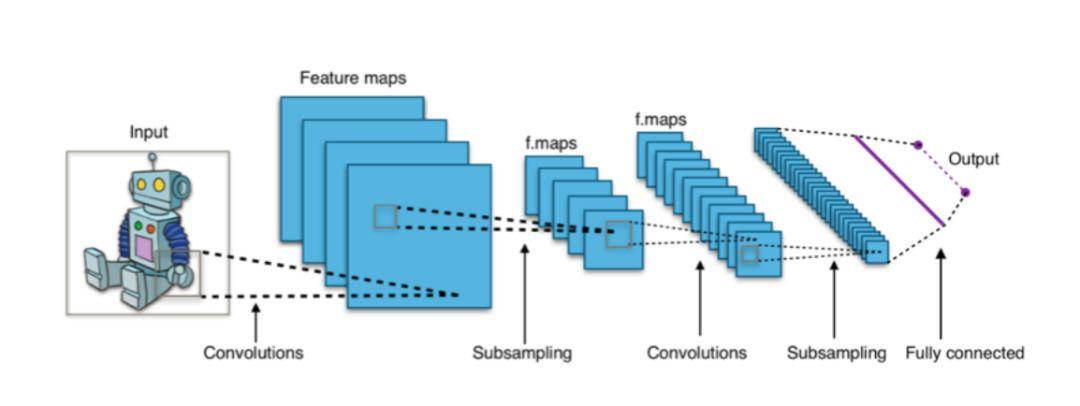

In deep learning architectures like CNNs, the final output “softmax” layer often uses a cross-entropy loss function.

Figure 1: CNN-based classifiers usually use the softmax layer as the final layer and use the cross entropy loss function for training.
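A minimal sketch of how a softmax output and a cross-entropy loss fit together, assuming a three-class problem with a one-hot target. The logits are made up for illustration. Deep learning frameworks typically use the natural logarithm here; base 2 is used below only to stay in bits, as in the rest of this article.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities; subtracting the max avoids overflow."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(p, q):
    """H(p, q) in bits, where p is the true distribution and q the predicted one."""
    return sum(-pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)

true_label = [0.0, 1.0, 0.0]        # one-hot target: the correct class is index 1
good = softmax([0.5, 4.0, 0.2])     # most probability mass on class 1
bad = softmax([4.0, 0.5, 0.2])      # most probability mass on class 0

loss_good = cross_entropy(true_label, good)
loss_bad = cross_entropy(true_label, bad)
```

The prediction that puts most of its mass on the correct class yields a small loss, while the one that favors the wrong class yields a much larger loss.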

Common and important elements in deep learning: mutual information

Mutual Information

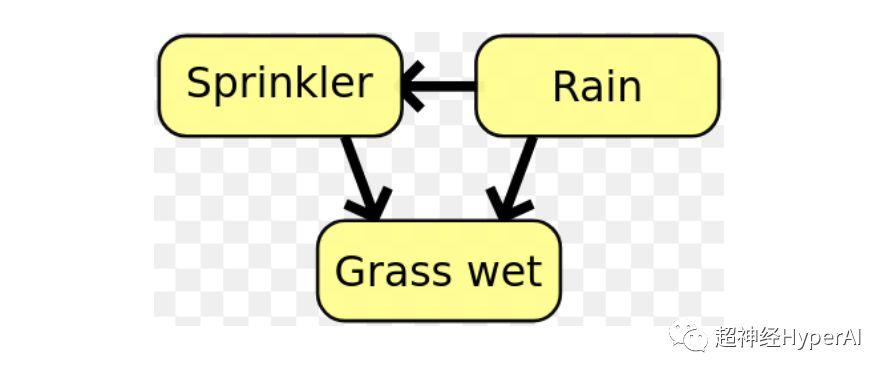

Definition: Mutual information measures the degree of mutual dependence between two probability distributions or random variables. Simply put, it is how much information one variable carries about the other.

Unlike ordinary correlation, which is limited to linear relationships, mutual information can also capture nonlinear dependence between random variables, so it applies more widely.

Mathematical formula

The mutual information of two discrete random variables X and Y is:

$$I(X; Y) = \sum_{x} \sum_{y} p(x, y) \log_2 \frac{p(x, y)}{p(x)\,p(y)}$$

where p(x,y) is the joint probability distribution of X and Y, and p(x) and p(y) are the marginal probability distributions of X and Y, respectively.
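The sketch below computes mutual information from a joint probability table by summing p(x,y) times the base-2 logarithm of p(x,y) / (p(x) p(y)). It also illustrates the point about nonlinear dependence. Both example distributions are assumptions made for illustration: two independent fair coins, and a variable X uniform on {-1, 0, 1} paired with Y = X².

```python
import math

def mutual_information(joint):
    """I(X;Y) in bits, from a dict mapping (x, y) pairs to joint probabilities."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        # Accumulate the marginal distributions of X and Y.
        px[x] = px.get(x, 0.0) + p
        py[y] = py.get(y, 0.0) + p
    return sum(
        p * math.log2(p / (px[x] * py[y]))
        for (x, y), p in joint.items()
        if p > 0
    )

# Two independent fair coins: knowing one says nothing about the other.
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}

# X uniform on {-1, 0, 1} and Y = X**2: fully dependent, but not linearly.
nonlinear = {(-1, 1): 1 / 3, (0, 0): 1 / 3, (1, 1): 1 / 3}

mi_independent = mutual_information(independent)
mi_nonlinear = mutual_information(nonlinear)
# Covariance of X and Y reduces to E[XY] here because E[X] = 0.
covariance = sum(p * x * y for (x, y), p in nonlinear.items())
```

In the second example the covariance is zero, so a linear correlation measure sees no relationship, yet the mutual information is about 0.92 bits.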

Application

- Feature selection: mutual information captures nonlinear as well as linear dependence, which makes feature selection more comprehensive and accurate.
- In Bayesian networks, mutual information is used to learn the relationship structure between random variables and to quantify the strength of these relationships.

Figure 2: In a Bayesian network, the relationship structure between variables can be determined using mutual information.

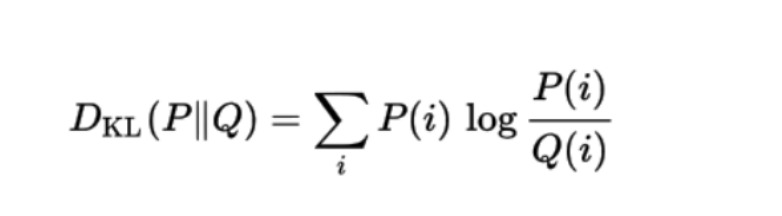

Common and important elements in deep learning: KL divergence

KL divergence (Kullback–Leibler divergence)

KL divergence, also known as relative entropy, is used to measure the degree of deviation between two probability distributions.

Suppose the data we care about follows a true distribution P that we do not know. In this case, we can construct a new probability distribution Q to approximate P.

Since Q only approximates P, it is not as accurate as P. Relative to P, some information is lost when Q is used, and KL divergence measures how much.

In other words, KL divergence tells us how much information we lose when we decide to use Q (the approximation of P). The closer the KL divergence is to zero, the closer Q is to P.

Mathematical formula

The KL divergence of a probability distribution Q from another probability distribution P is:

$$D_{\mathrm{KL}}(P \,\|\, Q) = \sum_{x} P(x) \log_2 \frac{P(x)}{Q(x)}$$
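A small sketch of KL divergence for discrete distributions, computed as the sum over outcomes of P(x) times the base-2 logarithm of P(x) / Q(x). The distributions are made up for illustration, and P is treated as known here only so the numbers can be compared.

```python
import math

def kl_divergence(p, q):
    """D_KL(P || Q) in bits for two discrete distributions over the same outcomes."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.5, 0.5]          # the "true" distribution (assumed known for this example)
q_close = [0.6, 0.4]    # a reasonable approximation of p
q_far = [0.99, 0.01]    # a poor approximation of p

kl_same = kl_divergence(p, p)           # 0: nothing is lost
kl_close = kl_divergence(p, q_close)    # small
kl_far = kl_divergence(p, q_far)        # large
kl_reverse = kl_divergence(q_far, p)    # not equal to kl_far
```

The better Q approximates P, the closer the value is to zero. Swapping the arguments gives a different number, so KL divergence is not a symmetric distance.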

Application

KL divergence is used, for example, in the variational autoencoder (VAE), an unsupervised machine learning model.

In 1948, Claude Shannon formally proposed "Information Theory" in his groundbreaking paper "A Mathematical Theory of Communication", opening a new era. Today, information theory has been widely used in many fields such as machine learning, deep learning, and data science.

The First Shannon Award

As we all know, the highest honor in computer science is the Turing Award, established in 1966 by the Association for Computing Machinery (ACM) to commemorate Turing's outstanding contributions. The Shannon Award holds a comparable place in the field of information theory.

The difference is that Turing died in 1954 and did not have the chance to know that the world had set up this award for him.

Shannon was much luckier. The Shannon Award was established in 1972 by the IEEE Information Theory Society to honor scientists and engineers who have made outstanding contributions to information theory. Its first recipient was Shannon himself.

"Shannon won the Shannon Award, and history calls it the Shannon Routine"