DRACO Cross-Disciplinary Deep Research Benchmark Dataset

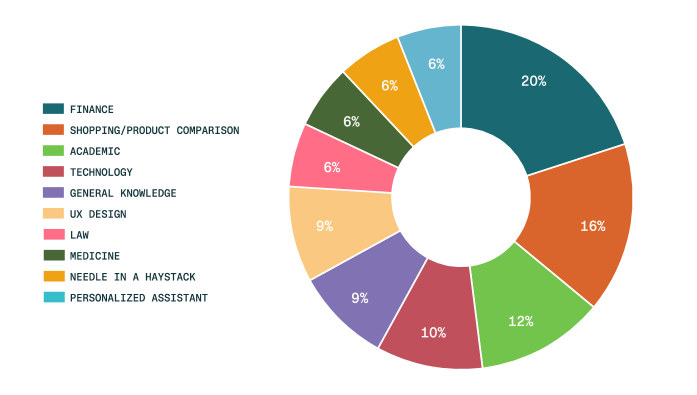

The DRACO cross-domain deep research benchmark is a dataset released by the Perplexity team for evaluating complex research tasks. The related paper is "DRACO: A Cross-Domain Benchmark for Deep Research Accuracy, Completeness, and Objectivity". The benchmark aims to systematically evaluate deep research systems on accuracy, completeness, and objectivity. The dataset contains 100 complex research tasks, covering 40 countries and regions across five continents and spanning 10 major application areas, including finance, shopping/product comparison, academia, and technology. Each task is a multi-step, multi-source information retrieval and analysis problem, and is accompanied by an evaluation rubric designed and validated by 26 domain experts. Each rubric contains approximately 40 evaluation criteria on average, providing fine-grained evaluation of the model output along four dimensions: factual accuracy, breadth and depth of analysis, presentation quality, and citation quality. The task distribution by domain is shown in the following figure:

Data Fields:

- id: A unique identifier for the task

- domain: The domain to which the task belongs

- problem: A complete research query that requires an answer

- answer: The evaluation rubric for the task, encoded as JSON, containing the specific criteria for each evaluation dimension
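
Because the rubric is stored as a JSON string, it has to be decoded before it can be inspected. The following is a minimal sketch of that step, not the dataset's documented schema: the rubric layout (a list of objects with `dimension` and `criterion` keys), the helper `count_criteria_by_dimension`, and the sample record are all assumptions made for illustration, and the lowercase `answer` key is assumed to match the other field names.

```python
import json
from collections import Counter


def count_criteria_by_dimension(record: dict) -> Counter:
    """Decode the JSON-encoded rubric and count criteria per dimension."""
    rubric = json.loads(record["answer"])  # the rubric is a JSON string
    # Assumed layout: a list of objects, each carrying a "dimension" key.
    return Counter(item["dimension"] for item in rubric)


# Hypothetical record: the field names follow the list above, the values
# and the rubric structure are placeholders, not real dataset content.
record = {
    "id": "example-001",
    "domain": "finance",
    "problem": "Placeholder research query.",
    "answer": json.dumps([
        {"dimension": "factual accuracy", "criterion": "Placeholder criterion A"},
        {"dimension": "factual accuracy", "criterion": "Placeholder criterion B"},
        {"dimension": "citation quality", "criterion": "Placeholder criterion C"},
    ]),
}

counts = count_criteria_by_dimension(record)
print(counts["factual accuracy"], counts["citation quality"])
```

If the real rubric uses a different structure, only the expression inside `Counter(...)` should need to change; the `json.loads` step stays the same.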
