
Omni-MATH Mathematical Reasoning Benchmark Dataset

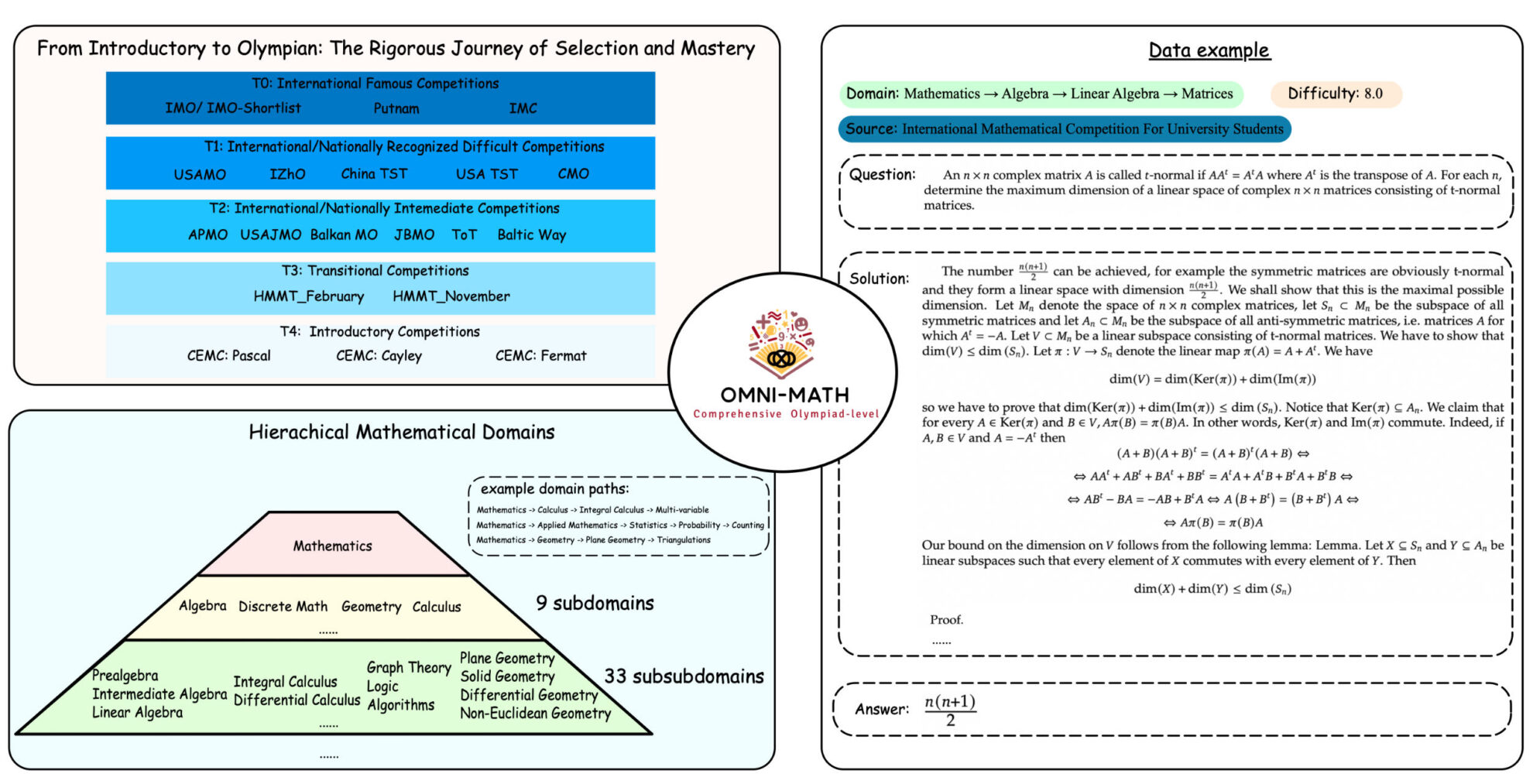

Omni-MATH is an Olympiad-level mathematical reasoning benchmark dataset created by Peking University and Alibaba. It is designed to evaluate how well large language models (LLMs) solve Olympiad-level mathematics problems. The associated paper is "Omni-MATH: A Universal Olympiad Level Mathematic Benchmark For Large Language Models".

The dataset contains 4,428 competition-level math problems, each rigorously annotated by hand. The problems cover 33 subfields and more than 10 difficulty levels, ranging from Olympiad preparatory level to top-tier competitions such as the IMO (International Mathematical Olympiad), the IMC (International Mathematics Competition for University Students) and the Putnam Competition.

To build Omni-MATH, the research team collected problems from mathematics competitions around the world and verified them through manual annotation to ensure high quality and diversity. During construction, the team used GPT-4o to classify the problems into mathematical fields, so that model performance can be evaluated separately for each field.
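Once each problem carries a field label, per-field results reduce to grouping graded answers by that label. The sketch below is a minimal illustration, not the authors' evaluation code: the record keys `"domain"` and `"correct"` and the function name `accuracy_by_domain` are hypothetical, and it assumes each model answer has already been judged correct or incorrect.

```python
from collections import defaultdict


def accuracy_by_domain(records):
    """Return {domain: accuracy} for a list of graded records.

    Each record is a dict with hypothetical keys:
      "domain"  - the mathematical field label assigned to the problem
      "correct" - bool, whether the model's answer was judged correct
    """
    totals = defaultdict(int)  # number of problems seen per field
    hits = defaultdict(int)    # number of correct answers per field
    for rec in records:
        totals[rec["domain"]] += 1
        hits[rec["domain"]] += int(rec["correct"])
    # Every key in totals has at least one problem, so no division by zero.
    return {d: hits[d] / totals[d] for d in totals}


records = [
    {"domain": "Algebra", "correct": True},
    {"domain": "Algebra", "correct": False},
    {"domain": "Geometry", "correct": True},
]
print(accuracy_by_domain(records))  # {'Algebra': 0.5, 'Geometry': 1.0}
```

The same grouping works for a difficulty breakdown by swapping the grouping key for a difficulty label.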
